Hadoop Single-Node Setup

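I. Lab Environment

1. Installation environment

- Operating system: Red Hat 6.5, with iptables and SELinux disabled

- This guide uses Hadoop 3.1.0 and Java 8. For download instructions, see the multi-node blog post linked in this section; they are not repeated here.

- Because this is a single-node setup, one virtual machine is enough to build the lab environment.

2. Host resolution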

[root@server1 ~]# cat /etc/hosts

10.10.10.1 server1

3. Official documentation:

http://hadoop.apache.org/docs/r3.1.0/hadoop-project-dist/hadoop-common/SingleCluster.html (single node)

Multi-node blog post: https://blog.csdn.net/dream_ya/article/details/80375614
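The host entry from step 2 can be verified before going further. The following is a minimal sketch, not part of the original setup: the function name `lookup_host` is hypothetical, and it only reads a hosts-format file (it does not consult DNS).

```shell
# Look up a hostname in a hosts-format file and print its IP address.
# Usage: lookup_host NAME [FILE]   (FILE defaults to /etc/hosts)
# Returns non-zero if NAME is not listed.
lookup_host() {
    name=$1
    file=${2:-/etc/hosts}
    awk -v name="$name" '
        { sub(/#.*/, "") }                 # drop comments
        {
            for (i = 2; i <= NF; i++)      # fields after the IP are names and aliases
                if ($i == name) { print $1; found = 1; exit }
        }
        END { exit(found ? 0 : 1) }
    ' "$file"
}
```

With the hosts file shown above, `lookup_host server1` would print the address that the later configuration files refer to.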

II. Installing Hadoop and Setting Up Java

1. Extract the tarballs

[root@server1 ~]# tar xf hadoop-3.1.0.tar.gz -C /usr/local

[root@server1 ~]# tar xf jdk-8u171-linux-x64.tar.gz -C /usr/local

2. Declare Java

[root@server1 ~]# vim /usr/local/hadoop-3.1.0/etc/hadoop/hadoop-env.sh

export JAVA_HOME=/usr/local/jdk1.8.0_171

3. Standalone operation (for debugging)

[root@server1 ~]# cd /usr/local/hadoop-3.1.0/

[root@server1 hadoop-3.1.0]# mkdir input

[root@server1 hadoop-3.1.0]# cp etc/hadoop/*.xml input

[root@server1 hadoop-3.1.0]# bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.0.jar grep input output 'dfs[a-z.]+'

[root@server1 hadoop-3.1.0]# cat output/*

1 dfsadmin

III. Pseudo-Distributed Cluster Setup

1. Configure core-site.xml

[root@server1 hadoop-3.1.0]# vim etc/hadoop/core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://10.10.10.1:9000</value> <!-- localhost also works here -->

</property>

</configuration>

2. Configure hdfs-site.xml

[root@server1 hadoop-3.1.0]# vim etc/hadoop/hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

</configuration>

3. Passwordless SSH login

(1) Set up passwordless login

[root@server1 hadoop-3.1.0]# cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

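[root@server1 hadoop-3.1.0]# chmod 0600 ~/.ssh/authorized_keys

(2) Test

ssh root@10.10.10.1 ### succeeds if, after you answer yes, the SSH session opens without a password prompt

4. Start HDFS

(1) Format the filesystem before the first start

[root@server1 hadoop-3.1.0]# bin/hdfs namenode -format

(2) Starting via the script fails

<1> Error: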

[root@server1 hadoop-3.1.0]# sbin/start-dfs.sh

Starting namenodes on [server1]

ERROR: Attempting to operate on hdfs namenode as root

ERROR: but there is no HDFS_NAMENODE_USER defined. Aborting operation.

Starting datanodes

ERROR: Attempting to operate on hdfs datanode as root

ERROR: but there is no HDFS_DATANODE_USER defined. Aborting operation.

Starting secondary namenodes [server1]

ERROR: Attempting to operate on hdfs secondarynamenode as root

ERROR: but there is no HDFS_SECONDARYNAMENODE_USER defined. Aborting operation.

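2018-05-21 14:49:57,012 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

These errors occur because the HDFS_*_USER variables named in the messages are not defined. They appear when the script is run as root; a regular user does not run into them.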
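To see which of these variables a given hadoop-env.sh leaves undefined, a small helper along the following lines could be used. This is only a sketch: the function name `missing_user_vars` is hypothetical, and it assumes the variables are set with plain `export VAR=...` lines in that file rather than in the login environment.

```shell
# Print each HDFS *_USER variable that FILE does not export.
# Usage: missing_user_vars FILE
missing_user_vars() {
    file=$1
    for var in HDFS_NAMENODE_USER HDFS_DATANODE_USER HDFS_SECONDARYNAMENODE_USER; do
        # Only an uncommented "export VAR=" line counts as a definition.
        if ! grep -Eq "^[[:space:]]*export[[:space:]]+$var=" "$file"; then
            echo "$var"
        fi
    done
}
```

Running `missing_user_vars etc/hadoop/hadoop-env.sh` from the Hadoop directory would list the names that still need a definition; empty output means all three are present.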

<2> Fix:

Blog post on installation errors: https://blog.csdn.net/dream_ya/article/details/80376002

[root@server1 hadoop-3.1.0]# vim etc/hadoop/hadoop-env.sh

export HDFS_NAMENODE_USER="root"

export HDFS_DATANODE_USER="root"

export HDFS_SECONDARYNAMENODE_USER="root"

export YARN_RESOURCEMANAGER_USER="root"

export YARN_NODEMANAGER_USER="root"

(3) Start again; this time the startup succeeds

[root@server1 hadoop-3.1.0]# sbin/start-dfs.sh ### all the start and stop scripts live under sbin

5. Test:

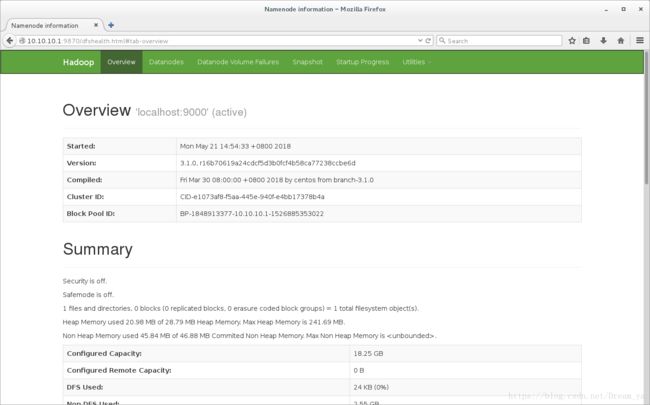

(1) Browser:

http://10.10.10.1:9870

6. Configure the Java environment

(1) Add the JDK to the PATH environment variable

[root@server1 hadoop-3.1.0]# vim /root/.bash_profile

PATH=$PATH:$HOME/bin:/usr/local/jdk1.8.0_171/bin

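[root@server1 hadoop-3.1.0]# . /root/.bash_profile

(2) Check with the jps command:

If the jps command is not available, the services have still started successfully as long as their processes are running or their ports are open.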
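The port-based check mentioned above could be sketched as follows. The function name `port_listening` is hypothetical; it reads the output of `netstat -ltn` (or `ss -ltn`) on standard input and assumes the local address is the fourth column, as it is in both tools.

```shell
# Succeed if the listing on stdin shows a TCP socket listening on PORT.
# Usage: netstat -ltn | port_listening PORT
port_listening() {
    port=$1
    awk -v port="$port" '
        $4 ~ (":" port "$") { found = 1 }   # local address ends in ":PORT"
        END { exit(found ? 0 : 1) }
    '
}
```

For this setup, `netstat -ltn | port_listening 9870` would check the NameNode web UI port and `netstat -ltn | port_listening 9000` the fs.defaultFS port from core-site.xml.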

[root@server1 hadoop-3.1.0]# jps

2992 Jps

2243 NameNode

2553 SecondaryNameNode

2366 DataNode7、测试

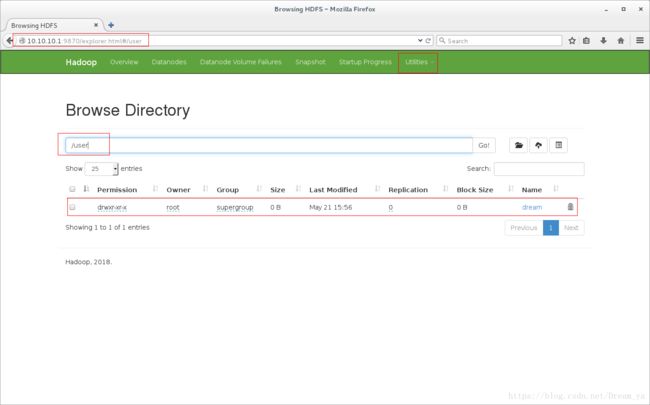

(1)创建目录

[root@server1 hadoop-3.1.0]# bin/hdfs dfs -mkdir /user

[root@server1 hadoop-3.1.0]# bin/hdfs dfs -mkdir /user/dream

(2) View:

<1> Browser:

http://10.10.10.1:9870

IV. YARN on a Single Node

1. Configure mapred-site.xml

[root@server1 hadoop-3.1.0]# vim etc/hadoop/mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

2. Configure yarn-site.xml

[root@server1 hadoop-3.1.0]# vim etc/hadoop/yarn-site.xml

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>

3. Start YARN

[root@server1 hadoop-3.1.0]# sbin/start-yarn.sh ### to stop, replace start with stop in the script name

Starting resourcemanager

Starting nodemanagers4、测试

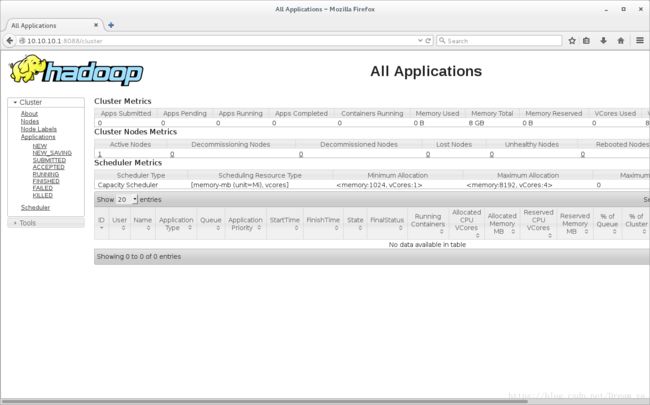

(1)浏览器:

http://10.10.10.1:8088
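Besides the browser, the YARN daemons can also be checked from the shell. The sketch below assumes jps is on the PATH (as configured in section III) and that its output has the "PID ClassName" form shown earlier; the function name `missing_daemons` is hypothetical.

```shell
# Read `jps` output on stdin and print every expected daemon that is absent.
# Usage: jps | missing_daemons NAME...
# Returns non-zero if at least one daemon is missing.
missing_daemons() {
    awk -v want="$*" '
        { seen[$2] = 1 }                    # jps prints "PID ClassName"
        END {
            n = split(want, names, " ")
            for (i = 1; i <= n; i++)
                if (!(names[i] in seen)) { print names[i]; bad = 1 }
            exit(bad ? 1 : 0)
        }
    '
}
```

After sbin/start-yarn.sh, `jps | missing_daemons ResourceManager NodeManager` should print nothing if both daemons came up.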