Maintaining a Proxy Pool for Web Crawlers

I Preparation

1 Install aiohttp

(venv) E:\WebSpider>pip3 install aiohttp

2 Install flask

(venv) E:\WebSpider>pip install flask

II Modules of the proxy pool

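1 Storage module: responsible for storing the crawled proxies. It uses a Redis sorted set, which deduplicates the proxies and records each proxy's status as a score. It is also the central, foundational module that ties the other modules together.

2 Getter module: periodically crawls proxies from the major proxy-listing sites and passes them to the storage module, which saves them to the database.

3 Tester module: periodically tests the proxies in the database and sets each proxy's score according to the test result.

4 API module: exposes the pool to other programs through an API. It connects to the database and returns usable proxies over the web.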
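
The scoring scheme described above can be illustrated without Redis. What follows is only a sketch: `ScoreBoard` is a hypothetical in-memory stand-in for the sorted set, and the values 100, 0 and 10 for the maximum, minimum and initial scores are assumptions chosen for the example, not values stated in this article.

```python
# In-memory illustration of the scoring idea; a plain dict stands in for the Redis sorted set.
# MAX_SCORE, MIN_SCORE and INITIAL_SCORE are assumed example values.
MAX_SCORE = 100
MIN_SCORE = 0
INITIAL_SCORE = 10


class ScoreBoard:
    """Hypothetical stand-in for the storage module."""

    def __init__(self):
        self.scores = {}  # proxy -> score; dict keys deduplicate proxies

    def add(self, proxy):
        # A proxy that is already known keeps its current score.
        self.scores.setdefault(proxy, INITIAL_SCORE)

    def mark_ok(self, proxy):
        # A proxy that passed the test jumps straight to the maximum score.
        self.scores[proxy] = MAX_SCORE

    def mark_failed(self, proxy):
        # A failed test costs one point; a proxy already at the minimum is dropped.
        score = self.scores.get(proxy)
        if score is None:
            return
        if score > MIN_SCORE:
            self.scores[proxy] = score - 1
        else:
            del self.scores[proxy]


board = ScoreBoard()
board.add('1.2.3.4:8080')
board.add('1.2.3.4:8080')          # duplicate, ignored
board.mark_failed('1.2.3.4:8080')
print(board.scores)                # {'1.2.3.4:8080': 9}
board.mark_ok('1.2.3.4:8080')
print(board.scores)                # {'1.2.3.4:8080': 100}
```

Resetting a working proxy to the maximum while charging one point per failure means a proxy that once worked survives many failed checks, whereas a freshly crawled proxy that never works is removed after only a few.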

III Implementing the proxy pool

1 Storage module implementation

import re
from random import choice

import redis

from proxypool.error import PoolEmptyError
from proxypool.setting import REDIS_HOST, REDIS_PORT, REDIS_PASSWORD, REDIS_KEY
from proxypool.setting import MAX_SCORE, MIN_SCORE, INITIAL_SCORE


class RedisClient(object):
    def __init__(self, host=REDIS_HOST, port=REDIS_PORT, password=REDIS_PASSWORD):
        """
        Initialize the client
        :param host: Redis host
        :param port: Redis port
        :param password: Redis password
        """
        self.db = redis.StrictRedis(host=host, port=port, password=password, decode_responses=True)

    def add(self, proxy, score=INITIAL_SCORE):
        """
        Add a proxy with the given score (INITIAL_SCORE by default) unless it is already in the pool
        :param proxy: proxy
        :param score: score
        :return: result of the add
        """
        if not re.match(r'\d+\.\d+\.\d+\.\d+:\d+', proxy):
            print('Malformed proxy', proxy, 'discarded')
            return
        # zscore returns None for a missing member; testing "is None" keeps a
        # proxy whose score is 0 from being added again
        if self.db.zscore(REDIS_KEY, proxy) is None:
            # redis-py 3.x and later take a {member: score} mapping
            return self.db.zadd(REDIS_KEY, {proxy: score})

    def random(self):
        """
        Get a random usable proxy: try the proxies with the highest score first,
        fall back to the top-ranked ones, and raise an exception if the pool is empty
        :return: a random proxy
        """
        result = self.db.zrangebyscore(REDIS_KEY, MAX_SCORE, MAX_SCORE)
        if len(result):
            return choice(result)
        result = self.db.zrevrange(REDIS_KEY, 0, 100)
        if len(result):
            return choice(result)
        raise PoolEmptyError

    def decrease(self, proxy):
        """
        Lower a proxy's score by one, or remove the proxy once it is no longer above the minimum
        :param proxy: proxy
        :return: the updated score
        """
        score = self.db.zscore(REDIS_KEY, proxy)
        if score and score > MIN_SCORE:
            print('Proxy', proxy, 'current score', score, 'minus 1')
            # redis-py 3.x argument order: zincrby(name, amount, value)
            return self.db.zincrby(REDIS_KEY, -1, proxy)
        else:
            print('Proxy', proxy, 'current score', score, 'removed')
            return self.db.zrem(REDIS_KEY, proxy)

    def exists(self, proxy):
        """
        Check whether a proxy is in the pool
        :param proxy: proxy
        :return: True if it exists
        """
        return self.db.zscore(REDIS_KEY, proxy) is not None

    def max(self, proxy):
        """
        Set a proxy's score to MAX_SCORE
        :param proxy: proxy
        :return: result of the update
        """
        print('Proxy', proxy, 'is usable, score set to', MAX_SCORE)
        return self.db.zadd(REDIS_KEY, {proxy: MAX_SCORE})

    def count(self):
        """
        Get the number of proxies
        :return: count
        """
        return self.db.zcard(REDIS_KEY)

    def all(self):
        """
        Get all proxies
        :return: list of all proxies
        """
        return self.db.zrangebyscore(REDIS_KEY, MIN_SCORE, MAX_SCORE)

    def batch(self, start, stop):
        """
        Get a batch of proxies
        :param start: start index
        :param stop: stop index (exclusive)
        :return: list of proxies
        """
        return self.db.zrevrange(REDIS_KEY, start, stop - 1)


if __name__ == '__main__':
    conn = RedisClient()
    result = conn.batch(680, 688)
    print(result)

For more on Redis operations, see: https://blog.csdn.net/hongyd/article/details/84588524

https://blog.csdn.net/qq_41389266/article/details/86674910
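
The storage module imports its configuration from `proxypool.setting` and its exception from `proxypool.error`, neither of which is listed in this article. The sketch below shows what those two modules might contain. The names are the ones imported by the code in this article; every value is an assumption for illustration, except port 5555, which matches the URLs used in the section on running the pool.

```python
# Sketch of proxypool/setting.py and proxypool/error.py, combined in one block.
# All values are assumptions; adjust them to your own environment.

# --- proxypool/setting.py ---
REDIS_HOST = 'localhost'        # assumed: Redis runs on the same machine
REDIS_PORT = 6379               # Redis default port
REDIS_PASSWORD = None           # assumed: no password configured
REDIS_KEY = 'proxies'           # assumed name of the sorted set

MAX_SCORE = 100                 # assumed score of a proxy that passed its test
MIN_SCORE = 0                   # assumed floor at which a proxy is removed
INITIAL_SCORE = 10              # assumed score of a newly crawled proxy

POOL_UPPER_THRESHOLD = 10000    # assumed pool size at which crawling pauses

TEST_URL = 'http://httpbin.org/get'   # assumed URL used to test proxies
VALID_STATUS_CODES = [200]      # assumed status codes that count as success
BATCH_TEST_SIZE = 10            # assumed number of proxies tested at once

TESTER_CYCLE = 20               # assumed seconds between tester runs
GETTER_CYCLE = 300              # assumed seconds between getter runs

API_HOST = '0.0.0.0'            # assumed bind address of the Flask app
API_PORT = 5555                 # matches the URLs used when running the pool

TESTER_ENABLED = True
GETTER_ENABLED = True
API_ENABLED = True


# --- proxypool/error.py ---
class PoolEmptyError(Exception):
    """Raised by the storage module when no proxy is available."""

    def __str__(self):
        return 'The proxy pool is empty'
```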

2 Getter module implementation
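
The crawler in this section relies on a metaclass to discover its `crawl_*` methods, so that adding a new source only requires adding one method. The idea can be tried in isolation with the sketch below; `RegisterCrawlMethods`, `DemoCrawler` and its two sources are made-up names that yield fixed strings instead of scraping a site.

```python
class RegisterCrawlMethods(type):
    """Metaclass that records the names of all methods starting with 'crawl_'."""

    def __new__(cls, name, bases, attrs):
        crawl_funcs = [key for key in attrs if key.startswith('crawl_')]
        attrs['__CrawlFunc__'] = crawl_funcs
        attrs['__CrawlFuncCount__'] = len(crawl_funcs)
        return super().__new__(cls, name, bases, attrs)


class DemoCrawler(metaclass=RegisterCrawlMethods):
    # Hypothetical sources, used only to show the registration mechanism.
    def crawl_site_a(self):
        yield '10.0.0.1:8080'

    def crawl_site_b(self):
        yield '10.0.0.2:3128'
        yield '10.0.0.3:80'

    def get_proxies(self, callback):
        # Look the method up by name and collect whatever it yields.
        return list(getattr(self, callback)())


crawler = DemoCrawler()
print(crawler.__CrawlFunc__)        # ['crawl_site_a', 'crawl_site_b']
for name in crawler.__CrawlFunc__:
    print(name, crawler.get_proxies(name))
```

The metaclass runs once, when the class body has been evaluated, so the list of method names is fixed at class creation time and costs nothing at call time.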

import json
import re

from pyquery import PyQuery as pq

from .utils import get_page


class ProxyMetaclass(type):
    def __new__(cls, name, bases, attrs):
        # Record the name of every crawl_* method so that the getter can call each in turn
        count = 0
        attrs['__CrawlFunc__'] = []
        for k, v in attrs.items():
            if 'crawl_' in k:
                attrs['__CrawlFunc__'].append(k)
                count += 1
        attrs['__CrawlFuncCount__'] = count
        return type.__new__(cls, name, bases, attrs)


class Crawler(object, metaclass=ProxyMetaclass):
    # NOTE: in this copy of the code the HTML tags inside the regular expressions of
    # the crawl_* methods (all except crawl_daili66, which uses pyquery selectors)
    # appear to have been stripped during extraction. A pattern such as r'(\d+) '
    # presumably read r'<td>(\d+)</td>'. Restore the tags from each site's actual
    # markup before relying on these methods.

    def get_proxies(self, callback):
        proxies = []
        # getattr looks the crawl method up by name without resorting to eval
        for proxy in getattr(self, callback)():
            print('Got proxy', proxy)
            proxies.append(proxy)
        return proxies

    def crawl_daili66(self, page_count=4):
        """
        Crawl proxies from 66ip
        :param page_count: number of pages
        :return: proxies
        """
        start_url = 'http://www.66ip.cn/{}.html'
        urls = [start_url.format(page) for page in range(1, page_count + 1)]
        for url in urls:
            print('Crawling', url)
            html = get_page(url)
            if html:
                doc = pq(html)
                trs = doc('.containerbox table tr:gt(0)').items()
                for tr in trs:
                    ip = tr.find('td:nth-child(1)').text()
                    port = tr.find('td:nth-child(2)').text()
                    yield ':'.join([ip, port])

    def crawl_ip3366(self):
        for page in range(1, 4):
            start_url = 'http://www.ip3366.net/free/?stype=1&page={}'.format(page)
            html = get_page(start_url)
            if html:
                ip_address = re.compile(r'\s*(.*?) \s*(.*?) ')
                # \s* matches whitespace, including the line breaks between cells
                re_ip_address = ip_address.findall(html)
                for address, port in re_ip_address:
                    result = address + ':' + port
                    yield result.replace(' ', '')

    def crawl_kuaidaili(self):
        for i in range(1, 4):
            start_url = 'http://www.kuaidaili.com/free/inha/{}/'.format(i)
            html = get_page(start_url)
            if html:
                find_ip = re.compile(r'(.*?) ')
                re_ip_address = find_ip.findall(html)
                find_port = re.compile(r'(.*?) ')
                re_port = find_port.findall(html)
                for address, port in zip(re_ip_address, re_port):
                    address_port = address + ':' + port
                    yield address_port.replace(' ', '')

    def crawl_xicidaili(self):
        for i in range(1, 3):
            start_url = 'http://www.xicidaili.com/nn/{}'.format(i)
            headers = {
                'Accept':'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8',
                'Cookie':'_free_proxy_session=BAh7B0kiD3Nlc3Npb25faWQGOgZFVEkiJWRjYzc5MmM1MTBiMDMzYTUzNTZjNzA4NjBhNWRjZjliBjsAVEkiEF9jc3JmX3Rva2VuBjsARkkiMUp6S2tXT3g5a0FCT01ndzlmWWZqRVJNek1WanRuUDBCbTJUN21GMTBKd3M9BjsARg%3D%3D--2a69429cb2115c6a0cc9a86e0ebe2800c0d471b3',
                'Host':'www.xicidaili.com',
                'Referer':'http://www.xicidaili.com/nn/3',
                'Upgrade-Insecure-Requests':'1',
            }
            html = get_page(start_url, options=headers)
            if html:
                find_trs = re.compile(r' (.*?) ', re.S)
                trs = find_trs.findall(html)
                for tr in trs:
                    find_ip = re.compile(r'(\d+\.\d+\.\d+\.\d+) ')
                    re_ip_address = find_ip.findall(tr)
                    find_port = re.compile(r'(\d+) ')
                    re_port = find_port.findall(tr)
                    for address, port in zip(re_ip_address, re_port):
                        address_port = address + ':' + port
                        yield address_port.replace(' ', '')

    # NOTE: this redefines crawl_ip3366 from above; Python keeps only this later definition
    def crawl_ip3366(self):
        for i in range(1, 4):
            start_url = 'http://www.ip3366.net/?stype=1&page={}'.format(i)
            html = get_page(start_url)
            if html:
                find_tr = re.compile(r'(.*?) ', re.S)
                trs = find_tr.findall(html)
                for s in range(1, len(trs)):
                    find_ip = re.compile(r'(\d+\.\d+\.\d+\.\d+) ')
                    re_ip_address = find_ip.findall(trs[s])
                    find_port = re.compile(r'(\d+) ')
                    re_port = find_port.findall(trs[s])
                    for address, port in zip(re_ip_address, re_port):
                        address_port = address + ':' + port
                        yield address_port.replace(' ', '')

    def crawl_iphai(self):
        start_url = 'http://www.iphai.com/'
        html = get_page(start_url)
        if html:
            find_tr = re.compile(r'(.*?) ', re.S)
            trs = find_tr.findall(html)
            for s in range(1, len(trs)):
                find_ip = re.compile(r'\s+(\d+\.\d+\.\d+\.\d+)\s+ ', re.S)
                re_ip_address = find_ip.findall(trs[s])
                find_port = re.compile(r'\s+(\d+)\s+ ', re.S)
                re_port = find_port.findall(trs[s])
                for address, port in zip(re_ip_address, re_port):
                    address_port = address + ':' + port
                    yield address_port.replace(' ', '')

    def crawl_data5u(self):
        start_url = 'http://www.data5u.com/free/gngn/index.shtml'
        headers = {
            'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8',
            'Accept-Encoding': 'gzip, deflate',
            'Accept-Language': 'en-US,en;q=0.9,zh-CN;q=0.8,zh;q=0.7',
            'Cache-Control': 'max-age=0',
            'Connection': 'keep-alive',
            'Cookie': 'JSESSIONID=47AA0C887112A2D83EE040405F837A86',
            'Host': 'www.data5u.com',
            'Referer': 'http://www.data5u.com/free/index.shtml',
            'Upgrade-Insecure-Requests': '1',
            'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_13_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/63.0.3239.108 Safari/537.36',
        }
        html = get_page(start_url, options=headers)
        if html:
            ip_address = re.compile(r'(\d+\.\d+\.\d+\.\d+) .*?(\d+) ', re.S)
            re_ip_address = ip_address.findall(html)
            for address, port in re_ip_address:
                result = address + ':' + port
                yield result.replace(' ', '')


from proxypool.tester import Tester

from proxypool.db import RedisClient
from proxypool.crawler import Crawler
from proxypool.setting import *
import sys


class Getter():
    def __init__(self):
        self.redis = RedisClient()
        self.crawler = Crawler()

    def is_over_threshold(self):
        """
        Check whether the pool has reached its size limit
        """
        return self.redis.count() >= POOL_UPPER_THRESHOLD

    def run(self):
        print('Getter started')
        if not self.is_over_threshold():
            for callback_label in range(self.crawler.__CrawlFuncCount__):
                callback = self.crawler.__CrawlFunc__[callback_label]
                # Fetch the proxies from this source
                proxies = self.crawler.get_proxies(callback)
                sys.stdout.flush()
                print(proxies)
                for proxy in proxies:
                    self.redis.add(proxy)


if __name__ == '__main__':
    getter = Getter()
    getter.run()

3 Tester module
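
The tester in this section walks the pool in batches of BATCH_TEST_SIZE and asks the storage module for the half-open index range from `start` up to but excluding `stop`. The storage module's `batch` method turns that into the inclusive range that Redis ZREVRANGE expects by passing `stop - 1`. The index arithmetic can be sketched as follows, with a plain list in place of Redis and an assumed batch size of 3:

```python
BATCH_TEST_SIZE = 3  # assumed value for the example

# Stand-in for the pool, already ordered from highest to lowest score.
pool = ['p0', 'p1', 'p2', 'p3', 'p4', 'p5', 'p6']


def zrevrange(items, start, end):
    """Mimic Redis ZREVRANGE for non-negative indexes; the end index is inclusive."""
    return items[start:end + 1]


def batch(start, stop):
    """Same contract as RedisClient.batch: return the items start .. stop - 1."""
    return zrevrange(pool, start, stop - 1)


batches = []
count = len(pool)
for i in range(0, count, BATCH_TEST_SIZE):
    start, stop = i, min(i + BATCH_TEST_SIZE, count)
    batches.append(batch(start, stop))

print(batches)  # [['p0', 'p1', 'p2'], ['p3', 'p4', 'p5'], ['p6']]
```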

import asyncio
import sys
import time

import aiohttp
from aiohttp import ClientError

from proxypool.db import RedisClient
from proxypool.setting import *


class Tester(object):
    def __init__(self):
        self.redis = RedisClient()

    async def test_single_proxy(self, proxy):
        """
        Test a single proxy
        :param proxy: proxy in ip:port form
        :return: None
        """
        # ssl=False turns off certificate verification (it replaces the deprecated verify_ssl=False)
        conn = aiohttp.TCPConnector(ssl=False)
        async with aiohttp.ClientSession(connector=conn) as session:
            try:
                if isinstance(proxy, bytes):
                    proxy = proxy.decode('utf-8')
                real_proxy = 'http://' + proxy
                print('Testing', proxy)
                timeout = aiohttp.ClientTimeout(total=15)
                async with session.get(TEST_URL, proxy=real_proxy, timeout=timeout, allow_redirects=False) as response:
                    if response.status in VALID_STATUS_CODES:
                        self.redis.max(proxy)
                        print('Proxy is usable', proxy)
                    else:
                        self.redis.decrease(proxy)
                        print('Invalid response status', response.status, 'IP', proxy)
            # ClientError also covers aiohttp's connection errors such as ClientConnectorError
            except (ClientError, asyncio.TimeoutError, AttributeError):
                self.redis.decrease(proxy)
                print('Proxy request failed', proxy)

    async def test_batch(self, proxies):
        """
        Test one batch of proxies concurrently
        :param proxies: list of proxies
        :return: None
        """
        await asyncio.gather(*(self.test_single_proxy(proxy) for proxy in proxies))

    def run(self):
        """
        Main test routine
        :return: None
        """
        print('Tester started')
        try:
            count = self.redis.count()
            print(count, 'proxies currently in the pool')
            for i in range(0, count, BATCH_TEST_SIZE):
                start = i
                stop = min(i + BATCH_TEST_SIZE, count)
                print('Testing proxies', start + 1, 'to', stop)
                test_proxies = self.redis.batch(start, stop)
                # asyncio.wait rejects bare coroutines on Python 3.11+, so the batch is gathered instead
                asyncio.run(self.test_batch(test_proxies))
                sys.stdout.flush()
                time.sleep(5)
        except Exception as e:
            print('Tester error', e.args)

4 API module

from flask import Flask, g

from .db import RedisClient

__all__ = ['app']

app = Flask(__name__)


def get_conn():
    # Create the Redis client once per application context and reuse it
    if not hasattr(g, 'redis'):
        g.redis = RedisClient()
    return g.redis


@app.route('/')
def index():
    return 'Welcome to Proxy Pool System'


@app.route('/random')
def get_proxy():
    """
    Get a proxy
    :return: a random proxy
    """
    conn = get_conn()
    return conn.random()


@app.route('/count')
def get_counts():
    """
    Get the count of proxies
    :return: total number of proxies in the pool
    """
    conn = get_conn()
    return str(conn.count())


if __name__ == '__main__':
    app.run()

5 Scheduler module

import time

from multiprocessing import Process

from proxypool.api import app

from proxypool.getter import Getter

from proxypool.tester import Tester

from proxypool.db import RedisClient

from proxypool.setting import *

class Scheduler():

def schedule_tester(self, cycle=TESTER_CYCLE):

"""

定时测试代理

"""

tester = Tester()

while True:

print('测试器开始运行')

tester.run()

time.sleep(cycle)

def schedule_getter(self, cycle=GETTER_CYCLE):

"""

定时获取代理

"""

getter = Getter()

while True:

print('开始抓取代理')

getter.run()

time.sleep(cycle)

def schedule_api(self):

"""

开启API

"""

app.run(API_HOST, API_PORT)

def run(self):

print('代理池开始运行')

if TESTER_ENABLED:

tester_process = Process(target=self.schedule_tester)

tester_process.start()

if GETTER_ENABLED:

getter_process = Process(target=self.schedule_getter)

getter_process.start()

if API_ENABLED:

api_process = Process(target=self.schedule_api)

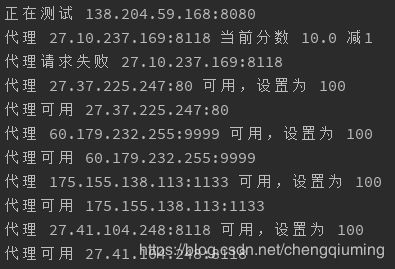

api_process.start()四 运行

(venv) E:\Python\ProxyPool>python run.py

Visit: http://127.0.0.1:5555/

Visit: http://127.0.0.1:5555/random

Visit: http://127.0.0.1:5555/count

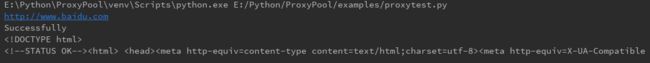

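Code for fetching a proxy

import requests

from proxypool.setting import TEST_URL

PROXY_POOL_URL = 'http://localhost:5555/random'


def get_proxy():
    try:
        response = requests.get(PROXY_POOL_URL)
        if response.status_code == 200:
            return response.text
    except requests.exceptions.ConnectionError:
        # requests raises its own ConnectionError, which the built-in one does not catch
        return None


proxy = get_proxy()
# get_proxy returns None when the pool API is unreachable or does not answer with 200
if proxy:
    proxies = {
        # The pool tests its entries as plain HTTP proxies, so both schemes go through http://
        'http': 'http://' + proxy,
        'https': 'http://' + proxy,
    }
    print(TEST_URL)
    response = requests.get(TEST_URL, proxies=proxies, verify=False)
    if response.status_code == 200:
        print('Successfully')
        print(response.text)

Test results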