【Python-ML】SKlearn库特征抽取-PCA

# -*- coding: utf-8 -*-

'''

Created on 2018年1月18日

@author: Jason.F

@summary: 特征抽取-PCA方法,无监督、线性

'''

import pandas as pd

import numpy as np

from sklearn.cross_validation import train_test_split

from sklearn.preprocessing import MinMaxScaler

from sklearn.preprocessing import StandardScaler

from sklearn.linear_model import LogisticRegression

import matplotlib.pyplot as plt

from matplotlib.colors import ListedColormap

from sklearn.decomposition import PCA

#定义绘制函数

def plot_decision_regions(X, y, classifier, resolution=0.02):

# setup marker generator and color map

markers = ('s', 'x', 'o', '^', 'v')

colors = ('red', 'blue', 'lightgreen', 'gray', 'cyan')

cmap = ListedColormap(colors[:len(np.unique(y))])

# plot the decision surface

x1_min, x1_max = X[:, 0].min() - 1, X[:, 0].max() + 1

x2_min, x2_max = X[:, 1].min() - 1, X[:, 1].max() + 1

xx1, xx2 = np.meshgrid(np.arange(x1_min, x1_max, resolution),

np.arange(x2_min, x2_max, resolution))

Z = classifier.predict(np.array([xx1.ravel(), xx2.ravel()]).T)

Z = Z.reshape(xx1.shape)

plt.contourf(xx1, xx2, Z, alpha=0.4, cmap=cmap)

plt.xlim(xx1.min(), xx1.max())

plt.ylim(xx2.min(), xx2.max())

# plot class samples

for idx, cl in enumerate(np.unique(y)):

plt.scatter(x=X[y == cl, 0], y=X[y == cl, 1],

alpha=0.8, c=cmap(idx),

marker=markers[idx], label=cl)

#第一步:导入数据,对原始d维数据集做标准化处理

df_wine = pd.read_csv('https://archive.ics.uci.edu/ml/machine-learning-databases/wine/wine.data',header=None)

df_wine.columns=['Class label','Alcohol','Malic acid','Ash','Alcalinity of ash','Magnesium','Total phenols','Flavanoids','Nonflavanoid phenols','Proanthocyanins','Color intensity','Hue','OD280/OD315 of diluted wines','Proline']

print ('class labels:',np.unique(df_wine['Class label']))

#print (df_wine.head(5))

#分割训练集合测试集

X,y=df_wine.iloc[:,1:].values,df_wine.iloc[:,0].values

X_train,X_test,y_train,y_test=train_test_split(X,y,test_size=0.3,random_state=0)

#特征值缩放-标准化

stdsc=StandardScaler()

X_train_std=stdsc.fit_transform(X_train)

X_test_std=stdsc.fit_transform(X_test)

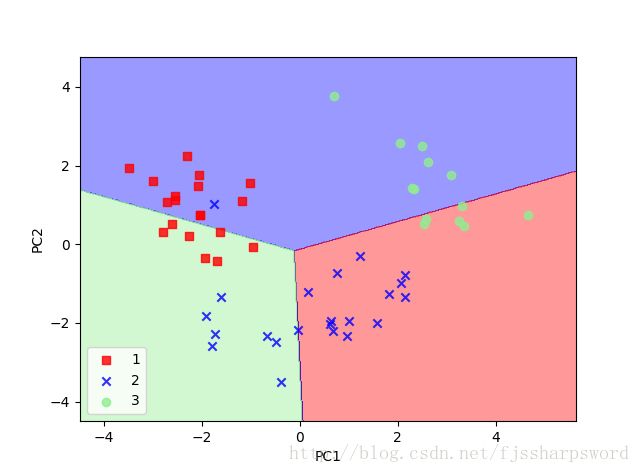

#第二步:PCA降维

pca=PCA(n_components=2)#参数设置选择前2个方差最大的特征

lr=LogisticRegression()

X_train_pca=pca.fit_transform(X_train_std)

X_test_pca=pca.fit_transform(X_test_std)

lr.fit(X_train_pca,y_train)

plot_decision_regions(X_train_pca,y_train,classifier=lr)

plt.xlabel('PC1')

plt.ylabel('PC2')

plt.legend(loc='lower left')

plt.show()

plot_decision_regions(X_test_pca,y_test,classifier=lr)

plt.xlabel('PC1')

plt.ylabel('PC2')

plt.legend(loc='lower left')

plt.show()

#观察不同主成分的防线贡献率

pca_all=PCA(n_components=None)#None值保留所有成分

X_train_pca_all=pca_all.fit_transform(X_train_std)

print (pca_all.explained_variance_ratio_)

结果:

('class labels:', array([1, 2, 3], dtype=int64))

[ 0.37329648 0.18818926 0.10896791 0.07724389 0.06478595 0.04592014

0.03986936 0.02521914 0.02258181 0.01830924 0.01635336 0.01284271

0.00642076]