虚拟机virtualbox安装kubernetes 1.14【第二篇、安装k8s】

上一章讲了virtualbox中安装Centos7虚拟机,本章我们在其基础上讲安装k8s.

概述

| 主机名 | IP | |

|---|---|---|

| 主节点 | master-node | 192.168.56.109 |

| 从节点1 | work-node1 | 192.168.56.110 |

| 从节点2 | work-node2 | 192.168.56.108 |

必要的准备

关闭防火墙

防火墙一定要提前关闭,否则在后续安装K8S集群的时候是个trouble maker。执行下面语句关闭,并禁用开机启动:

systemctl stop firewalld & systemctl disable firewalld关闭Swap

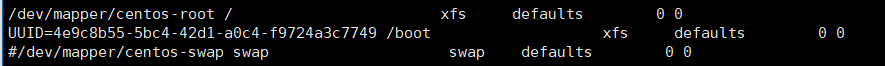

类似ElasticSearch集群,在安装K8S集群时,Linux的Swap内存交换机制是一定要关闭的,否则会因为内存交换而影响性能以及稳定性。这里,我们可以提前进行设置:

- 执行

swapoff -a可临时关闭,但系统重启后恢复 - 编辑

/etc/fstab,注释掉包含swap的那一行即可,重启后可永久关闭,如下所示:

关闭SeLinux

setenforce 0配置yum源

首先去/etc/yum.repos.d/目录,删除该目录下所有repo文件(备份到backup目录)

[root@bogon yum.repos.d]# ls

CentOS-Base.repo CentOS-Debuginfo.repo CentOS-Media.repo CentOS-Vault.repo

CentOS-CR.repo CentOS-fasttrack.repo CentOS-Sources.repo

[root@bogon yum.repos.d]# mkdir backup

[root@bogon yum.repos.d]# mv Cen* backup/下载centos基础yum源配置(这里用的是阿里云的镜像)

curl -o CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo下载docker的yum源配置

curl -o docker-ce.repo https://download.docker.com/linux/centos/docker-ce.repo配置kubernetes的yum源

cat < /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF 执行下列命令刷新yum源缓存

# yum clean all

# yum makecache

# yum repolist安装docker

在此,我们直接安装最新版

yum install -y docker-ce如果指定docker版本,可搜索docker-ce可用镜像:

yum list docker-ce --showduplicates | sort -r列出所有版本,再执行

yum install -y docker-ce-查看docker版本:

[root@bogon yum.repos.d]# docker -v

Docker version 18.09.6, build 481bc77156

启动Docker服务并激活开机启动:

systemctl start docker & systemctl enable docker运行一条命令验证一下:

[root@bogon yum.repos.d]# docker run hello-world

Unable to find image 'hello-world:latest' locally

latest: Pulling from library/hello-world

1b930d010525: Pull complete

Digest: sha256:92695bc579f31df7a63da6922075d0666e565ceccad16b59c3374d2cf4e8e50e

Status: Downloaded newer image for hello-world:latest

Hello from Docker!

This message shows that your installation appears to be working correctly.

To generate this message, Docker took the following steps:

1. The Docker client contacted the Docker daemon.

2. The Docker daemon pulled the "hello-world" image from the Docker Hub.

(amd64)

3. The Docker daemon created a new container from that image which runs the

executable that produces the output you are currently reading.

4. The Docker daemon streamed that output to the Docker client, which sent it

to your terminal.

To try something more ambitious, you can run an Ubuntu container with:

$ docker run -it ubuntu bash

Share images, automate workflows, and more with a free Docker ID:

https://hub.docker.com/

For more examples and ideas, visit:

https://docs.docker.com/get-started/

[1]+ 完成 systemctl start docker

[root@bogon yum.repos.d]#

kubeadm安装k8s

由于之前已经设置好了kubernetes的yum源,我们只要执行

yum install -y kubeadm系统就会帮我们自动安装最新版的kubeadm了,一共会安装kubelet、kubeadm、kubectl、kubernetes-cni这四个程序。

- kubeadm:k8集群的一键部署工具,通过把k8的各类核心组件和插件以pod的方式部署来简化安装过程

- kubelet:运行在每个节点上的node agent,k8集群通过kubelet真正的去操作每个节点上的容器,由于需要直接操作宿主机的各类资源,所以没有放在pod里面,还是通过服务的形式装在系统里面

- kubectl:kubernetes的命令行工具,通过连接api-server完成对于k8的各类操作

- kubernetes-cni:k8的虚拟网络设备,通过在宿主机上虚拟一个cni0网桥,来完成pod之间的网络通讯,作用和docker0类似。

安装完后,关闭系统。是的,关闭系统。

复制计算节点

复制出计算节点“work-node1”和“work-node1”

关闭虚拟机后,在virtualbox中的虚拟机上右键【复制】,按照下图设置。格外注意红框部分。

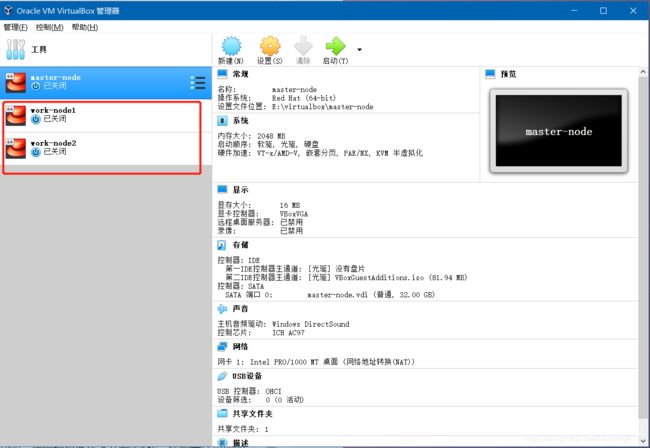

按照上述步骤,再复制出work-node2,复制完以后,如下图

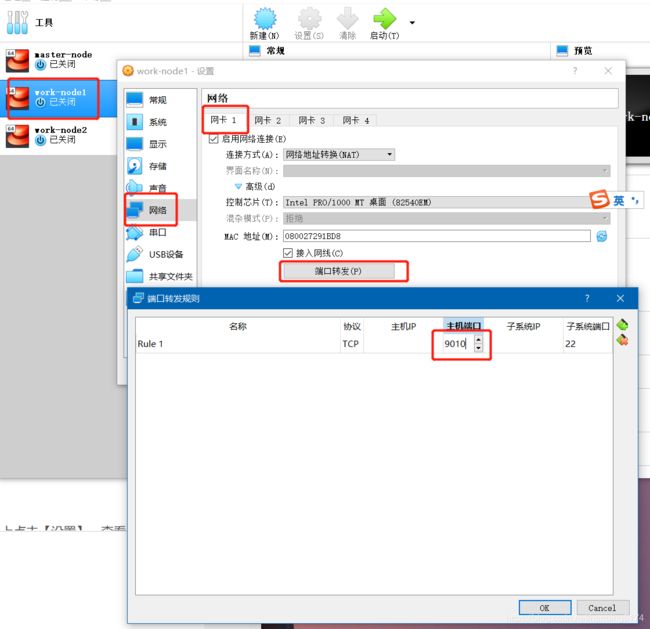

设置网卡1

设置计算节点work-node1的网卡1,修改主机端口为9010,如下图:

跟上图同样的道理,把计算节点work-node2的主机端口设置为9011。这样master-node、work-node1、work-node2的主机端口分别为9000、9010、9011。这样做的目的是能在XShell中远程连接三个虚拟机。

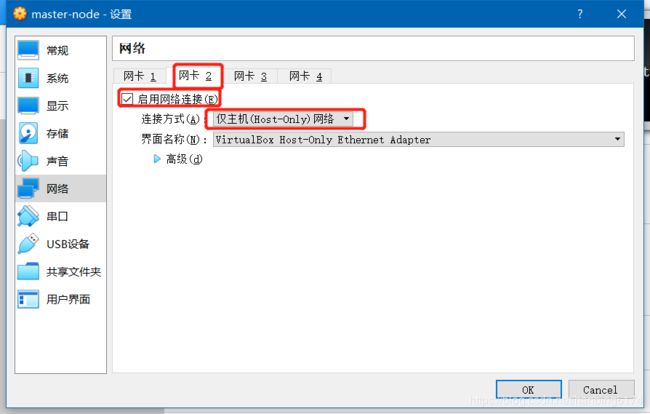

添加网卡2

由于这三个节点(master-node、work-node1、work-node2)只有一个网卡(可以在virtualbox虚拟机上点击【设置】,查看【网络】),也就是上一章提到的“网卡1”中的网络地址转换。网卡1只能保证每个虚拟机能和宿主机通信,但是三个虚拟机之间是没办法通信的。

为了让这三个节点之间相互通信,还需要启用“网卡2”。在三个节点的【设置】【网络】中,分别如下图设置:

如上图,“高级”里面不做任何修改。

这样每个虚拟机除了有一个通过宿主机联网的“网卡1”,还会有一个相互通信的“网卡2”。

分别启动三个虚拟机

可以选择在virtualbox中操作虚拟机,也可以在XShell中操作。本文毫无疑问选择后者。上一章只提到了怎么设置XShell中master-node的连接方式,由于我们新添加了两个计算节点,因此,还需要分别设置这俩的会话。以下是work-node1的设置方式,work-node2不再详述,请自行设置。

设置三个虚拟机网络

该步骤是非常重要的。三个虚拟机的设置内容是一样的。这里仅设置master-node,其余两个请自行设置。

在中,输入ip addr 查看IP地址,结果如下:

[root@bogon ~]# ip addr

1: lo: mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: enp0s3: mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 08:00:27:50:44:ee brd ff:ff:ff:ff:ff:ff

inet 10.0.2.15/24 brd 10.0.2.255 scope global noprefixroute dynamic enp0s3

valid_lft 85973sec preferred_lft 85973sec

inet6 fe80::7b1f:2c4c:fae7:1363/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: enp0s8: mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 08:00:27:b0:9a:0b brd ff:ff:ff:ff:ff:ff

inet 192.168.56.109/24 brd 192.168.56.255 scope global noprefixroute dynamic enp0s8

valid_lft 773sec preferred_lft 773sec

inet6 fe80::987b:fa3c:a84d:d6b9/64 scope link noprefixroute

valid_lft forever preferred_lft forever

4: docker0: mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:58:fd:64:80 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

5: virbr0: mtu 1500 qdisc noqueue state DOWN group default qlen 1000

link/ether 52:54:00:4a:b0:b8 brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

6: virbr0-nic: mtu 1500 qdisc pfifo_fast master virbr0 state DOWN group default qlen 1000

link/ether 52:54:00:4a:b0:b8 brd ff:ff:ff:ff:ff:ff

[root@bogon ~]#

上面的enp0s3其实对应的就是“网卡1”,而enp0s8就是我们刚添加的“网卡2”,可以看到master-node的enp0s8网卡IP为“192.168.56.109”(注意你的虚拟机可能跟我的IP地址不一样),同样的方法,我查看了另外的两个虚拟机work-node1和work-node2的enp0s8网卡IP分别是“192.168.56.110”和“192.168.56.108”。

对master-node做如下设置(work-node1和work-node2请自行设置):

- 编辑

/etc/hostname,将hostname修改为master-node - 编辑

/etc/hosts,追加内容 【192.168.56.109-node1】【192.168.56.108

过程展示如下:

[root@bogon ~]# vi /etc/hostname

[root@bogon ~]# vi /etc/hosts

[root@bogon ~]# cat /etc/hostname

master-node

#localhost.localdomain

[root@bogon ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.56.109 master-node

192.168.56.110 work-node1

192.168.56.108 work-node2

不要忘记设置work-node1和work-node2。方法同上。

重启三个虚拟机,使上述设置生效。

主节点初始化K8S

主节点就是本文提到的“master-node”虚拟机。 执行下列代码,开始master节点的初始化工作:

kubeadm init --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.56.109注意这边的--pod-network-cidr=10.244.0.0/16,是k8的网络插件所需要用到的配置信息,用来给node分配子网段,用到的网络插件是flannel,就是这么配,其他的插件也有相应的配法。选项--apiserver-advertise-address表示绑定的网卡IP,这里一定要绑定前面提到的enp0s8网卡,否则会默认使用enp0s3网卡。

一堆信息如下:

[root@master-node ~]# kubeadm init --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.56.109

I0511 02:44:59.286251 4117 version.go:96] could not fetch a Kubernetes version from the internet: unable to get URL "https://dl.k8s.io/release/stable-1.txt": Get https://dl.k8s.io/release/stable-1.txt: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

I0511 02:44:59.286366 4117 version.go:97] falling back to the local client version: v1.14.1

[init] Using Kubernetes version: v1.14.1

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[WARNING Service-Kubelet]: kubelet service is not enabled, please run 'systemctl enable kubelet.service'

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/net/bridge/bridge-nf-call-iptables contents are not set to 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

提示:/proc/sys/net/bridge/bridge-nf-call-iptables contents are not set to 1

解决方法如下:

echo "1" >/proc/sys/net/bridge/bridge-nf-call-iptables再次执行上面的初始化代码。等待一会之后又出现了新的问题:

[root@master-node ~]# kubeadm init --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.56.109

I0511 02:47:13.060854 4194 version.go:96] could not fetch a Kubernetes version from the internet: unable to get URL "https://dl.k8s.io/release/stable-1.txt": Get https://dl.k8s.io/release/stable-1.txt: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

I0511 02:47:13.060910 4194 version.go:97] falling back to the local client version: v1.14.1

[init] Using Kubernetes version: v1.14.1

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[WARNING Service-Kubelet]: kubelet service is not enabled, please run 'systemctl enable kubelet.service'

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-apiserver:v1.14.1: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-controller-manager:v1.14.1: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-scheduler:v1.14.1: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-proxy:v1.14.1: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/pause:3.1: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/etcd:3.3.10: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/coredns:1.3.1: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

, error: exit status 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

ERROR ImagePull指的是gcr.io无法访问(谷歌自己的容器镜像仓库)。我们的思路是,先从国内阿里云拉取镜像,然后再修改名字,改为和谷歌容器镜像仓库名字相同的镜像。当然,你可以按照错误提示,一个个执行:

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.14.1下载完后修改tag:

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.14.1 k8s.gcr.io/k8s.gcr.io/kube-apiserver:v1.14.1为了执行效率更高,我只做了一个shell文件,可以批处理执行。内容如下,你可以复制下来,然后保存成“k8s_docker.sh”文件:

echo ""

echo "=========================================================="

echo "Pull Kubernetes v1.14.1 Images from aliyuncs.com ......"

echo "=========================================================="

echo ""

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.14.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.14.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.14.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.14.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.3.10

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.3.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.14.1 k8s.gcr.io/kube-apiserver:v1.14.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.14.1 k8s.gcr.io/kube-controller-manager:v1.14.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.14.1 k8s.gcr.io/kube-scheduler:v1.14.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.14.1 k8s.gcr.io/kube-proxy:v1.14.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1 k8s.gcr.io/pause:3.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.3.10 k8s.gcr.io/etcd:3.3.10

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.3.1 k8s.gcr.io/coredns:1.3.1可是怎么传到虚拟机呢?这时,第一章中提到的共享文件夹就起作用了。

把上述k8s_docker.sh放到“E:\virtualbox_share”,然后在主节点虚拟机中定位到“/mnt/share”执行以下命令:

[root@master-node ~]# cd /mnt/share/

[root@master-node share]# ls

k8s_docker.sh kube-flannel.yml mongodb-linux-x86_64-rhel70-4.0.6 mongodb-linux-x86_64-rhel70-4.0.6.tar mongodb-linux-x86_64-rhel70-4.0.6.tgz

[root@master-node share]# ./k8s_docker.sh

==========================================================

Pull Kubernetes v1.14.1 Images from aliyuncs.com ......

==========================================================

v1.14.1: Pulling from google_containers/kube-apiserver

346aee5ea5bc: Pull complete

7f0e834d5a94: Pull complete

Digest: sha256:0c8710b83841950515d3bdea9ad052df00dc730ac22ac07b27c02adaaad30a36

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.14.1

v1.14.1: Pulling from google_containers/kube-controller-manager

346aee5ea5bc: Already exists

f4db69ee8ade: Pull complete

Digest: sha256:f6ddbc332516d73afc7c81fabe47ed6b1e0a43461a0b861aae9608a4692602c0

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.14.1

v1.14.1: Pulling from google_containers/kube-scheduler

346aee5ea5bc: Already exists

b88909b8f99f: Pull complete

Digest: sha256:650dd7e101652a2b98db5fe18e9ff0c09b5530b211529ccf5f62241d21db1ee2

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.14.1

v1.14.1: Pulling from google_containers/kube-proxy

346aee5ea5bc: Already exists

1e695dec1fee: Pull complete

100690d61cf6: Pull complete

Digest: sha256:020d25ff45a33ec7958d7128308cb499d5b24cdaa228a2344514bcab9b7296c0

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.14.1

3.1: Pulling from google_containers/pause

cf9202429979: Pull complete

Digest: sha256:759c3f0f6493093a9043cc813092290af69029699ade0e3dbe024e968fcb7cca

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1

3.3.10: Pulling from google_containers/etcd

90e01955edcd: Pull complete

6369547c492e: Pull complete

bd2b173236d3: Pull complete

Digest: sha256:240bd81c2f54873804363665c5d1a9b8e06ec5c63cfc181e026ddec1d81585bb

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.3.10

1.3.1: Pulling from google_containers/coredns

e0daa8927b68: Pull complete

3928e47de029: Pull complete

Digest: sha256:638adb0319813f2479ba3642bbe37136db8cf363b48fb3eb7dc8db634d8d5a5b

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.3.1可以看到,镜像已经拉取到了本地,并且名字已经改为初始化中提到的名字。再次执行

[root@master-node share]# kubeadm init --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.56.109

I0511 03:04:59.473877 5085 version.go:96] could not fetch a Kubernetes version from the internet: unable to get URL "https://dl.k8s.io/release/stable-1.txt": Get https://dl.k8s.io/release/stable-1.txt: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

I0511 03:04:59.474160 5085 version.go:97] falling back to the local client version: v1.14.1

[init] Using Kubernetes version: v1.14.1

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[WARNING Service-Kubelet]: kubelet service is not enabled, please run 'systemctl enable kubelet.service'

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Activating the kubelet service

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [master-node kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.56.109]

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [master-node localhost] and IPs [192.168.56.109 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [master-node localhost] and IPs [192.168.56.109 127.0.0.1 ::1]

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 16.002135 seconds

[upload-config] storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.14" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --experimental-upload-certs

[mark-control-plane] Marking the node master-node as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node master-node as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: vmwftc.2034sih0ok8kyy3w

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.56.109:6443 --token vmwftc.2034sih0ok8kyy3w \

--discovery-token-ca-cert-hash sha256:c4bac97ad9d4ab4f8ef37bfe623e56acf0532b58208cecb54b1b3d264895330e

可以看到终于安装成功了,kudeadm帮你做了大量的工作,包括kubelet配置、各类证书配置、kubeconfig配置、插件安装等等。注意最后一行,kubeadm提示你,其他节点需要加入集群的话,只需要执行这条命令就行了:

kubeadm join 192.168.56.109:6443 --token vmwftc.2034sih0ok8kyy3w \

--discovery-token-ca-cert-hash sha256:c4bac97ad9d4ab4f8ef37bfe623e56acf0532b58208cecb54b1b3d264895330e 请记住你自己电脑上的这个命令,接下来配置计算节点的时候会用到,里面包含了加入集群所需要的token。

同时kubeadm还提醒你,要完成全部安装,还需要安装一个网络插件kubectl apply -f [podnetwork].yaml。同时也提示你,需要执行

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config上述三条命令逐条执行。

启动kubelet

安装kubelet、kubeadm、kubectl三者后,要求启动kubelet:

systemctl enable kubelet && systemctl start kubelet

执行完以后,查看节点情况:

[root@master-node share]# kubectl get node

NAME STATUS ROLES AGE VERSION

master-node NotReady master 3m40s v1.14.1发现只有主节点。这时当然了,以为我们还没把计算节点加进来。

再查看下pod情况

[root@master-node share]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-fb8b8dccf-ggw6c 0/1 Pending 0 5m7s

coredns-fb8b8dccf-xwgl5 0/1 Pending 0 5m7s

etcd-master-node 1/1 Running 0 4m28s

kube-apiserver-master-node 1/1 Running 0 4m28s

kube-controller-manager-master-node 1/1 Running 0 4m21s

kube-proxy-rdpv9 1/1 Running 0 5m7s

kube-scheduler-master-node 1/1 Running 0 4m2s可以看到coredns的两个pod都是pending状态,这是因为网络插件还没有安装。我们kubernetes初始化语句可以看出,我用到的是flannel,其实还有其他的网络插件,比如Calico网络,但是我一直配不好,对应的pod总是显示失败状态。Calico网络改天再回过头来研究。

安装flannel比较简单,执行代码如下:

kubectl create -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml如果后面的网址不通,可以先把yml文件下载到本地,然后导入虚拟机执行。上述代码执行结果如下:

[root@master-node share]# kubectl create -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

podsecuritypolicy.extensions/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.extensions/kube-flannel-ds-amd64 created

daemonset.extensions/kube-flannel-ds-arm64 created

daemonset.extensions/kube-flannel-ds-arm created

daemonset.extensions/kube-flannel-ds-ppc64le created

daemonset.extensions/kube-flannel-ds-s390x created再次查看pods,发现都正常了:

[root@master-node share]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-fb8b8dccf-ggw6c 1/1 Running 0 15m

coredns-fb8b8dccf-xwgl5 1/1 Running 0 15m

etcd-master-node 1/1 Running 0 14m

kube-apiserver-master-node 1/1 Running 0 14m

kube-controller-manager-master-node 1/1 Running 0 14m

kube-flannel-ds-amd64-dc92s 1/1 Running 0 2m41s

kube-proxy-rdpv9 1/1 Running 0 15m

kube-scheduler-master-node 1/1 Running 0 14m至此,主节点配置完毕!

计算节点初始化K8S

计算节点就是本问提到的“work-node1”和“work-node2”。计算节点同样需要docker镜像,只是不像主节点那样多。但是为了省事,我们同样执行k8s_docker.sh,这其中可能包含了一些无用的镜像,但是无关紧要。

分别将两个节点定位到“/mnt/share”执行以下命令:

[root@work-node1 ~]# cd /mnt/share/

[root@work-node1 share]# ls

k8s_docker.sh kube-flannel.yml mongodb-linux-x86_64-rhel70-4.0.6 mongodb-linux-x86_64-rhel70-4.0.6.tar mongodb-linux-x86_64-rhel70-4.0.6.tgz

[root@work-node1 share]# ./k8s_docker.sh

==========================================================

Pull Kubernetes v1.14.1 Images from aliyuncs.com ......

==========================================================

v1.14.1: Pulling from google_containers/kube-apiserver

346aee5ea5bc: Pull complete

7f0e834d5a94: Pull complete

Digest: sha256:0c8710b83841950515d3bdea9ad052df00dc730ac22ac07b27c02adaaad30a36

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.14.1

v1.14.1: Pulling from google_containers/kube-controller-manager

346aee5ea5bc: Already exists

f4db69ee8ade: Pull complete

Digest: sha256:f6ddbc332516d73afc7c81fabe47ed6b1e0a43461a0b861aae9608a4692602c0

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.14.1

v1.14.1: Pulling from google_containers/kube-scheduler

346aee5ea5bc: Already exists

b88909b8f99f: Pull complete

Digest: sha256:650dd7e101652a2b98db5fe18e9ff0c09b5530b211529ccf5f62241d21db1ee2

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.14.1

v1.14.1: Pulling from google_containers/kube-proxy

346aee5ea5bc: Already exists

1e695dec1fee: Pull complete

100690d61cf6: Pull complete

Digest: sha256:020d25ff45a33ec7958d7128308cb499d5b24cdaa228a2344514bcab9b7296c0

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.14.1

3.1: Pulling from google_containers/pause

cf9202429979: Pull complete

Digest: sha256:759c3f0f6493093a9043cc813092290af69029699ade0e3dbe024e968fcb7cca

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1

3.3.10: Pulling from google_containers/etcd

90e01955edcd: Pull complete

6369547c492e: Pull complete

bd2b173236d3: Pull complete

Digest: sha256:240bd81c2f54873804363665c5d1a9b8e06ec5c63cfc181e026ddec1d81585bb

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.3.10

1.3.1: Pulling from google_containers/coredns

e0daa8927b68: Pull complete

3928e47de029: Pull complete

Digest: sha256:638adb0319813f2479ba3642bbe37136db8cf363b48fb3eb7dc8db634d8d5a5b

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.3.1

回到主节点,查看当前节点状况:

[root@master-node share]# kubectl get node

NAME STATUS ROLES AGE VERSION

master-node Ready master 27m v1.14.1

work-node1 Ready 88s v1.14.1

work-node2 Ready 52s v1.14.1 发现两个计算节点都已经加进来了。

至此kubernets配置完毕。

接下来的章节将介绍如何配置Dashboard。

参考:

https://www.jianshu.com/p/ad3c712e1d95

https://www.jianshu.com/p/70efa1b853f5

问题集:

开机无法查看pods和nodes?

可能是没有关闭swap分区?导致kubelet没有启动。参考https://blog.csdn.net/nklinsirui/article/details/80855415