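The Intel® Movidius™ Neural Compute Stick (NCS) is a deep learning device with a USB interface. It is slightly larger than a USB flash drive, draws 1 W, and delivers up to 100 GFLOPS of floating-point performance.

What does 100 GFLOPS mean in practice? An i7-8700K manages 59.26 GFLOPS, and a Titan V reaches 24576 GFLOPS at FP16... (for entertainment only: these figures are for different precisions).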
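To put those numbers side by side, here is a small sketch that only does the arithmetic on the figures quoted above. The dictionary and function names are made up for illustration, and since the precisions differ the ratios are no more than a rough guide.

```python
# Peak throughput figures quoted in the text, in GFLOPS.
# The precisions differ, so the comparison is only indicative.
PEAK_GFLOPS = {
    "NCS": 100.0,
    "i7-8700K": 59.26,
    "Titan V (FP16)": 24576.0,
}
NCS_POWER_W = 1.0  # power draw quoted in the text


def ratio_to_ncs(name):
    """Return how many times the NCS peak figure the named device reaches."""
    return PEAK_GFLOPS[name] / PEAK_GFLOPS["NCS"]


for name in PEAK_GFLOPS:
    print(f"{name}: {ratio_to_ncs(name):.2f}x the NCS")
print(f"NCS: {PEAK_GFLOPS['NCS'] / NCS_POWER_W:.0f} GFLOPS per watt")
```

On paper, then, the stick is ahead of the desktop CPU figure and a couple of hundred times behind the Titan V figure, while drawing about one watt.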

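Installing the NCSDK

At the moment the official NCSDK install script only supports Ubuntu 16.04 and Raspbian Stretch, but with a bit of tinkering it runs fine on other Linux systems too. I use ArchLinux, for example, and the rough steps were: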

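- Install Python 3, OpenCV, TensorFlow 1.4.0 and the other dependencies

- Build and install Caffe, which needs this unmerged PR: Fix boost_python discovery for distros with different naming scheme

- Edit the official script to skip the system check and the dependency installation (already done by hand in the first step)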

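Only after I had gotten all of this working did I discover that the AUR already has a ready-made ncsdk package.

After installation, set LD_LIBRARY_PATH and PYTHONPATH: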

    export LD_LIBRARY_PATH=/opt/movidius/bvlc-caffe/build/install/lib64/:/usr/local/lib/
    export PYTHONPATH="${PYTHONPATH}:/opt/movidius/caffe/python"

Compiling the model

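To use the NCS, a model trained with Caffe or TensorFlow has to be converted into a format the NCS supports. Because Keras has a TF backend, Keras models work too. Here I use the VGG16 that ships with Keras as an example: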

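    from keras.applications import VGG16
    from keras import backend as K
    import tensorflow as tf

    # Build VGG16 (Keras loads the pretrained ImageNet weights by default)
    mn = VGG16()

    # Save the Keras session as a TensorFlow checkpoint
    saver = tf.train.Saver()
    sess = K.get_session()
    saver.save(sess, "./TF_Model/vgg16")

Here the TF model is saved directly with tf.train.Saver. It is then compiled with the mvNCCompile command, which needs the network's input and output nodes; -s 12 means use 12 SHAVE processors: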

    $ mvNCCompile TF_Model/vgg16.meta -in=input_1 -on=predictions/Softmax -s 12

If all goes well, you get a graph file.

(I had to use my own modified TensorFlowParser.py for this to work.)
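If you compile several models, it can be handy to assemble the command from a script. The sketch below only rebuilds the exact command line shown above; `build_compile_command` is a hypothetical helper of mine, not part of the NCSDK, and it uses no flags beyond the ones already in this post.

```python
import shlex


def build_compile_command(meta_path, input_node, output_node, shaves=12):
    """Build the mvNCCompile argument list in the same form as the command above.

    Hypothetical helper: it only mirrors the flags used in this post
    (-in, -on, -s) and does not check that the nodes exist in the graph.
    """
    return [
        "mvNCCompile",
        meta_path,
        "-in=" + input_node,
        "-on=" + output_node,
        "-s",
        str(shaves),
    ]


cmd = build_compile_command("TF_Model/vgg16.meta", "input_1", "predictions/Softmax")
# Print a shell-safe version of the command; to actually run it,
# pass the list to subprocess.run(cmd, check=True).
print(" ".join(shlex.quote(arg) for arg in cmd))
```

Keeping the arguments as a list rather than a single string avoids quoting problems if a path contains spaces.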

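Tuning the model

The mvNCProfile command shows the memory bandwidth and compute used by each layer of the model, which is a useful reference when tuning it.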

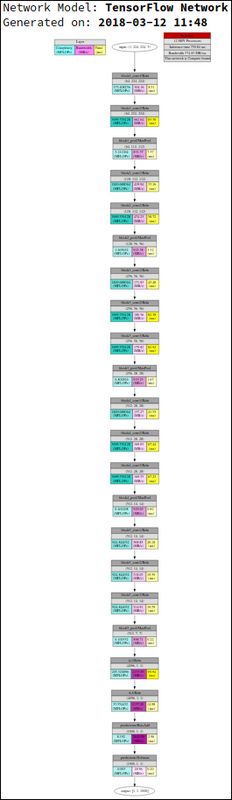

    $ mvNCProfile TF_Model/vgg16.meta -in=input_1 -on=predictions/Softmax -s 12

    Detailed Per Layer Profile
                                          Bandwidth     time
    #   Name                      MFLOPs     (MB/s)     (ms)
    ========================================================
    0   block1_conv1/Relu          173.4      304.2    8.510
    1   block1_conv2/Relu         3699.4      662.6   83.297
    2   block1_pool/MaxPool          3.2      831.6    7.366
    3   block2_conv1/Relu         1849.7      419.9   33.158
    4   block2_conv2/Relu         3699.4      474.2   58.718
    5   block2_pool/MaxPool          1.6      923.4    3.317
    6   block3_conv1/Relu         1849.7      171.8   43.401
    7   block3_conv2/Relu         3699.4      180.6   82.579
    8   block3_conv3/Relu         3699.4      179.8   82.921
    9   block3_pool/MaxPool          0.8      919.2    1.666
    10  block4_conv1/Relu         1849.7      137.3   41.554
    11  block4_conv2/Relu         3699.4      169.0   67.442
    12  block4_conv3/Relu         3699.4      169.6   67.232
    13  block4_pool/MaxPool          0.4      929.7    0.825
    14  block5_conv1/Relu          924.8      308.9   20.176
    15  block5_conv2/Relu          924.8      318.0   19.594
    16  block5_conv3/Relu          924.8      314.9   19.788
    17  block5_pool/MaxPool          0.1      888.7    0.216
    18  fc1/Relu                   205.5     2155.9   90.937
    19  fc2/Relu                    33.6     2137.2   14.980
    20  predictions/BiasAdd          8.2     2645.0    2.957
    21  predictions/Softmax          0.0       19.0    0.201
    --------------------------------------------------------
    Total inference time                              750.84
    --------------------------------------------------------

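    Generating Profile Report 'output_report.html'...

As you can see, a single VGG16 inference takes about 750 ms, and most of that time is spent in the convolutional layers, so this model is not suitable for real-time video analysis. In that case it is worth trying other networks; SqueezeNet, for example, needs only 48 ms: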

    Detailed Per Layer Profile
                                          Bandwidth     time
    #   Name                      MFLOPs     (MB/s)     (ms)
    ========================================================
    0   data                         0.0    78350.0    0.004
    1   conv1                      347.7     1622.7    8.926
    2   pool1                        2.6     1440.0    1.567
    3   fire2/squeeze1x1             9.3     1214.8    0.458
    4   fire2/expand1x1              6.2      155.2    0.608
    5   fire2/expand3x3             55.8      476.3    1.783
    6   fire3/squeeze1x1            12.4     1457.4    0.509
    7   fire3/expand1x1              6.2      152.6    0.618
    8   fire3/expand3x3             55.8      478.3    1.776
    9   fire4/squeeze1x1            24.8     1022.0    0.730
    10  fire4/expand1x1             24.8      176.2    1.093
    11  fire4/expand3x3            223.0      389.7    4.450
    12  pool4                        1.7     1257.7    1.174
    13  fire5/squeeze1x1            11.9      780.3    0.476
    14  fire5/expand1x1              6.0      154.0    0.341
    15  fire5/expand3x3             53.7      359.8    1.314
    16  fire6/squeeze1x1            17.9      639.4    0.593
    17  fire6/expand1x1             13.4      159.5    0.531
    18  fire6/expand3x3            120.9      259.7    2.935
    19  fire7/squeeze1x1            26.9      826.1    0.689
    20  fire7/expand1x1             13.4      159.7    0.530
    21  fire7/expand3x3            120.9      255.2    2.987
    22  fire8/squeeze1x1            35.8      727.0    0.799
    23  fire8/expand1x1             23.9      164.0    0.736
    24  fire8/expand3x3            215.0      191.4    5.677
    25  pool8                        0.8     1263.8    0.563
    26  fire9/squeeze1x1            11.1      585.9    0.388
    27  fire9/expand1x1              5.5      154.1    0.340
    28  fire9/expand3x3             49.8      283.2    1.664
    29  conv10                     173.1      335.8    3.400
    30  pool10                       0.3      676.1    0.477
    31  prob                         0.0        9.5    0.200
    --------------------------------------------------------
    Total inference time                               48.34
    --------------------------------------------------------
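To make the comparison concrete, here is a rough sketch that works directly on the numbers in the two profiles: it adds up the time spent in VGG16's convolutional layers and converts both total inference times into an upper bound on frames per second. The helper names are my own, and the frame rates ignore USB transfer, capture and preprocessing, so real throughput will be lower.

```python
# (layer name, time in ms) copied from the VGG16 profile above.
VGG16_LAYER_MS = [
    ("block1_conv1/Relu", 8.510), ("block1_conv2/Relu", 83.297),
    ("block1_pool/MaxPool", 7.366),
    ("block2_conv1/Relu", 33.158), ("block2_conv2/Relu", 58.718),
    ("block2_pool/MaxPool", 3.317),
    ("block3_conv1/Relu", 43.401), ("block3_conv2/Relu", 82.579),
    ("block3_conv3/Relu", 82.921), ("block3_pool/MaxPool", 1.666),
    ("block4_conv1/Relu", 41.554), ("block4_conv2/Relu", 67.442),
    ("block4_conv3/Relu", 67.232), ("block4_pool/MaxPool", 0.825),
    ("block5_conv1/Relu", 20.176), ("block5_conv2/Relu", 19.594),
    ("block5_conv3/Relu", 19.788), ("block5_pool/MaxPool", 0.216),
    ("fc1/Relu", 90.937), ("fc2/Relu", 14.980),
    ("predictions/BiasAdd", 2.957), ("predictions/Softmax", 0.201),
]
VGG16_TOTAL_MS = 750.84       # "Total inference time" for VGG16
SQUEEZENET_TOTAL_MS = 48.34   # "Total inference time" for SqueezeNet


def time_share(layers, keyword):
    """Fraction of the summed layer time spent in layers whose name contains keyword."""
    total = sum(ms for _, ms in layers)
    matched = sum(ms for name, ms in layers if keyword in name)
    return matched / total


def frames_per_second(inference_ms):
    """Upper bound on frame rate if inference were the only cost per frame."""
    return 1000.0 / inference_ms


print(f"VGG16 conv share: {time_share(VGG16_LAYER_MS, 'conv'):.1%}")
print(f"VGG16:      {frames_per_second(VGG16_TOTAL_MS):.1f} fps at most")
print(f"SqueezeNet: {frames_per_second(SQUEEZENET_TOTAL_MS):.1f} fps at most")
```

The convolutional layers account for well over four fifths of VGG16's time, and at barely more than one frame per second it is clearly too slow for live video, whereas SqueezeNet lands at around twenty.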

Inference

With the graph file in hand, we can load it onto the NCS and run inference:

    from mvnc import mvncapi as mvnc

    # Enumerate the attached devices
    devices = mvnc.EnumerateDevices()

    # Open the first NCS
    device = mvnc.Device(devices[0])
    device.OpenDevice()

    # Read the graph file
    with open("graph", mode='rb') as f:
        graphfile = f.read()

    # Load the graph onto the device
    graph = device.AllocateGraph(graphfile)

Once the graph is loaded, graph.LoadTensor gives it an input and graph.GetResult returns the result.

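    import cv2
    import numpy
    from keras.applications.vgg16 import preprocess_input, decode_predictions

    # Open the default camera (device index 0 is an assumption; the original snippet omits this step)
    cap = cv2.VideoCapture(0)

    # Grab a frame from the camera
    ret, frame = cap.read()

    # Preprocess: BGR to RGB, resize to 224x224, then apply the VGG16 preprocessing
    img = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)
    img = cv2.resize(img, (224, 224))
    img = preprocess_input(img.astype('float32'))

    # Send the input to the NCS as float16
    graph.LoadTensor(img.astype(numpy.float16), 'user object')

    # Fetch the result
    output, userobj = graph.GetResult()
    result = decode_predictions(output.reshape(1, 1000))

It recognizes a mouse: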

A Kindle and an iPod do look fairly similar, I suppose :)
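For anyone curious what happens to the 1000 numbers that come back from the stick: decode_predictions ranks the class probabilities and returns the top few (five by default) together with their ImageNet labels. The sketch below shows only the ranking step in plain Python; `top_k` is an illustrative name of mine and the label lookup is left out.

```python
import heapq


def top_k(probabilities, k=5):
    """Return the k (class index, probability) pairs with the highest probability, best first."""
    return heapq.nlargest(k, enumerate(probabilities), key=lambda pair: pair[1])


# Toy example with five classes instead of 1000.
scores = [0.01, 0.05, 0.60, 0.30, 0.04]
print(top_k(scores, k=2))
```

When two classes look alike, as with the Kindle and the iPod, they tend to show up next to each other near the top of this ranking.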

The complete code is in oraoto/learn_ml/ncs, with MNIST and VGG16 examples.