I. Hive Cluster Installation

1. Install Hadoop, then start HDFS and YARN.

2. Download Hive 1.2.1

http://apache.fayea.com/hive/hive-1.2.1/

apache-hive-1.2.1-bin.tar.gz

Upload the file to the cluster.

3. Install Hive

root@spark-master:~# ls
apache-hive-1.2.1-bin.tar.gz  core  links-anon.txtaaa  公共的  模板  视频  图片  文档  下载  音乐  桌面
root@spark-master:~# mkdir /usr/local/hive
root@spark-master:~# tar -zxvf apache-hive-1.2.1-bin.tar.gz -C /usr/local/hive/

Rename the Hive directory:

root@spark-master:~# cd /usr/local/hive/
root@spark-master:/usr/local/hive# ls
apache-hive-1.2.1-bin
root@spark-master:/usr/local/hive# mv apache-hive-1.2.1-bin/ apache-hive-1.2.1
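You can also script the extract and rename steps. The sketch below assumes the tarball name and directory layout used above. `install_hive` is a hypothetical helper name and is not part of Hive.

```shell
#!/bin/sh
# Sketch: extract the Hive tarball into DEST and rename
# apache-hive-1.2.1-bin to apache-hive-1.2.1, as done by hand above.
# install_hive is a hypothetical helper; names assume Hive 1.2.1.
install_hive() {
    tarball=$1   # e.g. apache-hive-1.2.1-bin.tar.gz
    dest=$2      # e.g. /usr/local/hive
    mkdir -p "$dest" || return 1
    tar -zxf "$tarball" -C "$dest" || return 1
    # Guard: if apache-hive-1.2.1 already exists, mv would move the
    # -bin directory *inside* it instead of renaming it
    if [ ! -e "$dest/apache-hive-1.2.1" ]; then
        mv "$dest/apache-hive-1.2.1-bin" "$dest/apache-hive-1.2.1" || return 1
    fi
}
```

For example, `install_hive apache-hive-1.2.1-bin.tar.gz /usr/local/hive` performs the same mkdir, tar and mv commands shown above.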

4. Install MySQL

root@spark-master:/usr/local/hive# dpkg -l|grep mysql
root@spark-master:/usr/local/hive# apt-get install mysql-server

When the installer dialog appears, enter a password for the MySQL root user.

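After the installation succeeds, run mysql_secure_installation to set up the new server (root password, anonymous users, test database):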

root@spark-master:/usr/bin# /usr/bin/mysql_secure_installation

Log in to MySQL:

root@spark-master:/usr/bin# mysql -uroot -p
Enter password:
Welcome to the MySQL monitor.  Commands end with ; or \g.
Your MySQL connection id is 48
Server version: 5.5.47-0ubuntu0.14.04.1 (Ubuntu)
Copyright (c) 2000, 2015, Oracle and/or its affiliates. All rights reserved.
Oracle is a registered trademark of Oracle Corporation and/or its affiliates. Other names may be trademarks of their respective owners.
Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.
mysql>

Grant privileges to the root user so it can log in remotely (for example, so that a Windows client can connect to the MySQL database):

mysql> select user,host from mysql.user;
+------------------+-----------+
| user             | host      |
+------------------+-----------+
| root             | 127.0.0.1 |
| root             | ::1       |
| debian-sys-maint | localhost |
| root             | localhost |
+------------------+-----------+
4 rows in set (0.00 sec)
mysql> GRANT ALL PRIVILEGES ON *.* TO 'root'@'%' IDENTIFIED BY 'vincent' WITH GRANT OPTION;
Query OK, 0 rows affected (0.00 sec)
mysql> FLUSH PRIVILEGES;
Query OK, 0 rows affected (0.00 sec)
mysql> select user,host from mysql.user;
+------------------+-----------+
| user             | host      |
+------------------+-----------+
| root             | %         |
| root             | 127.0.0.1 |
| root             | ::1       |
| debian-sys-maint | localhost |
| root             | localhost |
+------------------+-----------+
5 rows in set (0.00 sec)
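You can also apply the grant without an interactive session by piping the statements into the mysql client. This is a sketch. `grant_remote_root` is a hypothetical helper, and its argument stands in for the example password 'vincent' used above. The MySQL 5.5 server shown here accepts `IDENTIFIED BY` inside `GRANT`. MySQL 8.0 removed this form, so on 8.0 you must create the user with `CREATE USER` first.

```shell
#!/bin/sh
# Sketch: run the GRANT / FLUSH statements from above non-interactively.
# grant_remote_root is a hypothetical helper; $1 is the password the
# remote root account (and later the Hive metastore) will use.
grant_remote_root() {
    new_pass=$1
    # -p makes the client prompt for the *current* root password.
    # Heredoc bodies are not glob-expanded, so *.* reaches MySQL as-is.
    mysql -uroot -p <<EOF
GRANT ALL PRIVILEGES ON *.* TO 'root'@'%' IDENTIFIED BY '${new_pass}' WITH GRANT OPTION;
FLUSH PRIVILEGES;
SELECT user, host FROM mysql.user;
EOF
}
```

Usage: `grant_remote_root vincent`. The password appears only in the SQL sent on stdin, not on the mysql command line.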

Change the address MySQL listens on:

root@spark-master:/usr/bin# vi /etc/mysql/my.cnf

Comment out line 47, the bind-address setting, so MySQL stops listening only on 127.0.0.1:

47 #bind-address = 127.0.0.1
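Instead of editing my.cnf in vi, you can comment out the line with sed. This sketch assumes the Ubuntu 14.04 / MySQL 5.5 layout, where bind-address lives in /etc/mysql/my.cnf. Newer packages may keep it in another file under /etc/mysql/. `disable_bind_address` is a hypothetical helper, and it relies on GNU sed's `-i`.

```shell
#!/bin/sh
# Sketch: comment out bind-address in my.cnf, keeping a backup copy.
# disable_bind_address is a hypothetical helper; the default path assumes
# Ubuntu 14.04 with MySQL 5.5.
disable_bind_address() {
    cnf=${1:-/etc/mysql/my.cnf}
    cp "$cnf" "$cnf.bak" || return 1
    # Prefix '#' to any line whose first non-blank text is bind-address;
    # lines that are already commented out do not match.
    sed -i 's/^\([[:space:]]*bind-address\)/#\1/' "$cnf"
}
```

After running it, restart the server as in the next step. On Ubuntu 14.04 the command is `service mysql restart`.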

Restart MySQL:

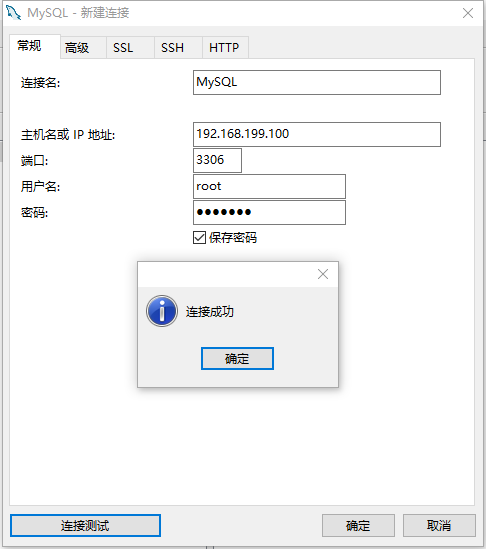

Connect to the MySQL database with Navicat.

5. Configure Hive

5.1 Edit hive-env.sh and add the following entries:

root@spark-master:/usr/local/hive/apache-hive-1.2.1/conf# pwd
/usr/local/hive/apache-hive-1.2.1/conf
root@spark-master:/usr/local/hive/apache-hive-1.2.1/conf# cp hive-env.sh.template hive-env.sh
root@spark-master:/usr/local/hive/apache-hive-1.2.1/conf# vi hive-env.sh
export HIVE_HOME=/usr/local/hive/apache-hive-1.2.1/
export HIVE_CONF_DIR=/usr/local/hive/apache-hive-1.2.1/conf
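You can produce the same hive-env.sh without an editor. In this sketch, `configure_hive_env` is a hypothetical helper that copies the template and appends the two exports. The default HIVE_HOME is the install path used in this guide. Each run starts from a fresh copy of the template, so running it twice does not duplicate the exports.

```shell
#!/bin/sh
# Sketch: create conf/hive-env.sh from the template and append
# HIVE_HOME / HIVE_CONF_DIR. configure_hive_env is a hypothetical helper.
configure_hive_env() {
    hive_home=${1:-/usr/local/hive/apache-hive-1.2.1}
    conf="$hive_home/conf"
    # Overwrite any previous hive-env.sh with a clean copy of the template
    cp "$conf/hive-env.sh.template" "$conf/hive-env.sh" || return 1
    # Unquoted EOF: $hive_home and $conf are expanded when writing
    cat >> "$conf/hive-env.sh" <<EOF
export HIVE_HOME=$hive_home
export HIVE_CONF_DIR=$conf
EOF
}
```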

5.2 Edit hive-config.sh

root@spark-master:/usr/local/hive/apache-hive-1.2.1/bin# vi hive-config.sh

Append the following at the end of the file:

export JAVA_HOME=/usr/lib/java/jdk1.8.0_60
export HADOOP_HOME=/usr/local/hadoop/hadoop-2.6.0
export SPARK_HOME=/usr/local/spark/spark-1.6.0-bin-hadoop2.6/
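You can append these exports from a script too. The paths are the JDK, Hadoop and Spark locations from this guide, so change them to match your own machines. `append_hive_config` is a hypothetical helper. The marker comment is an added assumption that makes sure the block is appended only once.

```shell
#!/bin/sh
# Sketch: append JAVA_HOME / HADOOP_HOME / SPARK_HOME to bin/hive-config.sh.
# append_hive_config is a hypothetical helper; the marker line lets a
# second run detect that the exports are already present.
append_hive_config() {
    cfg=$1   # path to bin/hive-config.sh
    marker='# hive-setup: JDK, Hadoop and Spark locations'
    grep -qxF "$marker" "$cfg" && return 0
    cat >> "$cfg" <<EOF
$marker
export JAVA_HOME=/usr/lib/java/jdk1.8.0_60
export HADOOP_HOME=/usr/local/hadoop/hadoop-2.6.0
export SPARK_HOME=/usr/local/spark/spark-1.6.0-bin-hadoop2.6/
EOF
}
```

Usage: `append_hive_config /usr/local/hive/apache-hive-1.2.1/bin/hive-config.sh`.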

5.3 Edit hive-site.xml

root@spark-master:/usr/local/hive/apache-hive-1.2.1/conf# cp hive-default.xml.template hive-site.xml
root@spark-master:/usr/local/hive/apache-hive-1.2.1/conf# vi hive-site.xml

For simplicity, only the following MySQL metastore connection properties are configured:

javax.jdo.option.ConnectionURL = jdbc:mysql://spark-master:3306/hive?createDatabaseIfNotExist=true (JDBC connect string for a JDBC metastore)
javax.jdo.option.ConnectionDriverName = com.mysql.jdbc.Driver (Driver class name for a JDBC metastore)
javax.jdo.option.ConnectionUserName = root (Username to use against metastore database)
javax.jdo.option.ConnectionPassword = vincent (password to use against metastore database)
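One way to keep the configuration simple is to write a minimal hive-site.xml that contains only these four properties, rather than editing the full template. This is a sketch. `write_hive_site` is a hypothetical helper, and the host, user and password are the example values above. The quoted heredoc delimiter ('EOF') stops the shell from expanding anything inside the XML.

```shell
#!/bin/sh
# Sketch: write a hive-site.xml holding only the four metastore properties.
# write_hive_site is a hypothetical helper; values are this guide's examples.
write_hive_site() {
    out=$1   # e.g. /usr/local/hive/apache-hive-1.2.1/conf/hive-site.xml
    cat > "$out" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>javax.jdo.option.ConnectionURL</name>
    <value>jdbc:mysql://spark-master:3306/hive?createDatabaseIfNotExist=true</value>
    <description>JDBC connect string for a JDBC metastore</description>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionDriverName</name>
    <value>com.mysql.jdbc.Driver</value>
    <description>Driver class name for a JDBC metastore</description>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionUserName</name>
    <value>root</value>
    <description>Username to use against metastore database</description>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionPassword</name>
    <value>vincent</value>
    <description>password to use against metastore database</description>
  </property>
</configuration>
EOF
}
```

If you later add more JDBC parameters to the URL, write the `&` between them as `&amp;` inside the XML.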

5.4 Upload the MySQL JDBC driver, mysql-connector-java-5.1.13-bin.jar, to Hive's lib directory:

root@spark-master:/usr/local/hive/apache-hive-1.2.1/lib# ls mysql-connector-java-5.1.13-bin.jar
mysql-connector-java-5.1.13-bin.jar
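A quick check that the driver jar is really in Hive's lib directory is useful, because otherwise a missing driver only shows up as an error when the CLI starts. In this sketch, `has_mysql_driver` is a hypothetical helper that accepts any mysql-connector-java-*.jar version.

```shell
#!/bin/sh
# Sketch: succeed if a MySQL Connector/J jar is present in Hive's lib dir.
# has_mysql_driver is a hypothetical helper; the default path is this guide's.
has_mysql_driver() {
    lib=${1:-/usr/local/hive/apache-hive-1.2.1/lib}
    for jar in "$lib"/mysql-connector-java-*.jar; do
        # With no match the glob stays literal, so the -f test fails
        [ -f "$jar" ] && { echo "found: $jar"; return 0; }
    done
    echo "no mysql-connector-java jar in $lib" >&2
    return 1
}
```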

6. Start HDFS and YARN

root@spark-master:/usr/local/hadoop/hadoop-2.6.0/sbin# ./start-dfs.sh
root@spark-master:/usr/local/hadoop/hadoop-2.6.0/sbin# ./start-yarn.sh
root@spark-master:/usr/local/hadoop/hadoop-2.6.0/sbin# jps
16336 ResourceManager
15974 NameNode
16183 SecondaryNameNode
16574 Jps
root@spark-master:/usr/local/hadoop/hadoop-2.6.0/sbin#
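A script can check the jps listing. The expected set is the one shown above for this master node. DataNode and NodeManager do not appear there, presumably because they run on the worker nodes, so the sketch does not look for them. `check_daemons` is a hypothetical helper that reads jps output on stdin.

```shell
#!/bin/sh
# Sketch: verify the master-side Hadoop daemons appear in jps output.
# check_daemons is a hypothetical helper; it reads "<pid> <Name>" lines
# from stdin and returns non-zero if any expected daemon is missing.
check_daemons() {
    listing=$(cat)
    missing=0
    for d in NameNode SecondaryNameNode ResourceManager; do
        # Compare the second field exactly, so NameNode != SecondaryNameNode
        if ! printf '%s\n' "$listing" | awk -v d="$d" '$2 == d { f = 1 } END { exit !f }'; then
            echo "missing: $d" >&2
            missing=1
        fi
    done
    return $missing
}
```

Usage: `jps | check_daemons` prints the name of any missing daemon and returns non-zero.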

7. Start the Hive client

root@spark-master:/usr/local/hive/apache-hive-1.2.1/bin# ./hive
SLF4J: Class path contains multiple SLF4J bindings.
SLF4J: Found binding in [jar:file:/usr/local/hadoop/hadoop-2.6.0/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/usr/local/spark/spark-1.6.0-bin-hadoop2.6/lib/spark-assembly-1.6.0-hadoop2.6.0.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
SLF4J: Class path contains multiple SLF4J bindings.
SLF4J: Found binding in [jar:file:/usr/local/hadoop/hadoop-2.6.0/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/usr/local/spark/spark-1.6.0-bin-hadoop2.6/lib/spark-assembly-1.6.0-hadoop2.6.0.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
Logging initialized using configuration in jar:file:/usr/local/hive/apache-hive-1.2.1/lib/hive-common-1.2.1.jar!/hive-log4j.properties
[ERROR] Terminal initialization failed; falling back to unsupported
java.lang.IncompatibleClassChangeError: Found class jline.Terminal, but interface was expected

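Startup fails with a jline error. Hive 1.2.1 ships jline-2.12.jar, in which jline.Terminal is an interface. Hadoop 2.6.0 still has the old jline-0.9.94.jar, in which jline.Terminal is a class, in share/hadoop/yarn/lib, and that old jar is picked up first.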

Fix: copy Hive's newer jline jar into Hadoop's YARN lib directory and delete the old one.

root@spark-master:/usr/local/hive/apache-hive-1.2.1/lib# cp jline-2.12.jar $HADOOP_HOME/share/hadoop/yarn/lib/
# Remove the old version
root@spark-master:/usr/local/hadoop/hadoop-2.6.0/share/hadoop/yarn/lib# rm jline-0.9.94.jar
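You can script the jar swap and keep a backup instead of deleting the old jar outright. In this sketch, `swap_jline` is a hypothetical helper and the jar names are the ones in this guide. Renaming the old jar to `.bak` takes it off the classpath, assuming Hadoop adds the directory through a `lib/*` wildcard, which only matches `.jar` files. Another commonly cited workaround leaves the jars untouched: run `export HADOOP_USER_CLASSPATH_FIRST=true` before starting `hive`.

```shell
#!/bin/sh
# Sketch: copy Hive's jline-2.12.jar into YARN's lib dir and move the old
# jline-0.9.94.jar aside. swap_jline is a hypothetical helper.
swap_jline() {
    hive_lib=$1   # e.g. /usr/local/hive/apache-hive-1.2.1/lib
    yarn_lib=$2   # e.g. $HADOOP_HOME/share/hadoop/yarn/lib
    cp "$hive_lib/jline-2.12.jar" "$yarn_lib/" || return 1
    # A .bak name is no longer matched by a lib/* classpath wildcard
    if [ -f "$yarn_lib/jline-0.9.94.jar" ]; then
        mv "$yarn_lib/jline-0.9.94.jar" "$yarn_lib/jline-0.9.94.jar.bak"
    fi
}
```

Usage: `swap_jline /usr/local/hive/apache-hive-1.2.1/lib $HADOOP_HOME/share/hadoop/yarn/lib`.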

Start it again:

root@spark-master:/usr/local/hive/apache-hive-1.2.1/bin# ./hive
SLF4J: Class path contains multiple SLF4J bindings.
SLF4J: Found binding in [jar:file:/usr/local/hadoop/hadoop-2.6.0/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/usr/local/spark/spark-1.6.0-bin-hadoop2.6/lib/spark-assembly-1.6.0-hadoop2.6.0.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
SLF4J: Class path contains multiple SLF4J bindings.
SLF4J: Found binding in [jar:file:/usr/local/hadoop/hadoop-2.6.0/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/usr/local/spark/spark-1.6.0-bin-hadoop2.6/lib/spark-assembly-1.6.0-hadoop2.6.0.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
Logging initialized using configuration in jar:file:/usr/local/hive/apache-hive-1.2.1/lib/hive-common-1.2.1.jar!/hive-log4j.properties
hive>

Test:

hive> create table t1(a string,b int);
OK
Time taken: 1.67 seconds
hive> show tables
    > ;
OK
t1
Time taken: 0.29 seconds, Fetched: 1 row(s)

Now check whether the table's metadata exists in MySQL.
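You can run this check from the mysql client by querying the metastore tables. The sketch assumes the metastore database is `hive` (from the ConnectionURL above) and uses the standard metastore tables `DBS` and `TBLS`. `show_metastore_tables` is a hypothetical helper. If the setup worked, table t1 should appear in the result.

```shell
#!/bin/sh
# Sketch: list tables recorded in the Hive metastore stored in MySQL.
# show_metastore_tables is a hypothetical helper; it assumes the metastore
# database is named "hive" and uses the metastore tables DBS and TBLS.
show_metastore_tables() {
    mysql -uroot -p hive <<'EOF'
SELECT d.NAME AS db_name, t.TBL_NAME, t.TBL_TYPE
FROM TBLS t JOIN DBS d ON t.DB_ID = d.DB_ID;
EOF
}
```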

The metadata has been synced to MySQL!