Kubernetes Source Code Analysis: Ingress (Part 1)

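Kubernetes services are usually exposed externally in one of three ways: NodePort, LoadBalancer, and Ingress. NodePort is easy to understand: a service port is opened on every host and exposed, with the drawback that it wastes ports. LoadBalancer, at the time of writing, only works well on GCE; it hooks into the cloud platform's load balancer outside the cluster. Ingress, available since Kubernetes 1.2, forwards traffic through a software load balancer (Nginx, HAProxy) started on the compute nodes.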
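For comparison with the Ingress setup used in the rest of this post, a NodePort Service might look roughly like the sketch below. The Service name, the `app` label, and all port numbers are hypothetical and chosen only for illustration.

```yaml
# Hypothetical example: names, labels, and ports are illustrative only.
apiVersion: v1
kind: Service
metadata:
  name: my-web
spec:
  type: NodePort
  selector:
    app: my-web
  ports:
  - port: 80          # Service port inside the cluster
    targetPort: 8080  # container port on the pods
    nodePort: 30080   # opened on every node; default range is 30000-32767
```

Each Service exposed this way occupies its own port on every node, which is the port waste mentioned above.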

In this first post I want to cover installation and usage.

First, start a backend service:

    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: default-http-backend
      labels:
        k8s-app: default-http-backend
      namespace: kube-system
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            k8s-app: default-http-backend
        spec:
          terminationGracePeriodSeconds: 60
          nodeName: slave2
          containers:
          - name: default-http-backend
            image: gcr.io/google_containers/defaultbackend:1.0
            imagePullPolicy: IfNotPresent
            livenessProbe:
              httpGet:
                path: /healthz
                port: 8080
                scheme: HTTP
              initialDelaySeconds: 30
              timeoutSeconds: 5
            ports:
            - containerPort: 8080
            resources:
              limits:
                cpu: 10m
                memory: 20Mi
              requests:
                cpu: 10m
                memory: 20Mi
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: default-http-backend
      namespace: kube-system
      labels:
        k8s-app: default-http-backend
    spec:
      ports:
      - port: 80
        targetPort: 8080
      selector:
        k8s-app: default-http-backend

Because I only have a few machines, I pin this backend to a specific node (`nodeName: slave2`). The Service listens on port 80 and acts as the default backend for requests that match no rule. To verify:

    curl 10.254.12.58:80
    default backend - 404

Next comes the ingress controller, which is essentially a load balancer configurator:

    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: nginx-ingress-controller
      labels:
        k8s-app: nginx-ingress-controller
      namespace: kube-system
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            k8s-app: nginx-ingress-controller
          annotations:
            prometheus.io/port: '10254'
            prometheus.io/scrape: 'true'
        spec:
          terminationGracePeriodSeconds: 60
          nodeName: slave1
          containers:
          - image: docker.io/chancefocus/nginx-ingress-controller
            imagePullPolicy: IfNotPresent
            name: nginx-ingress-controller
            readinessProbe:
              httpGet:
                path: /healthz
                port: 10254
                scheme: HTTP
            livenessProbe:
              httpGet:
                path: /healthz
                port: 10254
                scheme: HTTP
              initialDelaySeconds: 10
              timeoutSeconds: 1
            ports:
            - containerPort: 80
              hostPort: 80
            - containerPort: 443
              hostPort: 443
            env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
            args:
            - /nginx-ingress-controller
            - --default-backend-service=kube-system/default-http-backend
            - --apiserver-host=http://10.39.0.6:8080

The ingress controller watches Kubernetes resources and updates the load-balancing rules accordingly. With it running, a load-balancing rule can be created:

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      name: test
      namespace: kube-system
      annotations:
        ingress.kubernetes.io/force-ssl-redirect: "false"
        ingress.kubernetes.io/ssl-redirect: "false"
    spec:
      rules:
      - http:
          paths:
          - path: /api
            backend:
              serviceName: heapster
              servicePort: 80

This forwards requests for /api to the heapster Service. At the same level as `http`, a rule can take one more field, which specifies the domain name:

    - host: xx.xxx.xxx
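To make the placement of `host` concrete, here is a minimal sketch of the same rule restricted to one domain. The host name `heapster.example.com` and the Ingress name `test-with-host` are placeholders, not values from the setup above. It uses the same `extensions/v1beta1` API as the rest of this post; newer clusters serve Ingress from `networking.k8s.io/v1`, which has a different backend syntax.

```yaml
# Hypothetical example: the host name and Ingress name are placeholders.
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: test-with-host
  namespace: kube-system
spec:
  rules:
  - host: heapster.example.com   # only requests with this Host header match
    http:
      paths:
      - path: /api
        backend:
          serviceName: heapster
          servicePort: 80
```

With a rule like this, a request would presumably need to carry the matching header, for example `curl -H "Host: heapster.example.com" http://<node-ip>/api/...`; requests for other hosts would fall through to the default backend.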

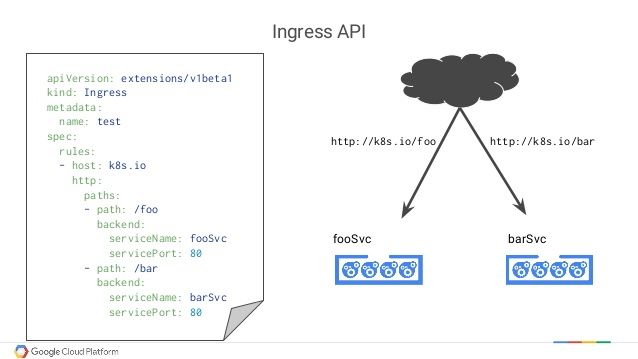

下面是来自google的解说图片

Now we can test.

First confirm that the service itself is OK. 10.254.51.153 is the clusterIP and 192.168.90.6 is the container IP.

    curl http://10.254.51.153/api/v1/model/namespaces/default/pods/busybox-1865195333-zkwtt/containers/busybox/metrics/cpu/usage
    {
      "metrics": [
        {
          "timestamp": "2017-04-20T02:20:00Z",
          "value": 7412767
        },
        {
          "timestamp": "2017-04-20T02:21:00Z",
          "value": 7412767
        },
        {
          "timestamp": "2017-04-20T02:22:00Z",
          "value": 7412767
        }
      ],
      "latestTimestamp": "2017-04-20T02:22:00Z"
    }

    curl http://192.168.90.6:8082/api/v1/model/namespaces/default/pods/busybox-1865195333-zkwtt/containers/busybox/metrics/cpu/usage
    {
      "metrics": [
        {
          "timestamp": "2017-04-20T02:44:00Z",
          "value": 8960064
        },
        {
          "timestamp": "2017-04-20T02:45:00Z",
          "value": 8960064
        },
        {
          "timestamp": "2017-04-20T02:46:00Z",
          "value": 8960064
        }
      ],
      "latestTimestamp": "2017-04-20T02:46:00Z"
    }

After that, the service can be reached from any machine through port 80 on slave1:

    curl http://10.39.0.17/api/v1/model/namespaces/default/pods/busybox-1865195333-zkwtt/containers/busybox/metrics/cpu/usage
    {
      "metrics": [
        {
          "timestamp": "2017-04-20T02:42:00Z",
          "value": 8960064
        },
        {
          "timestamp": "2017-04-20T02:43:00Z",
          "value": 8960064
        }
      ],
      "latestTimestamp": "2017-04-20T02:43:00Z"
    }

Port 80 can now be shared by multiple services. Next, go into the container and look at the nginx.conf file:

    upstream kube-system-heapster-80 {
        least_conn;
        server 192.168.90.6:8082 max_fails=0 fail_timeout=0;
    }

    upstream upstream-default-backend {
        least_conn;
        server 192.168.90.2:8080 max_fails=0 fail_timeout=0;
    }

    location /api {
        set $proxy_upstream_name "kube-system-heapster-80";
        ...
    }

    location / {
        set $proxy_upstream_name "upstream-default-backend";
        ...
    }

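As you can see, the controller simply rewrites the Nginx configuration to forward traffic. That covers basic installation and usage. One detail is worth noting: why do the upstreams point at container addresses rather than the Service address? There are two main reasons. First, an extra forwarding hop reduces efficiency. Second, it preserves sessions: going through the Service would round-robin requests across the backends, so a session could not be kept on one of them. The following posts will walk through the code in detail.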