Setting up a Canal MySQL incremental log subscription & consumption environment with Docker

https://github.com/alibaba/canal

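Canal: Alibaba's component for incremental subscription and consumption of the MySQL binlog. Alibaba Cloud DRDS ( https://www.aliyun.com/product/drds ), Alibaba TDDL secondary indexes, and small-table replication are powered by canal. Aliyun Data Lake Analytics: https://www.aliyun.com/product/datalakeanalytics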

目录

开启Canal之旅

Docker快速开始

MySQL要求

运行Canal容器

错误运行方式

正确运行方式一

正确运行方式二

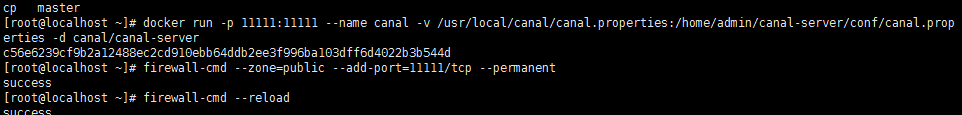

开启服务器端口访问

Java测试客户端

错误运行容器测试结果

正常运行容器测试结果

数据库异常问题

Getting started with Canal

Docker quick start

https://github.com/alibaba/canal/wiki/Docker-QuickStart

MySQL requirements

https://github.com/alibaba/canal/wiki/AdminGuide

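a. The current open-source version of canal supports MySQL 5.7 and below (versions used inside Alibaba: MySQL 5.7.13, 5.6.10, 5.5.18, and 5.1.40/48). P.S. MySQL 4.x has not been rigorously tested, but should be compatible in theory.

b. canal works on top of the MySQL binlog, so binlog writing must be enabled in MySQL and the binlog format must be set to ROW.

[mysqld]

log-bin=mysql-bin # adding this line is enough to enable the binlog

binlog-format=ROW # use ROW format

server_id=1 # required for MySQL replication; must not be the same as canal's slaveId

After restarting the database, a quick check that the my.cnf settings took effect: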

mysql> show variables like 'binlog_format';

+---------------+-------+

| Variable_name | Value |

+---------------+-------+

| binlog_format | ROW |

+---------------+-------+

mysql> show variables like 'log_bin';

+---------------+-------+

| Variable_name | Value |

+---------------+-------+

| log_bin | ON |

+---------------+-------+

If the my.cnf settings do not take effect, see:

https://stackoverflow.com/questions/38288646/changes-to-my-cnf-dont-take-effect-ubuntu-16-04-mysql-5-6

https://stackoverflow.com/questions/52736162/set-binlog-for-mysql-5-6-ubuntu16-4
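For requirement (b), the three settings can also be checked in the file itself before restarting the database. The following is a minimal sketch, not part of Canal: the class name `MyCnfCheck` and its methods are hypothetical, it assumes the my.cnf content is available as a string, and it treats everything after `#` as a comment, which would be wrong for values that contain `#`.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper (not part of Canal): checks my.cnf text for the binlog settings listed above.
class MyCnfCheck {

    // Collects key/value pairs from the [mysqld] section only.
    static Map<String, String> parseMysqld(String myCnf) {
        Map<String, String> options = new HashMap<>();
        boolean inMysqld = false;
        for (String raw : myCnf.split("\\R")) {
            String line = raw;
            int hash = line.indexOf('#');            // simplification: cut trailing comments
            if (hash >= 0) {
                line = line.substring(0, hash);
            }
            line = line.trim();
            if (line.isEmpty() || line.startsWith(";")) {
                continue;
            }
            if (line.startsWith("[")) {
                inMysqld = line.equalsIgnoreCase("[mysqld]");
                continue;
            }
            if (!inMysqld) {
                continue;
            }
            int eq = line.indexOf('=');
            String key = (eq < 0 ? line : line.substring(0, eq)).trim();
            String value = eq < 0 ? "" : line.substring(eq + 1).trim();
            // MySQL accepts '-' and '_' interchangeably in option names.
            options.put(key.replace('_', '-').toLowerCase(), value);
        }
        return options;
    }

    // Returns one message per missing or wrong setting; an empty list means all three are present.
    static List<String> problems(String myCnf) {
        Map<String, String> o = parseMysqld(myCnf);
        List<String> problems = new ArrayList<>();
        if (!o.containsKey("log-bin")) {
            problems.add("log-bin is not set");
        }
        if (!"ROW".equalsIgnoreCase(o.get("binlog-format"))) {
            problems.add("binlog-format is not ROW");
        }
        if (!o.containsKey("server-id")) {
            problems.add("server-id is not set");
        }
        return problems;
    }

    public static void main(String[] args) {
        String sample = "[mysqld]\nlog-bin=mysql-bin\nbinlog-format=ROW\nserver_id=1\n";
        System.out.println(problems(sample)); // prints [] for this sample
    }
}
```

This only inspects the file text. Whether the running server actually picked the settings up is still answered by the `show variables` queries above.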

c. canal works by presenting itself as a MySQL slave, so the account it connects with must have the privileges of a MySQL slave.

CREATE USER canal IDENTIFIED BY 'canal';

GRANT SELECT, REPLICATION SLAVE, REPLICATION CLIENT ON *.* TO 'canal'@'%';

-- GRANT ALL PRIVILEGES ON *.* TO 'canal'@'%' ;

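FLUSH PRIVILEGES;

For an existing account, its privileges can be checked with SHOW GRANTS:

show grants for 'canal';

Running the Canal container

Note: the port number and the configuration file path must be specified explicitly.

Incorrect way to run

What does "incorrect" mean here? The container is started without some basic configuration parameters. A configuration file is modified and mounted, but it does not have the effect one might expect.

# Run the canal container

docker run -p 11111:11111 --name canal -d canal/canal-server

# With a configuration file mounted

docker run -p 11111:11111 --name canal -v /usr/local/canal/canal.properties:/home/admin/canal-server/conf/canal.properties -d canal/canal-server

canal.properties configuration: pay attention to the id and instance settings (in practice, Docker with a single instance works without modifying canal.properties).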

#################################################

######### common argument #############

#################################################

#canal.manager.jdbc.url=jdbc:mysql://127.0.0.1:3306/canal_manager?useUnicode=true&characterEncoding=UTF-8

#canal.manager.jdbc.username=root

#canal.manager.jdbc.password=121212

# id must be unique (default id=1)

canal.id = 10002

canal.ip =

canal.port = 11111

canal.metrics.pull.port = 11112

canal.zkServers =

# flush data to zk

canal.zookeeper.flush.period = 1000

canal.withoutNetty = false

# tcp, kafka, RocketMQ

canal.serverMode = tcp

# flush meta cursor/parse position to file

canal.file.data.dir = ${canal.conf.dir}

canal.file.flush.period = 1000

## memory store RingBuffer size, should be Math.pow(2,n)

canal.instance.memory.buffer.size = 16384

## memory store RingBuffer used memory unit size , default 1kb

canal.instance.memory.buffer.memunit = 1024

## meory store gets mode used MEMSIZE or ITEMSIZE

canal.instance.memory.batch.mode = MEMSIZE

canal.instance.memory.rawEntry = true

## detecing config

canal.instance.detecting.enable = false

#canal.instance.detecting.sql = insert into retl.xdual values(1,now()) on duplicate key update x=now()

canal.instance.detecting.sql = select 1

canal.instance.detecting.interval.time = 3

canal.instance.detecting.retry.threshold = 3

canal.instance.detecting.heartbeatHaEnable = false

# support maximum transaction size, more than the size of the transaction will be cut into multiple transactions delivery

canal.instance.transaction.size = 1024

# mysql fallback connected to new master should fallback times

canal.instance.fallbackIntervalInSeconds = 60

# network config

canal.instance.network.receiveBufferSize = 16384

canal.instance.network.sendBufferSize = 16384

canal.instance.network.soTimeout = 30

# binlog filter config

canal.instance.filter.druid.ddl = true

canal.instance.filter.query.dcl = false

canal.instance.filter.query.dml = false

canal.instance.filter.query.ddl = false

canal.instance.filter.table.error = false

canal.instance.filter.rows = false

canal.instance.filter.transaction.entry = false

# binlog format/image check

canal.instance.binlog.format = ROW,STATEMENT,MIXED

canal.instance.binlog.image = FULL,MINIMAL,NOBLOB

# binlog ddl isolation

canal.instance.get.ddl.isolation = false

# parallel parser config

canal.instance.parser.parallel = true

## concurrent thread number, default 60% available processors, suggest not to exceed Runtime.getRuntime().availableProcessors()

#canal.instance.parser.parallelThreadSize = 16

## disruptor ringbuffer size, must be power of 2

canal.instance.parser.parallelBufferSize = 256

# table meta tsdb info

canal.instance.tsdb.enable = true

canal.instance.tsdb.dir = ${canal.file.data.dir:../conf}/${canal.instance.destination:}

canal.instance.tsdb.url = jdbc:h2:${canal.instance.tsdb.dir}/h2;CACHE_SIZE=1000;MODE=MYSQL;

canal.instance.tsdb.dbUsername = canal

canal.instance.tsdb.dbPassword = canal

# dump snapshot interval, default 24 hour

canal.instance.tsdb.snapshot.interval = 24

# purge snapshot expire , default 360 hour(15 days)

canal.instance.tsdb.snapshot.expire = 360

# aliyun ak/sk , support rds/mq

canal.aliyun.accessKey =

canal.aliyun.secretKey =

#################################################

######### destinations #############

#################################################

canal.destinations = example

# conf root dir

canal.conf.dir = ../conf

# auto scan instance dir add/remove and start/stop instance

canal.auto.scan = true

canal.auto.scan.interval = 5

canal.instance.tsdb.spring.xml = classpath:spring/tsdb/h2-tsdb.xml

#canal.instance.tsdb.spring.xml = classpath:spring/tsdb/mysql-tsdb.xml

canal.instance.global.mode = spring

canal.instance.global.lazy = false

#canal.instance.global.manager.address = 127.0.0.1:1099

#canal.instance.global.spring.xml = classpath:spring/memory-instance.xml

canal.instance.global.spring.xml = classpath:spring/file-instance.xml

#canal.instance.global.spring.xml = classpath:spring/default-instance.xml

##################################################

######### MQ #############

##################################################

canal.mq.servers = 127.0.0.1:6667

canal.mq.retries = 0

canal.mq.batchSize = 16384

canal.mq.maxRequestSize = 1048576

canal.mq.lingerMs = 100

canal.mq.bufferMemory = 33554432

canal.mq.canalBatchSize = 50

canal.mq.canalGetTimeout = 100

canal.mq.flatMessage = true

canal.mq.compressionType = none

canal.mq.acks = all

# use transaction for kafka flatMessage batch produce

canal.mq.transaction = false

#canal.mq.properties. =

Correct way to run, option 1

# With a configuration file mounted

docker run -p 11111:11111 --name canal -v /usr/local/canal/example/instance.properties:/home/admin/canal-server/conf/example/instance.properties -d canal/canal-server

instance.properties configuration: only the address of the MySQL container instance needs to be set: canal.instance.master.address=172.17.0.4:3306

#################################################

## mysql serverId , v1.0.26+ will autoGen

# canal.instance.mysql.slaveId=0

# enable gtid use true/false

canal.instance.gtidon=false

# position info

canal.instance.master.address=172.17.0.4:3306

canal.instance.master.journal.name=

canal.instance.master.position=

canal.instance.master.timestamp=

canal.instance.master.gtid=

# rds oss binlog

canal.instance.rds.accesskey=

canal.instance.rds.secretkey=

canal.instance.rds.instanceId=

# table meta tsdb info

canal.instance.tsdb.enable=true

#canal.instance.tsdb.url=jdbc:mysql://127.0.0.1:3306/canal_tsdb

#canal.instance.tsdb.dbUsername=canal

#canal.instance.tsdb.dbPassword=canal

#canal.instance.standby.address =

#canal.instance.standby.journal.name =

#canal.instance.standby.position =

#canal.instance.standby.timestamp =

#canal.instance.standby.gtid=

# username/password

canal.instance.dbUsername=canal

canal.instance.dbPassword=canal

canal.instance.connectionCharset = UTF-8

# enable druid Decrypt database password

canal.instance.enableDruid=false

#canal.instance.pwdPublicKey=MFwwDQYJKoZIhvcNAQEBBQADSwAwSAJBALK4BUxdDltRRE5/zXpVEVPUgunvscYFtEip3pmLlhrWpacX7y7GCMo2/JM6LeHmiiNdH1FWgGCpUfircSwlWKUCAwEAAQ==

# table regex

canal.instance.filter.regex=.*\\..*

# table black regex

canal.instance.filter.black.regex=

# mq config

canal.mq.topic=example

# dynamic topic route by schema or table regex

#canal.mq.dynamicTopic=mytest1.user,mytest2\\..*,.*\\..*

canal.mq.partition=0

# hash partition config

#canal.mq.partitionsNum=3

#canal.mq.partitionHash=test.table:id^name,.*\\..*

#################################################
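The `canal.instance.filter.regex=.*\\..*` entry above is easier to read once the properties escaping is removed. The sketch below assumes the file is read with standard `java.util.Properties` escaping, where `\\` becomes a single backslash, and uses plain `java.util.regex` on a `schema.table` string. It is an illustration only, not Canal's own filter code; the class name `FilterRegexDemo` is hypothetical and it handles a single expression.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;
import java.util.regex.Pattern;

// Hypothetical demo class (not part of Canal): shows what the filter regex looks like after loading.
class FilterRegexDemo {

    // Reads canal.instance.filter.regex from properties text using java.util.Properties escaping rules.
    static String loadFilter(String propertiesText) {
        Properties p = new Properties();
        try {
            p.load(new StringReader(propertiesText));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return p.getProperty("canal.instance.filter.regex");
    }

    // True when "schema.table" matches the whole regex.
    static boolean matches(String regex, String schema, String table) {
        return Pattern.matches(regex, schema + "." + table);
    }

    public static void main(String[] args) {
        // Java source doubles each backslash again; the file content is .*\\..*
        String regex = loadFilter("canal.instance.filter.regex=.*\\\\..*\n");
        System.out.println(regex);                                              // .*\..*
        System.out.println(matches(regex, "service_db", "sys_user"));           // true
        System.out.println(matches("service_db\\..*", "other_db", "sys_user")); // false
    }
}
```

So the default value matches every table in every schema, and narrowing it to one schema amounts to replacing the first `.*` with the schema name.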

Correct way to run, option 2

Reference article: https://my.oschina.net/amhuman/blog/1941540

The following command runs canal directly and enables incremental consumption of the binlog:

docker run --name canal -e canal.instance.master.address=192.168.1.111:3365 -e canal.instance.dbUsername=canal -e canal.instance.dbPassword=canal -p 11111:11111 -d canal/canal-server

Opening the server port

# Open the port

firewall-cmd --zone=public --add-port=11111/tcp --permanent

# Reload the firewall

firewall-cmd --reload

Java test client

Use the example provided by canal for testing: https://github.com/alibaba/canal/wiki/ClientExample

package com.alibaba.otter;

import java.net.InetSocketAddress;
import java.util.List;

import com.alibaba.otter.canal.client.CanalConnector;
import com.alibaba.otter.canal.client.CanalConnectors;
import com.alibaba.otter.canal.common.utils.AddressUtils;
import com.alibaba.otter.canal.protocol.CanalEntry.Column;
import com.alibaba.otter.canal.protocol.CanalEntry.Entry;
import com.alibaba.otter.canal.protocol.CanalEntry.EntryType;
import com.alibaba.otter.canal.protocol.CanalEntry.EventType;
import com.alibaba.otter.canal.protocol.CanalEntry.RowChange;
import com.alibaba.otter.canal.protocol.CanalEntry.RowData;
import com.alibaba.otter.canal.protocol.Message;

/**
 * @author PJL
 * @note Description: captures insert, delete, update, and query events
 * @package com.alibaba.otter
 * @filename SimpleCanalClientExample.java
 * @date April 16, 2019, 9:16:24 AM
 */
public class SimpleCanalClientExample {

    public static void main(String args[]) {
        // Create the connection
        CanalConnector connector = CanalConnectors.newSingleConnector(
                new InetSocketAddress("192.168.1.111"/*AddressUtils.getHostIp()*/, 11111),
                "example", "canal", "canal");
        int batchSize = 1000;
        int emptyCount = 0;
        try {
            connector.connect();
            connector.subscribe(".*\\..*");
            connector.rollback();
            int totalEmptyCount = 120;
            while (emptyCount < totalEmptyCount) {
                Message message = connector.getWithoutAck(batchSize); // fetch up to batchSize entries
                long batchId = message.getId();
                int size = message.getEntries().size();
                if (batchId == -1 || size == 0) {
                    emptyCount++;
                    System.out.println("empty count : " + emptyCount);
                    try {
                        Thread.sleep(1000);
                    } catch (InterruptedException e) {
                        // restore the interrupt flag instead of silently swallowing it
                        Thread.currentThread().interrupt();
                    }
                } else {
                    emptyCount = 0;
                    // System.out.printf("message[batchId=%s,size=%s] \n", batchId, size);
                    printEntry(message.getEntries());
                }
                connector.ack(batchId); // acknowledge the batch
                // connector.rollback(batchId); // on processing failure, roll back the batch
            }
            System.out.println("empty too many times, exit");
        } finally {
            connector.disconnect();
        }
    }

    private static void printEntry(List<Entry> entrys) {
        for (Entry entry : entrys) {
            // skip transaction begin/end markers
            if (entry.getEntryType() == EntryType.TRANSACTIONBEGIN || entry.getEntryType() == EntryType.TRANSACTIONEND) {
                continue;
            }
            RowChange rowChage = null;
            try {
                rowChage = RowChange.parseFrom(entry.getStoreValue());
            } catch (Exception e) {
                throw new RuntimeException("ERROR ## parser of eromanga-event has an error , data:" + entry.toString(),
                        e);
            }
            EventType eventType = rowChage.getEventType();
            // The header gives the binlog file and offset, the schema and table, and the event type
            String logFileName = entry.getHeader().getLogfileName();
            long logFileOffset = entry.getHeader().getLogfileOffset();
            String dbName = entry.getHeader().getSchemaName();
            String tableName = entry.getHeader().getTableName();
            System.out.println(String.format("=======> binlog[%s:%s] , name[%s,%s] , eventType : %s",
                    logFileName, logFileOffset,
                    dbName, tableName,
                    eventType));
            for (RowData rowData : rowChage.getRowDatasList()) {
                if (eventType == EventType.DELETE) {
                    // delete: only the "before" image exists
                    printColumn(rowData.getBeforeColumnsList());
                } else if (eventType == EventType.INSERT) {
                    // insert: only the "after" image exists
                    printColumn(rowData.getAfterColumnsList());
                } else {
                    System.out.println("-------> before");
                    printColumn(rowData.getBeforeColumnsList());
                    System.out.println("-------> after");
                    printColumn(rowData.getAfterColumnsList());
                }
            }
        }
    }

    private static void printColumn(List<Column> columns) {
        for (Column column : columns) {
            System.out.println(column.getName() + " : " + column.getValue() + " update=" + column.getUpdated());
        }
    }
}

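Test result with the incorrectly run container

This test tried to capture database insert and delete events, but none were captured; this needs further investigation. The output below shows the client's idle-timeout exit after 120 consecutive empty polls: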

empty count : 1

empty count : 2

empty count : 3

empty count : 4

empty count : 5

empty count : 6

empty count : 7

empty count : 8

empty count : 9

empty count : 10

empty count : 11

empty count : 12

empty count : 13

empty count : 14

empty count : 15

empty count : 16

empty count : 17

empty count : 18

empty count : 19

empty count : 20

empty count : 21

empty count : 22

empty count : 23

empty count : 24

empty count : 25

empty count : 26

empty count : 27

empty count : 28

empty count : 29

empty count : 30

empty count : 31

empty count : 32

empty count : 33

empty count : 34

empty count : 35

empty count : 36

empty count : 37

empty count : 38

empty count : 39

empty count : 40

empty count : 41

empty count : 42

empty count : 43

empty count : 44

empty count : 45

empty count : 46

empty count : 47

empty count : 48

empty count : 49

empty count : 50

empty count : 51

empty count : 52

empty count : 53

empty count : 54

empty count : 55

empty count : 56

empty count : 57

empty count : 58

empty count : 59

empty count : 60

empty count : 61

empty count : 62

empty count : 63

empty count : 64

empty count : 65

empty count : 66

empty count : 67

empty count : 68

empty count : 69

empty count : 70

empty count : 71

empty count : 72

empty count : 73

empty count : 74

empty count : 75

empty count : 76

empty count : 77

empty count : 78

empty count : 79

empty count : 80

empty count : 81

empty count : 82

empty count : 83

empty count : 84

empty count : 85

empty count : 86

empty count : 87

empty count : 88

empty count : 89

empty count : 90

empty count : 91

empty count : 92

empty count : 93

empty count : 94

empty count : 95

empty count : 96

empty count : 97

empty count : 98

empty count : 99

empty count : 100

empty count : 101

empty count : 102

empty count : 103

empty count : 104

empty count : 105

empty count : 106

empty count : 107

empty count : 108

empty count : 109

empty count : 110

empty count : 111

empty count : 112

empty count : 113

empty count : 114

empty count : 115

empty count : 116

empty count : 117

empty count : 118

empty count : 119

empty count : 120

empty too many times, exit
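The countdown above comes from the client's idle-exit loop: every empty batch increments a counter, any non-empty batch resets it, and the loop ends once the counter reaches its limit. The sketch below isolates that logic without the Canal client. The class `IdleExitLoop`, the `Supplier` standing in for `connector.getWithoutAck(...)`, and the string rows are all hypothetical stand-ins, and acknowledgement is left out.

```java
import java.util.ArrayDeque;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.Queue;
import java.util.function.Supplier;

// Hypothetical stand-alone model of the idle-exit loop in SimpleCanalClientExample.
class IdleExitLoop {

    // Polls until maxEmpty consecutive empty batches are seen; returns the number of rows processed.
    static int run(Supplier<List<String>> source, int maxEmpty, long sleepMillis) {
        int emptyCount = 0;
        int processed = 0;
        while (emptyCount < maxEmpty) {
            List<String> batch = source.get();
            if (batch.isEmpty()) {
                emptyCount++;
                try {
                    Thread.sleep(sleepMillis);
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                    break; // stop polling when interrupted
                }
            } else {
                emptyCount = 0; // any data resets the idle counter
                processed += batch.size();
            }
        }
        return processed;
    }

    public static void main(String[] args) {
        Queue<List<String>> batches = new ArrayDeque<>(Arrays.asList(
                Collections.<String>emptyList(),
                Arrays.asList("row-1", "row-2"),
                Collections.<String>emptyList()));
        // Once the queue is drained the source keeps returning empty batches.
        Supplier<List<String>> source = () -> {
            List<String> next = batches.poll();
            return next == null ? Collections.<String>emptyList() : next;
        };
        System.out.println(run(source, 3, 0L)); // prints 2
    }
}
```

With the client's values (a limit of 120 and a one-second sleep), a run that never receives data ends after roughly two minutes, which matches the 120 lines of output above.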

Test result with the correctly run container

=======> binlog[mysql-bin.000004:3504] , name[service_db,sys_user] , eventType : INSERT

id : 52 update=true

name : boonya update=true

age : 28 update=true

empty count : 1

empty count : 2

empty count : 3

empty count : 4

empty count : 5

empty count : 6

empty count : 7

empty count : 8

empty count : 9

empty count : 10

empty count : 11

empty count : 12

empty count : 13

empty count : 14

empty count : 15

=======> binlog[mysql-bin.000004:3790] , name[service_db,sys_user] , eventType : INSERT

id : 53 update=true

name : boonya update=true

age : 28 update=true

empty count : 1

empty count : 2

empty count : 3

empty count : 4

empty count : 5

empty count : 6

empty count : 7

empty count : 8

empty count : 9

empty count : 10

empty count : 11

empty count : 12

empty count : 13

empty count : 14

empty count : 15

empty count : 16

empty count : 17

empty count : 18

empty count : 19

empty count : 20

empty count : 21

empty count : 22

empty count : 23

empty count : 24

=======> binlog[mysql-bin.000004:4076] , name[service_db,sys_user] , eventType : INSERT

id : 54 update=true

name : boonya update=true

age : 28 update=true

=======> binlog[mysql-bin.000004:4362] , name[service_db,sys_user] , eventType : INSERT

id : 55 update=true

name : boonya update=true

age : 28 update=true

empty count : 1

=======> binlog[mysql-bin.000004:4648] , name[service_db,sys_user] , eventType : INSERT

id : 56 update=true

name : boonya update=true

age : 28 update=true

=======> binlog[mysql-bin.000004:4934] , name[service_db,sys_user] , eventType : INSERT

id : 57 update=true

name : boonya update=true

age : 28 update=true

empty count : 1

empty count : 2

empty count : 3

empty count : 4

empty count : 5

empty count : 6

empty count : 7

empty count : 8

empty count : 9

empty count : 10

empty count : 11

empty count : 12

empty count : 13

empty count : 14

empty count : 15

empty count : 16

empty count : 17

empty count : 18

empty count : 19

empty count : 20

empty count : 21

empty count : 22

empty count : 23

empty count : 24

empty count : 25

empty count : 26

empty count : 27

empty count : 28

empty count : 29

empty count : 30

empty count : 31

empty count : 32

empty count : 33

empty count : 34

empty count : 35

=======> binlog[mysql-bin.000004:5220] , name[service_db,sys_user] , eventType : INSERT

id : 58 update=true

name : boonya update=true

age : 28 update=true

=======> binlog[mysql-bin.000004:5506] , name[service_db,sys_user] , eventType : INSERT

id : 59 update=true

name : boonya update=true

age : 28 update=true

empty count : 1

=======> binlog[mysql-bin.000004:5792] , name[service_db,sys_user] , eventType : INSERT

id : 60 update=true

name : boonya update=true

age : 28 update=true

=======> binlog[mysql-bin.000004:6078] , name[service_db,sys_user] , eventType : INSERT

id : 61 update=true

name : boonya update=true

age : 28 update=true

empty count : 1

empty count : 2

empty count : 3

empty count : 4

empty count : 5

empty count : 6

empty count : 7

empty count : 8

empty count : 9

empty count : 10

empty count : 11

empty count : 12

empty count : 13

empty count : 14

empty count : 15

empty count : 16

empty count : 17

empty count : 18

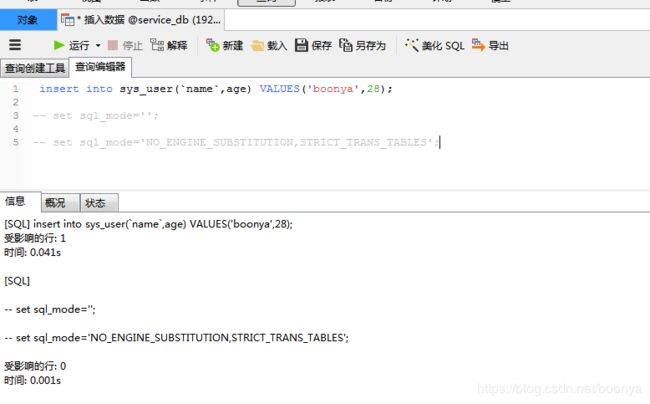

empty count : 19

empty count : 20

Database error

MySQL 5.7 reports the error:

[Err] 1055 - Expression #1 of ORDER BY clause is not in GROUP BY clause and contains nonaggregated column 'information_schema.PROFILING.SEQ' which is not functionally dependent on columns in GROUP BY clause; this is incompatible with sql_mode=only_full_group_by

Solution:

show variables like "sql_mode";

set sql_mode='';

set sql_mode='NO_ENGINE_SUBSTITUTION,STRICT_TRANS_TABLES';

Canal is only a channel based on incremental logs. To implement database backup, Otter has to be introduced on top of Canal. Otter defines Channel, Pipeline, Node (machine node), data source, target data source, database tables, master/standby configuration, and so on, which together help implement an incremental database backup mechanism.
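On the sql_mode fix in the database error section: the 1055 error is caused specifically by `only_full_group_by`, so an alternative to overwriting the whole value is to remove just that mode from whatever `show variables like "sql_mode"` returns. A minimal sketch of that string manipulation follows; the class `SqlModeHelper` is hypothetical and the `current` value is an assumed example, not output taken from the server used in this article.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: builds a sql_mode value with one mode removed.
class SqlModeHelper {

    // Returns sqlMode without modeToRemove, keeping the order of the remaining modes.
    static String withoutMode(String sqlMode, String modeToRemove) {
        List<String> kept = new ArrayList<>();
        for (String mode : sqlMode.split(",")) {
            String m = mode.trim();
            if (!m.isEmpty() && !m.equalsIgnoreCase(modeToRemove)) {
                kept.add(m);
            }
        }
        return String.join(",", kept);
    }

    public static void main(String[] args) {
        // Assumed example value; use the real result of: show variables like "sql_mode";
        String current = "ONLY_FULL_GROUP_BY,STRICT_TRANS_TABLES,NO_ENGINE_SUBSTITUTION";
        System.out.println("set sql_mode='" + withoutMode(current, "ONLY_FULL_GROUP_BY") + "';");
        // prints: set sql_mode='STRICT_TRANS_TABLES,NO_ENGINE_SUBSTITUTION';
    }
}
```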