Setting up a Kubernetes 1.15.4 cluster on CentOS 7.6 (tested and working)

- kube-master:192.168.3.11

- kube-node1:192.168.3.12

- kube-node2:192.168.3.13

| IP address | Node role | CPU | Memory | Hostname | Disk space |
|---|---|---|---|---|---|
| 192.168.3.11 | master | >=2c | >=1G | kube-master | >=3G |
| 192.168.3.12 | worker | >=1c | >=1G | kube-node1 | >=3G |
| 192.168.3.13 | worker | >=1c | >=1G | kube-node2 | >=3G |

1. Set the hostname on each machine (run the matching command on each node)

# hostnamectl set-hostname kube-master

# hostnamectl set-hostname kube-node1

# hostnamectl set-hostname kube-node2

2. Add hostname resolution entries

# cat >> /etc/hosts <<EOF

192.168.3.11 kube-master

192.168.3.12 kube-node1

192.168.3.13 kube-node2

EOF
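Appending with a heredoc adds duplicate lines if the step is run twice. The following is a minimal sketch of an idempotent variant; the helper name `add_host_entry` is hypothetical and not part of the original procedure, and the demo writes to a scratch file rather than /etc/hosts.

```shell
#!/usr/bin/env bash
# Append "IP NAME" to a hosts-style file only if NAME is not already present.
# add_host_entry is an illustrative helper, not a standard tool.
add_host_entry() {
    local file="$1" ip="$2" name="$3"
    # -w matches whole words, so kube-node1 does not match kube-node10
    if ! grep -qw -- "$name" "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}

# Demo on a scratch file; on a real node pass /etc/hosts as the first argument (as root).
demo=$(mktemp)
add_host_entry "$demo" 192.168.3.11 kube-master
add_host_entry "$demo" 192.168.3.12 kube-node1
add_host_entry "$demo" 192.168.3.13 kube-node2
add_host_entry "$demo" 192.168.3.11 kube-master   # second call adds nothing
cat "$demo"
rm -f "$demo"
```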

3. Disable the firewall, SELinux, and swap

systemctl stop firewalld

systemctl disable firewalld

setenforce 0

sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config
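The sed command above only rewrites the file when the current value is `enforcing`; a host set to `permissive` is left unchanged. As a sketch, assuming you want `disabled` regardless of the current value, the pattern can match any value. The function name `set_selinux_disabled` is hypothetical, and the demo runs on a scratch file rather than /etc/selinux/config.

```shell
#!/usr/bin/env bash
# Rewrite the SELINUX= line to "disabled" whatever its current value is.
# SELINUXTYPE= is not touched because the pattern requires "SELINUX=" exactly.
set_selinux_disabled() {
    local file="$1"
    sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$file"
}

# Demo on a scratch copy; on a real node the file is /etc/selinux/config.
demo=$(mktemp)
printf 'SELINUX=permissive\nSELINUXTYPE=targeted\n' > "$demo"
set_selinux_disabled "$demo"
cat "$demo"
rm -f "$demo"
```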

Starting with Kubernetes 1.8, swap must be disabled on the system; otherwise kubelet will not start under its default configuration. Disable swap as follows:

swapoff -a

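Edit /etc/fstab and comment out the swap mount so it is not re-enabled at boot, then confirm with free -m that swap is off.

# In sed, & in the replacement stands for the matched text; for example, s/my/**&**/ replaces my with **my**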

sed -i 's/.*swap.*/#&/' /etc/fstab
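The sed expression above prefixes every line containing the string "swap" with `#`, including lines that are already commented, so running it twice yields `##`. A more conservative sketch follows; `comment_out_swap` is a hypothetical helper name, and it assumes the swap entry has a whitespace-delimited `swap` field, as in a standard fstab.

```shell
#!/usr/bin/env bash
# Comment out fstab lines that have a whitespace-delimited "swap" field,
# skipping lines that are already comments so the edit is safe to repeat.
comment_out_swap() {
    local file="$1"
    sed -i -E '/^[[:space:]]*#/!s/^.*[[:space:]]swap[[:space:]].*$/#&/' "$file"
}

# Demo on a scratch file; on a real node the file is /etc/fstab (as root).
demo=$(mktemp)
printf '%s\n' \
    '/dev/mapper/centos-root / xfs defaults 0 0' \
    '/dev/mapper/centos-swap swap swap defaults 0 0' > "$demo"
comment_out_swap "$demo"
comment_out_swap "$demo"   # repeated run leaves the file unchanged
cat "$demo"
rm -f "$demo"
```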

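Adjust swappiness by adding the following line to /etc/sysctl.d/k8s.conf:

vm.swappiness=0

Run sysctl -p /etc/sysctl.d/k8s.conf to apply the change.

If the test host also runs other services, disabling swap may affect them. In that case, leave swap on and remove the restriction in the kubelet configuration instead.

Pass the kubelet startup flag --fail-swap-on=false to lift the requirement that swap be disabled. Edit /etc/sysconfig/kubelet and add: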

KUBELET_EXTRA_ARGS=--fail-swap-on=false

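4. Configure kernel parameters so that bridged IPv4 traffic is passed to the iptables chains. Note that cat > overwrites /etc/sysctl.d/k8s.conf, so re-add vm.swappiness=0 afterwards if you set it in step 3.

cat > /etc/sysctl.d/k8s.conf <<EOF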

net.bridge.bridge-nf-call-iptables = 1

EOF

sysctl --system
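Steps 3 and 4 both write to /etc/sysctl.d/k8s.conf, so it can help to generate the file from one place. The sketch below assumes you want both settings in the same file; `render_k8s_sysctl` is a hypothetical helper. The commented usage also assumes a kernel where bridge netfilter is a separate module (`br_netfilter`), in which case the `net.bridge.*` keys only exist after the module is loaded.

```shell
#!/usr/bin/env bash
# Print the sysctl settings used in this guide so the file is written once.
render_k8s_sysctl() {
    cat <<'EOF'
net.bridge.bridge-nf-call-iptables = 1
vm.swappiness = 0
EOF
}

# Show what would be written.
render_k8s_sysctl

# Assumed usage on a real node (as root):
#   modprobe br_netfilter
#   render_k8s_sysctl > /etc/sysctl.d/k8s.conf
#   sysctl --system
```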

5. Configure the yum, Docker, and Kubernetes repositories using mirrors in China

# yum install -y wget

# mkdir /etc/yum.repos.d/bak && mv /etc/yum.repos.d/*.repo /etc/yum.repos.d/bak

# wget -O /etc/yum.repos.d/centos7_base.repo http://mirrors.cloud.tencent.com/repo/centos7_base.repo

# wget -O /etc/yum.repos.d/epel-7.repo http://mirrors.cloud.tencent.com/repo/epel-7.repo

# yum clean all && yum makecache

# wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

# cat > /etc/yum.repos.d/kubernetes.repo <<EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

6. Cleanly uninstall any existing Docker

# systemctl disable docker

# systemctl stop docker

List the Docker packages that are already installed with yum list installed | grep docker and rpm -qa | grep docker.

yum remove docker \
docker-ce \
docker-ce-cli.x86_64 \
containerd.io.x86_64 \
docker-client \
docker-client-latest \
docker-common \
docker-latest \
docker-latest-logrotate \
docker-logrotate \
docker-selinux \
docker-engine-selinux \
docker-engine

rm -rf /etc/systemd/system/docker.service.d

rm -rf /var/lib/docker

rm -rf /var/run/docker


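7. Prerequisites for enabling IPVS in kube-proxy

IPVS has been merged into the mainline kernel, so enabling IPVS mode for kube-proxy requires the following kernel modules to be loaded: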

ip_vs

ip_vs_rr

ip_vs_wrr

ip_vs_sh

nf_conntrack_ipv4

Run the following script on all Kubernetes nodes (node1 and node2):

# cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4

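The script above creates /etc/sysconfig/modules/ipvs.modules so that the required modules are loaded automatically after a node reboots. Use lsmod | grep -e ip_vs -e nf_conntrack_ipv4 to check that the kernel modules were loaded correctly.

Next, make sure the ipset package is installed on every node: yum install ipset. To make the IPVS proxy rules easier to inspect, it is also worth installing the management tool ipvsadm: yum install ipvsadm.

If these prerequisites are not met, kube-proxy falls back to iptables mode even when IPVS mode is enabled in its configuration.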
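On systemd-based systems, `systemd-modules-load.service` reads `/etc/modules-load.d/*.conf` at boot and loads each module listed there, one name per line. The sketch below shows that alternative for the same five modules; it is not the method used in this guide, and `write_ipvs_modules_conf` and the file name `ipvs.conf` are hypothetical.

```shell
#!/usr/bin/env bash
# Write the IPVS-related module names, one per line, in modules-load.d format.
write_ipvs_modules_conf() {
    local file="$1"
    printf '%s\n' ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4 > "$file"
}

# Demo on a scratch file.
demo=$(mktemp)
write_ipvs_modules_conf "$demo"
cat "$demo"
rm -f "$demo"

# Assumed usage on a real node (as root):
#   write_ipvs_modules_conf /etc/modules-load.d/ipvs.conf
#   systemctl restart systemd-modules-load
#   lsmod | grep -e ip_vs -e nf_conntrack_ipv4
```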

8. Install Docker

yum makecache fast

yum install -y --setopt=obsoletes=0 docker-ce-18.09.7-3.el7

systemctl start docker

systemctl enable docker

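Configure the master. Set Docker's cgroup driver to systemd, following the Kubernetes container runtime documentation: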

https://kubernetes.io/docs/setup/production-environment/container-runtimes/#containerd

cat > /etc/docker/daemon.json <<EOF
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF

mkdir -p /etc/systemd/system/docker.service.d

systemctl daemon-reload

systemctl restart docker

docker info | grep Cgroup

Cgroup Driver: systemd
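If you want to check the driver in a script instead of reading the output by eye, the value can be extracted from the `docker info` text. This is a minimal sketch; `cgroup_driver` is a hypothetical helper that reads `docker info` output on stdin, and the demo feeds it sample text instead of calling Docker.

```shell
#!/usr/bin/env bash
# Read `docker info` output on stdin and print the value of the "Cgroup Driver" line.
cgroup_driver() {
    awk -F': *' '/Cgroup Driver/ { print $2; exit }'
}

# Demo with sample text standing in for real `docker info` output.
printf '%s\n' 'Containers: 0' 'Cgroup Driver: systemd' | cgroup_driver

# Assumed usage on a node:
#   [ "$(docker info 2>/dev/null | cgroup_driver)" = "systemd" ] || echo "cgroup driver is not systemd"
```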

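Confirm that the default policy of the FORWARD chain in the iptables filter table is ACCEPT. If it is not, set it and save the rules (the last command assumes the iptables-services package is installed):

# iptables -nvL

# iptables -P FORWARD ACCEPT

# service iptables save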
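`iptables -S FORWARD` prints the chain's default policy as a `-P FORWARD <policy>` line, which is easier to parse than the `-nvL` listing. The sketch below extracts that value; `forward_policy` is a hypothetical helper reading the command's output on stdin, and the demo uses sample text so it runs without root.

```shell
#!/usr/bin/env bash
# Read `iptables -S FORWARD` output on stdin and print the chain's default policy.
forward_policy() {
    awk '$1 == "-P" && $2 == "FORWARD" { print $3; exit }'
}

# Demo with sample rules standing in for real iptables output.
printf '%s\n' '-P FORWARD DROP' '-A FORWARD -j DOCKER-USER' | forward_policy

# Assumed usage on a node (as root):
#   [ "$(iptables -S FORWARD | forward_policy)" = "ACCEPT" ] || iptables -P FORWARD ACCEPT
```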

Install kubeadm, kubelet, and kubectl

# yum install -y kubelet-1.15.4 kubeadm-1.15.4 kubectl-1.15.4

# systemctl enable kubelet

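kubelet communicates with the other nodes and manages the lifecycle of the Pods and containers on its own node. kubeadm is the automated deployment tool for Kubernetes; it lowers the difficulty of deployment and speeds it up. kubectl is the command-line tool for managing a Kubernetes cluster.

Shut down and back up the virtual machine, then clone it three times as kube-master, kube-node1, and kube-node2. Configure CPU and memory according to the table at the top, boot each clone, assign its MAC and IP address, and set its hostname: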

# hostnamectl set-hostname kube-master

# hostnamectl set-hostname kube-node1

# hostnamectl set-hostname kube-node2

Deploy the master node

# kubeadm init --kubernetes-version=1.15.4 \
--apiserver-advertise-address=192.168.3.11 \
--image-repository registry.aliyuncs.com/google_containers \
--service-cidr=10.96.0.0/12 \
--pod-network-cidr=10.244.0.0/16

[init] Using Kubernetes version: v1.15.4

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Activating the kubelet service

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kube-master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.1.0.1 192.168.3.11]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [kube-master localhost] and IPs [192.168.3.11 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [kube-master localhost] and IPs [192.168.3.11 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 38.003641 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.15" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node kube-master as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node kube-master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: 1eqtg0.751hv2yde5e2r347

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.3.11:6443 --token 1eqtg0.751hv2yde5e2r347 \
--discovery-token-ca-cert-hash sha256:2fa4db469837198d2f7a0ff9f33402b7a944071a2ee179fb832574905a2b1786

Configure kubectl

mkdir -p /root/.kube

cp /etc/kubernetes/admin.conf /root/.kube/config

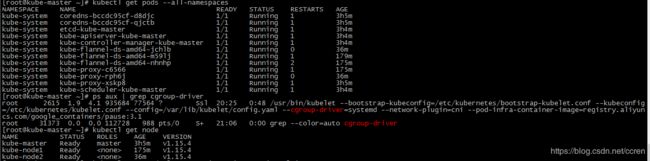

kubectl get nodes

kubectl get cs
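Until a pod network is deployed, nodes typically report NotReady. To check readiness in a script, the STATUS column of `kubectl get nodes --no-headers` can be filtered. This is a sketch only; `not_ready_nodes` is a hypothetical helper that reads that output on stdin, and the demo uses sample rows instead of a live cluster.

```shell
#!/usr/bin/env bash
# Read `kubectl get nodes --no-headers` output on stdin and print the names of
# nodes whose STATUS column is anything other than exactly "Ready".
not_ready_nodes() {
    awk '$2 != "Ready" { print $1 }'
}

# Demo with sample rows standing in for real kubectl output.
printf '%s\n' \
    'kube-master Ready master 10m v1.15.4' \
    'kube-node1 NotReady <none> 1m v1.15.4' | not_ready_nodes

# Assumed usage on the master:
#   kubectl get nodes --no-headers | not_ready_nodes
```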

Deploy the flannel network (this manifest does not work with Kubernetes 1.16)

# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/a70459be0084506e4ec919aa1c114638878db11b/Documentation/kube-flannel.yml

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.extensions/kube-flannel-ds-amd64 created

daemonset.extensions/kube-flannel-ds-arm64 created

daemonset.extensions/kube-flannel-ds-arm created

daemonset.extensions/kube-flannel-ds-ppc64le created

daemonset.extensions/kube-flannel-ds-s390x created

Run the following on each of the two worker nodes, kube-node1 and kube-node2:

kubeadm join 192.168.3.11:6443 --token 1eqtg0.751hv2yde5e2r347 \
--discovery-token-ca-cert-hash sha256:2fa4db469837198d2f7a0ff9f33402b7a944071a2ee179fb832574905a2b1786

If the join hangs at this point, generate a new token on the master:

# kubeadm token create

Get the SHA-256 hash of the CA certificate:

# openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'

2fa4db469837198d2f7a0ff9f33402b7a944071a2ee179fb832574905a2b1786

kubeadm join 192.168.3.11:6443 --token xi4n4n.uf53oftg90c13uw8 --discovery-token-ca-cert-hash sha256:2fa4db469837198d2f7a0ff9f33402b7a944071a2ee179fb832574905a2b1786 --ignore-preflight-errors=all
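The token and the CA hash from the two commands above can be combined into a join command without copying them by hand. The sketch below assembles the string; `build_join_command` is a hypothetical helper, and the demo uses placeholder values. kubeadm can also print a complete join command itself with `kubeadm token create --print-join-command`.

```shell
#!/usr/bin/env bash
# Compose a kubeadm join command from the API endpoint, a bootstrap token,
# and the hex SHA-256 hash of the CA public key (without the "sha256:" prefix).
build_join_command() {
    local endpoint="$1" token="$2" ca_hash="$3"
    printf 'kubeadm join %s --token %s --discovery-token-ca-cert-hash sha256:%s\n' \
        "$endpoint" "$token" "$ca_hash"
}

# Demo with placeholder values (not real credentials).
build_join_command 192.168.3.11:6443 abcdef.0123456789abcdef deadbeef

# Assumed usage on the master (as root):
#   token=$(kubeadm token create)
#   ca_hash=$(openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt \
#       | openssl rsa -pubin -outform der 2>/dev/null \
#       | openssl dgst -sha256 -hex | sed 's/^.* //')
#   build_join_command 192.168.3.11:6443 "$token" "$ca_hash"
```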

To be continued...