Module

- generator_resnet

def generator_resnet (image, options, reuse=False, name="generator"):

with tf.variable_scope(name):

# image is 256 x 256 x input_c_dim

if reuse:

tf.get_variable_scope().reuse_variables()

else:

assert tf.get_variable_scope().reuse is False

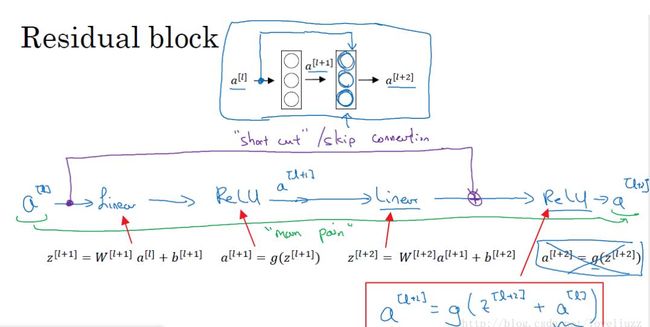

def residule_block(x, dim, ks=3, s=1, name='res'):

p = int((ks - 1) / 2)

y = tf.pad(x, [[0, 0], [p, p], [p, p], [0, 0]], "REFLECT")

y = instance_norm(conv2d(y, dim, ks, s, padding='VALID', name=name+'_c1'), name+'_bn1')

y = tf.pad(tf.nn.relu(y), [[0, 0], [p, p], [p, p], [0, 0]], "REFLECT")

y = instance_norm(conv2d(y, dim, ks, s, padding='VALID', name=name+'_c2'), name+'_bn2')

return y + x

# Justin Johnson's model from https://github.com/jcjohnson/fast-neural-style/

# The network with 9 blocks consists of: c7s1-32, d64, d128, R128, R128, R128,

# R128, R128, R128, R128, R128, R128, u64, u32, c7s1-3

c0 = tf.pad(image, [[0, 0], [3, 3], [3, 3], [0, 0]], "REFLECT")

c1 = tf.nn.relu(instance_norm(conv2d(c0, options.gf_dim, 7, 1, padding='VALID', name='g_e1_c'), 'g_e1_bn'))

c2 = tf.nn.relu(instance_norm(conv2d(c1, options.gf_dim*2, 3, 2, name='g_e2_c'), 'g_e2_bn'))

c3 = tf.nn.relu(instance_norm(conv2d(c2, options.gf_dim*4, 3, 2, name='g_e3_c'), 'g_e3_bn'))

# define G network with 9 resnet blocks

r1 = residule_block(c3, options.gf_dim*4, name='g_r1')

r2 = residule_block(r1, options.gf_dim*4, name='g_r2')

r3 = residule_block(r2, options.gf_dim*4, name='g_r3')

r4 = residule_block(r3, options.gf_dim*4, name='g_r4')

r5 = residule_block(r4, options.gf_dim*4, name='g_r5')

r6 = residule_block(r5, options.gf_dim*4, name='g_r6')

r7 = residule_block(r6, options.gf_dim*4, name='g_r7')

r8 = residule_block(r7, options.gf_dim*4, name='g_r8')

r9 = residule_block(r8, options.gf_dim*4, name='g_r9')

d1 = deconv2d(r9, options.gf_dim*2, 3, 2, name='g_d1_dc')

d1 = tf.nn.relu(instance_norm(d1, 'g_d1_bn'))

d2 = deconv2d(d1, options.gf_dim, 3, 2, name='g_d2_dc')

d2 = tf.nn.relu(instance_norm(d2, 'g_d2_bn'))

d2 = tf.pad(d2, [[0, 0], [3, 3], [3, 3], [0, 0]], "REFLECT")

pred = tf.nn.tanh(conv2d(d2, options.output_c_dim, 7, 1, padding='VALID', name='g_pred_c'))

return pred
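The following pure-Python shape trace of the generator for a 256 × 256 input shows how the reflect pads and strides fit together. It is a sketch under stated assumptions. The stride-2 `conv2d` calls, which pass no `padding`, and `deconv2d` are assumed to use `'SAME'` padding, so each stride-2 layer halves or doubles the side length. `gf_dim=32` and `output_c_dim=3` are illustrative values. With `gf_dim=32` the channel counts match the comment's notation, where c7s1-k is a 7×7 stride-1 conv with k filters, dk is a 3×3 stride-2 conv, Rk is a residual block of two 3×3 convs, and uk is a 3×3 stride-1/2 (transposed) conv.

```python
import math


def conv_out(n, k, s, padding):
    """Output side length of a k x k convolution with stride s (TensorFlow rules)."""
    if padding == "VALID":
        return (n - k) // s + 1
    return math.ceil(n / s)  # "SAME"


def trace_generator(size=256, gf_dim=32, output_c_dim=3, n_blocks=9):
    """Return (layer, side, channels) for each stage of generator_resnet."""
    shapes = []
    n = conv_out(size + 2 * 3, 7, 1, "VALID")   # c0/c1: reflect pad 3, 7x7 VALID conv
    shapes.append(("c1", n, gf_dim))
    n = conv_out(n, 3, 2, "SAME")               # c2: 3x3 stride-2 conv (assumed SAME)
    shapes.append(("c2", n, gf_dim * 2))
    n = conv_out(n, 3, 2, "SAME")               # c3: 3x3 stride-2 conv (assumed SAME)
    shapes.append(("c3", n, gf_dim * 4))
    for i in range(1, n_blocks + 1):
        m = conv_out(n + 2, 3, 1, "VALID")      # pad 1, 3x3 VALID conv
        m = conv_out(m + 2, 3, 1, "VALID")      # pad 1, 3x3 VALID conv
        assert m == n, "y + x needs y and x to have the same shape"
        shapes.append((f"r{i}", n, gf_dim * 4))
    n *= 2                                      # d1: stride-2 transposed conv (assumed SAME)
    shapes.append(("d1", n, gf_dim * 2))
    n *= 2                                      # d2: stride-2 transposed conv (assumed SAME)
    shapes.append(("d2", n, gf_dim))
    n = conv_out(n + 2 * 3, 7, 1, "VALID")      # pad 3, 7x7 VALID conv, then tanh
    shapes.append(("pred", n, output_c_dim))
    return shapes


for layer, side, channels in trace_generator():
    print(f"{layer:>4}: {side} x {side} x {channels}")
```

Each residual block adds 2 pixels of padding before each 3×3 `'VALID'` convolution. This keeps the side at 64, which is what makes `y + x` well defined.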

Notes:

1. Instance norm is used here instead of batch norm.

Batch norm computes the mean and standard deviation of each channel over all pixels of all images in a batch. Instance norm computes them over the pixels of a single image, separately for each image and each channel.

Style transfer aims to match the distribution of the generated image's deep activations to the distribution of the style image's activations. This can be viewed as a domain adaptation problem. Normalizing each image with its own statistics suits that goal better, so instance norm is the more appropriate choice here.
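A minimal sketch of the difference, in pure Python. It assumes a single channel, images flattened to lists of pixel values, and no learned scale or offset. `batch_norm` and `instance_norm` here are hypothetical helpers, not the TensorFlow ops. The second image is the first one multiplied by 10, which gives the same content with higher contrast.

```python
import math


def _stats(values, eps):
    """Mean and sqrt(variance + eps) of a list of numbers."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return mean, math.sqrt(var + eps)


def _standardize(values, mean, std):
    return [(v - mean) / std for v in values]


def batch_norm(batch, eps=1e-5):
    # one mean/std shared by every pixel of every image in the batch
    mean, std = _stats([v for img in batch for v in img], eps)
    return [_standardize(img, mean, std) for img in batch]


def instance_norm(batch, eps=1e-5):
    # a separate mean/std computed from each image's own pixels
    out = []
    for img in batch:
        mean, std = _stats(img, eps)
        out.append(_standardize(img, mean, std))
    return out


batch = [[1.0, 2.0, 3.0, 4.0], [10.0, 20.0, 30.0, 40.0]]
print(instance_norm(batch))  # both images map to (almost) the same values
print(batch_norm(batch))     # the two images stay clearly different
```

Instance norm removes each image's own mean and contrast. Batch norm only removes statistics shared across the batch, so the difference between the two images survives.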

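2. About padding

`tf.pad` (TensorFlow 1.x signature):

```python
tf.pad(
    tensor,            # input tensor
    paddings,          # int tensor of shape [rank(tensor), 2]: [before, after] per dimension
    mode='CONSTANT',   # 'CONSTANT', 'REFLECT' or 'SYMMETRIC'
    name=None,
    constant_values=0  # value used to fill in 'CONSTANT' mode (0 by default)
)
```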

Example:

```python
# 't' is [[1, 2, 3], [4, 5, 6]].
# 'paddings' is [[1, 1], [2, 2]].
# 'constant_values' is 0.
# rank of 't' is 2.
pad(t, paddings, "CONSTANT") ==> [[0, 0, 0, 0, 0, 0, 0],
                                  [0, 0, 1, 2, 3, 0, 0],
                                  [0, 0, 4, 5, 6, 0, 0],
                                  [0, 0, 0, 0, 0, 0, 0]]

pad(t, paddings, "REFLECT") ==> [[6, 5, 4, 5, 6, 5, 4],
                                 [3, 2, 1, 2, 3, 2, 1],
                                 [6, 5, 4, 5, 6, 5, 4],
                                 [3, 2, 1, 2, 3, 2, 1]]
```
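Both padded results can be reproduced without TensorFlow. The sketch below is a pure-Python model of `tf.pad` for 2-D nested lists in the two modes shown. `pad2d` and `reflect_index` are hypothetical names. The range check mirrors the documented REFLECT constraint: the padding on each side of a dimension must be at most that dimension's size minus 1.

```python
def reflect_index(i, n):
    """Map an index in [-(n-1), 2(n-1)] into [0, n) by mirroring about the
    first and last elements, without repeating them (REFLECT semantics)."""
    if i < 0:
        return -i
    if i >= n:
        return 2 * (n - 1) - i
    return i


def pad2d(t, paddings, mode="CONSTANT", constant_values=0):
    """Pad a 2-D nested list; paddings is [[top, bottom], [left, right]]."""
    (top, bottom), (left, right) = paddings
    rows, cols = len(t), len(t[0])
    if mode == "REFLECT" and (max(top, bottom) > rows - 1 or max(left, right) > cols - 1):
        raise ValueError("REFLECT padding must be smaller than the dimension size")
    out = []
    for i in range(-top, rows + bottom):
        row = []
        for j in range(-left, cols + right):
            if mode == "CONSTANT":
                inside = 0 <= i < rows and 0 <= j < cols
                row.append(t[i][j] if inside else constant_values)
            elif mode == "REFLECT":
                row.append(t[reflect_index(i, rows)][reflect_index(j, cols)])
            else:
                raise ValueError(f"unsupported mode: {mode}")
        out.append(row)
    return out


t = [[1, 2, 3], [4, 5, 6]]
for row in pad2d(t, [[1, 1], [2, 2]], "REFLECT"):
    print(row)
```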

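Note that `paddings = [[1, 1], [2, 2]]` means:

- `[1, 1]`: add one row above and one row below (dimension 0);
- `[2, 2]`: add two columns to the left and two columns to the right (dimension 1).

With "REFLECT", the padded rows and columns are a mirror image of the tensor. The edge row or column is the axis of symmetry and is not itself repeated. Step by step, with `paddings = [[1, 1], [2, 2]]`:

Original:

```
1, 2, 3
4, 5, 6
```

Pad one row on top, with the top edge `1, 2, 3` as the axis of symmetry:

```
4, 5, 6
1, 2, 3
4, 5, 6
```

Pad one row at the bottom, with the bottom edge `4, 5, 6` as the axis of symmetry:

```
4, 5, 6
1, 2, 3
4, 5, 6
1, 2, 3
```

Pad two columns on the left, with the left edge column `4, 1, 4, 1` as the axis of symmetry:

```
6, 5, 4, 5, 6
3, 2, 1, 2, 3
6, 5, 4, 5, 6
3, 2, 1, 2, 3
```

Pad two columns on the right, with the right edge column `6, 3, 6, 3` as the axis of symmetry:

```
6, 5, 4, 5, 6, 5, 4
3, 2, 1, 2, 3, 2, 1
6, 5, 4, 5, 6, 5, 4
3, 2, 1, 2, 3, 2, 1
```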
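The four steps above pad the rows first and then the columns. The pure-Python sketch below follows the same order and reproduces both the intermediate and the final matrices. `reflect_1d` and `reflect_pad_stepwise` are hypothetical helpers. Each axis is mirrored independently, so padding the columns first gives the same result.

```python
def reflect_1d(seq, before, after):
    """Mirror a sequence about its first and last elements (edges not repeated)."""
    if max(before, after) > len(seq) - 1:
        raise ValueError("REFLECT padding must be smaller than the length")
    head = [seq[i] for i in range(before, 0, -1)]  # seq[before], ..., seq[1]
    tail = [seq[-2 - i] for i in range(after)]     # seq[-2], seq[-3], ...
    return head + list(seq) + tail


def reflect_pad_stepwise(t, paddings):
    (top, bottom), (left, right) = paddings
    rows = reflect_1d(t, top, bottom)                       # steps 1-2: rows
    return [reflect_1d(row, left, right) for row in rows]   # steps 3-4: columns


t = [[1, 2, 3], [4, 5, 6]]
for row in reflect_pad_stepwise(t, [[1, 1], [2, 2]]):
    print(row)
```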

OP

- conv2d

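```python
import tensorflow as tf  # TensorFlow 1.x API

def conv2d(input, filter, kernel, strides=1, stddev=0.02, name='conv2d'):
    # always uses 'VALID' padding: reflect-pad the input first to keep its spatial size
    with tf.variable_scope(name):
        # weights: [kernel_h, kernel_w, in_channels, out_channels]
        w = tf.get_variable(
            'w',
            (kernel, kernel, input.get_shape()[-1], filter),
            initializer=tf.truncated_normal_initializer(stddev=stddev)
        )
        conv = tf.nn.conv2d(input, w, strides=[1, strides, strides, 1], padding='VALID')
        # one bias per output channel, initialized to zero
        b = tf.get_variable(
            'b',
            [filter],
            initializer=tf.constant_initializer(0.0)
        )
        conv = tf.reshape(tf.nn.bias_add(conv, b), tf.shape(conv))
        return conv
```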
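With `padding='VALID'`, a k × k convolution with stride s shrinks a side of length n to floor((n - k) / s) + 1. Layers that must keep their size therefore reflect-pad by (k - 1) / 2 first. The sketch below makes the size rule concrete with a single-channel, pure-Python version of the same computation: a cross-correlation, which is what `tf.nn.conv2d` computes, plus a scalar bias. `conv2d_valid` is a hypothetical name and handles only one input and one output channel.

```python
def conv2d_valid(x, w, stride=1, bias=0.0):
    """Single-channel 2-D cross-correlation with 'VALID' padding, plus a bias.
    x is an H x W nested list, w a k x k nested list."""
    k = len(w)
    out_h = (len(x) - k) // stride + 1
    out_w = (len(x[0]) - k) // stride + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # window starts at (i * stride, j * stride); the kernel is not flipped
            acc = sum(x[i * stride + a][j * stride + b] * w[a][b]
                      for a in range(k) for b in range(k))
            row.append(acc + bias)
        out.append(row)
    return out


x = [[4 * r + c for c in range(4)] for r in range(4)]  # 4 x 4 input: 0..15
ones = [[1] * 3 for _ in range(3)]                      # 3 x 3 box filter
print(conv2d_valid(x, ones))            # 4 x 4 -> 2 x 2
print(conv2d_valid(x, ones, stride=2))  # 4 x 4 -> 1 x 1
```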

Compare it with the following decorator-based version, which opens the variable scope outside the function body:

```python
import tensorflow as tf  # TensorFlow 1.x API

def scope(default_name):
    def deco(fn):
        def wrapper(*args, **kwargs):
            # use the caller's name= if given, otherwise the default scope name
            name = kwargs.pop('name', default_name)
            with tf.variable_scope(name):
                return fn(*args, **kwargs)
        return wrapper
    return deco

@scope('conv2d')
def conv2d(input, filter, kernel, strides=1, stddev=0.02):
    w = tf.get_variable(
        'w',
        (kernel, kernel, input.get_shape()[-1], filter),
        initializer=tf.truncated_normal_initializer(stddev=stddev)
    )
    conv = tf.nn.conv2d(input, w, strides=[1, strides, strides, 1], padding='VALID')
    b = tf.get_variable(
        'b',
        [filter],
        initializer=tf.constant_initializer(0.0)
    )
    conv = tf.reshape(tf.nn.bias_add(conv, b), tf.shape(conv))
    return conv
```

Either way works; I prefer simply writing `with tf.variable_scope(name):` inside the function.
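The decorator's name handling can be exercised without TensorFlow. The sketch below replaces `tf.variable_scope` with a hypothetical stand-in, `variable_scope`, that only tracks a stack of scope names. `fake_conv2d` is also hypothetical and just reports the full name its `'w'` variable would get. The sketch adds `functools.wraps` so the decorated function keeps its own `__name__`.

```python
import contextlib
import functools

_scope_stack = []


@contextlib.contextmanager
def variable_scope(name):
    """Hypothetical stand-in for tf.variable_scope: only records the name."""
    _scope_stack.append(name)
    try:
        yield "/".join(_scope_stack)
    finally:
        _scope_stack.pop()


def current_scope():
    return "/".join(_scope_stack)


def scope(default_name):
    def deco(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            name = kwargs.pop("name", default_name)  # caller's name= wins
            with variable_scope(name):
                return fn(*args, **kwargs)
        return wrapper
    return deco


@scope("conv2d")
def fake_conv2d(x):
    # a real layer would call tf.get_variable('w', ...) here
    return current_scope() + "/w"


print(fake_conv2d(0))                  # default scope name
print(fake_conv2d(0, name="g_e1_c"))   # explicit scope name
with variable_scope("generator"):
    print(fake_conv2d(0, name="c1"))   # nested under the outer scope
```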

- res_block

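```python
import tensorflow as tf  # TensorFlow 1.x API

# reflect_pad and lrelu are helpers defined elsewhere; reflect_pad is expected to pad
# height and width by 1 so that each 3x3 'VALID' conv2d keeps the spatial size.
def res_block(x, dim, name='res_block'):
    with tf.variable_scope(name):
        y = reflect_pad(x, name='rp1')
        y = conv2d(y, dim, 3, name='conv1')
        y = lrelu(y)
        y = reflect_pad(y, name='rp2')
        y = conv2d(y, dim, 3, name='conv2')
        y = lrelu(y)
        # skip connection: dim must equal x's channel count so the shapes match
        return tf.add(x, y)
```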
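The shape constraint behind `tf.add(x, y)` can be checked with a one-dimensional, single-channel analogue in pure Python. This is only an illustration. `reflect_pad_1d`, `conv1d_valid` and `res_block_1d` are hypothetical. The 0.2 leak in `lrelu` is an assumed value, because the original helper is not shown. With zero kernels the residual branch outputs zero and the block reduces to the identity. This shows why a residual block can easily fall back to passing its input through.

```python
def lrelu(v, leak=0.2):
    # leaky ReLU; the 0.2 leak is an assumption
    return v if v > 0 else leak * v


def reflect_pad_1d(x, p=1):
    # mirror p elements at each end without repeating the edge values
    return [x[i] for i in range(p, 0, -1)] + list(x) + [x[-2 - i] for i in range(p)]


def conv1d_valid(x, w):
    # 'VALID' 1-D cross-correlation: output length is len(x) - len(w) + 1
    k = len(w)
    return [sum(x[i + j] * w[j] for j in range(k)) for i in range(len(x) - k + 1)]


def res_block_1d(x, w1, w2):
    y = reflect_pad_1d(x)
    y = [lrelu(v) for v in conv1d_valid(y, w1)]
    y = reflect_pad_1d(y)
    y = [lrelu(v) for v in conv1d_valid(y, w2)]
    assert len(y) == len(x), "pad 1 + 3-tap VALID conv preserves the length"
    return [a + b for a, b in zip(x, y)]


x = [1.0, -2.0, 3.0, 0.5]
print(res_block_1d(x, [0, 0, 0], [0, 0, 0]))  # zero kernels: output equals x
print(res_block_1d(x, [0, 1, 0], [0, 1, 0]))  # identity kernels: x + lrelu(lrelu(x))
```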