1.Background

Winsock kernel buffer

To optimize performance at the application layer, Winsock copies data buffers from application send calls to a Winsock kernel buffer. Then, the stack uses its own heuristics (such as Nagle algorithm) to determine when to actually put the packet on the wire.

You can change the amount of Winsock kernel buffer allocated to the socket using the SO_SNDBUF option (it is 8K by default). If necessary, Winsock can buffer significantly more than the SO_SNDBUF buffer size.

send completion in most cases

In most cases, the send completion in the application only indicates the data buffer in an application send call is copied to the Winsock kernel buffer and does not indicate that the data has hit the network medium.

The only exception is when you disable the Winsock buffering by setting SO_SNDBUF to 0.

rules to indicate a send completion

Winsock uses the following rules to indicate a send completion to the application (depending on how the send is invoked, the completion notification could be the function returning from a blocking call, signaling an event or calling a notification function, and so forth):

- If the socket is still within SO_SNDBUF quota, Winsock copies the data from the application send and indicates the send completion to the application.

- If the socket is beyond SO_SNDBUF quota and there is only one previously buffered send still in the stack kernel buffer, Winsock copies the data from the application send and indicates the send completion to the application.

- If the socket is beyond SO_SNDBUF quota and there is more than one previously buffered send in the stack kernel buffer, Winsock copies the data from the application send. Winsock does not indicate the send completion to the application until the stack completes enough sends to put the socket back within SO_SNDBUF quota or only one outstanding send condition.

https://support.microsoft.com/en-us/kb/214397

2.SO_SNDBUF & SO_RCVBUF

2.1基本说明

SO_SNDBUF

Sets send buffer size. This option takes an int value. (it is 8K by default).

SO_RCVBUF

Sets receive buffer size. This option takes an int value.

Note: SO stands for Socket Option

每个套接口都有一个发送缓冲区和一个接收缓冲区,使用SO_SNDBUF & SO_RCVBUF可以改变缺省缓冲区大小。

对于客户端,SO_RCVBUF选项须在connect之前设置.

对于服务器,SO_RCVBUF选项须在listen前设置.

2.2 Using in C/C++

int setsockopt(SOCKET s,int level,int optname,const char* optval,int optlen);

SOCKET socket = ...

int nRcvBufferLen = 64*1024;

int nSndBufferLen = 4*1024*1024;

int nLen = sizeof(int);

setsockopt(socket, SOL_SOCKET, SO_SNDBUF, (char*)&nSndBufferLen, nLen);

setsockopt(socket, SOL_SOCKET, SO_RCVBUF, (char*)&nRcvBufferLen, nLen);

TCP的可靠性

TCP的突出特点是可靠性比较好,主要是怎么实现的呢?

可靠性好不意味着不出错,可靠性好意味着容错能力强。

容错能力强就要求有 备份,也就是说要有缓存,这样的话才能支持重传等功能。

每个Socket都有自己的Send Buffer和Receive Buffer。

当进行send和recv操作时,立即返回,其实是将数据并没有发送出去,而是存放在对应的Send Buffer和Receive Buffer马上返回成功。

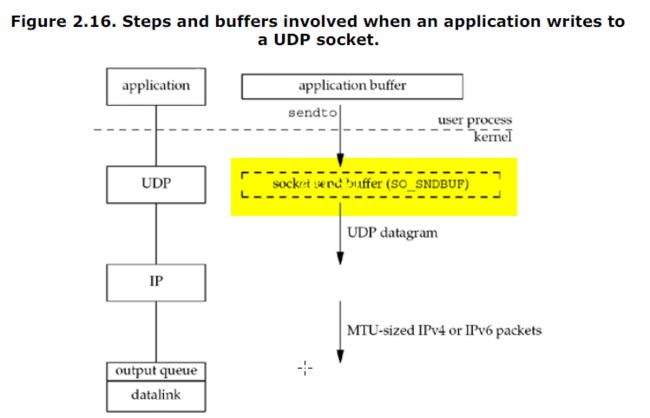

文献上send buffer的一点说明

“we show the socket send buffer as a dashed box because it doesn't really exist.

A UDP socket has a send buffer size (which we can change with the SO_SNDBUF socket option, Section 7.5), but this is simply an upper limit on the maximum-sized UDP datagram that can be written to the socket.

If an application writes a datagram larger than the socket send buffer size, EMSGSIZE is returned.

Since UDP is unreliable, it does not need to keep a copy of the application's data and does not need an actual send buffer.

(The application data is normally copied into a kernel buffer

of some form as it passes down the protocol stack, but this copy is discarded by the datalink layer after the data is transmitted.)”

(UNIX® Network Programming Volume 1, Third Edition: The Sockets Networking API,Pub Date: November 21, 2003)

根据以上《UNIX 网络编程第一卷》(此版本是2003年出版的,但是未查询到其它有效的文献)中的描述,针对UDP而言,利用SO_SNDBUF设置的值,是可写到该socket的UDP报文的最大值;如果当前程序接收到的报文大于send buffer size,会返回EMSGSIZE。

作用和意义

接收缓冲区

如何使用接收缓冲区

接收缓冲区把数据缓存入内核,应用进程一直没有调用read进行读取的话,此数据会一直缓存在相应socket的接收缓冲区内。

再啰嗦一点,不管进程是否读取socket,对端发来的数据都会经由内核接收并且缓存到socket的内核接收缓冲区之中。

read所做的工作,就是把内核缓冲区中的数据拷贝到应用层用户的buffer里面,仅此而已。

接收缓冲区buffer满之后的处理策略

接收缓冲区被TCP和UDP用来缓存网络上来的数据,一直保存到应用进程读走为止。

- TCP

对于TCP,如果应用进程一直没有读取,buffer满了之后,发生的动作是:通知对端TCP协议中的窗口关闭。这个便是滑动窗口的实现。

保证TCP套接口接收缓冲区不会溢出,从而保证了TCP是可靠传输。因为对方不允许发出超过所通告窗口大小的数据。 这就是TCP的流量控制,如果对方无视窗口大小而发出了超过窗口大小的数据,则接收方TCP将丢弃它。- UDP

当套接口接收缓冲区满时,新来的数据报无法进入接收缓冲区,此数据报就被丢弃。UDP是没有流量控制的;快的发送者可以很容易地就淹没慢的接收者,导致接收方的UDP丢弃数据报。

发送缓冲区

如何使用发送缓冲区

进程调用send发送的数据的时候,最简单情况(也是一般情况),将数据拷贝进入socket的内核发送缓冲区之中,然后send便会在上层返回。

换句话说,send返回之时,数据不一定会发送到对端去(和write写文件有点类似),send仅仅是把应用层buffer的数据拷贝进socket的内核发送buffer中。

每个UDP socket都有一个接收缓冲区,没有发送缓冲区,从概念上来说就是只要有数据就发,不管对方是否可以正确接收,所以不缓冲,不需要发送缓冲区。

SO_SNDBUF的大小

为了达到最大网络吞吐,socket send buffer size(SO_SNDBUF)不应该小于带宽和延迟的乘积。

之前我遇到2个性能问题,都和SO_SNDBUF设置得太小有关。

但是,写程序的时候可能并不知道把SO_SNDBUF设多大合适,而且SO_SNDBUF也不宜设得太大,浪费内存啊(是么??)。

操作系统动态调整SO_SNDBUF

于是,有OS提供了动态调整缓冲大小的功能,这样应用程序就不用再对SO_SNDBUF调优了。(接受缓冲SO_RCVBUF也是类似的问题,不应该小于带宽和延迟的乘积)。

Dynamic send buffering for TCP was added on Windows 7 and Windows Server 2008 R2. By default, dynamic send buffering for TCP is enabled unless an application sets the SO_SNDBUF socket option on the stream socket.

较新的OS都支持socket buffer的自动调整,不需要应用程序去调优。但对Windows 2012(和Win8)以前的Windows,为了达到最大网络吞吐,还是要应用程序操心一下SO_SNDBUF的设置。

另外,

需要注意的是,如果应用设置了SO_SNDBUF,Dynamic send buffering会失效 。https://msdn.microsoft.com/enus/library/windows/desktop/bb736549(v=vs.85).aspx

将SO_RCVBUF SO_SNDBUF设置为0 没什么好处

Let’s look at how the system handles a typical send call when the send buffer size is non-zero.

When an application makes a send call, if there is sufficient buffer space, the data is copied into the socket’s send buffers, the call completes immediately with success, and the completion is posted.

On the other hand, if the socket’s send buffer is full, then the application’s send buffer is locked and the send call fails with WSA_IO_PENDING. After the data in the send buffer is processed (for example, handed down to TCP for processing), then Winsock will process the locked buffer directly. That is, the data is handed directly to TCP from the application’s buffer and the socket’s send buffer is completely by passed.

可以看出发送数据时,如果socket的send buffer(内核层)已满,这时候应用程序的send buffer(应用层)会被锁定,send 调用返回WSA_IO_PENDING。

当send buffer中的数据已经处理完,Winsock会直接处理锁定的send buffer(应用层)。也就是说,程序跳过socket的send buffer,直接处理程序的buffer(应用层)。

The opposite is true for receiving data. When an overlapped receive call is performed, if data has already been received on the connection, it will be buffered in the socket’s receive buffer. This data will be copied directly into the application’s buffer (as much as will fit), the receive call returns success, and a completion is posted. However, if the socket’s receive buffer is empty, when the overlapped receive call is made, the application’s buffer is locked and the call fails with WSA_IO_PENDING. Once data arrives on the connection, it will be copied directly into the application’s buffer, bypassing the socket’s receive buffer altogether.

接收缓冲区的处理也是如此。

Setting the per-socket buffers to zero generally will not increase performance because the extra memory copy can be avoided as long as there are always enough overlapped send and receive operations posted. Disabling the socket’s send buffer has less of a performance impact than disabling the receive buffer because the application’s send buffer will always be locked until it can be passed down to TCP for processing. However, if the receive buffer is set to zero and there are no outstanding overlapped receive calls, any incoming data can be buffered only at the TCP level. The TCP driver will buffer only up to the receive window size, which is 17 KB—TCP will increase these buffers as needed to this limit; normally the buffers are much smaller.

These TCP buffers (one per connection) are allocated out of non-paged pool, which means if the server has 1000 connections and no receives posted at all, 17 MB of the non- paged pool will be consumed!

The non-paged pool is a limited resource, and unless the server can guarantee there are always receives posted for a connection, the per-socket receive buffer should be left intact.

Only in a few specific cases will leaving the receive buffer intact lead to decreased performance. Consider the situation in which a server handles many thousands of connections and cannot have a receive posted on each connection (this can become very expensive, as you’ll see in the next section). In addition, the clients send data sporadically. Incoming data will be buffered in the per-socket receive buffer and when the server does issue an overlapped receive, it is performing unnecessary work. The overlapped operation issues an I/O request packet (IRP) that completes, immediately after which notification is sent to the completion port. In this case, the server cannot keep enough receives posted, so it is better off performing simple non-blocking receive calls.

References:

http://pubs.opengroup.org/onlinepubs/009695399/functions/setsockopt.html

UNIX® Network Programming Volume 1, Third Edition: The Sockets Networking API,Pub Date: November 21, 2003

http://blog.csdn.net/xiaokaige198747/article/details/75388458

http://www.cnblogs.com/kex1n/p/7801343.html

http://blog.csdn.net/summerhust/article/details/6726337