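- Money isn't everything, but without money you can do nothing!

倔强的双鱼

Everyone has probably heard the saying "Money isn't everything, but without money you can do nothing." Young people may find it shallow, but the middle-aged, especially those supporting both elderly parents and young children, understand it better: life without money is a constant struggle to make ends meet. When a family is not well off, a single illness can easily crush it. The New Year's film A Little Red Flower (《送你一朵小红花》) follows the lives of two families fighting cancer and tells a warm, realistic story. Wei Yihang, played by Jackson Yee (易烊千玺), has spent a great deal on cancer treatment; his mother argues at length to save a few yuan in parking fees and scrimps on food and daily expenses, while his father secretly goes out to drive for a ride-hailing service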

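- Data structures: collected wrong answers (10)

程序员丶星霖

1. Which of the following statements about breadth-first search (BFS) are correct? ( ) Ⅰ. When all edge weights are equal, BFS can solve the single-source shortest path problem. Ⅱ. When the edge weights are unequal, BFS can be used to solve the single-source shortest path problem. Ⅲ. BFS traversal is similar to post-order traversal of a tree. Ⅳ. BFS on a graph is implemented with a queue. • A: Ⅰ, Ⅳ • B: Ⅱ, Ⅲ, Ⅳ • C: Ⅱ, Ⅳ • D: Ⅰ, Ⅲ, Ⅳ. Explanation: BFS starts from the source vertex and expands outward through the graph's vertices layer by layer, so it cannot take into account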
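Statements Ⅰ and Ⅳ can be illustrated with a minimal sketch. It assumes an unweighted graph stored as an adjacency dictionary; the names `bfs_shortest_paths` and `graph` are made up for this example and do not come from the original post.

```python
from collections import deque


def bfs_shortest_paths(graph, source):
    """Return the minimum number of edges from source to each reachable vertex."""
    dist = {source: 0}
    queue = deque([source])          # BFS uses a FIFO queue (statement IV)
    while queue:
        u = queue.popleft()
        for v in graph.get(u, []):
            if v not in dist:        # first visit is via a shortest path
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


# Hypothetical example graph: every edge has the same weight (statement I).
graph = {"A": ["B", "C"], "B": ["D"], "C": ["D"], "D": ["E"], "E": []}
distances = bfs_shortest_paths(graph, "A")
print(distances)
```

Because vertices are dequeued in order of their layer, the first time a vertex is reached is already along a path with the fewest edges. With unequal weights that argument no longer holds, which is why statement Ⅱ fails.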

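- 1000 vegetarian meals | 429-431/1000 | 20220530 breakfast, lunch, dinner

心心666

[Today's vegetarian meals] 2022-05-30, the 30th day of the fourth lunar month, Monday. Breakfast: sesame-filled tangyuan. Lunch: rice, kelp with bean sprouts, dried tofu stir-fried with pickled vegetables. Dinner: rice, kelp with bean sprouts, dried tofu stir-fried with pickled vegetables. [Excerpt] In this world, some people toil all their lives and still achieve nothing. The root cause is that they do not see where they really stand. Either they overestimate their abilities, aim high without the skill to match, and end up mediocre; or they underrate their strength, act timidly, and accomplish nothing. As the old saying goes: "He who does not lose his place endures; he who dies but does not perish has longevity." (“不失其所者久、死而不亡者寿。”)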

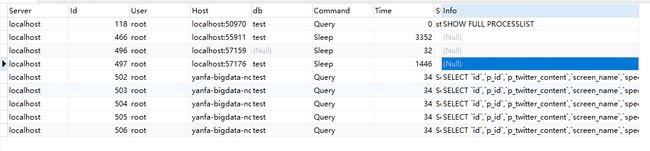

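- python big data paper: Python-based web crawler technology in a big data environment

weixin_39775976

python big data paper

Software Development. Python-Based Web Crawler Technology in a Big Data Environment. Author: Xie Kewu (谢克武), School of Software Engineering, Pass College of Chongqing Technology and Business University. Abstract: As the Internet grows, network data is increasing explosively, and traditional search engines can no longer meet people's need to obtain the data they want. As a key data-fetching component of search engines, web crawlers play a very important role. This paper first introduces the importance of web crawlers in a big data environment, then covers the concept of a web crawler, its working principle, workflow, and page crawling strategies, and the advantages of Python for writing crawlers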

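- Redis: redis.conf explained

Hollow_Knight

############################################## Basic settings ######################################### By default redis does not run as a daemon; change this to 'yes' if you need it to (run as a daemon). # When running as a daemon, redis writes the process ID to /var/run/redis.pid (the default) daemonize no (usually changed to ye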

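- Some beautiful sentences about quiet, peaceful days

金彩儿

1. Smell only the fragrance of flowers and speak not of sorrow or joy. Drink tea and read books, and do not fight over a morning or an evening. (Yang Jiang, 《将饮茶》) 2. I was always an idle man in a courtyard of locust blossoms, my lapels steeped in wine. I sit by the little pond watching the fish and pass my life amid the smoke of everyday living. (沈离淮) 3. To my mind, the finest days are these: tending flowers at dawn, brewing tea at leisure, dozing in the sun, strolling in fine rain, reading by lamplight. In this clear, quiet time, with everyday life in one hand and poetry in the other, flowers bloom and fall and clouds come and go outside the window, the aftertaste is endless, and everything is just right. (Wang Zengqi, 《慢煮生活》) 4. A person who can observe falling leaves and shy flowers, and appreciate everything in its small details, is someone life can do nothing to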

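- Recommended article: "Dean Wan of the Tongji University School of Software Engineering on Choosing a Career"

weixin_34087301

Dean Wan of the Tongji University School of Software Engineering on choosing a career. 1. On the enterprise computing track. Enterprise computing is a somewhat fashionable, nice-sounding term that mainly refers to enterprise information systems: ERP software (enterprise resource planning), CRM software (customer relationship management), SCM software (supply chain management, that is, logistics software), banking and securities software, financial software, e-commerce and e-government (including all kinds of websites), data warehouses, data mining, business intelligence, and other enterprise information management systems. Demand for people in enterprise computing is clearly always the largest in number, because this is computing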

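- React Native iOS full-stack development: best practices for cross-platform development

AI天才研究院

ChatGPT, computing, AI, artificial intelligence and big data, react native, ios, react.js, ai

React Native iOS full-stack development: best practices for cross-platform development. Keywords: React Native, iOS development, cross-platform development, full-stack development, best practices. Abstract: This article covers React Native iOS full-stack development and discusses cross-platform best practices in detail. Starting from the core concepts, it introduces React Native and iOS development and explains how they relate. It then explains the core algorithms and concrete steps in depth and analyzes them further with mathematical models and formulas. It provides hands-on project cases, including the development envir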

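- High-precision control of rotor unbalance mass in magnetic bearings: from principles to practice

FanXing_zl

magnetic bearing, magnetic levitation, magnetic bearing control, magnetic bearing unbalance-mass control, notch filter, adaptive notch filter

In a high-speed magnetic bearing system, a mass eccentricity of only a few micrometers is enough to trigger catastrophic vibration. How do you tame this "spinning beast"? The key is a precise unbalance control strategy. Introduction: the "Achilles' heel" of the high-speed rotating world. The active magnetic bearing (AMB), with its revolutionary advantages of no contact, no friction, high speed, and long life, has become the core support technology for high-end rotating machinery such as high-speed motors, centrifugal compressors, and flywheel energy storage. However, the rotor's inherent unbalanced mass distribution always hangs over it as the "Damoc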

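- The complete guide to magnetic bearing inductance testing: overcoming core technical challenges on the way to high-precision, stable control

FanXing_zl

magnetic levitation system testing, magnetic bearing control, magnetic levitation, magnetic levitation control, magnetic levitation systems

Behind the outstanding performance of magnetic bearings, the accuracy of inductance testing is the core guarantee: this seemingly simple parameter decides whether the system succeeds or fails. Introduction: the appeal of magnetic levitation and the "hidden reef" of inductance testing. The active magnetic bearing (AMB), with its revolutionary advantages of no contact, no friction, high speed, and no need for lubrication, shows great potential in high-speed motors, flywheel energy storage, precision manufacturing, aerospace, and other fields. It keeps the rotor stably levitated with electromagnetic force under real-time control, free of the physical limits of conventional mechanical bearings. However, precise levi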

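- Core techniques of translational control in magnetic bearings: from PID to adaptive robust control

FanXing_zl

magnetic bearing, magnetic levitation, magnetic bearing control, magnetic levitation, magnetic bearing, magnetic levitation control algorithms

In the kingdom of high-speed rotating machinery, translational accuracy decides whether a system lives or dies, and the magnetic bearing is the "contactless dancer" of this field. As a revolutionary contactless support technology, the magnetic bearing removes the friction, wear, and lubrication limits of conventional mechanical bearings. Its core advantage is that electromagnetic force keeps the rotor stably levitated, and translational control is the key technique that keeps the rotor precisely levitated in the axial and radial directions. This article examines the principles, implementation paths, and trends of translational control. 01 Basic principles of translational control in magnetic bearings. A magnetic bearing system is a typical mechatronic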

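- Book notes: 《精英习惯》 (Elite Habits)

victoria李小薇

Gaps do not open up overnight; they come from habits. Every workplace high performer relies on three habits. Habit 1: Be proactive. Human nature is fundamentally active rather than passive: we can not only choose how to respond, but also actively create favorable conditions. Taking the initiative does not mean being pushy, obnoxious, or aggressive; it only means not evading the responsibility of building your own future. This is hard to do. Being proactive often means giving up rare leisure time, facing a string of hard problems, and bearing the risk that comes with responsibility. Compared with being "proactive", being "passive" is sometimes the safer choice. But to become a workplace high performer, we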

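- A one-sentence reflection

杀猪就一刀

I often hear the question: would you rather be a happy pig or an unhappy thinker? At first it sounds reasonable, but after thinking it over I disagree. However happy a pig is, it will always be a pig; a thinker who has thought things through can be happy too, and is, after all, a human being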

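- [Python crawler (26)] Advanced Python crawling: the magic handbook of data cleaning and preprocessing

奔跑吧邓邓子

Python crawler, python, crawler, programming languages, data cleaning, preprocessing

[Python Crawler] About this column: this column is a comprehensive work on Python crawling, 100 chapters in all. It starts from basic Python syntax and crawler fundamentals and goes deep into advanced topics such as anti-crawling measures, multithreading, and distributed crawling. Backed by many examples, it covers crawling web pages, images, audio, and other data, and also touches on data processing and analysis. Beginners and advancing developers alike can learn from it, master core crawling skills, and broaden their technical horizons. Contents: 1. The importance of data cleaning 2. Common data cleaning tasks 2.1 Removing noisy data 2.2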

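- 2019-01-14 Improving how life feels

与乱

I bought a new mouse. It is white, feels great in the hand, and looks good too. It is comfortable to use, but it was not cheap, at least not for me right now. I have bought quite a few things that were not cheap: a suitcase, clothes, a keyboard, headphones, and so on. At my current salary I can afford them, but I still feel guilty. These things really do make my life feel a little better. But I wonder whether there are other, more pressing things. I need to restrain myself.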

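- [RK3568 embedded Linux Qt development notes] Cross-compiling the open-source QR code library libqrencode as a static library and using it

This article is based on: https://blog.csdn.net/qq_41630102/article/details/108306720 The referenced article has some omissions, which left the .a file I built while learning unable to run on the RK3568 platform, so I wrote this article with corrections. These are only my own study notes and are not very detailed. 1: Download the package https://download.csdn.net/download/qq_41630102/12781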

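- Why do we have fewer and fewer friends?

东心子

The older we get, the fewer friends we have. I have tried to work out why. Apart from a personality that grows ever more passive, the bigger reason is a natural lack of confidence in any relationship outside blood ties. I know well that building and maintaining a relationship takes a great deal of time and energy, and as the years pass people become more cautious in the face of so many uncertainties. We dare not approach others first and fear pointless investment even more; we only want to stay in our comfort and safety zones and spend precious time and energy on relationships that are naturally easier to get close to. This seems a sad thing, because people have become more and more pragmatic. But it also seems inevitable,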

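- 《恶鬼道》 murder mystery game: recap and analysis + who is the murderer + the truth + character spoilers

VX搜_小燕子复盘

To give you a better game experience, this article shows only part of the truth recap for the murder mystery game 《恶鬼道》. To get the full recap, just take two steps: ① [follow the WeChat official account: 集美复盘] ② reply [恶鬼道] to view it. 1. Character introduction for the murder mystery game 《恶鬼道》. "无恐不入" is an online show dedicated to filming on location and investigating paranormal phenomena. Each episode invites former residents of the haunted location being filmed to return as guests and stay at the haunted place for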

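- 117. Python machine learning: data preprocessing and feature engineering techniques

多多的编程笔记

python, machine learning, programming languages

Preparing for machine learning in Python: data preprocessing and feature engineering. Machine learning is one of the hottest directions in artificial intelligence today. As a core part of machine learning, data preprocessing and feature engineering have a crucial impact on model performance. This article introduces the basic concepts of data preprocessing and feature engineering and their importance in real applications. Data preprocessing: data preprocessing is the first step in machine learning. Its main purpose is to convert raw data into a form suitable for training machine learning models. Just as, before cooking, we need to wash and prepare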

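- The advantages of direct-rebate (直返) apps: why they have become users' first choice

氧惠帮朋友一起省

How high a direct rebate is depends on the specific platform and product; different platforms and products may have different rebate rates and rules. In general, though, direct rebates are relatively high, because direct-rebate platforms usually give consumers cash back, coupons, or similar rebates directly, without requiring extra steps or special conditions. The 氧惠 app (a leader in product promotion) is a Douyin-affiliate plus Taobao-affiliate app completely unlike earlier ones! Under its all-new 2023 model, my direct referrals are also placed under you. Its main selling point is high subsidies for product promotion, much liked by promotion team leaders (hundreds of thousands of orders promoted every day)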

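- How renovation companies can raise revenue and find customers

孔磊_1f81

How can a home renovation company break through? How can it add several hundred thousand or even over a million yuan in monthly income on top of its existing business without spending more on promotion, and get past the limits of the traditional renovation business? This article introduces a new service as an entry point; adding it can bring several hundred thousand or even over a million yuan in revenue growth each year. The service is indoor air treatment. As the economy develops and living standards rise, people care about more than attractive design and genuine materials when renovating a new home; they pay more and more attention to environmental safety. A well-renovated home is a symbol of warmth, comfort, and health, and without health a home loses its real meaning.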

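- How to operate a remote server from a Linux terminal window

① Pick a suitable cloud platform, rent a machine, and copy the remote machine's IP address. ② Open a terminal window with administrator privileges. ③ Enter the command to connect to the remote machine: ssh 用户名@服务器IP地址 (that is, ssh username@server-IP-address). At this point you have a machine with nothing installed. ④ Since the machine has nothing installed, first run sudo apt update, then install the gcc compiler with sudo apt install build-essential, then install python with sudo apt install python3.8, and then install mi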

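- Parenting diary, entry 386

海内存知己_bd9e

Parenting diary, entry 386. Friday, December 14, 2018, sunny. Time flies; it is Friday again, the day school lets out. Although we were off in the afternoon, I had to write the monthly exam paper, so I stayed in the office working overtime alone. A person's energy is limited: the more I put into work, the less I can keep up with things at home, including my child's studies. Lately I not only teach every day but also write lesson plans, make slides, and prepare study guides. I am so busy that when I get home I do not feel like doing anything. Fortunately my son is fairly self-disciplined about studying: he not only finishes his homework efficiently but also helps me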

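- Redis: understanding and using the API

莫问以

I. Global commands

1. List all keys: keys *

The following inserts three string key-value pairs:

127.0.0.1:6379> set hello world

OK

127.0.0.1:6379> set java jedis

OK

127.0.0.1:6379> set python redis-py

OK

The keys * command outputs all keys:

127.0.0.1:6379> keys *

1) "python"

2) "java"

3) "hello"

2. Total number of keys: dbsi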

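- Integrating a third-party verification-code SMS API in PYTHON

短信接口开发

PYTHON SMS API integration demo # API type: the 互亿无线 triggered SMS API, which supports verification-code SMS, order-notification SMS, and so on. # Account registration: open an account at http://user.ihuyi.com/?DKimmu # Notes: # (1) While debugging, use the system default SMS text: 您的验证码是:【变量】。请不要把验证码泄露给其他人。 # (2) Call the API with your APIID and APIKEY, which are available in the member center; # (3) This code is provided only for integrating with 互亿无线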

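- C# Linq source code analysis: Concat

黑哥聊dotNet

DotNet-Linq explained, linq, c#

Preface: In Dotnet development, Concat is a very commonly used extension method on IEnumerable. This article briefly analyzes the key source code of Concat to help you use the method better. Usage: Concat joins two sequences. Suppose we have the following two collections and need to join them: List<string> lst = new List<string> { "张三", "李四" }; List<string> lst2 = new List<string> { "王麻子" }; Without Linq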

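- Do you prefer your 18-year-old self or your present self

够潮

1. Today I chatted with my sister-in-law, who was born in the 1990s. As someone born in the 1980s, I really envy this young woman who knows exactly what she wants. At 28, with no boyfriend, she quit her bank job and threw herself into running her own 裹卷 (wrap) shop. To put customers at ease, this pretty young woman named the shop 老张妈. In our almost second-tier city, owning five or six apartments counts as doing well, yet she insists on earning the money to buy a home she likes. Middle-aged and tactless, I asked her: your family has no shortage of apartments, so why work so hard? She said: I don't like those apartments; I want one in the city center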

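- Redis performance testing: tools, parameters, and practical examples

Seal^_^

database column, #database--Redis, redis, database, Redis performance testing

Redis performance testing: tools, parameters, and practical examples. 1. Overview of Redis performance testing 2. Basic use of redis-benchmark 2.1 Basic syntax 2.2 A simple example 3. Performance test parameters in detail 4. Practical test examples 4.1 Basic test 4.2 Testing specific commands 4.3 Testing with random keys 4.4 Large-data test 4.5 Pipeline test 5. Performance testing flowchart 6. Analyzing results and optimization advice 6.1 Reading the results 6.2 Optimization advice 7. Advanced test scenarios 7.1 Testing the impact of persistence 7.2 Cluster testing 7.3 Long-running stability tes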

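- C# Linq source code analysis: Aggregate

黑哥聊dotNet

DotNet-Linq explained, c#, linq, list

Preface: In Dotnet development, Aggregate is a very commonly used extension method on IEnumerable. This article briefly analyzes the key source code of Aggregate to help you use the method better. Usage: Aggregate applies an accumulator function over a sequence. Consider the following code: List<string> lst = new List<string>() { "张三", "李四", "王麻子" }; Given this list, we want to obtain "张三哈哈哈李四哈哈哈王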
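As a rough analogue of applying an accumulator over a sequence, here is a short sketch using Python's `functools.reduce`. It is not the C# code from the article. The excerpt's target string is cut off, so appending "哈哈哈" after every name, including the last one, is an assumption.

```python
from functools import reduce

names = ["张三", "李四", "王麻子"]

# Accumulator function: take the running string and append the next name
# plus the suffix. The empty string is the seed value.
# Assumption: the suffix also follows the last name; the excerpt is truncated.
result = reduce(lambda acc, name: acc + name + "哈哈哈", names, "")
print(result)
```

The seed plays the role of the initial accumulator value; each step folds one more element into the running result.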

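- Multithreaded programming: depositing and withdrawing money

周凡杨

java, thread, multithreading, deposit, withdraw

The living-expenses problem goes like this: a student needs living expenses every month, and the parent deposits enough for a period of time in one go. The parent and the student share a single account. The student withdraws part of the money each time until the account is empty and then notifies the parent to deposit; the parent does not deposit while the account still has money and deposits only once it is empty.

Analysis: the problem has three entities (student, parent, and bank account), so the program should be designed with three classes. There is only one bank account, and the student and the parent operate on that same account. The student's behavior is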
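The coordination described above can be sketched with a condition variable. This is a minimal Python sketch, not the article's Java code. The class name `Account`, the amounts, and the number of rounds are made up for illustration, and it assumes each deposit is an exact multiple of the withdrawal amount so the balance always returns to zero.

```python
import threading


class Account:
    """Shared account guarded by a condition variable."""

    def __init__(self):
        self.balance = 0
        self.log = []
        self.cond = threading.Condition()

    def deposit(self, amount):
        with self.cond:
            # The parent deposits only when the account is empty.
            while self.balance > 0:
                self.cond.wait()
            self.balance += amount
            self.log.append(("deposit", amount))
            self.cond.notify_all()

    def withdraw(self, amount):
        with self.cond:
            # The student waits until there is enough money.
            while self.balance < amount:
                self.cond.wait()
            self.balance -= amount
            self.log.append(("withdraw", amount))
            # Wake the parent; it re-checks the balance itself.
            self.cond.notify_all()


def parent(account, rounds, amount):
    for _ in range(rounds):
        account.deposit(amount)


def student(account, times, amount):
    for _ in range(times):
        account.withdraw(amount)


account = Account()
threads = [
    threading.Thread(target=parent, args=(account, 2, 300)),
    threading.Thread(target=student, args=(account, 6, 100)),
]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(account.log)
```

Both sides wait inside a `while` loop, so a thread that wakes up re-checks its own condition before touching the balance. That is what keeps the parent from depositing while money remains.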

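- Converting between arrays and List in Java

征客丶

JavaScript, java, jsonp

1. Converting a List to an array (here the List is actually an ArrayList).

Call ArrayList's toArray method.

toArray

public <T> T[] toArray(T[] a) returns an array containing all of the elements of this list in proper sequence; the runtime type of the returned array is that of the specified array. If the list fits in the specified array, it is returned in that array. Otherwise, based on the runtime type of the specified array and the size of this list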

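- Shell flow control

daizj

flow control, if else, while, case, shell

Shell flow control

Unlike Java, PHP, and similar languages, a branch in sh flow control cannot be empty. For example (the following is PHP flow control):

<?php

if (isset($_GET["q"])) {

    search(q);

}

else {

    // do nothing

}

You cannot write it this way in sh/bash: if the else branch has nothing to execute, do not write the else at all.

if else

if

if statement syn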

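- Linux server basics for beginners, part 2

周凡杨

Linux, basics, operations

1. Searching the man pages by keyword: man -k keyword, where -k is the option and keyword is the term to search for. Suppose you want to use the whoami command but remember only the first three characters, who. You can run man -k who to search the man pages for the keyword who. [haself@HA5-DZ26 ~]$ man -k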

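- Socket chat room: building the server

朱辉辉33

socket

Because we are building a chat room, there will be multiple clients. We handle each client with its own thread and build a server that reads messages from, and sends messages to, every client.

Let's write the client thread first.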

public class ChatSocket extends Thread{

Socket socket;

public ChatSocket(Socket socket){

this.sock

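- Approach and methods for building an insurance company's decision analysis system with finereport

老A不折腾

finereport, finance, insurance, analysis system, reporting system, project development

A decision analysis system presents data pages, commonly known as reports. Reports are linked to other reports, and data to other data, by defined logic. It is the platform where business staff view and analyze data, and also a platform that supports management's operating decisions. The underlying data determines the analysis built on it, so building a decision analysis system usually includes data-layer processing (building a data warehouse).

Project background

Insurance companies are usually highly computerized. They generally have business processing systems (such as the group business system, the legacy business system, and the individual agent system) and data service systems (through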

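- Keeping a page at the top level of the iframe at all times

林鹤霄

index.jsp contains an iframe, but after the session expires login.jsp should always be displayed at the very top level of the iframe. I could not get this to work for a long time; after thinking it over repeatedly I found a solution, which I am sharing here.

index.jsp ---> the key part is the highlighted line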

<html>

<iframe name="top" ></iframe>

<ifram

- Recovering data with the MySQL binlog

aigo

mysql

1. First make sure the binlog is configured in my.ini:

# binlog

log_bin = D:/mysql-5.6.21-winx64/log/binlog/mysql-bin.log

log_bin_index = D:/mysql-5.6.21-winx64/log/binlog/mysql-bin.index

log_error = D:/mysql-5.6.21-win

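- Packaging an OCX into a CAB file with automatic installation and automatic upgrade

alxw4616

ocx, cab

Recently I had a project that needed an ocx control

(What is ocx?

http://baike.baidu.com/view/393671.htm)

During production I ran into the following problems.

1. How do I make the ocx install automatically?

a) How do I sign it?

b) How do I package it?

c) How do I install it to a specified directory?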

2.

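- Using HashMap and PriorityQueue

百合不是茶

Hashmap, PriorityQueue

I had already studied HashMap, but I was rusty when using it recently, so I just went over it again,

A HashMap stores K, V pairs: keys and values

put() adds an element

// Below, HashMap is used to remove duplicates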

package com.hashMapandPriorityQueue;

import java.util.H

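- JDK1.5 returnvalue example

bijian1013

java, thread, java multithreading, returnvalue

The Callable interface:

A task that returns a result and may throw an exception. Implementors define a single method with no arguments called call.

The Callable interface is similar to Runnable, in that both are designed for classes whose instances may be executed by another thread. A Runnable, however, does not return a result and cannot throw a checked exception.

ExecutorService interface meth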
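The idea of a task that returns a result or raises can be shown with a rough analogue in Python's `concurrent.futures`. This is not Java's `Callable` or `ExecutorService`, only a sketch of the same pattern, and the task names `compute_sum` and `failing_task` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def compute_sum(n):
    """A task that returns a result, similar in spirit to Callable.call()."""
    return sum(range(1, n + 1))


def failing_task():
    """A task that raises; the error surfaces when the result is requested."""
    raise ValueError("task failed")


with ThreadPoolExecutor(max_workers=2) as pool:
    ok = pool.submit(compute_sum, 100)   # returns a Future immediately
    bad = pool.submit(failing_task)
    total = ok.result()                  # blocks until the value is ready
    try:
        bad.result()                     # re-raises the task's exception here
        error = None
    except ValueError as exc:
        error = str(exc)

print(total, error)
```

The caller gets a handle right away and collects the value, or the exception, later. A fire-and-forget task, as with `Runnable`, gives the caller neither.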

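- Dynamic compilation in an angularjs directive (for cases with asynchronous requests): nested directives do not work

bijian1013

JavaScript, AngularJS

The directive's link function makes an $http request. When the request completes, it dynamically calls element.append('......'); based on the returned value. The content displays fine, but the HTML I build dynamically contains ng-click, and I found that the displayed content never runs the method bound by ng-click!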

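- [Java Generics 2] Java generics in detail: restricting generic parameter types with extends

bit1129

extend

In the first article, generic classes were defined like this:

public class Generics<M, S, N> {

//M, S, N are generic parameters

}

A generic class defined this way has two basic problems:

1. For an instance field declared with a generic parameter, such as private M m = null;, the type of M is determined only at run time, so we cannot use m inside the class's methods, which is like defining pri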

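- [HBase 13] Summary of HBase key points

bit1129

hbase

1. What triggers a flush of data from the MemStore to disk?

a. An explicit flush call, for example flush 'mytable'

b. The amount of data in the MemStore exceeds the configured flush size, hbase.hregion.memstore.flush.size, whose default is 64 MB (in older releases; current releases default to 128 MB)

2. What does a Region consist of?

A Region consists of several Stores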

- Shell script for defending a server against DDoS attacks

ronin47

mkdir /root/bin

vi /root/bin/dropip.sh

#!/bin/bash

/bin/netstat -na|grep ESTABLISHED|awk '{print $5}'|awk -F: '{print $1}'|sort|uniq -c|sort -rn|head -10|grep -v -E '192.168|127.0'|awk '{if($2!=null&a

- Java programmer's survival handbook: craps, a simple game

bylijinnan

java

import java.util.Random;

public class CrapsGame {

/**

*

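* A simple gambling game with the following rules:

* The player rolls two dice, each showing 1 to 6. If the first roll totals 7 or 11, the player wins;

* if the total is 2, 3, or 12, the player loses;

* for any other total, record that first total and keep rolling until the total equals the first total rolled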
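The rules in the comment above can be sketched as follows. This is a Python sketch, not the article's Java class. The comment is cut off before the end of the last rule, so the losing condition after a point is set (rolling a 7) is an assumption taken from the usual craps rules. The names `play_craps` and `two_dice` are made up for this example.

```python
import random


def play_craps(next_sum):
    """Play one game. next_sum() returns the total of two dice. True means a win."""
    first = next_sum()
    if first in (7, 11):
        return True               # win on the first roll
    if first in (2, 3, 12):
        return False              # lose on the first roll
    point = first                 # remember the first total
    while True:
        total = next_sum()
        if total == point:
            return True           # matched the first total
        if total == 7:
            return False          # assumption: the usual craps losing rule


def two_dice(rng):
    """Roll two six-sided dice and return their total."""
    return rng.randint(1, 6) + rng.randint(1, 6)


rng = random.Random(2024)
games = 10_000
wins = sum(play_craps(lambda: two_dice(rng)) for _ in range(games))
print(f"won {wins} of {games} games")
```

Passing the dice as a callable keeps the rules separate from the randomness, so a scripted sequence of totals can check each branch.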

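- Fixing the Tomcat startup message NB: JAVA_HOME should point to a JDK not a JRE

开窍的石头

JAVA_HOME

With an unzipped Tomcat, starting it from eclipse works fine, but starting it by clicking startup.bat fails;

The error is as follows: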

The JAVA_HOME environment variable is not defined correctly

This environment variable is needed to run this program

NB: JAVA_HOME shou

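- [Operating system kernels] Operating systems and the Internet

comsci

operating systems

Let me state first: the issues I raise here are not aimed at any vendor; they are only my views on operating system technology

An operating system is system software closely tied to the hardware layer. In principle, such system software should be developed by the vendors who design CPUs and hardware boards and has no direct connection to software companies. In other words, operating systems should be designed and developed by hardware vendors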

- Basic usage of the ckeditor_4.4.7 rich text editor, with IE11 support

cuityang

rich text editor

<html xmlns="http://www.w3.org/1999/xhtml">

<head>

<meta http-equiv="Content-Type" content="text/html; charset=UTF-8" />

<title>知识库内容编辑</tit

- Property null not found

darrenzhu

datagridFlexAdvancedpropery null

When you get an error message like "Property null not found ***", try to fix it in the following way:

1)if you are using AdvancedDatagrid, make sure you only update the data in the data prov

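- Using MySQL's string replacement function

dcj3sjt126com

mysql, functions, replace

Requirement: replace every . with _ in the values of one column of a table

The original data is site.title site.keywords ....

After replacement it should be site_title site_keywords

The SQL statement used is: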

updat
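The excerpt's own statement is cut off. As a hedged illustration of the technique only, the sketch below runs an `UPDATE ... SET col = REPLACE(col, '.', '_')` statement against an in-memory SQLite database through Python's `sqlite3` module. It uses SQLite rather than MySQL, though both provide a three-argument `REPLACE` string function. The table name `settings` and the column name `name` are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical table and column names, used only for this sketch.
conn.execute("CREATE TABLE settings (name TEXT)")
conn.executemany(
    "INSERT INTO settings (name) VALUES (?)",
    [("site.title",), ("site.keywords",)],
)

# Replace every '.' with '_' in the column's values.
conn.execute("UPDATE settings SET name = REPLACE(name, '.', '_')")

rows = [row[0] for row in conn.execute("SELECT name FROM settings ORDER BY name")]
print(rows)
conn.close()
```

Without a `WHERE` clause the update touches every row. On real data it is safer to filter, for example with `WHERE name LIKE '%.%'`.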

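- How to start MySQL from the terminal on a Mac

dcj3sjt126com

mysql, mac

First download it from the official site: http://www.mysql.com/downloads/

I downloaded the 5.6.11 dmg and installed it. After installation, if you want to work with SQL in the terminal, you first have to type the long path /usr/local/mysql/bin/mysql

That is inconvenient. I wanted to type just mysql -uroot -p1, as in cmd on Windows. I looked it up online, and it can be done.

Open a terminal and type: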

1

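- Using Gson, part 1 (Gson)

eksliang

json, gson

When reposting, please cite the source: http://eksliang.iteye.com/blog/2175401

I. Overview

Structurally, all JSON data can ultimately be broken down into three types:

The first type is the scalar, a single string or number, such as the string "ickes".

The second type is the sequence, also called an array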
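These structural types can be seen by parsing small JSON texts with Python's standard `json` module. This sketch is not Gson code. The excerpt is cut off before naming the third type; it is shown here as a mapping (a JSON object), which is an assumption based on how JSON is commonly described. The sample values other than "ickes" are made up.

```python
import json

scalar = json.loads('"ickes"')               # a single string
sequence = json.loads('["ickes", 27]')       # an ordered array of values
mapping = json.loads('{"name": "ickes"}')    # assumed third type: key-value pairs

print(type(scalar).__name__, type(sequence).__name__, type(mapping).__name__)
```

In Python the three come back as `str`, `list`, and `dict`. A library such as Gson maps the same three shapes onto Java strings and numbers, arrays or lists, and objects or maps.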

- Android tidbits 4

gundumw100

android

47 Android tips

http://www.open-open.com/lib/view/open1422676091314.html

Seven handy Android code snippets (1)

http://www.cnblogs.com/over140/archive/2012/09/26/2611999.html

http://www.cnblogs.com/over140/arch

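- JavaWeb: basic JSP syntax

ihuning

javaweb

Contents

JSP template elements

JSP expressions

JSP scriptlets

EL expressions

JSP comments

Escaping special character sequences

How to find errors in a JSP page

JSP template elements

Static HTML content in a JSP page is called a JSP template element. Within the static HTML content you can nest JSP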

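- App Extension Programming Guide (iOS8/OS X v10.10), Chinese edition

啸笑天

ext

With the release of iOS 8.0 and OS X v10.10, a brand-new concept appeared: app extensions. As the name suggests, an app extension lets developers extend an app's custom functionality and content so that users can access it while using other apps. You can build an app extension to perform a specific task, and once users enable the extension they can perform that task in many contexts. For example, if you provide an extension that lets users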

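- Implementing an unlimited-depth tree structure in SQL Server

macroli

oracle, sql, SQL Server

The table structure is as follows:

| id | path | title | sort (排序) |
|---|---|---|---|
| 1 | 0 | 首页 (Home) | 0 |
| 2 | 0,1 | 新闻 (News) | 1 |
| 3 | 0,2 | JAVA | 2 |
| 4 | 0,3 | JSP | 3 |
| 5 | 0,2,3 | 业界动态 (Industry news) | 2 |
| 6 | 0,2,3 | 国内新闻 (Domestic news) | 1 |

Create a stored procedure to implement it. To use it on a page, you can set a return variable to pass the value back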

create procedure test

as

begin

decla

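- Centering a div, an img, and text with CSS, and vertically centering a div

qiaolevip

observing all things, learning never ends, a little progress every day, css

/**********Center a div with CSS**********/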

div.center {

width: 100px;

margin: 0 auto;

}

/**********Center an img with CSS**********/

img.center {

display: block;

margin-left: auto;

margin-right: auto;

}

- Common Oracle operations (practical)

吃猫的鱼

oracle

SQL>select text from all_source where owner=user and name=upper('&plsql_name');

SQL>select * from user_ind_columns where index_name=upper('&index_name');

Restore table records to their state before a specified point in time

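- Encrypting and decrypting data with RSA on iOS

witcheryne

ios, rsa, iPhone, objective c

RSA is an asymmetric encryption algorithm that is often used to encrypt data in transit. Combined with a digest algorithm, it can also be used to sign files.

This article discusses how to transmit encrypted data with RSA on iOS.

Environment

mac os

openssl-1.0.1j; a 1.x version of openssl is required, and installing it with [homebrew](http://brew.sh/) is recommended.

Java 8

RSA fundamentals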

RS
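The article's own explanation is cut off here. As a hedged illustration of the arithmetic behind RSA, the sketch below uses deliberately tiny textbook primes. It is a toy and not secure: real use relies on a vetted library such as openssl, large keys, and padding. The specific numbers are assumptions chosen for readability, and `pow(e, -1, phi)` requires Python 3.8 or later.

```python
# Toy RSA with tiny primes, for illustration only.
p, q = 61, 53                 # two small primes (assumed example values)
n = p * q                     # public modulus
phi = (p - 1) * (q - 1)       # Euler's totient of n
e = 17                        # public exponent, coprime with phi
d = pow(e, -1, phi)           # private exponent: modular inverse of e

message = 65                  # a message encoded as an integer smaller than n
cipher = pow(message, e, n)   # encrypt with the public key (e, n)
plain = pow(cipher, d, n)     # decrypt with the private key (d, n)

print(cipher, plain)
```

Encryption and decryption are the same modular exponentiation with different exponents. What makes the scheme asymmetric is that `d` cannot be derived from `(e, n)` without factoring `n`.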
