Time: 2017.9.15

Targets: Process Kafka data in real time

Owner: C. L. Wang

Code

Import the Kafka JAR (Maven dependency in the pom):

groupId: org.apache.kafka

artifactId: kafka_2.12

version: 0.11.0.0

Kafka server addresses

cat /etc/hosts

10.215.33.xx md3 m3 hive_server hue_server hive_server.chunyu.me zk_share_3 zk_kafka_3 log_kafka_1

10.215.33.xx md6 log_kafka_2

10.215.33.xx md11 log_kafka_3

Kafka data format, i.e. ConsumerRecord

record: ConsumerRecord(

topic = elapsed.log,

partition = 1,

offset = 42418829,

CreateTime = 1505455758331,

serialized key size = -1,

serialized value size = 906,

headers = RecordHeaders(headers = [], isReadOnly = false),

key = null,

value={

"@timestamp": "2017-09-15T06:09:18.331Z",

"beat": {

"hostname": "db06",

"name": "db06",

"version": "5.5.2"

},

"input_type": "log",

"log_name": "elapsed",

"log_type": "project",

"message": "2017-09-15 14:09:17,328 INFO log_utils.log_elapsed_info Line:134 Time Elapsed: 0.073685s, Path: /api/problem/detail/user_view/, Code: 200, Get: [u'installId=1497448830616', u'vendor=xiaomi', u'app=0', u'secureId=c0e6aa0a403c760d', u'platform=android', u'mac=02:00:00:00:00:00', u'version=8.4.0', u'limit=120', u'phoneType=MI NOTE LTE_by_Xiaomi', u'imei=867993021875040', u'app_ver=8.4.0', u'systemVer=6.0.1', u'problem_id=576822674', u'device_id=867993021875040', 'uid=3636454'], Post: [], 112.67.96.208, Chunyuyisheng/8.4.0 (Android 6.0.1;MI+NOTE+LTE_by_Xiaomi), view_name: ask.view.problem_views.problem_detail_for_user_view, ",

"offset": 5708029368,

"source": "/home/chunyu/backup/django_log/elapsed_logger.log-20170915",

"type": "log"

}

)
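The useful payload of each record is the `message` string inside the JSON value. As a minimal sketch of how the elapsed time, path, and status code could be pulled out of it, the helper below uses a regular expression written against the sample record above. `ElapsedLogParser` and `Entry` are hypothetical names, not part of the original project, and the pattern assumes every message follows the `Time Elapsed: ...s, Path: ..., Code: ...` layout seen in that sample.

```java
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper (not from the original project): parses the "message" field.
final class ElapsedLogParser {
    // Assumed layout, taken from the sample record:
    // "... Time Elapsed: 0.073685s, Path: /api/.../, Code: 200, ..."
    private static final Pattern PATTERN = Pattern.compile(
            "Time Elapsed: (\\d+(?:\\.\\d+)?)s, Path: ([^,\\s]+), Code: (\\d+)");

    // Plain value holder for the three extracted fields.
    static final class Entry {
        final double elapsedSeconds;
        final String path;
        final int code;

        Entry(double elapsedSeconds, String path, int code) {
            this.elapsedSeconds = elapsedSeconds;
            this.path = path;
            this.code = code;
        }
    }

    // Returns an empty Optional when the message does not match the assumed layout.
    static Optional<Entry> parse(String message) {
        Matcher m = PATTERN.matcher(message);
        if (!m.find()) {
            return Optional.empty();
        }
        return Optional.of(new Entry(
                Double.parseDouble(m.group(1)),
                m.group(2),
                Integer.parseInt(m.group(3))));
    }
}
```

Returning an `Optional` rather than throwing keeps a single malformed log line from stopping the consumer loop.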

Kafka data-reading class

import com.google.gson.Gson;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;

import java.util.Arrays;
import java.util.List;
import java.util.Properties;

public class KafkaMain implements ILaMain {
    // Kafka server addresses
    private static final String KAFKA_SERVERS = "log_kafka_1:9092,log_kafka_2:9092,log_kafka_3:9092";
    private static final String DEF_GROUP_ID = "test"; // group ID used for testing

    private final String[] mTopics;
    private final KafkaConsumer<String, String> mConsumer;
    private final ILaManager mKafkaManager;

    /**
     * Constructor; a topic is a data source.
     * Topics used for log processing: {@link me.chunyu.log_analysis.utils.LaValues.Topics}
     *
     * @param topics topics to subscribe to
     */
    public KafkaMain(String[] topics) {
        mConsumer = new KafkaConsumer<>(createProperties(KAFKA_SERVERS, DEF_GROUP_ID));
        mTopics = topics;
        mKafkaManager = KafkaManager.getInstance();
    }

    private Properties createProperties(String servers, String groupId) {
        Properties props = new Properties();
        props.put("bootstrap.servers", servers);
        props.put("group.id", groupId);
        // Auto commit is on by default; stated explicitly because the interval below depends on it
        props.put("enable.auto.commit", "true");
        props.put("auto.commit.interval.ms", "1000");
        props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        return props;
    }

    private void shutdown() {
        mConsumer.close();
    }

    @Override
    public void run() {
        List<String> topics = Arrays.asList(mTopics);
        mConsumer.subscribe(topics);
        Gson gson = new Gson();
        System.out.println("++++++++++++++++++++ Kafka: receiving data ++++++++++++++++++++");
        try {
            while (true) {
                // A single poll may return several records
                ConsumerRecords<String, String> records = mConsumer.poll(1000);
                for (ConsumerRecord<String, String> record : records) {
                    System.out.println(record.toString());
                    // The value is a JSON string; only its "message" field is processed
                    KafkaValueEntity entity = gson.fromJson(record.value(), KafkaValueEntity.class);
                    mKafkaManager.process(entity.message);
                }
                if (!records.isEmpty()) { // for testing only: stop after the first non-empty batch
                    break;
                }
            }
        } finally {
            shutdown();
        }
        System.out.println("++++++++++++++++++++ Kafka: stopped ++++++++++++++++++++");
    }
}

Configuration

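Kafka port: 9000 (this appears to be the port of the management pages shown below; the brokers themselves are reached on 9092, as in KAFKA_SERVERS)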

Kafka configuration: 5 partitions; retention time of 1 day.
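The partition count bounds how many consumers in one group can do useful work, because each partition is read by at most one consumer of a group at a time. The sketch below illustrates this with a simplified round-robin spread. It is not Kafka's actual assignment strategy, and `PartitionSpread` with its methods are hypothetical names introduced only for this illustration.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical illustration: spreads partitions over the consumers of one group.
final class PartitionSpread {
    // Simplified round-robin: partition p goes to consumer (p % consumers).
    static List<List<Integer>> assign(int partitions, int consumers) {
        if (consumers < 1) {
            throw new IllegalArgumentException("need at least one consumer");
        }
        List<List<Integer>> result = new ArrayList<>();
        for (int c = 0; c < consumers; c++) {
            result.add(new ArrayList<>());
        }
        for (int p = 0; p < partitions; p++) {
            result.get(p % consumers).add(p);
        }
        return result;
    }

    // Number of consumers that end up with no partition at all.
    static long idleConsumers(int partitions, int consumers) {
        return assign(partitions, consumers).stream().filter(List::isEmpty).count();
    }
}
```

With the 5 partitions configured here, `PartitionSpread.idleConsumers(5, 7)` reports 2 idle consumers, while any count up to 5 leaves none idle.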

Home page:

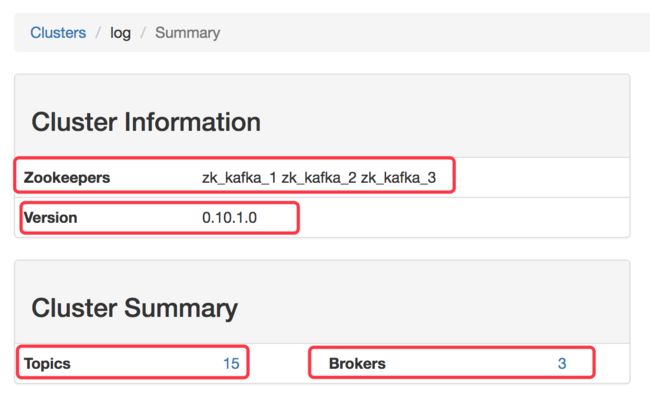

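- Zookeepers are the servers that coordinate the Kafka cluster; like the Brokers, there are 3 by default;

- Topics are the data sources, 15 in total; the log data source is elapsed.log;

- Version is the Kafka version; the displayed 0.10.1.0 is wrong, the actual version is 0.11.0.0, matching the pom configuration;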

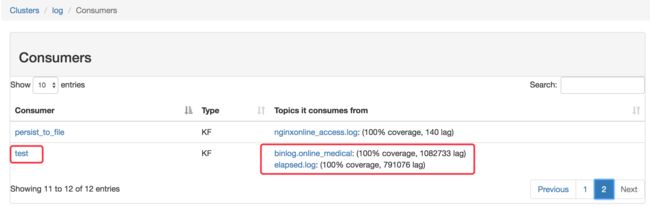

Consumers:

- Consumer is the consumer's name, i.e. its GroupId;

- Topics are the data sources the current consumer is reading;

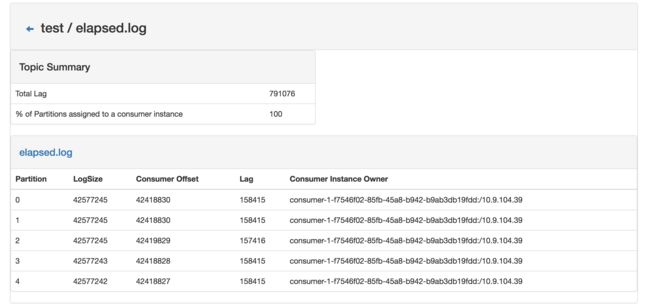

Topic:

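- Partition is Kafka's shard of a topic, 5 by default here; within the same consumer group (GroupId), run at most 5 consumers (processes or threads), since any consumer beyond the partition count receives no data;

- LogSize is the position of the latest data and Consumer Offset is the position consumed so far; by default a consumer only reads data that arrives after it registers. Consuming from the beginning requires specifying a parameter (see the reference).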
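One standard consumer setting for reading from the beginning is `auto.offset.reset=earliest`. The sketch below is a variant of `createProperties` with that setting, plus a small helper for the gap between LogSize and Consumer Offset. `FromBeginningProps`, `create`, and `lag` are hypothetical names. Note that `auto.offset.reset` only takes effect when the group has no committed offset, so this assumes a GroupId that has not consumed the topic before.

```java
import java.util.Properties;

// Hypothetical variant of createProperties for reading a topic from its start.
final class FromBeginningProps {
    static Properties create(String servers, String groupId) {
        Properties props = new Properties();
        props.setProperty("bootstrap.servers", servers);
        // Assumption: groupId has no committed offsets yet, otherwise the
        // committed position wins and "earliest" is ignored.
        props.setProperty("group.id", groupId);
        // Start from the oldest retained record instead of only new ones.
        props.setProperty("auto.offset.reset", "earliest");
        props.setProperty("key.deserializer",
                "org.apache.kafka.common.serialization.StringDeserializer");
        props.setProperty("value.deserializer",
                "org.apache.kafka.common.serialization.StringDeserializer");
        return props;
    }

    // Records not yet consumed in one partition: LogSize minus Consumer Offset.
    static long lag(long logSize, long consumerOffset) {
        return logSize - consumerOffset;
    }
}
```

Because retention is 1 day, "from the beginning" here means at most the last day of data, not the full history of the topic.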

OK, that's all!