Asynchronous Video Encoding and Decoding on Android with MediaCodec

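I recently worked on a screen-projection project that had to decode and display an H.264 video stream on Android 7.0. Android already ships a hardware-decoding solution built around MediaCodec, but I ran into quite a few problems when using it in practice. This post summarizes them for my own future reference and covers the following topics: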

- TextureView vs. SurfaceView

- Introduction to MediaCodec

- Asynchronous encoding and decoding

- Synchronous encoding and decoding

TextureView vs. SurfaceView

Decoding needs a Surface to render the decoded frames onto. The two Views most commonly used to obtain one are TextureView and SurfaceView. What is the difference between them?

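SurfaceView: unlike an ordinary View, it has its own Surface, and that Surface can be rendered from a separate thread. This is a big advantage for performance-sensitive applications such as games and video, because rendering does not block the main thread's event handling. The drawback is that the Surface lives outside the View hierarchy, so its display is not controlled by the usual View properties: it cannot be reliably translated, scaled, or otherwise transformed, it does not behave well inside containers that move their children (such as ListView or ScrollView), and some other View features are unavailable.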

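TextureView: it projects a content stream directly into a View and can be used for features such as live preview. Unlike SurfaceView, it does not create a separate window in the WindowManagerService (WMS); it is an ordinary View in the View hierarchy, so it can be moved, rotated, scaled, and animated like any other View. Note that TextureView only works in a hardware-accelerated window. The content stream it displays can come from the app's own process or from a remote process.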

Introduction to MediaCodec

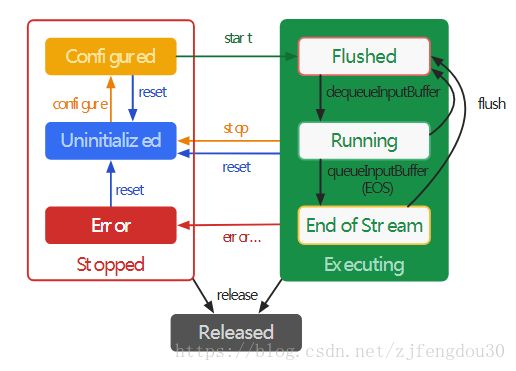

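MediaCodec is the component Android provides for accessing the low-level media codecs. As part of Android's low-level multimedia support infrastructure, it is normally used together with MediaExtractor, MediaSync, MediaMuxer, MediaCrypto, MediaDrm, Image, Surface, and AudioTrack; I will cover how to use these components one by one in later posts. The MediaCodec workflow looks like this: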

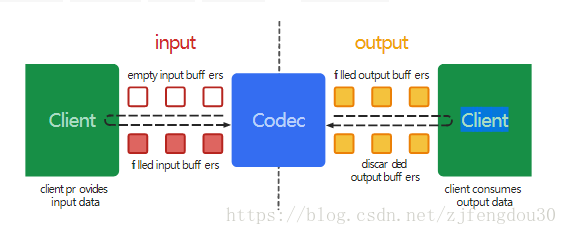

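When MediaCodec starts working, it first hands an empty input buffer to the client. The client fills that buffer with the data to be processed and returns it to MediaCodec. Once MediaCodec has processed the data internally, it fills an output buffer and hands it to the client. For the two cases, encoding and decoding, the flow is as follows: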

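When encoding:

1. The client fills the empty buffer provided by MediaCodec with raw data. How the buffer is obtained differs between the synchronous and asynchronous modes.

2. The client notifies MediaCodec that the buffer has been filled.

3. MediaCodec hardware-encodes the data internally.

4. When encoding is complete, MediaCodec outputs the encoded data to the client, which transmits it as required.

When decoding:

1. The client fills a buffer with the encoded stream and provides it to MediaCodec.

2. The client notifies MediaCodec that the buffer has been filled.

3. MediaCodec hardware-decodes the data internally.

4. MediaCodec outputs the decoded data to the Surface for display.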
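
The buffer hand-off described above can be modeled in plain Java without any Android dependency. The sketch below is only a toy model, not MediaCodec itself: `ToyCodec`, its method names, and the "encoding" step (upper-casing ASCII text) are all made up for illustration. It shows the ownership cycle: the codec lends out an empty input buffer, the client fills it and queues it back, and the codec then makes a processed output buffer available.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.Locale;

/** Toy model of the buffer ownership cycle; this is NOT the real MediaCodec API. */
final class ToyCodec {
    private final ByteBuffer[] inputs;
    private final Deque<Integer> freeInputs = new ArrayDeque<>();
    private final Deque<byte[]> outputs = new ArrayDeque<>();

    ToyCodec(int bufferCount, int bufferSize) {
        inputs = new ByteBuffer[bufferCount];
        for (int i = 0; i < bufferCount; i++) {
            inputs[i] = ByteBuffer.allocate(bufferSize);
            freeInputs.add(i);
        }
    }

    /** Step 1: lend an empty input buffer to the client, or return -1 if none is free. */
    int dequeueInputBuffer() {
        Integer id = freeInputs.poll();
        return id == null ? -1 : id;
    }

    ByteBuffer getInputBuffer(int id) {
        inputs[id].clear();
        return inputs[id];
    }

    /** Steps 2 and 3: the client hands the filled buffer back and the codec processes it. */
    void queueInputBuffer(int id, int length) {
        byte[] raw = new byte[length];
        for (int i = 0; i < length; i++) {
            raw[i] = inputs[id].get(i); // absolute read, independent of the buffer position
        }
        String text = new String(raw, StandardCharsets.US_ASCII);
        outputs.add(text.toUpperCase(Locale.ROOT).getBytes(StandardCharsets.US_ASCII));
        freeInputs.add(id); // the input buffer returns to the codec's pool
    }

    /** Step 4: hand a processed buffer to the client, or null if nothing is ready. */
    byte[] dequeueOutputBuffer() {
        return outputs.poll();
    }

    /** Runs one buffer through the whole cycle. */
    static String roundTrip(String text) {
        ToyCodec codec = new ToyCodec(2, 64);
        int id = codec.dequeueInputBuffer();
        byte[] raw = text.getBytes(StandardCharsets.US_ASCII);
        codec.getInputBuffer(id).put(raw);
        codec.queueInputBuffer(id, raw.length);
        return new String(codec.dequeueOutputBuffer(), StandardCharsets.US_ASCII);
    }

    public static void main(String[] args) {
        System.out.println(roundTrip("frame-1"));
    }
}
```

In the real API the same roles are played by the input and output buffer indices that MediaCodec passes to the client; the important point is that a buffer is only valid for the client between the moment it is handed out and the moment it is queued or released.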

Asynchronous encoding and decoding

The asynchronous encoder is implemented as follows:

    package com.zdragon.videoio;

    import android.media.MediaCodec;
    import android.media.MediaCodecInfo;
    import android.media.MediaFormat;
    import android.os.Handler;
    import android.os.HandlerThread;
    import android.support.annotation.NonNull;
    import android.util.Log;

    import java.io.IOException;
    import java.nio.ByteBuffer;
    import java.util.concurrent.ArrayBlockingQueue;

    /**
     * Encodes raw video frames with MediaCodec in asynchronous mode.
     * Created by zj on 2018/7/29 0029.
     */
    public class VideoEncoder {

        private final static String TAG = "VideoEncoder";
        private final static int CONFIGURE_FLAG_ENCODE = MediaCodec.CONFIGURE_FLAG_ENCODE;
        private final static int CACHE_BUFFER_SIZE = 8;

        private MediaCodec mMediaCodec;
        private MediaFormat mMediaFormat;
        private int mViewWidth;
        private int mViewHeight;

        // Codec callbacks are delivered on this background thread, not the main thread.
        private Handler mVideoEncoderHandler;
        private HandlerThread mVideoEncoderHandlerThread = new HandlerThread("VideoEncoder");

        // Raw frames waiting to be encoded; they must be in I420 format.
        private final static ArrayBlockingQueue<byte[]> mInputDatasQueue = new ArrayBlockingQueue<byte[]>(CACHE_BUFFER_SIZE);
        // Cache of frames that have already been encoded.
        private final static ArrayBlockingQueue<byte[]> mOutputDatasQueue = new ArrayBlockingQueue<byte[]>(CACHE_BUFFER_SIZE);

        private MediaCodec.Callback mCallback = new MediaCodec.Callback() {
            @Override
            public void onInputBufferAvailable(@NonNull MediaCodec mediaCodec, int id) {
                ByteBuffer inputBuffer = mediaCodec.getInputBuffer(id);
                inputBuffer.clear();
                byte[] dataSources = mInputDatasQueue.poll();
                int length = 0;
                if (dataSources != null) {
                    inputBuffer.put(dataSources);
                    length = dataSources.length;
                }
                // If no frame is waiting, an empty buffer (length 0) is queued so that
                // the buffer goes back to the codec. The timestamp is in microseconds
                // and must keep increasing from frame to frame.
                mediaCodec.queueInputBuffer(id, 0, length, System.nanoTime() / 1000, 0);
            }

            @Override
            public void onOutputBufferAvailable(@NonNull MediaCodec mediaCodec, int id, @NonNull MediaCodec.BufferInfo bufferInfo) {
                ByteBuffer outputBuffer = mediaCodec.getOutputBuffer(id);
                if (outputBuffer != null && bufferInfo.size > 0) {
                    byte[] buffer = new byte[outputBuffer.remaining()];
                    outputBuffer.get(buffer);
                    boolean result = mOutputDatasQueue.offer(buffer);
                    if (!result) {
                        Log.d(TAG, "Offer to queue failed, queue is full");
                    }
                }
                // The encoder has no output Surface, so there is nothing to render.
                mediaCodec.releaseOutputBuffer(id, false);
            }

            @Override
            public void onError(@NonNull MediaCodec mediaCodec, @NonNull MediaCodec.CodecException e) {
                Log.e(TAG, "------> onError", e);
            }

            @Override
            public void onOutputFormatChanged(@NonNull MediaCodec mediaCodec, @NonNull MediaFormat mediaFormat) {
                Log.d(TAG, "------> onOutputFormatChanged");
            }
        };

        public VideoEncoder(String mimeType, int viewwidth, int viewheight) {
            try {
                mMediaCodec = MediaCodec.createEncoderByType(mimeType);
            } catch (IOException e) {
                Log.e(TAG, Log.getStackTraceString(e));
                mMediaCodec = null;
                return;
            }

            this.mViewWidth = viewwidth;
            this.mViewHeight = viewheight;

            mVideoEncoderHandlerThread.start();
            mVideoEncoderHandler = new Handler(mVideoEncoderHandlerThread.getLooper());

            mMediaFormat = MediaFormat.createVideoFormat(mimeType, mViewWidth, mViewHeight);
            mMediaFormat.setInteger(MediaFormat.KEY_COLOR_FORMAT, MediaCodecInfo.CodecCapabilities.COLOR_FormatYUV420Flexible);
            // 1920 * 1280 = 2,457,600 bit/s, roughly 2.4 Mbit/s.
            mMediaFormat.setInteger(MediaFormat.KEY_BIT_RATE, 1920 * 1280);
            mMediaFormat.setInteger(MediaFormat.KEY_FRAME_RATE, 30);
            // One key frame per second.
            mMediaFormat.setInteger(MediaFormat.KEY_I_FRAME_INTERVAL, 1);
        }

        /**
         * Puts a raw frame into the input queue to be encoded.
         * @param needEncodeData a frame in I420 format
         */
        public void inputFrameToEncoder(byte[] needEncodeData) {
            boolean inputResult = mInputDatasQueue.offer(needEncodeData);
            Log.d(TAG, "-----> inputEncoder queue result = " + inputResult + " queue current size = " + mInputDatasQueue.size());
        }

        /**
         * Takes an encoded frame from the output queue.
         * @return an encoded frame, or null when the queue is empty
         */
        public byte[] pollFrameFromEncoder() {
            return mOutputDatasQueue.poll();
        }

        /**
         * Starts the MediaCodec so that it begins encoding video data.
         */
        public void startEncoder() {
            if (mMediaCodec != null) {
                // In asynchronous mode the callback must be set before configure().
                mMediaCodec.setCallback(mCallback, mVideoEncoderHandler);
                mMediaCodec.configure(mMediaFormat, null, null, CONFIGURE_FLAG_ENCODE);
                mMediaCodec.start();
            } else {
                throw new IllegalStateException("startEncoder failed: was the MediaCodec initialized correctly?");
            }
        }

        /**
         * Stops encoding video data.
         */
        public void stopEncoder() {
            if (mMediaCodec != null) {
                mMediaCodec.stop();
                mMediaCodec.setCallback(null);
            }
        }

        /**
         * Releases all resources used by the encoder.
         */
        public void release() {
            if (mMediaCodec != null) {
                mInputDatasQueue.clear();
                mOutputDatasQueue.clear();
                mMediaCodec.release();
                mMediaCodec = null;
            }
            // Stop the callback thread once the codec is gone.
            mVideoEncoderHandlerThread.quitSafely();
        }
    }
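
`ArrayBlockingQueue.offer` in the encoder above rejects the newest frame when the queue is full. For live video it is often preferable to discard the oldest frame instead, and every frame queued to MediaCodec should carry a presentation timestamp in microseconds. The following is a minimal plain-Java sketch of both ideas, assuming a fixed frame rate; `FrameQueue` and its methods are hypothetical helpers and are not part of the project.

```java
import java.util.ArrayDeque;

/** Bounded frame queue that drops the oldest frame when full (hypothetical helper). */
final class FrameQueue {
    /** A raw frame together with its presentation time in microseconds. */
    static final class Frame {
        final byte[] data;
        final long presentationTimeUs;

        Frame(byte[] data, long presentationTimeUs) {
            this.data = data;
            this.presentationTimeUs = presentationTimeUs;
        }
    }

    private final ArrayDeque<Frame> frames = new ArrayDeque<>();
    private final int capacity;
    private final int frameRate;
    private long nextIndex = 0;
    private long dropped = 0;

    FrameQueue(int capacity, int frameRate) {
        if (capacity <= 0 || frameRate <= 0) {
            throw new IllegalArgumentException("capacity and frameRate must be positive");
        }
        this.capacity = capacity;
        this.frameRate = frameRate;
    }

    /** Presentation time of frame number frameIndex at a fixed frame rate, in microseconds. */
    static long presentationTimeUs(long frameIndex, int frameRate) {
        return frameIndex * 1_000_000L / frameRate;
    }

    /** Adds a frame; if the queue is full, the oldest frame is discarded first. */
    synchronized void offer(byte[] data) {
        if (frames.size() == capacity) {
            frames.pollFirst();
            dropped++;
        }
        frames.addLast(new Frame(data, presentationTimeUs(nextIndex++, frameRate)));
    }

    /** Returns the oldest queued frame, or null if the queue is empty. */
    synchronized Frame poll() {
        return frames.pollFirst();
    }

    synchronized long droppedCount() {
        return dropped;
    }

    public static void main(String[] args) {
        FrameQueue queue = new FrameQueue(2, 30);
        for (int i = 0; i < 3; i++) {
            queue.offer(new byte[] {(byte) i});
        }
        Frame frame = queue.poll();
        System.out.println("frame " + frame.data[0] + " at " + frame.presentationTimeUs
                + " us, dropped = " + queue.droppedCount());
    }
}
```

With a queue like this, the value stored in `presentationTimeUs` would be passed as the fourth argument of `queueInputBuffer`. Timestamps derived from the frame index stay evenly spaced even when frames are dropped before they reach the codec.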

The asynchronous decoder is implemented as follows:

    package com.zdragon.videoio;

    import android.media.MediaCodec;
    import android.media.MediaFormat;
    import android.os.Handler;
    import android.os.HandlerThread;
    import android.support.annotation.NonNull;
    import android.util.Log;
    import android.view.Surface;

    import java.io.IOException;
    import java.nio.ByteBuffer;

    /**
     * Decodes video frames with MediaCodec in asynchronous mode and renders them to a Surface.
     * Created by zj on 2018/7/29 0029.
     */
    public class VideoDecoder {

        private final static String TAG = "VideoDecoder";
        private final static int CONFIGURE_FLAG_DECODE = 0;

        private MediaCodec mMediaCodec;
        private MediaFormat mMediaFormat;
        private Surface mSurface;
        private int mViewWidth;
        private int mViewHeight;

        // Source of the encoded frames.
        private VideoEncoder mVideoEncoder;

        // Codec callbacks are delivered on this background thread, not the main thread.
        private Handler mVideoDecoderHandler;
        private HandlerThread mVideoDecoderHandlerThread = new HandlerThread("VideoDecoder");

        private MediaCodec.Callback mCallback = new MediaCodec.Callback() {
            @Override
            public void onInputBufferAvailable(@NonNull MediaCodec mediaCodec, int id) {
                ByteBuffer inputBuffer = mediaCodec.getInputBuffer(id);
                inputBuffer.clear();

                byte[] dataSources = null;
                if (mVideoEncoder != null) {
                    dataSources = mVideoEncoder.pollFrameFromEncoder();
                }
                int length = 0;
                if (dataSources != null) {
                    inputBuffer.put(dataSources);
                    length = dataSources.length;
                }
                // If no encoded frame is waiting, an empty buffer (length 0) is queued
                // so that the buffer goes back to the codec.
                mediaCodec.queueInputBuffer(id, 0, length, 0, 0);
            }

            @Override
            public void onOutputBufferAvailable(@NonNull MediaCodec mediaCodec, int id, @NonNull MediaCodec.BufferInfo bufferInfo) {
                // The codec is configured with an output Surface, so the decoded frame
                // is not copied out here. Releasing the buffer with render = true
                // sends it to the Surface for display.
                mediaCodec.releaseOutputBuffer(id, true);
            }

            @Override
            public void onError(@NonNull MediaCodec mediaCodec, @NonNull MediaCodec.CodecException e) {
                Log.e(TAG, "------> onError", e);
            }

            @Override
            public void onOutputFormatChanged(@NonNull MediaCodec mediaCodec, @NonNull MediaFormat mediaFormat) {
                Log.d(TAG, "------> onOutputFormatChanged");
            }
        };

        public VideoDecoder(String mimeType, Surface surface, int viewwidth, int viewheight) {
            try {
                mMediaCodec = MediaCodec.createDecoderByType(mimeType);
            } catch (IOException e) {
                Log.e(TAG, Log.getStackTraceString(e));
                mMediaCodec = null;
                return;
            }

            if (surface == null) {
                return;
            }

            this.mViewWidth = viewwidth;
            this.mViewHeight = viewheight;
            this.mSurface = surface;

            mVideoDecoderHandlerThread.start();
            mVideoDecoderHandler = new Handler(mVideoDecoderHandlerThread.getLooper());

            // Bit rate, I-frame interval and input color format are encoder settings,
            // so the decoder format only carries the MIME type, size and frame rate.
            mMediaFormat = MediaFormat.createVideoFormat(mimeType, mViewWidth, mViewHeight);
            mMediaFormat.setInteger(MediaFormat.KEY_FRAME_RATE, 30);
        }

        public void setEncoder(VideoEncoder videoEncoder) {
            this.mVideoEncoder = videoEncoder;
        }

        public void startDecoder() {
            if (mMediaCodec != null && mSurface != null) {
                // In asynchronous mode the callback must be set before configure().
                mMediaCodec.setCallback(mCallback, mVideoDecoderHandler);
                mMediaCodec.configure(mMediaFormat, mSurface, null, CONFIGURE_FLAG_DECODE);
                mMediaCodec.start();
            } else {
                throw new IllegalStateException("startDecoder failed: check that the MediaCodec was initialized correctly");
            }
        }

        public void stopDecoder() {
            if (mMediaCodec != null) {
                mMediaCodec.stop();
            }
        }

        /**
         * Releases all resources used by the decoder.
         */
        public void release() {
            if (mMediaCodec != null) {
                mMediaCodec.release();
                mMediaCodec = null;
            }
            // Stop the callback thread once the codec is gone.
            mVideoDecoderHandlerThread.quitSafely();
        }
    }
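
The decoder above is fed directly with the encoder's output. Assuming that output is an H.264 Annex B byte stream (NAL units separated by `00 00 01` or `00 00 00 01` start codes), a quick debugging check is to list the NAL unit types and confirm that the SPS and PPS arrive before the first key frame; a decoder that receives an IDR slice without them usually shows no picture. The sketch below is plain Java, and `NalScanner` is a hypothetical helper that is not part of the project.

```java
import java.util.ArrayList;
import java.util.List;

/** Lists the NAL unit types found in an H.264 Annex B byte stream (hypothetical helper). */
final class NalScanner {
    static final int NAL_IDR = 5; // coded slice of an IDR picture (key frame)
    static final int NAL_SPS = 7; // sequence parameter set
    static final int NAL_PPS = 8; // picture parameter set

    /** Returns the nal_unit_type of every NAL unit, in stream order. */
    static List<Integer> nalTypes(byte[] stream) {
        List<Integer> types = new ArrayList<>();
        int i = 0;
        while (i + 3 < stream.length) {
            // Both start-code forms end with 00 00 01; the 4-byte form only
            // has one more leading zero byte.
            if (stream[i] == 0 && stream[i + 1] == 0 && stream[i + 2] == 1) {
                // The low 5 bits of the byte after the start code hold nal_unit_type.
                types.add(stream[i + 3] & 0x1F);
                i += 3;
            } else {
                i++;
            }
        }
        return types;
    }

    /** True if the first IDR slice in the stream is preceded by both an SPS and a PPS. */
    static boolean hasParameterSetsBeforeKeyFrame(byte[] stream) {
        boolean sps = false;
        boolean pps = false;
        for (int type : nalTypes(stream)) {
            if (type == NAL_SPS) {
                sps = true;
            } else if (type == NAL_PPS) {
                pps = true;
            } else if (type == NAL_IDR) {
                return sps && pps;
            }
        }
        return false; // no key frame found
    }

    public static void main(String[] args) {
        // Made-up sample: SPS (0x67), PPS (0x68) and an IDR slice (0x65), payloads truncated.
        byte[] sample = {
            0, 0, 0, 1, 0x67, 0x42,
            0, 0, 0, 1, 0x68, (byte) 0xCE,
            0, 0, 1, 0x65, (byte) 0x88
        };
        System.out.println(nalTypes(sample));
        System.out.println(hasParameterSetsBeforeKeyFrame(sample));
    }
}
```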

To verify that the encoder and decoder work correctly, I built a demo that opens the camera preview on a Huawei Honor V8, encodes and then decodes the preview data, and displays the result in a TextureView on the same screen. It is implemented as follows:

Step 1: the UI layout. The first TextureView shows the camera preview; the second shows the decoded picture.

    <LinearLayout xmlns:android="http://schemas.android.com/apk/res/android"
        xmlns:tools="http://schemas.android.com/tools"
        android:layout_width="match_parent"
        android:layout_height="match_parent"
        android:orientation="vertical"
        tools:context="com.zdragon.videoio.MainActivity">

        <TextureView
            android:id="@+id/camera"
            android:layout_width="wrap_content"
            android:layout_height="0dp"
            android:layout_weight="1"/>

        <TextureView
            android:id="@+id/decode"
            android:layout_width="wrap_content"
            android:layout_height="0dp"
            android:layout_weight="1" />

    </LinearLayout>

Step 2: the Activity implementation.

    package com.zdragon.videoio;

    import android.Manifest;
    import android.content.pm.PackageManager;
    import android.graphics.ImageFormat;
    import android.graphics.SurfaceTexture;
    import android.hardware.Camera;
    import android.os.Bundle;
    import android.support.annotation.NonNull;
    import android.support.v4.app.ActivityCompat;
    import android.support.v4.content.ContextCompat;
    import android.support.v7.app.AppCompatActivity;
    import android.util.Log;
    import android.view.Surface;
    import android.view.TextureView;

    import java.io.IOException;
    import java.util.List;

    public class MainActivity extends AppCompatActivity {

        private final static String TAG = "VideoIO";
        private final static String MIME_FORMAT = "video/avc"; // H.264

        private TextureView mCameraTexture;
        private TextureView mDecodeTexture;
        private VideoDecoder mVideoDecoder;
        private VideoEncoder mVideoEncoder;
        private Camera mCamera;
        private int mPreviewWidth;
        private int mPreviewHeight;

        private Camera.PreviewCallback mPreviewCallBack = new Camera.PreviewCallback() {
            @Override
            public void onPreviewFrame(byte[] bytes, Camera camera) {
                byte[] i420bytes = new byte[bytes.length];
                // Convert from YV12 to I420: the Y plane is copied as is and the two
                // chroma planes swap places (YV12 stores V before U, I420 stores U before V).
                // This assumes the row stride equals the preview width, as it does for 640x480.
                System.arraycopy(bytes, 0, i420bytes, 0, mPreviewWidth * mPreviewHeight);
                System.arraycopy(bytes, mPreviewWidth * mPreviewHeight + mPreviewWidth * mPreviewHeight / 4, i420bytes, mPreviewWidth * mPreviewHeight, mPreviewWidth * mPreviewHeight / 4);
                System.arraycopy(bytes, mPreviewWidth * mPreviewHeight, i420bytes, mPreviewWidth * mPreviewHeight + mPreviewWidth * mPreviewHeight / 4, mPreviewWidth * mPreviewHeight / 4);
                if (mVideoEncoder != null) {
                    mVideoEncoder.inputFrameToEncoder(i420bytes);
                }
            }
        };

        private TextureView.SurfaceTextureListener mCameraTextureListener = new TextureView.SurfaceTextureListener() {
            @Override
            public void onSurfaceTextureAvailable(SurfaceTexture surfaceTexture, int i, int i1) {
                openCamera(surfaceTexture, i, i1);
                mVideoEncoder = new VideoEncoder(MIME_FORMAT, mPreviewWidth, mPreviewHeight);
                mVideoEncoder.startEncoder();
            }

            @Override
            public void onSurfaceTextureSizeChanged(SurfaceTexture surfaceTexture, int i, int i1) {
            }

            @Override
            public boolean onSurfaceTextureDestroyed(SurfaceTexture surfaceTexture) {
                if (mVideoEncoder != null) {
                    mVideoEncoder.stopEncoder();
                    mVideoEncoder.release();
                }
                closeCamera();
                return true;
            }

            @Override
            public void onSurfaceTextureUpdated(SurfaceTexture surfaceTexture) {
            }
        };

        private TextureView.SurfaceTextureListener mDecodeTextureListener = new TextureView.SurfaceTextureListener() {
            @Override
            public void onSurfaceTextureAvailable(SurfaceTexture surfaceTexture, int i, int i1) {
                Log.d(TAG, "----------" + i + " ," + i1);
                mVideoDecoder = new VideoDecoder(MIME_FORMAT, new Surface(surfaceTexture), mPreviewWidth, mPreviewHeight);
                mVideoDecoder.setEncoder(mVideoEncoder);
                mVideoDecoder.startDecoder();
            }

            @Override
            public void onSurfaceTextureSizeChanged(SurfaceTexture surfaceTexture, int i, int i1) {
            }

            @Override
            public boolean onSurfaceTextureDestroyed(SurfaceTexture surfaceTexture) {
                if (mVideoDecoder != null) {
                    mVideoDecoder.stopDecoder();
                    mVideoDecoder.release();
                }
                return true;
            }

            @Override
            public void onSurfaceTextureUpdated(SurfaceTexture surfaceTexture) {
            }
        };

        @Override
        public void onRequestPermissionsResult(int requestCode, @NonNull String[] permissions, @NonNull int[] grantResults) {
            super.onRequestPermissionsResult(requestCode, permissions, grantResults);
            // Only set up the views once the camera permission has actually been granted.
            if (grantResults.length > 0 && grantResults[0] == PackageManager.PERMISSION_GRANTED) {
                initView();
            }
        }

        @Override
        protected void onCreate(Bundle savedInstanceState) {
            super.onCreate(savedInstanceState);
            setContentView(R.layout.activity_main);
            if (ContextCompat.checkSelfPermission(this, Manifest.permission.CAMERA) == PackageManager.PERMISSION_GRANTED) {
                Log.i("TEST", "Granted");
                initView();
            } else {
                // The request code 1 is arbitrary; any integer works.
                ActivityCompat.requestPermissions(this, new String[]{Manifest.permission.CAMERA}, 1);
            }
        }

        private void initView() {
            mCameraTexture = (TextureView) findViewById(R.id.camera);
            mDecodeTexture = (TextureView) findViewById(R.id.decode);
            mCameraTexture.setSurfaceTextureListener(mCameraTextureListener);
            mDecodeTexture.setSurfaceTextureListener(mDecodeTextureListener);
        }

        private void openCamera(SurfaceTexture texture, int width, int height) {
            if (texture == null) {
                Log.e(TAG, "openCamera need SurfaceTexture");
                return;
            }

            mCamera = Camera.open(0);
            try {
                mCamera.setPreviewTexture(texture);
                Camera.Parameters parameters = mCamera.getParameters();
                parameters.setPreviewFormat(ImageFormat.YV12);
                // Log the preview sizes the camera supports.
                List<Camera.Size> list = parameters.getSupportedPreviewSizes();
                for (Camera.Size size : list) {
                    Log.d(TAG, "----size width = " + size.width + " size height = " + size.height);
                }

                mPreviewWidth = 640;
                mPreviewHeight = 480;
                parameters.setPreviewSize(mPreviewWidth, mPreviewHeight);
                mCamera.setParameters(parameters);
                mCamera.setPreviewCallback(mPreviewCallBack);
                mCamera.startPreview();
            } catch (IOException e) {
                Log.e(TAG, Log.getStackTraceString(e));
                mCamera.release();
                mCamera = null;
            }
        }

        private void closeCamera() {
            if (mCamera == null) {
                Log.e(TAG, "Camera not open");
                return;
            }
            mCamera.setPreviewCallback(null);
            mCamera.stopPreview();
            mCamera.release();
            mCamera = null;
        }
    }

Synchronous encoding and decoding

For the synchronous approach, refer to the Android API documentation; the MediaCodec reference includes detailed usage examples. In synchronous mode, behavior can differ between devices: some play the video correctly while others show no picture at all. In that case, a device-specific workaround is to delay the call to start() by 200 ms when initializing the codec.
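
Going back to the demo, the preview callback in MainActivity converts each camera frame from YV12 to I420 inline. Both formats store a full-resolution Y plane followed by two quarter-size chroma planes; YV12 stores V before U and I420 stores U before V, so the conversion only swaps the two chroma planes. The sketch below is the same copy as a standalone function that can be tested off-device. It assumes even dimensions and tightly packed planes (row stride equal to the width), which holds for 640x480 but is not guaranteed for every preview size; `Yv12Converter` is a name made up for this example.

```java
/** Converts a tightly packed YV12 frame to I420 (hypothetical helper). */
final class Yv12Converter {

    static byte[] yv12ToI420(byte[] yv12, int width, int height) {
        int ySize = width * height;   // full-resolution luma plane
        int chromaSize = ySize / 4;   // each chroma plane is subsampled 2x2
        int frameSize = ySize + 2 * chromaSize;
        if (yv12.length < frameSize) {
            throw new IllegalArgumentException("frame is smaller than " + frameSize + " bytes");
        }
        byte[] i420 = new byte[frameSize];
        // Y plane: identical in both layouts.
        System.arraycopy(yv12, 0, i420, 0, ySize);
        // U plane: second chroma plane in YV12, first chroma plane in I420.
        System.arraycopy(yv12, ySize + chromaSize, i420, ySize, chromaSize);
        // V plane: first chroma plane in YV12, second chroma plane in I420.
        System.arraycopy(yv12, ySize, i420, ySize + chromaSize, chromaSize);
        return i420;
    }

    public static void main(String[] args) {
        // A made-up 4x2 frame: 8 Y bytes, then 2 V bytes (20, 21), then 2 U bytes (30, 31).
        byte[] yv12 = {1, 2, 3, 4, 5, 6, 7, 8, 20, 21, 30, 31};
        System.out.println(java.util.Arrays.toString(yv12ToI420(yv12, 4, 2)));
    }
}
```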

Project source code:

Via HTTPS:

https://github.com/RunningWay/android.git

Via SSH:

git@github.com:RunningWay/android.git