Pandas基本操作指南-2天学会pandas

作者:光城Pandas 是基于NumPy 的一种工具,该工具是为了解决数据分析任务而创建的。Pandas 纳入了大量库和一些标准的数据模型,提供了高效地操作大型数据集所需的工具。pandas提供了大量能使我们快速便捷地处理数据的函数和方法。你很快就会发现,它是使Python成为强大而高效的数据分析环境的重要因素之一。本文总结了pandas的常用操作,建议读者用两天时间看完,本文代码已经在github公布,建议边运行边学习。作者认为,学完这篇文章,pandas的基本操作没有问题了,以后碰到问题也可以查这篇文章。本文代码的github地址:https://github.com/fengdu78/machine_learning_beginner/tree/master/pandas目录0.导语1.Series2.DataFrame2.1 DataFrame的简单运用3.pandas选择数据3.1 实战筛选3.2 筛选总结4.Pandas设置值4.1 创建数据4.2 根据位置设置loc和iloc4.3 根据条件设置4.4 按行或列设置4.5 添加Series序列(长度必须对齐)4.6 设定某行某列为特定值4.7 修改一整行数据5.Pandas处理丢失数据5.1 创建含NaN的矩阵5.2 删除掉有NaN的行或列5.3 替换NaN值为0或者其他5.4 是否有缺失数据NaN6.Pandas导入导出6.1 导入数据6.2 导出数据7.Pandas合并操作7.1 Pandas合并concat7.2.Pandas 合并 merge7.2.1 定义资料集并打印出7.2.2 依据key column合并,并打印7.2.3 两列合并7.2.4 Indicator设置合并列名称7.2.5 依据index合并7.2.6 解决overlapping的问题8.Pandas plot出图9.参考

0.导语

Pandas是基于Numpy构建的,让Numpy为中心的应用变得更加简单。

本文为一篇长文,建议收藏,转发~

1.Series

import pandas as pd

import numpy as np

# Series

s = pd.Series([1,3,6,np.nan,44,1])

print(s)

'''

0 1.0

1 3.0

2 6.0

3 NaN

4 44.0

5 1.0

dtype: float64

'''

# 默认index从0开始,如果想要按照自己的索引设置,则修改index参数,如:index=[3,4,3,7,8,9]

2.DataFrame

2.1 DataFrame的简单运用

# DataFrame

dates = pd.date_range('2018-08-19',periods=6)

# dates = pd.date_range('2018-08-19','2018-08-24') # 起始、结束 与上述等价

'''

numpy.random.randn(d0, d1, …, dn)是从标准正态分布中返回一个或多个样本值。

numpy.random.rand(d0, d1, …, dn)的随机样本位于[0, 1)中。

(6,4)表示6行4列数据

'''

df = pd.DataFrame(np.random.randn(6,4),index=dates,columns=['a','b','c','d'])

print(df)

# DataFrame既有行索引也有列索引, 它可以被看做由Series组成的大字典。

print(df['b'])

'''

2018-08-19 -0.217424

2018-08-20 -1.421058

2018-08-21 -0.424589

2018-08-22 0.534675

2018-08-23 -0.018525

2018-08-24 0.635075

Freq: D, Name: b, dtype: float64

'''

# 未指定行标签和列标签的数据

df1 = pd.DataFrame(np.arange(12).reshape(3,4))

print(df1)

# 另一种方式

df2 = pd.DataFrame({

'A': [1,2,3,4],

'B': pd.Timestamp('20180819'),

'C': pd.Series([1,6,9,10],dtype='float32'),

'D': np.array([3] * 4,dtype='int32'),

'E': pd.Categorical(['test','train','test','train']),

'F': 'foo'

})

print(df2)

'''

A B C D E F

0 1 2018-08-19 1.0 3 test foo

1 2 2018-08-19 6.0 3 train foo

2 3 2018-08-19 9.0 3 test foo

3 4 2018-08-19 10.0 3 train foo

'''

print(df2.dtypes)

'''

A int64

B datetime64[ns]

C float32

D int32

E category

F object

dtype: object

'''

print(df2.index)

# RangeIndex(start=0, stop=4, step=1)

print(df2.columns)

# Index(['A', 'B', 'C', 'D', 'E', 'F'], dtype='object')

print(df2.values)

'''

[[1 Timestamp('2018-08-19 00:00:00') 1.0 3 'test' 'foo']

[2 Timestamp('2018-08-19 00:00:00') 6.0 3 'train' 'foo']

[3 Timestamp('2018-08-19 00:00:00') 9.0 3 'test' 'foo']

[4 Timestamp('2018-08-19 00:00:00') 10.0 3 'train' 'foo']]

'''

# 数据总结

print(df2.describe())

'''

A C D

count 4.000000 4.000000 4.0

mean 2.500000 6.500000 3.0

std 1.290994 4.041452 0.0

min 1.000000 1.000000 3.0

25% 1.750000 4.750000 3.0

50% 2.500000 7.500000 3.0

75% 3.250000 9.250000 3.0

max 4.000000 10.000000 3.0

'''

# 翻转数据

print(df2.T)

# print(np.transpose(df2))等价于上述操作

'''

axis=1表示行

axis=0表示列

默认ascending(升序)为True

ascending=True表示升序,ascending=False表示降序

下面两行分别表示按行升序与按行降序

'''

print(df2.sort_index(axis=1,ascending=True))

print(df2.sort_index(axis=1,ascending=False))

'''

A B C D E F

0 1 2018-08-19 1.0 3 test foo

1 2 2018-08-19 6.0 3 train foo

2 3 2018-08-19 9.0 3 test foo

3 4 2018-08-19 10.0 3 train foo

F E D C B A

0 foo test 3 1.0 2018-08-19 1

1 foo train 3 6.0 2018-08-19 2

2 foo test 3 9.0 2018-08-19 3

3 foo train 3 10.0 2018-08-19 4

'''

# 表示按列降序与按列升序

print(df2.sort_index(axis=0,ascending=False))

print(df2.sort_index(axis=0,ascending=True))

'''

A B C D E F

3 4 2018-08-19 10.0 3 train foo

2 3 2018-08-19 9.0 3 test foo

1 2 2018-08-19 6.0 3 train foo

0 1 2018-08-19 1.0 3 test foo

A B C D E F

0 1 2018-08-19 1.0 3 test foo

1 2 2018-08-19 6.0 3 train foo

2 3 2018-08-19 9.0 3 test foo

3 4 2018-08-19 10.0 3 train foo

'''

# 对特定列数值排列

# 表示对C列降序排列

print(df2.sort_values(by='C',ascending=False))

3.pandas选择数据

3.1 实战筛选

import pandas as pd

import numpy as np

dates = pd.date_range('20180819', periods=6)

df = pd.DataFrame(np.arange(24).reshape((6,4)),index=dates, columns=['A','B','C','D'])

print(df)

# 检索A列

print(df['A'])

print(df.A)

# 选择跨越多行或多列

# 选取前3行

print(df[0:3])

print(df['2018-08-19':'2018-08-21'])

'''

A B C D

2018-08-19 0 1 2 3

2018-08-20 4 5 6 7

2018-08-21 8 9 10 11

'''

# 根据标签选择数据

# 获取特定行或列

# 指定行数据

print(df.loc['20180819'])

'''

A 0

B 1

C 2

D 3

Name: 2018-08-19 00:00:00, dtype: int32

'''

# 指定列

# 两种方式

print(df.loc[:,'A':'B'])

print(df.loc[:,['A','B']])

'''

A B

2018-08-19 0 1

2018-08-20 4 5

2018-08-21 8 9

2018-08-22 12 13

2018-08-23 16 17

2018-08-24 20 21

'''

# 行与列同时检索

print(df.loc['20180819',['A','B']])

'''

A 0

B 1

Name: 2018-08-19 00:00:00, dtype: int32

'''

# 根据序列iloc

# 获取特定位置的值

print(df.iloc[3,1])

print(df.iloc[3:5,1:3]) # 不包含末尾5或3,同列表切片

'''

B C

2018-08-22 13 14

2018-08-23 17 18

'''

# 跨行操作

print(df.iloc[[1,3,5],1:3])

'''

B C

2018-08-20 5 6

2018-08-22 13 14

2018-08-24 21 22

'''

# 混合选择

print(df.ix[:3,['A','C']])

'''

A C

2018-08-19 0 2

2018-08-20 4 6

2018-08-21 8 10

'''

print(df.iloc[:3,[0,2]]) # 结果同上

# 通过判断的筛选

print(df[df.A>8])

'''

A B C D

2018-08-22 12 13 14 15

2018-08-23 16 17 18 19

2018-08-24 20 21 22 23

'''

3.2 筛选总结

1.iloc与ix区别

总结:相同点:iloc可以取相应的值,操作方便,与ix操作类似。

不同点:ix可以混合选择,可以填入column对应的字符选择,而iloc只能采用index索引,对于列数较多情况下,ix要方便操作许多。

2.loc与iloc区别

总结:相同点:都可以索引处块数据

不同点:iloc可以检索对应值,两者操作不同。

3.ix与loc、iloc三者的区别

总结:ix是混合loc与iloc操作

如下:对比三者操作

print(df.loc['20180819','A':'B'])

print(df.iloc[0,0:2])

print(df.ix[0,'A':'B'])

输出结果相同,均为:

A 0

B 1

Name: 2018-08-19 00:00:00, dtype: int32

4.Pandas设置值

4.1 创建数据

import pandas as pd

import numpy as np

# 创建数据

dates = pd.date_range('20180820',periods=6)

df = pd.DataFrame(np.arange(24).reshape(6,4), index=dates, columns=['A','B','C','D'])

print(df)

'''

A B C D

2018-08-20 0 1 2 3

2018-08-21 4 5 6 7

2018-08-22 8 9 10 11

2018-08-23 12 13 14 15

2018-08-24 16 17 18 19

2018-08-25 20 21 22 23

'''

4.2 根据位置设置loc和iloc

# 根据位置设置loc和iloc

df.iloc[2,2] = 111

df.loc['20180820','B'] = 2222

print(df)

'''

A B C D

2018-08-20 0 2222 2 3

2018-08-21 4 5 6 7

2018-08-22 8 9 111 11

2018-08-23 12 13 14 15

2018-08-24 16 17 18 19

2018-08-25 20 21 22 23

'''

4.3 根据条件设置

# 根据条件设置

# 更改B中的数,而更改的位置取决于4的位置,并设相应位置的数为0

df.B[df.A>4] = 0

print(df)

'''

A B C D

2018-08-20 0 2222 2 3

2018-08-21 4 5 6 7

2018-08-22 8 0 111 11

2018-08-23 12 0 14 15

2018-08-24 16 0 18 19

2018-08-25 20 0 22 23

'''

4.4 按行或列设置

# 按行或列设置

# 列批处理,F列全改为NaN

df['F'] = np.nan

print(df)

4.5 添加Series序列(长度必须对齐)

df['E'] = pd.Series([1,2,3,4,5,6], index=pd.date_range('20180820',periods=6))

print(df)

4.6 设定某行某列为特定值

# 设定某行某列为特定值

df.ix['20180820','A'] = 56

df.loc['20180820','A'] = 67

df.iloc[0,0] = 76

4.7 修改一整行数据

# 修改一整行数据

df.iloc[1] = np.nan # df.iloc[1,:]=np.nan

df.loc['20180820'] = np.nan # df.loc['20180820,:']=np.nan

df.ix[2] = np.nan # df.ix[2,:]=np.nan

df.ix['20180823'] = np.nan

print(df)

5.Pandas处理丢失数据

5.1 创建含NaN的矩阵

# Pandas处理丢失数据

import pandas as pd

import numpy as np

# 创建含NaN的矩阵

# 如何填充和删除NaN数据?

dates = pd.date_range('20180820',periods=6)

df = pd.DataFrame(np.arange(24).reshape((6,4)),index=dates,columns=['A','B','C','D']) # a.reshape(6,4)等价于a.reshape((6,4))

df.iloc[0,1] = np.nan

df.iloc[1,2] = np.nan

print(df)

'''

A B C D

2018-08-20 0 NaN 2.0 3

2018-08-21 4 5.0 NaN 7

2018-08-22 8 9.0 10.0 11

2018-08-23 12 13.0 14.0 15

2018-08-24 16 17.0 18.0 19

2018-08-25 20 21.0 22.0 23

'''

5.2 删除掉有NaN的行或列

# 删除掉有NaN的行或列

print(df.dropna()) # 默认是删除掉含有NaN的行

print(df.dropna(

axis=0, # 0对行进行操作;1对列进行操作

how='any' # 'any':只要存在NaN就drop掉;'all':必须全部是NaN才drop

))

'''

A B C D

2018-08-22 8 9.0 10.0 11

2018-08-23 12 13.0 14.0 15

2018-08-24 16 17.0 18.0 19

2018-08-25 20 21.0 22.0 23

'''

# 删除掉所有含有NaN的列

print(df.dropna(

axis=1,

how='any'

))

'''

A D

2018-08-20 0 3

2018-08-21 4 7

2018-08-22 8 11

2018-08-23 12 15

2018-08-24 16 19

2018-08-25 20 23

'''

5.3 替换NaN值为0或者其他

# 替换NaN值为0或者其他

print(df.fillna(value=0))

'''

A B C D

2018-08-20 0 0.0 2.0 3

2018-08-21 4 5.0 0.0 7

2018-08-22 8 9.0 10.0 11

2018-08-23 12 13.0 14.0 15

2018-08-24 16 17.0 18.0 19

2018-08-25 20 21.0 22.0 23

'''

5.4 是否有缺失数据NaN

# 是否有缺失数据NaN

# 是否为空

print(df.isnull())

'''

A B C D

2018-08-20 False True False False

2018-08-21 False False True False

2018-08-22 False False False False

2018-08-23 False False False False

2018-08-24 False False False False

2018-08-25 False False False False

'''

# 是否为NaN

print(df.isna())

'''

A B C D

2018-08-20 False True False False

2018-08-21 False False True False

2018-08-22 False False False False

2018-08-23 False False False False

2018-08-24 False False False False

2018-08-25 False False False False

'''

# 检测某列是否有缺失数据NaN

print(df.isnull().any())

'''

A False

B True

C True

D False

dtype: bool

'''

# 检测数据中是否存在NaN,如果存在就返回True

print(np.any(df.isnull())==True)

6.Pandas导入导出

6.1 导入数据

import pandas as pd # 加载模块

# 读取csv

data = pd.read_csv('student.csv')

# 打印出data

print(data)

# 前三行

print(data.head(3))

# 后三行

print(data.tail(3))

6.2 导出数据

# 将资料存取成pickle

data.to_pickle('student.pickle')

# 读取pickle文件并打印

print(pd.read_pickle('student.pickle'))

7.Pandas合并操作

7.1 Pandas合并concat

import pandas as pd

import numpy as np

# 定义资料集

df1 = pd.DataFrame(np.ones((3,4))*0, columns=['a','b','c','d'])

df2 = pd.DataFrame(np.ones((3,4))*1, columns=['a','b','c','d'])

df3 = pd.DataFrame(np.ones((3,4))*2, columns=['a','b','c','d'])

print(df1)

'''

a b c d

0 0.0 0.0 0.0 0.0

1 0.0 0.0 0.0 0.0

2 0.0 0.0 0.0 0.0

'''

print(df2)

'''

a b c d

0 1.0 1.0 1.0 1.0

1 1.0 1.0 1.0 1.0

2 1.0 1.0 1.0 1.0

'''

print(df3)

'''

a b c d

0 2.0 2.0 2.0 2.0

1 2.0 2.0 2.0 2.0

2 2.0 2.0 2.0 2.0

'''

# concat纵向合并

res = pd.concat([df1,df2,df3],axis=0)

# 打印结果

print(res)

'''

a b c d

0 0.0 0.0 0.0 0.0

1 0.0 0.0 0.0 0.0

2 0.0 0.0 0.0 0.0

0 1.0 1.0 1.0 1.0

1 1.0 1.0 1.0 1.0

2 1.0 1.0 1.0 1.0

0 2.0 2.0 2.0 2.0

1 2.0 2.0 2.0 2.0

2 2.0 2.0 2.0 2.0

'''

# 上述合并过程中,index重复,下面给出重置index方法

# 只需要将index_ignore设定为True即可

res = pd.concat([df1,df2,df3],axis=0,ignore_index=True)

# 打印结果

print(res)

'''

a b c d

0 0.0 0.0 0.0 0.0

1 0.0 0.0 0.0 0.0

2 0.0 0.0 0.0 0.0

3 1.0 1.0 1.0 1.0

4 1.0 1.0 1.0 1.0

5 1.0 1.0 1.0 1.0

6 2.0 2.0 2.0 2.0

7 2.0 2.0 2.0 2.0

8 2.0 2.0 2.0 2.0

'''

# join 合并方式

#定义资料集

df1 = pd.DataFrame(np.ones((3,4))*0, columns=['a','b','c','d'], index=[1,2,3])

df2 = pd.DataFrame(np.ones((3,4))*1, columns=['b','c','d','e'], index=[2,3,4])

print(df1)

print(df2)

'''

join='outer',函数默认为join='outer'。此方法是依照column来做纵向合并,有相同的column上下合并在一起,

其他独自的column各自成列,原来没有值的位置皆为NaN填充。

'''

# 纵向"外"合并df1与df2

res = pd.concat([df1,df2],axis=0,join='outer')

print(res)

'''

a b c d e

1 0.0 0.0 0.0 0.0 NaN

2 0.0 0.0 0.0 0.0 NaN

3 0.0 0.0 0.0 0.0 NaN

2 NaN 1.0 1.0 1.0 1.0

3 NaN 1.0 1.0 1.0 1.0

4 NaN 1.0 1.0 1.0 1.0

'''

# 修改index

res = pd.concat([df1,df2],axis=0,join='outer',ignore_index=True)

print(res)

'''

a b c d e

0 0.0 0.0 0.0 0.0 NaN

1 0.0 0.0 0.0 0.0 NaN

2 0.0 0.0 0.0 0.0 NaN

3 NaN 1.0 1.0 1.0 1.0

4 NaN 1.0 1.0 1.0 1.0

5 NaN 1.0 1.0 1.0 1.0

'''

# join='inner'合并相同的字段

# 纵向"内"合并df1与df2

res = pd.concat([df1,df2],axis=0,join='inner')

# 打印结果

print(res)

'''

b c d

1 0.0 0.0 0.0

2 0.0 0.0 0.0

3 0.0 0.0 0.0

2 1.0 1.0 1.0

3 1.0 1.0 1.0

4 1.0 1.0 1.0

'''

# join_axes(依照axes合并)

#定义资料集

df1 = pd.DataFrame(np.ones((3,4))*0, columns=['a','b','c','d'], index=[1,2,3])

df2 = pd.DataFrame(np.ones((3,4))*1, columns=['b','c','d','e'], index=[2,3,4])

print(df1)

'''

a b c d

1 0.0 0.0 0.0 0.0

2 0.0 0.0 0.0 0.0

3 0.0 0.0 0.0 0.0

'''

print(df2)

'''

b c d e

2 1.0 1.0 1.0 1.0

3 1.0 1.0 1.0 1.0

4 1.0 1.0 1.0 1.0

'''

# 依照df1.index进行横向合并

res = pd.concat([df1,df2],axis=1,join_axes=[df1.index])

print(res)

'''

a b c d b c d e

1 0.0 0.0 0.0 0.0 NaN NaN NaN NaN

2 0.0 0.0 0.0 0.0 1.0 1.0 1.0 1.0

3 0.0 0.0 0.0 0.0 1.0 1.0 1.0 1.0

'''

# 移除join_axes参数,打印结果

res = pd.concat([df1,df2],axis=1)

print(res)

'''

a b c d b c d e

1 0.0 0.0 0.0 0.0 NaN NaN NaN NaN

2 0.0 0.0 0.0 0.0 1.0 1.0 1.0 1.0

3 0.0 0.0 0.0 0.0 1.0 1.0 1.0 1.0

'''

# append(添加数据)

# append只有纵向合并,没有横向合并

#定义资料集

df1 = pd.DataFrame(np.ones((3,4))*0, columns=['a','b','c','d'])

df2 = pd.DataFrame(np.ones((3,4))*1, columns=['a','b','c','d'])

df3 = pd.DataFrame(np.ones((3,4))*2, columns=['a','b','c','d'])

s1 = pd.Series([1,2,3,4], index=['a','b','c','d'])

# 将df2合并到df1下面,以及重置index,并打印出结果

res = df1.append(df2,ignore_index=True)

print(res)

'''

a b c d

0 0.0 0.0 0.0 0.0

1 0.0 0.0 0.0 0.0

2 0.0 0.0 0.0 0.0

3 1.0 1.0 1.0 1.0

4 1.0 1.0 1.0 1.0

5 1.0 1.0 1.0 1.0

'''

# 合并多个df,将df2与df3合并至df1的下面,以及重置index,并打印出结果

res = df1.append([df2,df3], ignore_index=True)

print(res)

'''

a b c d

0 0.0 0.0 0.0 0.0

1 0.0 0.0 0.0 0.0

2 0.0 0.0 0.0 0.0

3 1.0 1.0 1.0 1.0

4 1.0 1.0 1.0 1.0

5 1.0 1.0 1.0 1.0

6 2.0 2.0 2.0 2.0

7 2.0 2.0 2.0 2.0

8 2.0 2.0 2.0 2.0

'''

# 合并series,将s1合并至df1,以及重置index,并打印结果

res = df1.append(s1,ignore_index=True)

print(res)

'''

a b c d

0 0.0 0.0 0.0 0.0

1 0.0 0.0 0.0 0.0

2 0.0 0.0 0.0 0.0

3 1.0 2.0 3.0 4.0

'''

# 总结:两种常用合并方式

res = pd.concat([df1, df2, df3], axis=0, ignore_index=True)

res1 = df1.append([df2, df3], ignore_index=True)

print(res)

print(res1)

7.2.Pandas 合并 merge

7.2.1 定义资料集并打印出

import pandas as pd

# 依据一组key合并

# 定义资料集并打印出

left = pd.DataFrame({'key' : ['K0','K1','K2','K3'],

'A' : ['A0','A1','A2','A3'],

'B' : ['B0','B1','B2','B3']})

right = pd.DataFrame({'key': ['K0', 'K1', 'K2', 'K3'],

'C' : ['C0', 'C1', 'C2', 'C3'],

'D' : ['D0', 'D1', 'D2', 'D3']})

print(left)

'''

A B key

0 A0 B0 K0

1 A1 B1 K1

2 A2 B2 K2

3 A3 B3 K3

'''

print(right)

'''

C D key

0 C0 D0 K0

1 C1 D1 K1

2 C2 D2 K2

3 C3 D3 K3

'''

7.2.2 依据key column合并,并打印

# 依据key column合并,并打印

res = pd.merge(left,right,on='key')

print(res)

'''

A B key C D

0 A0 B0 K0 C0 D0

1 A1 B1 K1 C1 D1

2 A2 B2 K2 C2 D2

3 A3 B3 K3 C3 D3

'''

# 依据两组key合并

#定义资料集并打印出

left = pd.DataFrame({'key1': ['K0', 'K0', 'K1', 'K2'],

'key2': ['K0', 'K1', 'K0', 'K1'],

'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3']})

right = pd.DataFrame({'key1': ['K0', 'K1', 'K1', 'K2'],

'key2': ['K0', 'K0', 'K0', 'K0'],

'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']})

print(left)

'''

A B key1 key2

0 A0 B0 K0 K0

1 A1 B1 K0 K1

2 A2 B2 K1 K0

3 A3 B3 K2 K1

'''

print(right)

'''

C D key1 key2

0 C0 D0 K0 K0

1 C1 D1 K1 K0

2 C2 D2 K1 K0

3 C3 D3 K2 K0

'''

7.2.3 两列合并

依据key1与key2 columns进行合并

# 依据key1与key2 columns进行合并,并打印出四种结果['left', 'right', 'outer', 'inner']

res = pd.merge(left, right, on=['key1', 'key2'], how='inner')

print(res)

res = pd.merge(left, right, on=['key1', 'key2'], how='outer')

print(res)

res = pd.merge(left, right, on=['key1', 'key2'], how='left')

print(res)

res = pd.merge(left, right, on=['key1', 'key2'], how='right')

print(res)

'''

---------------inner方式---------------

A B key1 key2 C D

0 A0 B0 K0 K0 C0 D0

1 A2 B2 K1 K0 C1 D1

2 A2 B2 K1 K0 C2 D2

---------------outer方式---------------

A B key1 key2 C D

0 A0 B0 K0 K0 C0 D0

1 A1 B1 K0 K1 NaN NaN

2 A2 B2 K1 K0 C1 D1

3 A2 B2 K1 K0 C2 D2

4 A3 B3 K2 K1 NaN NaN

5 NaN NaN K2 K0 C3 D3

---------------left方式---------------

A B key1 key2 C D

0 A0 B0 K0 K0 C0 D0

1 A1 B1 K0 K1 NaN NaN

2 A2 B2 K1 K0 C1 D1

3 A2 B2 K1 K0 C2 D2

4 A3 B3 K2 K1 NaN NaN

--------------right方式---------------

A B key1 key2 C D

0 A0 B0 K0 K0 C0 D0

1 A2 B2 K1 K0 C1 D1

2 A2 B2 K1 K0 C2 D2

3 NaN NaN K2 K0 C3 D3

'''

7.2.4 Indicator设置合并列名称

# Indicator

df1 = pd.DataFrame({'col1':[0,1],'col_left':['a','b']})

df2 = pd.DataFrame({'col1':[1,2,2],'col_right':[2,2,2]})

print(df1)

'''

col1 col_left

0 0 a

1 1 b

'''

print(df2)

'''

col1 col_right

0 1 2

1 2 2

2 2 2

'''

# 依据col1进行合并,并启用indicator=True,最后打印

res = pd.merge(df1,df2,on='col1',how='outer',indicator=True)

print(res)

'''

col1 col_left col_right _merge

0 0 a NaN left_only

1 1 b 2.0 both

2 2 NaN 2.0 right_only

3 2 NaN 2.0 right_only

'''

# 自定义indicator column的名称,并打印出

res = pd.merge(df1,df2,on='col1',how='outer',indicator='indicator_column')

print(res)

'''

col1 col_left col_right indicator_column

0 0 a NaN left_only

1 1 b 2.0 both

2 2 NaN 2.0 right_only

3 2 NaN 2.0 right_only

'''

7.2.5 依据index合并

# 依据index合并

#定义资料集并打印出

left = pd.DataFrame({'A': ['A0', 'A1', 'A2'],

'B': ['B0', 'B1', 'B2']},

index=['K0', 'K1', 'K2'])

right = pd.DataFrame({'C': ['C0', 'C2', 'C3'],

'D': ['D0', 'D2', 'D3']},

index=['K0', 'K2', 'K3'])

print(left)

'''

A B

K0 A0 B0

K1 A1 B1

K2 A2 B2

'''

print(right)

'''

C D

K0 C0 D0

K2 C2 D2

K3 C3 D3

'''

# 依据左右资料集的index进行合并,how='outer',并打印

res = pd.merge(left,right,left_index=True,right_index=True,how='outer')

print(res)

'''

A B C D

K0 A0 B0 C0 D0

K1 A1 B1 NaN NaN

K2 A2 B2 C2 D2

K3 NaN NaN C3 D3

'''

# 依据左右资料集的index进行合并,how='inner',并打印

res = pd.merge(left,right,left_index=True,right_index=True,how='inner')

print(res)

'''

A B C D

K0 A0 B0 C0 D0

K2 A2 B2 C2 D2

'''

7.2.6 解决overlapping的问题

# 解决overlapping的问题

#定义资料集

boys = pd.DataFrame({'k': ['K0', 'K1', 'K2'], 'age': [1, 2, 3]})

girls = pd.DataFrame({'k': ['K0', 'K0', 'K3'], 'age': [4, 5, 6]})

print(boys)

'''

age k

0 1 K0

1 2 K1

2 3 K2

'''

print(girls)

'''

age k

0 4 K0

1 5 K0

2 6 K3

'''

# 使用suffixes解决overlapping的问题

# 比如将上面两个合并时,age重复了,则可通过suffixes设置,以此保证不重复,不同名

res = pd.merge(boys,girls,on='k',suffixes=['_boy','_girl'],how='inner')

print(res)

'''

age_boy k age_girl

0 1 K0 4

1 1 K0 5

'''

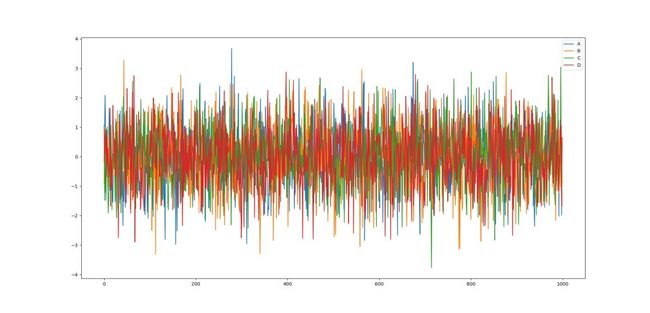

8.Pandas plot出图

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

data = pd.Series(np.random.randn(1000), index=np.arange(1000))

print(data)

print(data.cumsum())

# data本来就是一个数据,所以我们可以直接plot

data.plot()

plt.show()

# np.random.randn(1000,4) 随机生成1000行4列数据

# list("ABCD")会变为['A','B','C','D']

data = pd.DataFrame(

np.random.randn(1000,4),

index=np.arange(1000),

columns=list("ABCD")

)

data.cumsum()

data.plot()

plt.show()

ax = data.plot.scatter(x='A',y='B',color='DarkBlue',label='Class1')

# 将之下这个 data 画在上一个 ax 上面

data.plot.scatter(x='A',y='C',color='LightGreen',label='Class2',ax=ax)

plt.show()

9.参考

1.https://morvanzhou.github.io/tutorials/data-manipulation/np-pd/

本文代码的github地址:https://github.com/fengdu78/machine_learning_beginner/tree/master/pandas

请关注和分享↓↓↓

![]()

机器学习初学者

QQ群:554839127

(注意:本站有6个qq群,加入过任何一个的不需要再加)

往期精彩回顾

良心推荐:机器学习入门资料汇总及学习建议(2018版)

黄海广博士的github镜像下载(机器学习及深度学习资源)

吴恩达老师的机器学习和深度学习课程笔记打印版

机器学习小抄-(像背托福单词一样理解机器学习)

首发:深度学习入门宝典-《python深度学习》原文代码中文注释版及电子书

科研工作者的神器-zotero论文管理工具

机器学习的数学基础

机器学习必备宝典-《统计学习方法》的python代码实现、电子书及课件

吐血推荐收藏的学位论文排版教程(完整版)

机器学习入门的百科全书-2018年“机器学习初学者”公众号文章汇总