Kubernetes (k8s) log collection in practice (pitfall-free)

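I. Overview of log collection approaches in k8s

This article describes how to collect application logs in k8s. Logs produced by running applications are usually collected into a centralized log management system, where they can be analyzed, aggregated, and monitored, or even fed into machine learning to diagnose application problems so they can be fixed promptly.

Applications in a k8s cluster typically emit logs in one of the following ways:

Write logs to standard output or standard error, as Docker officially recommends

Write logs to a designated directory inside the container

Send logs directly from the application to the log collection system

This article deploys a setup that covers all of the approaches above.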

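Log collection components

Elasticsearch: stores the collected logs

Kibana: visualizes the collected logs

Logstash: aggregates and processes logs, then sends them to Elasticsearch for storage

Filebeat: reads container or application log files, processes them, and sends them to Elasticsearch or Logstash; can also be used to aggregate logs

Fluentd: reads container or application log files, processes them, and sends them to Elasticsearch; can also be used to aggregate logs

Fluent Bit: reads container or application log files, processes them, and sends them to Elasticsearch or Fluentd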

II. Deployment

This lab uses three virtual machines as the k8s cluster, each with 8 CPU cores and 16 GB of memory.

1. Preparation

# Fetch the files
yum -y install git   # install git if the server does not have it; otherwise skip this step
git clone https://github.com/mgxian/k8s-log.git
cd k8s-log
git checkout v1

# Create the logging namespace
kubectl apply -f logging-namespace.yaml

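2. Deploy Elasticsearch

Note:
# This deployment uses a StatefulSet but does not use a PV for persistent data storage
# The data lives in an emptyDir volume and is lost when a pod is deleted or recreated; in production, always persist the data with a PV

# Deploy
kubectl apply -f elasticsearch.yaml

# Check status
kubectl get pods,svc -n logging -o wide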

[root@k8s-node1 k8s-log]# kubectl get pods,svc -n logging -o wide
NAME                          READY   STATUS    RESTARTS   AGE   IP           NODE
pod/elasticsearch-logging-0   1/1     Running   0          20h   10.2.11.85   192.168.29.182
pod/elasticsearch-logging-1   1/1     Running   0          10h   10.2.11.91   192.168.29.182

NAME                            TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)    AGE   SELECTOR
service/elasticsearch-logging   ClusterIP   10.1.84.47   <none>        9200/TCP   20h   k8s-app=elasticsearch-logging

# Wait until all pods are in the Running state

# Access test
# If every check below returns data, the deployment succeeded
[root@k8s-node1 k8s-log]# kubectl run curl -n logging --image=radial/busyboxplus:curl -i --tty
If you don't see a command prompt, try pressing enter.
[ root@curl-775f9567b5-hpslj:/ ]$
[ root@curl-775f9567b5-hpslj:/ ]$ nslookup elasticsearch-logging
Server:    10.1.0.2
Address 1: 10.1.0.2 coredns.kube-system.svc.cluster.local

Name:      elasticsearch-logging
Address 1: 10.1.84.47 elasticsearch-logging.logging.svc.cluster.local

# Check the cluster status
[ root@curl-775f9567b5-hpslj:/ ]$ curl 'http://elasticsearch-logging:9200/_cluster/health?pretty'
{
  "cluster_name" : "kubernetes-logging",
  "status" : "green",   # green means the cluster is healthy
  "timed_out" : false,
  "number_of_nodes" : 2,
  "number_of_data_nodes" : 2,
  "active_primary_shards" : 131,
  "active_shards" : 262,
  "relocating_shards" : 0,
  "initializing_shards" : 0,
  "unassigned_shards" : 0,
  "delayed_unassigned_shards" : 0,
  "number_of_pending_tasks" : 0,
  "number_of_in_flight_fetch" : 0,
  "task_max_waiting_in_queue_millis" : 0,
  "active_shards_percent_as_number" : 100.0
}

# List the Elasticsearch cluster nodes
[ root@curl-775f9567b5-hpslj:/ ]$ curl 'http://elasticsearch-logging:9200/_cat/nodes'
10.2.11.91 58 92 7 1.61 0.89 0.57 mdi - elasticsearch-logging-1
10.2.11.85 65 92 7 1.61 0.89 0.57 mdi * elasticsearch-logging-0
exit

# Clean up the test
kubectl delete deploy curl -n logging
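
The health check above is read by eye. As a minimal sketch, assuming a POSIX shell with sed and head available (as is typical for a busybox-based image), the status field can be pulled out of the _cluster/health response so that a script can wait for green. The function names es_health_status and es_is_green are hypothetical helpers, not part of Elasticsearch or kubectl.

```shell
# Hypothetical helper: read a _cluster/health JSON document on stdin
# and print the value of its "status" field (green, yellow or red).
es_health_status() {
  sed -n 's/.*"status"[[:space:]]*:[[:space:]]*"\([a-z]*\)".*/\1/p' | head -n 1
}

# Hypothetical helper: succeed only if the JSON on stdin reports "green".
es_is_green() {
  [ "$(es_health_status)" = "green" ]
}

# Usage inside the curl pod (same URL as in the test above):
#   curl -s 'http://elasticsearch-logging:9200/_cluster/health?pretty' | es_health_status
```

This is plain text matching rather than real JSON parsing, which keeps it usable in a minimal container; where a JSON tool is available, that would be the more robust choice.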

3. Deploy Kibana

# Deploy
kubectl apply -f kibana.yaml

# Check status
kubectl get pods,svc -n logging -o wide

[root@k8s-node1 k8s-log]# kubectl get pods,svc -n logging -o wide
NAME                                  READY   STATUS    RESTARTS   AGE   IP           NODE
pod/elasticsearch-logging-0           1/1     Running   0          20h   10.2.11.85   192.168.29.182
pod/elasticsearch-logging-1           1/1     Running   0          10h   10.2.11.91   192.168.29.182
pod/kibana-logging-6c49699bc7-sdkvn   1/1     Running   0          20h   10.2.1.136   192.168.29.176

NAME                            TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)         AGE   SELECTOR
service/elasticsearch-logging   ClusterIP   10.1.84.47    <none>        9200/TCP        20h   k8s-app=elasticsearch-logging
service/kibana-logging          NodePort    10.1.116.28   <none>        5601:9001/TCP   20h   k8s-app=kibana-logging

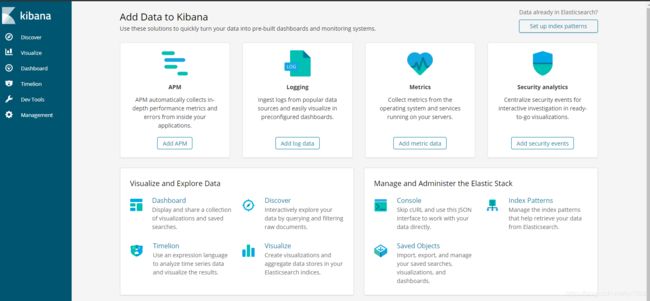

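# Access test
# Open the address printed below in a browser; seeing the Kibana UI means it is working
# 192.168.29.176 is the IP of one of the cluster nodes
KIBANA_NODEPORT=$(kubectl get svc -n logging | grep kibana-logging | awk '{print $(NF-1)}' | awk -F'[:/]' '{print $2}')
echo "http://192.168.29.176:$KIBANA_NODEPORT/"

Note: if the Kibana page appears as in the screenshot, Kibana has started correctly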
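
The grep/awk pipeline above depends on the column layout of kubectl get svc. As a hedged alternative, kubectl can print the field directly with -o jsonpath, and the small function below (a hypothetical helper named nodeport_from_ports) shows the same extraction on a PORT(S) value such as 5601:9001/TCP using only shell parameter expansion. It looks at the first port entry only.

```shell
# Hypothetical helper: given a PORT(S) value such as "5601:9001/TCP",
# print the NodePort (the number after the colon) of the first entry.
# Fails if the value has no NodePort, e.g. "9200/TCP" for a ClusterIP service.
nodeport_from_ports() {
  first=${1%%/*}                          # "5601:9001/TCP" -> "5601:9001"
  case "$first" in
    *:*) printf '%s\n' "${first#*:}" ;;   # "5601:9001" -> "9001"
    *)   return 1 ;;
  esac
}

# Direct lookup without text parsing (requires access to the cluster):
#   kubectl get svc kibana-logging -n logging -o jsonpath='{.spec.ports[0].nodePort}'
```

Note that 9001 lies outside the default NodePort range of 30000-32767, so this setup presumably relies on an API server started with a wider --service-node-port-range.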

4. Deploy Fluentd to collect logs

# Fluentd is deployed as a DaemonSet
# A Fluentd container runs on every node and collects the logs of the k8s components, Docker, and the containers
# Label every node that should run Fluentd
# kubectl label node lab1 beta.kubernetes.io/fluentd-ds-ready=true
kubectl label nodes --all beta.kubernetes.io/fluentd-ds-ready=true

# Deploy
kubectl apply -f fluentd-es-configmap.yaml
kubectl apply -f fluentd-es-ds.yaml

# Check status
kubectl get pods,svc -n logging -o wide

[root@k8s-node1 k8s-log]# kubectl get pods,svc -n logging -o wide
NAME                                  READY   STATUS    RESTARTS   AGE   IP           NODE
pod/elasticsearch-logging-0           1/1     Running   0          20h   10.2.11.85   192.168.29.182
pod/elasticsearch-logging-1           1/1     Running   0          10h   10.2.11.91   192.168.29.182
pod/fluentd-es-v2.2.0-bb5t4           1/1     Running   0          20h   10.2.11.87   192.168.29.182
pod/fluentd-es-v2.2.0-lvkhj           1/1     Running   0          20h   10.2.1.137   192.168.29.176
pod/kibana-logging-6c49699bc7-sdkvn   1/1     Running   0          20h   10.2.1.136   192.168.29.176

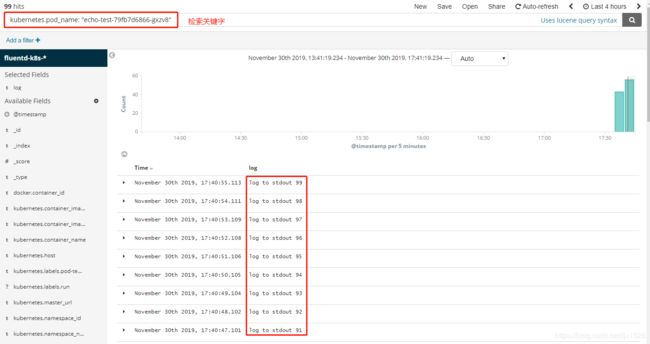

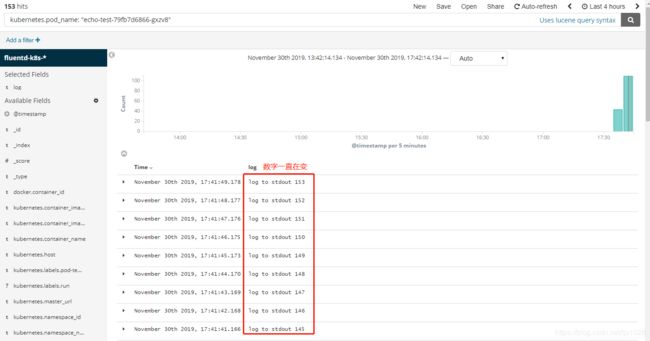

5. View logs in Kibana

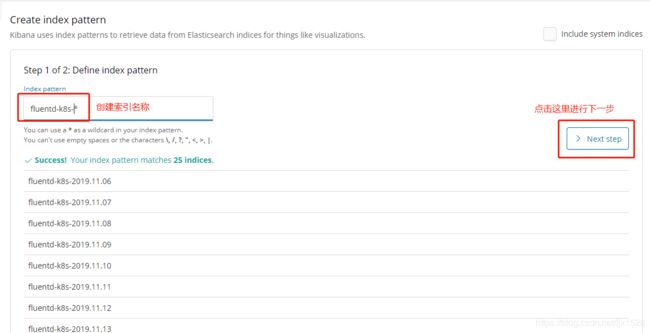

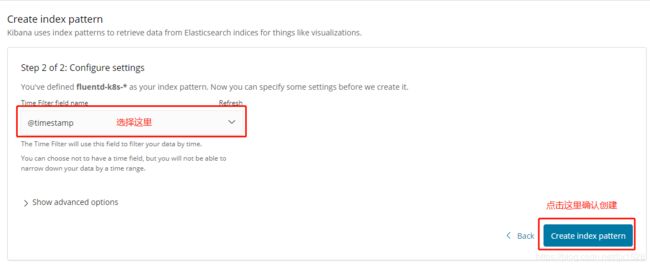

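5.1. Create the index pattern fluentd-k8s-*. Because images have to be pulled and containers started, it may take a few minutes before the index and its data appear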

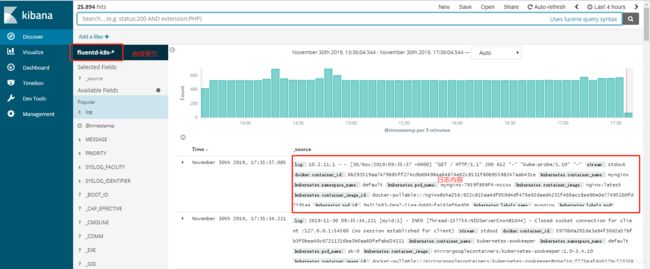
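
Since the index may take a few minutes to appear, it can be convenient to poll Elasticsearch rather than refresh Kibana. The following is a minimal sketch, assuming it runs from a pod inside the cluster that has curl and awk; has_index_with_prefix is a hypothetical helper name, and the prefix fluentd-k8s- is taken from the index pattern above.

```shell
# Hypothetical helper: read index names (one per line) on stdin and succeed
# if at least one of them starts with the given prefix.
has_index_with_prefix() {
  awk -v prefix="$1" 'index($1, prefix) == 1 { found = 1 } END { exit(found ? 0 : 1) }'
}

# Usage from a pod inside the cluster; _cat/indices?h=index prints only the index names:
#   until curl -s 'http://elasticsearch-logging:9200/_cat/indices?h=index' | has_index_with_prefix fluentd-k8s-; do
#     sleep 10
#   done
```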

5.2. View logs

# Search the nginx logs

6. Application log collection tests

6.1. Application logs written to standard output

# Start a test workload that writes logs
kubectl run echo-test --image=radial/busyboxplus:curl -- sh -c 'count=1;while true;do echo log to stdout $count;sleep 1;count=$(($count+1));done'

# Check status
[root@k8s-node1 k8s-log]# kubectl get pod
NAME                         READY   STATUS    RESTARTS   AGE
echo-test-79fb7d6866-gxzv8   1/1     Running   0          6s

# View the logs from the command line
ECHO_TEST_POD=$(kubectl get pods | grep echo-test | awk '{print $1}')
kubectl logs -f $ECHO_TEST_POD

# Refresh Kibana and check whether new logs have arrived

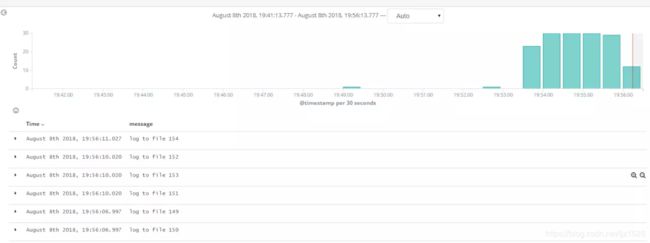
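
Selecting the pod with grep echo-test also matches pods that are not yet running, or whose names merely contain the string. A slightly stricter sketch, assuming the default column layout of kubectl get pods (NAME, READY, STATUS, ...), is shown below; running_pods_with_prefix is a hypothetical helper name.

```shell
# Hypothetical helper: read `kubectl get pods` output (including the header line)
# on stdin and print the names of pods that start with the given prefix
# and whose STATUS column is Running.
running_pods_with_prefix() {
  awk -v prefix="$1" 'NR > 1 && index($1, prefix) == 1 && $3 == "Running" { print $1 }'
}

# Usage against the cluster:
#   ECHO_TEST_POD=$(kubectl get pods | running_pods_with_prefix echo-test | head -n 1)
#   kubectl logs -f "$ECHO_TEST_POD"
```

Taking only the first match with head keeps kubectl logs from receiving several pod names at once.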

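# Deploy the test whose log file inside the container is collected by Filebeat
kubectl apply -f log-contanier-file-filebeat.yaml

# Check
kubectl get pods -o wide

# Add the index pattern filebeat-k8s-* to view the logs

# Deploy the test whose log file inside the container is collected by Fluent Bit
kubectl apply -f log-contanier-file-fluentbit.yaml

# Check
kubectl get pods -o wide

# Add the index pattern fluentbit-k8s-* to view the logs

# In this test the application sends its logs directly to Elasticsearch
# Deploy
kubectl apply -f log-contanier-es.yaml

# Check
kubectl get pods -o wide

# Add the index pattern k8s-app-* to view the logs

# Clean up the tests
kubectl delete -f log-contanier-es.yaml
kubectl delete -f log-contanier-file-fluentbit.yaml
kubectl delete -f log-contanier-file-filebeat.yaml
kubectl delete deploy echo-test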

Appendix: full contents of the YAML files used above

1. elasticsearch.yaml (no data persistence)

[root@k8s-node1 k8s-log]# cat elasticsearch.yaml

# RBAC authn and authz
apiVersion: v1
kind: ServiceAccount
metadata:
  name: elasticsearch-logging
  namespace: logging
  labels:
    k8s-app: elasticsearch-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: elasticsearch-logging
  labels:
    k8s-app: elasticsearch-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
rules:
- apiGroups:
  - ""
  resources:
  - "services"
  - "namespaces"
  - "endpoints"
  verbs:
  - "get"
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  namespace: logging
  name: elasticsearch-logging
  labels:
    k8s-app: elasticsearch-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
subjects:
- kind: ServiceAccount
  name: elasticsearch-logging
  namespace: logging
  apiGroup: ""
roleRef:
  kind: ClusterRole
  name: elasticsearch-logging
  apiGroup: ""
---
# Elasticsearch deployment itself
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: elasticsearch-logging
  namespace: logging
  labels:
    k8s-app: elasticsearch-logging
    version: v6.2.5
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  serviceName: elasticsearch-logging
  replicas: 2
  selector:
    matchLabels:
      k8s-app: elasticsearch-logging
      version: v6.2.5
  template:
    metadata:
      labels:
        k8s-app: elasticsearch-logging
        version: v6.2.5
        kubernetes.io/cluster-service: "true"
    spec:
      serviceAccountName: elasticsearch-logging
      containers:
      - image: registry.cn-hangzhou.aliyuncs.com/google_containers/elasticsearch:v6.2.5
        name: elasticsearch-logging
        resources:
          # need more cpu upon initialization, therefore burstable class
          limits:
            cpu: 1000m
          requests:
            cpu: 100m
        ports:
        - containerPort: 9200
          name: db
          protocol: TCP
        - containerPort: 9300
          name: transport
          protocol: TCP
        volumeMounts:
        - name: elasticsearch-logging
          mountPath: /data
        env:
        - name: "NAMESPACE"
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
      volumes:
      - name: elasticsearch-logging
        emptyDir: {}
      # Elasticsearch requires vm.max_map_count to be at least 262144.
      # If your OS already sets up this number to a higher value, feel free
      # to remove this init container.
      initContainers:
      - image: alpine:3.6
        command: ["/sbin/sysctl", "-w", "vm.max_map_count=262144"]
        name: elasticsearch-logging-init
        securityContext:
          privileged: true
---
apiVersion: v1
kind: Service
metadata:
  name: elasticsearch-logging
  namespace: logging
  labels:
    k8s-app: elasticsearch-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
    kubernetes.io/name: "Elasticsearch"
spec:
  ports:
  - port: 9200
    protocol: TCP
    targetPort: db
  selector:
    k8s-app: elasticsearch-logging
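
The init container in the manifest above raises vm.max_map_count to 262144 on the node. To check a node before deciding whether that init container is needed, a minimal sketch follows; max_map_count_ok is a hypothetical helper, and 262144 is the minimum quoted in the manifest comment.

```shell
# Minimum value quoted in the manifest comment above.
ES_MIN_MAP_COUNT=262144

# Hypothetical helper: succeed if the given vm.max_map_count value
# is a non-negative integer that meets the minimum.
max_map_count_ok() {
  case "$1" in
    ''|*[!0-9]*) return 1 ;;   # reject empty or non-numeric input
  esac
  [ "$1" -ge "$ES_MIN_MAP_COUNT" ]
}

# Usage on a node (sysctl -n prints only the value):
#   max_map_count_ok "$(sysctl -n vm.max_map_count)" && echo "init container not needed"
```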

1.1. elasticsearch.yaml (with data persistence)

[root@k8s-node1 tmp]# cat elasticsearch.yaml

# RBAC authn and authz
apiVersion: v1
kind: ServiceAccount
metadata:
  name: elasticsearch-logging
  namespace: logging
  labels:
    k8s-app: elasticsearch-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: elasticsearch-logging
  labels:
    k8s-app: elasticsearch-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
rules:
- apiGroups:
  - ""
  resources:
  - "services"
  - "namespaces"
  - "endpoints"
  verbs:
  - "get"
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  namespace: logging
  name: elasticsearch-logging
  labels:
    k8s-app: elasticsearch-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
subjects:
- kind: ServiceAccount
  name: elasticsearch-logging
  namespace: logging
  apiGroup: ""
roleRef:
  kind: ClusterRole
  name: elasticsearch-logging
  apiGroup: ""
---
# Elasticsearch deployment itself
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: elasticsearch-logging
  namespace: logging
  labels:
    k8s-app: elasticsearch-logging
    version: v6.2.5
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  serviceName: elasticsearch-logging
  replicas: 2
  selector:
    matchLabels:
      k8s-app: elasticsearch-logging
      version: v6.2.5
  template:
    metadata:
      labels:
        k8s-app: elasticsearch-logging
        version: v6.2.5
        kubernetes.io/cluster-service: "true"
    spec:
      serviceAccountName: elasticsearch-logging
      containers:
      - image: registry.cn-hangzhou.aliyuncs.com/google_containers/elasticsearch:v6.2.5
        name: elasticsearch-logging
        resources:
          # need more cpu upon initialization, therefore burstable class
          limits:
            cpu: 1000m
          requests:
            cpu: 100m
        ports:
        - containerPort: 9200
          name: db
          protocol: TCP
        - containerPort: 9300
          name: transport
          protocol: TCP
        volumeMounts:
        - name: elasticsearch-logging
          mountPath: /data
        env:
        - name: "NAMESPACE"
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
      volumes:
      - name: elasticsearch-logging
        hostPath:
          path: /app/es_data  # this directory must be created manually on each node
      initContainers:
      - image: alpine:3.6
        command: ["/sbin/sysctl", "-w", "vm.max_map_count=262144"]
        name: elasticsearch-logging-init
        securityContext:
          privileged: true
---
apiVersion: v1
kind: Service
metadata:
  name: elasticsearch-logging
  namespace: logging
  labels:
    k8s-app: elasticsearch-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
    kubernetes.io/name: "Elasticsearch"
spec:
  ports:
  - port: 9200
    protocol: TCP
    targetPort: db
  selector:
    k8s-app: elasticsearch-logging

2. kibana.yaml

[root@k8s-node1 k8s-log]# cat kibana.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: kibana-logging
  namespace: logging
  labels:
    k8s-app: kibana-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  replicas: 1
  selector:
    matchLabels:
      k8s-app: kibana-logging
  template:
    metadata:
      labels:
        k8s-app: kibana-logging
      annotations:
        seccomp.security.alpha.kubernetes.io/pod: 'docker/default'
    spec:
      containers:
      - name: kibana-logging
        image: registry.cn-shanghai.aliyuncs.com/k8s-log/kibana:6.2.4
        resources:
          # need more cpu upon initialization, therefore burstable class
          limits:
            cpu: 1000m
          requests:
            cpu: 100m
        env:
        - name: ELASTICSEARCH_URL
          value: http://elasticsearch-logging:9200
        - name: SERVER_BASEPATH
          value: ""
        ports:
        - containerPort: 5601
          name: ui
          protocol: TCP
---
apiVersion: v1
kind: Service
metadata:
  name: kibana-logging
  namespace: logging
  labels:
    k8s-app: kibana-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
    kubernetes.io/name: "Kibana"
spec:
  type: NodePort
  ports:
  - port: 5601
    protocol: TCP
    targetPort: ui
    nodePort: 9001
  selector:
    k8s-app: kibana-logging

3. fluentd-es-configmap.yaml

[root@k8s-node1 k8s-log]# cat fluentd-es-configmap.yaml

kind: ConfigMap
apiVersion: v1
metadata:
  name: fluentd-es-config-v0.1.4
  namespace: logging
  labels:
    addonmanager.kubernetes.io/mode: Reconcile
data:
  system.conf: |-
    root_dir /tmp/fluentd-buffers/
  containers.input.conf: |-
    @id fluentd-containers.log
    @type tail
    path /var/log/containers/*.log
    pos_file /var/log/es-containers.log.pos
    time_format %Y-%m-%dT%H:%M:%S.%NZ
    tag raw.kubernetes.*
    read_from_head true
    @type multi_format
    format json
    time_key time
    time_format %Y-%m-%dT%H:%M:%S.%NZ
    format /^(?
    # Detect exceptions in the log output and forward them as one log entry.
    @id raw.kubernetes
    @type detect_exceptions
    remove_tag_prefix raw
    message log
    stream stream
    multiline_flush_interval 5
    max_bytes 500000
    max_lines 1000
  system.input.conf: |-
    # Examples:
    # time="2016-02-04T06:51:03.053580605Z" level=info msg="GET /containers/json"
    # time="2016-02-04T07:53:57.505612354Z" level=error msg="HTTP Error" err="No such image: -f" statusCode=404
    # TODO(random-liu): Remove this after cri container runtime rolls out.
    @id docker.log
    @type tail
    format /^time="(?
    # Multi-line parsing is required for all the kube logs because very large log
    # statements, such as those that include entire object bodies, get split into
    # multiple lines by glog.
    # Example:
    # I0204 07:32:30.020537 3368 server.go:1048] POST /stats/container/: (13.972191ms) 200 [[Go-http-client/1.1] 10.244.1.3:40537]
    @id kubelet.log
    @type tail
    format multiline
    multiline_flush_interval 5s
    format_firstline /^\w\d{4}/
    format1 /^(?\w)(?

4. fluentd-es-ds.yaml

[root@k8s-node1 k8s-log]# cat fluentd-es-ds.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: fluentd-es
  namespace: logging
  labels:
    k8s-app: fluentd-es
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: fluentd-es
  labels:
    k8s-app: fluentd-es
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
rules:
- apiGroups:
  - ""
  resources:
  - "namespaces"
  - "pods"
  verbs:
  - "get"
  - "watch"
  - "list"
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: fluentd-es
  labels:
    k8s-app: fluentd-es
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
subjects:
- kind: ServiceAccount
  name: fluentd-es
  namespace: logging
  apiGroup: ""
roleRef:
  kind: ClusterRole
  name: fluentd-es
  apiGroup: ""
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: fluentd-es-v2.2.0
  namespace: logging
  labels:
    k8s-app: fluentd-es
    version: v2.2.0
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  selector:
    matchLabels:
      k8s-app: fluentd-es
      version: v2.2.0
  template:
    metadata:
      labels:
        k8s-app: fluentd-es
        kubernetes.io/cluster-service: "true"
        version: v2.2.0
      # This annotation ensures that fluentd does not get evicted if the node
      # supports critical pod annotation based priority scheme.
      # Note that this does not guarantee admission on the nodes (#40573).
      annotations:
        scheduler.alpha.kubernetes.io/critical-pod: ''
        seccomp.security.alpha.kubernetes.io/pod: 'docker/default'
    spec:
      # priorityClassName: system-node-critical
      serviceAccountName: fluentd-es
      containers:
      - name: fluentd-es
        image: registry.cn-hangzhou.aliyuncs.com/google_containers/fluentd-elasticsearch:v2.2.0
        env:
        - name: FLUENTD_ARGS
          value: --no-supervisor -q
        resources:
          limits:
            memory: 500Mi
          requests:
            cpu: 100m
            memory: 200Mi
        volumeMounts:
        - name: varlog
          mountPath: /var/log
        - name: varlibdockercontainers
          mountPath: /var/lib/docker/containers
          readOnly: true
        - name: config-volume
          mountPath: /etc/fluent/config.d
      nodeSelector:
        beta.kubernetes.io/fluentd-ds-ready: "true"
      terminationGracePeriodSeconds: 30
      tolerations:
      - effect: NoSchedule
        key: node-role.kubernetes.io/master
        operator: Exists
      volumes:
      - name: varlog
        hostPath:
          path: /var/log
      - name: varlibdockercontainers
        hostPath:
          path: /var/lib/docker/containers
      - name: config-volume
        configMap:
          name: fluentd-es-config-v0.1.4