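Big Data Fundamentals: ZooKeeper Study Notes (4): ZooKeeper in Practice

ZooKeeper in Practice

Distributed Installation and Deployment

Cluster Planning

Deploy ZooKeeper on three nodes: hadoop102, hadoop103, and hadoop104.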

Extraction and Installation

- Extract the ZooKeeper package into /opt/module/:

[luo@hadoop102 software]$ tar -zxvf zookeeper-3.4.10.tar.gz -C /opt/module/

- Create a zkData directory under /opt/module/zookeeper-3.4.10/:

mkdir zkData

- In /opt/module/zookeeper-3.4.10/conf, rename zoo_sample.cfg to zoo.cfg:

mv zoo_sample.cfg zoo.cfg

Configuring zoo.cfg

Settings:

Set dataDir=/opt/module/zookeeper-3.4.10/zkData, then add the following entries:

server.2=hadoop102:2888:3888

server.3=hadoop103:2888:3888

server.4=hadoop104:2888:3888

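Parameter format: server.A=B:C:D

- A is a number that identifies the server (its server ID).

- B is the server's hostname or IP address.

- C is the port this server uses to exchange information with the cluster's Leader.

- D is the port the servers use to talk to one another during Leader election, which takes place when the current Leader fails and a new one has to be chosen.

In cluster mode, each server needs a file named myid in its dataDir directory. The file holds a single value, the server's A. At startup ZooKeeper reads this file and compares the value with the server entries in zoo.cfg to work out which server it is.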
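
The lookup described above can be sketched in plain Java. This is only an illustration of the idea, not ZooKeeper's own startup code: the class `MyIdLookup`, its nested `ServerEntry` type, and the methods `parse` and `resolve` are hypothetical names invented for this example.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper, not part of ZooKeeper: shows how the number stored in
// myid can be matched against the server.A=B:C:D lines of zoo.cfg.
class MyIdLookup {

    // Parsed form of one "server.A=B:C:D" entry.
    static final class ServerEntry {
        final int id;            // A: server number
        final String host;       // B: hostname or IP address
        final int quorumPort;    // C: port for talking to the Leader
        final int electionPort;  // D: port used during Leader election

        ServerEntry(int id, String host, int quorumPort, int electionPort) {
            this.id = id;
            this.host = host;
            this.quorumPort = quorumPort;
            this.electionPort = electionPort;
        }
    }

    // Collect every server.A=B:C:D line from the text of a zoo.cfg file.
    static Map<Integer, ServerEntry> parse(String cfg) {
        Map<Integer, ServerEntry> servers = new LinkedHashMap<>();
        for (String raw : cfg.split("\\R")) {
            String line = raw.trim();
            if (!line.startsWith("server.") || !line.contains("=")) {
                continue; // other settings such as dataDir are ignored here
            }
            String[] kv = line.split("=", 2);
            int id = Integer.parseInt(kv[0].substring("server.".length()).trim());
            String[] parts = kv[1].trim().split(":");
            servers.put(id, new ServerEntry(id, parts[0],
                    Integer.parseInt(parts[1]), Integer.parseInt(parts[2])));
        }
        return servers;
    }

    // Find the entry whose A equals the number stored in the myid file.
    static ServerEntry resolve(String cfg, String myidContent) {
        int myid = Integer.parseInt(myidContent.trim());
        ServerEntry entry = parse(cfg).get(myid);
        if (entry == null) {
            throw new IllegalArgumentException("no server." + myid + " entry in zoo.cfg");
        }
        return entry;
    }

    public static void main(String[] args) {
        String cfg = "dataDir=/opt/module/zookeeper-3.4.10/zkData\n"
                + "server.2=hadoop102:2888:3888\n"
                + "server.3=hadoop103:2888:3888\n"
                + "server.4=hadoop104:2888:3888\n";
        ServerEntry me = resolve(cfg, "3\n"); // content of myid on hadoop103
        System.out.println("server." + me.id + " -> " + me.host
                + " (quorum " + me.quorumPort + ", election " + me.electionPort + ")");
    }
}
```

A myid value with no matching server line is treated as an error in this sketch, which mirrors why each host's myid has to agree with zoo.cfg.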

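Cluster Operations

- Create a file named myid in /opt/module/zookeeper-3.4.10/zkData:

touch myid

Create the file on Linux itself; a file created in an editor such as Notepad++ may well end up with a garbled encoding.

- Edit myid:

vi myid

Add the number that matches this host's server entry, e.g. 2 on hadoop102.

- Copy the configured ZooKeeper directory to the other machines: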

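- scp -r zookeeper-3.4.10/ USER@hadoop103:/opt/module/

- scp -r zookeeper-3.4.10/ USER@hadoop104:/opt/module/

Here USER stands for your login on the target host. The destination directory has to match the dataDir path configured in zoo.cfg.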

- Then change the contents of myid to 3 on hadoop103 and 4 on hadoop104.

- Start ZooKeeper on each node:

- [root@hadoop102 zookeeper-3.4.10]# bin/zkServer.sh start

- [root@hadoop103 zookeeper-3.4.10]# bin/zkServer.sh start

- [root@hadoop104 zookeeper-3.4.10]# bin/zkServer.sh start

- Check the status on each node:

- [root@hadoop102 zookeeper-3.4.10]# bin/zkServer.sh status

- [root@hadoop103 zookeeper-3.4.10]# bin/zkServer.sh status

- [root@hadoop104 zookeeper-3.4.10]# bin/zkServer.sh status

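Client Command-Line Operations

| Command syntax | Description |
|---|---|
| help | List all available commands |
| ls path [watch] | List what the znode at path contains |
| ls2 path [watch] | List the znode's contents together with status data such as update counts |
| create | Create a znode: plain by default, -s for a sequential node, -e for an ephemeral node (removed when the client session ends or times out) |
| get path [watch] | Get the value of a znode |
| set | Set the value of a znode |
| stat | Show the status of a znode |
| delete | Delete a znode |
| rmr | Recursively delete a znode |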

- Start the client:

[luo@hadoop103 zookeeper-3.4.10]$ bin/zkCli.sh

- List all available commands:

[zk: localhost:2181(CONNECTED) 1] help

- List what the current znode contains:

[zk: localhost:2181(CONNECTED) 0] ls /

[zookeeper]

- View the current node's contents together with status data such as update counts:

[zk: localhost:2181(CONNECTED) 1] ls2 /

[zookeeper]

cZxid = 0x0

ctime = Thu Jan 01 08:00:00 CST 1970

mZxid = 0x0

mtime = Thu Jan 01 08:00:00 CST 1970

pZxid = 0x0

cversion = -1

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 0

numChildren = 1

- Create regular nodes:

[zk: localhost:2181(CONNECTED) 2] create /app1 "hello app1"

Created /app1

[zk: localhost:2181(CONNECTED) 4] create /app1/server101 "192.168.1.101"

Created /app1/server101

- Get the value of a node:

[zk: localhost:2181(CONNECTED) 6] get /app1

hello app1

cZxid = 0x20000000a

ctime = Mon Jul 17 16:08:35 CST 2017

mZxid = 0x20000000a

mtime = Mon Jul 17 16:08:35 CST 2017

pZxid = 0x20000000b

cversion = 1

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 10

numChildren = 1

[zk: localhost:2181(CONNECTED) 8] get /app1/server101

192.168.1.101

cZxid = 0x20000000b

ctime = Mon Jul 17 16:11:04 CST 2017

mZxid = 0x20000000b

mtime = Mon Jul 17 16:11:04 CST 2017

pZxid = 0x20000000b

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 13

numChildren = 0

- Create an ephemeral node:

[zk: localhost:2181(CONNECTED) 9] create -e /app-emphemeral 8888

The node is visible from the current client:

[zk: localhost:2181(CONNECTED) 10] ls /

[app1, app-emphemeral, zookeeper]

Quit the current client, then start the client again:

[zk: localhost:2181(CONNECTED) 12] quit

[luo@hadoop104 zookeeper-3.4.10]$ bin/zkCli.sh

Listing the root again shows that the ephemeral node has been deleted:

[zk: localhost:2181(CONNECTED) 0] ls /

[app1, zookeeper]

- Create sequential nodes:

First create a regular parent node, app2:

[zk: localhost:2181(CONNECTED) 11] create /app2 "app2"

Then create sequential nodes under it:

[zk: localhost:2181(CONNECTED) 13] create -s /app2/aa 888

Created /app2/aa0000000000

[zk: localhost:2181(CONNECTED) 14] create -s /app2/bb 888

Created /app2/bb0000000001

[zk: localhost:2181(CONNECTED) 15] create -s /app2/cc 888

Created /app2/cc0000000002

The sequence number comes from a counter kept by the parent node, so it does not always start at 0: if the parent already has one child, numbering starts at 1, and so on.

[zk: localhost:2181(CONNECTED) 16] create -s /app1/aa 888

Created /app1/aa0000000001
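
The names in this output follow a simple pattern: the path given to create -s plus a 10-digit, zero-padded counter. A minimal sketch of that naming rule is shown below. It is an in-memory model only; the class `SequentialNames` and its `next` method are hypothetical, and the starting values 0 and 1 are taken from the session above rather than computed the way a real server would.

```java
// Hypothetical in-memory model of sequential znode naming; this is not the
// ZooKeeper implementation, it only reproduces the name format seen above.
class SequentialNames {

    private int counter; // per-parent counter, advanced on every sequential create

    SequentialNames(int start) {
        this.counter = start;
    }

    // Append the current counter as 10 zero-padded digits, then advance it.
    String next(String pathPrefix) {
        return pathPrefix + String.format("%010d", counter++);
    }

    public static void main(String[] args) {
        SequentialNames app2 = new SequentialNames(0); // /app2 had no children yet
        System.out.println(app2.next("/app2/aa")); // /app2/aa0000000000
        System.out.println(app2.next("/app2/bb")); // /app2/bb0000000001
        System.out.println(app2.next("/app2/cc")); // /app2/cc0000000002

        SequentialNames app1 = new SequentialNames(1); // /app1 already had server101
        System.out.println(app1.next("/app1/aa")); // /app1/aa0000000001
    }
}
```

The counter is shared by all sequential children of the same parent, which is why aa, bb, and cc receive consecutive numbers even though their prefixes differ.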

- Modify a node's data:

[zk: localhost:2181(CONNECTED) 2] set /app1 999

- Watch a node for data changes:

On host hadoop104, register a watch for data changes on /app1:

[zk: localhost:2181(CONNECTED) 26] get /app1 watch

On host hadoop103, modify the data of /app1:

[zk: localhost:2181(CONNECTED) 5] set /app1 777

Host hadoop104 then receives the data-change notification:

WATCHER::

WatchedEvent state:SyncConnected type:NodeDataChanged path:/app1

- Watch a node for changes to its children (path changes):

On host hadoop104, register a watch for child changes on /app1:

[zk: localhost:2181(CONNECTED) 1] ls /app1 watch

[aa0000000001, server101]

On host hadoop103, create a child node under /app1:

[zk: localhost:2181(CONNECTED) 6] create /app1/bb 666

Created /app1/bb

Host hadoop104 then receives the child-change notification:

WATCHER::

WatchedEvent state:SyncConnected type:NodeChildrenChanged path:/app1

- Delete a node:

[zk: localhost:2181(CONNECTED) 4] delete /app1/bb

- Recursively delete a node:

[zk: localhost:2181(CONNECTED) 7] rmr /app2

- View a node's status:

[zk: localhost:2181(CONNECTED) 12] stat /app1

cZxid = 0x20000000a

ctime = Mon Jul 17 16:08:35 CST 2017

mZxid = 0x200000018

mtime = Mon Jul 17 16:54:38 CST 2017

pZxid = 0x20000001c

cversion = 4

dataVersion = 2

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 3

numChildren = 2
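
Two of the fields in this output can be checked by hand. A zxid is a 64-bit number whose high 32 bits hold the epoch and whose low 32 bits hold a counter, and dataLength is the size of the stored data in bytes. The sketch below decodes the values printed above; the class `StatFields` and its methods are hypothetical helpers written for this note, and UTF-8 is assumed for the byte count.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical helper for reading values from zkCli stat output.
class StatFields {

    // High 32 bits of a zxid: the epoch.
    static long epoch(long zxid) {
        return zxid >>> 32;
    }

    // Low 32 bits of a zxid: the counter within that epoch.
    static long counter(long zxid) {
        return zxid & 0xFFFFFFFFL;
    }

    // dataLength counts bytes; UTF-8 is an assumption made for this sketch.
    static int dataLength(String data) {
        return data.getBytes(StandardCharsets.UTF_8).length;
    }

    public static void main(String[] args) {
        long cZxid = 0x20000000aL; // cZxid of /app1
        long pZxid = 0x20000001cL; // pZxid of /app1
        System.out.println("cZxid epoch=" + epoch(cZxid) + " counter=" + counter(cZxid)); // epoch=2 counter=10
        System.out.println("pZxid epoch=" + epoch(pZxid) + " counter=" + counter(pZxid)); // epoch=2 counter=28
        System.out.println(dataLength("hello app1")); // 10
        System.out.println(dataLength("777"));        // 3
    }
}
```

The byte counts agree with the dataLength values shown for /app1 before and after its data was set to a three-character value.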

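API Usage

Eclipse Environment Setup

- Create a project.

- Extract the zookeeper-3.4.10.tar.gz archive.

- Copy zookeeper-3.4.10.jar, jline-0.9.94.jar, log4j-1.2.16.jar, netty-3.10.5.Final.jar, slf4j-api-1.6.1.jar, and slf4j-log4j12-1.6.1.jar into the project's lib directory, then add them to the project's build path.

- Copy the log4j.properties file into the project root directory: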

log4j.rootLogger=INFO, stdout

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n

log4j.appender.logfile=org.apache.log4j.FileAppender

log4j.appender.logfile.File=target/spring.log

log4j.appender.logfile.layout=org.apache.log4j.PatternLayout

log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n

Creating the ZooKeeper Client

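    // Members of a JUnit 4 test class. The snippets in this section need:
    // java.util.List, org.junit.Before, org.junit.Test,
    // org.apache.zookeeper.CreateMode, WatchedEvent, Watcher, ZooKeeper,
    // org.apache.zookeeper.ZooDefs.Ids, org.apache.zookeeper.data.Stat
    private static String connectString = "hadoop102:2181,hadoop103:2181,hadoop104:2181";
    private static int sessionTimeout = 2000;
    private ZooKeeper zkClient = null;

    @Before
    public void init() throws Exception {
        zkClient = new ZooKeeper(connectString, sessionTimeout, new Watcher() {
            @Override
            public void process(WatchedEvent event) {
                // Callback run when an event notification arrives (user business logic goes here)
                System.out.println(event.getType() + "--" + event.getPath());
            }
        });
    }

Creating a Child Node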

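    // Create a child node
    @Test
    public void create() throws Exception {
        // Create, read, update, and delete operations on znode data
        // Arg 1: path of the node to create; arg 2: node data; arg 3: node ACL; arg 4: node type
        String nodeCreated = zkClient.create("/eclipse", "hello zk".getBytes(), Ids.OPEN_ACL_UNSAFE, CreateMode.PERSISTENT);
        System.out.println(nodeCreated);
    }

Getting Child Nodes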

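    // Get child nodes
    @Test
    public void getChildren() throws Exception {
        // Passing true registers the watcher given to the ZooKeeper constructor
        List<String> children = zkClient.getChildren("/", true);
        for (String child : children) {
            System.out.println(child);
        }
        // Block so the process stays alive to receive watch notifications
        Thread.sleep(Long.MAX_VALUE);
    }

Checking Whether a Znode Exists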

    // Check whether a znode exists
    @Test
    public void exist() throws Exception {
        Stat stat = zkClient.exists("/eclipse", false);
        System.out.println(stat == null ? "not exist" : "exist");
    }

Case Study

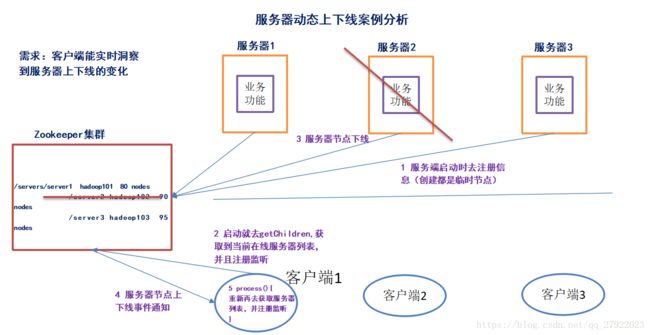

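Requirement: in a distributed system there may be several master nodes, which can go online and offline dynamically, and any client must be able to detect those changes in real time.

Requirements Analysis

Implementation

First create the /servers node on the cluster:

[zk: localhost:2181(CONNECTED) 10] create /servers "servers"

Created /servers

Server Code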

    package com.luo.zkcase;

    import java.io.IOException;

    import org.apache.zookeeper.CreateMode;
    import org.apache.zookeeper.WatchedEvent;
    import org.apache.zookeeper.Watcher;
    import org.apache.zookeeper.ZooDefs.Ids;
    import org.apache.zookeeper.ZooKeeper;

    public class DistributeServer {

        private static String connectString = "hadoop102:2181,hadoop103:2181,hadoop104:2181";
        private static int sessionTimeout = 2000;
        private ZooKeeper zk = null;
        private String parentNode = "/servers";

        // Open a client connection to ZooKeeper
        public void getConnect() throws IOException {
            zk = new ZooKeeper(connectString, sessionTimeout, new Watcher() {
                @Override
                public void process(WatchedEvent event) {
                    // The server does not react to events
                }
            });
        }

        // Register this server as an ephemeral sequential child of /servers
        public void registServer(String hostname) throws Exception {
            String create = zk.create(parentNode + "/server", hostname.getBytes(), Ids.OPEN_ACL_UNSAFE, CreateMode.EPHEMERAL_SEQUENTIAL);
            System.out.println(hostname + " is online " + create);
        }

        // Business logic
        public void business(String hostname) throws Exception {
            System.out.println(hostname + " is working ...");
            Thread.sleep(Long.MAX_VALUE);
        }

        public static void main(String[] args) throws Exception {
            // Get a ZooKeeper connection
            DistributeServer server = new DistributeServer();
            server.getConnect();
            // Register the server's information through that connection
            server.registServer(args[0]);
            // Start the business logic
            server.business(args[0]);
        }
    }

Client Code

    package com.luo.zkcase;

    import java.io.IOException;
    import java.util.ArrayList;
    import java.util.List;

    import org.apache.zookeeper.WatchedEvent;
    import org.apache.zookeeper.Watcher;
    import org.apache.zookeeper.ZooKeeper;

    public class DistributeClient {

        private static String connectString = "hadoop102:2181,hadoop103:2181,hadoop104:2181";
        private static int sessionTimeout = 2000;
        private ZooKeeper zk = null;
        private String parentNode = "/servers";
        // Latest list of online servers, shared with the business threads
        private volatile List<String> serversList = new ArrayList<>();

        // Open a client connection to ZooKeeper
        public void getConnect() throws IOException {
            zk = new ZooKeeper(connectString, sessionTimeout, new Watcher() {
                @Override
                public void process(WatchedEvent event) {
                    // Fetch the list again, which also registers the watch again
                    try {
                        getServerList();
                    } catch (Exception e) {
                        e.printStackTrace();
                    }
                }
            });
        }

        // Fetch the list of online servers
        public void getServerList() throws Exception {
            // Get the children of the parent node and watch the parent for child changes
            List<String> children = zk.getChildren(parentNode, true);
            ArrayList<String> servers = new ArrayList<>();
            for (String child : children) {
                byte[] data = zk.getData(parentNode + "/" + child, false, null);
                servers.add(new String(data));
            }
            // Assign servers to the serversList field so the business threads can use it
            serversList = servers;
            System.out.println(serversList);
        }

        // Business logic
        public void business() throws Exception {
            System.out.println("client is working ...");
            // Block so the process stays alive to receive watch notifications
            Thread.sleep(Long.MAX_VALUE);
        }

        public static void main(String[] args) throws Exception {
            // Get a ZooKeeper connection
            DistributeClient client = new DistributeClient();
            client.getConnect();
            // Read the children of /servers to build the server list
            client.getServerList();
            // Start the business logic
            client.business();
        }
    }
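
The client calls getServerList() again from inside its watcher because a ZooKeeper watch is a one-time trigger: once it fires it is gone, and later changes go unnoticed unless a new watch is set. The sketch below models that behaviour without a ZooKeeper connection. Everything in it is a hypothetical stand-in written for this note (the class `OneShotWatchDemo`, its `Listener` interface, and its `getChildren`/`addChild` methods are not the ZooKeeper API); it only shows why re-registration matters.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical in-memory stand-in for a parent znode with one-shot child watches.
// This is NOT the ZooKeeper API; it only imitates the one-time trigger rule.
class OneShotWatchDemo {

    interface Listener {
        void childrenChanged();
    }

    private final List<String> children = new ArrayList<>();
    private Listener watch; // at most one pending watch, cleared when it fires

    // Loosely analogous to getChildren(path, true): return a snapshot and arm a watch.
    List<String> getChildren(Listener listener) {
        watch = listener;
        return new ArrayList<>(children);
    }

    // Adding a child fires the pending watch once and consumes it.
    void addChild(String name) {
        children.add(name);
        Listener fired = watch;
        watch = null;
        if (fired != null) {
            fired.childrenChanged();
        }
    }

    // Count how many of two child changes a client notices.
    static int countNotifications(boolean reRegister) {
        OneShotWatchDemo parent = new OneShotWatchDemo();
        int[] seen = {0};
        Listener[] self = new Listener[1];
        self[0] = () -> {
            seen[0]++;
            if (reRegister) {
                parent.getChildren(self[0]); // read again, arming a fresh watch
            }
        };
        parent.getChildren(self[0]);
        parent.addChild("server0000000000");
        parent.addChild("server0000000001");
        return seen[0];
    }

    public static void main(String[] args) {
        System.out.println(countNotifications(false)); // 1: the second change is missed
        System.out.println(countNotifications(true));  // 2: both changes are seen
    }
}
```

In the real client the same effect comes from passing true to getChildren on every call, so each notification both refreshes the server list and sets the next watch.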