Android Audio Encoding, Decoding, and Mixing

Original article: http://my.oschina.net/daxia/blog/636074

Related source code: https://github.com/YeDaxia/MusicPlus

Understanding digital audio:

Before getting to the implementation, let's go over the basic properties of digital audio.

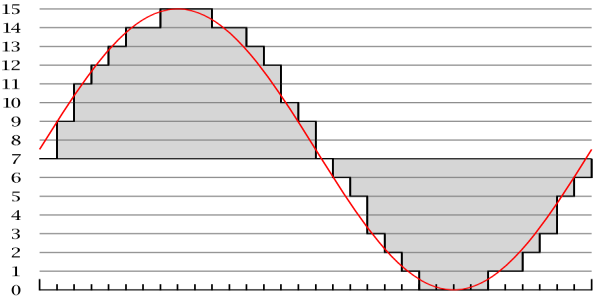

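Sample rate: the number of samples captured per second, expressed in hertz (Hz). The higher the sample rate, the closer the result is to the original waveform.

Bit depth: the number of bits used to record each sample, expressed in bits. It determines the dynamic range, that is, the span of amplitudes that can be represented.

Channel: the number of audio channels, for example a left channel and a right channel.

After sampling and quantization, audio is ultimately a sequence of numbers. Loudness (amplitude) shows up in the magnitude of each number, while pitch (frequency) and timbre depend on the time dimension and show up in how the numbers change from one to the next.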
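To make the three properties concrete, the following sketch computes how many bytes of raw PCM one second of audio occupies, and how long a given number of bytes lasts. The class and method names are hypothetical and only serve this illustration.

```java
// Hypothetical helper for illustration; not part of the original project.
final class PcmMathDemo {
    /** Bytes of raw PCM produced per second of audio. */
    static int bytesPerSecond(int sampleRateHz, int channels, int bitsPerSample) {
        return sampleRateHz * channels * (bitsPerSample / 8);
    }

    /** Duration, in microseconds, represented by byteCount bytes of raw PCM. */
    static long durationUs(long byteCount, int sampleRateHz, int channels, int bitsPerSample) {
        return byteCount * 1_000_000L / bytesPerSecond(sampleRateHz, channels, bitsPerSample);
    }

    public static void main(String[] args) {
        // 44.1 kHz, stereo, 16-bit: the configuration assumed throughout this article.
        System.out.println(bytesPerSecond(44100, 2, 16) + " bytes per second");
        System.out.println(durationUs(88200, 44100, 2, 16) + " us for 88200 bytes");
    }
}
```

For 44.1 kHz, 16-bit stereo this works out to 176400 bytes per second, so a three-minute track takes roughly 30 MB as raw PCM.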

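Before encoding and decoding, let's get a feel for what raw audio data actually looks like. A WAV file typically stores raw PCM data after a short header, so we can play it by writing that data directly into an AudioTrack. For the details of the WAV format, see http://soundfile.sapp.org/doc/WaveFormat/ ; you can also inspect the binary content of a WAV file with a tool such as Binary Viewer.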
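As a rough illustration of the header layout described at the link above, the sketch below reads the channel count, sample rate, bit depth, and data size from a canonical 44-byte WAV header. It assumes the simplest layout, where the "fmt " chunk is immediately followed by the "data" chunk; real files may carry extra chunks, in which case the fixed offsets used here do not apply. `WavHeaderDemo` is a hypothetical name.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.nio.charset.StandardCharsets;

// Hypothetical helper for illustration; not part of the original project.
final class WavHeaderDemo {
    final int channels;
    final int sampleRate;
    final int bitsPerSample;
    final int dataSize;

    private WavHeaderDemo(int channels, int sampleRate, int bitsPerSample, int dataSize) {
        this.channels = channels;
        this.sampleRate = sampleRate;
        this.bitsPerSample = bitsPerSample;
        this.dataSize = dataSize;
    }

    /** Parses a canonical 44-byte header (assumption: no chunks between "fmt " and "data"). */
    static WavHeaderDemo parse(byte[] header) {
        if (header.length < 44) {
            throw new IllegalArgumentException("need at least 44 bytes");
        }
        String riff = new String(header, 0, 4, StandardCharsets.US_ASCII);
        String wave = new String(header, 8, 4, StandardCharsets.US_ASCII);
        if (!riff.equals("RIFF") || !wave.equals("WAVE")) {
            throw new IllegalArgumentException("not a RIFF/WAVE header");
        }
        // All multi-byte numeric fields in a WAV header are little-endian.
        ByteBuffer buf = ByteBuffer.wrap(header).order(ByteOrder.LITTLE_ENDIAN);
        int channels = buf.getShort(22);      // NumChannels
        int sampleRate = buf.getInt(24);      // SampleRate
        int bitsPerSample = buf.getShort(34); // BitsPerSample
        int dataSize = buf.getInt(40);        // Subchunk2Size: PCM byte count
        return new WavHeaderDemo(channels, sampleRate, bitsPerSample, dataSize);
    }
}
```

With values parsed this way, the playback parameters could be taken from the file itself instead of being hard-coded.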

Playing a WAV file:

    int sampleRateInHz = 44100;
    int channelConfig = AudioFormat.CHANNEL_OUT_STEREO;
    int audioFormat = AudioFormat.ENCODING_PCM_16BIT;
    int bufferSizeInBytes = AudioTrack.getMinBufferSize(sampleRateInHz, channelConfig, audioFormat);
    AudioTrack audioTrack = new AudioTrack(AudioManager.STREAM_MUSIC, sampleRateInHz, channelConfig,
            audioFormat, bufferSizeInBytes, AudioTrack.MODE_STREAM);
    audioTrack.play();
    FileInputStream audioInput = null;
    try {
        audioInput = new FileInputStream(audioFile); // put your wav file in
        audioInput.read(new byte[44]); // skip the 44-byte wav header
        byte[] audioData = new byte[512];
        int readCount;
        while ((readCount = audioInput.read(audioData)) != -1) {
            // Write only the bytes actually read, so a short final read does not replay stale data.
            audioTrack.write(audioData, 0, readCount); // play raw audio bytes
        }
    } catch (FileNotFoundException e) {
        e.printStackTrace();
    } catch (IOException e) {
        e.printStackTrace();
    } finally {
        audioTrack.stop();
        audioTrack.release();
        if (audioInput != null) {
            try {
                audioInput.close();
            } catch (IOException e) {
                e.printStackTrace();
            }
        }
    }

If you have tried the example above, you should now have a concrete idea of what raw audio data is.

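Decoding audio:

From the introduction above, it is easy to see that the purpose of decoding is to restore encoded data to the raw data found in a WAV file.

We use MediaExtractor and MediaCodec to extract the encoded audio data and decode it into raw audio data: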

    final String encodeFile = "your encode audio file path";
    MediaExtractor extractor = new MediaExtractor();
    extractor.setDataSource(encodeFile);

    // Select the first audio track in the file.
    MediaFormat mediaFormat = null;
    for (int i = 0; i < extractor.getTrackCount(); i++) {
        MediaFormat format = extractor.getTrackFormat(i);
        String mime = format.getString(MediaFormat.KEY_MIME);
        if (mime.startsWith("audio/")) {
            extractor.selectTrack(i);
            mediaFormat = format;
            break;
        }
    }
    if (mediaFormat == null) {
        DLog.e(TAG, "not a valid file with audio track..");
        extractor.release();
        return null;
    }

    FileOutputStream fosDecoder = new FileOutputStream(outDecodeFile); // your out file path
    String mediaMime = mediaFormat.getString(MediaFormat.KEY_MIME);
    MediaCodec codec = MediaCodec.createDecoderByType(mediaMime);
    codec.configure(mediaFormat, null, null, 0);
    codec.start();
    ByteBuffer[] codecInputBuffers = codec.getInputBuffers();
    ByteBuffer[] codecOutputBuffers = codec.getOutputBuffers();
    final long kTimeOutUs = 5000;
    MediaCodec.BufferInfo info = new MediaCodec.BufferInfo();
    boolean sawInputEOS = false;
    boolean sawOutputEOS = false;
    int totalRawSize = 0;
    try {
        while (!sawOutputEOS) {
            // Feed one encoded sample to the decoder, if an input buffer is free.
            if (!sawInputEOS) {
                int inputBufIndex = codec.dequeueInputBuffer(kTimeOutUs);
                if (inputBufIndex >= 0) {
                    ByteBuffer dstBuf = codecInputBuffers[inputBufIndex];
                    int sampleSize = extractor.readSampleData(dstBuf, 0);
                    if (sampleSize < 0) {
                        DLog.i(TAG, "saw input EOS.");
                        sawInputEOS = true;
                        codec.queueInputBuffer(inputBufIndex, 0, 0, 0, MediaCodec.BUFFER_FLAG_END_OF_STREAM);
                    } else {
                        long presentationTimeUs = extractor.getSampleTime();
                        codec.queueInputBuffer(inputBufIndex, 0, sampleSize, presentationTimeUs, 0);
                        extractor.advance();
                    }
                }
            }

            // Drain one decoded buffer, if available, and append it to the output file.
            int res = codec.dequeueOutputBuffer(info, kTimeOutUs);
            if (res >= 0) {
                int outputBufIndex = res;
                // Simply ignore codec config buffers.
                if ((info.flags & MediaCodec.BUFFER_FLAG_CODEC_CONFIG) != 0) {
                    DLog.i(TAG, "audio decoder: codec config buffer");
                    codec.releaseOutputBuffer(outputBufIndex, false);
                    continue;
                }
                if (info.size != 0) {
                    ByteBuffer outBuf = codecOutputBuffers[outputBufIndex];
                    outBuf.position(info.offset);
                    outBuf.limit(info.offset + info.size);
                    byte[] data = new byte[info.size];
                    outBuf.get(data);
                    totalRawSize += data.length;
                    fosDecoder.write(data);
                }
                codec.releaseOutputBuffer(outputBufIndex, false);
                if ((info.flags & MediaCodec.BUFFER_FLAG_END_OF_STREAM) != 0) {
                    DLog.i(TAG, "saw output EOS.");
                    sawOutputEOS = true;
                }
            } else if (res == MediaCodec.INFO_OUTPUT_BUFFERS_CHANGED) {
                codecOutputBuffers = codec.getOutputBuffers();
                DLog.i(TAG, "output buffers have changed.");
            } else if (res == MediaCodec.INFO_OUTPUT_FORMAT_CHANGED) {
                MediaFormat oformat = codec.getOutputFormat();
                DLog.i(TAG, "output format has changed to " + oformat);
            }
        }
    } finally {
        fosDecoder.close();
        codec.stop();
        codec.release();
        extractor.release();
    }

After decoding, you can play the data with AudioTrack to verify that it is correct.
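Another way to check the decoded output is to prepend a WAV header so that an ordinary desktop player can open the file. The sketch below builds a canonical 44-byte PCM header; `PcmToWavDemo` and its method are hypothetical names, and the sample rate, channel count, and bit depth are assumed to be known (for instance from the decoder's output format).

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.nio.charset.StandardCharsets;

// Hypothetical helper for illustration; not part of the original project.
final class PcmToWavDemo {
    /** Builds a canonical 44-byte WAV header describing pcmByteCount bytes of PCM data. */
    static byte[] buildWavHeader(int pcmByteCount, int sampleRate, int channels, int bitsPerSample) {
        int blockAlign = channels * bitsPerSample / 8; // bytes per sample frame
        int byteRate = sampleRate * blockAlign;        // bytes per second
        ByteBuffer buf = ByteBuffer.allocate(44).order(ByteOrder.LITTLE_ENDIAN);
        buf.put("RIFF".getBytes(StandardCharsets.US_ASCII));
        buf.putInt(36 + pcmByteCount);                 // size of everything after this field
        buf.put("WAVE".getBytes(StandardCharsets.US_ASCII));
        buf.put("fmt ".getBytes(StandardCharsets.US_ASCII));
        buf.putInt(16);                                // fmt chunk size for PCM
        buf.putShort((short) 1);                       // audio format 1 = uncompressed PCM
        buf.putShort((short) channels);
        buf.putInt(sampleRate);
        buf.putInt(byteRate);
        buf.putShort((short) blockAlign);
        buf.putShort((short) bitsPerSample);
        buf.put("data".getBytes(StandardCharsets.US_ASCII));
        buf.putInt(pcmByteCount);
        return buf.array();
    }
}
```

Writing this header followed by the decoded bytes (the decoding loop tracks their count in `totalRawSize`) should yield a playable .wav file, provided the parameters match what the decoder actually produced.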

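Mixing audio:

The principle of audio mixing: adding quantized audio signals together is equivalent to the superposition of sound waves in the air.

In terms of audio data, this means simply adding the sample values of the same channel. This raises a problem, however: the sum may overflow. Many solutions to this exist; here we use a simple averaging algorithm (average audio mixing algorithm, referred to as the V algorithm). In the demo program below, we assume that the audio files all share the same sample rate, channel count, and bit depth, which makes processing easier. Also note that samples in the raw audio data are stored in little-endian order, and that a PCM value of 0 means silence (zero amplitude).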
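The overflow problem and the averaging fix can be seen on a single pair of samples. The sketch below uses hypothetical names and first assembles a 16-bit sample from two little-endian bytes, then compares a naive sum with the average.

```java
// Hypothetical helper for illustration; not part of the original project.
final class MixOverflowDemo {
    /** Assembles a signed 16-bit sample from its little-endian bytes (low byte first). */
    static short fromLittleEndian(byte lo, byte hi) {
        return (short) ((lo & 0xFF) | ((hi & 0xFF) << 8));
    }

    /** Naive mixing: the int sum is truncated back to 16 bits and may wrap around. */
    static short naiveSum(short a, short b) {
        return (short) (a + b);
    }

    /** Average mixing: the result always fits in 16 bits, at the cost of lower volume. */
    static short average(short a, short b) {
        return (short) ((a + b) / 2);
    }

    public static void main(String[] args) {
        short loud = fromLittleEndian((byte) 0x30, (byte) 0x75); // 0x7530 = 30000
        System.out.println("sample  = " + loud);
        System.out.println("naive   = " + naiveSum(loud, loud)); // wraps to a negative value
        System.out.println("average = " + average(loud, loud));
    }
}
```

Two loud samples of 30000 sum to 60000, which does not fit in a signed 16-bit value and wraps to -5536, audible as harsh distortion; the average stays at 30000.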

    public void mixAudios(File[] rawAudioFiles) {
        final int fileSize = rawAudioFiles.length;
        FileInputStream[] audioFileStreams = new FileInputStream[fileSize];
        byte[][] allAudioBytes = new byte[fileSize][];
        boolean[] streamDoneArray = new boolean[fileSize];
        byte[] buffer = new byte[512];
        int readCount;
        try {
            for (int fileIndex = 0; fileIndex < fileSize; ++fileIndex) {
                audioFileStreams[fileIndex] = new FileInputStream(rawAudioFiles[fileIndex]);
            }
            while (true) {
                // Read one 512-byte chunk from every stream; finished streams contribute silence.
                for (int streamIndex = 0; streamIndex < fileSize; ++streamIndex) {
                    FileInputStream inputStream = audioFileStreams[streamIndex];
                    if (!streamDoneArray[streamIndex] && (readCount = inputStream.read(buffer)) != -1) {
                        // Copy only the bytes actually read; the rest of the chunk stays 0 (silence).
                        byte[] chunk = new byte[buffer.length];
                        System.arraycopy(buffer, 0, chunk, 0, readCount);
                        allAudioBytes[streamIndex] = chunk;
                    } else {
                        streamDoneArray[streamIndex] = true;
                        allAudioBytes[streamIndex] = new byte[buffer.length];
                    }
                }
                byte[] mixBytes = averageMix(allAudioBytes);
                // mixBytes is the mixed data

                boolean done = true;
                for (boolean streamEnd : streamDoneArray) {
                    if (!streamEnd) {
                        done = false;
                    }
                }
                if (done) {
                    break;
                }
            }
        } catch (IOException e) {
            e.printStackTrace();
            if (mOnAudioMixListener != null)
                mOnAudioMixListener.onMixError(1);
        } finally {
            try {
                for (FileInputStream in : audioFileStreams) {
                    if (in != null)
                        in.close();
                }
            } catch (IOException e) {
                e.printStackTrace();
            }
        }
    }

    /**
     * Each row holds the data of one audio track.
     * The mixed result is written back into the first row, which is also returned.
     */
    byte[] averageMix(byte[][] bMulRoadAudioes) {
        if (bMulRoadAudioes == null || bMulRoadAudioes.length == 0)
            return null;
        byte[] realMixAudio = bMulRoadAudioes[0];
        if (bMulRoadAudioes.length == 1)
            return realMixAudio;
        for (int rw = 0; rw < bMulRoadAudioes.length; ++rw) {
            if (bMulRoadAudioes[rw].length != realMixAudio.length) {
                Log.e("app", "column of the road of audio + " + rw + " is different.");
                return null;
            }
        }

        int row = bMulRoadAudioes.length;
        int column = realMixAudio.length / 2;

        // Convert each pair of little-endian bytes into one 16-bit sample.
        short[][] sMulRoadAudioes = new short[row][column];
        for (int r = 0; r < row; ++r) {
            for (int c = 0; c < column; ++c) {
                sMulRoadAudioes[r][c] = (short) ((bMulRoadAudioes[r][c * 2] & 0xff)
                        | (bMulRoadAudioes[r][c * 2 + 1] & 0xff) << 8);
            }
        }

        // Average the samples of all tracks at each position; an int accumulator avoids overflow.
        short[] sMixAudio = new short[column];
        int mixVal;
        int sr = 0;
        for (int sc = 0; sc < column; ++sc) {
            mixVal = 0;
            sr = 0;
            for (; sr < row; ++sr) {
                mixVal += sMulRoadAudioes[sr][sc];
            }
            sMixAudio[sc] = (short) (mixVal / row);
        }

        // Convert the mixed samples back into little-endian bytes.
        for (sr = 0; sr < column; ++sr) {
            realMixAudio[sr * 2] = (byte) (sMixAudio[sr] & 0x00FF);
            realMixAudio[sr * 2 + 1] = (byte) ((sMixAudio[sr] & 0xFF00) >> 8);
        }
        return realMixAudio;
    }

Likewise, you can play the mixed data with AudioTrack to check how the mix sounds.
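Averaging lowers the volume of every track in proportion to the number of tracks. One of the other common approaches mentioned earlier is to sum the samples and clamp the result to the 16-bit range. The sketch below shows that different technique (it is not the article's averaging algorithm), using little-endian `ShortBuffer` views instead of manual bit shifting. All names are hypothetical, and the tracks are assumed to be equal-length 16-bit little-endian PCM.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.nio.ShortBuffer;

// Hypothetical helper for illustration; not part of the original project.
final class ClampedMixDemo {
    /** Mixes equal-length 16-bit little-endian PCM tracks by summing and clamping. */
    static byte[] mixClamped(byte[][] tracks) {
        if (tracks == null || tracks.length == 0) {
            throw new IllegalArgumentException("no tracks to mix");
        }
        int byteLen = tracks[0].length;
        ShortBuffer[] views = new ShortBuffer[tracks.length];
        for (int t = 0; t < tracks.length; t++) {
            if (tracks[t].length != byteLen) {
                throw new IllegalArgumentException("track " + t + " has a different length");
            }
            // View the raw bytes as little-endian 16-bit samples without copying.
            views[t] = ByteBuffer.wrap(tracks[t]).order(ByteOrder.LITTLE_ENDIAN).asShortBuffer();
        }
        ByteBuffer out = ByteBuffer.allocate(byteLen).order(ByteOrder.LITTLE_ENDIAN);
        int sampleCount = byteLen / 2;
        for (int s = 0; s < sampleCount; s++) {
            int sum = 0; // int accumulator, so the sum itself cannot wrap for realistic track counts
            for (ShortBuffer view : views) {
                sum += view.get(s);
            }
            int clamped = Math.max(Short.MIN_VALUE, Math.min(Short.MAX_VALUE, sum));
            out.putShort((short) clamped);
        }
        return out.array();
    }
}
```

Clamping keeps quiet passages at full volume but flattens peaks that exceed the range, so which approach sounds better depends on the material.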

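Encoding audio:

The purpose of encoding audio is to store and transmit it using less space. Encoding can be lossy or lossless; the familiar MP3 and AAC formats are both lossy. In the example below, we use MediaCodec to encode the mixed data, and we use the AAC format to do so.

AAC audio comes in two formats, ADIF and ADTS. The first is suited to storage on disk, while the second can be used for streaming; it is a sequence of frames. We use ADTS here, so first we need to understand how its frames are structured: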

Structure of an ADTS frame:

| header | body |

Fields of the ADTS frame header:

| Length (bits) | Description |
| --- | --- |
| 12 | syncword 0xFFF, all bits must be 1 |
| 1 | MPEG Version: 0 for MPEG-4, 1 for MPEG-2 |
| 2 | Layer: always 0 |
| 1 | protection absent, Warning, set to 1 if there is no CRC and 0 if there is CRC |
| 2 | profile, the MPEG-4 Audio Object Type minus 1 |
| 4 | MPEG-4 Sampling Frequency Index (15 is forbidden) |
| 1 | private bit, guaranteed never to be used by MPEG, set to 0 when encoding, ignore when decoding |
| 3 | MPEG-4 Channel Configuration (in the case of 0, the channel configuration is sent via an inband PCE) |
| 1 | originality, set to 0 when encoding, ignore when decoding |
| 1 | home, set to 0 when encoding, ignore when decoding |
| 1 | copyrighted id bit, the next bit of a centrally registered copyright identifier, set to 0 when encoding, ignore when decoding |
| 1 | copyright id start, signals that this frame's copyright id bit is the first bit of the copyright id, set to 0 when encoding, ignore when decoding |
| 13 | frame length, this value must include 7 or 9 bytes of header length: FrameLength = (ProtectionAbsent == 1 ? 7 : 9) + size(AACFrame) |
| 11 | Buffer fullness |
| 2 | Number of AAC frames (RDBs) in ADTS frame minus 1, for maximum compatibility always use 1 AAC frame per ADTS frame |
| 16 | CRC if protection absent is 0 |
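To see how the fields in the table map onto actual bytes, the following sketch unpacks a 7-byte ADTS header (the case without a CRC) into its main fields. `AdtsHeaderDemo` and its field names are hypothetical and chosen for this illustration only.

```java
// Hypothetical helper for illustration; not part of the original project.
final class AdtsHeaderDemo {
    final int syncword;          // 12 bits, expected to be 0xFFF
    final int mpegVersionBit;    // 0 = MPEG-4, 1 = MPEG-2
    final boolean protectionAbsent;
    final int audioObjectType;   // stored profile + 1
    final int samplingFreqIndex;
    final int channelConfig;
    final int frameLength;       // header + payload, in bytes
    final int bufferFullness;

    private AdtsHeaderDemo(byte[] h) {
        syncword = ((h[0] & 0xFF) << 4) | ((h[1] & 0xF0) >> 4);
        mpegVersionBit = (h[1] >> 3) & 0x1;
        protectionAbsent = (h[1] & 0x1) == 1;
        audioObjectType = ((h[2] >> 6) & 0x3) + 1;
        samplingFreqIndex = (h[2] >> 2) & 0xF;
        // The 3-bit channel configuration straddles bytes 2 and 3.
        channelConfig = ((h[2] & 0x1) << 2) | ((h[3] >> 6) & 0x3);
        // The 13-bit frame length straddles bytes 3, 4 and 5.
        frameLength = ((h[3] & 0x3) << 11) | ((h[4] & 0xFF) << 3) | ((h[5] >> 5) & 0x7);
        // The 11-bit buffer fullness straddles bytes 5 and 6.
        bufferFullness = ((h[5] & 0x1F) << 6) | ((h[6] >> 2) & 0x3F);
    }

    /** Parses the first 7 bytes of an ADTS frame. */
    static AdtsHeaderDemo parse(byte[] header) {
        if (header.length < 7) {
            throw new IllegalArgumentException("an ADTS header needs at least 7 bytes");
        }
        AdtsHeaderDemo parsed = new AdtsHeaderDemo(header);
        if (parsed.syncword != 0xFFF) {
            throw new IllegalArgumentException("missing ADTS syncword");
        }
        return parsed;
    }
}
```

A parser like this can be handy for sanity-checking a generated file: reading `frameLength` from each header and jumping that many bytes ahead should always land on the next syncword.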

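The approach is now clear: prepend a header to each encoded frame and write the result to a file. The saved .aac file should then be recognized and played by media players. For simplicity, we again assume that the mixed data produced earlier has a sample rate of 44100 Hz, 2 channels, and a bit depth of 16 bits.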
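If the fixed 44100 Hz, 2-channel assumption does not hold, the header fields have to be derived from the actual format. The sketch below looks up the MPEG-4 sampling frequency index for a given sample rate and packs a 7-byte header without a CRC. The names are hypothetical, and it sets the version bit to MPEG-4 (second byte 0xF1), whereas the encoder code in this article writes 0xF9, which flags MPEG-2.

```java
// Hypothetical helper for illustration; not part of the original project.
final class AdtsWriterDemo {
    // MPEG-4 sampling frequency table; the array position is the index stored in the header.
    private static final int[] SAMPLE_RATES = {
        96000, 88200, 64000, 48000, 44100, 32000, 24000,
        22050, 16000, 12000, 11025, 8000, 7350
    };

    static int frequencyIndex(int sampleRateHz) {
        for (int i = 0; i < SAMPLE_RATES.length; i++) {
            if (SAMPLE_RATES[i] == sampleRateHz) {
                return i;
            }
        }
        throw new IllegalArgumentException("unsupported sample rate: " + sampleRateHz);
    }

    /** Builds a 7-byte ADTS header (no CRC) for one raw AAC frame of aacPayloadLen bytes. */
    static byte[] buildHeader(int aacPayloadLen, int audioObjectType, int sampleRateHz, int channelConfig) {
        int frameLen = aacPayloadLen + 7;              // the length field counts the header too
        if (frameLen > 0x1FFF) {
            throw new IllegalArgumentException("frame does not fit in the 13-bit length field");
        }
        int freqIdx = frequencyIndex(sampleRateHz);
        byte[] h = new byte[7];
        h[0] = (byte) 0xFF;                            // syncword, high 8 bits
        h[1] = (byte) 0xF1;                            // syncword low 4 bits, MPEG-4, layer 0, no CRC
        h[2] = (byte) (((audioObjectType - 1) << 6) | (freqIdx << 2) | (channelConfig >> 2));
        h[3] = (byte) (((channelConfig & 3) << 6) | (frameLen >> 11));
        h[4] = (byte) ((frameLen >> 3) & 0xFF);
        h[5] = (byte) (((frameLen & 7) << 5) | 0x1F);  // low length bits + buffer fullness (all ones)
        h[6] = (byte) 0xFC;                            // rest of buffer fullness, one AAC frame
        return h;
    }
}
```

For example, `buildHeader(100, 2, 44100, 2)` describes a 100-byte AAC LC stereo frame at 44.1 kHz, giving a total frame length of 107 bytes.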

Complete source code for encoding raw audio data into AAC format:

    class AACAudioEncoder {
        private final static String TAG = "AACAudioEncoder";
        private final static String AUDIO_MIME = "audio/mp4a-latm";
        // Despite its name, this is the number of bytes per second for ONE channel
        // of 44.1 kHz, 16-bit PCM; it is used below to derive timestamps.
        private final static long audioBytesPerSample = 44100 * 16 / 8;
        private String rawAudioFile;

        AACAudioEncoder(String rawAudioFile) {
            this.rawAudioFile = rawAudioFile;
        }

        public void encodeToFile(String outEncodeFile) {
            FileInputStream fisRawAudio = null;
            FileOutputStream fosAacAudio = null;
            MediaCodec audioEncoder = null;
            try {
                fisRawAudio = new FileInputStream(rawAudioFile);
                fosAacAudio = new FileOutputStream(outEncodeFile);
                audioEncoder = createAacAudioEncoder();
                audioEncoder.start();
                ByteBuffer[] audioInputBuffers = audioEncoder.getInputBuffers();
                ByteBuffer[] audioOutputBuffers = audioEncoder.getOutputBuffers();
                boolean sawInputEOS = false;
                boolean sawOutputEOS = false;
                long audioTimeUs = 0;
                MediaCodec.BufferInfo outBufferInfo = new MediaCodec.BufferInfo();
                boolean readRawAudioEOS = false;
                byte[] rawInputBytes = new byte[4096];
                int readRawAudioCount = 0;
                int rawAudioSize = 0;
                // Start below zero so the first frame (timestamp 0) is not rejected.
                long lastAudioPresentationTimeUs = -1;
                int inputBufIndex, outputBufIndex;
                while (!sawOutputEOS) {
                    // Feed raw PCM to the encoder, if an input buffer is free.
                    if (!sawInputEOS) {
                        inputBufIndex = audioEncoder.dequeueInputBuffer(10000);
                        if (inputBufIndex >= 0) {
                            ByteBuffer inputBuffer = audioInputBuffers[inputBufIndex];
                            inputBuffer.clear();
                            int bufferSize = inputBuffer.remaining();
                            if (bufferSize != rawInputBytes.length) {
                                rawInputBytes = new byte[bufferSize];
                            }
                            if (!readRawAudioEOS) {
                                readRawAudioCount = fisRawAudio.read(rawInputBytes);
                                if (readRawAudioCount == -1) {
                                    readRawAudioEOS = true;
                                }
                            }
                            if (readRawAudioEOS) {
                                audioEncoder.queueInputBuffer(inputBufIndex, 0, 0, 0, MediaCodec.BUFFER_FLAG_END_OF_STREAM);
                                sawInputEOS = true;
                            } else {
                                inputBuffer.put(rawInputBytes, 0, readRawAudioCount);
                                rawAudioSize += readRawAudioCount;
                                audioEncoder.queueInputBuffer(inputBufIndex, 0, readRawAudioCount, audioTimeUs, 0);
                                // Bytes so far / 2 channels / bytes per second per channel = elapsed seconds.
                                audioTimeUs = (long) (1000000 * (rawAudioSize / 2.0) / audioBytesPerSample);
                            }
                        }
                    }

                    // Drain one encoded frame, if available, and write it with an ADTS header.
                    outputBufIndex = audioEncoder.dequeueOutputBuffer(outBufferInfo, 10000);
                    if (outputBufIndex >= 0) {
                        // Simply ignore codec config buffers.
                        if ((outBufferInfo.flags & MediaCodec.BUFFER_FLAG_CODEC_CONFIG) != 0) {
                            DLog.i(TAG, "audio encoder: codec config buffer");
                            audioEncoder.releaseOutputBuffer(outputBufIndex, false);
                            continue;
                        }
                        if (outBufferInfo.size != 0) {
                            ByteBuffer outBuffer = audioOutputBuffers[outputBufIndex];
                            outBuffer.position(outBufferInfo.offset);
                            outBuffer.limit(outBufferInfo.offset + outBufferInfo.size);
                            DLog.i(TAG, String.format(" writing audio sample : size=%s , presentationTimeUs=%s",
                                    outBufferInfo.size, outBufferInfo.presentationTimeUs));
                            if (lastAudioPresentationTimeUs < outBufferInfo.presentationTimeUs) {
                                lastAudioPresentationTimeUs = outBufferInfo.presentationTimeUs;
                                int outBufSize = outBufferInfo.size;
                                int outPacketSize = outBufSize + 7; // 7-byte ADTS header + AAC frame
                                byte[] outData = new byte[outPacketSize];
                                addADTStoPacket(outData, outPacketSize);
                                outBuffer.get(outData, 7, outBufSize);
                                fosAacAudio.write(outData, 0, outData.length);
                                DLog.i(TAG, outData.length + " bytes written.");
                            } else {
                                DLog.e(TAG, "error sample! its presentationTimeUs should not lower than before.");
                            }
                        }
                        audioEncoder.releaseOutputBuffer(outputBufIndex, false);
                        if ((outBufferInfo.flags & MediaCodec.BUFFER_FLAG_END_OF_STREAM) != 0) {
                            sawOutputEOS = true;
                        }
                    } else if (outputBufIndex == MediaCodec.INFO_OUTPUT_BUFFERS_CHANGED) {
                        audioOutputBuffers = audioEncoder.getOutputBuffers();
                    } else if (outputBufIndex == MediaCodec.INFO_OUTPUT_FORMAT_CHANGED) {
                        MediaFormat audioFormat = audioEncoder.getOutputFormat();
                        DLog.i(TAG, "format change : " + audioFormat);
                    }
                }
            } catch (FileNotFoundException e) {
                e.printStackTrace();
            } catch (IOException e) {
                e.printStackTrace();
            } finally {
                // Release the codec as well as the streams.
                if (audioEncoder != null) {
                    audioEncoder.stop();
                    audioEncoder.release();
                }
                try {
                    if (fisRawAudio != null)
                        fisRawAudio.close();
                    if (fosAacAudio != null)
                        fosAacAudio.close();
                } catch (IOException e) {
                    e.printStackTrace();
                }
            }
        }

        private MediaCodec createAacAudioEncoder() throws IOException {
            MediaCodec codec = MediaCodec.createEncoderByType(AUDIO_MIME);
            MediaFormat format = new MediaFormat();
            format.setString(MediaFormat.KEY_MIME, AUDIO_MIME);
            format.setInteger(MediaFormat.KEY_BIT_RATE, 128000);
            format.setInteger(MediaFormat.KEY_CHANNEL_COUNT, 2);
            format.setInteger(MediaFormat.KEY_SAMPLE_RATE, 44100);
            format.setInteger(MediaFormat.KEY_AAC_PROFILE, MediaCodecInfo.CodecProfileLevel.AACObjectLC);
            codec.configure(format, null, null, MediaCodec.CONFIGURE_FLAG_ENCODE);
            return codec;
        }

        /**
         * Add ADTS header at the beginning of each and every AAC packet.
         * This is needed as MediaCodec encoder generates a packet of raw
         * AAC data.
         *
         * Note the packetLen must count in the ADTS header itself.
         **/
        private void addADTStoPacket(byte[] packet, int packetLen) {
            int profile = 2; // AAC LC
            // 39=MediaCodecInfo.CodecProfileLevel.AACObjectELD;
            int freqIdx = 4; // 44.1KHz
            int chanCfg = 2; // CPE

            // fill in ADTS data
            packet[0] = (byte) 0xFF;
            // 0xF9 = low syncword bits, version bit 1 (MPEG-2), layer 0, no CRC.
            // 0xF1 would set the version bit to 0 (MPEG-4) instead.
            packet[1] = (byte) 0xF9;
            packet[2] = (byte) (((profile - 1) << 6) + (freqIdx << 2) + (chanCfg >> 2));
            packet[3] = (byte) (((chanCfg & 3) << 6) + (packetLen >> 11));
            packet[4] = (byte) ((packetLen & 0x7FF) >> 3);
            packet[5] = (byte) (((packetLen & 7) << 5) + 0x1F);
            packet[6] = (byte) 0xFC;
        }
    }

References:

Digital audio: http://en.flossmanuals.net/pure-data/ch003_what-is-digital-audio/

WAV file format: http://soundfile.sapp.org/doc/WaveFormat/

AAC file format: http://www.cnblogs.com/caosiyang/archive/2012/07/16/2594029.html

CTS tests related to Android media programming: https://android.googlesource.com/platform/cts/+/jb-mr2-release/tests/tests/media/src/android/media/cts

Q&A on converting WAV to AAC: http://stackoverflow.com/questions/18862715/how-to-generate-the-aac-adts-elementary-stream-with-android-mediacodec