Please credit the source when reposting: http://carlosfu.iteye.com/blog/2240426

I. Symptom:

Our Redis private cloud offers three kinds of Redis service: redis-standalone, redis-sentinel, and redis-cluster.

The redis-cluster service runs Redis Cluster 3.0.2, and the client is jedis 2.7.2.

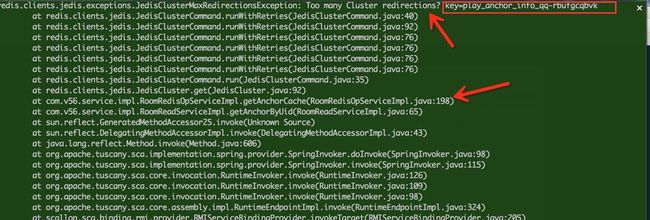

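While using it, some users found exceptions in their application logs (Too many Cluster redirections).

II. Jedis source code analysis:

First, locate this exception in the jedis source. It is thrown in the JedisClusterCommand class: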

    if (redirections <= 0) {
        throw new JedisClusterMaxRedirectionsException("Too many Cluster redirections? key=" + key);
    }

JedisCluster is the class jedis uses to talk to redis-cluster. Every API method in it follows the same pattern:

    public String set(final String key, final String value) {
        // Wrap the command so that routing and retries are handled in one place.
        return new JedisClusterCommand<String>(connectionHandler, maxRedirections) {
            @Override
            public String execute(Jedis connection) {
                return connection.set(key, value);
            }
        }.run(key);
    }

So the key logic lives in the JedisClusterCommand class. The important parts are:

    public T run(int keyCount, String... keys) {
        if (keys == null || keys.length == 0) {
            throw new JedisClusterException("No way to dispatch this command to Redis Cluster.");
        }

        if (keys.length > 1) {
            int slot = JedisClusterCRC16.getSlot(keys[0]);
            for (int i = 1; i < keyCount; i++) {
                int nextSlot = JedisClusterCRC16.getSlot(keys[i]);
                if (slot != nextSlot) {
                    throw new JedisClusterException("No way to dispatch this command to Redis Cluster "
                        + "because keys have different slots.");
                }
            }
        }

        return runWithRetries(SafeEncoder.encode(keys[0]), this.redirections, false, false);
    }

    private T runWithRetries(byte[] key, int redirections, boolean tryRandomNode, boolean asking) {
        if (redirections <= 0) {
            JedisClusterMaxRedirectionsException exception = new JedisClusterMaxRedirectionsException(
                "Too many Cluster redirections? key=" + SafeEncoder.encode(key));
            throw exception;
        }

        Jedis connection = null;
        try {
            if (asking) {
                // TODO: Pipeline asking with the original command to make it
                // faster....
                connection = askConnection.get();
                connection.asking();

                // if asking success, reset asking flag
                asking = false;
            } else {
                if (tryRandomNode) {
                    connection = connectionHandler.getConnection();
                } else {
                    connection = connectionHandler.getConnectionFromSlot(JedisClusterCRC16.getSlot(key));
                }
            }

            return execute(connection);
        } catch (JedisConnectionException jce) {
            if (tryRandomNode) {
                // maybe all connection is down
                throw jce;
            }

            // release current connection before recursion
            releaseConnection(connection);
            connection = null;

            // retry with random connection
            return runWithRetries(key, redirections - 1, true, asking);
        } catch (JedisRedirectionException jre) {
            // if MOVED redirection occurred,
            if (jre instanceof JedisMovedDataException) {
                // it rebuilds cluster's slot cache
                // recommended by Redis cluster specification
                this.connectionHandler.renewSlotCache(connection);
            }

            // release current connection before recursion or renewing
            releaseConnection(connection);
            connection = null;

            if (jre instanceof JedisAskDataException) {
                asking = true;
                askConnection.set(this.connectionHandler.getConnectionFromNode(jre.getTargetNode()));
            } else if (jre instanceof JedisMovedDataException) {
            } else {
                throw new JedisClusterException(jre);
            }

            return runWithRetries(key, redirections - 1, false, asking);
        } finally {
            releaseConnection(connection);
        }
    }
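The slot check in run() depends on how a key maps to a slot. As a rough, stdlib-only sketch of what JedisClusterCRC16.getSlot is expected to compute under the Redis Cluster key distribution rules (CRC16/XMODEM of the key, modulo 16384, with `{...}` hash tags): the class name SlotSketch and its method names are made up for illustration and are not part of jedis.

```java
import java.nio.charset.StandardCharsets;

public class SlotSketch {
    // CRC16 (XMODEM variant): polynomial 0x1021, initial value 0, no bit reflection.
    static int crc16(byte[] data) {
        int crc = 0;
        for (byte b : data) {
            crc ^= (b & 0xFF) << 8;
            for (int i = 0; i < 8; i++) {
                crc = ((crc & 0x8000) != 0) ? ((crc << 1) ^ 0x1021) : (crc << 1);
                crc &= 0xFFFF; // keep only the low 16 bits
            }
        }
        return crc;
    }

    // Hash only the text between the first '{' and the next '}' when that text is non-empty;
    // otherwise hash the whole key. 16384 is the number of slots in a Redis Cluster.
    static int slot(String key) {
        int open = key.indexOf('{');
        if (open >= 0) {
            int close = key.indexOf('}', open + 1);
            if (close > open + 1) {
                key = key.substring(open + 1, close);
            }
        }
        return crc16(key.getBytes(StandardCharsets.UTF_8)) % 16384;
    }

    public static void main(String[] args) {
        // Two unrelated keys usually land in different slots.
        System.out.println(slot("foo") + " vs " + slot("bar"));
        // Keys sharing a hash tag always land in the same slot.
        System.out.println(slot("{user1000}.following") == slot("{user1000}.followers"));
    }
}
```

Keys that share a hash tag pass the same-slot check in run(), which is one common way to make multi-key commands usable on a cluster.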

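Code explanation:

1. Every call such as jedis.set is wrapped in a JedisClusterCommand (template method pattern).

2. If a command operates on several keys that hash to different slots, the following exception is thrown, because redis-cluster only supports multi-key operations when all the keys live in the same slot (there are workarounds, which a later post will cover):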

    throw new JedisClusterException("No way to dispatch this command to Redis Cluster because keys have different slots.");

3. The parameters of runWithRetries:

    private T runWithRetries(byte[] key, int redirections, boolean tryRandomNode, boolean asking) {

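(1) key: the key being operated on.

(2) redirections: the number of node redirections still allowed (in practice, the remaining retry count).

(3) tryRandomNode: whether to pick a random node of the redis cluster for the operation.

(4) asking: whether an ASK redirection has occurred.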

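4. Logic:

Normal path:

(1) asking = true: take the Jedis connection recorded for the ASK target, send ASKING, and run the command on it.

(2) tryRandomNode = true: get an available Jedis from the connection pool of a random node and run the command on it.

(3) Neither: map key -> slot -> Jedis to find the connection for the node that owns the key, and run the command on it.

Exception path:

(1) JedisConnectionException: the connection failed (dropped, timed out, and so on). If this attempt was already on a random node, the exception is rethrown; otherwise the function calls itself recursively with tryRandomNode = true.

(2) JedisRedirectionException comes in two kinds:

---JedisMovedDataException: the slot has moved to another node, so the local slot-to-node Map is renewed.

---JedisAskDataException: the slot's data is being migrated, so an ASK redirection occurred; get a Jedis for the target node and retry there.

This process recurses at most redirections times.

Meaning of the exception: after redirections attempts (as described above), the redis operation still has not completed.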
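To make the retry budget concrete, here is a small stdlib-only model of the control flow described above. It is a simplified sketch, not jedis code: the class RetryBudgetSketch, the Op interface, and the three exception types are hypothetical stand-ins for JedisConnectionException, JedisRedirectionException, and JedisClusterMaxRedirectionsException, and the asking flag is left out.

```java
public class RetryBudgetSketch {
    // Hypothetical stand-ins for the jedis exception types.
    static class ConnectionProblem extends RuntimeException {}
    static class Redirected extends RuntimeException {}
    static class TooManyRedirections extends RuntimeException {
        TooManyRedirections(String message) { super(message); }
    }

    // One attempt at the command; the flag says whether a random node is being used.
    interface Op {
        String call(boolean onRandomNode);
    }

    static String runWithRetries(Op op, int redirections, boolean tryRandomNode) {
        if (redirections <= 0) {
            throw new TooManyRedirections("Too many Cluster redirections?");
        }
        try {
            return op.call(tryRandomNode);
        } catch (ConnectionProblem e) {
            if (tryRandomNode) {
                throw e; // a random node also failed: give up immediately
            }
            return runWithRetries(op, redirections - 1, true);
        } catch (Redirected e) {
            return runWithRetries(op, redirections - 1, false);
        }
    }

    // Counts how many attempts are made when every attempt is redirected.
    static int attemptsUntilGiveUp(int maxRedirections) {
        int[] attempts = {0};
        try {
            runWithRetries(onRandomNode -> {
                attempts[0]++;
                throw new Redirected();
            }, maxRedirections, false);
        } catch (TooManyRedirections expected) {
            // the budget is exhausted, which is the case the article's exception reports
        }
        return attempts[0];
    }

    // Redirects the first `failures` attempts, then succeeds.
    static String succeedAfter(int failures, int maxRedirections) {
        int[] calls = {0};
        return runWithRetries(onRandomNode -> {
            if (calls[0]++ < failures) {
                throw new Redirected();
            }
            return "OK";
        }, maxRedirections, false);
    }

    public static void main(String[] args) {
        System.out.println(attemptsUntilGiveUp(5)); // prints 5
        System.out.println(succeedAfter(2, 5));     // prints OK
    }
}
```

In this model the budget counts attempts of any kind, so a mix of timeouts and redirections drains the same counter, which matches how a run of timeouts alone can surface as "Too many Cluster redirections".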

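III. Possible causes:

1. Frequent timeouts. The default timeout is 2 seconds.

(1). Network issues: for example cross-datacenter access, network cutovers, and so on.

(2). Slow queries: Redis executes commands on a single thread, so a slow query blocks every operation queued behind it.

(3). Values that are too large?

(4). An AOF rewrite or RDB fork in progress?

2. The relationship between nodes changed, which raises JedisMovedDataException.

3. Data is being migrated between nodes, which raises JedisAskDataException.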
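Causes 2 and 3 reach the client as Redis error replies of the form `MOVED <slot> <host>:<port>` and `ASK <slot> <host>:<port>`. When checking logs or packet captures to tell the two apart, a small parser like the one below may help. It is only an illustrative sketch: RedirectReplySketch and Redirect are made-up names, and this is not how jedis itself parses the replies.

```java
public class RedirectReplySketch {
    // Parsed form of a cluster redirection error (hypothetical helper type).
    static final class Redirect {
        final boolean ask; // true for ASK, false for MOVED
        final int slot;
        final String host;
        final int port;

        Redirect(boolean ask, int slot, String host, int port) {
            this.ask = ask;
            this.slot = slot;
            this.host = host;
            this.port = port;
        }
    }

    // Returns null when the message is not a MOVED/ASK redirection.
    // A malformed slot or port would raise NumberFormatException; this sketch does not handle that.
    static Redirect parse(String errorMessage) {
        String[] parts = errorMessage.trim().split(" ");
        if (parts.length != 3) {
            return null;
        }
        boolean moved = parts[0].equals("MOVED");
        boolean ask = parts[0].equals("ASK");
        if (!moved && !ask) {
            return null;
        }
        int colon = parts[2].lastIndexOf(':'); // last colon separates host from port
        if (colon < 0) {
            return null;
        }
        return new Redirect(
            ask,
            Integer.parseInt(parts[1]),
            parts[2].substring(0, colon),
            Integer.parseInt(parts[2].substring(colon + 1)));
    }

    public static void main(String[] args) {
        Redirect r = parse("MOVED 3999 127.0.0.1:6381");
        System.out.println((r.ask ? "ASK" : "MOVED") + " slot=" + r.slot + " -> " + r.host + ":" + r.port);
    }
}
```

A steady stream of MOVED replies for the same slots would point at stale slot mappings, while ASK replies would point at a migration in progress.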