TensorFlow深度学习框架搭建

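Like Keras, TensorFlow is a popular framework for machine learning and deep learning. Developers only need to focus on designing the model, which greatly improves development efficiency. TensorFlow also ships with a rich set of built-in models that can be applied directly in practice, and its bundled web UI lets us watch a model's running state in real time.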

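1 Installation and testing

Environment: Windows 7 + Python 3.x, with internet access

Installation:

Note: on Windows 7, TensorFlow only runs under Python 3.x; it cannot run under Python 2.x.

Open a CMD window and run: python -m pip install tensorflow

After a long wait, the installation succeeded!

CUDA was not installed this time, so TensorFlow runs all computation on the local CPU.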
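The note above says TensorFlow on Windows needs Python 3.x, and this install is CPU-only. Below is a minimal sketch for checking the environment before or after `pip install`, assuming Python 3.8+ (for `importlib.metadata`); on the Python 3.6 shown in this post, `import tensorflow as tf; print(tf.__version__)` reports the installed version instead. The 64-bit check is included because TensorFlow's Windows pip packages are built for 64-bit Python only. `check_environment` is a hypothetical helper name.

```python
import importlib.metadata
import platform
import struct
import sys


def check_environment():
    """Collect the facts that matter for a TensorFlow install (hypothetical helper)."""
    info = {
        "python_version": platform.python_version(),
        # TensorFlow on Windows requires Python 3.
        "is_python3": sys.version_info.major == 3,
        # Pointer size in bits: 64 on a 64-bit interpreter, 32 otherwise.
        "bits": struct.calcsize("P") * 8,
    }
    try:
        # Version string recorded by pip for the installed package.
        info["tensorflow"] = importlib.metadata.version("tensorflow")
    except importlib.metadata.PackageNotFoundError:
        # Not installed (yet).
        info["tensorflow"] = None
    return info


if __name__ == "__main__":
    for key, value in check_environment().items():
        print(f"{key}: {value}")
```

If `bits` prints 32, pip will not find a TensorFlow wheel for that interpreter, and installing a 64-bit Python is the usual fix.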

测试:

Python 3.6.3 shell (this session uses the TensorFlow 1.x API; in TensorFlow 2.x, `tf.Session` is only available as `tf.compat.v1.Session`):

```
Python 3.6.3 (v3.6.3:2c5fed8, Oct 3 2017, 18:11:49) [MSC v.1900 64 bit (AMD64)] on win32
Type "copyright", "credits" or "license()" for more information.
>>> import tensorflow as tf
>>> hello = tf.constant('Hello, TensorFlow!')
>>> sess = tf.Session()
>>> print(sess.run(hello))
b'Hello, TensorFlow!'
>>> a=tf.constant(10)
>>> b=tf.constant(10)
>>> print(sess.run(a+b))
20
>>>
```

2 Learning to use TensorFlow

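Here are two tutorials for now; I will add my own learning notes later:

Recommended tutorial: https://github.com/nlintz/TensorFlow-Tutorials

Official tutorial (Chinese translation): http://www.tensorfly.cn/tfdoc/tutorials/overview.html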

3 TensorBoard

TensorBoard is the web UI of the TensorFlow framework and can monitor a model's running state in real time.

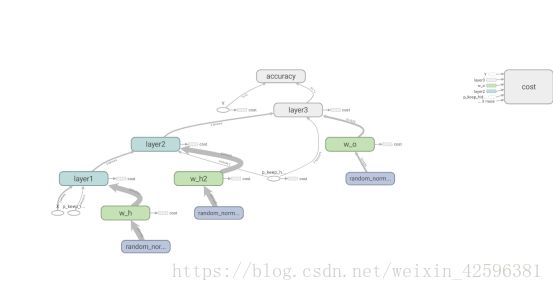

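Set up TensorBoard to visualize deep learning graphs. TensorBoard displays your TensorFlow graph, plots quantitative metrics generated while the graph runs, and shows additional data, so you can monitor the state of model training.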

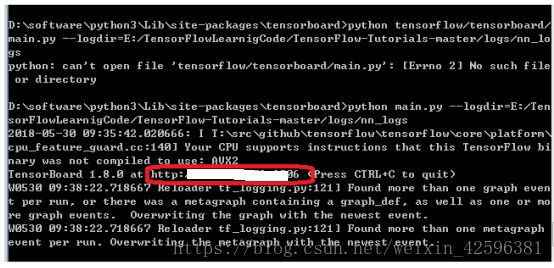

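Go to the directory: python3\Lib\site-packages\tensorboard

From this directory, run: python main.py --logdir=path/to/log-directory (replace path/to/log-directory with the location of your own training logs)

Set --logdir to the directory that holds the log files generated during training. Once the interface shown in the screenshot appears, enter the address in the red box into your browser to open TensorBoard.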
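Besides running `main.py` from the package directory, pip also installs a `tensorboard` command into Python's Scripts directory, which is what the training script's own comment (`tensorboard --logdir=./logs/nn_logs`) uses. A minimal sketch of launching it from Python, assuming that command is on PATH; `tensorboard_command` and `launch_tensorboard` are hypothetical helper names, while `--logdir` and `--port` are real TensorBoard flags (6006 is the default port).

```python
import subprocess


def tensorboard_command(logdir, port=6006):
    """Build the argument list that starts TensorBoard on a log directory."""
    # --logdir points at the FileWriter output; --port selects the HTTP port.
    return ["tensorboard", f"--logdir={logdir}", f"--port={port}"]


def launch_tensorboard(logdir, port=6006):
    """Start TensorBoard in the background and return the process handle."""
    cmd = tensorboard_command(logdir, port)
    print("Running:", " ".join(cmd))
    print(f"Then open http://localhost:{port} in a browser.")
    return subprocess.Popen(cmd)
```

Usage: `proc = launch_tensorboard("./logs/nn_logs")` while the script trains, then `proc.terminate()` to stop the server.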

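Run the Python script below in PyCharm: the training results appear in the console, and meanwhile we open TensorBoard.

The script trains a neural network model written by an expert, taken from https://github.com/nlintz/TensorFlow-Tutorials

The code is concise and clear, and well worth studying!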

```python
#!/usr/bin/env python
# Written for the TensorFlow 1.x API (tf.placeholder, tf.Session, tf.summary.FileWriter).
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data


def init_weights(shape, name):
    return tf.Variable(tf.random_normal(shape, stddev=0.01), name=name)


# This network is the same as the previous one except with an extra hidden layer + dropout
def model(X, w_h, w_h2, w_o, p_keep_input, p_keep_hidden):
    # Add layer name scopes for better graph visualization
    with tf.name_scope("layer1"):
        X = tf.nn.dropout(X, p_keep_input)
        h = tf.nn.relu(tf.matmul(X, w_h))
    with tf.name_scope("layer2"):
        h = tf.nn.dropout(h, p_keep_hidden)
        h2 = tf.nn.relu(tf.matmul(h, w_h2))
    with tf.name_scope("layer3"):
        h2 = tf.nn.dropout(h2, p_keep_hidden)
        return tf.matmul(h2, w_o)


mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)
trX, trY, teX, teY = mnist.train.images, mnist.train.labels, mnist.test.images, mnist.test.labels

X = tf.placeholder("float", [None, 784], name="X")
Y = tf.placeholder("float", [None, 10], name="Y")

w_h = init_weights([784, 625], "w_h")
w_h2 = init_weights([625, 625], "w_h2")
w_o = init_weights([625, 10], "w_o")

# Add histogram summaries for weights
tf.summary.histogram("w_h_summ", w_h)
tf.summary.histogram("w_h2_summ", w_h2)
tf.summary.histogram("w_o_summ", w_o)

p_keep_input = tf.placeholder("float", name="p_keep_input")
p_keep_hidden = tf.placeholder("float", name="p_keep_hidden")
py_x = model(X, w_h, w_h2, w_o, p_keep_input, p_keep_hidden)

with tf.name_scope("cost"):
    cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=py_x, labels=Y))
    train_op = tf.train.RMSPropOptimizer(0.001, 0.9).minimize(cost)
    # Add scalar summary for cost
    tf.summary.scalar("cost", cost)

with tf.name_scope("accuracy"):
    correct_pred = tf.equal(tf.argmax(Y, 1), tf.argmax(py_x, 1))  # Count correct predictions
    acc_op = tf.reduce_mean(tf.cast(correct_pred, "float"))  # Cast boolean to float to average
    # Add scalar summary for accuracy
    tf.summary.scalar("accuracy", acc_op)

with tf.Session() as sess:
    # create a log writer. run 'tensorboard --logdir=./logs/nn_logs'
    writer = tf.summary.FileWriter("./logs/nn_logs", sess.graph)  # for 1.0
    merged = tf.summary.merge_all()

    # you need to initialize all variables
    tf.global_variables_initializer().run()

    for i in range(100):
        # Train on mini-batches of 128 samples with dropout active
        for start, end in zip(range(0, len(trX), 128), range(128, len(trX) + 1, 128)):
            sess.run(train_op, feed_dict={X: trX[start:end], Y: trY[start:end],
                                          p_keep_input: 0.8, p_keep_hidden: 0.5})
        # Evaluate on the full test set with dropout disabled (keep probability 1.0)
        summary, acc = sess.run([merged, acc_op], feed_dict={X: teX, Y: teY,
                                                             p_keep_input: 1.0, p_keep_hidden: 1.0})
        writer.add_summary(summary, i)  # Write summary
        print(i, acc)  # Report the accuracy

    writer.close()
```

In the script, the following call writes the graph and all the summaries recorded during training into ./logs/nn_logs. Note this log path, because TensorBoard has to be started on it:

```python
writer = tf.summary.FileWriter("./logs/nn_logs", sess.graph)
```
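The inner training loop slices mini-batches with `zip(range(0, len(trX), 128), range(128, len(trX) + 1, 128))`. Because `zip` stops at the shorter range, only full batches of 128 are produced and any final partial batch is skipped. A small pure-Python sketch that reproduces those `(start, end)` pairs; `batch_bounds` is a hypothetical name, and the 55000 figure assumes `read_data_sets`' default split, which holds out 5000 of the 60000 MNIST training images for validation.

```python
def batch_bounds(n_samples, batch_size=128):
    """Reproduce the (start, end) pairs the training loop iterates over."""
    starts = range(0, n_samples, batch_size)
    ends = range(batch_size, n_samples + 1, batch_size)
    # zip stops at the shorter range, so only full batches are produced.
    return list(zip(starts, ends))


if __name__ == "__main__":
    n_train = 55000  # size of mnist.train with the default validation split
    bounds = batch_bounds(n_train)
    print("batches per epoch:", len(bounds))                  # 429
    print("last batch:", bounds[-1])                          # (54784, 54912)
    print("samples skipped per epoch:", n_train - bounds[-1][1])  # 88
```

Since `trX` is used in a fixed order, the same 88 images are skipped in every epoch; with 55000 samples that hardly matters, but it is worth knowing when reusing this loop on a small dataset.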

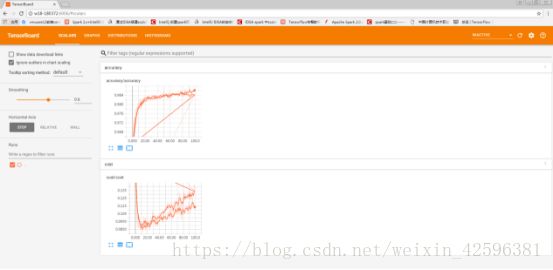

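In this screenshot, the model was trained several times and every run was written to the same log directory, which is why TensorBoard draws this odd-looking training curve. In practice, each model (each training run) should have its own log directory, and the logs should be cleaned up or renamed once training is finished.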
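One way to follow the one-log-per-run advice is to give every run its own timestamped subdirectory. A minimal sketch, assuming you are free to change the path passed to `tf.summary.FileWriter`; `run_logdir` is a hypothetical helper, not part of TensorFlow.

```python
import os
from datetime import datetime


def run_logdir(root="./logs", prefix="nn_logs", now=None):
    """Build a per-run log directory path, e.g. ./logs/nn_logs_20180102-030405."""
    now = now or datetime.now()
    # Timestamp to the second; two runs started within the same second would collide.
    return os.path.join(root, "{}_{}".format(prefix, now.strftime("%Y%m%d-%H%M%S")))


if __name__ == "__main__":
    logdir = run_logdir()
    print(logdir)
    # Hypothetical change to the training script:
    # writer = tf.summary.FileWriter(logdir, sess.graph)
```

Pointing TensorBoard at the parent directory (`--logdir=./logs`) then shows each subdirectory as a separate run instead of one tangled curve.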

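Example:

The script above was run once, with its logs recorded in nn_log1. The console output below records the training process: at epoch 99 (the last of the 100 epochs, counted from 0), the classification accuracy on the test set is 98.47%.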

```
0 0.9357
1 0.9632
2 0.9688
3 0.9751
...
90 0.9859
91 0.9856
92 0.9848
93 0.9852
94 0.9849
95 0.9843
96 0.9854
97 0.9839
98 0.985
99 0.9847
```
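The log is just `epoch accuracy` pairs, so it is easy to summarize. A small sketch that parses the lines shown above (the elided epochs 4 to 89 are not available, so "best" here means best among the shown epochs only); `parse_log` is a hypothetical name.

```python
LOG = """\
0 0.9357
1 0.9632
2 0.9688
3 0.9751
...
90 0.9859
91 0.9856
92 0.9848
93 0.9852
94 0.9849
95 0.9843
96 0.9854
97 0.9839
98 0.985
99 0.9847
"""


def parse_log(text):
    """Turn 'epoch accuracy' lines into a list of (epoch, accuracy) tuples."""
    records = []
    for line in text.splitlines():
        parts = line.split()
        if len(parts) == 2:  # skips blank lines and the '...' elision
            records.append((int(parts[0]), float(parts[1])))
    return records


if __name__ == "__main__":
    records = parse_log(LOG)
    last_epoch, last_acc = records[-1]
    best_epoch, best_acc = max(records, key=lambda r: r[1])
    print(f"final: epoch {last_epoch}, accuracy {last_acc:.2%}")         # 98.47%
    print(f"best shown: epoch {best_epoch}, accuracy {best_acc:.2%}")    # 98.59% at epoch 90
```

Over the last ten epochs the test accuracy only moves between 98.39% and 98.59%, so the final epoch is not necessarily the best one; this is the plateau the acc curve in TensorBoard makes visible.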

4 Model visualization

Training process

Accuracy (acc) record
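The acc curve comes from the `accuracy` scalar summary, i.e. `acc_op` in the script: `reduce_mean(cast(equal(argmax(Y, 1), argmax(py_x, 1)), "float"))`. A pure-Python sketch of the same computation on made-up toy data (`argmax` and `accuracy` here are hypothetical helpers, not TensorFlow functions). Comparing argmax over raw logits is enough because softmax is monotonic and never changes which class scores highest.

```python
def argmax(values):
    """Index of the largest value in a sequence."""
    return max(range(len(values)), key=lambda i: values[i])


def accuracy(labels_one_hot, logits):
    """Fraction of rows whose predicted class matches the true class."""
    correct = [argmax(y) == argmax(z) for y, z in zip(labels_one_hot, logits)]
    # Booleans sum as 0/1, like cast(..., "float") followed by reduce_mean.
    return sum(correct) / len(correct)


# Toy data: 4 samples, 3 classes (illustrative values only).
Y = [[0, 1, 0], [1, 0, 0], [0, 0, 1], [0, 1, 0]]
logits = [[0.1, 2.0, -1.0],   # predicts 1, correct
          [0.3, 0.2, 0.1],    # predicts 0, correct
          [1.5, 0.0, 0.4],    # predicts 0, wrong (true class 2)
          [-0.5, 0.9, 0.8]]   # predicts 1, correct
print(accuracy(Y, logits))  # 0.75
```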