Installing K8s with kubeadm

Initializing the master

Initialize Kubernetes and pin the version to v1.17.0.

kubeadm init \
  --image-repository registry.cn-hangzhou.aliyuncs.com/google_containers \
  --kubernetes-version v1.17.0 \
  --pod-network-cidr=10.244.0.0/16

After the images have been downloaded, check them with docker images, then tag them:

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.17.0 k8s.gcr.io/kube-proxy:v1.17.0

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.17.0 k8s.gcr.io/kube-controller-manager:v1.17.0

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.17.0 k8s.gcr.io/kube-apiserver:v1.17.0

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.17.0 k8s.gcr.io/kube-scheduler:v1.17.0

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.6.5 k8s.gcr.io/coredns:1.6.5

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.4.3-0 k8s.gcr.io/etcd:3.4.3-0

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1 k8s.gcr.io/pause:3.1

Running kubeadm init again at this point fails with:

The connection to the server :6443 was refused - did you specify the right host or port?

Running kubeadm reset clears this; kubeadm init can then be run again.
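The docker tag commands earlier in this section all follow one pattern, so they can be generated in a loop. The sketch below assumes the image list used in this walkthrough; `retag_commands` is a hypothetical helper name, and it only prints the commands so they can be reviewed before being run.

```shell
# Source and target registries, taken from the docker tag commands in this post.
SRC=registry.cn-hangzhou.aliyuncs.com/google_containers
DST=k8s.gcr.io

# Images used in this walkthrough for Kubernetes v1.17.0.
IMAGES="kube-proxy:v1.17.0 kube-controller-manager:v1.17.0 kube-apiserver:v1.17.0 kube-scheduler:v1.17.0 coredns:1.6.5 etcd:3.4.3-0 pause:3.1"

# Hypothetical helper: prints one "docker tag" command per image.
retag_commands() {
  for image in $IMAGES; do
    printf 'docker tag %s/%s %s/%s\n' "$SRC" "$image" "$DST" "$image"
  done
}

retag_commands            # review the generated commands first
# retag_commands | sh     # then execute them
```

Printing instead of executing keeps the sketch safe to try on a machine without Docker.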

W1215 21:37:47.571117 2663 validation.go:28] Cannot validate kube-proxy config - no validator is available

W1215 21:37:47.571186 2663 validation.go:28] Cannot validate kubelet config - no validator is available

[init] Using Kubernetes version: v1.17.0

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [localhost.localdomain kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.125.58]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost.localdomain localhost] and IPs [192.168.125.58 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost.localdomain localhost] and IPs [192.168.125.58 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

W1215 21:37:55.230428 2663 manifests.go:214] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[control-plane] Creating static Pod manifest for "kube-scheduler"

W1215 21:37:55.233799 2663 manifests.go:214] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 29.729964 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet-check] Initial timeout of 40s passed.

[kubelet] Creating a ConfigMap "kubelet-config-1.17" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node localhost.localdomain as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node localhost.localdomain as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: gvn5ya.2bh36o4adqp5rrni

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.125.58:6443 --token mu4mmq.pdfqfe6pms0m98gd \
    --discovery-token-ca-cert-hash sha256:ce2afa1b29a373970f93bc62c3ae213e95d45e88effa0f3bbbdf7a312c24ea22

Configuring the KUBECONFIG environment variable

cp -f /etc/kubernetes/admin.conf $HOME/

chown $(id -u):$(id -g) $HOME/admin.conf

export KUBECONFIG=$HOME/admin.conf

echo "export KUBECONFIG=$HOME/admin.conf" >> ~/.bash_profile

Allowing the master to run pods

kubectl taint nodes --all node-role.kubernetes.io/master-

Adding nodes
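A join command is made of three parts: the API server endpoint, a bootstrap token, and the SHA-256 hash of the cluster CA public key. The sketch below assembles those parts and checks the token shape (six characters, a dot, sixteen characters, lowercase letters and digits). `is_bootstrap_token` and `build_join_command` are hypothetical helper names, not part of kubeadm.

```shell
# Hypothetical helper: succeeds if $1 has the kubeadm bootstrap-token shape,
# i.e. 6 characters, a dot, then 16 characters, all lowercase letters or digits.
is_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Hypothetical helper: prints a join command from endpoint ($1), token ($2)
# and CA certificate hash ($3); fails if the token is malformed.
build_join_command() {
  if ! is_bootstrap_token "$2"; then
    echo "invalid bootstrap token: $2" >&2
    return 1
  fi
  printf 'kubeadm join %s --token %s --discovery-token-ca-cert-hash sha256:%s\n' "$1" "$2" "$3"
}

# Values copied from the join command shown in this walkthrough.
build_join_command 192.168.125.58:6443 mu4mmq.pdfqfe6pms0m98gd \
  ce2afa1b29a373970f93bc62c3ae213e95d45e88effa0f3bbbdf7a312c24ea22

# On a live master, a fresh command can be printed directly instead:
#   kubeadm token create --print-join-command
```

Bootstrap tokens expire (24 hours by default), so if a join is attempted later than that, generating a new command on the master is usually the simpler route.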

Run the following command on each worker host:

kubeadm join 192.168.125.58:6443 --token mu4mmq.pdfqfe6pms0m98gd \
    --discovery-token-ca-cert-hash sha256:ce2afa1b29a373970f93bc62c3ae213e95d45e88effa0f3bbbdf7a312c24ea22

Checking pod status

[root@localhost ~]# kubectl get pod -n kube-system
NAME                                            READY   STATUS             RESTARTS   AGE
coredns-6955765f44-d9mtm                        1/1     Running            2          2d1h
coredns-6955765f44-tctz9                        1/1     Running            2          2d1h
etcd-localhost.localdomain                      1/1     Running            3          2d1h
kube-apiserver-localhost.localdomain            1/1     Running            3          2d1h
kube-controller-manager-localhost.localdomain   1/1     Running            42         2d1h
kube-flannel-ds-amd64-mxk57                     1/1     Running            2          25h
kube-flannel-ds-amd64-rd9gj                     0/1     Pending            0          25h
kube-flannel-ds-amd64-w6jhv                     0/1     Pending            0          25h
kube-proxy-j6429                                0/1     ImagePullBackOff   0          2d1h
kube-proxy-tnhh8                                0/1     ImagePullBackOff   0          2d1h
kube-proxy-zw4pq                                1/1     Running            3          2d1h
kube-scheduler-localhost.localdomain            1/1     Running            41         2d1h

Installing flannel

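First, check the current nodes:

[root@localhost ~]# kubectl get node
NAME                    STATUS     ROLES    AGE   VERSION
host1                   NotReady   <none>   23h   v1.17.0
host2                   NotReady   <none>   23h   v1.17.0
localhost.localdomain   NotReady   master   24h   v1.17.0

Because Kubernetes images cannot be pulled directly from within mainland China, flannel is set up as follows: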

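kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

Alternatively, fetch the same manifest with curl and pipe it to kubectl (only one of the two commands is needed):

curl -sSL "https://github.com/coreos/flannel/blob/master/Documentation/kube-flannel.yml?raw=true" | kubectl create -f -

Check the nodes again. Once the master node shows Ready, flannel has been installed successfully.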
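The readiness check can also be scripted rather than read off the table by eye. A minimal sketch, assuming the default column layout of kubectl get node (name in the first column, status in the second); `not_ready_nodes` is a hypothetical helper name.

```shell
# Hypothetical helper: reads "kubectl get node" output on stdin and prints
# the names of nodes whose STATUS column is not exactly "Ready".
# The header line (NR == 1) is skipped.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Against a live cluster:
#   kubectl get node | not_ready_nodes
```

A compound status such as "Ready,SchedulingDisabled" would also be reported by this filter, which may or may not be what is wanted.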

[root@localhost ~]# kubectl get node
NAME                    STATUS     ROLES    AGE   VERSION
host1                   NotReady   <none>   23h   v1.17.0
host2                   NotReady   <none>   23h   v1.17.0
localhost.localdomain   Ready      master   24h   v1.17.0

Installing the dashboard

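Run the following command:

kubectl apply -f http://mirror.faasx.com/kubernetes/dashboard/master/src/deploy/recommended/kubernetes-dashboard.yaml

This fails with:

error: unable to recognize "http://mirror.faasx.com/kubernetes/dashboard/master/src/deploy/recommended/kubernetes-dashboard.yaml": no matches for kind "Deployment" in version "apps/v1beta2"

A web search suggested changing apps/v1beta2 to apps/v1 (that is, dropping the "beta2" suffix), since the apps/v1beta2 API was removed in Kubernetes 1.16. In practice this works: download kubernetes-dashboard.yaml, edit it locally, and apply the local copy.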
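The apiVersion fix can be applied with sed instead of a manual edit. This is a minimal sketch: `fix_api_version` is a hypothetical helper name, and it only touches lines whose value is exactly apps/v1beta2.

```shell
# Hypothetical helper: reads a manifest on stdin and rewrites any
# "apiVersion: apps/v1beta2" line to "apiVersion: apps/v1" on stdout.
# Leading indentation is preserved; other apiVersion values are untouched.
fix_api_version() {
  sed 's#^\([[:space:]]*apiVersion:[[:space:]]*\)apps/v1beta2[[:space:]]*$#\1apps/v1#'
}

# Example on an inline fragment (not the full dashboard manifest):
printf 'apiVersion: apps/v1beta2\nkind: Deployment\n' | fix_api_version

# Against the real manifest, with MANIFEST_URL set to the URL used above:
#   curl -sSL "$MANIFEST_URL" | fix_api_version > kubernetes-dashboard.yaml
#   kubectl apply -f kubernetes-dashboard.yaml
```

Writing the result to a local file first makes it possible to inspect the manifest before applying it.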

[root@localhost ~]# kubectl get pod -n kube-system
NAME                                            READY   STATUS             RESTARTS   AGE
coredns-6955765f44-d9mtm                        1/1     Running            2          2d1h
coredns-6955765f44-tctz9                        1/1     Running            2          2d1h
etcd-localhost.localdomain                      1/1     Running            3          2d1h
kube-apiserver-localhost.localdomain            1/1     Running            3          2d1h
kube-controller-manager-localhost.localdomain   1/1     Running            42         2d1h
kube-flannel-ds-amd64-mxk57                     1/1     Running            2          25h
kube-flannel-ds-amd64-rd9gj                     0/1     Pending            0          25h
kube-flannel-ds-amd64-w6jhv                     0/1     Pending            0          25h
kube-proxy-j6429                                0/1     ImagePullBackOff   0          2d1h
kube-proxy-tnhh8                                0/1     ImagePullBackOff   0          2d1h
kube-proxy-zw4pq                                1/1     Running            3          2d1h
kube-scheduler-localhost.localdomain            1/1     Running            41         2d1h
kubernetes-dashboard-6ddc5f4f97-4f6sb           1/1     Running            2          23h

Once the container is running, the service needs to be exposed.

[root@localhost ~]# kubectl get svc -n kube-system
NAME                   TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)                  AGE
kube-dns               ClusterIP   10.96.0.10     <none>        53/UDP,53/TCP,9153/TCP   2d1h
kubernetes-dashboard   NodePort    10.96.65.147   <none>        443/TCP                  24h

For easier access, we expose kubernetes-dashboard through a NodePort:

[root@localhost ~]# kubectl edit svc kubernetes-dashboard -n kube-system
# Please edit the object below. Lines beginning with a '#' will be ignored,
# and an empty file will abort the edit. If an error occurs while saving this file will be
# reopened with the relevant failures.
#
apiVersion: v1
kind: Service
metadata:
  annotations:
    kubectl.kubernetes.io/last-applied-configuration: |
      {"apiVersion":"v1","kind":"Service","metadata":{"annotations":{},"labels":{"k8s-app":"kubernetes-dashboard"},"name":"kubernetes-dashboard","namespace":"kube-system"},"spec":{"ports":[{"port":443,"targetPort":8443}],"selector":{"k8s-app":"kubernetes-dashboard"}}}
  creationTimestamp: "2019-12-16T15:22:02Z"
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
  resourceVersion: "30670"
  selfLink: /api/v1/namespaces/kube-system/services/kubernetes-dashboard
  uid: dd167a41-b2b0-4c32-a727-8fbf41fe32be
spec:
  clusterIP: 10.96.65.147
  externalTrafficPolicy: Cluster
  ports:
  - nodePort: 31307
    port: 443
    protocol: TCP
    targetPort: 8443
  selector:
    k8s-app: kubernetes-dashboard
  sessionAffinity: None
  type: NodePort
status:
  loadBalancer: {}

Check the Service again; the externally exposed port is now visible:

[root@localhost ~]# kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-dns ClusterIP 10.96.0.10 53/UDP,53/TCP,9153/TCP 2d1h

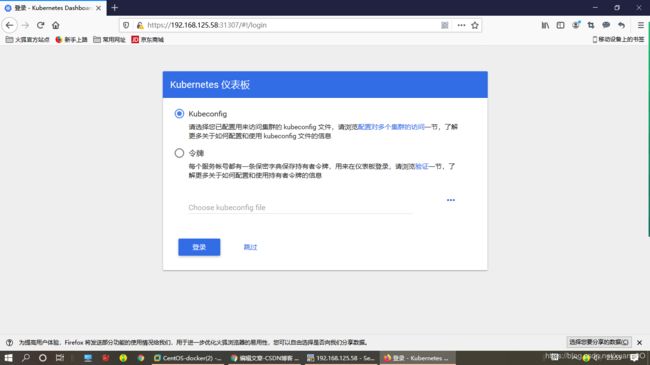

kubernetes-dashboard NodePort 10.96.65.147 443:31307/TCP 24h 如图所示,我们进入登录页面:
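A PORT(S) value of 443:31307/TCP means that Service port 443 is reachable on port 31307 of each node. The sketch below turns that value into a browser URL. `node_port_of` and `dashboard_url` are hypothetical helper names, and using 192.168.125.58 as the node address is an assumption based on the kubeadm init output earlier in this post. The https scheme is used because the Service targets the dashboard's port 8443.

```shell
# Hypothetical helper: extracts the node port from a PORT(S) value
# such as "443:31307/TCP"; prints nothing if no node port is present.
node_port_of() {
  printf '%s\n' "$1" | sed -n 's#^[0-9]*:\([0-9]*\)/.*$#\1#p'
}

# Hypothetical helper: builds the URL from a node address ($1)
# and a PORT(S) value ($2).
dashboard_url() {
  printf 'https://%s:%s/\n' "$1" "$(node_port_of "$2")"
}

# Node address assumed from the kubeadm init output earlier in this post.
dashboard_url 192.168.125.58 443:31307/TCP

# The Service type can also be changed without opening an editor, for example:
#   kubectl -n kube-system patch svc kubernetes-dashboard -p '{"spec":{"type":"NodePort"}}'
```

With kubectl patch the node port is assigned automatically, so the Service listing still has to be checked afterwards to learn which port was chosen.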

1. Create an account

First we create an account named admin in the kube-system namespace.

[root@localhost k8s]# vi admin.yaml
# admin.yaml
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin
  namespace: kube-system
[root@localhost k8s]# kubectl create -f admin.yaml
serviceaccount/admin created

2. Bind the role

A cluster created by kubeadm already contains the cluster-admin ClusterRole by default, so we only need to bind the account to it.

[root@localhost k8s]# vi admin-role-binding.yaml
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRoleBinding
metadata:
  name: admin
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin
  namespace: kube-system
[root@localhost k8s]# kubectl create -f admin-role-binding.yaml
clusterrolebinding.rbac.authorization.k8s.io/admin created

3. Get the token

[root@localhost k8s]# kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin | awk '{print $1}')
Name:         admin-token-nwgp7
Namespace:    kube-system
Labels:       <none>
Annotations:  kubernetes.io/service-account.name: admin
              kubernetes.io/service-account.uid: d7786423-5881-486f-9bfc-70485393763d

Type:  kubernetes.io/service-account-token

Data
====

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IlFpSFN0eGZEMXVXNldGcG15MWRMQm1mbmtEazVPVlBBNVZHaW5MakJqU28if

Q.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9

uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUi

OiJhZG1pbi10b2tlbi1ud2dwNyIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50L

m5hbWUiOiJhZG1pbiIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6Im

Q3Nzg2NDIzLTU4ODEtNDg2Zi05YmZjLTcwNDg1MzkzNzYzZCIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDp

rdWJlLXN5c3RlbTphZG1pbiJ9.k6z8FvGDbTl3WilcIK2iMvl3ilkBKffIrGV3hMuGrdkwZTScg0Fi6EGjZomi0P4

9MO0LnhjgSm3WiwR4JJmKeSrl0dP-Srh_2wiBnqnwbHySj5ZLorGJDouam9jvdT14gH0CV1og0PC-HB-wuV583iQW1fn9u0qfQKd1LBstdskgG-_FGY730zXz3YwXK3nX12_zCJ3LXcO_tQ9DnIG3kvIP_kYYEzvTidbSnRXlQv2qckeKLyd3g788fjDbfw2wwjYsxsFAfzIMIzVeKwdD5FKhPW2tE4EBo_Vr_pOjuoFCJqgwl1PtxMAulKbF-9Y2RIlvPAL9q0K2E_qC8jBt5w

ca.crt:     1025 bytes
namespace:  11 bytes

Then copy the token and paste it into the login page.
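To avoid copying the token by hand out of the describe output, the token field can be filtered on its own. A minimal sketch, assuming kubectl prints the token on a single line after the "token:" label; `token_from_describe` is a hypothetical helper name.

```shell
# Hypothetical helper: reads "kubectl describe secret" output on stdin and
# prints only the value of the "token:" field.
token_from_describe() {
  awk '$1 == "token:" { print $2 }'
}

# Against a live cluster (secret name taken from the output above):
#   kubectl -n kube-system describe secret admin-token-nwgp7 | token_from_describe
# Alternative that reads the base64-encoded field of the Secret directly:
#   kubectl -n kube-system get secret admin-token-nwgp7 -o jsonpath='{.data.token}' | base64 -d
```

The token grants cluster-admin rights through the binding created in step 2, so it is best kept out of shell history and shared logs.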