TensorFlow 2 Learning Notes (16): Implementing ResNet, Part 1: Building a Simple ResNet Model

I. Introduction to ResNet

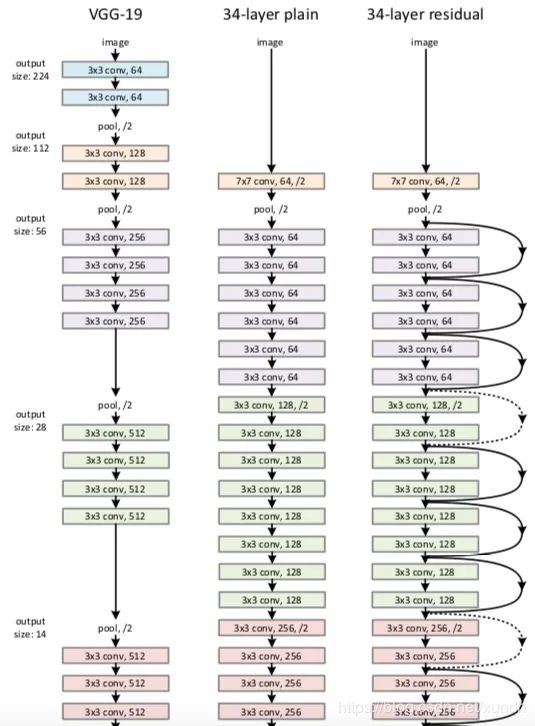

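The deep residual network (Residual Network, abbreviated ResNet) was proposed by Kaiming He and three other Chinese researchers at Microsoft Research. Using residual units, they trained a 152-layer neural network that won the ILSVRC 2015 competition with a top-5 error rate of 3.57%, while using fewer parameters than VGGNet. The residual structure makes very deep networks much easier and faster to train, and it also brings a substantial gain in accuracy.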

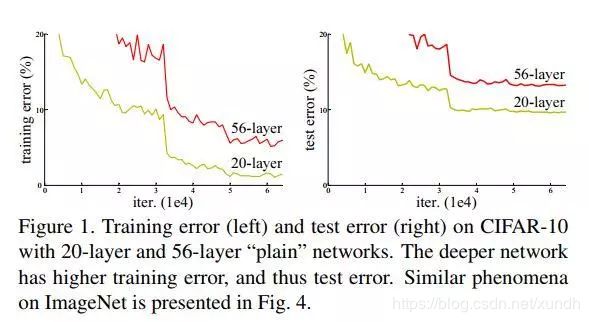

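CNNs grew from the 8 layers of AlexNet to 16 to 19 layers in VGG and 22 layers in GoogLeNet. Once a CNN reaches a certain depth, simply adding more layers no longer improves performance. Instead, the network converges more slowly and its accuracy gets worse (the so-called degradation problem).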

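The ResNet authors introduced the idea of a residual representation. Instead of having a stack of parameterized layers learn the mapping from input to output directly, as an ordinary CNN does, they let those layers learn the residual between input and output: if the desired mapping is H(x), the stacked layers learn F(x) = H(x) - x, and a shortcut connection adds the input back so that the block outputs F(x) + x. ResNet has since replaced VGG as the standard backbone for feature extraction in most computer vision tasks.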
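To make the idea concrete, here is a minimal NumPy sketch of one residual block. It is a simplification, assuming plain matrix multiplies in place of convolutions and no batch normalization; `residual_block` is a hypothetical helper, not a Keras API. It shows one reason residual learning helps: if the best mapping for a block is close to the identity, the layers only need to push their weights toward zero, and with all-zero weights the block passes its (non-negative) input through unchanged.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

def residual_block(x, w1, w2):
    # Residual branch F(x): two "layers" (matrix multiplies standing in for convolutions)
    f = relu(x @ w1) @ w2
    # Shortcut: add the input back, then apply ReLU to the sum
    return relu(f + x)

rng = np.random.default_rng(0)
x = np.abs(rng.normal(size=(4, 8)))  # non-negative input, e.g. output of an earlier ReLU
zeros = np.zeros((8, 8))

# With all residual weights at zero, F(x) = 0 and the block is an identity mapping
out = residual_block(x, zeros, zeros)
print(np.allclose(out, x))  # True
```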

II. Implementation in TensorFlow 2.0

- Dataset: CIFAR-10

- Test environment: Google Colab

1. Initialize the environment and select TF 2.0

```python
try:
    # %tensorflow_version only exists in Colab.
    %tensorflow_version 2.x
except Exception:
    pass
```

2. Import packages and load the dataset

```python
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers
import numpy as np
import datetime as dt

(x_train, y_train), (x_test, y_test) = tf.keras.datasets.cifar10.load_data()
```
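`cifar10.load_data()` returns uint8 images of shape (50000, 32, 32, 3) and (10000, 32, 32, 3), plus integer labels 0 to 9 of shape (N, 1). Integer labels like these can be fed directly to Keras' `sparse_categorical_crossentropy` loss without one-hot encoding. As a rough sketch of what that loss computes (NumPy only, ignoring the clipping Keras applies for numerical stability; the function below is a hypothetical re-implementation, not the Keras function itself):

```python
import numpy as np

def sparse_categorical_crossentropy(labels, probs):
    # labels: integer class ids, shape (N,) or (N, 1) as returned by cifar10.load_data()
    # probs: softmax outputs, shape (N, num_classes)
    labels = np.asarray(labels).reshape(-1)
    # Pick each example's predicted probability for its true class, then average -log(p)
    picked = probs[np.arange(len(labels)), labels]
    return float(np.mean(-np.log(picked)))

labels = np.array([[3], [0]])   # shape (2, 1), same layout as y_train
probs = np.full((2, 10), 0.1)   # uniform prediction over the 10 classes
print(round(sparse_categorical_crossentropy(labels, probs), 4))  # 2.3026
```

A uniform guess over 10 classes gives a loss of ln(10), about 2.30, which is a useful sanity check for the first training epoch.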

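3. Data preprocessing

- Scale pixel values to [0, 1]

- Center-crop each image to 75% of its original height and width

- Randomly flip images horizontally (left to right) for data augmentation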

```python
# Training pipeline: shuffle individual examples (before batching), scale to [0, 1],
# center-crop 32x32 -> 24x24, randomly flip left/right, then batch and repeat
train_dataset = tf.data.Dataset.from_tensor_slices((x_train, y_train)).shuffle(10000)
train_dataset = train_dataset.map(lambda x, y: (tf.cast(x, tf.float32) / 255.0, y))
train_dataset = train_dataset.map(lambda x, y: (tf.image.central_crop(x, 0.75), y))
train_dataset = train_dataset.map(lambda x, y: (tf.image.random_flip_left_right(x), y))
train_dataset = train_dataset.batch(64).repeat()

# Validation pipeline: same scaling and cropping, no shuffling or augmentation
valid_dataset = tf.data.Dataset.from_tensor_slices((x_test, y_test))
valid_dataset = valid_dataset.map(lambda x, y: (tf.cast(x, tf.float32) / 255.0, y))
valid_dataset = valid_dataset.map(lambda x, y: (tf.image.central_crop(x, 0.75), y))
valid_dataset = valid_dataset.batch(5000).repeat()
```
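A NumPy sketch of the same three steps on a single fake image, useful for checking the shapes by hand. The helpers `center_crop` and `flip_left_right` are hypothetical stand-ins for `tf.image.central_crop` and `tf.image.random_flip_left_right` (here the flip is always applied, not random). For a 32×32 image and a fraction of 0.75, this sketch keeps rows and columns 4 to 27, i.e. a 24×24 patch.

```python
import numpy as np

def center_crop(img, fraction):
    # Keep the central `fraction` of height and width (hypothetical helper)
    h, w = img.shape[:2]
    top = int((h - h * fraction) / 2)
    left = int((w - w * fraction) / 2)
    return img[top:h - top, left:w - left]

def flip_left_right(img):
    # Mirror the image by reversing the width axis
    return img[:, ::-1]

rng = np.random.default_rng(0)
img = rng.integers(0, 256, size=(32, 32, 3), dtype=np.uint8)  # fake CIFAR-10 image
x = img.astype(np.float32) / 255.0   # scale to [0, 1]
cropped = center_crop(x, 0.75)       # 32x32 -> 24x24
flipped = flip_left_right(cropped)
print(cropped.shape)  # (24, 24, 3)
```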

4. Define the ResNet model with TF 2.0

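```python
def res_net_block(input_data, filters, conv_size):
    # Residual branch: two convolution layers, each followed by batch normalization
    x = layers.Conv2D(filters, conv_size, activation='relu', padding='same')(input_data)
    x = layers.BatchNormalization()(x)
    # The second convolution has no activation function
    x = layers.Conv2D(filters, conv_size, activation=None, padding='same')(x)
    x = layers.BatchNormalization()(x)
    # Add the two tensors (shortcut connection); `filters` must equal the input channels
    x = layers.Add()([x, input_data])
    # Apply ReLU to the sum
    x = layers.Activation('relu')(x)
    # Return the result
    return x
```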
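Because `layers.Add()` needs both inputs to have the same shape, `res_net_block` keeps the spatial size (`padding='same'`) and requires `filters` to equal the number of input channels. The short calculation below estimates how many weights one block with 64 channels and 3×3 kernels holds, assuming the Keras defaults `use_bias=True` for `Conv2D` and `center=True, scale=True` for `BatchNormalization` (4 values per channel, 2 of them trainable). The helper names are illustrative; `res_net_model.summary()` remains the authoritative count.

```python
def conv2d_params(in_ch, out_ch, k):
    # One k x k kernel per (input, output) channel pair, plus one bias per output channel
    return k * k * in_ch * out_ch + out_ch

def batchnorm_params(ch):
    # gamma and beta (trainable) + moving mean and moving variance (non-trainable)
    return 4 * ch

def res_block_params(channels, k):
    # conv -> BN -> conv -> BN; the Add and ReLU layers have no weights
    return 2 * (conv2d_params(channels, channels, k) + batchnorm_params(channels))

print(res_block_params(64, 3))       # 74368
print(10 * res_block_params(64, 3))  # 743680
```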

```python
inputs = keras.Input(shape=(24, 24, 3))
x = layers.Conv2D(32, 3, activation='relu')(inputs)
x = layers.Conv2D(64, 3, activation='relu')(x)
x = layers.MaxPooling2D(3)(x)

num_res_net_blocks = 10
for i in range(num_res_net_blocks):
    x = res_net_block(x, 64, 3)

# One more convolution layer
x = layers.Conv2D(64, 3, activation='relu')(x)
# Global average pooling (GAP) layer
x = layers.GlobalAveragePooling2D()(x)
# Dense classification layers
x = layers.Dense(256, activation='relu')(x)
# Dropout layer
x = layers.Dropout(0.5)(x)
outputs = layers.Dense(10, activation='softmax')(x)

res_net_model = keras.Model(inputs, outputs)
```

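- CIFAR-10 images have shape (32, 32, 3). After center-cropping to 75%, the shape is (24, 24, 3).

- Next come two convolution layers with 32 and 64 output filters respectively, each with a 3×3 kernel.

- The res_net_block function creates one residual block.

- This demo stacks 10 residual blocks.

- After the residual blocks, a few more layers are added: a convolution layer, global average pooling (GAP), a dense layer, dropout and the final softmax output layer.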
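The shape bookkeeping in the list above can be checked with a few lines of plain Python. `out_size` is a hypothetical helper implementing the usual output-size formula for `'valid'` and `'same'` padding; note that `MaxPooling2D(3)` uses a stride equal to its pool size by default.

```python
def out_size(size, k, stride=1, padding='valid'):
    # Spatial output size along one dimension for a conv or pooling layer
    if padding == 'same':
        return -(-size // stride)     # ceil(size / stride)
    return (size - k) // stride + 1

size = 24                             # after central_crop(0.75)
size = out_size(size, 3)              # Conv2D(32, 3): 22
size = out_size(size, 3)              # Conv2D(64, 3): 20
size = out_size(size, 3, stride=3)    # MaxPooling2D(3), stride 3: 6
for _ in range(10 * 2):               # 10 residual blocks x 2 convs, padding='same'
    size = out_size(size, 3, padding='same')  # stays 6
size = out_size(size, 3)              # final Conv2D(64, 3): 4
print(size)  # 4
```

Global average pooling then averages the 4×4×64 feature map down to a 64-dimensional vector before the dense layers.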

The model is trained for 30 epochs. For comparison, the function below is an alternative block that does not use a residual connection:

```python
def non_res_block(input_data, filters, conv_size):
    # Same two convolutions as res_net_block, but no shortcut connection
    x = layers.Conv2D(filters, conv_size, activation='relu', padding='same')(input_data)
    x = layers.BatchNormalization()(x)
    x = layers.Conv2D(filters, conv_size, activation='relu', padding='same')(x)
    x = layers.BatchNormalization()(x)
    return x
```

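The difference is that this block has no residual connection: the input is never added back to the output. To build the plain (non-residual) model for comparison, call non_res_block instead of res_net_block inside the model-building loop.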
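Why does the shortcut matter? A deliberately simplified scalar model, assuming each block's branch f has the same local derivative d and ignoring ReLU and batch normalization, compares the gradient that reaches the input after 10 blocks. In a plain stack the chain rule multiplies d at every block; in a residual stack each block computes x + f(x), whose derivative is 1 + d, so the identity path keeps the gradient from vanishing. The value d = 0.1 is an arbitrary illustration.

```python
# Toy scalar model: every block's branch has the same local derivative d (assumed value)
d = 0.1
num_blocks = 10

# Plain stack y = f(f(...f(x))): chain rule multiplies d once per block
plain_grad = d ** num_blocks
# Residual stack y = x + f(x) per block: each block contributes a factor (1 + d)
residual_grad = (1 + d) ** num_blocks

print(f"plain:    {plain_grad:.1e}")     # plain:    1.0e-10
print(f"residual: {residual_grad:.2f}")  # residual: 2.59
```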

5. Training

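```python
callbacks = [
    # Write TensorBoard logs to a timestamped subdirectory of ./log
    keras.callbacks.TensorBoard(log_dir='./log/{}'.format(dt.datetime.now().strftime("%Y-%m-%d-%H-%M-%S")), write_images=True),
]

res_net_model.compile(optimizer=keras.optimizers.Adam(),
                      loss='sparse_categorical_crossentropy',
                      metrics=['acc'])

# steps_per_epoch=195 with batch size 64 covers only part of the training set per epoch;
# len(x_train) // 64 would give a full pass.
# validation_steps=2: 2 batches x 5000 images = the whole 10,000-image test set
history = res_net_model.fit(train_dataset, epochs=30, steps_per_epoch=195,
                            validation_data=valid_dataset,
                            validation_steps=2, callbacks=callbacks)
```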
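Because both datasets end with `repeat()`, they are infinite, and Keras relies on `steps_per_epoch` and `validation_steps` to decide where an epoch or a validation run ends. A small sketch for deriving these values from the dataset sizes; `steps_for_full_pass` is a hypothetical helper name.

```python
import math

def steps_for_full_pass(num_examples, batch_size):
    # Batches needed to see every example once (the last batch may be partial)
    return math.ceil(num_examples / batch_size)

print(steps_for_full_pass(50_000, 64))    # 782: one full pass over the training set
print(steps_for_full_pass(10_000, 5000))  # 2: one full pass over the test set
print(195 * 64 / 50_000)                  # 0.2496: fraction of training data seen in 195 steps
```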

6. Print accuracy and loss
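Keras stores the per-epoch loss and metrics in `history.history`, a dict mapping each metric name to a list of values. A minimal printing sketch, assuming the model was compiled with `metrics=['acc']` so that the keys are `acc` and `val_acc` alongside `loss` and `val_loss`; `format_history` is a hypothetical helper. The same lists can also be passed to matplotlib's `plt.plot` to draw training curves.

```python
def format_history(hist):
    # hist: dict like Keras' `history.history`, metric name -> list of per-epoch values
    lines = []
    for epoch in range(len(hist['loss'])):
        lines.append(
            f"epoch {epoch + 1:2d}: "
            f"loss={hist['loss'][epoch]:.4f} acc={hist['acc'][epoch]:.4f} "
            f"val_loss={hist['val_loss'][epoch]:.4f} val_acc={hist['val_acc'][epoch]:.4f}"
        )
    return "\n".join(lines)

# Usage after training:
# print(format_history(history.history))
```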

Reference:

https://adventuresinmachinelearning.com/introduction-resnet-tensorflow-2/