Introduction

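kube-router is a relatively new network plugin for Kubernetes. It uses LVS (IPVS) for service proxying and load balancing, and iptables for network policy enforcement. Deployment is simple: a single DaemonSet runs it on every node. It performs well, is easy to maintain, and supports both pod-to-pod networking and service proxying.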
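As a rough way to see the LVS side of this on a node, the helper below counts the virtual services in `ipvsadm -Ln` output. It is a minimal sketch: the function name `count_ipvs_services` is made up for illustration, and it assumes `ipvsadm` is installed on the node and that kube-router is already running with the service proxy enabled.

```shell
# Count IPVS virtual services in `ipvsadm -Ln` output read from stdin.
# Virtual service lines start with the protocol (TCP or UDP); real server
# lines start with "->" and header lines start with other words.
count_ipvs_services() {
  grep -cE '^(TCP|UDP) '
}
```

On a node this could be used as `ipvsadm -Ln | count_ipvs_services` (run as root). The number should roughly follow the number of service ports in the cluster.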

Installation

# This experiment used a freshly created cluster. Reusing a cluster that had
# previously been used to test other network plugins did not work, possibly
# because of leftover state from those plugins, so keep this in mind.

# Create a kube-router directory and download the manifests
mkdir kube-router && cd kube-router
wget https://raw.githubusercontent.com/cloudnativelabs/kube-router/master/daemonset/kubeadm-kuberouter.yaml
wget https://raw.githubusercontent.com/cloudnativelabs/kube-router/master/daemonset/kubeadm-kuberouter-all-features.yaml

# Choose one of the following two deployment options

# 1. Enable pod networking and network policy only
kubectl apply -f kubeadm-kuberouter.yaml

# 2. Enable all features: pod networking, network policy, and service proxy
kubectl apply -f kubeadm-kuberouter-all-features.yaml

# Remove kube-proxy and the service proxy rules it configured earlier
kubectl -n kube-system delete ds kube-proxy

# Run on every node
docker run --privileged --net=host registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy-amd64:v1.10.2 kube-proxy --cleanup

# Check
kubectl get pods --namespace kube-system
kubectl get svc --namespace kube-system
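Scanning the pod list by eye gets tedious on larger clusters. The helper below is a small sketch that prints only the pods that are not in the Running state. The function name `not_running` is hypothetical, and it assumes the default column layout of `kubectl get pods --no-headers` (NAME, READY, STATUS, ...).

```shell
# Print the names of pods whose STATUS column is not "Running".
# Expects `kubectl get pods --no-headers` output on stdin.
not_running() {
  awk '$3 != "Running" { print $1 }'
}
```

For example, `kubectl -n kube-system get pods -l k8s-app=kube-router --no-headers | not_running` prints nothing when every kube-router pod is Running. The `k8s-app=kube-router` label is the one used in the DaemonSet manifest.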

Testing

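# Start a deployment for testing
# (on kubectl 1.18 and later, `kubectl run` creates a single pod instead of a deployment)
kubectl run nginx --replicas=2 --image=nginx:alpine --port=80
kubectl expose deployment nginx --type=NodePort --name=example-service-nodeport
kubectl expose deployment nginx --name=example-service

# DNS and access test (the commands after `kubectl run curl` are entered in the pod's shell)
kubectl run curl --image=radial/busyboxplus:curl -i --tty
nslookup kubernetes
nslookup example-service
curl example-service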
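The test above covers pod networking and the service proxy but not the network policy feature. As a hedged sketch, run from a second terminal on the host rather than inside the curl pod, the snippet below writes a default-deny ingress policy for the nginx pods to a file. The policy name, the file name, and the function name are made up for illustration. It assumes the pods live in the `default` namespace and carry the label `run=nginx`, which `kubectl run nginx` sets on the versions used here.

```shell
# Write a NetworkPolicy that selects the nginx pods and allows no ingress.
# Assumption: the pods are labeled run=nginx and live in the default namespace.
write_deny_policy() {
  cat > "$1" <<'EOF'
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: deny-nginx-ingress   # hypothetical name
  namespace: default
spec:
  podSelector:
    matchLabels:
      run: nginx
  policyTypes:
  - Ingress
EOF
}

write_deny_policy deny-nginx-ingress.yaml
```

After `kubectl apply -f deny-nginx-ingress.yaml`, repeating `curl --max-time 5 example-service` from the curl pod should time out if policy enforcement is active. `kubectl delete -f deny-nginx-ingress.yaml` restores access.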

# Clean up
kubectl delete svc example-service example-service-nodeport
kubectl delete deploy nginx curl

Monitoring and visualizing metrics

Redeploy kube-router

# Edit the yml file
cp kubeadm-kuberouter-all-features.yaml kubeadm-kuberouter-all-features.yaml.ori
vim kubeadm-kuberouter-all-features.yaml

...
spec:
  template:
    metadata:
      labels:
        k8s-app: kube-router
        tier: node
      annotations:
        scheduler.alpha.kubernetes.io/critical-pod: ''
        # Add the following annotations so that Prometheus scrapes the pods
        prometheus.io/scrape: "true"
        prometheus.io/path: "/metrics"
        prometheus.io/port: "8080"
    spec:
      serviceAccountName: kube-router
      serviceAccount: kube-router
      containers:
      - name: kube-router
        image: cloudnativelabs/kube-router
        imagePullPolicy: Always
        args:
        # Add the following arguments to enable metrics
        - --metrics-path=/metrics
        - --metrics-port=8080
        - --run-router=true
        - --run-firewall=true
        - --run-service-proxy=true
        - --kubeconfig=/var/lib/kube-router/kubeconfig
...

# Redeploy
kubectl delete ds kube-router -n kube-system
kubectl apply -f kubeadm-kuberouter-all-features.yaml

# Test fetching metrics on a node that runs kube-router
curl http://127.0.0.1:8080/metrics
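The metrics endpoint also returns Go runtime and process metrics, so the kube-router series are easy to miss. The filter below is a minimal sketch for narrowing the output. The function name `filter_metrics` is hypothetical, and the `kube_router` prefix is the same keyword searched for in Prometheus later on.

```shell
# Keep only sample lines whose metric name starts with the given prefix.
# "# HELP" and "# TYPE" comment lines start with '#', so they are dropped too.
filter_metrics() {
  grep "^$1"
}
```

For example: `curl -s http://127.0.0.1:8080/metrics | filter_metrics kube_router`. Empty output suggests the metrics flags were not picked up by the running pods.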

Deploy Prometheus

Copy the following into a file named prometheus.yml

---
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus
  namespace: kube-system
data:
  prometheus.yml: |-
    global:
      scrape_interval: 15s
    scrape_configs:

    # scrape config for API servers
    - job_name: 'kubernetes-apiservers'
      kubernetes_sd_configs:
      - role: endpoints
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
        action: keep
        regex: default;kubernetes;https

    # scrape config for nodes (kubelet)
    - job_name: 'kubernetes-nodes'
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: node
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics

    # Scrape config for Kubelet cAdvisor.
    #
    # This is required for Kubernetes 1.7.3 and later, where cAdvisor metrics
    # (those whose names begin with 'container_') have been removed from the
    # Kubelet metrics endpoint. This job scrapes the cAdvisor endpoint to
    # retrieve those metrics.
    #
    # In Kubernetes 1.7.0-1.7.2, these metrics are only exposed on the cAdvisor
    # HTTP endpoint; use "replacement: /api/v1/nodes/${1}:4194/proxy/metrics"
    # in that case (and ensure cAdvisor's HTTP server hasn't been disabled with
    # the --cadvisor-port=0 Kubelet flag).
    #
    # This job is not necessary and should be removed in Kubernetes 1.6 and
    # earlier versions, or it will cause the metrics to be scraped twice.
    - job_name: 'kubernetes-cadvisor'
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: node
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

    # scrape config for service endpoints.
    - job_name: 'kubernetes-service-endpoints'
      kubernetes_sd_configs:
      - role: endpoints
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
        action: replace
        target_label: __scheme__
        regex: (https?)
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
        action: replace
        target_label: __address__
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        action: replace
        target_label: kubernetes_name

    # Example scrape config for pods
    - job_name: 'kubernetes-pods'
      kubernetes_sd_configs:
      - role: pod
      relabel_configs:
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
        action: replace
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
        target_label: __address__
      - action: labelmap
        regex: __meta_kubernetes_pod_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: namespace
      - source_labels: [__meta_kubernetes_pod_name]
        action: replace
        target_label: pod_name

---
apiVersion: v1
kind: Service
metadata:
  annotations:
    prometheus.io/scrape: 'true'
  labels:
    name: prometheus
  name: prometheus
  namespace: kube-system
spec:
  selector:
    app: prometheus
  type: NodePort
  ports:
  - name: prometheus
    protocol: TCP
    port: 9090
---
# extensions/v1beta1 matches the Kubernetes v1.10 cluster used here;
# Kubernetes 1.16 and later serve Deployments only under apps/v1.
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: prometheus
  namespace: kube-system
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
  template:
    metadata:
      name: prometheus
      labels:
        app: prometheus
      annotations:
        sidecar.istio.io/inject: "false"
    spec:
      serviceAccountName: prometheus
      containers:
      - name: prometheus
        image: docker.io/prom/prometheus:v2.2.1
        imagePullPolicy: IfNotPresent
        args:
        - '--storage.tsdb.retention=6h'
        - '--config.file=/etc/prometheus/prometheus.yml'
        ports:
        - name: web
          containerPort: 9090
        volumeMounts:
        - name: config-volume
          mountPath: /etc/prometheus
      volumes:
      - name: config-volume
        configMap:
          name: prometheus
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: prometheus
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRole
metadata:
  name: prometheus
rules:
- apiGroups: [""]
  resources:
  - nodes
  - services
  - endpoints
  - pods
  - nodes/proxy
  verbs: ["get", "list", "watch"]
- apiGroups: [""]
  resources:
  - configmaps
  verbs: ["get"]
- nonResourceURLs: ["/metrics"]
  verbs: ["get"]
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRoleBinding
metadata:
  name: prometheus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
- kind: ServiceAccount
  name: prometheus
  namespace: kube-system
---

Deploy and test

# Deploy
kubectl apply -f prometheus.yml

# Check
kubectl get pods --namespace kube-system
kubectl get svc --namespace kube-system

# Access Prometheus
# Type the keyword kube_router into the expression box and check whether metric names are suggested
prometheusNodePort=$(kubectl get svc prometheus -n kube-system -o jsonpath='{.spec.ports[0].nodePort}')
nodeIP=$(kubectl get nodes -o jsonpath='{.items[0].status.addresses[?(@.type=="InternalIP")].address}')
echo "http://$nodeIP:$prometheusNodePort"
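Besides the web UI, the same check can be made from the command line through the Prometheus HTTP API. The helpers below are a small sketch with hypothetical function names. They assume `curl` is available and that the base URL is the one printed above.

```shell
# Build the instant-query endpoint for a Prometheus base URL
# (a trailing slash on the base URL is tolerated).
prom_query_endpoint() {
  printf '%s/api/v1/query\n' "${1%/}"
}

# Run a PromQL instant query; curl URL-encodes the expression.
# $1 = base URL, $2 = PromQL expression
prom_query() {
  curl -sG --data-urlencode "query=$2" "$(prom_query_endpoint "$1")"
}
```

For example, `prom_query "http://$nodeIP:$prometheusNodePort" 'up{job="kubernetes-pods"}'` returns a JSON document listing the scraped pod targets. The kube-router pods should appear there if their annotations were applied.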

Deploy Grafana

Copy the following into a file named grafana.yml

---
apiVersion: v1
kind: Service
metadata:
  name: grafana
  namespace: kube-system
spec:
  type: NodePort
  ports:
  - port: 3000
    protocol: TCP
    name: http
  selector:
    app: grafana
---
# extensions/v1beta1 matches the Kubernetes v1.10 cluster used here;
# under apps/v1 (Kubernetes 1.16 and later) a spec.selector is also required.
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: grafana
  namespace: kube-system
spec:
  replicas: 1
  template:
    metadata:
      labels:
        app: grafana
    spec:
      serviceAccountName: grafana
      containers:
      - name: grafana
        image: grafana/grafana
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 3000
        volumeMounts:
        - mountPath: /var/lib/grafana
          name: grafana-data
      volumes:
      - name: grafana-data
        emptyDir: {}
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: grafana
  namespace: kube-system
---

Deploy and test

# Deploy
kubectl apply -f grafana.yml

# Check
kubectl get pods --namespace kube-system
kubectl get svc --namespace kube-system

# Access Grafana
grafanaNodePort=$(kubectl get svc grafana -n kube-system -o jsonpath='{.spec.ports[0].nodePort}')
nodeIP=$(kubectl get nodes -o jsonpath='{.items[0].status.addresses[?(@.type=="InternalIP")].address}')
echo "http://$nodeIP:$grafanaNodePort"

# Default username/password
admin/admin

Import and view the dashboard

# Download the official dashboard JSON file

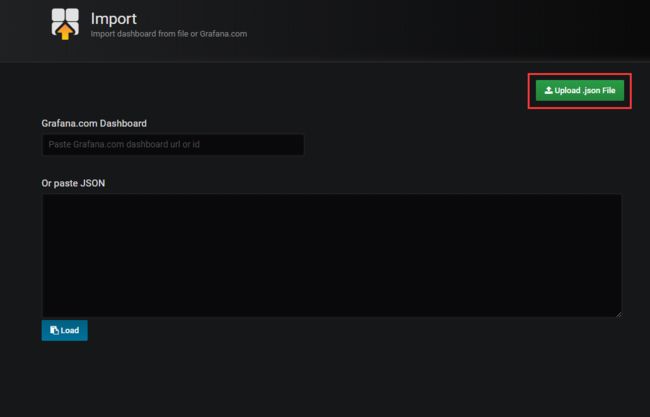

wget https://raw.githubusercontent.com/cloudnativelabs/kube-router/master/dashboard/kube-router.json

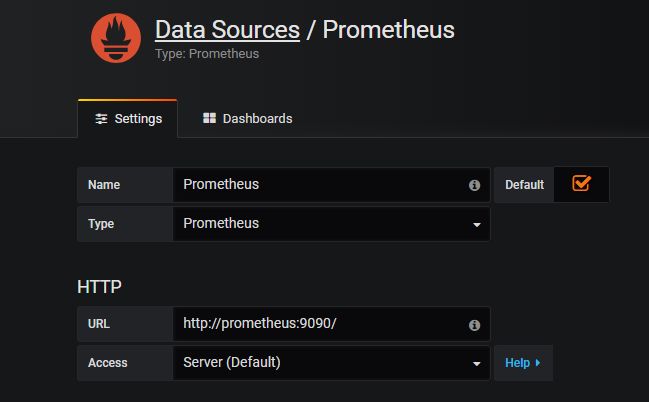

Create a data source named Prometheus, of type Prometheus, with the URL http://prometheus:9090/

Select the JSON file you just downloaded and import it as a dashboard

View the dashboard
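The data source can also be created without the UI, through Grafana's HTTP API. The snippet below is a hedged sketch, not the method used in this walkthrough. The function names are made up, and it assumes the default admin/admin credentials are still in place and that Grafana reaches Prometheus at the in-cluster address given above.

```shell
# Build the JSON body for a Prometheus data source.
# $1 = data source name, $2 = Prometheus URL as seen from the Grafana pod
datasource_payload() {
  printf '{"name":"%s","type":"prometheus","url":"%s","access":"proxy"}\n' "$1" "$2"
}

# POST the data source to Grafana.
# $1 = Grafana base URL, for example http://<nodeIP>:<grafanaNodePort>
create_datasource() {
  curl -s -u admin:admin -H 'Content-Type: application/json' \
    -X POST "${1%/}/api/datasources" \
    -d "$(datasource_payload Prometheus http://prometheus:9090)"
}
```

For example: `create_datasource "http://$nodeIP:$grafanaNodePort"`. The dashboard JSON still has to be imported afterwards, with this data source selected.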