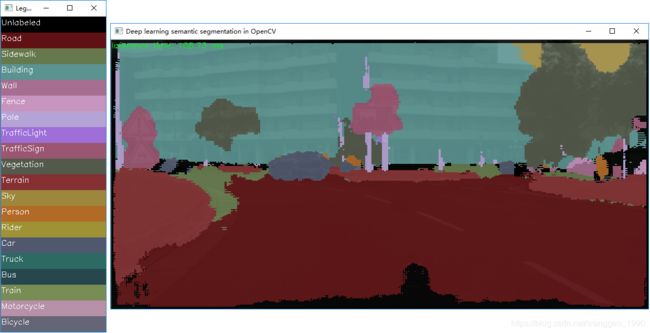

OpenCV dnn module examples (8): semantic segmentation (ENet/fcn8s)

1. OpenCV sample model files

The models.yml file read by the OpenCV dnn samples includes two semantic segmentation models:

ENet road scene segmentation network from https://github.com/e-lab/ENet-training. Works fine for different input sizes.

    enet:
      model: "Enet-model-best.net"
      mean: [0, 0, 0]
      scale: 0.00392
      width: 512
      height: 256
      rgb: true
      classes: "enet-classes.txt"
      sample: "segmentation"

    fcn8s:
      model: "fcn8s-heavy-pascal.caffemodel"
      config: "fcn8s-heavy-pascal.prototxt"
      mean: [0, 0, 0]
      scale: 1.0
      width: 500
      height: 500
      rgb: false
      sample: "segmentation"
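The `mean`, `scale`, and `rgb` fields describe how each input pixel is normalized before it reaches the network: the mean is subtracted, the result is multiplied by the scale, and the B and R channels are swapped when `rgb` is true. The following is a minimal standard-library sketch of that arithmetic only, assuming an interleaved 8-bit BGR input that has already been resized to `width` x `height`. `PreprocessParams` and `toBlob` are hypothetical names for illustration, not OpenCV APIs.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical container for the models.yml preprocessing fields.
struct PreprocessParams {
    std::array<float, 3> mean;  // subtracted per output channel
    float scale;                // multiplier applied after mean subtraction
    bool rgb;                   // true: swap B and R of the BGR input
};

// Converts interleaved BGR pixels (H x W x 3) into a planar float blob
// (3 x H x W). Resizing is assumed to have happened beforehand.
std::vector<float> toBlob(const std::vector<std::uint8_t>& bgr,
                          int width, int height,
                          const PreprocessParams& p) {
    const std::size_t plane = static_cast<std::size_t>(width) * height;
    assert(bgr.size() == 3 * plane);
    std::vector<float> blob(3 * plane);
    for (std::size_t i = 0; i < plane; ++i) {
        for (int c = 0; c < 3; ++c) {
            // With rgb == true, output channel 0 reads R (input index 2).
            const int src = p.rgb ? 2 - c : c;
            blob[c * plane + i] = (bgr[i * 3 + src] - p.mean[c]) * p.scale;
        }
    }
    return blob;
}
```

With the enet entry (`scale: 0.00392`, roughly 1/255, and `rgb: true`), 8-bit values end up in approximately the [0, 1] range in RGB order. With the fcn8s entry (`scale: 1.0`, `rgb: false`), pixel values pass through unchanged in BGR order.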

2. Sample code
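The central post-processing step in semantic segmentation is turning the network's per-class score planes into one label per pixel, then mapping each label to a color for display. The following is a minimal standard-library sketch of that step, assuming the scores are stored as C x H x W floats. `argmaxClassMap`, `colorize`, and `Bgr` are hypothetical names for illustration and are not part of OpenCV.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper: per-pixel argmax over class scores.
// `scores` holds numClasses planes of height * width floats (C x H x W).
std::vector<int> argmaxClassMap(const std::vector<float>& scores,
                                int numClasses, int width, int height) {
    const std::size_t plane = static_cast<std::size_t>(width) * height;
    assert(numClasses > 0);
    assert(scores.size() == static_cast<std::size_t>(numClasses) * plane);
    std::vector<int> labels(plane, 0);
    for (std::size_t i = 0; i < plane; ++i) {
        float best = scores[i];  // start with the score of class 0
        for (int c = 1; c < numClasses; ++c) {
            const float s = scores[static_cast<std::size_t>(c) * plane + i];
            if (s > best) {
                best = s;
                labels[i] = c;
            }
        }
    }
    return labels;
}

struct Bgr {
    unsigned char b, g, r;
};

// Hypothetical helper: looks up one display color per class label.
std::vector<Bgr> colorize(const std::vector<int>& labels,
                          const std::vector<Bgr>& palette) {
    std::vector<Bgr> out;
    out.reserve(labels.size());
    for (int label : labels) {
        assert(label >= 0 && static_cast<std::size_t>(label) < palette.size());
        out.push_back(palette[static_cast<std::size_t>(label)]);
    }
    return out;
}
```

The palette needs one entry per class. The same palette can be used to draw a legend alongside the result.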

#include

3. Examples

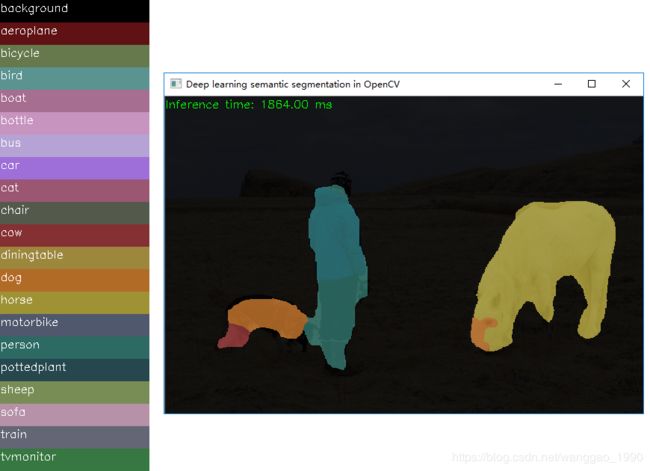

(1) fcn8s-heavy-pascal test results

For person.jpg, the original image, the legend, and the result image are shown below (OpenCL is 2x slower than the CPU…)

Results for two other images