Installing the latest Kubernetes (k8s) release (1.13) from binaries

Making yourself stronger is the only path to happiness; it is a long-term pursuit, not a momentary pleasure!

Environment used in this walkthrough:

| Component | Version |
| --- | --- |
| Operating system | CentOS 7.4 |
| docker | 18.09-ce |
| kubernetes | 1.13 |
| etcd | 3.3.11 |

Hosts:

| Host | IP | Components |
| --- | --- | --- |
| k8s-master | 192.168.2.9 | etcd, kube-apiserver, kube-controller-manager, kube-scheduler |
| k8s-node1 | 192.168.2.10 | etcd, kubelet, kube-proxy, docker, flannel |
| k8s-node2 | 192.168.2.11 | etcd, kubelet, kube-proxy, docker, flannel |

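Steps:

1 Certificate generation and distribution

2 etcd deployment

3 Master component deployment

3.1 Deploy kube-apiserver

3.2 Deploy kube-controller-manager

3.3 Deploy kube-scheduler

4 Node component deployment

4.1 Node authorization: bind permissions to the kubelet-bootstrap user

4.2 Deploy flannel

4.3 Deploy kubelet

4.4 Deploy kube-proxy

5 Base add-on installation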

Preparation

1.1 Install docker on the node machines yourself first; the official CentOS installation guide is linked below.

https://docs.docker.com/install/linux/docker-ce/centos/

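1.2 Install cfssl yourself; it is used for all certificate operations. The required packages and the download link are listed below.

Required: [cfssl_linux-amd64, cfssljson_linux-amd64, cfssl-certinfo_linux-amd64]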

https://pkg.cfssl.org/

1.3 Check /etc/hosts on the master node, and create the working directories (the mkdir command after the hosts listing) on every node:

[root@k8s-master coredns]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.2.9 k8s-master

192.168.2.10 k8s-node1

192.168.2.11 k8s-node2

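mkdir -p /opt/kubernetes/{bin,cfg,ssl}

Note: I added the hosts entries because of a small error that showed up at the very end. The nodes are known to the master by hostname, so when a container was created the master could not resolve the node's name. The error looked like this: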

[root@k8s-master coredns]# kubectl run -it --image=busybox:1.28.4 --rm --restart=Never sh

If you don't see a command prompt, try pressing enter.

Error attaching, falling back to logs: error dialing backend: dial tcp: lookup k8s-node1 on xxx.xxx.x.xxx:53: no such host

pod "sh" deleted

Error from server: Get https://k8s-node1:10250/containerLogs/default/sh/sh: dial tcp: lookup k8s-node1 on xxx.xxx.x.xxx:53: no such host
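To avoid that lookup failure, the hosts entries can be added in a repeatable way. The following is a minimal sketch, not part of the original procedure: `add_hosts_entries` is a hypothetical helper name, and it only appends names that are not already present in the file.

```shell
# Append "IP NAME" pairs to a hosts file, skipping names that are already present.
# Usage: add_hosts_entries HOSTS_FILE "IP NAME" ...
add_hosts_entries() {
    local hosts_file entry name
    hosts_file=$1
    shift
    for entry in "$@"; do
        name=${entry##* }    # text after the last space is the hostname
        # -w matches the name as a whole word, so k8s-node1 does not match k8s-node10
        if ! grep -qw -- "$name" "$hosts_file" 2>/dev/null; then
            printf '%s\n' "$entry" >> "$hosts_file"
        fi
    done
}

# Example (run as root on the master):
# add_hosts_entries /etc/hosts "192.168.2.9 k8s-master" "192.168.2.10 k8s-node1" "192.168.2.11 k8s-node2"
```

Running it a second time leaves the file unchanged, which makes it safe to keep in a setup script.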

1 Certificate generation and distribution

1.1 Overview

Certificates to generate

- ca-key.pem

- ca.pem

- kubernetes-key.pem

- kubernetes.pem

- kube-proxy.pem

- kube-proxy-key.pem

- admin.pem

- admin-key.pem

Used by

| Component | Certificates used |
| --- | --- |
| etcd | ca.pem, kubernetes-key.pem, kubernetes.pem |
| kube-apiserver | ca.pem, kubernetes-key.pem, kubernetes.pem |
| kube-controller-manager | ca-key.pem, ca.pem |
| kubectl | ca.pem, admin-key.pem, admin.pem |
| kubelet | ca.pem |
| kube-proxy | ca.pem, kube-proxy-key.pem, kube-proxy.pem |
| flannel | ca.pem, kubernetes-key.pem, kubernetes.pem |

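1.2 Generate the certificates

Note: I am no expert on certificates; if any explanation or step here is wrong, corrections are welcome.

You can look at the default formats first: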

cfssl print-defaults config > config.json

cfssl print-defaults csr > csr.json

Create the CA certificate

Run on k8s-master

mkdir /root/k8s/cre_ssl -p

cd /root/k8s/cre_ssl/

echo "Create the CA config file"

cat > ca-config.json << SUCESS

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

SUCESS

echo "Create the CA certificate signing request"

cat > ca-csr.json << SUCESS

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

],

"ca": {

"expiry": "87600h"

}

}

SUCESS

echo "Generate the CA certificate and private key"

cfssl gencert -initca ca-csr.json | cfssljson -bare ca

ls

Create the kubernetes certificate

Run on k8s-master

echo "Create the kubernetes certificate signing request file"

cat > kubernetes-csr.json << SUCESS

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.2.9",

"192.168.2.10",

"192.168.2.11",

"10.0.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

SUCESS

echo "Generate the kubernetes certificate and private key"

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kubernetes-csr.json | cfssljson -bare kubernetes

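ls kubernetes*

The hosts field: if hosts is not empty, it must list every IP or domain name that is allowed to use this certificate. Because this certificate is later used by both the etcd cluster and the kubernetes master, the list above contains the host IPs of the etcd cluster and of the kubernetes master, plus the service IP of the kubernetes service (normally the first IP of the range that kube-apiserver is given in service-cluster-ip-range, here 10.0.0.1). The entries from kubernetes through kubernetes.default.svc.cluster.local are the standard names of that service. You can also list hostnames if you prefer.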
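If you choose a different service-cluster-ip-range, the service IP in the hosts list has to change with it. As a rough sketch (the helper name `first_service_ip` is my own, not part of any tool), the first address of an IPv4 range can be computed in plain shell:

```shell
# Print the first usable address of an IPv4 CIDR, i.e. network address + 1.
first_service_ip() {
    local cidr ip prefix a b c d n mask
    cidr=$1
    ip=${cidr%/*}
    prefix=${cidr#*/}
    # Split the dotted quad into its four octets.
    IFS=. read -r a b c d <<EOF
$ip
EOF
    n=$(( (a << 24) | (b << 16) | (c << 8) | d ))
    mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
    n=$(( (n & mask) + 1 ))
    printf '%d.%d.%d.%d\n' $(( (n >> 24) & 255 )) $(( (n >> 16) & 255 )) $(( (n >> 8) & 255 )) $(( n & 255 ))
}

# Example: the range used in this guide
# first_service_ip 10.0.0.0/24
```

The printed address is the one to put in the hosts list of kubernetes-csr.json.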

Create the admin certificate

Run on k8s-master

echo "Create the admin certificate signing request file"

cat > admin-csr.json << SUCESS

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

SUCESS

echo "Generate the admin certificate and private key"

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

ls admin*

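This certificate is used later to generate the administrator's kubeconfig file.

From here on, kube-apiserver uses RBAC to authorize requests from clients such as kubelet, kube-proxy and Pods.

kube-apiserver predefines a number of bindings for RBAC. For example, the ClusterRoleBinding cluster-admin binds the Group system:masters to the ClusterRole cluster-admin, and that role grants permission to call every kube-apiserver API.

O sets the Group of this certificate to system:masters. When kubectl uses the certificate to reach kube-apiserver, authentication succeeds because the certificate is signed by the CA, and because its group is the pre-authorized system:masters, it is granted access to all APIs.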

Create the kube-proxy certificate

Run on k8s-master

echo "Create the kube-proxy certificate signing request file"

cat > kube-proxy-csr.json << SUCESS

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

SUCESS

echo "Generate the kube-proxy client certificate and private key"

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

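ls kube-proxy*

The predefined ClusterRoleBinding system:node-proxier binds the User system:kube-proxy to the ClusterRole system:node-proxier, which grants permission to call the proxy-related kube-apiserver APIs.

Distribute each certificate to the nodes that need it, following the table above.

Run on k8s-master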

cp ca*.pem kubernetes*.pem admin*.pem /opt/kubernetes/ssl/

scp ca*.pem kubernetes*.pem kube-proxy*.pem 192.168.2.10:/opt/kubernetes/ssl/

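scp ca*.pem kubernetes*.pem kube-proxy*.pem 192.168.2.11:/opt/kubernetes/ssl/

2 etcd deployment

Note: this is a three-node highly available etcd cluster that reuses the kubernetes machines. As mentioned above, no separate certificates were generated for it, so the hosts field of the kubernetes certificate must contain the IPs of all three nodes; otherwise certificate verification will fail later.

Binary download link: https://github.com/etcd-io/etcd/releases

Most of the steps below are run on all three nodes (k8s-master, k8s-node1 and k8s-node2) at the same time, apart from node-specific ones such as editing the configuration.

If you are on a Mac with iTerm2, the shortcut Command + Shift + I lets you type into every session at once (this is risky in day-to-day use, so be careful).

Run on k8s-master, k8s-node1 and k8s-node2

echo "Create directories, download, unpack, and move the binaries into a directory on PATH"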

sleep 1

cd /usr/local/src/ && mkdir -p /opt/etcd/{work,cfg,data}

wget https://github.com/etcd-io/etcd/releases/download/v3.3.11/etcd-v3.3.11-linux-amd64.tar.gz

tar zxf etcd-v3.3.11-linux-amd64.tar.gz

mv etcd-v3.3.11-linux-amd64/etcd* /usr/local/bin/

echo "Generate the etcd config file"

echo -e "\033[31mBe sure to change the name and IP for each node\033[0m"

sleep 1

cat > /opt/etcd/cfg/etcd.conf << SUCESS

# [member]

ETCD_NAME=etcd01

ETCD_DATA_DIR="/opt/etcd/data/"

ETCD_SSL_DIR="/opt/kubernetes/ssl"

ETCD_LISTEN_PEER_URLS="https://192.168.2.9:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.2.9:2379"

#[cluster]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.2.9:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.2.9:2380,etcd02=https://192.168.2.10:2380,etcd03=https://192.168.2.11:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.2.9:2379"

SUCESS

echo "Generate the etcd systemd unit file"

sleep 1

cat > /usr/lib/systemd/system/etcd.service << SUCESS

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

WorkingDirectory=/opt/etcd/work/

EnvironmentFile=-/opt/etcd/cfg/etcd.conf

ExecStart=/usr/local/bin/etcd \\

--name \${ETCD_NAME} \\

--cert-file=\${ETCD_SSL_DIR}/kubernetes.pem \\

--key-file=\${ETCD_SSL_DIR}/kubernetes-key.pem \\

--peer-cert-file=\${ETCD_SSL_DIR}/kubernetes.pem \\

--peer-key-file=\${ETCD_SSL_DIR}/kubernetes-key.pem \\

--trusted-ca-file=\${ETCD_SSL_DIR}/ca.pem \\

--peer-trusted-ca-file=\${ETCD_SSL_DIR}/ca.pem \\

--initial-advertise-peer-urls \${ETCD_INITIAL_ADVERTISE_PEER_URLS} \\

--listen-peer-urls \${ETCD_LISTEN_PEER_URLS} \\

--listen-client-urls \${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \\

--advertise-client-urls \${ETCD_ADVERTISE_CLIENT_URLS} \\

--initial-cluster-token \${ETCD_INITIAL_CLUSTER_TOKEN} \\

--initial-cluster \${ETCD_INITIAL_CLUSTER} \\

--initial-cluster-state new \\

--data-dir=\${ETCD_DATA_DIR}

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

SUCESS

echo "Start"

sleep 1

systemctl daemon-reload

systemctl enable etcd

systemctl restart etcd

systemctl status etcd
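Since the three etcd.conf files differ only in the member name and the node IP, one way to avoid hand-editing mistakes is to render the file from those two values. This is a sketch under the assumptions of this guide (same cluster members, same paths); `render_etcd_conf` is a hypothetical helper, not an etcd tool.

```shell
# Print an etcd.conf for one member; only the name and IP differ between nodes.
# Usage: render_etcd_conf MEMBER_NAME NODE_IP
render_etcd_conf() {
    local name ip
    name=$1
    ip=$2
    cat <<EOF
# [member]
ETCD_NAME=${name}
ETCD_DATA_DIR="/opt/etcd/data/"
ETCD_SSL_DIR="/opt/kubernetes/ssl"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"
#[cluster]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER="etcd01=https://192.168.2.9:2380,etcd02=https://192.168.2.10:2380,etcd03=https://192.168.2.11:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
EOF
}

# Example for k8s-node1:
# render_etcd_conf etcd02 192.168.2.10 > /opt/etcd/cfg/etcd.conf
```

The output path in the example is the one the etcd.service unit reads through EnvironmentFile.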

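3 Master node deployment

3.1 Deploy kube-apiserver

Download link: https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG-1.13.md#v1132

Only the server package is needed, because it already contains every component used in this guide.

Run on k8s-master

echo "Download the master binaries and move them into place"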

sleep 1

cd /usr/local/src/

wget https://dl.k8s.io/v1.13.2/kubernetes-server-linux-amd64.tar.gz

tar zxf kubernetes-server-linux-amd64.tar.gz

cd kubernetes/server/bin/

cp kubectl kube-scheduler kube-apiserver kube-controller-manager /opt/kubernetes/bin/

echo "Generate the token"

sleep 1

cat > /opt/kubernetes/cfg/token.csv << SUCESS

`head -c 16 /dev/urandom | od -An -t x | tr -d ' '`,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

SUCESS

echo "Generate the kube-apiserver config"

sleep 1

echo -e "\033[31mBe sure to change the etcd addresses, IPs, etc.\033[0m"

cat > /opt/kubernetes/cfg/kube-apiserver.conf << SUCESS

KUBE_APISERVER_OPTS="--logtostderr=true \\

--v=4 \\

--etcd-servers=https://192.168.2.9:2379,https://192.168.2.10:2379,https://192.168.2.11:2379 \\

--bind-address=192.168.2.9 \\

--secure-port=6443 \\

--advertise-address=192.168.2.9 \\

--allow-privileged=true \\

--service-cluster-ip-range=10.0.0.0/24 \\

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\

--authorization-mode=RBAC,Node \\

--kubelet-https=true \\

--enable-bootstrap-token-auth \\

--token-auth-file=/opt/kubernetes/cfg/token.csv \\

--service-node-port-range=30000-50000 \\

--tls-cert-file=/opt/kubernetes/ssl/kubernetes.pem \\

--tls-private-key-file=/opt/kubernetes/ssl/kubernetes-key.pem \\

--client-ca-file=/opt/kubernetes/ssl/ca.pem \\

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--etcd-cafile=/opt/kubernetes/ssl/ca.pem \\

--etcd-certfile=/opt/kubernetes/ssl/kubernetes.pem \\

--etcd-keyfile=/opt/kubernetes/ssl/kubernetes-key.pem"

SUCESS

echo "Generate the kube-apiserver systemd unit file"

sleep 1

cat > /usr/lib/systemd/system/kube-apiserver.service << SUCESS

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver.conf

ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

SUCESS

echo "Start"

sleep 1

systemctl daemon-reload

systemctl enable kube-apiserver

systemctl restart kube-apiserver

systemctl status kube-apiserver

3.2 Deploy kube-controller-manager

Run on k8s-master

echo "Generate the kube-controller-manager config file"

sleep 1

cat > /opt/kubernetes/cfg/kube-controller-manager.conf << SUCESS

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \\

--v=4 \\

--master=127.0.0.1:8080 \\

--leader-elect=true \\

--address=127.0.0.1 \\

--service-cluster-ip-range=10.0.0.0/24 \\

--cluster-name=kubernetes \\

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--root-ca-file=/opt/kubernetes/ssl/ca.pem \\

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--experimental-cluster-signing-duration=87600h0m0s"

SUCESS

echo "Generate the kube-controller-manager systemd unit file"

sleep 1

cat > /usr/lib/systemd/system/kube-controller-manager.service << SUCESS

[Unit]

Description=Kubernetes Controller Manager

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager.conf

ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

SUCESS

echo "Start"

sleep 1

systemctl daemon-reload

systemctl enable kube-controller-manager

systemctl restart kube-controller-manager

systemctl status kube-controller-manager

3.3 Deploy kube-scheduler

Run on k8s-master

echo "Generate the kube-scheduler config file"

sleep 1

cat > /opt/kubernetes/cfg/kube-scheduler << SUCESS

KUBE_SCHEDULER_OPTS="--logtostderr=true \\

--v=4 \\

--master=127.0.0.1:8080 \\

--leader-elect"

SUCESS

echo "Generate the kube-scheduler systemd unit file"

sleep 1

cat > /usr/lib/systemd/system/kube-scheduler.service << SUCESS

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler

ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

SUCESS

echo "Start"

sleep 1

systemctl daemon-reload

systemctl enable kube-scheduler

systemctl restart kube-scheduler

systemctl status kube-scheduler

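4 Node component deployment

4.1 Node authorization

Processes on the node machines, such as kubelet and kube-proxy, must be authenticated and authorized when they talk to kube-apiserver on the master.

Since kubernetes 1.4, kube-apiserver supports TLS Bootstrapping, in which it issues TLS certificates to clients, so there is no need to generate a certificate for every client by hand. All of the steps below are therefore run on the master.

kube-apiserver needs the token file for this; it was already created in 3.1 when deploying kube-apiserver.

Run on k8s-master

echo "Create the kubelet bootstrapping kubeconfig file"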

sleep 1

/opt/kubernetes/bin/kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=https://192.168.2.9:6443 \

--kubeconfig=bootstrap.kubeconfig

/opt/kubernetes/bin/kubectl config set-credentials kubelet-bootstrap \

--token=`cat /opt/kubernetes/cfg/token.csv |awk -F',' '{print $1}'` \

--kubeconfig=bootstrap.kubeconfig

/opt/kubernetes/bin/kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

/opt/kubernetes/bin/kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

echo "Create the kube-proxy kubeconfig file"

sleep 1

/opt/kubernetes/bin/kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=https://192.168.2.9:6443 \

--kubeconfig=kube-proxy.kubeconfig

/opt/kubernetes/bin/kubectl config set-credentials kube-proxy \

--client-certificate=/root/k8s/cre_ssl/kube-proxy.pem \

--client-key=/root/k8s/cre_ssl/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

/opt/kubernetes/bin/kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

/opt/kubernetes/bin/kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

echo "Distribute to node1 and node2"

sleep 1

scp bootstrap.kubeconfig kube-proxy.kubeconfig 192.168.2.10:/opt/kubernetes/cfg/

scp bootstrap.kubeconfig kube-proxy.kubeconfig 192.168.2.11:/opt/kubernetes/cfg/

echo "Bind the role"

/opt/kubernetes/bin/kubectl create clusterrolebinding kubelet-bootstrap \

--clusterrole=system:node-bootstrapper \

--user=kubelet-bootstrap

BOOTSTRAP_TOKEN is written both to the token.csv file used by kube-apiserver and to the bootstrap.kubeconfig file used by kubelet. If you regenerate BOOTSTRAP_TOKEN later, you need to:

- update token.csv and distribute it to /opt/kubernetes/cfg/ on all machines (master and nodes); copying it to the nodes is optional;

- regenerate bootstrap.kubeconfig and distribute it to /opt/kubernetes/cfg/ on every node;

- restart the kube-apiserver and kubelet processes;

- approve the kubelet CSR requests again.
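Because the same token has to appear in two files, it is easy for them to drift apart after a regeneration. The following sketch shows one way to produce a fresh token and to check that token.csv and bootstrap.kubeconfig still agree. Both function names are hypothetical, and the check assumes the kubeconfig stores the value on a line ending in `token: VALUE`, which is how `kubectl config set-credentials --token` writes it.

```shell
# Print a fresh 32-hex-character token (16 random bytes).
new_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

# Succeed when the token in token.csv is the one embedded in bootstrap.kubeconfig.
# Usage: token_in_sync TOKEN_CSV BOOTSTRAP_KUBECONFIG
token_in_sync() {
    local token
    token=$(cut -d, -f1 "$1" | head -n 1)   # first CSV field of the first line
    [ -n "$token" ] && grep -q "token: ${token}\$" "$2"
}

# Example:
# token_in_sync /opt/kubernetes/cfg/token.csv bootstrap.kubeconfig || echo "token mismatch"
```

If the check fails, regenerate bootstrap.kubeconfig from the current token.csv before restarting kubelet.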

4.2 Deploy flannel

Download link: https://github.com/coreos/flannel/releases

Run on k8s-master

alias etcdctl="etcdctl --endpoints='https://192.168.2.9:2379,https://192.168.2.10:2379,https://192.168.2.11:2379' --ca-file=/opt/kubernetes/ssl/ca.pem --cert-file=/opt/kubernetes/ssl/kubernetes.pem --key-file=/opt/kubernetes/ssl/kubernetes-key.pem"

etcdctl mkdir /k8s-flannel/network

etcdctl mk /k8s-flannel/network/config '{"Network":"172.17.0.0/16","SubnetLen":24,"Backend":{"Type":"vxlan"}}'

etcdctl ls /k8s-flannel/network

etcdctl ls /k8s-flannel/network/config

etcdctl get /k8s-flannel/network/config

Run on k8s-node1 and k8s-node2

echo "Download the binaries and move them into place"

sleep 1

cd /usr/local/src/

wget https://github.com/coreos/flannel/releases/download/v0.10.0/flannel-v0.10.0-linux-amd64.tar.gz

tar zxf flannel-v0.10.0-linux-amd64.tar.gz

mv flanneld mk-docker-opts.sh /opt/kubernetes/bin/

echo "Generate the flannel config"

sleep 1

cat > /opt/kubernetes/cfg/flanneld.conf << SUCESS

FLANNEL_OPTIONS="-etcd-prefix=/k8s-flannel/network \

-etcd-endpoints=https://192.168.2.9:2379,https://192.168.2.10:2379,https://192.168.2.11:2379 \

-etcd-cafile=/opt/kubernetes/ssl/ca.pem \

-etcd-certfile=/opt/kubernetes/ssl/kubernetes.pem \

-etcd-keyfile=/opt/kubernetes/ssl/kubernetes-key.pem"

SUCESS

echo "Generate the flannel systemd unit file"

sleep 1

cat > /usr/lib/systemd/system/flanneld.service << SUCESS

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld.conf

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq \$FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

SUCESS

systemctl daemon-reload

systemctl enable flanneld

systemctl restart flanneld

systemctl status flanneld

echo "Make docker use the flannel network"

ExecStartNum=`grep -n 'ExecStart' /usr/lib/systemd/system/docker.service|awk -F: '{print $1}'`

if [[ ${ExecStartNum}x != "x" ]];then sed -i "${ExecStartNum}i\EnvironmentFile=/run/flannel/subnet.env" /usr/lib/systemd/system/docker.service;sed -r -i "s#ExecStart=(.*)#ExecStart=\1 \$DOCKER_NETWORK_OPTIONS#g" /usr/lib/systemd/system/docker.service;fi

systemctl daemon-reload

systemctl restart docker
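Note that the sed one-liner above adds the EnvironmentFile line and the extra option again every time it is run. As an alternative sketch (`patch_docker_unit` is a hypothetical helper, and it uses awk instead of the in-place sed), the same edit can be made so that a second run is a no-op:

```shell
# Add the flannel EnvironmentFile and $DOCKER_NETWORK_OPTIONS to a docker unit file,
# doing nothing if the EnvironmentFile line is already there.
# Usage: patch_docker_unit /usr/lib/systemd/system/docker.service
patch_docker_unit() {
    local unit tmp rc
    unit=$1
    if grep -q '^EnvironmentFile=/run/flannel/subnet.env$' "$unit"; then
        return 0    # already patched
    fi
    tmp=$(mktemp) || return 1
    # Only the ExecStart= line is touched; ExecStartPre/ExecStartPost are left alone.
    awk '/^ExecStart=/ {
             print "EnvironmentFile=/run/flannel/subnet.env"
             print $0 " $DOCKER_NETWORK_OPTIONS"
             next
         }
         { print }' "$unit" > "$tmp" && cat "$tmp" > "$unit"
    rc=$?
    rm -f "$tmp"
    return "$rc"
}
```

After patching, `systemctl daemon-reload` and `systemctl restart docker` are still needed, as in the steps above.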

echo "Check that docker is using the flannel network"

alias etcdctl="etcdctl --endpoints=https://192.168.2.9:2379,https://192.168.2.10:2379,https://192.168.2.11:2379 --ca-file=/opt/kubernetes/ssl/ca.pem --cert-file=/opt/kubernetes/ssl/kubernetes.pem --key-file=/opt/kubernetes/ssl/kubernetes-key.pem"

ip add

etcdctl ls /k8s-flannel/network

etcdctl ls /k8s-flannel/network/subnets
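Reading the `ip add` output by eye works, but the comparison can also be scripted. The sketch below assumes that the file written by mk-docker-opts.sh contains a `--bip=ADDRESS/PREFIX` option somewhere in it; I have not pinned down the exact layout of that file, so only that one token is relied on. The function names are my own.

```shell
# Print the --bip value (docker0 address/prefix) found in a docker options file.
# Default path: the file the flanneld.service in this guide writes (an assumption).
docker_bip() {
    sed -n 's/.*--bip=\([0-9.]*\/[0-9]*\).*/\1/p' "${1:-/run/flannel/subnet.env}" | head -n 1
}

# Succeed when the given interface address equals the --bip value in the file.
# Usage: bip_matches ADDRESS/PREFIX [OPTIONS_FILE]
bip_matches() {
    [ -n "$1" ] && [ "$1" = "$(docker_bip "$2")" ]
}

# Example on a node (field 4 of `ip -4 -o addr show` is the address/prefix):
# bip_matches "$(ip -4 -o addr show docker0 | awk '{print $4}')" && echo "docker0 is on the flannel subnet"
```

A mismatch usually means docker was not restarted after flanneld came up.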

4.3 Deploy kubelet

Run on k8s-node1 and k8s-node2; change the IP to match each node

echo "Copy the binaries"

sleep 1

scp 192.168.2.9:/usr/local/src/kubernetes/server/bin/{kubelet,kube-proxy} /opt/kubernetes/bin/

echo "Generate the kubelet options file"

sleep 1

cat > /opt/kubernetes/cfg/kubelet.conf << SUCESS

KUBELET_OPTS="--logtostderr=true \\

--v=4 \\

--address=192.168.2.10 \\

--hostname-override=192.168.2.10 \\

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \\

--experimental-bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \\

--config=/opt/kubernetes/cfg/kubelet.config \\

--cert-dir=/opt/kubernetes/ssl \\

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

SUCESS

echo "Generate the kubelet config file (KubeletConfiguration)"

sleep 1

cat > /opt/kubernetes/cfg/kubelet.config << SUCESS

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 192.168.2.10

port: 10250

cgroupDriver: cgroupfs

clusterDNS:

- 10.0.0.2

clusterDomain: cluster.local.

failSwapOn: false

SUCESS

echo "Generate the kubelet systemd unit file"

sleep 1

cat > /usr/lib/systemd/system/kubelet.service<< SUCESS

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet.conf

ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

SUCESS

echo "Start"

sleep 1

systemctl daemon-reload

systemctl enable kubelet

systemctl restart kubelet

systemctl status kubelet

4.4 Deploy kube-proxy

Run on k8s-node1 and k8s-node2; change the IP to match each node

echo "Generate the kube-proxy config file"

cat > /opt/kubernetes/cfg/kube-proxy.conf << SUCESS

KUBE_PROXY_OPTS="--logtostderr=true \\

--v=4 \\

--hostname-override=192.168.2.10 \\

--cluster-cidr=172.17.0.0/16 \\

--proxy-mode=ipvs \\

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"

SUCESS

echo "Generate the kube-proxy systemd unit file"

cat > /usr/lib/systemd/system/kube-proxy.service << SUCESS

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy.conf

ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

SUCESS

echo "Start"

systemctl daemon-reload

systemctl enable kube-proxy

systemctl restart kube-proxy

systemctl status kube-proxy

4.5 Approve the kubelet TLS certificate requests

Run on k8s-master

# Add the binaries to PATH

echo 'export PATH=$PATH:/opt/kubernetes/bin' >> /etc/profile

# List the TLS requests

[root@k8s-master cfg]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-9zVbumZwMCkqjIYv2wMgc1d7RF3Pp4u3woR1QEvhf-U 9m kubelet-bootstrap Pending

node-csr-NGBaWrdKGYQlNmhzfFV5K5EOjf_YePNtU_WUzu52LRU 8m46s kubelet-bootstrap Pending

# Approve the requests

[root@k8s-master cfg]# kubectl certificate approve node-csr-9zVbumZwMCkqjIYv2wMgc1d7RF3Pp4u3woR1QEvhf-U

certificatesigningrequest.certificates.k8s.io/node-csr-9zVbumZwMCkqjIYv2wMgc1d7RF3Pp4u3woR1QEvhf-U approved

[root@k8s-master cfg]# /opt/kubernetes/bin/kubectl certificate approve node-csr-NGBaWrdKGYQlNmhzfFV5K5EOjf_YePNtU_WUzu52LRU

certificatesigningrequest.certificates.k8s.io/node-csr-NGBaWrdKGYQlNmhzfFV5K5EOjf_YePNtU_WUzu52LRU approved

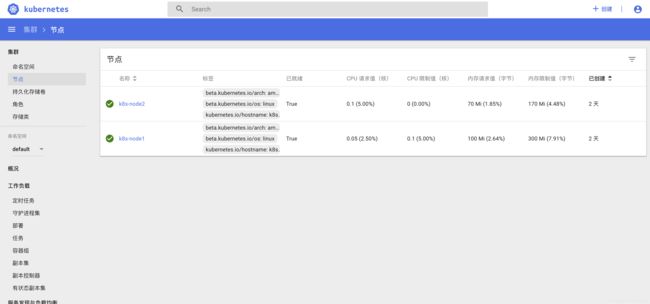

# List the nodes

[root@k8s-master cfg]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-node1 Ready <none> 11m v1.13.2

k8s-node2 Ready <none> 15s v1.13.2
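With more than a couple of nodes, copying each CSR name by hand gets tedious. A possible shortcut, sketched here with a hypothetical `pending_csr_names` helper, is to filter the `kubectl get csr` table for rows whose last column is Pending and approve those:

```shell
# Read `kubectl get csr` output on stdin and print the names whose CONDITION is Pending.
pending_csr_names() {
    # NR > 1 skips the header row; $NF is the last column (CONDITION).
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Example on the master:
# kubectl get csr | pending_csr_names | while read -r name; do
#     kubectl certificate approve "$name"
# done
```

Only approve requests from nodes you actually expect to join; the filter does not check who sent them.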

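5 Base add-on installation

5.1 Dashboard deployment

Note: my machines are in Hong Kong, so I did not need to change the image address. If you are on a mainland China network, you can change the image source in dashboard-controller.yaml to:

(still to be found...)

echo "Fetch the dashboard yaml files"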

cd /usr/local/src/

wget https://github.com/kubernetes/kubernetes/releases/download/v1.13.2/kubernetes.tar.gz

tar zxf kubernetes.tar.gz

cp -r /usr/local/src/kubernetes/cluster/addons/dashboard/ /root/k8s/

cd /root/k8s/dashboard/

kubectl create -f dashboard-configmap.yaml

kubectl create -f dashboard-rbac.yaml

kubectl create -f dashboard-secret.yaml

kubectl create -f dashboard-controller.yaml

sed -i '/selector/i\  type: NodePort' dashboard-service.yaml

kubectl create -f dashboard-service.yaml

kubectl get svc -n kube-system

echo "Open https://YOUR_NODE_IP:PORT (PORT comes from the previous command) to reach the dashboard"

sleep 2

echo "Grant access"

cat > k8s-admin.yaml << SUCESS
apiVersion: v1
kind: ServiceAccount
metadata:
  name: dashboard-admin
  namespace: kube-system
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: dashboard-admin
subjects:
  - kind: ServiceAccount
    name: dashboard-admin
    namespace: kube-system
roleRef:
  kind: ClusterRole
  name: cluster-admin
  apiGroup: rbac.authorization.k8s.io
SUCESS

kubectl create -f k8s-admin.yaml

echo "From here on, the secret name and token value differ for everyone; use your own"

sleep 3

kubectl get secret -n kube-system

kubectl describe secret dashboard-admin-token-bmh7l -n kube-system

echo "Copy the token and log in"
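Since the secret name carries a random suffix, the lookup can be scripted rather than copied from the listing. This is a sketch with two hypothetical helpers that only parse the text output of the two kubectl commands above; it assumes the describe output has a line starting with `token:`.

```shell
# Read `kubectl get secret -n kube-system` output on stdin;
# print the name of the dashboard-admin token secret.
dashboard_secret_name() {
    awk '$1 ~ /^dashboard-admin-token-/ { print $1; exit }'
}

# Read `kubectl describe secret ...` output on stdin;
# print the value of its "token:" field.
secret_token() {
    awk '$1 == "token:" { print $2; exit }'
}

# Example on the master:
# name=$(kubectl get secret -n kube-system | dashboard_secret_name)
# kubectl describe secret "$name" -n kube-system | secret_token
```

The printed value is what the dashboard login page expects in its token field.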

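5.2 In-cluster CoreDNS deployment

The kubelet configuration above (kubelet.config) already preset the in-cluster DNS address, so the address used below is that same preset address.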

cp -r /usr/local/src/kubernetes/cluster/addons/dns/coredns/ /root/k8s/

cd /root/k8s/coredns/

sed -i 's#$DNS_DOMAIN#cluster.local#g' coredns.yaml.sed

sed -i 's#$DNS_SERVER_IP#10.0.0.2#g' coredns.yaml.sed

echo "On a mainland China network, run the next command to change the image source"

sed -i 's#k8s.gcr.io#coredns#g' coredns.yaml.sed

echo "Deploy"

kubectl apply -f coredns.yaml.sed
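One thing worth checking if you change any of the addresses: the DNS service IP (10.0.0.2 here) has to lie inside the service-cluster-ip-range given to kube-apiserver (10.0.0.0/24 here), otherwise the Service cannot be created with that IP. A small sketch of that check in plain shell follows; the function names are my own.

```shell
# Convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
    local a b c d
    IFS=. read -r a b c d <<EOF
$1
EOF
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Succeed when IP lies inside CIDR.
# Usage: ip_in_cidr IP CIDR
ip_in_cidr() {
    local ip net prefix mask
    ip=$1
    net=${2%/*}
    prefix=${2#*/}
    mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
    # Same network bits on both sides means the address is in the range.
    [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

# Example with the values from this guide:
# ip_in_cidr 10.0.0.2 10.0.0.0/24 && echo "DNS IP is inside the service range"
```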