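HTML5 MSE Standard Brings Ultra-Low-Latency Playback to Mobile Live-Streaming Applications

Original article: http://www.zhiboshequ.com/news/604.html

As mobile Internet applications have become ubiquitous, the quality of video playback on mobile devices is receiving more and more attention.

On 17 November 2016, the HTML5 MSE (Media Source Extensions) standard, driven by Internet giants such as Google and Microsoft, was officially published. This signals that the HLS protocol published by Apple will soon leave the stage; like the Adobe Flash Player on the PC, it will be replaced by a newer, more general technical standard. These changes will ultimately improve video playback for end users considerably and will no doubt be widely supported and embraced by the industry.

Below is the MSE Recommendation. Some companies in China have already implemented it in their products.

The original text of the standard follows: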

Media Source Extensions™

W3C Recommendation 17 November 2016

- This version: https://www.w3.org/TR/2016/REC-media-source-20161117/

- Latest published version: https://www.w3.org/TR/media-source/

- Latest editor's draft: https://w3c.github.io/media-source/

- Previous version: https://www.w3.org/TR/2016/PR-media-source-20161004/


Please check the errata for any errors or issues reported since publication.

The English version of this specification is the only normative version. Non-normative translations may also be available.

Copyright © 2016 W3C® (MIT, ERCIM, Keio, Beihang). W3C liability, trademark and permissive document license rules apply.

Abstract

This specification extends HTMLMediaElement [HTML51] to allow JavaScript to generate media streams for playback. Allowing JavaScript to generate streams facilitates a variety of use cases like adaptive streaming and time shifting live streams.

Status of This Document

This section describes the status of this document at the time of its publication. Other documents may supersede this document. A list of current W3C publications and the latest revision of this technical report can be found in the W3C technical reports index at https://www.w3.org/TR/.

The working group maintains a list of all bug reports. New features for this specification are expected to be incubated in the Web Platform Incubator Community Group.

One editorial issue (removing the exposure of createObjectURL(mediaSource) in workers) was addressed since the previous publication. For the list of changes done since the previous version, see the commits.

By publishing this Recommendation, W3C expects the functionality specified in this Recommendation will not be affected by changes to File API. The Working Group will continue to track these specifications.

This document was published by the HTML Media Extensions Working Group as a Recommendation. If you wish to make comments regarding this document, the GitHub repository is preferred for discussion of this specification. Historical discussion can also be found in the mailing list archives.

In September 2016, the Working Group used an implementation report to move this document to Recommendation.

This document has been reviewed by W3C Members, by software developers, and by other W3C groups and interested parties, and is endorsed by the Director as a W3C Recommendation. It is a stable document and may be used as reference material or cited from another document. W3C's role in making the Recommendation is to draw attention to the specification and to promote its widespread deployment. This enhances the functionality and interoperability of the Web.

This document was produced by a group operating under the 5 February 2004 W3C Patent Policy. W3C maintains a public list of any patent disclosures made in connection with the deliverables of the group; that page also includes instructions for disclosing a patent. An individual who has actual knowledge of a patent which the individual believes contains Essential Claim(s) must disclose the information in accordance with section 6 of the W3C Patent Policy.

This document is governed by the 1 September 2015 W3C Process Document.

Table of Contents

- 1.Introduction

- 1.1Goals

- 1.2Definitions

- 2.MediaSource Object

- 2.1Attributes

- 2.2Methods

- 2.3Event Summary

- 2.4Algorithms

- 2.4.1Attaching to a media element

- 2.4.2Detaching from a media element

- 2.4.3Seeking

- 2.4.4SourceBuffer Monitoring

- 2.4.5Changes to selected/enabled track state

- 2.4.6Duration change

- 2.4.7End of stream algorithm

- 3.SourceBuffer Object

- 3.1Attributes

- 3.2Methods

- 3.3Track Buffers

- 3.4Event Summary

- 3.5Algorithms

- 3.5.1Segment Parser Loop

- 3.5.2Reset Parser State

- 3.5.3Append Error Algorithm

- 3.5.4Prepare Append Algorithm

- 3.5.5Buffer Append Algorithm

- 3.5.6Range Removal

- 3.5.7Initialization Segment Received

- 3.5.8Coded Frame Processing

- 3.5.9Coded Frame Removal Algorithm

- 3.5.10Coded Frame Eviction Algorithm

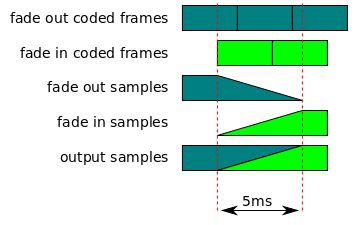

- 3.5.11Audio Splice Frame Algorithm

- 3.5.12Audio Splice Rendering Algorithm

- 3.5.13Text Splice Frame Algorithm

- 4.SourceBufferList Object

- 4.1Attributes

- 4.2Methods

- 4.3Event Summary

- 5.URL Object Extensions

- 5.1Methods

- 6.HTMLMediaElement Extensions

- 7.AudioTrack Extensions

- 8.VideoTrack Extensions

- 9.TextTrack Extensions

- 10.Byte Stream Formats

- 11.Conformance

- 12.Examples

- 13.Acknowledgments

- A.VideoPlaybackQuality

- B.References

- B.1Normative references

- B.2Informative references

1. Introduction

This section is non-normative.

This specification allows JavaScript to dynamically construct media streams for the audio and video elements.

1.1 Goals

This specification was designed with the following goals in mind:

- Allow JavaScript to construct media streams independent of how the media is fetched.

- Define a splicing and buffering model that facilitates use cases like adaptive streaming, ad-insertion, time-shifting, and video editing.

- Minimize the need for media parsing in JavaScript.

- Leverage the browser cache as much as possible.

- Provide requirements for byte stream format specifications.

- Not require support for any particular media format or codec.

This specification defines:

- Normative behavior for user agents to enable interoperability between user agents and web applications when processing media data.

- Normative requirements to enable other specifications to define media formats to be used within this specification.

1.2 Definitions

- Active Track Buffers

The track buffers that provide coded frames for the enabled audioTracks, the selected videoTracks, and the "showing" or "hidden" textTracks. All these tracks are associated with SourceBuffer objects in the activeSourceBuffers list.

- Append Window

A presentation timestamp range used to filter out coded frames while appending. The append window represents a single continuous time range with a single start time and end time. Coded frames with presentation timestamp within this range are allowed to be appended to the SourceBuffer while coded frames outside this range are filtered out. The append window start and end times are controlled by the appendWindowStart and appendWindowEnd attributes respectively.

- Coded Frame

A unit of media data that has a presentation timestamp, a decode timestamp, and a coded frame duration.

- Coded Frame Duration

The duration of a coded frame. For video and text, the duration indicates how long the video frame or text SHOULD be displayed. For audio, the duration represents the sum of all the samples contained within the coded frame. For example, if an audio frame contained 441 samples @44100Hz the frame duration would be 10 milliseconds.

- Coded Frame End Timestamp

The sum of a coded frame presentation timestamp and its coded frame duration. It represents the presentation timestamp that immediately follows the coded frame.

- Coded Frame Group

A group of coded frames that are adjacent and have monotonically increasing decode timestamps without any gaps. Discontinuities detected by the coded frame processing algorithm and abort() calls trigger the start of a new coded frame group.

- Decode Timestamp

The decode timestamp indicates the latest time at which the frame needs to be decoded assuming instantaneous decoding and rendering of this and any dependant frames (this is equal to the presentation timestamp of the earliest frame, in presentation order, that is dependant on this frame). If frames can be decoded out of presentation order, then the decode timestamp MUST be present in or derivable from the byte stream. The user agent MUST run the append error algorithm if this is not the case. If frames cannot be decoded out of presentation order and a decode timestamp is not present in the byte stream, then the decode timestamp is equal to the presentation timestamp.

- Initialization Segment

A sequence of bytes that contain all of the initialization information required to decode a sequence of media segments. This includes codec initialization data, Track ID mappings for multiplexed segments, and timestamp offsets (e.g., edit lists).

Note: The byte stream format specifications in the byte stream format registry [MSE-REGISTRY] contain format specific examples.

- Media Segment

A sequence of bytes that contain packetized & timestamped media data for a portion of the media timeline. Media segments are always associated with the most recently appended initialization segment.

Note: The byte stream format specifications in the byte stream format registry [MSE-REGISTRY] contain format specific examples.

- MediaSource object URL

A MediaSource object URL is a unique Blob URI [FILE-API] created by createObjectURL(). It is used to attach a MediaSource object to an HTMLMediaElement.

These URLs are the same as a Blob URI, except that anything in the definition of that feature that refers to File and Blob objects is hereby extended to also apply to MediaSource objects.

The origin of the MediaSource object URL is the relevant settings object of this during the call to createObjectURL().

Note: For example, the origin of the MediaSource object URL affects the way that the media element is consumed by canvas.

- Parent Media Source

The parent media source of a SourceBuffer object is the MediaSource object that created it.

- Presentation Start Time

The presentation start time is the earliest time point in the presentation and specifies the initial playback position and earliest possible position. All presentations created using this specification have a presentation start time of 0.

Note: For the purposes of determining if HTMLMediaElement.buffered contains a TimeRange that includes the current playback position, implementations MAY choose to allow a current playback position at or after presentation start time and before the first TimeRange to play the first TimeRange if that TimeRange starts within a reasonably short time, like 1 second, after presentation start time. This allowance accommodates the reality that muxed streams commonly do not begin all tracks precisely at presentation start time. Implementations MUST report the actual buffered range, regardless of this allowance.

- Presentation Interval

The presentation interval of a coded frame is the time interval from its presentation timestamp to the presentation timestamp plus the coded frame's duration. For example, if a coded frame has a presentation timestamp of 10 seconds and a coded frame duration of 100 milliseconds, then the presentation interval would be [10-10.1). Note that the start of the range is inclusive, but the end of the range is exclusive.

- Presentation Order

The order that coded frames are rendered in the presentation. The presentation order is achieved by ordering coded frames in monotonically increasing order by their presentation timestamps.

- Presentation Timestamp

A reference to a specific time in the presentation. The presentation timestamp in a coded frame indicates when the frame SHOULD be rendered.

- Random Access Point

A position in a media segment where decoding and continuous playback can begin without relying on any previous data in the segment. For video this tends to be the location of I-frames. In the case of audio, most audio frames can be treated as a random access point. Since video tracks tend to have a more sparse distribution of random access points, the location of these points are usually considered the random access points for multiplexed streams.

- SourceBuffer byte stream format specification

The specific byte stream format specification that describes the format of the byte stream accepted by a SourceBuffer instance. The byte stream format specification, for a SourceBuffer object, is selected based on the type passed to the addSourceBuffer() call that created the object.

- SourceBuffer configuration

A specific set of tracks distributed across one or more SourceBuffer objects owned by a single MediaSource instance.

Implementations MUST support at least 1 MediaSource object with the following configurations:

  - A single SourceBuffer with 1 audio track and/or 1 video track.
  - Two SourceBuffers with one handling a single audio track and the other handling a single video track.

MediaSource objects MUST support each of the configurations above, but they are only required to support one configuration at a time. Supporting multiple configurations at once or additional configurations is a quality of implementation issue.

- Track Description

A byte stream format specific structure that provides the Track ID, codec configuration, and other metadata for a single track. Each track description inside a single initialization segment has a unique Track ID. The user agent MUST run the append error algorithm if the Track ID is not unique within the initialization segment.

- Track ID

A Track ID is a byte stream format specific identifier that marks sections of the byte stream as being part of a specific track. The Track ID in a track description identifies which sections of a media segment belong to that track.
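The timing definitions above (coded frame duration, coded frame end timestamp, presentation interval and presentation order) reduce to a little arithmetic. The following is a minimal sketch of that arithmetic, not part of the specification: the function names and the `{ pts, duration }` frame shape (both in seconds) are assumptions made for illustration.

```javascript
// Hypothetical helpers that model the timing definitions above.
// A frame is represented as { pts, duration }, both in seconds (an assumption).

// Coded frame duration of an audio frame: sample count divided by sample rate.
function audioFrameDuration(sampleCount, sampleRateHz) {
  return sampleCount / sampleRateHz;
}

// Coded frame end timestamp: presentation timestamp plus coded frame duration.
function codedFrameEndTimestamp(frame) {
  return frame.pts + frame.duration;
}

// Presentation interval membership: the start is inclusive, the end is exclusive.
function inPresentationInterval(frame, time) {
  return time >= frame.pts && time < codedFrameEndTimestamp(frame);
}

// Presentation order: a copy sorted by increasing presentation timestamp.
function inPresentationOrder(frames) {
  return [...frames].sort((a, b) => a.pts - b.pts);
}

const exampleFrame = { pts: 10, duration: 0.5 };
console.log(audioFrameDuration(441, 44100));             // seconds for 441 samples at 44100 Hz
console.log(inPresentationInterval(exampleFrame, 10));   // start of the interval is included
console.log(inPresentationInterval(exampleFrame, 10.5)); // end of the interval is excluded
```

The example frame uses a duration of 0.5 seconds rather than the 100 milliseconds from the definition so that the end timestamp is exactly representable in floating point.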

2. MediaSource Object

The MediaSource object represents a source of media data for an HTMLMediaElement. It keeps track of the readyState for this source as well as a list of SourceBuffer objects that can be used to add media data to the presentation. MediaSource objects are created by the web application and then attached to an HTMLMediaElement. The application uses the SourceBuffer objects in sourceBuffers to add media data to this source. The HTMLMediaElement fetches this media data from the MediaSource object when it is needed during playback.

Each MediaSource object has a live seekable range variable that stores a normalized TimeRanges object. This variable is initialized to an empty TimeRanges object when the MediaSource object is created, is maintained by setLiveSeekableRange() and clearLiveSeekableRange(), and is used in HTMLMediaElement Extensions to modify HTMLMediaElement.seekable behavior.

    enum ReadyState {
        "closed",
        "open",
        "ended"
    };

| Enumeration | Description |
|---|---|
| closed | Indicates the source is not currently attached to a media element. |
| open | The source has been opened by a media element and is ready for data to be appended to the SourceBuffer objects in sourceBuffers. |
| ended | The source is still attached to a media element, but endOfStream() has been called. |

    enum EndOfStreamError {
        "network",
        "decode"
    };

| Enumeration | Description |
|---|---|
| network | Terminates playback and signals that a network error has occurred. Note: JavaScript applications SHOULD use this status code to terminate playback with a network error. For example, if a network error occurs while fetching media data. |
| decode | Terminates playback and signals that a decoding error has occurred. Note: JavaScript applications SHOULD use this status code to terminate playback with a decode error. For example, if a parsing error occurs while processing out-of-band media data. |

    [Constructor]
    interface MediaSource : EventTarget {
        readonly attribute SourceBufferList    sourceBuffers;
        readonly attribute SourceBufferList    activeSourceBuffers;
        readonly attribute ReadyState          readyState;
                 attribute unrestricted double duration;
                 attribute EventHandler        onsourceopen;
                 attribute EventHandler        onsourceended;
                 attribute EventHandler        onsourceclose;
        SourceBuffer addSourceBuffer(DOMString type);
        void         removeSourceBuffer(SourceBuffer sourceBuffer);
        void         endOfStream(optional EndOfStreamError error);
        void         setLiveSeekableRange(double start, double end);
        void         clearLiveSeekableRange();
        static boolean isTypeSupported(DOMString type);
    };

2.1 Attributes

- sourceBuffers of type SourceBufferList, readonly

Contains the list of SourceBuffer objects associated with this MediaSource. When readyState equals "closed" this list will be empty. Once readyState transitions to "open" SourceBuffer objects can be added to this list by using addSourceBuffer().

- activeSourceBuffers of type SourceBufferList, readonly

Contains the subset of sourceBuffers that are providing the selected video track, the enabled audio track(s), and the "showing" or "hidden" text track(s).

SourceBuffer objects in this list MUST appear in the same order as they appear in the sourceBuffers attribute; e.g., if only sourceBuffers[0] and sourceBuffers[3] are in activeSourceBuffers, then activeSourceBuffers[0] MUST equal sourceBuffers[0] and activeSourceBuffers[1] MUST equal sourceBuffers[3].

Note: The Changes to selected/enabled track state section describes how this attribute gets updated.

- readyState of type ReadyState, readonly

Indicates the current state of the MediaSource object. When the MediaSource is created readyState MUST be set to "closed".

- duration of type unrestricted double

Allows the web application to set the presentation duration. The duration is initially set to NaN when the MediaSource object is created.

On getting, run the following steps:

1. If the readyState attribute is "closed" then return NaN and abort these steps.
2. Return the current value of the attribute.

On setting, run the following steps:

1. If the value being set is negative or NaN then throw a TypeError exception and abort these steps.
2. If the readyState attribute is not "open" then throw an InvalidStateError exception and abort these steps.
3. If the updating attribute equals true on any SourceBuffer in sourceBuffers, then throw an InvalidStateError exception and abort these steps.
4. Run the duration change algorithm with new duration set to the value being assigned to this attribute.

   Note: The duration change algorithm will adjust new duration higher if there is any currently buffered coded frame with a higher end time.

   Note: appendBuffer() and endOfStream() can update the duration under certain circumstances.

- onsourceopen of type EventHandler

The event handler for the sourceopen event.

- onsourceended of type EventHandler

The event handler for the sourceended event.

- onsourceclose of type EventHandler

The event handler for the sourceclose event.
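The getting and setting steps for the duration attribute can be sketched as plain functions. This is an illustrative model under stated assumptions, not how a user agent implements it: `source` is a plain object standing in for a MediaSource, `highestBufferedEnd` is a hypothetical field representing the highest end time of any buffered coded frame, and the duration change algorithm is reduced to the single upward adjustment mentioned in the note.

```javascript
// Stand-in for the DOM InvalidStateError; a real user agent throws a DOMException.
function invalidStateError(message) {
  const err = new Error(message);
  err.name = "InvalidStateError";
  return err;
}

// `source` is a plain object standing in for a MediaSource (assumption):
// { readyState, duration, sourceBuffers: [{ updating }], highestBufferedEnd }
function getDuration(source) {
  if (source.readyState === "closed") return NaN; // getter step 1
  return source.duration;                         // getter step 2
}

function setDuration(source, value) {
  // Setter step 1: reject negative values and NaN.
  if (value < 0 || Number.isNaN(value)) {
    throw new TypeError("duration must not be negative or NaN");
  }
  // Setter step 2: the source must be open.
  if (source.readyState !== "open") {
    throw invalidStateError("readyState is not open");
  }
  // Setter step 3: no SourceBuffer may be in the middle of an update.
  if (source.sourceBuffers.some((sb) => sb.updating)) {
    throw invalidStateError("a SourceBuffer is still updating");
  }
  // Setter step 4, simplified: never go below the highest buffered end time.
  source.duration = Math.max(value, source.highestBufferedEnd);
}

const exampleSource = {
  readyState: "open",
  duration: NaN,
  sourceBuffers: [{ updating: false }],
  highestBufferedEnd: 12,
};
setDuration(exampleSource, 30);
console.log(getDuration(exampleSource)); // 30
```

In application code the practical consequence is the order of checks: wait until no SourceBuffer is updating before assigning to duration, otherwise the assignment throws.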

2.2 Methods

- addSourceBuffer

Adds a new SourceBuffer to sourceBuffers.

| Parameter | Type | Nullable | Optional | Description |
|---|---|---|---|---|
| type | DOMString | ✘ | ✘ | |

Return type: SourceBuffer

When this method is invoked, the user agent must run the following steps:

1. If type is an empty string then throw a TypeError exception and abort these steps.
2. If type contains a MIME type that is not supported or contains a MIME type that is not supported with the types specified for the other SourceBuffer objects in sourceBuffers, then throw a NotSupportedError exception and abort these steps.
3. If the user agent can't handle any more SourceBuffer objects or if creating a SourceBuffer based on type would result in an unsupported SourceBuffer configuration, then throw a QuotaExceededError exception and abort these steps.

   Note: For example, a user agent MAY throw a QuotaExceededError exception if the media element has reached the HAVE_METADATA readyState. This can occur if the user agent's media engine does not support adding more tracks during playback.
4. If the readyState attribute is not in the "open" state then throw an InvalidStateError exception and abort these steps.
5. Create a new SourceBuffer object and associated resources.
6. Set the generate timestamps flag on the new object to the value in the "Generate Timestamps Flag" column of the byte stream format registry [MSE-REGISTRY] entry that is associated with type.
7. Set the mode attribute on the new object:
   - If the generate timestamps flag equals true: set the mode attribute on the new object to "sequence".
   - Otherwise: set the mode attribute on the new object to "segments".
8. Add the new object to sourceBuffers and queue a task to fire a simple event named addsourcebuffer at sourceBuffers.
9. Return the new object.

- removeSourceBuffer

Removes a SourceBuffer from sourceBuffers.

| Parameter | Type | Nullable | Optional | Description |
|---|---|---|---|---|
| sourceBuffer | SourceBuffer | ✘ | ✘ | |

Return type: void

When this method is invoked, the user agent must run the following steps:

1. If sourceBuffer specifies an object that is not in sourceBuffers then throw a NotFoundError exception and abort these steps.
2. If the sourceBuffer.updating attribute equals true, then run the following steps:
   1. Abort the buffer append algorithm if it is running.
   2. Set the sourceBuffer.updating attribute to false.
   3. Queue a task to fire a simple event named abort at sourceBuffer.
   4. Queue a task to fire a simple event named updateend at sourceBuffer.
3. Let SourceBuffer audioTracks list equal the AudioTrackList object returned by sourceBuffer.audioTracks.
4. If the SourceBuffer audioTracks list is not empty, then run the following steps:
   1. Let HTMLMediaElement audioTracks list equal the AudioTrackList object returned by the audioTracks attribute on the HTMLMediaElement.
   2. For each AudioTrack object in the SourceBuffer audioTracks list, run the following steps:
      1. Set the sourceBuffer attribute on the AudioTrack object to null.
      2. Remove the AudioTrack object from the HTMLMediaElement audioTracks list.

         Note: This should trigger AudioTrackList [HTML51] logic to queue a task to fire a trusted event named removetrack, that does not bubble and is not cancelable, and that uses the TrackEvent interface, with the track attribute initialized to the AudioTrack object, at the HTMLMediaElement audioTracks list. If the enabled attribute on the AudioTrack object was true at the beginning of this removal step, then this should also trigger AudioTrackList [HTML51] logic to queue a task to fire a simple event named change at the HTMLMediaElement audioTracks list.
      3. Remove the AudioTrack object from the SourceBuffer audioTracks list.

         Note: This should trigger AudioTrackList [HTML51] logic to queue a task to fire a trusted event named removetrack, that does not bubble and is not cancelable, and that uses the TrackEvent interface, with the track attribute initialized to the AudioTrack object, at the SourceBuffer audioTracks list. If the enabled attribute on the AudioTrack object was true at the beginning of this removal step, then this should also trigger AudioTrackList [HTML51] logic to queue a task to fire a simple event named change at the SourceBuffer audioTracks list.
5. Let SourceBuffer videoTracks list equal the VideoTrackList object returned by sourceBuffer.videoTracks.
6. If the SourceBuffer videoTracks list is not empty, then run the following steps:
   1. Let HTMLMediaElement videoTracks list equal the VideoTrackList object returned by the videoTracks attribute on the HTMLMediaElement.
   2. For each VideoTrack object in the SourceBuffer videoTracks list, run the following steps:
      1. Set the sourceBuffer attribute on the VideoTrack object to null.
      2. Remove the VideoTrack object from the HTMLMediaElement videoTracks list.

         Note: This should trigger VideoTrackList [HTML51] logic to queue a task to fire a trusted event named removetrack, that does not bubble and is not cancelable, and that uses the TrackEvent interface, with the track attribute initialized to the VideoTrack object, at the HTMLMediaElement videoTracks list. If the selected attribute on the VideoTrack object was true at the beginning of this removal step, then this should also trigger VideoTrackList [HTML51] logic to queue a task to fire a simple event named change at the HTMLMediaElement videoTracks list.
      3. Remove the VideoTrack object from the SourceBuffer videoTracks list.

         Note: This should trigger VideoTrackList [HTML51] logic to queue a task to fire a trusted event named removetrack, that does not bubble and is not cancelable, and that uses the TrackEvent interface, with the track attribute initialized to the VideoTrack object, at the SourceBuffer videoTracks list. If the selected attribute on the VideoTrack object was true at the beginning of this removal step, then this should also trigger VideoTrackList [HTML51] logic to queue a task to fire a simple event named change at the SourceBuffer videoTracks list.
7. Let SourceBuffer textTracks list equal the TextTrackList object returned by sourceBuffer.textTracks.
8. If the SourceBuffer textTracks list is not empty, then run the following steps:
   1. Let HTMLMediaElement textTracks list equal the TextTrackList object returned by the textTracks attribute on the HTMLMediaElement.
   2. For each TextTrack object in the SourceBuffer textTracks list, run the following steps:
      1. Set the sourceBuffer attribute on the TextTrack object to null.
      2. Remove the TextTrack object from the HTMLMediaElement textTracks list.

         Note: This should trigger TextTrackList [HTML51] logic to queue a task to fire a trusted event named removetrack, that does not bubble and is not cancelable, and that uses the TrackEvent interface, with the track attribute initialized to the TextTrack object, at the HTMLMediaElement textTracks list. If the mode attribute on the TextTrack object was "showing" or "hidden" at the beginning of this removal step, then this should also trigger TextTrackList [HTML51] logic to queue a task to fire a simple event named change at the HTMLMediaElement textTracks list.
      3. Remove the TextTrack object from the SourceBuffer textTracks list.

         Note: This should trigger TextTrackList [HTML51] logic to queue a task to fire a trusted event named removetrack, that does not bubble and is not cancelable, and that uses the TrackEvent interface, with the track attribute initialized to the TextTrack object, at the SourceBuffer textTracks list. If the mode attribute on the TextTrack object was "showing" or "hidden" at the beginning of this removal step, then this should also trigger TextTrackList [HTML51] logic to queue a task to fire a simple event named change at the SourceBuffer textTracks list.
9. If sourceBuffer is in activeSourceBuffers, then remove sourceBuffer from activeSourceBuffers and queue a task to fire a simple event named removesourcebuffer at the SourceBufferList returned by activeSourceBuffers.
10. Remove sourceBuffer from sourceBuffers and queue a task to fire a simple event named removesourcebuffer at the SourceBufferList returned by sourceBuffers.
11. Destroy all resources for sourceBuffer.

- endOfStream

Signals the end of the stream.

| Parameter | Type | Nullable | Optional | Description |
|---|---|---|---|---|
| error | EndOfStreamError | ✘ | ✔ | |

Return type: void

When this method is invoked, the user agent must run the following steps:

1. If the readyState attribute is not in the "open" state then throw an InvalidStateError exception and abort these steps.
2. If the updating attribute equals true on any SourceBuffer in sourceBuffers, then throw an InvalidStateError exception and abort these steps.
3. Run the end of stream algorithm with the error parameter set to error.
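Because endOfStream() throws while any SourceBuffer is still updating, an application typically has to defer the call. The sketch below shows one possible way to do that; `isIdle` and `endStreamWhenIdle` are hypothetical helper names, and the only API members relied on are readyState, sourceBuffers, updating, endOfStream() and the updateend event mentioned in this specification.

```javascript
// True when no SourceBuffer in mediaSource.sourceBuffers is updating.
// SourceBufferList is indexed, so a plain index loop is used.
function isIdle(mediaSource) {
  const buffers = mediaSource.sourceBuffers;
  for (let i = 0; i < buffers.length; i++) {
    if (buffers[i].updating) return false;
  }
  return true;
}

// Calls endOfStream() now if both preconditions hold, otherwise retries after
// the first busy SourceBuffer fires "updateend". Returns true if the call was
// made immediately, false if it was deferred or the source is not open.
function endStreamWhenIdle(mediaSource, error) {
  if (mediaSource.readyState !== "open") return false; // step 1 would throw
  if (isIdle(mediaSource)) {
    if (error === undefined) {
      mediaSource.endOfStream();
    } else {
      mediaSource.endOfStream(error); // "network" or "decode"
    }
    return true;
  }
  const buffers = mediaSource.sourceBuffers;
  for (let i = 0; i < buffers.length; i++) {
    if (buffers[i].updating) {
      buffers[i].addEventListener(
        "updateend",
        () => endStreamWhenIdle(mediaSource, error),
        { once: true }
      );
      break; // re-check everything when this buffer finishes
    }
  }
  return false;
}
```

Listening on a single busy buffer and re-running the full check keeps the helper small; if another buffer is still updating at that point, the helper simply waits again.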

- setLiveSeekableRange

Updates the live seekable range variable used in HTMLMediaElement Extensions to modify HTMLMediaElement.seekable behavior.

| Parameter | Type | Nullable | Optional | Description |
|---|---|---|---|---|
| start | double | ✘ | ✘ | The start of the range, in seconds measured from presentation start time. While set, and if duration equals positive Infinity, HTMLMediaElement.seekable will return a non-empty TimeRanges object with a lowest range start timestamp no greater than start. |
| end | double | ✘ | ✘ | The end of range, in seconds measured from presentation start time. While set, and if duration equals positive Infinity, HTMLMediaElement.seekable will return a non-empty TimeRanges object with a highest range end timestamp no less than end. |

Return type: void

When this method is invoked, the user agent must run the following steps:

1. If the readyState attribute is not "open" then throw an InvalidStateError exception and abort these steps.
2. If start is negative or greater than end, then throw a TypeError exception and abort these steps.
3. Set live seekable range to be a new normalized TimeRanges object containing a single range whose start position is start and end position is end.

- clearLiveSeekableRange

Updates the live seekable range variable used in HTMLMediaElement Extensions to modify HTMLMediaElement.seekable behavior.

No parameters.

Return type: void

When this method is invoked, the user agent must run the following steps:

1. If the readyState attribute is not "open" then throw an InvalidStateError exception and abort these steps.
2. If live seekable range contains a range, then set live seekable range to be a new empty TimeRanges object.
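The steps of setLiveSeekableRange() and clearLiveSeekableRange() can be modelled on a plain object, which makes the validation order easy to see. This is a sketch under assumptions: `source.liveSeekableRange` is an array standing in for the normalized TimeRanges object, and `slidingWindow` is a hypothetical helper showing how a live player might derive the range from its live edge.

```javascript
// Stand-in for the DOM InvalidStateError; a real user agent throws a DOMException.
function invalidStateError(message) {
  const err = new Error(message);
  err.name = "InvalidStateError";
  return err;
}

// Model of setLiveSeekableRange(); `source.liveSeekableRange` is an array of
// { start, end } objects standing in for a normalized TimeRanges (assumption).
function modelSetLiveSeekableRange(source, start, end) {
  if (source.readyState !== "open") throw invalidStateError("readyState is not open");
  if (start < 0 || start > end) throw new TypeError("start must be >= 0 and <= end");
  source.liveSeekableRange = [{ start, end }];
}

// Model of clearLiveSeekableRange().
function modelClearLiveSeekableRange(source) {
  if (source.readyState !== "open") throw invalidStateError("readyState is not open");
  if (source.liveSeekableRange.length > 0) source.liveSeekableRange = [];
}

// Hypothetical helper: a seekable window of `windowSeconds` behind the live edge.
function slidingWindow(liveEdgeSeconds, windowSeconds) {
  return {
    start: Math.max(0, liveEdgeSeconds - windowSeconds),
    end: liveEdgeSeconds,
  };
}

const liveSource = { readyState: "open", liveSeekableRange: [] };
const liveRange = slidingWindow(120, 30);
modelSetLiveSeekableRange(liveSource, liveRange.start, liveRange.end);
console.log(liveSource.liveSeekableRange); // [ { start: 90, end: 120 } ]
```

With a real MediaSource the last two lines would become a call to mediaSource.setLiveSeekableRange(liveRange.start, liveRange.end), usually with duration set to positive Infinity as the parameter descriptions require.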

- isTypeSupported, static

Check to see whether the MediaSource is capable of creating SourceBuffer objects for the specified MIME type.

Note: If true is returned from this method, it only indicates that the MediaSource implementation is capable of creating SourceBuffer objects for the specified MIME type. An addSourceBuffer() call SHOULD still fail if sufficient resources are not available to support the addition of a new SourceBuffer.

Note: This method returning true implies that HTMLMediaElement.canPlayType() will return "maybe" or "probably" since it does not make sense for a MediaSource to support a type the HTMLMediaElement knows it cannot play.

| Parameter | Type | Nullable | Optional | Description |
|---|---|---|---|---|
| type | DOMString | ✘ | ✘ | |

Return type: boolean

When this method is invoked, the user agent must run the following steps:

1. If type is an empty string, then return false.
2. If type does not contain a valid MIME type string, then return false.
3. If type contains a media type or media subtype that the MediaSource does not support, then return false.
4. If type contains a codec that the MediaSource does not support, then return false.
5. If the MediaSource does not support the specified combination of media type, media subtype, and codecs then return false.
6. Return true.
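Since isTypeSupported() returning true does not guarantee that addSourceBuffer() succeeds, applications usually combine a capability check with error handling. The following is a minimal sketch of that pattern. The helper names and the candidate MIME strings are illustrative assumptions, and the MediaSource constructor is passed in as a parameter so the code can also run outside a browser.

```javascript
// Return the first candidate MIME type the implementation reports as supported,
// or null. `MediaSourceCtor` is injected so the sketch also runs outside a
// browser; in a page you would pass `window.MediaSource`.
function pickSupportedType(MediaSourceCtor, candidates) {
  if (!MediaSourceCtor || typeof MediaSourceCtor.isTypeSupported !== "function") {
    return null; // MSE is not available at all
  }
  for (const type of candidates) {
    if (MediaSourceCtor.isTypeSupported(type)) return type;
  }
  return null;
}

// Call addSourceBuffer() and report the exception name instead of throwing.
function tryAddSourceBuffer(mediaSource, type) {
  try {
    return { sourceBuffer: mediaSource.addSourceBuffer(type), error: null };
  } catch (err) {
    // For example "TypeError", "NotSupportedError", "QuotaExceededError"
    // or "InvalidStateError", matching the steps listed for addSourceBuffer().
    return { sourceBuffer: null, error: err.name };
  }
}

// Example candidate list (illustrative values, not taken from the specification).
const CANDIDATE_TYPES = [
  'video/mp4; codecs="avc1.42E01E, mp4a.40.2"',
  'video/webm; codecs="vp8, vorbis"',
];
```

A caller would first run pickSupportedType(window.MediaSource, CANDIDATE_TYPES), then call tryAddSourceBuffer() from a sourceopen handler, because addSourceBuffer() also requires readyState to be "open".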

2.3 Event Summary

| Event name | Interface | Dispatched when... |
|---|---|---|
| sourceopen | Event | readyState transitions from "closed" to "open" or from "ended" to "open". |
| sourceended | Event | readyState transitions from "open" to "ended". |
| sourceclose | Event | readyState transitions from "open" to "closed" or "ended" to "closed". |
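The event summary amounts to a small lookup from readyState transitions to event names. A sketch of that lookup, with a hypothetical helper name, may make the table easier to use when writing state handling code:

```javascript
// Event fired for each readyState transition listed in the Event Summary.
const TRANSITION_EVENTS = {
  "closed->open": "sourceopen",
  "ended->open": "sourceopen",
  "open->ended": "sourceended",
  "open->closed": "sourceclose",
  "ended->closed": "sourceclose",
};

// Returns the event name for a transition, or null if the table lists none.
function eventForTransition(from, to) {
  return TRANSITION_EVENTS[from + "->" + to] || null;
}

console.log(eventForTransition("closed", "open")); // "sourceopen"
console.log(eventForTransition("closed", "ended")); // null
```

Note that sourceopen can fire more than once for the same MediaSource, because the "ended" to "open" transition also dispatches it.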

2.4 Algorithms

2.4.1 Attaching to a media element

A MediaSource object can be attached to a media element by assigning a MediaSource object URL to the media element src attribute or the src attribute of a source element inside a media element. A MediaSource object URL is created by passing a MediaSource object to createObjectURL().

If the resource fetch algorithm was invoked with a media provider object that is a MediaSource object or a URL record whose object is a MediaSource object, then let mode be local, skip the first step in the resource fetch algorithm (which may otherwise set mode to remote) and add the steps and clarifications below to the "Otherwise (mode is local)" section of the resource fetch algorithm.

The resource fetch algorithm's first step is expected to eventually align with selecting local mode for URL records whose objects are media provider objects. The intent is that if the HTMLMediaElement's src attribute or selected child src attribute is a blob: URL matching a MediaSource object URL when the respective src attribute was last changed, then that MediaSource object is used as the media provider object and current media resource in the local mode logic in the resource fetch algorithm. This also means that the remote mode logic that includes observance of any preload attribute is skipped when a MediaSource object is attached. Even with that eventual change to [HTML51], the execution of the following steps at the beginning of the local mode logic is still required when the current media resource is a MediaSource object.

Relative to the action which triggered the media element's resource selection algorithm, these steps are asynchronous. The resource fetch algorithm is run after the task that invoked the resource selection algorithm is allowed to continue and a stable state is reached. Implementations may delay the steps in the "Otherwise" clause, below, until the MediaSource object is ready for use.

- If readyState is NOT set to "closed"

  - Run the "If the media data cannot be fetched at all, due to network errors, causing the user agent to give up trying to fetch the resource" steps of the resource fetch algorithm's media data processing steps list.

- Otherwise

  - Set the media element's delaying-the-load-event-flag to false.

  - Set the readyState attribute to "open".

  - Queue a task to fire a simple event named sourceopen at the MediaSource.

  - Continue the resource fetch algorithm by running the remaining "Otherwise (mode is local)" steps, with these clarifications:

    - Text in the resource fetch algorithm or the media data processing steps list that refers to "the download", "bytes received", or "whenever new data for the current media resource becomes available" refers to data passed in via appendBuffer().

    - References to HTTP in the resource fetch algorithm and the media data processing steps list do not apply because the HTMLMediaElement does not fetch media data via HTTP when a MediaSource is attached.

An attached MediaSource does not use the remote mode steps in the resource fetch algorithm, so the media element will not fire "suspend" events. Though future versions of this specification will likely remove "progress" and "stalled" events from a media element with an attached MediaSource, user agents conforming to this version of the specification may still fire these two events as these [HTML51] references changed after implementations of this specification stabilized.

2.4.2 Detaching from a media element

The following steps are run in any case where the media element is going to transition to NETWORK_EMPTY and queue a task to fire a simple event named emptied at the media element. These steps SHOULD be run right before the transition.

- Set the readyState attribute to "closed".

- Update duration to NaN.

- Remove all the SourceBuffer objects from activeSourceBuffers.

- Queue a task to fire a simple event named removesourcebuffer at activeSourceBuffers.

- Remove all the SourceBuffer objects from sourceBuffers.

- Queue a task to fire a simple event named removesourcebuffer at sourceBuffers.

- Queue a task to fire a simple event named sourceclose at the MediaSource.

Going forward, this algorithm is intended to be externally called and run in any case where the attached MediaSource, if any, must be detached from the media element. It MAY be called on HTMLMediaElement [HTML51] operations like load() and resource fetch algorithm failures in addition to, or in place of, when the media element transitions to NETWORK_EMPTY. Resource fetch algorithm failures are those which abort either the resource fetch algorithm or the resource selection algorithm, with the exception that the "Final step" [HTML51] is not considered a failure that triggers detachment.

2.4.3 Seeking

Run the following steps as part of the "Wait until the user agent has established whether or not the media data for the new playback position is available, and, if it is, until it has decoded enough data to play back that position" step of the seek algorithm:

- Note

  The media element looks for media segments containing the new playback position in each SourceBuffer object in activeSourceBuffers. Any position within a TimeRange in the current value of the HTMLMediaElement.buffered attribute has all necessary media segments buffered for that position.

  - If new playback position is not in any TimeRange of HTMLMediaElement.buffered

    - If the HTMLMediaElement.readyState attribute is greater than HAVE_METADATA, then set the HTMLMediaElement.readyState attribute to HAVE_METADATA.

      Note

      Per HTMLMediaElement ready states [HTML51] logic, HTMLMediaElement.readyState changes may trigger events on the HTMLMediaElement.

    - The media element waits until an appendBuffer() call causes the coded frame processing algorithm to set the HTMLMediaElement.readyState attribute to a value greater than HAVE_METADATA.

      Note

      The web application can use buffered and HTMLMediaElement.buffered to determine what the media element needs to resume playback.

  - Otherwise

    - Continue

      Note

      If the readyState attribute is "ended" and the new playback position is within a TimeRange currently in HTMLMediaElement.buffered, then the seek operation must continue to completion here even if one or more currently selected or enabled track buffers' largest range end timestamp is less than new playback position. This condition should only occur due to logic in buffered when readyState is "ended".

- The media element resets all decoders and initializes each one with data from the appropriate initialization segment.

- The media element feeds coded frames from the active track buffers into the decoders starting with the closest random access point before the new playback position.

- Resume the seek algorithm at the "Await a stable state" step.
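
The first seeking step hinges on whether the new playback position falls inside a buffered range. A minimal sketch of that decision follows, under stated assumptions: ranges are modelled as `[start, end]` pairs in seconds with an inclusive end, `HAVE_METADATA` uses the numeric value 1 from HTMLMediaElement, and the names `Span`, `isBufferedAt`, `SeekOutcome` and `seekWait` are hypothetical.

```typescript
// A buffered range as [start, end] in seconds (assumed model of a TimeRange).
type Span = [number, number];
const HAVE_METADATA = 1;

// True when the position lies inside one of the buffered ranges.
// Treating the end as inclusive is an assumption of this sketch.
function isBufferedAt(buffered: Span[], position: number): boolean {
  return buffered.some(([start, end]) => position >= start && position <= end);
}

interface SeekOutcome {
  readyState: number;          // resulting HTMLMediaElement.readyState
  mustWaitForAppend: boolean;  // true when the element waits for appendBuffer()
}

// Models the "is the new playback position buffered?" branch of the seek steps.
function seekWait(buffered: Span[], readyState: number, newPosition: number): SeekOutcome {
  if (!isBufferedAt(buffered, newPosition)) {
    // Drop to HAVE_METADATA only if the element was above it.
    return { readyState: Math.min(readyState, HAVE_METADATA), mustWaitForAppend: true };
  }
  return { readyState, mustWaitForAppend: false };
}
```

In a page, an application would typically react to the waiting case by fetching and appending the media segments that cover the new position.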

2.4.4 SourceBuffer Monitoring

The following steps are periodically run during playback to make sure that all of the SourceBuffer objects in activeSourceBuffers have enough data to ensure uninterrupted playback. Changes to activeSourceBuffers also cause these steps to run because they affect the conditions that trigger state transitions.

Having enough data to ensure uninterrupted playback is an implementation specific condition where the user agent determines that it currently has enough data to play the presentation without stalling for a meaningful period of time. This condition is constantly evaluated to determine when to transition the media element into and out of the HAVE_ENOUGH_DATA ready state. These transitions indicate when the user agent believes it has enough data buffered or it needs more data respectively.

An implementation MAY choose to use bytes buffered, time buffered, the append rate, or any other metric it sees fit to determine when it has enough data. The metrics used MAY change during playback so web applications SHOULD only rely on the value of HTMLMediaElement.readyState to determine whether more data is needed or not.

When the media element needs more data, the user agent SHOULD transition it from HAVE_ENOUGH_DATA to HAVE_FUTURE_DATA early enough for a web application to be able to respond without causing an interruption in playback. For example, transitioning when the current playback position is 500ms before the end of the buffered data gives the application roughly 500ms to append more data before playback stalls.

- If the HTMLMediaElement.readyState attribute equals HAVE_NOTHING:

  - Abort these steps.

- If HTMLMediaElement.buffered does not contain a TimeRange for the current playback position:

  - Set the HTMLMediaElement.readyState attribute to HAVE_METADATA.

    Note

    Per HTMLMediaElement ready states [HTML51] logic, HTMLMediaElement.readyState changes may trigger events on the HTMLMediaElement.

  - Abort these steps.

- If HTMLMediaElement.buffered contains a TimeRange that includes the current playback position and enough data to ensure uninterrupted playback:

  - Set the HTMLMediaElement.readyState attribute to HAVE_ENOUGH_DATA.

    Note

    Per HTMLMediaElement ready states [HTML51] logic, HTMLMediaElement.readyState changes may trigger events on the HTMLMediaElement.

  - Playback may resume at this point if it was previously suspended by a transition to HAVE_CURRENT_DATA.

  - Abort these steps.

- If HTMLMediaElement.buffered contains a TimeRange that includes the current playback position and some time beyond the current playback position, then run the following steps:

  - Set the HTMLMediaElement.readyState attribute to HAVE_FUTURE_DATA.

    Note

    Per HTMLMediaElement ready states [HTML51] logic, HTMLMediaElement.readyState changes may trigger events on the HTMLMediaElement.

  - Playback may resume at this point if it was previously suspended by a transition to HAVE_CURRENT_DATA.

  - Abort these steps.

- If HTMLMediaElement.buffered contains a TimeRange that ends at the current playback position and does not have a range covering the time immediately after the current position:

  - Set the HTMLMediaElement.readyState attribute to HAVE_CURRENT_DATA.

    Note

    Per HTMLMediaElement ready states [HTML51] logic, HTMLMediaElement.readyState changes may trigger events on the HTMLMediaElement.

  - Playback is suspended at this point since the media element doesn't have enough data to advance the media timeline.

  - Abort these steps.
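
The monitoring steps above amount to a cascade of checks on the buffered ranges. The sketch below models that cascade under loud assumptions: "enough data" is reduced to a single hypothetical `enoughSeconds` threshold (the specification leaves the metric to the implementation), ranges are normalized `[start, end]` pairs in seconds, and the function name `monitorReadyState` is invented for illustration.

```typescript
type Span = [number, number]; // assumed model of one normalized TimeRange, in seconds

const HAVE_NOTHING = 0;
const HAVE_METADATA = 1;
const HAVE_CURRENT_DATA = 2;
const HAVE_FUTURE_DATA = 3;
const HAVE_ENOUGH_DATA = 4;

// Returns the readyState the monitoring steps would select.
// enoughSeconds is a hypothetical stand-in for the implementation-specific
// "enough data to ensure uninterrupted playback" condition; assumed > 0.
function monitorReadyState(
  readyState: number,
  buffered: Span[],
  position: number,
  enoughSeconds: number
): number {
  if (readyState === HAVE_NOTHING) return readyState; // abort, nothing changes
  const range = buffered.find(([start, end]) => position >= start && position <= end);
  if (!range) return HAVE_METADATA;                    // position is not buffered
  const ahead = range[1] - position;                   // buffered time beyond the position
  if (ahead >= enoughSeconds) return HAVE_ENOUGH_DATA;
  if (ahead > 0) return HAVE_FUTURE_DATA;
  return HAVE_CURRENT_DATA;                            // range ends exactly at the position
}
```

With `enoughSeconds` set to 0.5, this reproduces the 500ms example: once less than half a second remains buffered ahead of the playback position, the state drops from HAVE_ENOUGH_DATA to HAVE_FUTURE_DATA.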

2.4.5 Changes to selected/enabled track state

During playback activeSourceBuffers needs to be updated if the selected video track, the enabled audio track(s), or a text track mode changes. When one or more of these changes occur the following steps need to be followed.

- If the selected video track changes, then run the following steps:

  - If the SourceBuffer associated with the previously selected video track is not associated with any other enabled tracks, run the following steps:

    - Remove the SourceBuffer from activeSourceBuffers.

    - Queue a task to fire a simple event named removesourcebuffer at activeSourceBuffers

  - If the SourceBuffer associated with the newly selected video track is not already in activeSourceBuffers, run the following steps:

    - Add the SourceBuffer to activeSourceBuffers.

    - Queue a task to fire a simple event named addsourcebuffer at activeSourceBuffers

- If an audio track becomes disabled and the SourceBuffer associated with this track is not associated with any other enabled or selected track, then run the following steps:

  - Remove the SourceBuffer associated with the audio track from activeSourceBuffers

  - Queue a task to fire a simple event named removesourcebuffer at activeSourceBuffers

- If an audio track becomes enabled and the SourceBuffer associated with this track is not already in activeSourceBuffers, then run the following steps:

  - Add the SourceBuffer associated with the audio track to activeSourceBuffers

  - Queue a task to fire a simple event named addsourcebuffer at activeSourceBuffers

- If a text track mode becomes "disabled" and the SourceBuffer associated with this track is not associated with any other enabled or selected track, then run the following steps:

  - Remove the SourceBuffer associated with the text track from activeSourceBuffers

  - Queue a task to fire a simple event named removesourcebuffer at activeSourceBuffers

- If a text track mode becomes "showing" or "hidden" and the SourceBuffer associated with this track is not already in activeSourceBuffers, then run the following steps:

  - Add the SourceBuffer associated with the text track to activeSourceBuffers

  - Queue a task to fire a simple event named addsourcebuffer at activeSourceBuffers

2.4.6 Duration change

Follow these steps when duration needs to change to a new duration.

- If the current value of duration is equal to new duration, then return.

- If new duration is less than the highest presentation timestamp of any buffered coded frames for all SourceBuffer objects in sourceBuffers, then throw an InvalidStateError exception and abort these steps.

  Note

  Duration reductions that would truncate currently buffered media are disallowed. When truncation is necessary, use remove() to reduce the buffered range before updating duration.

- Let highest end time be the largest track buffer ranges end time across all the track buffers across all SourceBuffer objects in sourceBuffers.

- If new duration is less than highest end time, then

  Note

  This condition can occur because the coded frame removal algorithm preserves coded frames that start before the start of the removal range.

  - Update new duration to equal highest end time.

- Update duration to new duration.

- Update the media duration to new duration and run the HTMLMediaElement duration change algorithm.
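
The duration change steps can be summarized as a pure function over three numbers. The following is a rough sketch only; `changeDuration` and its parameters are hypothetical names, and a plain `Error` with its `name` set stands in for the `InvalidStateError` DOMException that a browser would throw.

```typescript
// Returns the duration that results from requesting `requested`.
// highestPresentationTimestamp: highest presentation timestamp of any buffered coded frame.
// highestEndTime: largest track buffer ranges end time across all track buffers.
function changeDuration(
  current: number,
  requested: number,
  highestPresentationTimestamp: number,
  highestEndTime: number
): number {
  if (current === requested) return current; // nothing to do
  if (requested < highestPresentationTimestamp) {
    // Truncating buffered media is disallowed; remove() must be used first.
    const error = new Error("new duration would truncate buffered media");
    error.name = "InvalidStateError"; // stand-in for the DOMException
    throw error;
  }
  // A frame that starts before the requested duration may still end after it,
  // in which case the duration is extended to cover that frame.
  return Math.max(requested, highestEndTime);
}
```

For example, requesting a duration of 5 seconds when the last buffered frame starts at 4.9 seconds and ends at 5.2 seconds yields a duration of 5.2 seconds rather than an error.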

2.4.7 End of stream algorithm

This algorithm gets called when the application signals the end of stream via an endOfStream() call or an algorithm needs to signal a decode error. This algorithm takes an error parameter that indicates whether an error will be signalled.

- Change the readyState attribute value to "ended".

- Queue a task to fire a simple event named sourceended at the MediaSource.

- If error is not set

  - Run the duration change algorithm with new duration set to the largest track buffer ranges end time across all the track buffers across all SourceBuffer objects in sourceBuffers.

    Note

    This allows the duration to properly reflect the end of the appended media segments. For example, if the duration was explicitly set to 10 seconds and only media segments for 0 to 5 seconds were appended before endOfStream() was called, then the duration will get updated to 5 seconds.

  - Notify the media element that it now has all of the media data.

- If error is set to "network"

  - If the HTMLMediaElement.readyState attribute equals HAVE_NOTHING

    - Run the "If the media data cannot be fetched at all, due to network errors, causing the user agent to give up trying to fetch the resource" steps of the resource fetch algorithm's media data processing steps list.

  - If the HTMLMediaElement.readyState attribute is greater than HAVE_NOTHING

    - Run the "If the connection is interrupted after some media data has been received, causing the user agent to give up trying to fetch the resource" steps of the resource fetch algorithm's media data processing steps list.

- If error is set to "decode"

  - If the HTMLMediaElement.readyState attribute equals HAVE_NOTHING

    - Run the "If the media data can be fetched but is found by inspection to be in an unsupported format, or can otherwise not be rendered at all" steps of the resource fetch algorithm's media data processing steps list.

  - If the HTMLMediaElement.readyState attribute is greater than HAVE_NOTHING

    - Run the media data is corrupted steps of the resource fetch algorithm's media data processing steps list.
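
The branching in the end of stream algorithm depends on two inputs: the error parameter and the media element's readyState. The sketch below lists the steps selected for each combination as short labels. It is only an illustration of the branch structure; `EosErrorModel`, `endOfStreamSteps` and the label strings are hypothetical and do not correspond to any real API.

```typescript
type EosErrorModel = "network" | "decode"; // hypothetical alias for the error parameter
const HAVE_NOTHING = 0;

// Returns, in order, labels for the steps the algorithm would run.
function endOfStreamSteps(
  error: EosErrorModel | undefined,
  elementReadyState: number
): string[] {
  const steps: string[] = ['set readyState to "ended"', "queue sourceended"];
  const nothingYet = elementReadyState === HAVE_NOTHING;
  if (error === undefined) {
    steps.push("run duration change with highest track buffer end time");
    steps.push("notify media element that it has all media data");
  } else if (error === "network") {
    steps.push(nothingYet
      ? "network: media data cannot be fetched at all"
      : "network: connection interrupted after some data");
  } else {
    steps.push(nothingYet
      ? "decode: unsupported format"
      : "decode: media data is corrupted");
  }
  return steps;
}
```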

3. SourceBuffer Object

enum AppendMode {

"segments",

"sequence"

};

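| Enumeration description | |

|---|---|

| segments | The timestamps in the media segment determine where the coded frames are placed in the presentation. Media segments can be appended in any order. |

| sequence | Media segments will be treated as adjacent in time independent of the timestamps in the media segment. Coded frames in a new media segment will be placed immediately after the coded frames in the previous media segment. The timestampOffset attribute will be updated if a new offset is needed to make the new media segments adjacent to the previous media segment. Setting the timestampOffset attribute in "sequence" mode allows a media segment to be placed at a specific position in the timeline without any knowledge of the timestamps in the media segment. |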
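
The difference between the two modes is easiest to see on a pair of out-of-order segments. The sketch below is a deliberately simplified model, not the coded frame processing algorithm: each segment is reduced to a hypothetical `{ start, duration }` pair in seconds, `timestampOffset` is assumed to stay at 0, and every appended segment is assumed to start a new coded frame group.

```typescript
// Hypothetical reduction of a media segment to its first timestamp and length.
interface SegmentModel {
  start: number;    // timestamp carried inside the segment, in seconds
  duration: number; // total duration of the segment, in seconds
}

// Returns the [start, end] presentation range each segment ends up occupying.
function placeSegments(
  mode: "segments" | "sequence",
  segments: SegmentModel[]
): Array<[number, number]> {
  let groupEnd = 0; // mirrors the "group end timestamp", which starts at 0
  return segments.map((segment) => {
    // "segments": trust the timestamps in the media.
    // "sequence": ignore them and place the segment right after the previous one.
    const start = mode === "sequence" ? groupEnd : segment.start;
    const end = start + segment.duration;
    groupEnd = Math.max(groupEnd, end);
    return [start, end] as [number, number];
  });
}
```

Appending a segment stamped at 10 seconds followed by one stamped at 0 seconds keeps those positions in "segments" mode, while "sequence" mode lays them out back to back starting at 0.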

interface SourceBuffer : EventTarget {

attribute AppendMode mode;

readonly attribute boolean updating;

readonly attribute TimeRanges buffered;

attribute double timestampOffset;

readonly attribute AudioTrackList audioTracks;

readonly attribute VideoTrackList videoTracks;

readonly attribute TextTrackList textTracks;

attribute double appendWindowStart;

attribute unrestricted double appendWindowEnd;

attribute EventHandler onupdatestart;

attribute EventHandler onupdate;

attribute EventHandler onupdateend;

attribute EventHandler onerror;

attribute EventHandler onabort;

void appendBuffer(BufferSource data);

void abort();

void remove(double start, unrestricted double end);

};

3.1 Attributes

- mode of type AppendMode

  Controls how a sequence of media segments are handled. This attribute is initially set by addSourceBuffer() after the object is created.

  On getting, return the initial value or the last value that was successfully set.

  On setting, run the following steps:

  - If this object has been removed from the sourceBuffers attribute of the parent media source, then throw an InvalidStateError exception and abort these steps.

  - If the updating attribute equals true, then throw an InvalidStateError exception and abort these steps.

  - Let new mode equal the new value being assigned to this attribute.

  - If generate timestamps flag equals true and new mode equals "segments", then throw a TypeError exception and abort these steps.

  - If the readyState attribute of the parent media source is in the "ended" state then run the following steps:

    - Set the readyState attribute of the parent media source to "open"

    - Queue a task to fire a simple event named sourceopen at the parent media source.

  - If the append state equals PARSING_MEDIA_SEGMENT, then throw an InvalidStateError and abort these steps.

  - If the new mode equals "sequence", then set the group start timestamp to the group end timestamp.

  - Update the attribute to new mode.

- updating of type boolean, readonly

  Indicates whether the asynchronous continuation of an appendBuffer() or remove() operation is still being processed. This attribute is initially set to false when the object is created.

- buffered of type TimeRanges, readonly

  Indicates what TimeRanges are buffered in the SourceBuffer. This attribute is initially set to an empty TimeRanges object when the object is created.

  When the attribute is read the following steps MUST occur:

  - If this object has been removed from the sourceBuffers attribute of the parent media source then throw an InvalidStateError exception and abort these steps.

  - Let highest end time be the largest track buffer ranges end time across all the track buffers managed by this SourceBuffer object.

  - Let intersection ranges equal a TimeRange object containing a single range from 0 to highest end time.

  - For each audio and video track buffer managed by this SourceBuffer, run the following steps:

    Note

    Text track-buffers are included in the calculation of highest end time, above, but excluded from the buffered range calculation here. They are not necessarily continuous, nor should any discontinuity within them trigger playback stall when the other media tracks are continuous over the same time range.

    - Let track ranges equal the track buffer ranges for the current track buffer.

    - If readyState is "ended", then set the end time on the last range in track ranges to highest end time.

    - Let new intersection ranges equal the intersection between the intersection ranges and the track ranges.

    - Replace the ranges in intersection ranges with the new intersection ranges.

  - If intersection ranges does not contain the exact same range information as the current value of this attribute, then update the current value of this attribute to intersection ranges.

  - Return the current value of this attribute.
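
The steps for reading buffered boil down to intersecting the ranges of every audio and video track buffer. A minimal sketch of that computation follows. It assumes ranges are sorted, non-overlapping `[start, end]` pairs in seconds, that only audio and video track ranges are passed in (so text tracks do not contribute to the highest end time here), and the names `Span`, `intersectSpans` and `computeBuffered` are hypothetical.

```typescript
type Span = [number, number]; // assumed model of one range, in seconds

// Intersection of two sorted, non-overlapping range lists.
function intersectSpans(a: Span[], b: Span[]): Span[] {
  const out: Span[] = [];
  let i = 0;
  let j = 0;
  while (i < a.length && j < b.length) {
    const start = Math.max(a[i][0], b[j][0]);
    const end = Math.min(a[i][1], b[j][1]);
    if (start < end) out.push([start, end]);
    // Advance whichever range finishes first.
    if (a[i][1] < b[j][1]) i++; else j++;
  }
  return out;
}

// trackRanges: one range list per audio/video track buffer.
// ended: whether the parent media source's readyState is "ended".
function computeBuffered(trackRanges: Span[][], ended: boolean): Span[] {
  let highestEnd = 0;
  for (const ranges of trackRanges) {
    for (const range of ranges) highestEnd = Math.max(highestEnd, range[1]);
  }
  let result: Span[] = [[0, highestEnd]];
  for (const ranges of trackRanges) {
    const track = ranges.map((r) => [r[0], r[1]] as Span); // copy before editing
    if (ended && track.length > 0) track[track.length - 1][1] = highestEnd;
    result = intersectSpans(result, track);
  }
  return result;
}
```

With an audio track buffered over 0 to 10 seconds and a video track buffered over 0 to 4 and 6 to 9 seconds, the result is the video ranges; once the source is "ended", the last video range is stretched to 10 seconds.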

- timestampOffset of type double

  Controls the offset applied to timestamps inside subsequent media segments that are appended to this SourceBuffer. The timestampOffset is initially set to 0 which indicates that no offset is being applied.

  On getting, return the initial value or the last value that was successfully set.

  On setting, run the following steps:

  - Let new timestamp offset equal the new value being assigned to this attribute.

  - If this object has been removed from the sourceBuffers attribute of the parent media source, then throw an InvalidStateError exception and abort these steps.

  - If the updating attribute equals true, then throw an InvalidStateError exception and abort these steps.

  - If the readyState attribute of the parent media source is in the "ended" state then run the following steps:

    - Set the readyState attribute of the parent media source to "open"

    - Queue a task to fire a simple event named sourceopen at the parent media source.

  - If the append state equals PARSING_MEDIA_SEGMENT, then throw an InvalidStateError and abort these steps.

  - If the mode attribute equals "sequence", then set the group start timestamp to new timestamp offset.

  - Update the attribute to new timestamp offset.

- audioTracks of type AudioTrackList, readonly

  The list of AudioTrack objects created by this object.

- videoTracks of type VideoTrackList, readonly

  The list of VideoTrack objects created by this object.

- textTracks of type TextTrackList, readonly

  The list of TextTrack objects created by this object.

- appendWindowStart of type double

  The presentation timestamp for the start of the append window. This attribute is initially set to the presentation start time.

  On getting, return the initial value or the last value that was successfully set.

  On setting, run the following steps:

  - If this object has been removed from the sourceBuffers attribute of the parent media source, then throw an InvalidStateError exception and abort these steps.

  - If the updating attribute equals true, then throw an InvalidStateError exception and abort these steps.

  - If the new value is less than 0 or greater than or equal to appendWindowEnd then throw a TypeError exception and abort these steps.

  - Update the attribute to the new value.

- appendWindowEnd of type unrestricted double

  The presentation timestamp for the end of the append window. This attribute is initially set to positive Infinity.

  On getting, return the initial value or the last value that was successfully set.

  On setting, run the following steps:

  - If this object has been removed from the sourceBuffers attribute of the parent media source, then throw an InvalidStateError exception and abort these steps.

  - If the updating attribute equals true, then throw an InvalidStateError exception and abort these steps.

  - If the new value equals NaN, then throw a TypeError and abort these steps.

  - If the new value is less than or equal to appendWindowStart then throw a TypeError exception and abort these steps.

  - Update the attribute to the new value.

- onupdatestart of type EventHandler

  The event handler for the updatestart event.

- onupdate of type EventHandler

  The event handler for the update event.

- onupdateend of type EventHandler

  The event handler for the updateend event.

- onerror of type EventHandler

  The event handler for the error event.

- onabort of type EventHandler

  The event handler for the abort event.

3.2 Methods

- appendBuffer

  Appends the segment data in a BufferSource [WEBIDL] to the source buffer.

  | Parameter | Type | Nullable | Optional | Description |

  |---|---|---|---|---|

  | data | BufferSource | ✘ | ✘ | |

  Return type: void

  When this method is invoked, the user agent must run the following steps:

  - Run the prepare append algorithm.

  - Add data to the end of the input buffer.

  - Set the updating attribute to true.

  - Queue a task to fire a simple event named updatestart at this SourceBuffer object.

  - Asynchronously run the buffer append algorithm.

- abort

  Aborts the current segment and resets the segment parser.

  No parameters.

  Return type: void

  When this method is invoked, the user agent must run the following steps:

  - If this object has been removed from the sourceBuffers attribute of the parent media source then throw an InvalidStateError exception and abort these steps.

  - If the readyState attribute of the parent media source is not in the "open" state then throw an InvalidStateError exception and abort these steps.

  - If the range removal algorithm is running, then throw an InvalidStateError exception and abort these steps.

  - If the updating attribute equals true, then run the following steps:

    - Abort the buffer append algorithm if it is running.

    - Set the updating attribute to false.

    - Queue a task to fire a simple event named abort at this SourceBuffer object.

    - Queue a task to fire a simple event named updateend at this SourceBuffer object.

  - Run the reset parser state algorithm.

  - Set appendWindowStart to the presentation start time.

  - Set appendWindowEnd to positive Infinity.

- remove

  Removes media for a specific time range.

  | Parameter | Type | Nullable | Optional | Description |

  |---|---|---|---|---|

  | start | double | ✘ | ✘ | The start of the removal range, in seconds measured from presentation start time. |

  | end | unrestricted double | ✘ | ✘ | The end of the removal range, in seconds measured from presentation start time. |

  Return type: void

  When this method is invoked, the user agent must run the following steps:

  - If this object has been removed from the sourceBuffers attribute of the parent media source then throw an InvalidStateError exception and abort these steps.

  - If the updating attribute equals true, then throw an InvalidStateError exception and abort these steps.

  - If duration equals NaN, then throw a TypeError exception and abort these steps.

  - If start is negative or greater than duration, then throw a TypeError exception and abort these steps.

  - If end is less than or equal to start or end equals NaN, then throw a TypeError exception and abort these steps.

  - If the readyState attribute of the parent media source is in the "ended" state then run the following steps:

    - Set the readyState attribute of the parent media source to "open"

    - Queue a task to fire a simple event named sourceopen at the parent media source.

  - Run the range removal algorithm with start and end as the start and end of the removal range.
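
The argument checks that remove() performs before any media is touched can be sketched as a small validator. This is an illustration under assumptions: `BufferStateModel` and `checkRemoveArgs` are hypothetical names, and the function returns the name of the exception that would be thrown rather than throwing it.

```typescript
// Hypothetical snapshot of the state that remove() inspects.
interface BufferStateModel {
  removedFromParent: boolean; // no longer in the parent's sourceBuffers
  updating: boolean;          // an append or remove is still in progress
  parentDuration: number;     // duration of the parent media source
}

// Returns the exception name the remove() steps would raise, or null if the
// call may proceed to the range removal algorithm.
function checkRemoveArgs(
  state: BufferStateModel,
  start: number,
  end: number
): "InvalidStateError" | "TypeError" | null {
  if (state.removedFromParent) return "InvalidStateError";
  if (state.updating) return "InvalidStateError";
  if (isNaN(state.parentDuration)) return "TypeError";
  if (start < 0 || start > state.parentDuration) return "TypeError";
  if (end <= start || isNaN(end)) return "TypeError";
  return null;
}
```

Because end is an unrestricted double, passing positive Infinity is valid and removes everything from start onward.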

3.3 Track Buffers

A track buffer stores the track descriptions and coded frames for an individual track. The track buffer is updated as initialization segments and media segments are appended to the SourceBuffer.

Each track buffer has a last decode timestamp variable that stores the decode timestamp of the last coded frame appended in the current coded frame group. The variable is initially unset to indicate that no coded frames have been appended yet.

Each track buffer has a last frame duration variable that stores the coded frame duration of the last coded frame appended in the current coded frame group. The variable is initially unset to indicate that no coded frames have been appended yet.

Each track buffer has a highest end timestamp variable that stores the highest coded frame end timestamp across all coded frames in the current coded frame group that were appended to this track buffer. The variable is initially unset to indicate that no coded frames have been appended yet.

Each track buffer has a need random access point flag variable that keeps track of whether the track buffer is waiting for a random access point coded frame. The variable is initially set to true to indicate that a random access point coded frame is needed before anything can be added to the track buffer.

Each track buffer has a track buffer ranges variable that represents the presentation time ranges occupied by the coded frames currently stored in the track buffer.

For track buffer ranges, these presentation time ranges are based on presentation timestamps, frame durations, and potentially coded frame group start times for coded frame groups across track buffers in a muxed SourceBuffer.

For specification purposes, this information is treated as if it were stored in a normalized TimeRanges object. Intersected track buffer ranges are used to report HTMLMediaElement.buffered, and MUST therefore support uninterrupted playback within each range of HTMLMediaElement.buffered.

These coded frame group start times differ slightly from those mentioned in the coded frame processing algorithm in that they are the earliest presentation timestamp across all track buffers following a discontinuity. Discontinuities can occur within the coded frame processing algorithm or result from the coded frame removal algorithm, regardless of mode. The threshold for determining disjointness of track buffer ranges is implementation-specific. For example, to reduce unexpected playback stalls, implementations MAY approximate the coded frame processing algorithm's discontinuity detection logic by coalescing adjacent ranges separated by a gap smaller than 2 times the maximum frame duration buffered so far in this track buffer. Implementations MAY also use coded frame group start times as range start times across track buffers in a muxed SourceBuffer to further reduce unexpected playback stalls.
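
The coalescing heuristic mentioned above (merging ranges separated by a gap smaller than 2 times the maximum frame duration) can be sketched as follows. This shows one possible approach only, since the threshold is implementation-specific; `Span` and `coalesceRanges` are hypothetical names and ranges are `[start, end]` pairs in seconds.

```typescript
type Span = [number, number]; // assumed model of one range, in seconds

// Merges ranges whose gap is smaller than 2 * maxFrameDuration.
// maxFrameDuration: the maximum frame duration buffered so far in the track buffer.
function coalesceRanges(ranges: Span[], maxFrameDuration: number): Span[] {
  const threshold = 2 * maxFrameDuration;
  // Copy and sort by start time so the input is left untouched.
  const sorted = ranges
    .map((r) => [r[0], r[1]] as Span)
    .sort((a, b) => a[0] - b[0]);
  const out: Span[] = [];
  for (const range of sorted) {
    const last = out[out.length - 1];
    if (last !== undefined && range[0] - last[1] < threshold) {
      last[1] = Math.max(last[1], range[1]); // close the small gap
    } else {
      out.push(range);
    }
  }
  return out;
}
```

With 40ms frames, a 30ms hole between two ranges is bridged, while a one second hole is kept as a real discontinuity.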

3.4 Event Summary

| Event name | Interface | Dispatched when... |

|---|---|---|

| updatestart | Event | updating transitions from false to true. |

| update | Event | The append or remove has successfully completed. updating transitions from true to false. |

| updateend | Event | The append or remove has ended. |

| error | Event | An error occurred during the append. updating transitions from true to false. |

| abort | Event | The append or remove was aborted by an abort() call. updating transitions from true to false. |

3.5 Algorithms

3.5.1 Segment Parser Loop

All SourceBuffer objects have an internal append state variable that keeps track of the high-level segment parsing state. It is initially set to WAITING_FOR_SEGMENT and can transition to the following states as data is appended.

| Append state name | Description |

|---|---|

| WAITING_FOR_SEGMENT | Waiting for the start of an initialization segment or media segment to be appended. |

| PARSING_INIT_SEGMENT | Currently parsing an initialization segment. |

| PARSING_MEDIA_SEGMENT | Currently parsing a media segment. |

The input buffer is a byte buffer that is used to hold unparsed bytes across appendBuffer() calls. The buffer is empty when the SourceBuffer object is created.

The buffer full flag keeps track of whether appendBuffer() is allowed to accept more bytes. It is set to false when the SourceBuffer object is created and gets updated as data is appended and removed.

The group start timestamp variable keeps track of the starting timestamp for a new coded frame group in the "sequence" mode. It is unset when the SourceBuffer object is created and gets updated when the mode attribute equals "sequence" and the timestampOffset attribute is set, or the coded frame processing algorithm runs.

The group end timestamp variable stores the highest coded frame end timestamp across all coded frames in the current coded frame group. It is set to 0 when the SourceBuffer object is created and gets updated by the coded frame processing algorithm.

The group end timestamp stores the highest coded frame end timestamp across all track buffers in a SourceBuffer. Therefore, care should be taken in setting the mode attribute when appending multiplexed segments in which the timestamps are not aligned across tracks.

The generate timestamps flag is a boolean variable that keeps track of whether timestamps need to be generated for the coded frames passed to the coded frame processing algorithm. This flag is set by addSourceBuffer() when the SourceBuffer object is created.

When the segment parser loop algorithm is invoked, run the following steps:

- Loop Top: If the input buffer is empty, then jump to theneed more data step below.

- If the input buffer contains bytes that violate theSourceBuffer byte stream format specification, then run the append error algorithm and abort this algorithm.

- Remove any bytes that the byte stream format specifications say MUST be ignored from the start of theinput buffer.

-

If the append state equalsWAITING_FOR_SEGMENT, then run the following steps:

- If the beginning of the input buffer indicates the start of aninitialization segment, set theappend state toPARSING_INIT_SEGMENT.

- If the beginning of the input buffer indicates the start of amedia segment, setappend state toPARSING_MEDIA_SEGMENT.

- Jump to the loop top step above.

- If the append state equals PARSING_INIT_SEGMENT, then run the following steps:

  - If the input buffer does not contain a complete initialization segment yet, then jump to the need more data step below.

  - Run the initialization segment received algorithm.

  - Remove the initialization segment bytes from the beginning of the input buffer.

  - Set append state to WAITING_FOR_SEGMENT.

  - Jump to the loop top step above.

- If the append state equals PARSING_MEDIA_SEGMENT, then run the following steps:

  - If the first initialization segment received flag is false, then run the append error algorithm and abort this algorithm.

  - If the input buffer contains one or more complete coded frames, then run the coded frame processing algorithm.

    Note

    The frequency at which the coded frame processing algorithm is run is implementation-specific. The coded frame processing algorithm MAY be called when the input buffer contains the complete media segment or it MAY be called multiple times as complete coded frames are added to the input buffer.

  - If this SourceBuffer is full and cannot accept more media data, then set the buffer full flag to true.

  - If the input buffer does not contain a complete media segment, then jump to the need more data step below.

  - Remove the media segment bytes from the beginning of the input buffer.

  - Set append state to WAITING_FOR_SEGMENT.

  - Jump to the loop top step above.

- Need more data: Return control to the calling algorithm.
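The control flow of the loop above can be sketched as a small state machine. This is only an illustrative model, not how any user agent is implemented: `QueuedSegment`, `ParserModel`, and `segmentParserLoop` are hypothetical names, the input buffer is modeled as a queue of already-classified segments instead of raw bytes, and the ignored-bytes step and the buffer full flag are left out.

```typescript
type AppendState =
  | "WAITING_FOR_SEGMENT"
  | "PARSING_INIT_SEGMENT"
  | "PARSING_MEDIA_SEGMENT";

// Hypothetical stand-in for parsed bytes at the head of the input buffer.
interface QueuedSegment {
  kind: "init" | "media" | "invalid";
  complete: boolean; // false while more bytes of this segment are expected
}

interface ParserModel {
  appendState: AppendState;
  inputBuffer: QueuedSegment[];
  firstInitSegmentReceived: boolean;
  log: string[]; // records which sub-algorithms would have run
}

type LoopResult = "need-more-data" | "append-error";

function segmentParserLoop(p: ParserModel): LoopResult {
  for (;;) {
    // Loop top: an empty input buffer means we wait for the next append.
    if (p.inputBuffer.length === 0) return "need-more-data";
    const head = p.inputBuffer[0];
    // Data that violates the byte stream format triggers the append error algorithm.
    if (head.kind === "invalid") return "append-error";

    switch (p.appendState) {
      case "WAITING_FOR_SEGMENT":
        p.appendState =
          head.kind === "init" ? "PARSING_INIT_SEGMENT" : "PARSING_MEDIA_SEGMENT";
        break; // leaves the switch, i.e. jumps back to the loop top
      case "PARSING_INIT_SEGMENT":
        if (!head.complete) return "need-more-data";
        p.log.push("initialization segment received");
        // In the specification this flag is set inside the
        // initialization segment received algorithm.
        p.firstInitSegmentReceived = true;
        p.inputBuffer.shift();
        p.appendState = "WAITING_FOR_SEGMENT";
        break;
      case "PARSING_MEDIA_SEGMENT":
        if (!p.firstInitSegmentReceived) return "append-error";
        // Simplification: assume any queued media data holds complete coded frames.
        p.log.push("coded frame processing");
        if (!head.complete) return "need-more-data";
        p.inputBuffer.shift();
        p.appendState = "WAITING_FOR_SEGMENT";
        break;
    }
  }
}
```

Note that the append state survives between invocations: a partially received media segment leaves the model in PARSING_MEDIA_SEGMENT, so the next append resumes where the previous one stopped.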

3.5.2 Reset Parser State

When the parser state needs to be reset, run the following steps:

- If the append state equals PARSING_MEDIA_SEGMENT and the input buffer contains some complete coded frames, then run the coded frame processing algorithm until all of these complete coded frames have been processed.

- Unset the last decode timestamp on all track buffers.

- Unset the last frame duration on all track buffers.

- Unset the highest end timestamp on all track buffers.

- Set the need random access point flag on all track buffers to true.

- If the mode attribute equals "sequence", then set the group start timestamp to the group end timestamp.

- Remove all bytes from the input buffer.

- Set append state to WAITING_FOR_SEGMENT.
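As a rough illustration of the bookkeeping involved, the reset steps can be modeled on plain objects. The interfaces and the `resetParserState` function below are hypothetical names for this sketch, "unset" is represented as `undefined`, and the first step (flushing already complete coded frames) is omitted.

```typescript
interface TrackBufferModel {
  lastDecodeTimestamp: number | undefined;
  lastFrameDuration: number | undefined;
  highestEndTimestamp: number | undefined;
  needRandomAccessPoint: boolean;
}

interface SourceBufferModel {
  mode: "segments" | "sequence";
  appendState: "WAITING_FOR_SEGMENT" | "PARSING_INIT_SEGMENT" | "PARSING_MEDIA_SEGMENT";
  groupStartTimestamp: number | undefined;
  groupEndTimestamp: number;
  inputBuffer: number[]; // stand-in for the pending bytes
  trackBuffers: TrackBufferModel[];
}

function resetParserState(sb: SourceBufferModel): void {
  for (const tb of sb.trackBuffers) {
    // Forget per-track decode history so the next frame is not compared
    // against frames from before the reset.
    tb.lastDecodeTimestamp = undefined;
    tb.lastFrameDuration = undefined;
    tb.highestEndTimestamp = undefined;
    // Decoding can only resume at a random access point.
    tb.needRandomAccessPoint = true;
  }
  if (sb.mode === "sequence") {
    // The next coded frame group starts where the previous one ended.
    sb.groupStartTimestamp = sb.groupEndTimestamp;
  }
  sb.inputBuffer.length = 0; // drop any partially parsed bytes
  sb.appendState = "WAITING_FOR_SEGMENT";
}
```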

3.5.3 Append Error Algorithm

This algorithm is called when an error occurs during an append.

- Run the reset parser state algorithm.

- Set the updating attribute to false.

- Queue a task to fire a simple event named error at this SourceBuffer object.

- Queue a task to fire a simple event named updateend at this SourceBuffer object.

- Run the end of stream algorithm with the error parameter set to "decode".

3.5.4 Prepare Append Algorithm

When an append operation begins, the following steps are run to validate and prepare the SourceBuffer.

- If the SourceBuffer has been removed from the sourceBuffers attribute of the parent media source then throw an InvalidStateError exception and abort these steps.

- If the updating attribute equals true, then throw an InvalidStateError exception and abort these steps.

- If the HTMLMediaElement.error attribute is not null, then throw an InvalidStateError exception and abort these steps.

- If the readyState attribute of the parent media source is in the "ended" state then run the following steps:

  - Set the readyState attribute of the parent media source to "open".

  - Queue a task to fire a simple event named sourceopen at the parent media source.

- Run the coded frame eviction algorithm.

- If the buffer full flag equals true, then throw a QuotaExceededError exception and abort these steps.

  Note

  This is the signal that the implementation was unable to evict enough data to accommodate the append or the append is too big. The web application SHOULD use remove() to explicitly free up space and/or reduce the size of the append.
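From the application's side, the exceptions thrown by these steps surface synchronously from the append call. A minimal sketch of telling the QuotaExceededError case apart from the other failures is shown below. `AppendTarget`, `isQuotaExceeded`, and `tryAppend` are hypothetical names, and the check assumes the thrown object exposes its error name in a `name` property, as DOM exceptions do.

```typescript
// Minimal shape of the object being appended to; only the one method
// this sketch calls is listed.
interface AppendTarget {
  appendBuffer(data: Uint8Array): void;
}

type AppendOutcome = "appended" | "quota-exceeded";

// Assumption: exceptions carry their error name in `name`.
function isQuotaExceeded(err: unknown): boolean {
  return (
    typeof err === "object" &&
    err !== null &&
    (err as { name?: unknown }).name === "QuotaExceededError"
  );
}

function tryAppend(target: AppendTarget, data: Uint8Array): AppendOutcome {
  try {
    target.appendBuffer(data);
    return "appended";
  } catch (err) {
    // The buffer full flag was still true after eviction: the caller is
    // expected to free space or shrink the append before retrying.
    if (isQuotaExceeded(err)) return "quota-exceeded";
    // InvalidStateError and anything else is not recoverable here.
    throw err;
  }
}
```

On a "quota-exceeded" outcome an application would typically call remove() on a range it no longer needs, wait for that removal to finish, and then retry with the same or a smaller piece of data.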

3.5.5 Buffer Append Algorithm

When appendBuffer() is called, the following steps are run to process the appended data.

- Run the segment parser loop algorithm.

- If the segment parser loop algorithm in the previous step was aborted, then abort this algorithm.

- Set the updating attribute to false.

- Queue a task to fire a simple event named update at this SourceBuffer object.

- Queue a task to fire a simple event named updateend at this SourceBuffer object.
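Because the append completes asynchronously and signals its result through events, applications often wrap it in a promise. The sketch below is one possible way to do that, not part of the specification: `AppendableBuffer` and `appendAsync` are hypothetical names, and the interface lists only the methods the sketch touches. It relies on the event order described in this section and in the append error algorithm: a successful append ends with update followed by updateend, a failed one with error followed by updateend.

```typescript
interface AppendableBuffer {
  appendBuffer(data: Uint8Array): void;
  addEventListener(type: string, listener: () => void): void;
  removeEventListener(type: string, listener: () => void): void;
}

function appendAsync(sb: AppendableBuffer, data: Uint8Array): Promise<void> {
  return new Promise<void>((resolve, reject) => {
    let failed = false;
    const onError = () => {
      failed = true; // updateend follows, so settle the promise there
    };
    const cleanup = () => {
      sb.removeEventListener("error", onError);
      sb.removeEventListener("updateend", onUpdateEnd);
    };
    const onUpdateEnd = () => {
      cleanup();
      if (failed) reject(new Error("append failed"));
      else resolve();
    };
    sb.addEventListener("error", onError);
    sb.addEventListener("updateend", onUpdateEnd);
    try {
      // May throw synchronously if the prepare append checks fail.
      sb.appendBuffer(data);
    } catch (err) {
      cleanup();
      reject(err);
    }
  });
}
```

This sketch does not distinguish an append that was cut short by abort(), which also ends with updateend; an application that calls abort() would need to track that separately.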

3.5.6 Range Removal

Follow these steps when a caller needs to initiate a JavaScript visible range removal operation that blocks other SourceBuffer updates:

- Let start equal the starting presentation timestamp for the removal range, in seconds measured from presentation start time.

- Let end equal the end presentation timestamp for the removal range, in seconds measured from presentation start time.

- Set the updating attribute to true.

- Queue a task to fire a simple event named updatestart at this SourceBuffer object.

- Return control to the caller and run the rest of the steps asynchronously.

- Run the coded frame removal algorithm with start and end as the start and end of the removal range.

- Set the updating attribute to false.

- Queue a task to fire a simple event named update at this SourceBuffer object.

- Queue a task to fire a simple event named updateend at this SourceBuffer object.
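For a live stream, a common reason to start a range removal is trimming media that has already been played. The sketch below computes such a range and starts the removal only when it is safe to do so. All names are hypothetical, `keepBehind` is an assumed tuning value (seconds of played media to retain), and `RemovableBuffer` lists only the members the sketch uses. It assumes remove() rejects calls made while updating is true and ranges where end is not greater than start.

```typescript
interface TimeRange {
  start: number;
  end: number;
}

// Returns the range to drop, or null when nothing old enough is buffered.
function backBufferRemovalRange(
  bufferedStart: number,
  currentTime: number,
  keepBehind: number
): TimeRange | null {
  const start = Math.max(0, bufferedStart); // a negative start is not valid
  const end = currentTime - keepBehind;
  if (!(end > start)) return null; // also filters out NaN
  return { start, end };
}

interface RemovableBuffer {
  readonly updating: boolean;
  remove(start: number, end: number): void;
}

// Returns true when a removal was started.
function trimBackBuffer(
  sb: RemovableBuffer,
  bufferedStart: number,
  currentTime: number,
  keepBehind: number
): boolean {
  if (sb.updating) return false; // another append or removal is still running
  const range = backBufferRemovalRange(bufferedStart, currentTime, keepBehind);
  if (range === null) return false;
  sb.remove(range.start, range.end); // kicks off the asynchronous steps above
  return true;
}
```

After `trimBackBuffer` returns true, the buffer stays in the updating state until updateend fires, so further appends have to wait for that event.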

3.5.7 Initialization Segment Received

The following steps are run when the segment parser loop successfully parses a complete initialization segment:

Each SourceBuffer object has an internal first initialization segment received flag that tracks whether the first initialization segment has been appended and received by this algorithm. This flag is set to false when the SourceBuffer is created and updated by the algorithm below.

- Update the duration attribute if it currently equals NaN:

  - If the initialization segment contains a duration:

    - Run the duration change algorithm with new duration set to the duration in the initialization segment.

  - Otherwise:

    - Run the duration change algorithm with new duration set to positive Infinity.

- If the initialization segment has no audio, video, or text tracks, then run the append error algorithm and abort these steps.

- If the first initialization segment received flag is true, then run the following steps:

  - Verify the following properties. If any of the checks fail then run the append error algorithm and abort these steps.

    - The number of audio, video, and text tracks matches what was in the first initialization segment.

    - The codecs for each track match what was specified in the first initialization segment.

    - If more than one track of a single type is present (e.g., 2 audio tracks), then the Track IDs match the ones in the first initialization segment.

  - Add the appropriate track descriptions from this initialization segment to each of the track buffers.

  - Set the need random access point flag on all track buffers to true.

- Let active track flag equal false.

- If the first initialization segment received flag is false, then run the following steps:

  - If the initialization segment contains tracks with codecs the user agent does not support, then run the append error algorithm and abort these steps.

    Note

    User agents MAY consider codecs, that would otherwise be supported, as "not supported" here if the codecs were not specified in the type parameter passed to addSourceBuffer().

    For example, MediaSource.isTypeSupported('video/webm;codecs="vp8,vorbis"') may return true, but if addSourceBuffer() was called with 'video/webm;codecs="vp8"' and a Vorbis track appears in the initialization segment, then the user agent MAY use this step to trigger a decode error.

  - For each audio track in the initialization segment, run the following steps:

    - Let audio byte stream track ID be the Track ID for the current track being processed.

    - Let audio language be a BCP 47 language tag for the language specified in the initialization segment for this track or an empty string if no language info is present.

    - If audio language equals the 'und' BCP 47 value, then assign an empty string to audio language.

    - Let audio label be a label specified in the initialization segment for this track or an empty string if no label info is present.

    - Let audio kinds be a sequence of kind strings specified in the initialization segment for this track or a sequence with a single empty string element in it if no kind information is provided.

    - For each value in audio kinds, run the following steps:

      - Let current audio kind equal the value from audio kinds for this iteration of the loop.

      - Let new audio track be a new AudioTrack object.

      - Generate a unique ID and assign it to the id property on new audio track.

      - Assign audio language to the language property on new audio track.

      - Assign audio label to the label property on new audio track.

      - Assign current audio kind to the kind property on new audio track.

      - If audioTracks.length equals 0, then run the following steps:

        - Set the enabled property on new audio track to true.

        - Set active track flag to true.

      - Add new audio track to the audioTracks attribute on this SourceBuffer object.

        Note

        This should trigger AudioTrackList [HTML51] logic to queue a task to fire a trusted event named addtrack, that does not bubble and is not cancelable, and that uses the TrackEvent interface, with the track attribute initialized to new audio track, at the AudioTrackList object referenced by the audioTracks attribute on this SourceBuffer object.

      - Add new audio track to the audioTracks attribute on the HTMLMediaElement.

        Note

        This should trigger AudioTrackList [HTML51] logic to queue a task to fire a trusted event named addtrack, that does not bubble and is not cancelable, and that uses the TrackEvent interface, with the track attribute initialized to new audio track, at the AudioTrackList object referenced by the audioTracks attribute on the HTMLMediaElement.

    - Create a new track buffer to store coded frames for this track.

    - Add the track description for this track to the track buffer.

  - For each video track in the initialization segment, run the following steps:

    - Let video byte stream track ID be the Track ID for the current track being processed.

    - Let video language be a BCP 47 language tag for the language specified in the initialization segment for this track or an empty string if no language info is present.

    - If video language equals the 'und' BCP 47 value, then assign an empty string to video language.

    - Let video label be a label specified in the initialization segment for this track or an empty string if no label info is present.

    - Let video kinds be a sequence of kind strings specified in the initialization segment for this track or a sequence with a single empty string element in it if no kind information is provided.

    - For each value in video kinds, run the following steps:

      - Let current video kind equal the value from video kinds for this iteration of the loop.

      - Let new video track be a new VideoTrack object.

      - Generate a unique ID and assign it to the id property on new video track.

      - Assign video language to the language property on new video track.

      - Assign video label to the label property on new video track.

      - Assign current video kind to the kind property on new video track.

      - If videoTracks.length equals 0, then run the following steps:

        - Set the selected property on new video track to true.

        - Set active track flag to true.

      - Add new video track to the videoTracks attribute on this SourceBuffer object.

        Note

        This should trigger