A First Look at OpenStack Neutron DVR

Author: Liping Mao    Published: 2014-09-10

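Copyright notice: this article may be freely reposted, provided that the original source, the author information and this copyright notice are credited with a hyperlink.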

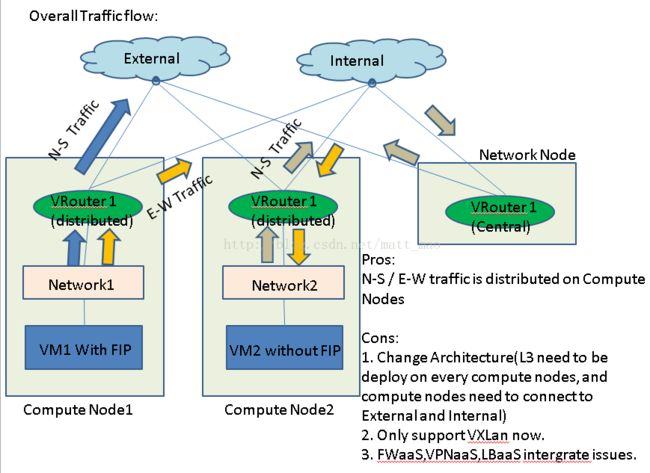

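The DVR code has now been merged into the Juno trunk. My understanding is that DVR is an experimental feature in Juno, so it is best to let it stabilize for one release before putting it into production. I wrote an earlier blog post about DVR, but the code had not been implemented at that point, so that post differs in places from the actual implementation. The current DVR implementation is based on VXLAN. I may write up some thoughts on the advantages of VXLAN when I have time, but I will leave that aside for today.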

I recommend first reading the following slides to get some background on DVR:

https://docs.google.com/presentation/d/1ktCLAdglpKdsC--fQ2F_c2d3X1u-lyRGGUzRu9ITuWo/edit#slide=id.g2b6a14e30_036

https://docs.google.com/presentation/d/1ktCLAdglpKdsC--fQ2F_c2d3X1u-lyRGGUzRu9ITuWo/edit#slide=id.g2b6a14e30_030

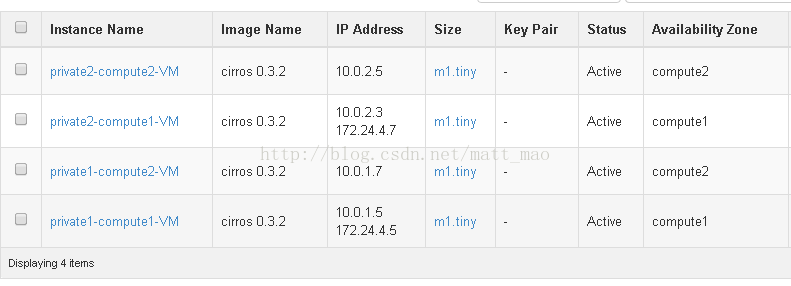

Then I created two private networks and one DVR router. The topology is as follows:

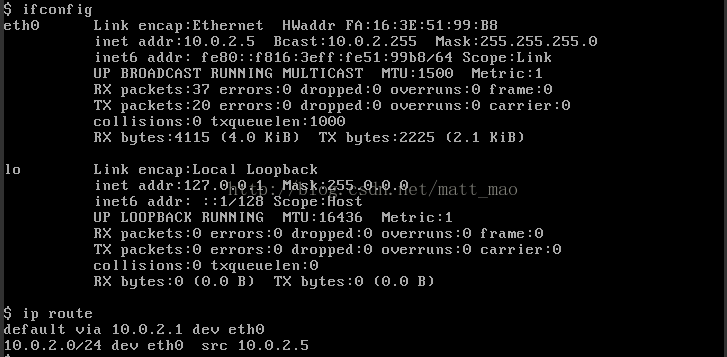

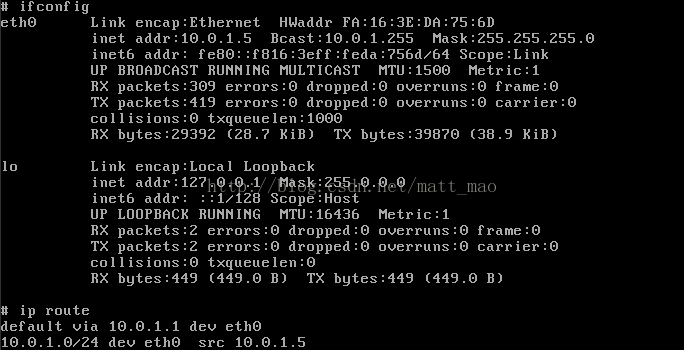

Next, let's look at the IP address and routes of the VM private2-compute2-VM:

The packet is eventually forwarded to qvo4e843b99-fb on br-int:

```
root@dvr-compute1:~# ovs-vsctl show
67f121bd-cca7-41c2-95ab-23ed85d1305b
    Bridge br-tun
        Port patch-int
            Interface patch-int
                type: patch
                options: {peer=patch-tun}
        Port "vxlan-0ae09f91"
            Interface "vxlan-0ae09f91"
                type: vxlan
                options: {df_default="true", in_key=flow, local_ip="10.224.159.141", out_key=flow, remote_ip="10.224.159.145"}
        Port "vxlan-0ae09f88"
            Interface "vxlan-0ae09f88"
                type: vxlan
                options: {df_default="true", in_key=flow, local_ip="10.224.159.141", out_key=flow, remote_ip="10.224.159.136"}
        Port br-tun
            Interface br-tun
                type: internal
    Bridge br-int
        fail_mode: secure
        Port "qvo111517d8-c5"
            tag: 2
            Interface "qvo111517d8-c5"
        Port patch-tun
            Interface patch-tun
                type: patch
                options: {peer=patch-int}
        Port "qr-001d0ed9-01"
            tag: 2
            Interface "qr-001d0ed9-01"
                type: internal
        Port br-int
            Interface br-int
                type: internal
        Port "qr-ddbdc784-d7"
            tag: 1
            Interface "qr-ddbdc784-d7"
                type: internal
        Port "qvo4e843b99-fb"
            tag: 1
            Interface "qvo4e843b99-fb"
    Bridge br-ex
        Port br-ex
            Interface br-ex
                type: internal
        Port "fg-081d537b-06"
            Interface "fg-081d537b-06"
                type: internal
    ovs_version: "2.0.2"
```
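The br-tun port names appear to encode the remote VTEP address in hexadecimal. vxlan-0ae09f91 has remote_ip 10.224.159.145 (0a.e0.9f.91), and vxlan-0ae09f88 has remote_ip 10.224.159.136. The sketch below reproduces that mapping. The helper names are hypothetical, and the naming scheme is inferred from these two ports rather than taken from the agent's code:

```python
import ipaddress


def tunnel_port_name(tunnel_type: str, remote_ip: str) -> str:
    """Build '<type>-<remote IPv4 as 8 hex digits>' (hypothetical helper)."""
    return f"{tunnel_type}-{int(ipaddress.IPv4Address(remote_ip)):08x}"


def remote_ip_from_port(port_name: str) -> str:
    """Recover the remote IPv4 address from a name such as 'vxlan-0ae09f91'."""
    _, hex_ip = port_name.rsplit("-", 1)
    return str(ipaddress.IPv4Address(int(hex_ip, 16)))


if __name__ == "__main__":
    for ip in ("10.224.159.145", "10.224.159.136"):
        name = tunnel_port_name("vxlan", ip)
        print(ip, "->", name, "->", remote_ip_from_port(name))
```

Applying the same decoding to vxlan-0ae09f8d, which appears on the other node's br-tun further down, gives 10.224.159.141, the local_ip of dvr-compute1.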

```
fip-fbd46644-c70f-4227-a414-862a00cbd1d2
qrouter-0fbb351e-a65b-4790-a409-8fb219ce16aa
qdhcp-401f678d-4518-446c-9a33-cd2fb054c104
qdhcp-db755841-0764-4a8f-b962-8df008ce6330
```

```
lo        Link encap:Local Loopback
          inet addr:127.0.0.1 Mask:255.0.0.0
          inet6 addr: ::1/128 Scope:Host
          UP LOOPBACK RUNNING MTU:65536 Metric:1
          RX packets:0 errors:0 dropped:0 overruns:0 frame:0
          TX packets:0 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

qr-001d0ed9-01 Link encap:Ethernet HWaddr fa:16:3e:69:b4:05
          inet addr:10.0.2.1 Bcast:10.0.2.255 Mask:255.255.255.0
          inet6 addr: fe80::f816:3eff:fe69:b405/64 Scope:Link
          UP BROADCAST RUNNING MTU:1500 Metric:1
          RX packets:35 errors:0 dropped:0 overruns:0 frame:0
          TX packets:14 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:3510 (3.5 KB) TX bytes:1092 (1.0 KB)

qr-ddbdc784-d7 Link encap:Ethernet HWaddr fa:16:3e:66:13:af
          inet addr:10.0.1.1 Bcast:10.0.1.255 Mask:255.255.255.0
          inet6 addr: fe80::f816:3eff:fe66:13af/64 Scope:Link
          UP BROADCAST RUNNING MTU:1500 Metric:1
          RX packets:401 errors:0 dropped:0 overruns:0 frame:0
          TX packets:378 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:38224 (38.2 KB) TX bytes:36224 (36.2 KB)

rfp-0fbb351e-a Link encap:Ethernet HWaddr ea:5c:56:9a:36:9c
          inet addr:169.254.31.28 Bcast:0.0.0.0 Mask:255.255.255.254
          inet6 addr: fe80::e85c:56ff:fe9a:369c/64 Scope:Link
          UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
          RX packets:12 errors:0 dropped:0 overruns:0 frame:0
          TX packets:12 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:1000
          RX bytes:1116 (1.1 KB) TX bytes:1116 (1.1 KB)
```
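The device names above are each exactly 14 characters long: qr-001d0ed9-01, qr-ddbdc784-d7 and rfp-0fbb351e-a, and likewise qvo4e843b99-fb and fg-081d537b-06 on the OVS bridges. Each name is a short prefix followed by the beginning of a UUID. For example, rfp-0fbb351e-a is "rfp-" followed by the start of the router ID 0fbb351e-a65b-4790-a409-8fb219ce16aa. The qrouter- namespace carries that same ID in full. The qr-, qvo- and fg- names are presumably built from Neutron port IDs.

Here is a sketch of that scheme, assuming a 14-character limit inferred from these names (Linux allows interface names of up to 15 characters). The function names are hypothetical:

```python
DEV_NAME_LEN = 14  # assumption: inferred from the device names in the output above


def device_name(prefix: str, uuid: str) -> str:
    """Prefix followed by the UUID, truncated to DEV_NAME_LEN characters."""
    return (prefix + uuid)[:DEV_NAME_LEN]


def namespace_name(prefix: str, uuid: str) -> str:
    """Namespace names are not length-limited here, so the full UUID is kept."""
    return prefix + uuid


ROUTER_ID = "0fbb351e-a65b-4790-a409-8fb219ce16aa"

if __name__ == "__main__":
    print(namespace_name("qrouter-", ROUTER_ID))  # the router's namespace
    print(device_name("rfp-", ROUTER_ID))         # qrouter side of the rfp/fpr pair
    print(device_name("fpr-", ROUTER_ID))         # fip-namespace side of the pair
```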

```
NXST_FLOW reply (xid=0x4):
...
 cookie=0x0, duration=62666.766s, table=1, n_packets=2, n_bytes=84, idle_age=62576, priority=3,arp,dl_vlan=2,arp_tpa=10.0.2.1 actions=drop
```
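This flow, presumably from br-tun, drops ARP requests whose target is 10.0.2.1 on local VLAN 2. On this node, VLAN 2 is the tag of qr-001d0ed9-01 (10.0.2.1). My reading is that every compute node hosting the distributed router has its own qr- port with the same gateway IP. An ARP request for the gateway should therefore be answered by the local router and never flooded to other nodes through the tunnels.

The sketch below models only the priority-ordered matching of such a table. The field names are simplified, and the catch-all flow is hypothetical:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Flow:
    priority: int
    match: tuple  # (field, value) pairs that must all equal the packet's fields
    actions: str


# Table 1 reduced to the single flow shown above, plus a hypothetical
# catch-all standing in for the flows elided with "...".
TABLE_1 = [
    Flow(3, (("proto", "arp"), ("dl_vlan", 2), ("arp_tpa", "10.0.2.1")), "drop"),
    Flow(0, (), "continue"),  # hypothetical, not from the article
]


def lookup(table, pkt: dict) -> Optional[str]:
    """Return the actions of the highest-priority flow whose match fields all equal the packet's."""
    for flow in sorted(table, key=lambda f: f.priority, reverse=True):
        if all(pkt.get(k) == v for k, v in flow.match):
            return flow.actions
    return None


if __name__ == "__main__":
    arp_for_gw = {"proto": "arp", "dl_vlan": 2, "arp_tpa": "10.0.2.1"}
    arp_for_vm = {"proto": "arp", "dl_vlan": 2, "arp_tpa": "10.0.2.3"}
    print(lookup(TABLE_1, arp_for_gw), lookup(TABLE_1, arp_for_vm))
```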

```
0: from all lookup local
32766: from all lookup main
32767: from all lookup default
32768: from 10.0.1.5 lookup 16
32769: from 10.0.2.3 lookup 16
167772417: from 10.0.1.1/24 lookup 167772417
167772417: from 10.0.1.1/24 lookup 167772417
167772673: from 10.0.2.1/24 lookup 167772673
```
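The large rule priorities and table IDs follow a simple rule: each is the subnet's gateway address read as a 32-bit integer. For 10.0.1.1 that is 10·2^24 + 0·2^16 + 1·2^8 + 1 = 167772417, and the same calculation for 10.0.2.1 gives 167772673. Table 16 is a separate, fixed table used by the per-VM rules for 10.0.1.5 and 10.0.2.3. A quick check of the arithmetic, with hypothetical helper names:

```python
import ipaddress


def table_id(gateway_ip: str) -> int:
    """Interpret a dotted-quad IPv4 address as an unsigned 32-bit integer."""
    return int(ipaddress.IPv4Address(gateway_ip))


def table_id_by_hand(gateway_ip: str) -> int:
    """Same value computed octet by octet: a*2**24 + b*2**16 + c*2**8 + d."""
    a, b, c, d = (int(x) for x in gateway_ip.split("."))
    return (a << 24) | (b << 16) | (c << 8) | d


if __name__ == "__main__":
    for gw in ("10.0.1.1", "10.0.2.1"):
        print(gw, table_id(gw), table_id_by_hand(gw))
```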

```
root@dvr-compute1:~# ip netns exec qrouter-0fbb351e-a65b-4790-a409-8fb219ce16aa ip route list table main
10.0.1.0/24 dev qr-ddbdc784-d7 proto kernel scope link src 10.0.1.1
10.0.2.0/24 dev qr-001d0ed9-01 proto kernel scope link src 10.0.2.1
169.254.31.28/31 dev rfp-0fbb351e-a proto kernel scope link src 169.254.31.28
```

```
10.0.1.5 dev qr-ddbdc784-d7 lladdr fa:16:3e:da:75:6d PERMANENT
10.0.2.3 dev qr-001d0ed9-01 lladdr fa:16:3e:a4:fc:98 PERMANENT
10.0.1.6 dev qr-ddbdc784-d7 lladdr fa:16:3e:9f:55:67 PERMANENT
10.0.2.2 dev qr-001d0ed9-01 lladdr fa:16:3e:13:55:66 PERMANENT
10.0.2.5 dev qr-001d0ed9-01 lladdr fa:16:3e:51:99:b8 PERMANENT
10.0.1.4 dev qr-ddbdc784-d7 lladdr fa:16:3e:da:e3:6e PERMANENT
10.0.1.7 dev qr-ddbdc784-d7 lladdr fa:16:3e:14:b8:ec PERMANENT
169.254.31.29 dev rfp-0fbb351e-a lladdr 42:0d:9f:49:63:c6 STALE
```
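Every address on the two subnets appears as a PERMANENT neighbor entry, including 10.0.1.6 and 10.0.2.2, whose MACs match the sg- ports of the SNAT namespace shown at the end. As a result, the distributed router never has to ARP across the tunnels. Only 169.254.31.29, the fip-namespace end of the rfp/fpr link, was learned dynamically (STALE).

Below is a sketch of how such entries could be generated from a port list, choosing the qr- device by subnet. The addresses are copied from the output above. The function is hypothetical and is not the L3 agent's code:

```python
import ipaddress

# Subnets served by each qr- device in the qrouter namespace (from the output above)
QR_DEVICES = {
    ipaddress.ip_network("10.0.1.0/24"): "qr-ddbdc784-d7",
    ipaddress.ip_network("10.0.2.0/24"): "qr-001d0ed9-01",
}

# A few (IP, MAC) pairs copied from the PERMANENT entries above
PORTS = [
    ("10.0.1.5", "fa:16:3e:da:75:6d"),
    ("10.0.2.3", "fa:16:3e:a4:fc:98"),
    ("10.0.1.6", "fa:16:3e:9f:55:67"),
]


def neigh_commands(ports):
    """Return one `ip neigh replace ... nud permanent` command per port,
    choosing the qr- device whose subnet contains the port's IP.
    Ports outside every router subnet are skipped."""
    cmds = []
    for ip, mac in ports:
        addr = ipaddress.ip_address(ip)
        dev = next((d for net, d in QR_DEVICES.items() if addr in net), None)
        if dev is None:
            continue
        cmds.append(f"ip neigh replace {ip} lladdr {mac} dev {dev} nud permanent")
    return cmds


if __name__ == "__main__":
    # Each command would be run inside the router namespace, e.g. prefixed with
    # `ip netns exec qrouter-0fbb351e-a65b-4790-a409-8fb219ce16aa`.
    print("\n".join(neigh_commands(PORTS)))
```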

```
OFPT_FEATURES_REPLY (xid=0x2): dpid:0000e2b7aa5da34a
n_tables:254, n_buffers:256
capabilities: FLOW_STATS TABLE_STATS PORT_STATS QUEUE_STATS ARP_MATCH_IP
actions: OUTPUT SET_VLAN_VID SET_VLAN_PCP STRIP_VLAN SET_DL_SRC SET_DL_DST SET_NW_SRC SET_NW_DST SET_NW_TOS SET_TP_SRC SET_TP_DST ENQUEUE
 1(patch-int): addr:76:ae:9f:b3:bf:c6
     config: 0
     state: 0
     speed: 0 Mbps now, 0 Mbps max
 3(vxlan-0ae09f88): addr:92:61:e9:43:dd:99
     config: 0
     state: 0
     speed: 0 Mbps now, 0 Mbps max
 4(vxlan-0ae09f91): addr:2e:cc:c0:4a:4e:d4
     config: 0
     state: 0
     speed: 0 Mbps now, 0 Mbps max
 LOCAL(br-tun): addr:e2:b7:aa:5d:a3:4a
     config: 0
     state: 0
     speed: 0 Mbps now, 0 Mbps max
OFPT_GET_CONFIG_REPLY (xid=0x4): frags=normal miss_send_len=0
```
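ovs-ofctl show lists the OpenFlow port numbers that flow entries use, for example in in_port=3 or output:4. On this br-tun, port 3 is the tunnel to 10.224.159.136 and port 4 is the tunnel to 10.224.159.145.

The small parser below assumes the port-line format shown above. It decodes tunnel names using the hex naming scheme inferred from the ovs-vsctl output, and its function names are hypothetical:

```python
import ipaddress
import re

# Matches lines such as " 3(vxlan-0ae09f88): addr:92:61:e9:43:dd:99"
PORT_LINE = re.compile(r"^\s*(\d+|LOCAL)\(([^)]+)\):")


def parse_ports(show_output: str) -> dict:
    """Map OpenFlow port number (as a string, or 'LOCAL') to port name."""
    ports = {}
    for line in show_output.splitlines():
        m = PORT_LINE.match(line)
        if m:
            ports[m.group(1)] = m.group(2)
    return ports


def tunnel_peer(name: str):
    """Return the remote IP encoded in a 'vxlan-XXXXXXXX' name, else None."""
    if not name.startswith("vxlan-"):
        return None
    return str(ipaddress.IPv4Address(int(name.split("-", 1)[1], 16)))


SAMPLE = """\
 1(patch-int): addr:76:ae:9f:b3:bf:c6
     config: 0
 3(vxlan-0ae09f88): addr:92:61:e9:43:dd:99
 4(vxlan-0ae09f91): addr:2e:cc:c0:4a:4e:d4
 LOCAL(br-tun): addr:e2:b7:aa:5d:a3:4a
"""

if __name__ == "__main__":
    for num, name in parse_ports(SAMPLE).items():
        print(num, name, tunnel_peer(name))
```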

```
67f121bd-cca7-41c2-95ab-23ed85d1305b
    Bridge br-tun
        Port patch-int
            Interface patch-int
                type: patch
                options: {peer=patch-tun}
        Port "vxlan-0ae09f91"
            Interface "vxlan-0ae09f91"
                type: vxlan
                options: {df_default="true", in_key=flow, local_ip="10.224.159.141", out_key=flow, remote_ip="10.224.159.145"}
        Port "vxlan-0ae09f88"
            Interface "vxlan-0ae09f88"
                type: vxlan
                options: {df_default="true", in_key=flow, local_ip="10.224.159.141", out_key=flow, remote_ip="10.224.159.136"}
        Port br-tun
            Interface br-tun
                type: internal
```

OVS then encapsulates this packet with VXLAN, wrapping the L2 frame inside a VXLAN header. The packet headers are as follows:

```
OFPT_FEATURES_REPLY (xid=0x2): dpid:000062e9fb8b8f42
n_tables:254, n_buffers:256
capabilities: FLOW_STATS TABLE_STATS PORT_STATS QUEUE_STATS ARP_MATCH_IP
actions: OUTPUT SET_VLAN_VID SET_VLAN_PCP STRIP_VLAN SET_DL_SRC SET_DL_DST SET_NW_SRC SET_NW_DST SET_NW_TOS SET_TP_SRC SET_TP_DST ENQUEUE
 1(patch-int): addr:02:dc:f1:96:db:bd
     config: 0
     state: 0
     speed: 0 Mbps now, 0 Mbps max
 3(vxlan-0ae09f88): addr:b6:4b:d0:83:07:52
     config: 0
     state: 0
     speed: 0 Mbps now, 0 Mbps max
 4(vxlan-0ae09f8d): addr:12:e5:36:2c:1a:36
     config: 0
     state: 0
     speed: 0 Mbps now, 0 Mbps max
 LOCAL(br-tun): addr:62:e9:fb:8b:8f:42
     config: 0
     state: 0
     speed: 0 Mbps now, 0 Mbps max
OFPT_GET_CONFIG_REPLY (xid=0x4): frags=normal miss_send_len=0
```
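To make the VXLAN encapsulation step concrete, here is the header format from RFC 7348. It is 8 bytes: a flags byte with the I bit (0x08) set, 24 reserved bits, a 24-bit VNI, and 8 more reserved bits. It travels over UDP (IANA port 4789).

On an IPv4 underlay, the outer packet is 20 (outer IP) + 8 (UDP) + 8 (VXLAN) + 14 (inner Ethernet header) = 50 bytes larger than the inner IP packet. The df_default="true" option on the tunnel ports above suggests the outer packets are sent with DF set, so this overhead matters for the MTU. The segmentation IDs of these networks are not shown, so the VNI below is a hypothetical value:

```python
import struct

VXLAN_FLAG_I = 0x08  # "VNI is valid" flag defined in RFC 7348


def vxlan_header(vni: int) -> bytes:
    """8-byte VXLAN header: flags(8) | reserved(24) | VNI(24) | reserved(8), big-endian."""
    if not 0 <= vni < 1 << 24:
        raise ValueError("VNI must fit in 24 bits")
    return struct.pack("!II", VXLAN_FLAG_I << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from the first 8 bytes, checking that the I flag is set."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_I:
        raise ValueError("I flag not set")
    return vni_word >> 8


# Bytes the outer IPv4 packet adds on top of the inner IP packet
OUTER_IPV4, OUTER_UDP, VXLAN, INNER_ETH = 20, 8, 8, 14
OVERHEAD = OUTER_IPV4 + OUTER_UDP + VXLAN + INNER_ETH

if __name__ == "__main__":
    hdr = vxlan_header(1001)  # hypothetical VNI
    print(hdr.hex())          # 080000000003e900
    print(parse_vni(hdr), OVERHEAD)
```

With a 1500-byte underlay MTU, this means either the underlay MTU is raised to at least 1550 or the instances use an MTU of 1450.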

```
0: from all lookup local
32766: from all lookup main
32767: from all lookup default
32768: from 10.0.1.5 lookup 16
32769: from 10.0.2.3 lookup 16
167772417: from 10.0.1.1/24 lookup 167772417
167772417: from 10.0.1.1/24 lookup 167772417
167772673: from 10.0.2.1/24 lookup 167772673
```

```
10.0.1.0/24 dev qr-ddbdc784-d7 proto kernel scope link src 10.0.1.1
10.0.2.0/24 dev qr-001d0ed9-01 proto kernel scope link src 10.0.2.1
169.254.31.28/31 dev rfp-0fbb351e-a proto kernel scope link src 169.254.31.28
```

```
default via 169.254.31.29 dev rfp-0fbb351e-a
```

```
fg-081d537b-06 Link encap:Ethernet HWaddr fa:16:3e:a4:eb:6b
          inet addr:172.24.4.6 Bcast:172.24.4.255 Mask:255.255.255.0
          inet6 addr: fe80::f816:3eff:fea4:eb6b/64 Scope:Link
          UP BROADCAST RUNNING MTU:1500 Metric:1
          RX packets:0 errors:0 dropped:0 overruns:0 frame:0
          TX packets:50 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:0 (0.0 B) TX bytes:2512 (2.5 KB)

fpr-0fbb351e-a Link encap:Ethernet HWaddr 42:0d:9f:49:63:c6
          inet addr:169.254.31.29 Bcast:0.0.0.0 Mask:255.255.255.254
          inet6 addr: fe80::400d:9fff:fe49:63c6/64 Scope:Link
          UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
          RX packets:12 errors:0 dropped:0 overruns:0 frame:0
          TX packets:12 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:1000
          RX bytes:1116 (1.1 KB) TX bytes:1116 (1.1 KB)

lo        Link encap:Local Loopback
          inet addr:127.0.0.1 Mask:255.0.0.0
          inet6 addr: ::1/128 Scope:Host
          UP LOOPBACK RUNNING MTU:65536 Metric:1
          RX packets:13 errors:0 dropped:0 overruns:0 frame:0
          TX packets:13 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:1250 (1.2 KB) TX bytes:1250 (1.2 KB)
```

```
default via 172.24.4.1 dev fg-081d537b-06
169.254.31.28/31 dev fpr-0fbb351e-a proto kernel scope link src 169.254.31.29
172.24.4.0/24 dev fg-081d537b-06 proto kernel scope link src 172.24.4.6
172.24.4.5 via 169.254.31.28 dev fpr-0fbb351e-a
172.24.4.7 via 169.254.31.28 dev fpr-0fbb351e-a
```

```
net.ipv4.conf.fg-081d537b-06.proxy_arp = 1
```

```
...
172.24.4.5 via 169.254.31.28 dev fpr-0fbb351e-a
172.24.4.7 via 169.254.31.28 dev fpr-0fbb351e-a
```
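The fip namespace routes and proxy_arp setting above work together. Inbound traffic for a floating IP such as 172.24.4.5 arrives on fg-081d537b-06. The /32 host route beats the connected 172.24.4.0/24 route by longest-prefix match, so the packet is sent over fpr-0fbb351e-a to the qrouter namespace. Because proxy_arp is enabled on fg and the route to a floating IP leaves through a different device, the namespace can answer ARP for the floating IPs on the external network.

The sketch below uses the routing table copied from the output. The proxy-ARP rule is a simplification of the kernel's behavior, and the function names are hypothetical:

```python
import ipaddress
from typing import NamedTuple, Optional


class Route(NamedTuple):
    prefix: ipaddress.IPv4Network
    dev: str
    via: Optional[str] = None


# Routing table of the fip- namespace, copied from the output above
FIP_ROUTES = [
    Route(ipaddress.ip_network("0.0.0.0/0"), "fg-081d537b-06", "172.24.4.1"),
    Route(ipaddress.ip_network("169.254.31.28/31"), "fpr-0fbb351e-a"),
    Route(ipaddress.ip_network("172.24.4.0/24"), "fg-081d537b-06"),
    Route(ipaddress.ip_network("172.24.4.5/32"), "fpr-0fbb351e-a", "169.254.31.28"),
    Route(ipaddress.ip_network("172.24.4.7/32"), "fpr-0fbb351e-a", "169.254.31.28"),
]

PROXY_ARP = {"fg-081d537b-06": True}  # net.ipv4.conf.fg-081d537b-06.proxy_arp = 1


def lookup(dst: str) -> Route:
    """Longest-prefix match; the default route guarantees at least one candidate."""
    addr = ipaddress.ip_address(dst)
    return max((r for r in FIP_ROUTES if addr in r.prefix), key=lambda r: r.prefix.prefixlen)


def answers_arp(target: str, in_dev: str) -> bool:
    """Simplified proxy ARP: reply if proxy_arp is on for the ingress device
    and the route to the target leaves through a different device."""
    return PROXY_ARP.get(in_dev, False) and lookup(target).dev != in_dev


if __name__ == "__main__":
    for ip in ("172.24.4.5", "172.24.4.9", "8.8.8.8"):
        print(ip, lookup(ip), answers_arp(ip, "fg-081d537b-06"))
```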

```
1: lo:
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    inet 127.0.0.1/8 scope host lo
       valid_lft forever preferred_lft forever
    inet6 ::1/128 scope host
       valid_lft forever preferred_lft forever
2: rfp-0fbb351e-a:
    link/ether ea:5c:56:9a:36:9c brd ff:ff:ff:ff:ff:ff
    inet 169.254.31.28/31 scope global rfp-0fbb351e-a
       valid_lft forever preferred_lft forever
    inet 172.24.4.5/32 brd 172.24.4.5 scope global rfp-0fbb351e-a
       valid_lft forever preferred_lft forever
    inet 172.24.4.7/32 brd 172.24.4.7 scope global rfp-0fbb351e-a
       valid_lft forever preferred_lft forever
    inet6 fe80::e85c:56ff:fe9a:369c/64 scope link
       valid_lft forever preferred_lft forever
17: qr-ddbdc784-d7:
    link/ether fa:16:3e:66:13:af brd ff:ff:ff:ff:ff:ff
    inet 10.0.1.1/24 brd 10.0.1.255 scope global qr-ddbdc784-d7
       valid_lft forever preferred_lft forever
    inet6 fe80::f816:3eff:fe66:13af/64 scope link
       valid_lft forever preferred_lft forever
19: qr-001d0ed9-01:
    link/ether fa:16:3e:69:b4:05 brd ff:ff:ff:ff:ff:ff
    inet 10.0.2.1/24 brd 10.0.2.255 scope global qr-001d0ed9-01
       valid_lft forever preferred_lft forever
    inet6 fe80::f816:3eff:fe69:b405/64 scope link
       valid_lft forever preferred_lft forever
```

```
10.0.1.0/24 dev qr-ddbdc784-d7 proto kernel scope link src 10.0.1.1
10.0.2.0/24 dev qr-001d0ed9-01 proto kernel scope link src 10.0.2.1
169.254.31.28/31 dev rfp-0fbb351e-a proto kernel scope link src 169.254.31.28
```

```
0: from all lookup local
32766: from all lookup main
32767: from all lookup default
32768: from 10.0.1.5 lookup 16
32769: from 10.0.2.3 lookup 16
167772417: from 10.0.1.1/24 lookup 167772417
167772417: from 10.0.1.1/24 lookup 167772417
167772673: from 10.0.2.1/24 lookup 167772673
```

```
default via 10.0.1.6 dev qr-ddbdc784-d7
```

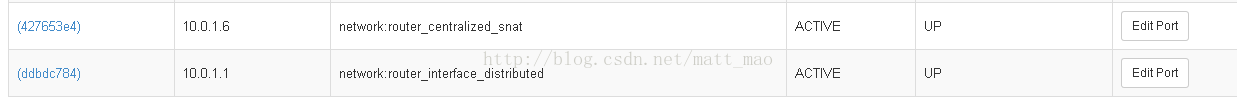

This table forwards the packet to 10.0.1.6, which is the router_centralized_snat interface on the network node.
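Putting the rules and tables together, the qrouter namespace consults main first. The main table only knows the directly connected subnets, so east-west traffic between 10.0.1.0/24 and 10.0.2.0/24 is routed locally on the compute node. External traffic finds no match in main and falls through to the later rules:

- A VM with its own rule (10.0.1.5, 10.0.2.3; presumably the VMs holding the floating IPs configured on rfp-0fbb351e-a) hits table 16 and leaves through rfp toward the fip namespace.
- Every other VM on 10.0.1.0/24 hits table 167772417 and is sent to 10.0.1.6 for centralized SNAT.

The simulation below uses only the rules and tables shown above. It is a simplified model of Linux policy routing (first table with a matching route wins), not the kernel's full algorithm, and the function names are hypothetical:

```python
import ipaddress
from typing import NamedTuple, Optional


class Route(NamedTuple):
    prefix: ipaddress.IPv4Network
    dev: str
    via: Optional[str] = None


def net(cidr: str) -> ipaddress.IPv4Network:
    # strict=False accepts "10.0.1.1/24" exactly as `ip rule` prints it
    return ipaddress.ip_network(cidr, strict=False)


# Tables in the qrouter namespace, copied from the output above. The local table
# is left empty for brevity; table 167772673 is empty because it is not shown.
TABLES = {
    "local": [],
    "main": [
        Route(net("10.0.1.0/24"), "qr-ddbdc784-d7"),
        Route(net("10.0.2.0/24"), "qr-001d0ed9-01"),
        Route(net("169.254.31.28/31"), "rfp-0fbb351e-a"),
    ],
    "default": [],
    16: [Route(net("0.0.0.0/0"), "rfp-0fbb351e-a", "169.254.31.29")],
    167772417: [Route(net("0.0.0.0/0"), "qr-ddbdc784-d7", "10.0.1.6")],
    167772673: [],
}

# (priority, source selector, table) as listed by `ip rule`
RULES = [
    (0, net("0.0.0.0/0"), "local"),
    (32766, net("0.0.0.0/0"), "main"),
    (32767, net("0.0.0.0/0"), "default"),
    (32768, net("10.0.1.5/32"), 16),
    (32769, net("10.0.2.3/32"), 16),
    (167772417, net("10.0.1.1/24"), 167772417),
    (167772673, net("10.0.2.1/24"), 167772673),
]


def policy_route(src: str, dst: str):
    """Walk the rules in priority order; the first table containing a matching
    route decides (longest prefix within it). Returns (table, Route) or None."""
    s, d = ipaddress.ip_address(src), ipaddress.ip_address(dst)
    for _prio, selector, table in sorted(RULES, key=lambda r: r[0]):
        if s not in selector:
            continue
        matches = [r for r in TABLES[table] if d in r.prefix]
        if matches:
            return table, max(matches, key=lambda r: r.prefix.prefixlen)
    return None


if __name__ == "__main__":
    print(policy_route("10.0.1.5", "8.8.8.8"))   # floating-IP path via table 16
    print(policy_route("10.0.1.4", "8.8.8.8"))   # centralized SNAT via 10.0.1.6
    print(policy_route("10.0.1.5", "10.0.2.3"))  # east-west, routed locally
```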

```
lo        Link encap:Local Loopback
          inet addr:127.0.0.1 Mask:255.0.0.0
          inet6 addr: ::1/128 Scope:Host
          UP LOOPBACK RUNNING MTU:65536 Metric:1
          RX packets:0 errors:0 dropped:0 overruns:0 frame:0
          TX packets:0 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

qg-4d15b7f6-cb Link encap:Ethernet HWaddr fa:16:3e:24:0b:6b
          inet addr:172.24.4.4 Bcast:172.24.4.255 Mask:255.255.255.0
          inet6 addr: fe80::f816:3eff:fe24:b6b/64 Scope:Link
          UP BROADCAST RUNNING MTU:1500 Metric:1
          RX packets:5 errors:0 dropped:0 overruns:0 frame:0
          TX packets:144 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:210 (210.0 B) TX bytes:13320 (13.3 KB)

sg-427653e4-a3 Link encap:Ethernet HWaddr fa:16:3e:9f:55:67
          inet addr:10.0.1.6 Bcast:10.0.1.255 Mask:255.255.255.0
          inet6 addr: fe80::f816:3eff:fe9f:5567/64 Scope:Link
          UP BROADCAST RUNNING MTU:1500 Metric:1
          RX packets:167 errors:0 dropped:0 overruns:0 frame:0
          TX packets:52 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:16260 (16.2 KB) TX bytes:4460 (4.4 KB)

sg-5df1ec71-d3 Link encap:Ethernet HWaddr fa:16:3e:13:55:66
          inet addr:10.0.2.2 Bcast:10.0.2.255 Mask:255.255.255.0
          inet6 addr: fe80::f816:3eff:fe13:5566/64 Scope:Link
          UP BROADCAST RUNNING MTU:1500 Metric:1
          RX packets:34 errors:0 dropped:0 overruns:0 frame:0
          TX packets:12 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:3412 (3.4 KB) TX bytes:952 (952.0 B)
```
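In this SNAT namespace, sg-427653e4-a3 (10.0.1.6) and sg-5df1ec71-d3 (10.0.2.2) face the two private subnets, and qg-4d15b7f6-cb (172.24.4.4) faces the external network. VMs without a floating IP therefore presumably share 172.24.4.4 as their source address.

The iptables rules are not shown here, so the following is only a conceptual sketch of source NAT with port translation, not how netfilter or Neutron implements it. The class name and the port range are hypothetical:

```python
import itertools


class SnatTable:
    """Toy port-address translation: many private (ip, port) pairs share one public IP."""

    def __init__(self, public_ip: str, first_port: int = 20000):
        self.public_ip = public_ip
        self._ports = itertools.count(first_port)  # hypothetical allocation range
        self._out = {}   # (private_ip, private_port) -> public_port
        self._back = {}  # public_port -> (private_ip, private_port)

    def outbound(self, src_ip: str, src_port: int):
        """Translate an outgoing flow, reusing the mapping if the flow is already known."""
        key = (src_ip, src_port)
        if key not in self._out:
            port = next(self._ports)
            self._out[key] = port
            self._back[port] = key
        return self.public_ip, self._out[key]

    def inbound(self, dst_port: int):
        """Map a reply back to the private endpoint, or None if no mapping exists."""
        return self._back.get(dst_port)


if __name__ == "__main__":
    nat = SnatTable("172.24.4.4")  # qg- address from the output above
    print(nat.outbound("10.0.1.4", 5000))
    print(nat.outbound("10.0.2.5", 5000))
```

Two VMs using the same source port still get distinct public ports, which is what lets the reply traffic be mapped back to the right VM.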