Deploying a Kubernetes Cluster from Binaries: An Ultra-Detailed Tutorial

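Preface: this post collects my personal notes from building the cluster step by step, after hitting countless pitfalls and repeatedly consulting references. I'm sharing them here.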

00. Component versions and configuration strategy

00-01. Component versions

- Kubernetes 1.10.4

- Docker 18.03.1-ce

- Etcd 3.3.7

- Flanneld 0.10.0

- Add-ons:

  - Coredns

  - Dashboard

  - Heapster (influxdb, grafana)

  - Metrics-Server

  - EFK (elasticsearch, fluentd, kibana)

- Image registries:

  - docker registry

  - harbor

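00-02. Main configuration strategy

kube-apiserver:

- Three-node high availability using keepalived and haproxy;

- Disable the insecure port 8080 and anonymous access;

- Serve https requests on the secure port 6443;

- Strict authentication and authorization policies (x509, token, RBAC);

- Enable bootstrap token authentication to support kubelet TLS bootstrapping;

- Access kubelet and etcd over https so that traffic is encrypted;

kube-controller-manager:

- Three-node high availability;

- Disable the insecure port and serve https requests on the secure port 10252;

- Use a kubeconfig to access the apiserver's secure port;

- Automatically approve kubelet certificate signing requests (CSR) and rotate certificates automatically when they expire;

- Each controller uses its own ServiceAccount to access the apiserver;

kube-scheduler:

- Three-node high availability;

- Use a kubeconfig to access the apiserver's secure port;

kubelet:

- Use kubeadm to create bootstrap tokens dynamically instead of configuring them statically in the apiserver;

- Use the TLS bootstrap mechanism to generate client and server certificates automatically, and rotate them automatically when they expire;

- Configure the main parameters in a JSON file of type KubeletConfiguration;

- Disable the read-only port and serve https requests on the secure port 10250, authenticating and authorizing every request and rejecting anonymous and unauthorized access;

- Use a kubeconfig to access the apiserver's secure port;

kube-proxy:

- Use a kubeconfig to access the apiserver's secure port;

- Configure the main parameters in a JSON file of type KubeProxyConfiguration;

- Use the ipvs proxy mode;

Cluster add-ons:

- DNS: coredns, which offers better functionality and performance;

- Dashboard: with login authentication;

- Metrics: heapster and metrics-server, accessing the kubelet secure port over https;

- Logging: Elasticsearch, Fluentd, Kibana;

- Registry: docker-registry, harbor;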

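01. System initialization

01-01. Cluster machines

- kube-master: 192.168.10.108

- kube-node1: 192.168.10.109

- kube-node2: 192.168.10.110

In this document the etcd cluster, the master nodes and the worker nodes all run on these three machines.

All of the operations in this chapter must be performed on every server. Elsewhere in this document, unless stated otherwise, operations are performed on the kube-master node.

01-02. Hostnames

1. Set a permanent hostname (run the matching command on each machine), then log in again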

$ sudo hostnamectl set-hostname kube-master

$ sudo hostnamectl set-hostname kube-node1

$ sudo hostnamectl set-hostname kube-node2

2. Edit /etc/hosts and add the hostname-to-IP mappings:

$ vim /etc/hosts

192.168.10.108 kube-master

192.168.10.109 kube-node1

192.168.10.110 kube-node2
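Editing the file by hand on three machines is easy to get slightly wrong, so here is a minimal sketch of adding the same entries idempotently. The `HOSTS_FILE` variable and the `add_host_entry` helper are my own names for illustration; by default the snippet writes to a scratch file rather than the real /etc/hosts.

```shell
# Sketch: append host entries only when they are missing.
# HOSTS_FILE is a hypothetical override; on a real node it would be
# /etc/hosts (and the script would need root).
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

add_host_entry() {
  # $1 = IP address, $2 = hostname
  # -w matches whole words, so kube-node1 does not match kube-node10
  if ! grep -qw -- "$2" "$HOSTS_FILE" 2>/dev/null; then
    printf '%s %s\n' "$1" "$2" >> "$HOSTS_FILE"
  fi
}

add_host_entry 192.168.10.108 kube-master
add_host_entry 192.168.10.109 kube-node1
add_host_entry 192.168.10.110 kube-node2
# Calling it again must not duplicate the line
add_host_entry 192.168.10.108 kube-master

cat "$HOSTS_FILE"
```

To apply it for real, something like `HOSTS_FILE=/etc/hosts` run as root on each machine should work, but check the resulting file before relying on it.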

01-03. Add the k8s and docker accounts

1. Add a k8s account on every machine

$ sudo useradd -m k8s

$ sudo sh -c 'echo along |passwd k8s --stdin' # set the password of the k8s account

2. Edit the sudoers file with visudo

$ sudo visudo # uncomment the line: %wheel ALL=(ALL) NOPASSWD: ALL

$ sudo grep '%wheel.*NOPASSWD: ALL' /etc/sudoers

%wheel ALL=(ALL) NOPASSWD: ALL

3. Add the k8s user to the wheel group

$ sudo gpasswd -a k8s wheel

Adding user k8s to group wheel

$ id k8s

uid=1000(k8s) gid=1000(k8s) groups=1000(k8s),10(wheel)

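4. On every machine, add a docker account, add the k8s account to the docker group, and configure the dockerd parameters (note: the configuration only takes effect once docker is installed):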

$ sudo useradd -m docker

$ sudo gpasswd -a k8s docker

$ sudo mkdir -p /opt/docker/

$ vim /opt/docker/daemon.json # this can also be done later, when deploying docker

{

"registry-mirrors": ["https://hub-mirror.c.163.com", "https://docker.mirrors.ustc.edu.cn"],

"max-concurrent-downloads": 20

}
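If you would rather not open vim on every machine, the same file can be written non-interactively. This is only a sketch: `DOCKER_CONF_DIR` is a name I made up so the snippet can be tried in a scratch directory; the article itself uses /opt/docker.

```shell
# Sketch: write daemon.json with a heredoc instead of editing it in vim.
# DOCKER_CONF_DIR is a hypothetical override; the article uses /opt/docker.
DOCKER_CONF_DIR="${DOCKER_CONF_DIR:-$(mktemp -d)}"

# The quoted 'EOF' keeps the shell from expanding anything inside the body
cat > "$DOCKER_CONF_DIR/daemon.json" <<'EOF'
{
  "registry-mirrors": ["https://hub-mirror.c.163.com", "https://docker.mirrors.ustc.edu.cn"],
  "max-concurrent-downloads": 20
}
EOF

cat "$DOCKER_CONF_DIR/daemon.json"
```

A trailing comma or a missing quote in this file tends to stop dockerd from starting, so it is worth eyeballing the output once.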

01-04. Passwordless ssh login to the other nodes

1. Generate a key pair

[root@kube-master ~]# ssh-keygen # just keep pressing Enter

2. Copy the public key to the other servers

[root@kube-master ~]# ssh-copy-id root@kube-master

[root@kube-master ~]# ssh-copy-id root@kube-node1

[root@kube-master ~]# ssh-copy-id root@kube-node2

[root@kube-master ~]# ssh-copy-id k8s@kube-master

[root@kube-master ~]# ssh-copy-id k8s@kube-node1

[root@kube-master ~]# ssh-copy-id k8s@kube-node2
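The six commands above are just every combination of two users and three hosts, so they can be generated with a loop. A small sketch follows; the `DRY_RUN` switch and the `copy_keys` function are my own additions, and by default the snippet only prints the commands.

```shell
# Sketch: produce the ssh-copy-id call for every user/host pair.
# DRY_RUN=1 (the default here) only prints the commands;
# set DRY_RUN=0 to actually run them (each one prompts for a password).
DRY_RUN="${DRY_RUN:-1}"
SSH_USERS="root k8s"
SSH_HOSTS="kube-master kube-node1 kube-node2"

copy_keys() {
  for user in $SSH_USERS; do
    for host in $SSH_HOSTS; do
      if [ "$DRY_RUN" = "1" ]; then
        echo "ssh-copy-id ${user}@${host}"
      else
        ssh-copy-id "${user}@${host}"
      fi
    done
  done
}

copy_keys
```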

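01-05. Add the executable path /opt/k8s/bin to the PATH variable

Add the environment variable on every machine (the \$ escapes keep the variables unexpanded, so they are evaluated at login rather than frozen into the file):

$ sudo sh -c 'echo "PATH=/opt/k8s/bin:\$PATH:\$HOME/bin:\$JAVA_HOME/bin" >> /etc/profile.d/k8s.sh'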

$ source /etc/profile.d/k8s.sh
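Quoting is the part that is easy to get wrong here: if $PATH is expanded at the moment the file is written, the current value is baked into the script. The sketch below shows the idea on a scratch file; `PROFILE_FILE` is a hypothetical name of mine, and the real target in this article is /etc/profile.d/k8s.sh.

```shell
# Sketch: write a profile snippet with $PATH left unexpanded, so it is
# evaluated when the file is sourced, not when it is created.
# PROFILE_FILE is a hypothetical override for trying this on a scratch file.
PROFILE_FILE="${PROFILE_FILE:-$(mktemp)}"

# Single quotes keep $PATH literal in the generated file
echo 'export PATH=/opt/k8s/bin:$PATH' > "$PROFILE_FILE"

# Source it and confirm the directory is now on PATH
. "$PROFILE_FILE"
case ":$PATH:" in
  *:/opt/k8s/bin:*) echo "PATH contains /opt/k8s/bin" ;;
  *)                echo "PATH is missing /opt/k8s/bin" ;;
esac
```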

01-06. Install dependencies

Install the dependency packages on every machine:

CentOS:

$ sudo yum install -y epel-release

$ sudo yum install -y conntrack ipvsadm ipset jq sysstat curl iptables libseccomp

Ubuntu:

$ sudo apt-get install -y conntrack ipvsadm ipset jq sysstat curl iptables libseccomp

Note: ipvs depends on ipset;

01-07. Disable the firewall

Disable the firewall on every machine:

① Stop the service and disable it at boot

$ sudo systemctl stop firewalld

$ sudo systemctl disable firewalld

② Flush the firewall rules

$ sudo iptables -F && sudo iptables -X && sudo iptables -F -t nat && sudo iptables -X -t nat

$ sudo iptables -P FORWARD ACCEPT

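01-08. Disable the swap partition

1. If swap is enabled, kubelet fails to start (this can be ignored by setting the --fail-swap-on flag to false), so swap has to be turned off on every machine:

$ sudo swapoff -a

2. To keep the swap partition from being mounted again at boot, comment out the corresponding entry in /etc/fstab: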

$ sudo sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab
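Since a bad edit to /etc/fstab can keep a machine from booting, it may be worth trying the sed expression on a sample file first. The sketch below does exactly that; the two fstab entries are made up for illustration.

```shell
# Sketch: try the sed expression on a sample fstab before touching /etc/fstab.
# The entries below are invented sample data, not taken from a real machine.
FSTAB_SAMPLE="$(mktemp)"
cat > "$FSTAB_SAMPLE" <<'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
EOF

# Same expression as above: prefix every line containing " swap " with '#'
sed -i '/ swap / s/^\(.*\)$/#\1/g' "$FSTAB_SAMPLE"

cat "$FSTAB_SAMPLE"
```

Note that the expression is not idempotent: running it a second time prepends another `#` to the already commented line, which is harmless but untidy.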

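01-09. Disable SELinux

1. Disable SELinux, otherwise Kubernetes may later fail with Permission denied when mounting directories:

$ sudo setenforce 0

2. Edit the configuration file so the change survives a reboot, then check it: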

$ grep SELINUX /etc/selinux/config

SELINUX=disabled
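The grep above only checks the result; the edit itself is not shown. One way to make the change is a one-line sed, sketched here against a scratch copy. `SELINUX_CONFIG` is a variable name I introduced for the example; the real file is /etc/selinux/config and needs root.

```shell
# Sketch: set SELINUX=disabled in a config file.
# SELINUX_CONFIG is a hypothetical override; the real file is /etc/selinux/config.
SELINUX_CONFIG="${SELINUX_CONFIG:-$(mktemp)}"

# Sample content, written only when the scratch file is empty
[ -s "$SELINUX_CONFIG" ] || printf 'SELINUX=enforcing\nSELINUXTYPE=targeted\n' > "$SELINUX_CONFIG"

# Rewrite only the SELINUX= line; SELINUXTYPE= does not match and is left alone
sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$SELINUX_CONFIG"

grep '^SELINUX' "$SELINUX_CONFIG"
```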

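01-10. Disable dnsmasq (optional)

When dnsmasq is enabled on a Linux system (for example in a GUI environment), the system DNS server is set to 127.0.0.1, which prevents docker containers from resolving domain names, so it needs to be turned off: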

$ sudo service dnsmasq stop

$ sudo systemctl disable dnsmasq

01-11. Load kernel modules

$ sudo modprobe br_netfilter

$ sudo modprobe ip_vs
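modprobe prints nothing on success, so a quick check afterwards can be reassuring. The sketch below reads a /proc/modules-style file; `MODULES_FILE` and `module_loaded` are names of my own, and the override exists only so the function can be tried on sample data.

```shell
# Sketch: check whether kernel modules are loaded.
# MODULES_FILE is a hypothetical override; by default the real /proc/modules is read.
MODULES_FILE="${MODULES_FILE:-/proc/modules}"

module_loaded() {
  # Each line of /proc/modules starts with the module name followed by a space
  grep -q "^$1 " "$MODULES_FILE" 2>/dev/null
}

for mod in br_netfilter ip_vs; do
  if module_loaded "$mod"; then
    echo "$mod: loaded"
  else
    echo "$mod: not loaded"
  fi
done
```

Two caveats: modules compiled into the kernel do not appear in /proc/modules, and modules loaded with modprobe are gone after a reboot unless they are also listed somewhere persistent (on systemd-based systems, a file under /etc/modules-load.d/ is the usual place).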

01-12. Set kernel parameters

$ cat > kubernetes.conf <<EOF
[file contents missing in the source]
EOF

$ sudo cp kubernetes.conf /etc/sysctl.d/kubernetes.conf

$ sudo sysctl -p /etc/sysctl.d/kubernetes.conf

$ sudo mount -t cgroup -o cpu,cpuacct none /sys/fs/cgroup/cpu,cpuacct

01-13. Set the system time zone

1. Adjust the system time zone

$ sudo timedatectl set-timezone Asia/Shanghai

2. Write the current UTC time to the hardware clock

$ sudo timedatectl set-local-rtc 0

3. Restart the services that depend on the system time

$ sudo systemctl restart rsyslog

$ sudo systemctl restart crond

01-14. Update the system time

$ sudo yum -y install ntpdate

$ sudo ntpdate cn.pool.ntp.org

01-15. Create directories

Create the directories on every machine:

$ sudo mkdir -p /opt/k8s/bin

$ sudo mkdir -p /opt/k8s/cert

$ sudo mkdir -p /opt/etcd/cert

$ sudo mkdir -p /opt/lib/etcd

$ sudo mkdir -p /opt/k8s/script

$ sudo chown -R k8s /opt/*

01-16. Check whether the kernel and its modules are suitable for running docker (Linux only)

$ curl https://raw.githubusercontent.com/docker/docker/master/contrib/check-config.sh > check-config.sh

$ chmod +x check-config.sh

$ bash ./check-config.sh

This document uses cfssl, CloudFlare's PKI toolkit, to create all certificates.

02-01. Install the cfssl toolkit

mkdir -p /opt/k8s/cert && sudo chown -R k8s /opt/k8s && cd /opt/k8s

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

mv cfssl_linux-amd64 /opt/k8s/bin/cfssl

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

mv cfssljson_linux-amd64 /opt/k8s/bin/cfssljson

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

mv cfssl-certinfo_linux-amd64 /opt/k8s/bin/cfssl-certinfo

chmod +x /opt/k8s/bin/*

02-02. Create the root certificate (CA)

The CA certificate is shared by all nodes in the cluster. Only one CA certificate is needed; every certificate created later is signed by it.

02-02-01 Create the configuration file

The CA configuration file defines the usage scenarios (profiles) of the root certificate and their parameters (usages, expiry, server authentication, client authentication, encryption and so on). A specific profile has to be named later when signing other certificates.

[root@kube-master ~]# cd /opt/k8s/cert

[root@kube-master cert]# vim ca-config.json

Notes:

① signing: the certificate can be used to sign other certificates; the generated ca.pem has CA=TRUE;

② server auth: a client can use this certificate to verify the certificate presented by a server;

③ client auth: a server can use this certificate to verify the certificate presented by a client;

02-02-02 Create the certificate signing request file

[root@kube-master cert]# vim ca-csr.json

Notes:

① CN: Common Name. kube-apiserver extracts this field from the certificate as the user name (User Name) of the request; browsers use this field to check whether a website is legitimate;

② O: Organization. kube-apiserver extracts this field from the certificate as the group (Group) the requesting user belongs to;

③ kube-apiserver uses the extracted User and Group as the identity for RBAC authorization;

02-02-03 Generate the CA certificate and private key

[root@kube-master cert]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

[root@kube-master cert]# ls
ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem

02-02-04 Distribute the certificate files

Copy the generated CA certificate, key file and configuration file to the /opt/k8s/cert directory on all nodes:

[root@kube-master ~]# vim /opt/k8s/script/scp_k8scert.sh

[root@kube-master ~]# chmod +x /opt/k8s/script/scp_k8scert.sh && /opt/k8s/script/scp_k8scert.sh

kubectl is the command-line management tool for a Kubernetes cluster; this section describes how to install and configure it.

By default kubectl reads the kube-apiserver address, certificates, user name and other information from ~/.kube/config. If that file is not configured, kubectl commands may fail:

$ kubectl get pods
The connection to the server localhost:8080 was refused - did you specify the right host or port?

The steps in this section only need to be performed once; the generated kubeconfig file does not depend on the machine.

03-01. Download the kubectl binary

Download and extract it. Downloading the kubectl binary may require a proxy from inside mainland China.

[root@kube-master ~]# wget https://dl.k8s.io/v1.10.4/kubernetes-client-linux-amd64.tar.gz

[root@kube-master ~]# tar -xzvf kubernetes-client-linux-amd64.tar.gz

03-02. Create the admin certificate and private key

03-02-01 Create the certificate signing request

[root@kube-master ~]# cd /opt/k8s/cert/

[root@kube-master cert]# cat > admin-csr.json <<EOF
[file contents missing in the source]
EOF

Notes:

① O is system:masters; when kube-apiserver receives this certificate it sets the Group of the request to system:masters;

② The predefined ClusterRoleBinding cluster-admin binds the Group system:masters to the Role cluster-admin, which grants permission on all APIs;

③ The certificate is only used by kubectl as a client certificate, so the hosts field is empty;

03-02-02 Generate the certificate and private key

[root@kube-master cert]# cfssl gencert -ca=/opt/k8s/cert/ca.pem \
-ca-key=/opt/k8s/cert/ca-key.pem \
-config=/opt/k8s/cert/ca-config.json \
-profile=kubernetes admin-csr.json | cfssljson -bare admin

[root@kube-master cert]# ls admin*
admin.csr admin-csr.json admin-key.pem admin.pem

03-03. Create and distribute the kubeconfig file

03-03-01 Create the kubeconfig file

The kubeconfig is kubectl's configuration file. It contains everything needed to access the apiserver, such as the apiserver address, the CA certificate and the client's own certificate;

① Set the cluster parameters (--server=${KUBE_APISERVER} specifies the IP and port. I use the haproxy VIP and port; without a haproxy proxy, use the IP and port of the actual service, for example https://192.168.10.108:6443)

[root@kube-master ~]# kubectl config set-cluster kubernetes \
--certificate-authority=/opt/k8s/cert/ca.pem \
--embed-certs=true \
--server=https://192.168.10.10:8443 \
--kubeconfig=/root/.kube/kubectl.kubeconfig

② Set the client authentication parameters

[root@kube-master ~]# kubectl config set-credentials kube-admin \
--client-certificate=/opt/k8s/cert/admin.pem \
--client-key=/opt/k8s/cert/admin-key.pem \
--embed-certs=true \
--kubeconfig=/root/.kube/kubectl.kubeconfig

③ Set the context parameters

[root@kube-master ~]# kubectl config set-context kube-admin@kubernetes \
--cluster=kubernetes \
--user=kube-admin \
--kubeconfig=/root/.kube/kubectl.kubeconfig

④ Set the default context

[root@kube-master ~]# kubectl config use-context kube-admin@kubernetes --kubeconfig=/root/.kube/kubectl.kubeconfig

Note: these settings are explained in detail in a later article on Kubernetes authentication.

[root@kube-master ~]# chmod +x /opt/k8s/script/kubectl_environment.sh && /opt/k8s/script/kubectl_environment.sh

03-03-02 Verify the kubeconfig file

[root@kube-master ~]# ls /root/.kube/kubectl.kubeconfig
/root/.kube/kubectl.kubeconfig

[root@kube-master ~]# kubectl config view --kubeconfig=/root/.kube/kubectl.kubeconfig

03-03-03 Distribute kubectl and the kubeconfig file to every node that will run kubectl commands

[root@kube-master ~]# vim /opt/k8s/script/scp_kubectl.sh
NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

[root@kube-master ~]# chmod +x /opt/k8s/script/scp_kubectl.sh && /opt/k8s/script/scp_kubectl.sh

etcd is a Raft-based distributed key-value store developed by CoreOS, commonly used for service discovery, shared configuration and concurrency control (such as leader election and distributed locks). Kubernetes stores all of its runtime data in etcd.

This section describes the steps for deploying a three-node highly available etcd cluster:

① Download and distribute the etcd binaries;

② Create x509 certificates for the etcd cluster nodes, used to encrypt traffic between clients (such as etcdctl) and the etcd cluster, and between the etcd members themselves;

③ Create the etcd systemd unit file and configure the service parameters;

④ Check that the cluster is working;

04-01. Download the etcd binaries

Download the release package from the https://github.com/coreos/etcd/releases page (v3.3.7 is used here):

[root@kube-master ~]# wget https://github.com/coreos/etcd/releases/download/v3.3.7/etcd-v3.3.7-linux-amd64.tar.gz

[root@kube-master ~]# tar -xvf etcd-v3.3.7-linux-amd64.tar.gz

04-02. Create the etcd certificate and private key

04-02-01 Create the certificate signing request

[root@kube-master ~]# cd /opt/etcd/cert

[root@kube-master cert]# cat > etcd-csr.json <<EOF
[file contents missing in the source]
EOF

Note: the hosts field lists the etcd node IPs or domain names that are authorized to use this certificate; here the IPs of all three etcd nodes are listed;

04-02-02 Generate the certificate and private key

[root@kube-master cert]# cfssl gencert -ca=/opt/k8s/cert/ca.pem \
-ca-key=/opt/k8s/cert/ca-key.pem \
-config=/opt/k8s/cert/ca-config.json \
-profile=kubernetes etcd-csr.json | cfssljson -bare etcd

[root@kube-master cert]# ls etcd*
etcd.csr etcd-csr.json etcd-key.pem etcd.pem

04-02-03 Distribute the generated certificate and private key to each etcd node

[root@kube-master ~]# vim /opt/k8s/script/scp_etcd.sh
NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

04-03. Create the etcd systemd unit template and the etcd configuration file

04-03-01 Create the etcd systemd unit template

[root@kube-master ~]# cat > /opt/etcd/etcd.service.template <<EOF
[file contents missing in the source]
EOF

04-04. Create and distribute the etcd systemd unit file for each node

[root@kube-master ~]# cd /opt/k8s/script

[root@kube-master script]# vim etcd_service.sh

[root@kube-master script]# chmod +x /opt/k8s/script/etcd_service.sh && /opt/k8s/script/etcd_service.sh

[root@kube-master script]# ls /opt/etcd/*.service
/opt/etcd/etcd-192.168.10.108.service /opt/etcd/etcd-192.168.10.110.service /opt/etcd/etcd-192.168.10.109.service

[root@kube-master script]# ls /etc/systemd/system/etcd.service
/etc/systemd/system/etcd.service

04-05. Start the etcd service

[root@kube-master script]# vim /opt/k8s/script/etcd.sh

[root@kube-master script]# chmod +x etcd.sh && ./etcd.sh
>>> 192.168.10.108
Created symlink from /etc/systemd/system/multi-user.target.wants/etcd.service to /etc/systemd/system/etcd.service.
>>> 192.168.10.109
Created symlink from /etc/systemd/system/multi-user.target.wants/etcd.service to /etc/systemd/system/etcd.service.
>>> 192.168.10.110
Created symlink from /etc/systemd/system/multi-user.target.wants/etcd.service to /etc/systemd/system/etcd.service.
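The body of etcd.sh is not reproduced in this post. Purely as an illustration of the pattern (loop over the node IPs, print a `>>>` header, run systemctl over ssh), here is a hypothetical sketch. The function names, the `DRY_RUN` switch and the exact systemctl sequence are my assumptions, not the original script.

```shell
# Hypothetical sketch of a start loop; this is NOT the original etcd.sh.
# DRY_RUN=1 (the default here) prints the ssh commands instead of running them.
DRY_RUN="${DRY_RUN:-1}"
NODE_IPS="192.168.10.108 192.168.10.109 192.168.10.110"

run_on() {
  # $1 = node IP, remaining arguments = command to run on that node
  node="$1"; shift
  if [ "$DRY_RUN" = "1" ]; then
    echo "ssh root@${node} $*"
  else
    ssh "root@${node}" "$@"
  fi
}

start_etcd_everywhere() {
  for ip in $NODE_IPS; do
    echo ">>> ${ip}"
    run_on "$ip" "systemctl daemon-reload && systemctl enable etcd && systemctl start etcd"
  done
}

start_etcd_everywhere
```

One thing to watch if you run something like this for real: depending on how the unit is written, the first `systemctl start etcd` may wait for the other members to join, so starting the nodes strictly one after another can appear to hang on the first node.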
Make sure the status is active (running); otherwise check the logs to find the cause:

$ journalctl -u etcd

>>> 192.168.10.108
Active: active (running) since Mon 2018-11-26 17:41:00 CST; 12min ago
>>> 192.168.10.109
Active: active (running) since Mon 2018-11-26 17:41:00 CST; 12min ago
>>> 192.168.10.110
Active: active (running) since Mon 2018-11-26 17:41:01 CST; 12min ago

The cluster is working normally when every node reports healthy:

>>> 192.168.10.108
https://192.168.10.108:2379 is healthy: successfully committed proposal: took = 1.373318ms
>>> 192.168.10.109
https://192.168.10.109:2379 is healthy: successfully committed proposal: took = 2.371807ms
>>> 192.168.10.110
https://192.168.10.110:2379 is healthy: successfully committed proposal: took = 1.764309ms

05-01. Download the flanneld binary

Download the release package from the https://github.com/coreos/flannel/releases page (v0.10.0 is used here):

[root@kube-master ~]# wget https://github.com/coreos/flannel/releases/download/v0.10.0/flannel-v0.10.0-linux-amd64.tar.gz

[root@kube-master ~]# mkdir -p flannel

[root@kube-master ~]# tar -xzvf flannel-v0.10.0-linux-amd64.tar.gz -C flannel

05-02. Create the flannel certificate and private key

flannel reads and writes subnet allocation data in the etcd cluster, and the etcd cluster has mutual x509 certificate authentication enabled, so a certificate and private key have to be generated for flanneld.

05-02-01 Create the certificate signing request:

[root@kube-master ~]# cd /opt/flannel/cert

[root@kube-master cert]# cat > flanneld-csr.json <<EOF
[file contents missing in the source]
EOF

The certificate is only used by flanneld as a client certificate, so the hosts field is empty;

05-02-02 Generate the certificate and private key

[root@kube-master cert]# cfssl gencert -ca=/opt/k8s/cert/ca.pem \
-ca-key=/opt/k8s/cert/ca-key.pem \
-config=/opt/k8s/cert/ca-config.json \
-profile=kubernetes flanneld-csr.json | cfssljson -bare flanneld

[root@kube-master cert]# ls flanneld*
flanneld.csr flanneld-csr.json flanneld-key.pem flanneld.pem

05-02-03 Distribute the flanneld binary and the generated certificate and private key to all nodes

cat > /opt/k8s/script/scp_flannel.sh <<EOF
[file contents missing in the source]
EOF

05-03. Write the cluster Pod network configuration into etcd

Note: this step only needs to be run once.

[root@kube-master ~]# etcdctl \
--endpoints="https://192.168.10.108:2379,https://192.168.10.109:2379,https://192.168.10.110:2379" \
--ca-file=/opt/k8s/cert/ca.pem \
--cert-file=/opt/flannel/cert/flanneld.pem \
--key-file=/opt/flannel/cert/flanneld-key.pem \
set /atomic.io/network/config '{"Network":"10.30.0.0/16","SubnetLen": 24, "Backend": {"Type": "vxlan"}}'
{"Network":"10.30.0.0/16","SubnetLen": 24, "Backend": {"Type": "vxlan"}}

05-04. Create the flanneld systemd unit file

[root@kube-master ~]# cat > /opt/flannel/flanneld.service << EOF
[file contents missing in the source]
EOF

05-05. Distribute the flanneld systemd unit file to all nodes, then start and check the flanneld service

[root@kube-master ~]# vim /opt/k8s/script/flanneld_service.sh

[root@kube-master ~]# chmod +x /opt/k8s/script/flanneld_service.sh && /opt/k8s/script/flanneld_service.sh

Note: make sure the status is active (running); otherwise check the logs to find the cause:

$ journalctl -u flanneld

05-06. Check the Pod subnets assigned to each flanneld

05-06-01 View the cluster Pod network (/16)

[root@kube-master ~]# etcdctl \
--endpoints="https://192.168.10.108:2379,https://192.168.10.109:2379,https://192.168.10.110:2379" \
--ca-file=/opt/k8s/cert/ca.pem \
--cert-file=/opt/flannel/cert/flanneld.pem \
--key-file=/opt/flannel/cert/flanneld-key.pem \
get /atomic.io/network/config

Output:

{"Network":"10.30.0.0/16","SubnetLen": 24, "Backend": {"Type": "vxlan"}}

05-06-02 List the Pod subnets that have been allocated (/24)

[root@kube-master ~]# etcdctl \
--endpoints="https://192.168.10.108:2379,https://192.168.10.109:2379,https://192.168.10.110:2379" \
--ca-file=/opt/k8s/cert/ca.pem \
--cert-file=/opt/flannel/cert/flanneld.pem \
--key-file=/opt/flannel/cert/flanneld-key.pem \
ls /atomic.io/network/subnets

Output:

/atomic.io/network/subnets/10.30.22.0-24
/atomic.io/network/subnets/10.30.33.0-24
/atomic.io/network/subnets/10.30.44.0-24

05-06-03 View the node IP and flannel interface address for a given Pod subnet

[root@kube-master ~]# etcdctl \
--endpoints="https://192.168.10.108:2379,https://192.168.10.109:2379,https://192.168.10.110:2379" \
--ca-file=/opt/k8s/cert/ca.pem \
--cert-file=/opt/flannel/cert/flanneld.pem \
--key-file=/opt/flannel/cert/flanneld-key.pem \
get /atomic.io/network/subnets/10.30.22.0-24

Output:

{"PublicIP":"192.168.10.108","BackendType":"vxlan","BackendData":{"VtepMAC":"fe:20:82:76:fc:25"}}

05-06-04 Verify that the nodes can reach each other over the Pod network

[root@kube-master ~]# vim /opt/k8s/script/ping_flanneld.sh

[root@kube-master ~]# chmod +x /opt/k8s/script/ping_flanneld.sh && /opt/k8s/script/ping_flanneld.sh

① The Kubernetes master nodes run the following components:

② kube-scheduler and kube-controller-manager can run in cluster mode: leader election picks one working process and the other processes stay blocked.

③ kube-apiserver can run as multiple instances (three in this document), but the other components need a single access address for it, and that address has to be highly available. This document uses keepalived and haproxy to provide a highly available, load-balanced VIP for kube-apiserver.

④ Because keepalived provides high availability for the masters, any of the three servers may be promoted to master (when the primary master goes down, a backup takes over). All master operations therefore have to be carried out on all three servers.

1. Download the binaries

Download the server tarball from the CHANGELOG page. These two packages may also require a proxy to download.

[root@kube-master ~]# wget https://dl.k8s.io/v1.10.4/kubernetes-server-linux-amd64.tar.gz

[root@kube-master ~]# tar -xzvf kubernetes-server-linux-amd64.tar.gz

[root@kube-master ~]# cd kubernetes/

[root@kube-master kubernetes]# tar -xzvf kubernetes-src.tar.gz

2. Copy the binaries to all master nodes

[root@kube-master ~]# vim /opt/k8s/script/scp_master.sh

[root@kube-master ~]# chmod +x /opt/k8s/script/scp_master.sh && /opt/k8s/script/scp_master.sh

① This section explains how to make kube-apiserver highly available with keepalived and haproxy.

② The nodes running keepalived and haproxy are called LB nodes. Because keepalived runs in a one-master, multiple-backup mode, at least two LB nodes are required.

③ This document reuses the three master machines as LB nodes, so the port haproxy listens on (8443) must differ from the kube-apiserver port 6443 to avoid a conflict.

④ While running, keepalived periodically checks the state of the local haproxy process. If it detects that haproxy is unhealthy, it triggers a new master election and the VIP floats to the newly elected master, which keeps the VIP highly available.

⑤ All components (kubectl, apiserver, controller-manager, scheduler and so on) access the kube-apiserver service through the VIP and port 8443, where haproxy listens.

06-01-01 Install the packages and write the haproxy configuration file

[root@kube-master ~]# yum install -y keepalived haproxy

[root@kube-master ~]# vim /etc/haproxy/haproxy.cfg

[root@kube-master ~]# cat /etc/haproxy/haproxy.cfg

06-01-02 Install haproxy on the other servers and push the configuration file to them; start and check the haproxy service

[root@kube-master ~]# vim /opt/k8s/script/haproxy.sh

[root@kube-master ~]# chmod +x /opt/k8s/script/haproxy.sh && /opt/k8s/script/haproxy.sh

Make sure the output looks similar to:

tcp 0 0 0.0.0.0:8443 0.0.0.0:* LISTEN 5351/haproxy
tcp 0 0 0.0.0.0:10080 0.0.0.0:* LISTEN 5351/haproxy

06-01-03 Configure and start the keepalived service

keepalived runs in a one-master, multiple-backup mode, so there are two kinds of configuration file. There is a single master configuration file, and the number of backup configuration files depends on the number of nodes. The plan for this document is:

(1) The master service on 192.168.10.108; configuration file:

[root@kube-master ~]# vim /etc/keepalived/keepalived.conf

(2) The backup service on the other two machines; configuration file:

[root@kube-node1 ~]# vim /etc/keepalived/keepalived.conf

(3) Start the keepalived service

[root@kube-master ~]# vim /opt/k8s/script/keepalived.sh

[root@kube-master ~]# chmod +x /opt/k8s/script/keepalived.sh && /opt/k8s/script/keepalived.sh

(4) On the master server, the VIP 192.168.10.10 now shows up on the eth1 interface

[root@kube-master ~]# ip a show eth1
3: eth1:
link/ether 00:50:56:22:1b:39 brd ff:ff:ff:ff:ff:ff
inet 192.168.10.108/24 brd 192.168.10.255 scope global eth1
valid_lft forever preferred_lft forever
inet 192.168.10.10/32 scope global eth1
valid_lft forever preferred_lft forever

06-01-04 View the haproxy status page

Open 192.168.10.10:10080/status in a browser

① Enter the user name and password that you defined in the configuration file

② View the haproxy status page

This section explains how to deploy a three-node highly available master cluster with keepalived and haproxy; the corresponding LB VIP is the environment variable ${MASTER_VIP}.

Prerequisites: download the binaries, and install and configure flanneld

06-02-01 Create the kubernetes certificate and private key

(1) Create the certificate signing request:

[root@kube-master ~]# cd /opt/k8s/cert/

[root@kube-master cert]# cat > kubernetes-csr.json <<EOF
[file contents missing in the source]
EOF

Note:

[root@kube-master ~]# kubectl get svc kubernetes
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
kubernetes ClusterIP 10.96.0.1

(2) Generate the certificate and private key

[root@kube-master cert]# cfssl gencert -ca=/opt/k8s/cert/ca.pem \
-ca-key=/opt/k8s/cert/ca-key.pem \
-config=/opt/k8s/cert/ca-config.json \
-profile=kubernetes kubernetes-csr.json | cfssljson -bare kubernetes

[root@kube-master cert]# ls kubernetes*
kubernetes.csr kubernetes-csr.json kubernetes-key.pem kubernetes.pem

06-02-02 Create the encryption configuration file

① Generate a key used to encrypt data stored in etcd:

[root@kube-master ~]# head -c 32 /dev/urandom | base64
uS+YQXYoi1nxvI1pfSc2wRt64h/Iu5/4GxCuSvN+/jI=

Note: every master node must use the same key

② Use this key to create the encryption configuration file

[root@kube-master cert]# vim encryption-config.yaml

06-02-03 Copy the generated certificate, private key and encryption configuration file to the /opt/k8s directory on the master nodes

[root@kube-master cert]# vim /opt/k8s/script/scp_apiserver.sh

[root@kube-master cert]# chmod +x /opt/k8s/script/scp_apiserver.sh && /opt/k8s/script/scp_apiserver.sh

06-02-04 Create the kube-apiserver systemd unit template file

cat > /opt/apiserver/kube-apiserver.service.template <<EOF
[file contents missing in the source]
EOF

06-02-05 Create and distribute the kube-apiserver systemd unit file for each node; start and check the kube-apiserver service
[root@kube-master ~]# vim /opt/k8s/script/apiserver_service.sh

[root@kube-master ~]# chmod +x /opt/k8s/script/apiserver_service.sh && /opt/k8s/script/apiserver_service.sh

Make sure the status is active (running); otherwise check the logs on the master node to find the cause:

journalctl -u kube-apiserver

06-02-06 Print the data kube-apiserver has written to etcd

[root@kube-master ~]# ETCDCTL_API=3 etcdctl \
--endpoints="https://192.168.10.108:2379,https://192.168.10.109:2379,https://192.168.10.110:2379" \
--cacert=/opt/k8s/cert/ca.pem \
--cert=/opt/etcd/cert/etcd.pem \
--key=/opt/etcd/cert/etcd-key.pem \
get /registry/ --prefix --keys-only

06-02-07 Check the cluster information

[root@kube-master ~]# kubectl cluster-info
Kubernetes master is running at https://192.168.10.108:6443
To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

[root@kube-master ~]# kubectl get all --all-namespaces
NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
default service/kubernetes ClusterIP 10.96.0.1

[root@kube-master ~]# kubectl get componentstatuses
NAME STATUS MESSAGE ERROR
scheduler Unhealthy Get http://127.0.0.1:10251/healthz: dial tcp 127.0.0.1:10251: getsockopt: connection refused
controller-manager Unhealthy Get http://127.0.0.1:10252/healthz: dial tcp 127.0.0.1:10252: getsockopt: connection refused
etcd-1 Healthy {"health":"true"}
etcd-2 Healthy {"health":"true"}
etcd-0 Healthy {"health":"true"}

Notes:

① If a kubectl command prints the following error, the ~/.kube/config file in use is wrong; switch to the correct account and run the command again:

The connection to the server localhost:8080 was refused - did you specify the right host or port?
② When kubectl get componentstatuses runs, the apiserver sends its requests to 127.0.0.1 by default. When controller-manager and scheduler run in cluster mode they may not be on the same machine as kube-apiserver; in that case controller-manager or scheduler shows as Unhealthy even though it is actually working normally.

06-02-08 Check the port kube-apiserver listens on

[root@kube-master ~]# ss -nutlp |grep apiserver
tcp LISTEN 0 128 192.168.10.108:6443 *:* users:(("kubeapiserver",pid=929,fd=5))

06-02-09 Grant the kubernetes certificate permission to access the kubelet API

When commands such as kubectl exec, run and logs are executed, the apiserver forwards them to kubelet. The RBAC rule defined here authorizes the apiserver to call the kubelet API.

[root@kube-master ~]# kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes
clusterrolebinding.rbac.authorization.k8s.io "kube-apiserver:kubelet-apis" created

This section describes the steps for deploying a highly available kube-controller-manager cluster.

The cluster has three nodes. After startup, a leader is chosen through competitive election and the other nodes stay blocked. When the leader becomes unavailable, the remaining nodes hold another election to produce a new leader, which keeps the service available.

To secure communication, this document first generates an x509 certificate and private key. kube-controller-manager uses the certificate in two cases:

① when communicating with the secure port of kube-apiserver;

② when serving Prometheus-format metrics on the secure port (https, 10252);

Prerequisites: download the binaries, and install and configure flanneld

06-03-01 Create the kube-controller-manager certificate and private key

Create the certificate signing request:

[root@kube-master ~]# cd /opt/k8s/cert/

[root@kube-master cert]# cat > kube-controller-manager-csr.json <<EOF
[file contents missing in the source]
EOF

06-03-02 Generate the certificate and private key

[root@kube-master cert]# cfssl gencert -ca=/opt/k8s/cert/ca.pem \
-ca-key=/opt/k8s/cert/ca-key.pem \
-config=/opt/k8s/cert/ca-config.json \
-profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

[root@kube-master cert]# ls *controller-manager*
kube-controller-manager.csr kube-controller-manager-key.pem kube-controller-manager-csr.json kube-controller-manager.pem

06-03-03 Create the kubeconfig file

The kubeconfig file contains everything needed to access the apiserver, such as the apiserver address, the CA certificate and the component's own certificate;

① Run the following commands to generate the kube-controller-manager.kubeconfig file

[root@kube-master ~]# kubectl config set-cluster kubernetes \
--certificate-authority=/opt/k8s/cert/ca.pem \
--embed-certs=true \
--server=https://192.168.10.10:8443 \
--kubeconfig=/root/.kube/kube-controller-manager.kubeconfig

[root@kube-master ~]# kubectl config set-credentials system:kube-controller-manager \
--client-certificate=/opt/k8s/cert/kube-controller-manager.pem \
--client-key=/opt/k8s/cert/kube-controller-manager-key.pem \
--embed-certs=true \
--kubeconfig=/root/.kube/kube-controller-manager.kubeconfig

[root@kube-master ~]# kubectl config set-context system:kube-controller-manager@kubernetes \
--cluster=kubernetes \
--user=system:kube-controller-manager \
--kubeconfig=/root/.kube/kube-controller-manager.kubeconfig

[root@kube-master ~]# kubectl config use-context system:kube-controller-manager@kubernetes --kubeconfig=/root/.kube/kube-controller-manager.kubeconfig

② Verify the kube-controller-manager.kubeconfig file

[root@kube-master cert]# ls /root/.kube/kube-controller-manager.kubeconfig
/root/.kube/kube-controller-manager.kubeconfig

[root@kube-master ~]# kubectl config view --kubeconfig=/root/.kube/kube-controller-manager.kubeconfig

06-03-04 Distribute the generated certificate, private key and kubeconfig to all master nodes

[root@kube-master ~]# vim /opt/k8s/script/scp_controller_manager.sh

[root@kube-master ~]# chmod +x /opt/k8s/script/scp_controller_manager.sh && /opt/k8s/script/scp_controller_manager.sh

06-03-05 Create and distribute the kube-controller-manager systemd unit file

[root@kube-master ~]# mkdir /opt/controller_manager

[root@kube-master ~]# cd /opt/controller_manager

[root@kube-master controller_manager]# cat > kube-controller-manager.service <<EOF
[file contents missing in the source]
EOF

Note: kube-controller-manager does not validate the client certificate of requests for https metrics, so the --tls-ca-file flag does not need to be specified; that flag has also been deprecated.

06-03-06 Permissions of kube-controller-manager

The ClusterRole system:kube-controller-manager has very few permissions: it can only create resource objects such as secrets and serviceaccounts. The permissions of the individual controllers are spread across the ClusterRoles system:controller:XXX.

The --use-service-account-credentials=true flag has to be added to the kube-controller-manager startup parameters so that the main controller creates a ServiceAccount XXX-controller for each controller.

The built-in ClusterRoleBinding system:controller:XXX grants each XXX-controller ServiceAccount the permissions of the corresponding ClusterRole system:controller:XXX.
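To see this split of permissions on a running cluster, the two kinds of ClusterRole can be compared with `kubectl describe`. The sketch below wraps the calls in a function; the `KUBECTL` override and the function name are my own, and `deployment-controller` is just one example of the per-controller roles.

```shell
# Sketch: inspect the ClusterRoles described above.
# KUBECTL is a hypothetical override (for example KUBECTL=echo for a dry run).
KUBECTL="${KUBECTL:-kubectl}"

show_controller_rbac() {
  # The role bound to the kube-controller-manager user itself
  "$KUBECTL" describe clusterrole system:kube-controller-manager
  # One of the per-controller roles; the other controllers follow the same naming
  "$KUBECTL" describe clusterrole system:controller:deployment-controller
}
```

Run `show_controller_rbac` on a node where kubectl already has a working kubeconfig; the second role should list noticeably more resources and verbs than the first.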
06-03-07 Distribute the systemd unit file to all master nodes; start and check the kube-controller-manager service

[root@kube-master ~]# vim /opt/k8s/script/controller_manager.sh

[root@kube-master ~]# chmod +x /opt/k8s/script/controller_manager.sh && /opt/k8s/script/controller_manager.sh

06-03-08 View the exported metrics

Note: run the following commands on a kube-controller-manager node.

[root@kube-master ~]# ss -nutlp |grep kube-controll
tcp LISTEN 0 128 127.0.0.1:10252 *:* users:(("kube-controller",pid=6532,fd=5))

[root@kube-master ~]# curl -s --cacert /opt/k8s/cert/ca.pem https://127.0.0.1:10252/metrics |head
# HELP ClusterRoleAggregator_adds Total number of adds handled by workqueue: ClusterRoleAggregator
# TYPE ClusterRoleAggregator_adds counter
ClusterRoleAggregator_adds 6
# HELP ClusterRoleAggregator_depth Current depth of workqueue: ClusterRoleAggregator
# TYPE ClusterRoleAggregator_depth gauge
ClusterRoleAggregator_depth 0
# HELP ClusterRoleAggregator_queue_latency How long an item stays in workqueueClusterRoleAggregator before being requested.
# TYPE ClusterRoleAggregator_queue_latency summary
ClusterRoleAggregator_queue_latency{quantile="0.5"} 431
ClusterRoleAggregator_queue_latency{quantile="0.9"} 85089

Note: the CA certificate passed to curl with --cacert is used to verify the kube-controller-manager https server certificate;

06-03-09 Test the high availability of the kube-controller-manager cluster

1. Stop the kube-controller-manager service on one or two nodes and watch the logs on the other nodes to see whether one of them acquires the leader role.

2. View the current leader

[root@kube-master ~]# kubectl get endpoints kube-controller-manager --namespace=kube-system -o yaml
apiVersion: v1
kind: Endpoints
metadata:
  annotations:
    control-plane.alpha.kubernetes.io/leader: '{"holderIdentity":"kube-master_53bc08b7-f69d-11e8-9e79-0050563ab62b","leaseDurationSeconds":15,"acquireTime":"2018-12-03T01:48:18Z","renewTime":"2018-12-03T01:59:15Z","leaderTransitions":5}'
  creationTimestamp: 2018-11-29T03:12:14Z
  name: kube-controller-manager
  namespace: kube-system
  resourceVersion: "56075"
  selfLink: /api/v1/namespaces/kube-system/endpoints/kube-controller-manager
  uid: 91e64a51-f384-11e8-a392-0050563ab62b

As shown, the current leader is the kube-node1 node. (It was originally on the kube-master node.)

This section describes the steps for deploying a highly available kube-scheduler cluster.

The cluster has three nodes. After startup, a leader is chosen through competitive election and the other nodes stay blocked. When the leader becomes unavailable, the remaining nodes hold another election to produce a new leader, which keeps the service available.

To secure communication, this document first generates an x509 certificate and private key. kube-scheduler uses the certificate in two cases:

① when communicating with the secure port of kube-apiserver;

② when serving Prometheus-format metrics on port 10251 (note that 06-04-07 below shows this port answering plain http requests);

Prerequisites: download the binaries, and install and configure flanneld

06-04-01 Create the kube-scheduler certificate and private key

Create the certificate signing request:

[root@kube-master ~]# cd /opt/k8s/cert/

[root@kube-master cert]# cat > kube-scheduler-csr.json <<EOF
[file contents missing in the source]
EOF

06-04-02 Generate the certificate and private key

[root@kube-master cert]# cfssl gencert -ca=/opt/k8s/cert/ca.pem \
-ca-key=/opt/k8s/cert/ca-key.pem \
-config=/opt/k8s/cert/ca-config.json \
-profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

[root@kube-master cert]# ls *scheduler*
kube-scheduler.csr kube-scheduler-csr.json kube-scheduler-key.pem kube-scheduler.pem

06-04-03 Create the kubeconfig file

The kubeconfig file contains everything needed to access the apiserver, such as the apiserver address, the CA certificate and the component's own certificate;

① Run the following commands to generate the kube-scheduler.kubeconfig file

[root@kube-master ~]# kubectl config set-cluster kubernetes \
--certificate-authority=/opt/k8s/cert/ca.pem \
--embed-certs=true \
--server=https://192.168.10.10:8443 \
--kubeconfig=/root/.kube/kube-scheduler.kubeconfig

[root@kube-master ~]# kubectl config set-credentials system:kube-scheduler \
--client-certificate=/opt/k8s/cert/kube-scheduler.pem \
--client-key=/opt/k8s/cert/kube-scheduler-key.pem \
--embed-certs=true \
--kubeconfig=/root/.kube/kube-scheduler.kubeconfig

[root@kube-master ~]# kubectl config set-context system:kube-scheduler@kubernetes \
--cluster=kubernetes \
--user=system:kube-scheduler \
--kubeconfig=/root/.kube/kube-scheduler.kubeconfig

[root@kube-master ~]# kubectl config use-context system:kube-scheduler@kubernetes --kubeconfig=/root/.kube/kube-scheduler.kubeconfig

② Verify the kube-scheduler.kubeconfig file

[root@kube-master cert]# ls /root/.kube/kube-scheduler.kubeconfig
/root/.kube/kube-scheduler.kubeconfig

[root@kube-master ~]# kubectl config view --kubeconfig=/root/.kube/kube-scheduler.kubeconfig

06-04-04 Distribute the generated certificate, private key and kubeconfig to all master nodes

[root@kube-master ~]# vim /opt/k8s/script/scp_scheduler.sh

[root@kube-master ~]# chmod +x /opt/k8s/script/scp_scheduler.sh && /opt/k8s/script/scp_scheduler.sh

06-04-05 Create the kube-scheduler systemd unit file

[root@kube-master ~]# mkdir /opt/scheduler

[root@kube-master ~]# cd /opt/scheduler

[root@kube-master scheduler]# cat > kube-scheduler.service <<EOF
[file contents missing in the source]
EOF

06-04-06 Distribute the systemd unit file to all master nodes; start and check the kube-scheduler service

[root@kube-master scheduler]# vim /opt/k8s/script/scheduler.sh

[root@kube-master scheduler]# chmod +x /opt/k8s/script/scheduler.sh && /opt/k8s/script/scheduler.sh

Make sure the status is active (running); otherwise check the logs to find the cause:

journalctl -u kube-scheduler

06-04-07 View the exported metrics

Note: run the following commands on a kube-scheduler node.

kube-scheduler listens on port 10251 and accepts http requests:

[root@kube-master ~]# ss -nutlp |grep kube-scheduler
tcp LISTEN 0 128 127.0.0.1:10251 *:* users:(("kube-scheduler",pid=14968,fd=8))

[root@kube-master ~]# curl -s http://127.0.0.1:10251/metrics |head
# HELP apiserver_audit_event_total Counter of audit events generated and sent to the audit backend.
# TYPE apiserver_audit_event_total counter
apiserver_audit_event_total 0
# HELP go_gc_duration_seconds A summary of the GC invocation durations.
# TYPE go_gc_duration_seconds summary
go_gc_duration_seconds{quantile="0"} 3.6554e-05
go_gc_duration_seconds{quantile="0.25"} 0.000133804
go_gc_duration_seconds{quantile="0.5"} 0.000203523
go_gc_duration_seconds{quantile="0.75"} 0.000683624
go_gc_duration_seconds{quantile="1"} 0.001188571

06-04-08 Test high availability of the kube-scheduler cluster

1. Pick one or two master nodes, stop the kube-scheduler service on them, and check the systemd logs to see whether another node has taken over as leader.

2. Check the current leader:

[root@kube-master ~]# kubectl get endpoints kube-scheduler --namespace=kube-system -o yaml
apiVersion: v1
kind: Endpoints
metadata:
  annotations:
    control-plane.alpha.kubernetes.io/leader: '{"holderIdentity":"kube-node1_531fab4b-f69d-11e8-ba0a-00505631d257","leaseDurationSeconds":15,"acquireTime":"2018-12-03T01:48:23Z","renewTime":"2018-12-03T02:02:28Z","leaderTransitions":4}'
  creationTimestamp: 2018-11-29T05:50:35Z
  name: kube-scheduler
  namespace: kube-system
  resourceVersion: "56324"
  selfLink: /api/v1/namespaces/kube-system/endpoints/kube-scheduler
  uid: b1435e86-f39a-11e8-a392-0050563ab62b

As the holderIdentity field shows, the current leader is kube-node1 (it was originally kube-master).

A Kubernetes worker node runs the following components, covered in turn below: docker (07-01), kubelet (07-02), and kube-proxy (07-03).

1. Install and configure flanneld

See 05. Deploy the flannel network.

2. Install the dependency packages

CentOS:

$ yum install -y epel-release
$ yum install -y conntrack ipvsadm ipset jq iptables curl sysstat libseccomp && /usr/sbin/modprobe ip_vs

Ubuntu:

$ apt-get install -y conntrack ipvsadm ipset jq iptables curl sysstat libseccomp && /usr/sbin/modprobe ip_vs

docker is the container runtime and manages the container lifecycle. kubelet talks to docker through the Container Runtime Interface (CRI).

07-01-01 Download the docker binaries

Download the latest release from https://download.docker.com/linux/static/stable/x86_64/:

wget https://download.docker.com/linux/static/stable/x86_64/docker-18.03.1-ce.tgz
tar -xvf docker-18.03.1-ce.tgz

07-01-02 Create and distribute the systemd unit file

[root@kube-master ~]# mkdir /opt/docker
[root@kube-master ~]# cd /opt/docker
[root@kube-master docker]# cat > docker.service << "EOF"
EOF

07-01-03 Create the docker configuration file

Use registry mirrors located in China to speed up image pulls, and raise the number of concurrent downloads (dockerd must be restarted for this to take effect):

cat > docker-daemon.json <<EOF
EOF

07-01-04 Distribute the docker binaries, systemd unit file, and docker configuration file to all worker nodes

[root@kube-master ~]# vim /opt/k8s/script/scp_docker.sh

07-01-05 Start and check the docker service

[root@kube-master ~]# vim /opt/k8s/script/docker.sh
[root@kube-master ~]# chmod +x /opt/k8s/script/docker.sh && /opt/k8s/script/docker.sh

① Make sure the status is active (running); otherwise check the logs to find the cause:

$ journalctl -u docker

② Confirm that on every worker node the docker0 bridge and the flannel.1 interface have IPs in the same subnet (10.30.89.0 and 10.30.89.1 in the output below):

4: flannel.1: link/ether ea:b3:44:ab:36:16 brd ff:ff:ff:ff:ff:ff
    inet 10.30.89.0/32 scope global flannel.1
       valid_lft forever preferred_lft forever
7: docker0: link/ether 02:42:8e:6e:ea:ef brd ff:ff:ff:ff:ff:ff
    inet 10.30.89.1/24 brd 10.30.89.255 scope global docker0
       valid_lft forever preferred_lft forever

kubelet runs on every worker node. It receives requests from kube-apiserver, manages Pod containers, and executes interactive commands such as exec, run, and logs.

On startup, kubelet automatically registers the node with kube-apiserver, and its built-in cadvisor collects and monitors the node's resource usage.

For security, this document only enables the secure port that accepts https requests. Every request (for example from apiserver or heapster) is authenticated and authorized, and unauthorized access is rejected.

1. Download and distribute the kubelet binaries

See 06. Deploy the master nodes.

2. Install the dependency packages

See 07. Deploy the worker nodes.

07-02-01 Create the kubelet bootstrap kubeconfig file

[root@kube-master ~]# vim /opt/k8s/script/bootstrap_kubeconfig.sh
[root@kube-master ~]# chmod +x /opt/k8s/script/bootstrap_kubeconfig.sh && /opt/k8s/script/bootstrap_kubeconfig.sh

Notes:

① The kubeconfig file contains a token rather than a certificate; the certificate is created later by controller-manager.

List the tokens that kubeadm created for each node:

[root@kube-master ~]# kubeadm token list --kubeconfig ~/.kube/config
TOKEN                     TTL   EXPIRES                     USAGES                   DESCRIPTION               EXTRA GROUPS
8hpvxm.w5uctmxzlphfh37l   23h   2018-11-30T16:03:27+08:00   authentication,signing   kubelet-bootstrap-token   system:bootstrappers:kube-node1
gktdpg.5x931bwfzf4z4hjt   23h   2018-11-30T16:03:27+08:00   authentication,signing   kubelet-bootstrap-token   system:bootstrappers:kube-node2
ttbgfq.19zeet23eohtdo65   23h   2018-11-30T16:03:26+08:00   authentication,signing   kubelet-bootstrap-token   system:bootstrappers:kube-master

② A token is valid for 1 day. After it expires it can no longer be used, and it is removed by the tokencleaner controller of kube-controller-manager (if that controller is enabled).

③ After kube-apiserver receives the bootstrap token from kubelet, it sets the request's user to system:bootstrap:<Token ID> and its group to
system:bootstrappers.

The Secret associated with each token:

[root@kube-master ~]# kubectl get secrets -n kube-system
NAME                     TYPE                                  DATA   AGE
bootstrap-token-8hpvxm   bootstrap.kubernetes.io/token         7      7m
bootstrap-token-gktdpg   bootstrap.kubernetes.io/token         7      7m
bootstrap-token-ttbgfq   bootstrap.kubernetes.io/token         7      7m
default-token-5lvn4      kubernetes.io/service-account-token   3      4h

07-02-02 Create the kubelet configuration file

Starting with v1.10, some kubelet parameters must be set in a configuration file; kubelet --help prints the hint:

DEPRECATED: This parameter should be set via the config file specified by the Kubelet's --config flag

[root@kube-master ~]# mkdir /opt/kubelet
[root@kube-master ~]# cd /opt/kubelet
[root@kube-master kubelet]# vim kubelet.config.json.template

07-02-03 Distribute the bootstrap kubeconfig and kubelet configuration files to all worker nodes

[root@kube-master ~]# vim /opt/k8s/script/scp_kubelet.sh
[root@kube-master ~]# chmod +x /opt/k8s/script/scp_kubelet.sh && /opt/k8s/script/scp_kubelet.sh

07-02-04 Create the kubelet systemd unit file

[root@kube-master ~]# vim /opt/kubelet/kubelet.service.template

07-02-05 Bootstrap Token Auth and granting permissions

1. On startup, kubelet checks whether the file given by --kubeconfig exists. If it does not, kubelet uses --bootstrap-kubeconfig to send a certificate signing request (CSR) to kube-apiserver.

2. When kube-apiserver receives the CSR, it authenticates the token in it (a token created beforehand with kubeadm). On success it sets the request's user to system:bootstrap:<Token ID> and its group to system:bootstrappers. This process is called Bootstrap Token Auth.

3. By default this user and group have no permission to create CSRs, so kubelet fails to start with the following error log:

$ sudo journalctl -u kubelet -a |grep -A 2 'certificatesigningrequests'
May 06 06:42:36 kube-node1 kubelet[26986]: F0506 06:42:36.314378 26986 server.go:233] failed to run Kubelet: cannot create certificate signing request: certificatesigningrequests.certificates.k8s.io is forbidden: User "system:bootstrap:lemy40" cannot create certificatesigningrequests.certificates.k8s.io at the cluster scope
May 06 06:42:36 kube-node1 systemd[1]: kubelet.service: Main process exited, code=exited, status=255/n/a
May 06 06:42:36 kube-node1 systemd[1]: kubelet.service: Failed with result 'exit-code'.
4. The fix is to create a clusterrolebinding that binds the group system:bootstrappers to the clusterrole system:node-bootstrapper:

[root@kube-master ~]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --group=system:bootstrappers

07-02-06 Start the kubelet service

[root@kube-master ~]# vim /opt/k8s/script/kubelet.sh

07-02-07 Approve the kubelet CSR requests

CSR requests can be approved manually or automatically. The automatic way is recommended, because since v1.8 the certificates issued after a CSR is approved can be rotated automatically.

1. Approve CSR requests manually

(1) List the CSRs:

[root@kube-master ~]# kubectl get csr
NAME                                                   AGE   REQUESTOR                 CONDITION
node-csr-SdkiSnAdFByBTIJDyFWTBSTIDMJKxwxQt9gEExFX5HU   4m    system:bootstrap:8hpvxm   Pending
node-csr-atMwF8GpKbDEcGjzCTXF1NYo9Jc1AzE2yQoxaU8NAkw   7m    system:bootstrap:ttbgfq   Pending
node-csr-qxa30a9GRg35iNEl3PYZOIICMo_82qPrqNu6PizEZXw   4m    system:bootstrap:gktdpg   Pending

The CSRs of all three worker nodes are in the Pending state.

(2) Approve a CSR:

[root@kube-master ~]# kubectl certificate approve node-csr-SdkiSnAdFByBTIJDyFWTBSTIDMJKxwxQt9gEExFX5HU
certificatesigningrequest.certificates.k8s.io "node-csr-SdkiSnAdFByBTIJDyFWTBSTIDMJKxwxQt9gEExFX5HU" approved

(3) Check the result:

[root@kube-master ~]# kubectl describe csr node-csr-SdkiSnAdFByBTIJDyFWTBSTIDMJKxwxQt9gEExFX5HU
Name:               node-csr-SdkiSnAdFByBTIJDyFWTBSTIDMJKxwxQt9gEExFX5HU
Labels:
Annotations:
CreationTimestamp:  Thu, 29 Nov 2018 17:51:43 +0800
Requesting User:    system:bootstrap:8hpvxm
Status:             Approved,Issued
Subject:
  Common Name:    system:node:kube-node1
  Serial Number:
  Organization:   system:nodes
Events:

2. Approve CSR requests automatically

(1) Create three ClusterRoleBindings, used to automatically approve client certificates, renew client certificates, and renew server certificates respectively:

[root@kube-master ~]# cat > /opt/kubelet/csr-crb.yaml <<EOF
# Approve all CSRs for the group "system:bootstrappers"
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: auto-approve-csrs-for-group
subjects:
- kind: Group
  name:
EOF

(2) Apply the configuration:

[root@kube-master ~]# kubectl apply -f /opt/kubelet/csr-crb.yaml

07-02-08 Check the kubelet status

1. After waiting a while (1-10 minutes), the CSRs of all three nodes are approved automatically:

[root@kube-master ~]# kubectl get csr
NAME                                                   AGE   REQUESTOR                 CONDITION
csr-kvbtt                                              15h   system:node:kube-node1    Approved,Issued
csr-p9b9s                                              15h   system:node:kube-node2    Approved,Issued
csr-rjpr9                                              15h   system:node:kube-master   Approved,Issued
node-csr-8Sr42M0z_LzZeHU-RCbgOynJm3Z2TsSXHuAlohfJiIM   15h   system:bootstrap:ttbgfq   Approved,Issued
node-csr-SdkiSnAdFByBTIJDyFWTBSTIDMJKxwxQt9gEExFX5HU   15h   system:bootstrap:8hpvxm   Approved,Issued
node-csr-atMwF8GpKbDEcGjzCTXF1NYo9Jc1AzE2yQoxaU8NAkw   15h   system:bootstrap:ttbgfq   Approved,Issued
node-csr-elVB0jp36nOHuOYlITWDZx8LoO2Ly4aW0VqgYxw_Te0   15h   system:bootstrap:gktdpg   Approved,Issued
node-csr-muNcDteZINLZnSv8FkhOMaP2ob5uw82PGwIAynNNrco   15h   system:bootstrap:ttbgfq   Approved,Issued
node-csr-qxa30a9GRg35iNEl3PYZOIICMo_82qPrqNu6PizEZXw   15h   system:bootstrap:gktdpg   Approved,Issued

2. All nodes are Ready:

[root@kube-master ~]# kubectl get nodes
NAME          STATUS   ROLES   AGE   VERSION
kube-master   Ready
kube-node1    Ready
kube-node2    Ready

3. kube-controller-manager has generated a kubeconfig file and a key pair for each node:

[root@kube-master ~]# ll /opt/k8s/kubelet.kubeconfig
-rw------- 1 root root 2280 Nov 29 18:05 /opt/k8s/kubelet.kubeconfig

[root@kube-master ~]# ll /opt/k8s/cert/ |grep kubelet
-rw-r--r-- 1 root root 1050 Nov 29 18:05 kubelet-client.crt
-rw------- 1 root root  227 Nov 29 18:01 kubelet-client.key
-rw------- 1 root root 1338 Nov 29 18:05 kubelet-server-2018-11-29-18-05-11.pem
lrwxrwxrwx 1 root root   52 Nov 29 18:05 kubelet-server-current.pem -> /opt/k8s/cert/kubelet-server-2018-11-29-18-05-11.pem

Note: the kubelet-server certificate is rotated periodically.

07-02-09 API endpoints provided by kubelet

1. After startup, kubelet listens on several ports to receive requests from kube-apiserver and other components:

[root@kube-master ~]# ss -nutlp |grep kubelet
tcp LISTEN 0 128 192.168.10.108:10250 *:* users:(("kubelet",pid=2797,fd=22))
tcp LISTEN 0 128 192.168.10.108:4194 *:* users:(("kubelet",pid=2797,fd=13))
tcp LISTEN 0 128 127.0.0.1:10248 *:* users:(("kubelet",pid=2797,fd=32))

Note: 4194 is the cadvisor http port, 10248 is the healthz port (healthzPort in the kubelet configuration), and 10250 is the https API port.

2. For example, when you run kubectl exec -it nginx-ds-5rmws -- sh, kube-apiserver sends kubelet the following request:

POST /exec/default/nginx-ds-5rmws/my-nginx?command=sh&input=1&output=1&tty=1
3. kubelet serves its https API on port 10250.

4. Because anonymous authentication is disabled and webhook authorization is enabled, every request to the https API on port 10250 must be authenticated and authorized.

The predefined ClusterRole system:kubelet-api-admin grants access to all kubelet APIs:

[root@kube-master ~]# kubectl describe clusterrole system:kubelet-api-admin
Name:         system:kubelet-api-admin
Labels:       kubernetes.io/bootstrapping=rbac-defaults
Annotations:  rbac.authorization.kubernetes.io/autoupdate=true
PolicyRule:
  Resources      Non-Resource URLs  Resource Names  Verbs
  ---------      -----------------  --------------  -----
  nodes          []                 []              [get list watch proxy]
  nodes/log      []                 []              [*]
  nodes/metrics  []                 []              [*]
  nodes/proxy    []                 []              [*]
  nodes/spec     []                 []              [*]
  nodes/stats    []                 []              [*]

07-02-10 kubelet API authentication and authorization

1. kubelet is configured with the following authentication parameters (they also appear in the configuration dump in 07-02-12): authentication.anonymous.enabled is false, authentication.x509.clientCAFile is /opt/k8s/cert/ca.pem, and authentication.webhook.enabled is true. It is also configured with the authorization parameter authorization.mode set to Webhook.

2. When kubelet receives a request, it verifies the certificate signature against clientCAFile, or checks whether the bearer token is valid. If neither check passes, the request is rejected with Unauthorized:

[root@kube-master ~]# curl -s --cacert /opt/k8s/cert/ca.pem https://192.168.10.109:10250/metrics
Unauthorized

[root@kube-master ~]# curl -s --cacert /opt/k8s/cert/ca.pem -H "Authorization: Bearer 123456" https://192.168.10.109:10250/metrics
Unauthorized

3. Once authenticated, kubelet sends a request to kube-apiserver through the SubjectAccessReview API to find out whether the user and group behind the certificate or token are allowed to operate on the resource (RBAC).

Certificate authentication and authorization:

- A certificate with insufficient permissions:

[root@kube-master ~]# curl -s --cacert /opt/k8s/cert/ca.pem --cert /opt/k8s/cert/kube-controller-manager.pem --key /opt/k8s/cert/kube-controller-manager-key.pem https://192.168.10.109:10250/metrics
Forbidden (user=system:kube-controller-manager, verb=get, resource=nodes, subresource=metrics)

- The admin certificate with the highest privileges, created when deploying the kubectl command-line tool:

[root@kube-master cert]# curl -s --cacert /opt/k8s/cert/ca.pem --cert /opt/k8s/cert/admin.pem --key /opt/k8s/cert/admin-key.pem https://192.168.10.109:10250/metrics|head
# HELP apiserver_client_certificate_expiration_seconds Distribution of the remaining lifetime on the certificate used to authenticate a request.
# TYPE apiserver_client_certificate_expiration_seconds histogram
apiserver_client_certificate_expiration_seconds_bucket{le="0"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="21600"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="43200"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="86400"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="172800"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="345600"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="604800"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="2.592e+06"} 0

4. Bearer token authentication and authorization:

Create a ServiceAccount and bind it to the ClusterRole system:kubelet-api-admin so that it is allowed to call the kubelet API:

[root@kube-master ~]# kubectl create sa kubelet-api-test
serviceaccount "kubelet-api-test" created

[root@kube-master ~]# kubectl create clusterrolebinding kubelet-api-test --clusterrole=system:kubelet-api-admin --serviceaccount=default:kubelet-api-test
clusterrolebinding.rbac.authorization.k8s.io "kubelet-api-test" created

[root@kube-master ~]# SECRET=$(kubectl get secrets | grep kubelet-api-test | awk '{print $1}')
[root@kube-master ~]# TOKEN=$(kubectl describe secret ${SECRET} | grep -E '^token' | awk '{print $2}')
[root@kube-master ~]# curl -s --cacert /opt/k8s/cert/ca.pem -H "Authorization: Bearer ${TOKEN}" https://192.168.10.109:10250/metrics|head
# HELP apiserver_client_certificate_expiration_seconds Distribution of the remaining lifetime on the certificate used to authenticate a request.
# TYPE apiserver_client_certificate_expiration_seconds histogram
apiserver_client_certificate_expiration_seconds_bucket{le="0"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="21600"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="43200"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="86400"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="172800"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="345600"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="604800"} 0
apiserver_client_certificate_expiration_seconds_bucket{le="2.592e+06"} 0

07-02-11 cadvisor and metrics

cadvisor collects the resource usage (CPU, memory, disk, network) of every container on its node and exposes it both on its own http web page (port 4194) and on port 10250 as prometheus metrics.

Open http://192.168.10.108:4194/containers/ in a browser to see the cadvisor monitoring page.

07-02-12 Get the kubelet configuration

Fetch each node's configuration from kube-apiserver, using the admin certificate with the highest privileges that was created when deploying the kubectl command-line tool:

[root@kube-master ~]# curl -sSL --cacert /opt/k8s/cert/ca.pem --cert /opt/k8s/cert/admin.pem --key /opt/k8s/cert/admin-key.pem https://192.168.10.10:8443/api/v1/nodes/kube-node1/proxy/configz | jq \
  '.kubeletconfig|.kind="KubeletConfiguration"|.apiVersion="kubelet.config.k8s.io/v1beta1"'
{
  "syncFrequency": "1m0s",
  "fileCheckFrequency": "20s",
  "httpCheckFrequency": "20s",
  "address": "192.168.10.109",
  "port": 10250,
  "authentication": {
    "x509": { "clientCAFile": "/opt/k8s/cert/ca.pem" },
    "webhook": { "enabled": true, "cacheTTL": "2m0s" },
    "anonymous": { "enabled": false }
  },
  "authorization": {
    "mode": "Webhook",
    "webhook": { "cacheAuthorizedTTL": "5m0s", "cacheUnauthorizedTTL": "30s" }
  },
  "registryPullQPS": 5,
  "registryBurst": 10,
  "eventRecordQPS": 5,
  "eventBurst": 10,
  "enableDebuggingHandlers": true,
  "healthzPort": 10248,
  "healthzBindAddress": "127.0.0.1",
  "oomScoreAdj": -999,
  "clusterDomain": "cluster.local.",
  "clusterDNS": [ "10.96.0.2" ],
  "streamingConnectionIdleTimeout": "4h0m0s",
  "nodeStatusUpdateFrequency": "10s",
  "imageMinimumGCAge": "2m0s",
  "imageGCHighThresholdPercent": 85,
  "imageGCLowThresholdPercent": 80,
  "volumeStatsAggPeriod": "1m0s",
  "cgroupsPerQOS": true,
  "cgroupDriver": "cgroupfs",
  "cpuManagerPolicy": "none",
  "cpuManagerReconcilePeriod": "10s",
  "runtimeRequestTimeout": "2m0s",
  "hairpinMode": "promiscuous-bridge",
  "maxPods": 110,
  "podPidsLimit": -1,
  "resolvConf": "/etc/resolv.conf",
  "cpuCFSQuota": true,
  "maxOpenFiles": 1000000,
  "contentType": "application/vnd.kubernetes.protobuf",
  "kubeAPIQPS": 5,
  "kubeAPIBurst": 10,
  "serializeImagePulls": false,
  "evictionHard": {
    "imagefs.available": "15%",
    "memory.available": "100Mi",
    "nodefs.available": "10%",
    "nodefs.inodesFree": "5%"
  },
  "evictionPressureTransitionPeriod": "5m0s",
  "enableControllerAttachDetach": true,
  "makeIPTablesUtilChains": true,
  "iptablesMasqueradeBit": 14,
  "iptablesDropBit": 15,
  "featureGates": {
    "RotateKubeletClientCertificate": true,
    "RotateKubeletServerCertificate": true
  },
  "failSwapOn": true,
  "containerLogMaxSize": "10Mi",
  "containerLogMaxFiles": 5,
  "enforceNodeAllocatable": [ "pods" ],
  "kind": "KubeletConfiguration",
  "apiVersion": "kubelet.config.k8s.io/v1beta1"
}

kube-proxy runs on all worker nodes. It watches the apiserver for changes to Services and Endpoints and creates routing rules to load-balance service traffic.

This document deploys kube-proxy in ipvs mode.

1. Download and distribute the kube-proxy binaries

See 06. Deploy the master nodes.

2. Install the dependency packages

Every node needs the ipvsadm and ipset commands installed and the ip_vs kernel module loaded.

See 07. Deploy the worker nodes.

07-03-01 Create the kube-proxy certificate

Create the certificate signing request:

[root@kube-master ~]# cd /opt/k8s/cert/
[root@kube-master cert]# cat > kube-proxy-csr.json << EOF
EOF

07-03-02 Generate the certificate and private key

[root@kube-master cert]# cfssl gencert -ca=/opt/k8s/cert/ca.pem \
  -ca-key=/opt/k8s/cert/ca-key.pem \
  -config=/opt/k8s/cert/ca-config.json \
  -profile=kubernetes kube-proxy-csr.json | cfssljson_linux-amd64 -bare kube-proxy

[root@kube-master cert]# ls *kube-proxy*
kube-proxy.csr kube-proxy-csr.json kube-proxy-key.pem kube-proxy.pem

07-03-03 Create the kubeconfig file

[root@kube-master ~]# kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/k8s/cert/ca.pem \
  --embed-certs=true \
  --server=https://192.168.10.10:8443 \
  --kubeconfig=/root/.kube/kube-proxy.kubeconfig

[root@kube-master ~]# kubectl config set-credentials kube-proxy \
  --client-certificate=/opt/k8s/cert/kube-proxy.pem \
  --client-key=/opt/k8s/cert/kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=/root/.kube/kube-proxy.kubeconfig

[root@kube-master ~]# kubectl config set-context kube-proxy@kubernetes \
  --cluster=kubernetes \
  --user=kube-proxy \
  --kubeconfig=/root/.kube/kube-proxy.kubeconfig

[root@kube-master ~]# kubectl config use-context kube-proxy@kubernetes --kubeconfig=/root/.kube/kube-proxy.kubeconfig

[root@kube-master ~]# kubectl config view --kubeconfig=/root/.kube/kube-proxy.kubeconfig

07-03-04 Create the kube-proxy configuration file

Starting with v1.10, some kube-proxy parameters can be set in a configuration file. You can generate this file with the --write-config-to option.

Create the kube-proxy config file template:

[root@kube-master ~]# mkdir /opt/kube-proxy
[root@kube-master ~]# cd /opt/kube-proxy
[root@kube-master kube-proxy]# cat >kube-proxy.config.yaml.template <<EOF
EOF

07-03-05 Distribute the kubeconfig and kube-proxy systemd unit files; start and check the kube-proxy service

[root@kube-master ~]# vim /opt/k8s/script/kube_proxy.sh
[root@kube-master ~]# chmod +x /opt/k8s/script/kube_proxy.sh && /opt/k8s/script/kube_proxy.sh

07-03-06 Check the listening ports and metrics

[root@kube-master ~]# ss -nutlp |grep kube-prox
tcp LISTEN 0 128 192.168.10.108:10256 *:* users:(("kube-proxy",pid=34230,fd=10))
tcp LISTEN 0 128 192.168.10.108:10249 *:* users:(("kube-proxy",pid=34230,fd=11))

07-03-07 Check the ipvs routing rules

[root@kube-master ~]# /usr/sbin/ipvsadm -ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  10.96.0.1:443 rr persistent 10800
  -> 192.168.10.108:6443          Masq    1      0          0
  -> 192.168.10.109:6443          Masq    1      0          0
  -> 192.168.10.110:6443          Masq    1      0          0

As shown, every request to port 443 of the kubernetes cluster IP is forwarded to port 6443 of kube-apiserver.

This document uses a daemonset to verify that the master and worker nodes work properly.

08-01 Check the node status

[root@kube-master ~]# kubectl get nodes
NAME          STATUS   ROLES   AGE   VERSION
kube-master   Ready
kube-node1    Ready
kube-node2    Ready

Everything is fine when all nodes are Ready.

08-02 Create the test file

[root@kube-master ~]# mkdir /opt/k8s/damo
[root@kube-master ~]# cat > /opt/k8s/damo/nginx-ds.yml <<EOF
EOF

Apply the definition file:

[root@kube-master ~]# kubectl create -f /opt/k8s/damo/nginx-ds.yml
service "nginx-ds" created
daemonset.extensions "nginx-ds" created

08-03 Check Pod IP connectivity on every Node

Pulling the image and creating the Pods takes a while, so wait a moment first.

[root@kube-master ~]# kubectl get pods -o wide|grep nginx-ds
nginx-ds-7cz4p   1/1   Running   0   4m   10.30.22.2   kube-master
nginx-ds-lg585   1/1   Running   0   4m   10.30.44.2   kube-node2
nginx-ds-zc448   1/1   Running   0   4m   10.30.33.2   kube-node1

The nginx-ds Pod IPs are 10.30.22.2, 10.30.44.2, and 10.30.33.2. Ping these three IPs from every Node to check connectivity:

[root@kube-master ~]# NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")
[root@kube-master ~]# for node_ip in ${NODE_IPS[@]}; do
    echo ">>> ${node_ip}"
    ssh ${node_ip} "ping -c 1 10.30.22.2"
    ssh ${node_ip} "ping -c 1 10.30.44.2"
    ssh ${node_ip} "ping -c 1 10.30.33.2"
  done

08-04 Check that the service IP and port are reachable

[root@kube-master ~]# kubectl get svc |grep nginx-ds
nginx-ds NodePort 10.96.192.157

As shown, the Service IP is 10.96.192.157.

curl the Service IP on every Node:

[root@kube-master ~]# curl 10.96.192.157
[root@kube-node1 ~]# curl 10.96.192.157
[root@kube-node2 ~]# curl 10.96.192.157

The expected output is the nginx welcome page.

08-05 Check that the service NodePort is reachable

Run the following against every Node; the expected output is the nginx welcome page.

[root@kube-master ~]# curl 192.168.10.108:15131
[root@kube-master ~]# curl 192.168.10.109:15131
[root@kube-master ~]# curl 192.168.10.110:15131

If you see this page, the nginx web server is successfully installed and working. Further configuration is required.

For online documentation and support please refer to nginx.org. Commercial support is available at nginx.com.

Thank you for using nginx.

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

net.ipv4.tcp_tw_recycle=0

vm.swappiness=0

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.ipv6.conf.all.disable_ipv6=1

net.netfilter.nf_conntrack_max=2310720
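Before loading these kernel parameters it can help to double-check what a parameter file actually contains. The following is a minimal sketch, not part of the original setup: the helper names trim and sysctl_conf_get are made up for illustration, and the file path in the usage note is an assumption.

```shell
# Hypothetical helper: strip leading and trailing whitespace from $1.
trim() {
  local s="$1"
  s="${s#"${s%%[![:space:]]*}"}"
  s="${s%"${s##*[![:space:]]}"}"
  printf '%s' "$s"
}

# Hypothetical helper: print the value of KEY from a sysctl-style file
# (key=value lines). Blank lines and lines starting with '#' are skipped;
# if a key appears more than once, the last occurrence wins.
# Returns non-zero when the key is not present.
sysctl_conf_get() {
  local key="$1" file="$2" line name value found=1
  while IFS= read -r line || [ -n "$line" ]; do
    case "$line" in ''|'#'*) continue ;; esac
    name="$(trim "${line%%=*}")"
    if [ "$name" = "$key" ]; then
      value="$(trim "${line#*=}")"
      found=0
    fi
  done < "$file"
  [ "$found" -eq 0 ] && printf '%s\n' "$value"
}
```

For example, `sysctl_conf_get net.ipv4.ip_forward /etc/sysctl.d/kubernetes.conf` (path assumed) should print 1 for the list above. Note that net.ipv4.tcp_tw_recycle was removed in Linux 4.12, so on newer kernels sysctl reports an error for that key and the line can be dropped.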

02. Create the CA certificate and key

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "4Paradigm"

}

]

}

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p /opt/k8s/cert && chown -R k8s /opt/k8s"

scp /opt/k8s/cert/ca*.pem /opt/k8s/cert/ca-config.json k8s@${node_ip}:/opt/k8s/cert

done

03. Deploy the kubectl command-line tool

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:masters",

"OU": "4Paradigm"

}

]

}

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: REDACTED

server: https://192.168.10.10:8443

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: kube-admin

name: kube-admin@kubernetes

current-context: kube-admin@kubernetes

kind: Config

preferences: {}

users:

- name: kube-admin

user:

client-certificate-data: REDACTED

client-key-data: REDACTED

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

scp /root/kubernetes/client/bin/kubectl k8s@${node_ip}:/opt/k8s/bin/

ssh k8s@${node_ip} "chmod +x /opt/k8s/bin/*"

ssh k8s@${node_ip} "mkdir -p ~/.kube"

scp ~/.kube/config k8s@${node_ip}:~/.kube/config

ssh root@${node_ip} "mkdir -p ~/.kube"

scp ~/.kube/config root@${node_ip}:~/.kube/config

done

04. Deploy the etcd cluster

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"192.168.10.108",

"192.168.10.109",

"192.168.10.110"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "4Paradigm"

}

]

}

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

scp /root/etcd-v3.3.7-linux-amd64/etcd* k8s@${node_ip}:/opt/k8s/bin

ssh k8s@${node_ip} "chmod +x /opt/k8s/bin/*"

ssh root@${node_ip} "mkdir -p /opt/etcd/cert && chown -R k8s /opt/etcd/cert"

scp /opt/etcd/cert/etcd*.pem k8s@${node_ip}:/opt/etcd/cert/

done

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

Documentation=https://github.com/coreos

[Service]

User=k8s

Type=notify

WorkingDirectory=/opt/lib/etcd/

ExecStart=/opt/k8s/bin/etcd \

--data-dir=/opt/lib/etcd \

--name ##NODE_NAME## \

--cert-file=/opt/etcd/cert/etcd.pem \

--key-file=/opt/etcd/cert/etcd-key.pem \

--trusted-ca-file=/opt/k8s/cert/ca.pem \

--peer-cert-file=/opt/etcd/cert/etcd.pem \

--peer-key-file=/opt/etcd/cert/etcd-key.pem \

--peer-trusted-ca-file=/opt/k8s/cert/ca.pem \

--peer-client-cert-auth \

--client-cert-auth \

--listen-peer-urls=https://##NODE_IP##:2380 \

--initial-advertise-peer-urls=https://##NODE_IP##:2380 \

--listen-client-urls=https://##NODE_IP##:2379,http://127.0.0.1:2379 \

--advertise-client-urls=https://##NODE_IP##:2379 \

--initial-cluster-token=etcd-cluster-0 \

--initial-cluster=etcd0=https://192.168.10.108:2380,etcd1=https://192.168.10.109:2380,etcd2=https://192.168.10.110:2380 \

--initial-cluster-state=new

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

NODE_NAMES=("etcd0" "etcd1" "etcd2")

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

# Replace the variables in the template file to create a systemd unit file for each node

for (( i=0; i < 3; i++ ));do

sed -e "s/##NODE_NAME##/${NODE_NAMES[i]}/g" -e "s/##NODE_IP##/${NODE_IPS[i]}/g" /opt/etcd/etcd.service.template > /opt/etcd/etcd-${NODE_IPS[i]}.service

done

# Create the etcd data directory and distribute the generated systemd unit files

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p /opt/lib/etcd && chown -R k8s /opt/lib/etcd"

scp /opt/etcd/etcd-${node_ip}.service root@${node_ip}:/etc/systemd/system/etcd.service

done
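The sed substitution in the script above can be wrapped in a small function, which also makes it easy to try the template step on one node before distributing anything. This is only a sketch: render_unit and initial_cluster are hypothetical names, and initial_cluster assumes the peer port 2380 used in the unit file.

```shell
# Hypothetical helper: fill the ##NODE_NAME## and ##NODE_IP## placeholders
# of a unit template and print the rendered unit to stdout.
render_unit() {
  local template="$1" node_name="$2" node_ip="$3"
  sed -e "s/##NODE_NAME##/${node_name}/g" \
      -e "s/##NODE_IP##/${node_ip}/g" "$template"
}

# Hypothetical helper: build the value of etcd's --initial-cluster flag
# from "name=ip" arguments, e.g. etcd0=192.168.10.108.
initial_cluster() {
  local pair out=""
  for pair in "$@"; do
    out="${out}${out:+,}${pair%%=*}=https://${pair#*=}:2380"
  done
  printf '%s\n' "$out"
}
```

Running `render_unit /opt/etcd/etcd.service.template etcd1 192.168.10.109` prints the unit for the second node without writing any file, so the result can be reviewed first.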

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

# Start the etcd service

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable etcd && systemctl start etcd"

done

# Check the startup result; make sure the status is active (running)

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

ssh k8s@${node_ip} "systemctl status etcd|grep Active"

done

# Verify the service status; the cluster is healthy when every endpoint reports healthy

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

ETCDCTL_API=3 /opt/k8s/bin/etcdctl \

--endpoints=https://${node_ip}:2379 \

--cacert=/opt/k8s/cert/ca.pem \

--cert=/opt/etcd/cert/etcd.pem \

--key=/opt/etcd/cert/etcd-key.pem endpoint health

done
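With three endpoints it is easy to miss one unhealthy line in the loop output. The sketch below filters that output down to the endpoints that did not report healthy. It assumes each line starts with the endpoint URL followed by "is healthy" or "is unhealthy", which is how etcdctl 3.3 usually prints it; the function name unhealthy_endpoints is hypothetical.

```shell
# Hypothetical helper: read `etcdctl endpoint health` output on stdin and
# print every endpoint whose line does not start with "<endpoint> is healthy".
unhealthy_endpoints() {
  local endpoint rest
  while read -r endpoint rest; do
    [ -z "$endpoint" ] && continue
    case "$rest" in
      "is healthy"*) ;;                      # healthy: print nothing
      *) printf '%s\n' "$endpoint" ;;        # anything else counts as unhealthy
    esac
  done
}
```

Piping the health-check loop into `unhealthy_endpoints` leaves an empty result when the whole cluster is fine. Because etcdctl may write errors to stderr, redirecting with `2>&1` before the pipe is worth considering.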

05. Deploy the flannel network

{

"CN": "flanneld",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "4Paradigm"

}

]

}

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

scp /root/flannel/{flanneld,mk-docker-opts.sh} k8s@${node_ip}:/opt/k8s/bin/

ssh k8s@${node_ip} "chmod +x /opt/k8s/bin/*"

ssh root@${node_ip} "mkdir -p /opt/flannel/cert && chown -R k8s /opt/flannel"

scp /opt/flannel/cert/flanneld*.pem k8s@${node_ip}:/opt/flannel/cert

done

[Unit]

Description=Flanneld overlay address etcd agent

After=network.target

After=network-online.target

Wants=network-online.target

After=etcd.service

Before=docker.service

[Service]

Type=notify

ExecStart=/opt/k8s/bin/flanneld \

-etcd-cafile=/opt/k8s/cert/ca.pem \

-etcd-certfile=/opt/flannel/cert/flanneld.pem \

-etcd-keyfile=/opt/flannel/cert/flanneld-key.pem \

-etcd-endpoints=https://192.168.10.108:2379,https://192.168.10.109:2379,https://192.168.10.110:2379 \

-etcd-prefix=/atomic.io/network \

-iface=eth1

ExecStartPost=/opt/k8s/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/docker

Restart=on-failure

[Install]

WantedBy=multi-user.target

RequiredBy=docker.service

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

# Distribute the flanneld systemd unit file to all nodes

scp /opt/flannel/flanneld.service root@${node_ip}:/etc/systemd/system/

# Start the flanneld service

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable flanneld && systemctl restart flanneld"

# Check the startup result

ssh k8s@${node_ip} "systemctl status flanneld|grep Active"

done

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

# After deploying flannel on each node, check that a flannel interface was created (it may be named flannel0, flannel.0, flannel.1, etc.)

ssh ${node_ip} "/usr/sbin/ip addr show flannel.1|grep -w inet"

# Ping every flannel interface IP from each node to make sure it is reachable

ssh ${node_ip} "ping -c 1 10.30.22.0"

ssh ${node_ip} "ping -c 1 10.30.33.0"

ssh ${node_ip} "ping -c 1 10.30.44.0"

done
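In this setup each node's flannel interface gets an address in its own /24 (10.30.22.0, 10.30.33.0, and 10.30.44.0 in the pings above), and the docker0 bridge on a node is later expected to sit in the same subnet as that node's flannel.1. A rough sketch of that comparison follows; first_inet and same_subnet24 are hypothetical helper names, and the /24 prefix length is an assumption taken from the docker0 output shown in this document.

```shell
# Hypothetical helper: print the first IPv4 address found in
# `ip addr show` output read from stdin (the field after "inet").
first_inet() {
  awk '{ for (i = 1; i < NF; i++) if ($i == "inet") { split($(i + 1), a, "/"); print a[1]; exit } }'
}

# Hypothetical helper: succeed when two IPv4 addresses share their
# first three octets, i.e. they lie in the same /24.
same_subnet24() {
  [ "${1%.*}" = "${2%.*}" ]
}
```

On a node this could be used as `same_subnet24 "$(ip addr show flannel.1 | first_inet)" "$(ip addr show docker0 | first_inet)" && echo ok`.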

06. Deploy the master nodes

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

scp /root/kubernetes/server/bin/* k8s@${node_ip}:/opt/k8s/bin/

ssh k8s@${node_ip} "chmod +x /opt/k8s/bin/*"

done

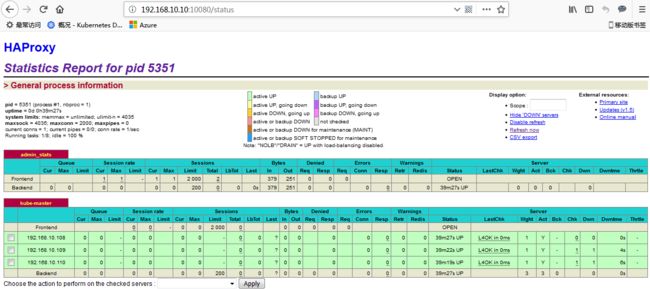

06-01. Deploy the high-availability components

global

log /dev/log local0

log /dev/log local1 notice

chroot /var/lib/haproxy

stats socket /var/run/haproxy-admin.sock mode 660 level admin

stats timeout 30s

user haproxy

group haproxy

daemon

nbproc 1

defaults

log global

timeout connect 5000

timeout client 10m

timeout server 10m

listen admin_stats

bind 0.0.0.0:10080

mode http

log 127.0.0.1 local0 err

stats refresh 30s

stats uri /status

stats realm welcome login\ Haproxy

stats auth along:along123

stats hide-version

stats admin if TRUE

listen kube-master

bind 0.0.0.0:8443

mode tcp

option tcplog

balance source

server 192.168.10.108 192.168.10.108:6443 check inter 2000 fall 2 rise 2 weight 1

server 192.168.10.109 192.168.10.109:6443 check inter 2000 fall 2 rise 2 weight 1

server 192.168.10.110 192.168.10.110:6443 check inter 2000 fall 2 rise 2 weight 1
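If the list of apiserver addresses changes, the three server lines in the listen kube-master block have to change with it. One way to keep them in step with NODE_IPS is to generate them; the sketch below is illustrative only, and haproxy_server_lines is a hypothetical name. The health-check options are copied from the configuration above.

```shell
# Hypothetical helper: print one haproxy "server" line per apiserver IP,
# pointing at the apiserver secure port 6443.
haproxy_server_lines() {
  local ip
  for ip in "$@"; do
    printf 'server %s %s:6443 check inter 2000 fall 2 rise 2 weight 1\n' "$ip" "$ip"
  done
}
```

Calling `haproxy_server_lines 192.168.10.108 192.168.10.109 192.168.10.110` reproduces the three lines above.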

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

# Install keepalived and haproxy

ssh root@${node_ip} "yum install -y keepalived haproxy"

# Distribute the configuration file

scp /etc/haproxy/haproxy.cfg root@${node_ip}:/etc/haproxy

# Start haproxy and check the service

ssh root@${node_ip} "systemctl restart haproxy"

ssh root@${node_ip} "systemctl enable haproxy.service"

ssh root@${node_ip} "systemctl status haproxy|grep Active"

# Check that haproxy is listening on port 8443

ssh root@${node_ip} "netstat -lnpt|grep haproxy"

done

global_defs {

router_id keepalived_hap

}

vrrp_script check-haproxy {

script "killall -0 haproxy"

interval 5

weight -30

}

vrrp_instance VI-kube-master {

state MASTER

priority 120

dont_track_primary

interface eth1

virtual_router_id 68

advert_int 3

track_script {

check-haproxy

}

virtual_ipaddress {

192.168.10.10

}

}

global_defs {

router_id keepalived_hap

}

vrrp_script check-haproxy {

script "killall -0 haproxy"

interval 5

weight -30

}

vrrp_instance VI-kube-master {

state BACKUP

priority 110 # use 100 on the second backup node

dont_track_primary

interface eth1

virtual_router_id 68

advert_int 3

track_script {

check-haproxy

}

virtual_ipaddress {

192.168.10.10

}

}
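The numbers in these two keepalived configurations are chosen so that a failed haproxy check moves the VIP. With a negative weight, keepalived lowers a node's priority by that amount while the tracked script fails, so the master drops from 120 to 90, which is below the backup's 110. The arithmetic as a sketch (effective_priority is a hypothetical name, and the function only models negative weights):

```shell
# Hypothetical helper: effective VRRP priority for a node.
#   $1 = configured priority, $2 = vrrp_script weight (negative),
#   $3 = "yes" if the tracked check currently succeeds, anything else if it fails.
effective_priority() {
  local base="$1" weight="$2" check_ok="$3"
  if [ "$check_ok" = "yes" ]; then
    echo "$base"
  else
    echo $(( base + weight ))
  fi
}
```

For the values above, `effective_priority 120 -30 no` gives 90 while `effective_priority 110 -30 yes` stays at 110, so the first backup takes over 192.168.10.10. The same reasoning explains why the second backup uses 100: it is still above 90, but below the first backup.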

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

VIP="192.168.10.10"

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

ssh root@${node_ip} "systemctl restart keepalived && systemctl enable keepalived"

ssh root@${node_ip} "systemctl status keepalived|grep Active"

ssh ${node_ip} "ping -c 1 ${VIP}"

done

06-02. Deploy the kube-apiserver component

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.10.108",

"192.168.10.109",

"192.168.10.110",

"192.168.10.10",

"10.96.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "4Paradigm"

}

]

}

kind: EncryptionConfig

apiVersion: v1

resources:

- resources:

- secrets

providers:

- aescbc:

keys:

- name: key1

secret: uS+YQXYoi1nxvI1pfSc2wRt64h/Iu5/4GxCuSvN+/jI=

- identity: {}
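The secret shown above is the author's sample value and should not be reused. The Kubernetes documentation suggests generating a random 32-byte key and base64-encoding it; a minimal sketch of that step follows (gen_encryption_key is a hypothetical name).

```shell
# Hypothetical helper: print a fresh base64-encoded 32-byte random key
# suitable for the aescbc "secret" field.
gen_encryption_key() {
  head -c 32 /dev/urandom | base64
}
```

The output is a 44-character string that can be pasted into the secret field. Every master node must use the same key, which is why the file is generated once and then copied to all nodes by the script that follows.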

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p /opt/k8s/cert/ && sudo chown -R k8s /opt/k8s/cert/"

scp /opt/k8s/cert/kubernetes*.pem k8s@${node_ip}:/opt/k8s/cert/

scp /opt/k8s/cert/encryption-config.yaml root@${node_ip}:/opt/k8s/

done

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

ExecStart=/opt/k8s/bin/kube-apiserver \

--enable-admission-plugins=Initializers,NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--anonymous-auth=false \

--experimental-encryption-provider-config=/opt/k8s/encryption-config.yaml \

--advertise-address=##NODE_IP## \

--bind-address=##NODE_IP## \

--insecure-port=0 \

--authorization-mode=Node,RBAC \

--runtime-config=api/all \

--enable-bootstrap-token-auth \

--service-cluster-ip-range=10.96.0.0/16 \

--service-node-port-range=1-32767 \

--tls-cert-file=/opt/k8s/cert/kubernetes.pem \

--tls-private-key-file=/opt/k8s/cert/kubernetes-key.pem \

--client-ca-file=/opt/k8s/cert/ca.pem \

--kubelet-client-certificate=/opt/k8s/cert/kubernetes.pem \

--kubelet-client-key=/opt/k8s/cert/kubernetes-key.pem \

--service-account-key-file=/opt/k8s/cert/ca-key.pem \

--etcd-cafile=/opt/k8s/cert/ca.pem \

--etcd-certfile=/opt/k8s/cert/kubernetes.pem \

--etcd-keyfile=/opt/k8s/cert/kubernetes-key.pem \

--etcd-servers=https://192.168.10.108:2379,https://192.168.10.109:2379,https://192.168.10.110:2379 \

--enable-swagger-ui=true \

--allow-privileged=true \

--apiserver-count=3 \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/var/log/kube-apiserver-audit.log \

--event-ttl=1h \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/opt/log/kubernetes \

--v=2

Restart=on-failure

RestartSec=5

Type=notify

User=k8s

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target
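One flag in this unit deserves a note: --service-node-port-range=1-32767 is much wider than the Kubernetes default of 30000-32767. That is why the nginx-ds test service in this document can be reached on NodePort 15131. A small sketch for checking a port against a range (port_in_range is a hypothetical name):

```shell
# Hypothetical helper: succeed when PORT lies inside a "low-high" range
# such as 1-32767 (both ends inclusive).
port_in_range() {
  local port="$1" range="$2"
  local low="${range%-*}" high="${range#*-}"
  [ "$port" -ge "$low" ] && [ "$port" -le "$high" ]
}
```

`port_in_range 15131 1-32767` succeeds, while `port_in_range 15131 30000-32767` fails. Keep in mind that such a wide range also covers ports the hosts themselves use (for example 6443, 8443, and 10250), so a narrower range may be the safer choice.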

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

# Replace the variables in the template file to create a systemd unit file for each node

for (( i=0; i < 3; i++ ));do

sed "s/##NODE_IP##/${NODE_IPS[i]}/" /opt/apiserver/kube-apiserver.service.template > /opt/apiserver/kube-apiserver-${NODE_IPS[i]}.service

done

# Start and check the kube-apiserver service

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

ssh root@${node_ip} "mkdir -p /opt/log/kubernetes && chown -R k8s /opt/log/kubernetes"

scp /opt/apiserver/kube-apiserver-${node_ip}.service root@${node_ip}:/etc/systemd/system/kube-apiserver.service

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-apiserver && systemctl restart kube-apiserver"

ssh root@${node_ip} "systemctl status kube-apiserver |grep 'Active:'"

done
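The `sed` loop above silently produces a broken unit file if the template path or the placeholder spelling is off. Here is a minimal sketch of a pre-flight check that could be run before the `scp` step. The function name `check_rendered` is my own and not part of the original procedure; it only assumes placeholders follow the `##NAME##` convention used in the template.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of the original steps): report every rendered
# file that still contains a ##NAME## style placeholder.
check_rendered() {
  local file status=0
  for file in "$@"; do
    # An upper-case name wrapped in double hashes means sed missed it.
    if grep -q '##[A-Z_][A-Z_]*##' "$file"; then
      echo "unreplaced placeholder in ${file}" >&2
      status=1
    fi
  done
  return "$status"
}

# Possible usage on the deploy host, before copying the files out:
#   check_rendered /opt/apiserver/kube-apiserver-*.service || exit 1
```

The function returns non-zero if any file still carries a placeholder, so it can gate the distribution loop.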

06-03. Deploy a highly available kube-controller-manager cluster

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"192.168.10.108",

"192.168.10.109",

"192.168.10.110"

],

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:kube-controller-manager",

"OU": "4Paradigm"

}

]

}

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: REDACTED

server: https://192.168.10.10:8443

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: system:kube-controller-manager

name: system:kube-controller-manager@kubernetes

current-context: system:kube-controller-manager@kubernetes

kind: Config

preferences: {}

users:

- name: system:kube-controller-manager

user:

client-certificate-data: REDACTED

client-key-data: REDACTED

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

ssh root@${node_ip} "chown k8s /opt/k8s/cert/*"

scp /opt/k8s/cert/kube-controller-manager*.pem k8s@${node_ip}:/opt/k8s/cert/

scp /root/.kube/kube-controller-manager.kubeconfig k8s@${node_ip}:/opt/k8s/

done

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

ExecStart=/opt/k8s/bin/kube-controller-manager \

--port=0 \

--secure-port=10252 \

--bind-address=127.0.0.1 \

--kubeconfig=/opt/k8s/kube-controller-manager.kubeconfig \

--service-cluster-ip-range=10.96.0.0/16 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/opt/k8s/cert/ca.pem \

--cluster-signing-key-file=/opt/k8s/cert/ca-key.pem \

--experimental-cluster-signing-duration=8760h \

--root-ca-file=/opt/k8s/cert/ca.pem \

--service-account-private-key-file=/opt/k8s/cert/ca-key.pem \

--leader-elect=true \

--feature-gates=RotateKubeletServerCertificate=true \

--controllers=*,bootstrapsigner,tokencleaner \

--horizontal-pod-autoscaler-use-rest-clients=true \

--horizontal-pod-autoscaler-sync-period=10s \

--tls-cert-file=/opt/k8s/cert/kube-controller-manager.pem \

--tls-private-key-file=/opt/k8s/cert/kube-controller-manager-key.pem \

--use-service-account-credentials=true \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/opt/log/kubernetes \

--v=2

Restart=on-failure

RestartSec=5

User=k8s

[Install]

WantedBy=multi-user.target

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

scp /opt/controller_manager/kube-controller-manager.service root@${node_ip}:/etc/systemd/system/

ssh root@${node_ip} "mkdir -p /opt/log/kubernetes && chown -R k8s /opt/log/kubernetes"

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-controller-manager && systemctl start kube-controller-manager"

done

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

ssh k8s@${node_ip} "systemctl status kube-controller-manager|grep Active"

done
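Right after `systemctl start`, a unit may still be in the `activating` state, so a single status check can give a misleading answer. The following is a minimal sketch of a retry wrapper. The name `retry` and its argument order are my own choices, not something from the original steps.

```shell
#!/usr/bin/env bash
# retry MAX DELAY CMD...: run CMD until it succeeds, at most MAX times,
# sleeping DELAY seconds after each failed attempt.
retry() {
  local max=$1 delay=$2 attempt
  shift 2
  for (( attempt = 1; attempt <= max; attempt++ )); do
    "$@" && return 0
    sleep "$delay"
  done
  return 1
}

# Possible usage inside the check loop (is-active exits 0 only when the unit is active):
#   retry 5 2 ssh k8s@${node_ip} "systemctl is-active --quiet kube-controller-manager"
```

The same wrapper would work for the kube-apiserver and kube-scheduler checks by changing the unit name.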

06-04. Deploy a highly available kube-scheduler cluster

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"192.168.10.108",

"192.168.10.109",

"192.168.10.110"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:kube-scheduler",

"OU": "4Paradigm"

}

]

}

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: REDACTED

server: https://192.168.10.10:8443

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: system:kube-scheduler

name: system:kube-scheduler@kubernetes

current-context: system:kube-scheduler@kubernetes

kind: Config

preferences: {}

users:

- name: system:kube-scheduler

user:

client-certificate-data: REDACTED

client-key-data: REDACTED

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

ssh root@${node_ip} "chown k8s /opt/k8s/cert/*"

scp /opt/k8s/cert/kube-scheduler*.pem k8s@${node_ip}:/opt/k8s/cert/

scp /root/.kube/kube-scheduler.kubeconfig k8s@${node_ip}:/opt/k8s/

done

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

ExecStart=/opt/k8s/bin/kube-scheduler \

--address=127.0.0.1 \

--kubeconfig=/opt/k8s/kube-scheduler.kubeconfig \

--leader-elect=true \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/opt/log/kubernetes \

--v=2

Restart=on-failure

RestartSec=5

User=k8s

[Install]

WantedBy=multi-user.target

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

scp /opt/scheduler/kube-scheduler.service root@${node_ip}:/etc/systemd/system/

ssh root@${node_ip} "mkdir -p /opt/log/kubernetes && chown -R k8s /opt/log/kubernetes"

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-scheduler && systemctl start kube-scheduler"

done

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

ssh k8s@${node_ip} "systemctl status kube-scheduler|grep Active"

done

07. Deploy the worker nodes

07-01. Deploy the docker component

[Unit]

Description=Docker Application Container Engine

Documentation=http://docs.docker.io

[Service]

Environment="PATH=/opt/k8s/bin:/bin:/sbin:/usr/bin:/usr/sbin"

EnvironmentFile=-/run/flannel/docker

ExecStart=/opt/k8s/bin/dockerd --log-level=error $DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP $MAINPID

Restart=on-failure

RestartSec=5

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

Delegate=yes

KillMode=process

[Install]

WantedBy=multi-user.target

{

"registry-mirrors": ["https://hub-mirror.c.163.com", "https://docker.mirrors.ustc.edu.cn"],

"max-concurrent-downloads": 20

}

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

scp /root/docker/docker* k8s@${node_ip}:/opt/k8s/bin/

ssh k8s@${node_ip} "chmod +x /opt/k8s/bin/*"

scp /opt/docker/docker.service root@${node_ip}:/etc/systemd/system/

ssh root@${node_ip} "mkdir -p /opt/docker/"

scp /opt/docker/docker-daemon.json root@${node_ip}:/opt/docker/daemon.json

done

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

ssh root@${node_ip} "systemctl stop firewalld && systemctl disable firewalld"

ssh root@${node_ip} "/usr/sbin/iptables -F && /usr/sbin/iptables -X && /usr/sbin/iptables -F -t nat && /usr/sbin/iptables -X -t nat"

ssh root@${node_ip} "/usr/sbin/iptables -P FORWARD ACCEPT"

ssh root@${node_ip} "systemctl daemon-reload && systemctl enable docker && systemctl restart docker"

ssh root@${node_ip} 'for intf in /sys/devices/virtual/net/docker0/brif/*; do echo 1 > $intf/hairpin_mode; done'

ssh root@${node_ip} "sudo sysctl -p /etc/sysctl.d/kubernetes.conf"

# Check the service status

ssh k8s@${node_ip} "systemctl status docker|grep Active"

# Check the docker0 bridge

ssh k8s@${node_ip} "/usr/sbin/ip addr show flannel.1 && /usr/sbin/ip addr show docker0"

done

07-02. Deploy the kubelet component

NODE_NAMES=("kube-master" "kube-node1" "kube-node2")

for node_name in ${NODE_NAMES[@]};do

echo ">>> ${node_name}"

# Create the token

export BOOTSTRAP_TOKEN=$(kubeadm token create \

--description kubelet-bootstrap-token \

--groups system:bootstrappers:${node_name} \

--kubeconfig ~/.kube/config)

# Set the cluster parameters (use ${HOME}: bash does not expand ~ after "=" inside an option)

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/k8s/cert/ca.pem \

--embed-certs=true \

--server=https://192.168.10.10:8443 \

--kubeconfig=${HOME}/.kube/kubelet-bootstrap-${node_name}.kubeconfig

# Set the client authentication parameters

kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=${HOME}/.kube/kubelet-bootstrap-${node_name}.kubeconfig

# Set the context parameters

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=${HOME}/.kube/kubelet-bootstrap-${node_name}.kubeconfig

# Set the default context

kubectl config use-context default --kubeconfig=${HOME}/.kube/kubelet-bootstrap-${node_name}.kubeconfig

done
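If `kubeadm token create` fails (for example because the apiserver is unreachable), `BOOTSTRAP_TOKEN` ends up empty and the loop above still writes a kubeconfig, one that cannot authenticate. Below is a minimal sketch of a sanity check. The function name `is_bootstrap_token` is my own; the pattern is the standard Kubernetes bootstrap token format of a 6-character ID and a 16-character secret, joined by a dot.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if $1 looks like a bootstrap token,
# i.e. matches [a-z0-9]{6}.[a-z0-9]{16}.
is_bootstrap_token() {
  [[ $1 =~ ^[a-z0-9]{6}\.[a-z0-9]{16}$ ]]
}

# Possible usage right after the "export BOOTSTRAP_TOKEN=..." step in the loop:
#   is_bootstrap_token "${BOOTSTRAP_TOKEN}" || {
#     echo "token creation failed for ${node_name}" >&2
#     exit 1
#   }
```

This only checks the shape of the string. Whether the token is still valid on the cluster can be seen with `kubeadm token list`.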

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"authentication": {

"x509": {

"clientCAFile": "/opt/k8s/cert/ca.pem"

},

"webhook": {

"enabled": true,

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"address": "##NODE_IP##",

"port": 10250,

"readOnlyPort": 0,

"cgroupDriver": "cgroupfs",

"hairpinMode": "promiscuous-bridge",

"serializeImagePulls": false,

"featureGates": {

"RotateKubeletClientCertificate": true,

"RotateKubeletServerCertificate": true

},

"clusterDomain": "cluster.local",

"clusterDNS": ["10.96.0.2"]

}
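The `clusterDNS` address has to fall inside the range given to kube-apiserver with `--service-cluster-ip-range` (10.96.0.0/16 in this document), otherwise the DNS Service can never be assigned that IP. Here is a minimal sketch for checking this before distributing the file. The helper names `ip_to_int` and `ip_in_cidr` are my own, and the sketch handles plain IPv4 only.

```shell
#!/usr/bin/env bash
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Succeed if IPv4 address $1 lies inside CIDR block $2 (e.g. 10.96.0.0/16).
ip_in_cidr() {
  local ip=$1 net=${2%/*} bits=${2#*/}
  # Build the netmask from the prefix length, truncated to 32 bits.
  local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$ip") & mask) == ($(ip_to_int "$net") & mask) ))
}

# Possible usage:
#   ip_in_cidr 10.96.0.2 10.96.0.0/16 && echo "clusterDNS is inside the service range"
```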

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

NODE_NAMES=("kube-master" "kube-node1" "kube-node2")

for node_name in ${NODE_NAMES[@]};do

echo ">>> ${node_name}"

scp ~/.kube/kubelet-bootstrap-${node_name}.kubeconfig k8s@${node_name}:/opt/k8s/kubelet-bootstrap.kubeconfig

done

for node_ip in ${NODE_IPS[@]};do

echo ">>> ${node_ip}"

sed -e "s/##NODE_IP##/${node_ip}/" /opt/kubelet/kubelet.config.json.template > /opt/kubelet/kubelet.config-${node_ip}.json

scp /opt/kubelet/kubelet.config-${node_ip}.json root@${node_ip}:/opt/k8s/kubelet.config.json

done

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/opt/lib/kubelet

ExecStart=/opt/k8s/bin/kubelet \

--bootstrap-kubeconfig=/opt/k8s/kubelet-bootstrap.kubeconfig \

--cert-dir=/opt/k8s/cert \

--kubeconfig=/opt/k8s/kubelet.kubeconfig \

--config=/opt/k8s/kubelet.config.json \

--hostname-override=##NODE_NAME## \

--pod-infra-container-image=registry.access.redhat.com/rhel7/pod-infrastructure:latest \

--allow-privileged=true \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/opt/log/kubernetes \

--v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

NODE_IPS=("192.168.10.108" "192.168.10.109" "192.168.10.110")

NODE_NAMES=("kube-master" "kube-node1" "kube-node2")

# Distribute the kubelet systemd unit files

for node_name in ${NODE_NAMES[@]};do

echo ">>> ${node_name}"

sed -e "s/##NODE_NAME##/${node_name}/" /opt/kubelet/kubelet.service.template > /opt/kubelet/kubelet-${node_name}.service

scp /opt/kubelet/kubelet-${node_name}.service root@${node_name}:/etc/systemd/system/kubelet.service

done

# Start the kubelet service and check its status

for node_ip in ${NODE_IPS[@]};do

ssh root@${node_ip} "mkdir -p /opt/lib/kubelet"

ssh root@${node_ip} "/usr/sbin/swapoff -a"

ssh root@${node_ip} "mkdir -p /opt/log/kubernetes && chown -R k8s /opt/log/kubernetes"