[kubernetes/k8s source code analysis] kube-controller-manager: the taint part of the node controller

Kubernetes v1.12.1

For a source code analysis of the node controller in kube-controller-manager, see:

https://blog.csdn.net/zhonglinzhang/article/details/77767847

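This article analyzes the source code of the node-tainting part.

Node affinity is a property of pods (either a preference or a hard requirement) that attracts them to a particular class of nodes. Taints are the opposite: they allow a node to repel a particular class of pods.

Taints and tolerations work together to keep pods from being scheduled onto unsuitable nodes. One or more taints can be applied to a node, which means the node will not accept any pod that does not tolerate those taints. A toleration applied to a pod allows (but does not require) the pod to be scheduled onto a node with matching taints.

Taints can be added to a node from the command line: kubectl taint nodes node1 key=value:NoSchedule

- NoSchedule: affects scheduling only; pods already running on the node are not affected.

- NoExecute: affects scheduling and also pods already running on the node; pods that do not tolerate the taint are evicted.

- PreferNoSchedule: the scheduler tries to avoid placing pods on the node, but this is not guaranteed.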
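The matching rule between taints and tolerations can be sketched in a few lines of Go. This is a minimal illustration, not the Kubernetes implementation: Taint and Toleration below are simplified stand-ins for v1.Taint and v1.Toleration, and the helper names tolerates and allTolerated are hypothetical.

```go
package main

import "fmt"

// Taint and Toleration are simplified stand-ins for the v1 API types
// (assumption: only the fields needed for matching are modelled).
type Taint struct {
	Key, Value, Effect string
}

type Toleration struct {
	Key      string
	Operator string // "Equal" (the default when empty) or "Exists"
	Value    string
	Effect   string // an empty effect matches every effect
}

// tolerates reports whether a single toleration matches a single taint.
func tolerates(tol Toleration, t Taint) bool {
	if tol.Effect != "" && tol.Effect != t.Effect {
		return false
	}
	if tol.Key != "" && tol.Key != t.Key {
		return false
	}
	switch tol.Operator {
	case "Exists":
		return true
	case "", "Equal":
		return tol.Value == t.Value
	default:
		return false
	}
}

// allTolerated reports whether every taint is matched by at least one toleration.
func allTolerated(taints []Taint, tols []Toleration) bool {
	for _, t := range taints {
		matched := false
		for _, tol := range tols {
			if tolerates(tol, t) {
				matched = true
				break
			}
		}
		if !matched {
			return false
		}
	}
	return true
}

func main() {
	taints := []Taint{{Key: "key", Value: "value", Effect: "NoExecute"}}
	fmt.Println(allTolerated(taints, nil))                                           // false
	fmt.Println(allTolerated(taints, []Toleration{{Key: "key", Operator: "Exists"}})) // true
}
```

A pod without any toleration is rejected by the tainted node, while a pod carrying an Exists toleration for the same key is accepted.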

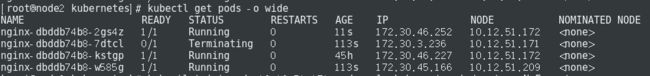

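Deploy nginx with three replicas in the cluster, as follows:

Add a taint with the NoExecute effect to node 10.12.51.171:

Check the pods: the pods on node 10.12.51.171 do not tolerate the taint, so they are terminated.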

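The NoExecuteTaintManager struct

It watches for taint and toleration changes and deletes pods in response. It implements the NoExecute effect, which affects scheduling as well as pods already running on the node: pods that do not tolerate the taint are evicted.

- taintedNodes: records the NoExecute taints present on each node

- nodeUpdateChannels: each updateItem taken from nodeUpdateQueue is sent to a nodeUpdateChannel, from which the taint manager reads the corresponding node update info

- podUpdateChannels: each updateItem taken from podUpdateQueue is sent to a podUpdateChannel, from which the taint manager reads the corresponding pod update info

- nodeUpdateQueue: node updates observed by the node controller's watch are pushed onto nodeUpdateQueue

- podUpdateQueue: pod updates observed by the node controller's watch are pushed onto podUpdateQueue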

// NoExecuteTaintManager listens to Taint/Toleration changes and is responsible for removing Pods

// from Nodes tainted with NoExecute Taints.

type NoExecuteTaintManager struct {

client clientset.Interface

recorder record.EventRecorder

taintEvictionQueue *TimedWorkerQueue

// keeps a map from nodeName to all noExecute taints on that Node

taintedNodesLock sync.Mutex

taintedNodes map[string][]v1.Taint

nodeUpdateChannels []chan *nodeUpdateItem

podUpdateChannels []chan *podUpdateItem

nodeUpdateQueue workqueue.Interface

podUpdateQueue workqueue.Interface

}

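1. Taint manager initialization

Path: the NewNodeLifecycleController function in pkg/controller/nodelifecycle/node_lifecycle_controller.go

- --enable-taint-manager: if set to true, NoExecute taints are enabled, and every pod that does not tolerate such a taint is evicted from the nodes that carry it (default true)

NewNoExecuteTaintManager is called to initialize the taintManager field of the node controller object.

The node informer's event handlers are registered, including taintManager.NodeUpdated.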

if nc.runTaintManager {

nc.taintManager = scheduler.NewNoExecuteTaintManager(kubeClient)

nodeInformer.Informer().AddEventHandler(cache.ResourceEventHandlerFuncs{

AddFunc: nodeutil.CreateAddNodeHandler(func(node *v1.Node) error {

nc.taintManager.NodeUpdated(nil, node)

return nil

}),

UpdateFunc: nodeutil.CreateUpdateNodeHandler(func(oldNode, newNode *v1.Node) error {

nc.taintManager.NodeUpdated(oldNode, newNode)

return nil

}),

DeleteFunc: nodeutil.CreateDeleteNodeHandler(func(node *v1.Node) error {

nc.taintManager.NodeUpdated(node, nil)

return nil

}),

})

}

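2. The NewNoExecuteTaintManager function

It creates the taint controller's event broadcaster (eventBroadcaster).

It then initializes the NoExecuteTaintManager object, creates the queues, and sets deletePodHandler as the function that processes the eviction queue.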

// NewNoExecuteTaintManager creates a new NoExecuteTaintManager that will use passed clientset to

// communicate with the API server.

func NewNoExecuteTaintManager(c clientset.Interface) *NoExecuteTaintManager {

eventBroadcaster := record.NewBroadcaster()

recorder := eventBroadcaster.NewRecorder(scheme.Scheme, v1.EventSource{Component: "taint-controller"})

eventBroadcaster.StartLogging(glog.Infof)

if c != nil {

glog.V(0).Infof("Sending events to api server.")

eventBroadcaster.StartRecordingToSink(&v1core.EventSinkImpl{Interface: c.CoreV1().Events("")})

} else {

glog.Fatalf("kubeClient is nil when starting NodeController")

}

tm := &NoExecuteTaintManager{

client: c,

recorder: recorder,

taintedNodes: make(map[string][]v1.Taint),

nodeUpdateQueue: workqueue.New(),

podUpdateQueue: workqueue.New(),

}

tm.taintEvictionQueue = CreateWorkerQueue(deletePodHandler(c, tm.emitPodDeletionEvent))

return tm

}

3. The Run function

The node controller's Run function calls taintManager.Run, which runs the main loop (covered in section 3.1).

// Run starts an asynchronous loop that monitors the status of cluster nodes.

func (nc *Controller) Run(stopCh <-chan struct{}) {

if nc.runTaintManager {

go nc.taintManager.Run(stopCh)

}

if nc.taintNodeByCondition {

// Close node update queue to cleanup go routine.

defer nc.nodeUpdateQueue.ShutDown()

// Start workers to update NoSchedule taint for nodes.

for i := 0; i < scheduler.UpdateWorkerSize; i++ {

// Thanks to "workqueue", each worker just need to get item from queue, because

// the item is flagged when got from queue: if new event come, the new item will

// be re-queued until "Done", so no more than one worker handle the same item and

// no event missed.

go wait.Until(nc.doNoScheduleTaintingPassWorker, time.Second, stopCh)

}

}

if nc.useTaintBasedEvictions {

// Handling taint based evictions. Because we don't want a dedicated logic in TaintManager for NC-originated

// taints and we normally don't rate limit evictions caused by taints, we need to rate limit adding taints.

go wait.Until(nc.doNoExecuteTaintingPass, scheduler.NodeEvictionPeriod, stopCh)

} else {

// Managing eviction of nodes:

// When we delete pods off a node, if the node was not empty at the time we then

// queue an eviction watcher. If we hit an error, retry deletion.

go wait.Until(nc.doEvictionPass, scheduler.NodeEvictionPeriod, stopCh)

}

<-stopCh

}

3.1 The taint manager's Run function

Path: pkg/controller/nodelifecycle/scheduler/taint_manager.go

3.1.1 Initialize nodeUpdateChannels and podUpdateChannels with eight buffered channels each, one per worker

// Run starts NoExecuteTaintManager which will run in loop until `stopCh` is closed.

func (tc *NoExecuteTaintManager) Run(stopCh <-chan struct{}) {

glog.V(0).Infof("Starting NoExecuteTaintManager")

for i := 0; i < UpdateWorkerSize; i++ {

tc.nodeUpdateChannels = append(tc.nodeUpdateChannels, make(chan *nodeUpdateItem, NodeUpdateChannelSize))

tc.podUpdateChannels = append(tc.podUpdateChannels, make(chan *podUpdateItem, podUpdateChannelSize))

}

3.1.2 A goroutine loops, taking items from nodeUpdateQueue and sending them to nodeUpdateChannels

// Functions that are responsible for taking work items out of the workqueues and putting them

// into channels.

go func(stopCh <-chan struct{}) {

for {

item, shutdown := tc.nodeUpdateQueue.Get()

if shutdown {

break

}

nodeUpdate := item.(*nodeUpdateItem)

hash := hash(nodeUpdate.name(), UpdateWorkerSize)

select {

case <-stopCh:

tc.nodeUpdateQueue.Done(item)

return

case tc.nodeUpdateChannels[hash] <- nodeUpdate:

}

tc.nodeUpdateQueue.Done(item)

}

}(stopCh)

3.1.3 A goroutine loops, taking items from podUpdateQueue and sending them to podUpdateChannels

go func(stopCh <-chan struct{}) {

for {

item, shutdown := tc.podUpdateQueue.Get()

if shutdown {

break

}

podUpdate := item.(*podUpdateItem)

hash := hash(podUpdate.nodeName(), UpdateWorkerSize)

select {

case <-stopCh:

tc.podUpdateQueue.Done(item)

return

case tc.podUpdateChannels[hash] <- podUpdate:

}

tc.podUpdateQueue.Done(item)

}

}(stopCh)

3.1.4 Start 8 worker goroutines

wg := sync.WaitGroup{}

wg.Add(UpdateWorkerSize)

for i := 0; i < UpdateWorkerSize; i++ {

go tc.worker(i, wg.Done, stopCh)

}

wg.Wait()
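The dispatch step above picks a channel with hash(name, UpdateWorkerSize), so every update for a given node lands on the same worker and is processed in order. The hash helper is not shown in this article; the sketch below assumes an FNV-1a hash purely for illustration, and the function name shard and the node names are hypothetical.

```go
package main

import (
	"fmt"
	"hash/fnv"
)

// shard maps a node name onto one of n workers. Assumption: FNV-1a is used
// here for illustration; any stable hash gives the same property.
func shard(name string, n int) int {
	h := fnv.New32a()
	h.Write([]byte(name)) // Write on a hash.Hash never returns an error
	return int(h.Sum32() % uint32(n))
}

func main() {
	const workers = 8 // matches the 8 workers started in 3.1.4
	for _, node := range []string{"node-a", "node-b", "node-a"} {
		// The same name always maps to the same worker index.
		fmt.Printf("%s -> worker %d\n", node, shard(node, workers))
	}
}
```

Because pod updates are sharded by the name of the node the pod runs on, a pod update and the update of its node are also handled by the same worker.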

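4. The worker function

The reason for all this machinery is that node updates must be processed with higher priority than pod updates.

handleNodeUpdate is called to process a node update.

handlePodUpdate is called to process a pod update.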
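The prioritization relies on a nested select with a default branch. The following standalone sketch isolates that pattern; drainOrder and the item names are hypothetical, and unlike the real worker it returns once both channels are empty instead of waiting on a stop channel.

```go
package main

import "fmt"

// drainOrder replays the worker's selection logic over two buffered channels
// and returns the order in which items are handled. high and low are
// hypothetical stand-ins for the node and pod update channels.
func drainOrder(high, low chan string) []string {
	var order []string
	for {
		select {
		case h := <-high:
			order = append(order, h)
		case l := <-low:
			// Before handling a low-priority item, empty the high-priority channel.
		priority:
			for {
				select {
				case h := <-high:
					order = append(order, h)
				default:
					break priority
				}
			}
			order = append(order, l)
		default:
			return order // both channels are empty
		}
	}
}

func main() {
	nodes := make(chan string, 4)
	pods := make(chan string, 4)
	pods <- "pod-1"
	nodes <- "node-1"
	nodes <- "node-2"
	fmt.Println(drainOrder(nodes, pods)) // [node-1 node-2 pod-1]
}
```

Even though the pod update was queued first, both node updates are handled before it, whichever case the outer select happens to pick.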

func (tc *NoExecuteTaintManager) worker(worker int, done func(), stopCh <-chan struct{}) {

defer done()

// When processing events we want to prioritize Node updates over Pod updates,

// as NodeUpdates that interest NoExecuteTaintManager should be handled as soon as possible -

// we don't want user (or system) to wait until PodUpdate queue is drained before it can

// start evicting Pods from tainted Nodes.

for {

select {

case <-stopCh:

return

case nodeUpdate := <-tc.nodeUpdateChannels[worker]:

tc.handleNodeUpdate(nodeUpdate)

case podUpdate := <-tc.podUpdateChannels[worker]:

// If we found a Pod update we need to empty Node queue first.

priority:

for {

select {

case nodeUpdate := <-tc.nodeUpdateChannels[worker]:

tc.handleNodeUpdate(nodeUpdate)

default:

break priority

}

}

// After Node queue is emptied we process podUpdate.

tc.handlePodUpdate(podUpdate)

}

}

}

4.1 handleNodeUpdate

4.1.1 If the node has been deleted, remove its entry from taintedNodes

func (tc *NoExecuteTaintManager) handleNodeUpdate(nodeUpdate *nodeUpdateItem) {

// Delete

if nodeUpdate.newNode == nil {

node := nodeUpdate.oldNode

glog.V(4).Infof("Noticed node deletion: %#v", node.Name)

tc.taintedNodesLock.Lock()

defer tc.taintedNodesLock.Unlock()

delete(tc.taintedNodes, node.Name)

return

}

4.1.2 If the node has been created or updated

// Create or Update

glog.V(4).Infof("Noticed node update: %#v", nodeUpdate)

node := nodeUpdate.newNode

taints := nodeUpdate.newTaints

func() {

tc.taintedNodesLock.Lock()

defer tc.taintedNodesLock.Unlock()

glog.V(4).Infof("Updating known taints on node %v: %v", node.Name, taints)

if len(taints) == 0 {

delete(tc.taintedNodes, node.Name)

} else {

tc.taintedNodes[node.Name] = taints

}

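}()

4.1.3 Call getPodsAssignedToNode to get all pods on the node

If the taints on the node have been removed, all pending evictions of pods on that node are cancelled.

Otherwise tc.processPodOnNode is called for each pod to handle its eviction based on the node's taints and the pod's tolerations.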

pods, err := getPodsAssignedToNode(tc.client, node.Name)

if err != nil {

glog.Errorf(err.Error())

return

}

if len(pods) == 0 {

return

}

// Short circuit, to make this controller a bit faster.

if len(taints) == 0 {

glog.V(4).Infof("All taints were removed from the Node %v. Cancelling all evictions...", node.Name)

for i := range pods {

tc.cancelWorkWithEvent(types.NamespacedName{Namespace: pods[i].Namespace, Name: pods[i].Name})

}

return

}

now := time.Now()

for i := range pods {

pod := &pods[i]

podNamespacedName := types.NamespacedName{Namespace: pod.Namespace, Name: pod.Name}

tc.processPodOnNode(podNamespacedName, node.Name, pod.Spec.Tolerations, taints, now)

}

5. The processPodOnNode function

It handles the eviction of a pod on the node based on the node's taints and the pod's tolerations.

5.1 If the node has no taints, cancel the pod's pending eviction

func (tc *NoExecuteTaintManager) processPodOnNode(

podNamespacedName types.NamespacedName,

nodeName string,

tolerations []v1.Toleration,

taints []v1.Taint,

now time.Time,

) {

if len(taints) == 0 {

tc.cancelWorkWithEvent(podNamespacedName)

}

5.1.1 CancelWork cancels a pending taint-based pod eviction

// CancelWork removes scheduled function execution from the queue. Returns true if work was cancelled.

func (q *TimedWorkerQueue) CancelWork(key string) bool {

q.Lock()

defer q.Unlock()

worker, found := q.workers[key]

result := false

if found {

glog.V(4).Infof("Cancelling TimedWorkerQueue item %v at %v", key, time.Now())

if worker != nil {

result = true

worker.Cancel()

}

delete(q.workers, key)

}

return result

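}

5.2 Compare the node's taints with the pod's tolerations to determine whether the pod tolerates all of them

- If not every taint is tolerated, cancel any scheduled work and call AddWork to create a worker that fires immediately; the deletePodHandler registered on tc.taintEvictionQueue then deletes the pod, and the function returns.

- Otherwise, take the smallest tolerationSeconds among the matching tolerations as minTolerationTime (an empty list of tolerations yields 0).

- If minTolerationTime is negative, the pod tolerates the taints forever and nothing further is scheduled.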

allTolerated, usedTolerations := v1helper.GetMatchingTolerations(taints, tolerations)

if !allTolerated {

glog.V(2).Infof("Not all taints are tolerated after update for Pod %v on %v", podNamespacedName.String(), nodeName)

// We're canceling scheduled work (if any), as we're going to delete the Pod right away.

tc.cancelWorkWithEvent(podNamespacedName)

tc.taintEvictionQueue.AddWork(NewWorkArgs(podNamespacedName.Name, podNamespacedName.Namespace), time.Now(), time.Now())

return

}

minTolerationTime := getMinTolerationTime(usedTolerations)

// getMinTolerationTime returns negative value to denote infinite toleration.

if minTolerationTime < 0 {

glog.V(4).Infof("New tolerations for %v tolerate forever. Scheduled deletion won't be cancelled if already scheduled.", podNamespacedName.String())

return

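}

5.3 Schedule the eviction for the trigger time

If an eviction is already scheduled for the pod, it is kept when it would fire earlier than the newly computed trigger time; otherwise it is cancelled. AddWork is then called to create a worker, which runs the deletePodHandler registered on tc.taintEvictionQueue to delete the pod once the trigger time is reached.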

startTime := now

triggerTime := startTime.Add(minTolerationTime)

scheduledEviction := tc.taintEvictionQueue.GetWorkerUnsafe(podNamespacedName.String())

if scheduledEviction != nil {

startTime = scheduledEviction.CreatedAt

if startTime.Add(minTolerationTime).Before(triggerTime) {

return

}

tc.cancelWorkWithEvent(podNamespacedName)

}

tc.taintEvictionQueue.AddWork(NewWorkArgs(podNamespacedName.Name, podNamespacedName.Namespace), startTime, triggerTime)

6. The deletePodHandler function

This one is straightforward: it ends up calling the pod delete API, retrying a few times on failure.

func deletePodHandler(c clientset.Interface, emitEventFunc func(types.NamespacedName)) func(args *WorkArgs) error {

return func(args *WorkArgs) error {

ns := args.NamespacedName.Namespace

name := args.NamespacedName.Name

glog.V(0).Infof("NoExecuteTaintManager is deleting Pod: %v", args.NamespacedName.String())

if emitEventFunc != nil {

emitEventFunc(args.NamespacedName)

}

var err error

for i := 0; i < retries; i++ {

err = c.CoreV1().Pods(ns).Delete(name, &metav1.DeleteOptions{})

if err == nil {

break

}

time.Sleep(10 * time.Millisecond)

}

return err

}

}

7. The handlePodUpdate function

7.1 If the pod has been deleted

cancelWorkWithEvent is called to cancel the pod's pending eviction.

func (tc *NoExecuteTaintManager) handlePodUpdate(podUpdate *podUpdateItem) {

// Delete

if podUpdate.newPod == nil {

pod := podUpdate.oldPod

podNamespacedName := types.NamespacedName{Namespace: pod.Namespace, Name: pod.Name}

glog.V(4).Infof("Noticed pod deletion: %#v", podNamespacedName)

tc.cancelWorkWithEvent(podNamespacedName)

return

}

7.2 If the pod has been created or updated

The pod's eviction is handled based on the taints recorded for its node and the pod's tolerations.

// Create or Update

pod := podUpdate.newPod

podNamespacedName := types.NamespacedName{Namespace: pod.Namespace, Name: pod.Name}

glog.V(4).Infof("Noticed pod update: %#v", podNamespacedName)

nodeName := pod.Spec.NodeName

if nodeName == "" {

return

}

taints, ok := func() ([]v1.Taint, bool) {

tc.taintedNodesLock.Lock()

defer tc.taintedNodesLock.Unlock()

taints, ok := tc.taintedNodes[nodeName]

return taints, ok

}()

// It's possible that Node was deleted, or Taints were removed before, which triggered

// eviction cancelling if it was needed.

if !ok {

return

}

tc.processPodOnNode(podNamespacedName, nodeName, podUpdate.newTolerations, taints, time.Now())

References:

https://kubernetes.io/zh/docs/concepts/configuration/taint-and-toleration/