- PyTorch & TensorFlow速成复习:从基础语法到模型部署实战(附FPGA移植衔接)

阿牛的药铺

算法移植部署pytorchtensorflowfpga开发

PyTorch&TensorFlow速成复习:从基础语法到模型部署实战(附FPGA移植衔接)引言:为什么算法移植工程师必须掌握框架基础?针对光学类产品算法FPGA移植岗位需求(如可见光/红外图像处理),深度学习框架是算法落地的"桥梁"——既要用PyTorch/TensorFlow验证算法可行性,又要将训练好的模型(如CNN、目标检测)转换为FPGA可部署的格式(ONNX、TFLite)。本文采用"

- Python的科学计算库NumPy(一)

linlin_1998

pythonnumpy开发语言

NumPy(NumericalPython)是Python中最基础、最重要的科学计算库之一,提供了高性能的多维数组(ndarray)对象和大量数学函数,是许多数据科学、机器学习库(如Pandas、SciPy、TensorFlow等)的基础依赖。1.创建一个numpy里面的一维数组importnumpyasnp###通过array方法创建一个ndarrayarray1=np.array([1,2,3

- 使用tensorflow的多项式回归的例子(二)

lishaoan77

tensorflowtensorflow回归人工智能多项式回归

例2importtensorflowastfimportnumpyasnpimportmatplotlib.pyplotaspltplt.style.use('default')#importtensorflow.contrib.eagerastfe#fromgoogle.colabimportfiles#tf.enable_eager_execution()x=np.arange(0,5,0.1

- 使用tensorflow的线性回归的例子(七)

lishaoan77

tensorflowtensorflow线性回归人工智能

L1与L2损失这个脚本展示如何用TensorFlow求解线性回归。在算法的收敛性中,理解损失函数的影响是很重要的。这里我们展示L1和L2损失函数是如何影响线性回归的收敛性的。我们使用iris数据集,但是我们将改变损失函数和学习速率来看收敛性的改变。importmatplotlib.pyplotaspltimportnumpyasnpimporttensorflowastffromsklearnim

- 使用tensorflow的线性回归的例子(十二)

lishaoan77

tensorflowtensorflow线性回归人工智能戴明回归

DemingRegression这里展示如何用TensorFlow求解线性戴明回归。=+y=Ax+b我们用iris数据集,特别是:y=SepalLength且x=PetalWidth。戴明回归Demingregression也称为totalleastsquares,其中我们最小化从预测线到实际点(x,y)的最短的距离。最小二乘线性回归最小化与预测线的垂直距离,戴明回归最小化与预测线的总的距离,这种

- 第八周 tensorflow实现猫狗识别

降花绘

365天深度学习tensorflow系列tensorflow深度学习人工智能

本文为365天深度学习训练营内部限免文章(版权归K同学啊所有)**参考文章地址:[TensorFlow入门实战|365天深度学习训练营-第8周:猫狗识别(训练营内部成员可读)]**作者:K同学啊文章目录一、本周学习内容:1、自己搭建VGG16网络2、了解model.train_on_batch()3、了解tqdm,并使用tqdm实现可视化进度条二、前言三、电脑环境四、前期准备1、导入相关依赖项2、

- 深度学习实战-使用TensorFlow与Keras构建智能模型

程序员Gloria

Python超入门TensorFlowpython

深度学习实战-使用TensorFlow与Keras构建智能模型深度学习已经成为现代人工智能的重要组成部分,而Python则是实现深度学习的主要编程语言之一。本文将探讨如何使用TensorFlow和Keras构建深度学习模型,包括必要的代码实例和详细的解析。1.深度学习简介深度学习是机器学习的一个分支,使用多层神经网络来学习和表示数据中的复杂模式。其广泛应用于图像识别、自然语言处理、推荐系统等领域。

- 微信小程序——扫码功能简单实现

mon_star°

智慧门店小程序

微信小程序中二维码扫描的简单实现,很容易的。首先在.wxml写一个text组件,通过点击这个text来实现扫码功能。{{scanCode}}bindtap是给text绑定的点击事件;{{scanCode}}给这个text赋值,赋值的数据在.js文件的data里初始化。.js文件Page({/***页面的初始数据*/data:{scanCode:'扫码',},/***生命周期函数--监听页面加载*/

- Python结合TensorFlow实现图像风格迁移

Python编程之道

Python人工智能与大数据Python编程之道pythontensorflow开发语言ai

Python结合TensorFlow实现图像风格迁移关键词:Python、TensorFlow、图像风格迁移、神经网络、内容损失、风格损失摘要:本文将带领大家探索如何使用Python结合TensorFlow来实现图像风格迁移。图像风格迁移是一项神奇的技术,它能将一幅图像的风格应用到另一幅图像上。我们会从基础概念讲起,解释图像风格迁移背后的原理,通过Python代码详细展示实现过程,还会探讨实际应用

- 量化价值投资中的深度学习技术:TensorFlow实战

量化价值投资中的深度学习技术:TensorFlow实战关键词:量化价值投资,深度学习,TensorFlow,股票预测,因子模型,LSTM神经网络,量化策略摘要:本文将带你走进"量化价值投资"与"深度学习"的交叉地带,用小学生都能听懂的语言解释复杂概念,再通过手把手的TensorFlow实战案例,教你如何用AI技术挖掘股票市场中的价值宝藏。我们会从传统价值投资的痛点出发,揭示深度学习如何像"超级分析

- AI人工智能遇上TensorFlow:技术融合新趋势

AI大模型应用之禅

人工智能tensorflowpythonai

AI人工智能遇上TensorFlow:技术融合新趋势关键词:人工智能、TensorFlow、深度学习、神经网络、机器学习、技术融合、AI开发摘要:本文深入探讨了人工智能技术与TensorFlow框架的融合发展趋势。我们将从基础概念出发,详细分析TensorFlow在AI领域的核心优势,包括其架构设计、算法实现和实际应用。文章包含丰富的技术细节,如神经网络原理、TensorFlow核心算法实现、数学

- 【零基础学AI】第30讲:生成对抗网络(GAN)实战 - 手写数字生成

1989

0基础学AI人工智能生成对抗网络神经网络python机器学习近邻算法深度学习

本节课你将学到GAN的基本原理和工作机制使用PyTorch构建生成器和判别器DCGAN架构实现技巧训练GAN模型的实用技巧开始之前环境要求Python3.8+需要安装的包:pipinstalltorchtorchvisionmatplotlibnumpyGPU推荐(可大幅加速训练)前置知识第21讲TensorFlow基础第23讲神经网络原理基本PyTorch使用经验核心概念什么是GAN?GAN就像

- 【深度学习-Day 35】实战图像数据增强:用PyTorch和TensorFlow扩充你的数据集

吴师兄大模型

深度学习入门到精通深度学习pytorchtensorflow人工智能python大模型LLM

Langchain系列文章目录01-玩转LangChain:从模型调用到Prompt模板与输出解析的完整指南02-玩转LangChainMemory模块:四种记忆类型详解及应用场景全覆盖03-全面掌握LangChain:从核心链条构建到动态任务分配的实战指南04-玩转LangChain:从文档加载到高效问答系统构建的全程实战05-玩转LangChain:深度评估问答系统的三种高效方法(示例生成、手

- C++ --- list的简单实现

list的简单实现前言一、节点类二、迭代器类三、list类四、迭代器类的相关运算符重载1.解引用操作符2.成员访问操作符3.前置后置++/--4.==/!=运算符五、list类的相关构造和方法1.迭代器相关2.空初始化方法3.构造,析构函数相关4.赋值运算符重载5.尾插,头插,任意位置插6.尾删,头删,任意位置删除7.清空8.size方法六、总结前言本次实现的list结构是带头双向循环链表,节点结

- 基于Abp Vnext、FastMCP构建一个企业级的模型即服务(MaaS)平台方案

NetX行者

AbpvnextMaasAbpvnextFastMCP企业级平台解决方案开源python

企业级MaaS平台技术可行性分析报告一、总体技术架构HTTP/WebSocketgRPC/RESTgRPC/RESTgRPCVue3前端ABPvNextAPI网关.NET9业务微服务ABPvNextMCPClientFastMCP模型仓库PyTorch/TensorFlowHuggingFaceHeyGem/ChatGLM自定义模型统一鉴权中心二、核心框架与中间件组件技术选型官方链接作用前端框架V

- 服务器无对应cuda版本安装pytorch-gpu[自用]

片月斜生梦泽南

pytorch

服务器无对应cuda版本安装pytorch-gpu服务器无对应cuda版本安装pytorch-gpu网址下载非root用户安装tmux查看服务器ubuntu版本conda安装tensorflow-gpu安装1.x版本服务器无对应cuda版本安装pytorch-gpu网址GPU版本的pytorch、pytorchvision的下载链接https://download.pytorch.org/whl/

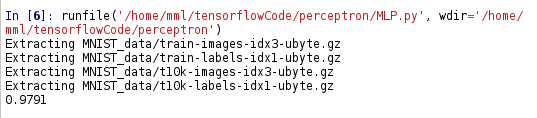

- 视频讲解:多层感知机MLP与卷积神经网络CNN在服装图像识别中的应用

原文链接:https://tecdat.cn/?p=42891原文出处:拓端数据部落公众号分析师:ZiqiYe视频讲解:多层感知机MLP与卷积神经网络CNN在服装图像识别中的应用作为数据科学领域的从业者,我们常面临这样的挑战:如何让机器真正“看懂”图像中的信息?在为客户完成服装零售行业的图像识别时,这一问题尤为突出。追溯图像识别技术的发展,早期依赖人工设计特征,如边缘检测、纹理分析等,效率低下且适

- Ubuntu下安装多版本CUDA及灵活切换全攻略

芯作者

D2:ubuntulinuxubuntu

——释放深度学习潜能,告别版本依赖的烦恼!**为什么需要多版本CUDA?在深度学习、科学计算等领域,不同框架(TensorFlow、PyTorch等)对CUDA版本的要求各异。同时升级框架或维护旧项目时,版本冲突频发。多版本CUDA共存+一键切换是高效开发的刚需!本文将手把手教你实现这一能力,并分享独创的“动态软链接+环境隔离”技巧,让版本管理行云流水!环境准备硬件要求NVIDIA显卡(支持CUD

- ubuntu22.04从新系统到tensorflow GPU支持

澍龑

tensorflow人工智能

ubuntu22.04CUDA从驱动到tensorflow安装0系统常规设置和软件安装0.1挂载第二硬盘默认Home0.2软件安装0.3安装指定版本的python0.4python虚拟环境设置1直接安装1.1配置信息1.2驱动安装1.3集显显示,独显运算(其它debug用)1.4卸载驱动(备用,未试)日常使用ssh后台运行(断联不中断)0系统常规设置和软件安装0.1挂载第二硬盘默认Homesudo

- 【零基础学AI】第27讲:注意力机制(Attention) - 机器翻译实战

1989

0基础学AI人工智能机器翻译自然语言处理pythontensorflow机器学习神经网络

本节课你将学到理解注意力机制的核心思想掌握注意力计算的数学原理实现基于注意力机制的Seq2Seq模型构建英语到法语的神经翻译系统开始之前环境要求Python3.8+需要安装的包:tensorflow==2.8.0numpy==1.21.0matplotlib==3.4.0pandas==1.3.0前置知识RNN/LSTM原理(第26讲)序列数据处理(第26讲)自然语言处理基础(第14讲)核心概念为

- TensorFlow图神经网络(GNN)入门指南

AI天才研究院

AI人工智能与大数据tensorflow神经网络人工智能ai

TensorFlow图神经网络(GNN)入门指南关键词:TensorFlow、图神经网络、GNN、深度学习、图数据、节点嵌入、图卷积网络摘要:本文全面介绍如何使用TensorFlow实现图神经网络(GNN)。我们将从图数据的基本概念开始,深入探讨GNN的核心原理,包括图卷积网络(GCN)、图注意力网络(GAT)等流行架构,并通过TensorFlow代码示例展示如何构建和训练GNN模型。文章还将涵盖

- mediapipe流水线分析 三

江太翁

AndroidNDK人工智能mediapipeandroid

目标检测Graph一流水线上游输入处理1TfLiteConverterCalculator将输入的数据转换成tensorflowapi支持的TensorTfLiteTensor并初始化相关输入输出节点,该类的业务主要通过interpreterstd::unique_ptrtflite::Interpreterinterpreter_=nullptr;实现类完成数据在cpu/gpu上的推理1.1Tf

- JuPyter(IPython) Notebooks中使用pip安装Python的模块

weixin_34218890

开发工具python人工智能

问题描述:没有带GPU的电脑,搞深度学习不是耍流氓嘛,我网上看到有个云平台,免费使用了一下,小姐姐很热情。使用过程如下:他们给的接口是Jupyter编辑平台,我就在上面跑了一个小例子。tensorflow和python环境是他们配置好的,不过我的例子中需要导入matplotlib.pylot模块。可是他们没有提供,怎么办呢?网上查了一下啊解决方法:采用如下方法:importpipdefMyPipi

- TensorFlow武林志 第一卷:入门篇 - 初入江湖 第一章:真气初现

空中湖

tensorflow武林志tensorflow人工智能python

第一卷:入门篇-初入江湖第一章:真气初现林枫揉了揉酸痛的胳膊,将最后一捆柴火堆放在灶房角落。这是他来到青霄剑宗做杂役的第三个月,每日劈柴挑水的生活让他原本白皙的皮肤变得黝黑粗糙。"喂,新来的!掌门要的热水怎么还没送去?"门外传来管事的呵斥声。"马上就好!"林枫急忙提起铜壶,滚烫的热水溅在他手背上,他却浑然不觉疼痛。自从上月在后山偶然吞服了那枚奇异的朱果后,他对冷热疼痛的感知就变得异常迟钝。穿过曲折

- Webpack 简版手写

aiguangyuan

前端开发Webpack

本文将以Webpack5为例,通过简单实现,整体介绍一下Wwebpack构建过程与实现细节。1.项目结构simple-webpack├──dist├──src│├──index.js│├──module1.js│└──module2.js├──loaders│├──babelLoader.js│└──exampleLoader.js├──plugins│└──examplePlugin.js├──

- TensorFlow 零基础入门:手把手教你跑通第一个AI模型

蓑笠翁001

人工智能人工智能tensorflowpython机器学习深度学习分类

今天用最直白的语言,带完全零基础的同学走进TensorFlow的世界。不用担心数学公式,先学会"开车",再学"造车"!1.准备工作:安装TensorFlow就像玩游戏需要先安装游戏客户端一样,我们需要先安装TensorFlow。打开你的电脑(Windows/Mac都行),按下Win+R,输入cmd打开命令提示符,然后输入:pipinstalltensorflow看到"Successfullyins

- 疏锦行Python打卡 DAY 33 MLP神经网络的训练

importtorchtorch.cudaimporttorch#检查CUDA是否可用iftorch.cuda.is_available():print("CUDA可用!")#获取可用的CUDA设备数量device_count=torch.cuda.device_count()print(f"可用的CUDA设备数量:{device_count}")#获取当前使用的CUDA设备索引current_d

- Class5多层感知机的从零开始实现

Morning的呀

深度学习深度学习机器学习pytorch

Class5多层感知机的从零开始实现importtorchfromtorchimportnnfromd2limporttorchasd2l#设置批量大小为256batch_size=256#初始化训练集和测试集迭代器,每次训练一个批量train_iter,test_iter=d2l.load_data_fashion_mnist(batch_size)#构建一个单隐藏层的前馈神经网络(MLP)#n

- 「日拱一码」017 深度学习常用库——TensorFlow

目录基础操作张量操作:tf.constant用于创建常量张量tf.Variable用于创建可训练的变量张量tf.reshape可改变张量的形状tf.concat可将多个张量沿指定维度拼接tf.split则可将张量沿指定维度分割数学运算:tf.add张量的加运算tf.subtract张量的减运算tf.multiply张量的乘运算tf.divide张量的除运算tf.pow计算张量的幂tf.sqrt计算

- spring boot中使用easyexcel简单实现导出功能

导入依赖com.alibabaeasyexcel3.1.1建立excel表格所需数据类(载入excel表的数据)ExcelProperty可定义列名,位置等属性@Data@ExcelIgnoreUnannotatedpublicclassOrderListResp{/***用户id*/@ApiModelProperty("用户id")//value代表列名,index为表格列序号,此代表列名为用户

- JVM StackMapTable 属性的作用及理解

lijingyao8206

jvm字节码Class文件StackMapTable

在Java 6版本之后JVM引入了栈图(Stack Map Table)概念。为了提高验证过程的效率,在字节码规范中添加了Stack Map Table属性,以下简称栈图,其方法的code属性中存储了局部变量和操作数的类型验证以及字节码的偏移量。也就是一个method需要且仅对应一个Stack Map Table。在Java 7版

- 回调函数调用方法

百合不是茶

java

最近在看大神写的代码时,.发现其中使用了很多的回调 ,以前只是在学习的时候经常用到 ,现在写个笔记 记录一下

代码很简单:

MainDemo :调用方法 得到方法的返回结果

- [时间机器]制造时间机器需要一些材料

comsci

制造

根据我的计算和推测,要完全实现制造一台时间机器,需要某些我们这个世界不存在的物质

和材料...

甚至可以这样说,这种材料和物质,我们在反应堆中也无法获得......

- 开口埋怨不如闭口做事

邓集海

邓集海 做人 做事 工作

“开口埋怨,不如闭口做事。”不是名人名言,而是一个普通父亲对儿子的训导。但是,因为这句训导,这位普通父亲却造就了一个名人儿子。这位普通父亲造就的名人儿子,叫张明正。 张明正出身贫寒,读书时成绩差,常挨老师批评。高中毕业,张明正连普通大学的分数线都没上。高考成绩出来后,平时开口怨这怨那的张明正,不从自身找原因,而是不停地埋怨自己家庭条件不好、埋怨父母没有给他创造良好的学习环境。

- jQuery插件开发全解析,类级别与对象级别开发

IT独行者

jquery开发插件 函数

jQuery插件的开发包括两种: 一种是类级别的插件开发,即给

jQuery添加新的全局函数,相当于给

jQuery类本身添加方法。

jQuery的全局函数就是属于

jQuery命名空间的函数,另一种是对象级别的插件开发,即给

jQuery对象添加方法。下面就两种函数的开发做详细的说明。

1

、类级别的插件开发 类级别的插件开发最直接的理解就是给jQuer

- Rome解析Rss

413277409

Rome解析Rss

import java.net.URL;

import java.util.List;

import org.junit.Test;

import com.sun.syndication.feed.synd.SyndCategory;

import com.sun.syndication.feed.synd.S

- RSA加密解密

无量

加密解密rsa

RSA加密解密代码

代码有待整理

package com.tongbanjie.commons.util;

import java.security.Key;

import java.security.KeyFactory;

import java.security.KeyPair;

import java.security.KeyPairGenerat

- linux 软件安装遇到的问题

aichenglong

linux遇到的问题ftp

1 ftp配置中遇到的问题

500 OOPS: cannot change directory

出现该问题的原因:是SELinux安装机制的问题.只要disable SELinux就可以了

修改方法:1 修改/etc/selinux/config 中SELINUX=disabled

2 source /etc

- 面试心得

alafqq

面试

最近面试了好几家公司。记录下;

支付宝,面试我的人胖胖的,看着人挺好的;博彦外包的职位,面试失败;

阿里金融,面试官人也挺和善,只不过我让他吐血了。。。

由于印象比较深,记录下;

1,自我介绍

2,说下八种基本类型;(算上string。楼主才答了3种,哈哈,string其实不是基本类型,是引用类型)

3,什么是包装类,包装类的优点;

4,平时看过什么书?NND,什么书都没看过。。照样

- java的多态性探讨

百合不是茶

java

java的多态性是指main方法在调用属性的时候类可以对这一属性做出反应的情况

//package 1;

class A{

public void test(){

System.out.println("A");

}

}

class D extends A{

public void test(){

S

- 网络编程基础篇之JavaScript-学习笔记

bijian1013

JavaScript

1.documentWrite

<html>

<head>

<script language="JavaScript">

document.write("这是电脑网络学校");

document.close();

</script>

</h

- 探索JUnit4扩展:深入Rule

bijian1013

JUnitRule单元测试

本文将进一步探究Rule的应用,展示如何使用Rule来替代@BeforeClass,@AfterClass,@Before和@After的功能。

在上一篇中提到,可以使用Rule替代现有的大部分Runner扩展,而且也不提倡对Runner中的withBefores(),withAfte

- [CSS]CSS浮动十五条规则

bit1129

css

这些浮动规则,主要是参考CSS权威指南关于浮动规则的总结,然后添加一些简单的例子以验证和理解这些规则。

1. 所有的页面元素都可以浮动 2. 一个元素浮动后,会成为块级元素,比如<span>,a, strong等都会变成块级元素 3.一个元素左浮动,会向最近的块级父元素的左上角移动,直到浮动元素的左外边界碰到块级父元素的左内边界;如果这个块级父元素已经有浮动元素停靠了

- 【Kafka六】Kafka Producer和Consumer多Broker、多Partition场景

bit1129

partition

0.Kafka服务器配置

3个broker

1个topic,6个partition,副本因子是2

2个consumer,每个consumer三个线程并发读取

1. Producer

package kafka.examples.multibrokers.producers;

import java.util.Properties;

import java.util.

- zabbix_agentd.conf配置文件详解

ronin47

zabbix 配置文件

Aliaskey的别名,例如 Alias=ttlsa.userid:vfs.file.regexp[/etc/passwd,^ttlsa:.:([0-9]+),,,,\1], 或者ttlsa的用户ID。你可以使用key:vfs.file.regexp[/etc/passwd,^ttlsa:.: ([0-9]+),,,,\1],也可以使用ttlsa.userid。备注: 别名不能重复,但是可以有多个

- java--19.用矩阵求Fibonacci数列的第N项

bylijinnan

fibonacci

参考了网上的思路,写了个Java版的:

public class Fibonacci {

final static int[] A={1,1,1,0};

public static void main(String[] args) {

int n=7;

for(int i=0;i<=n;i++){

int f=fibonac

- Netty源码学习-LengthFieldBasedFrameDecoder

bylijinnan

javanetty

先看看LengthFieldBasedFrameDecoder的官方API

http://docs.jboss.org/netty/3.1/api/org/jboss/netty/handler/codec/frame/LengthFieldBasedFrameDecoder.html

API举例说明了LengthFieldBasedFrameDecoder的解析机制,如下:

实

- AES加密解密

chicony

加密解密

AES加解密算法,使用Base64做转码以及辅助加密:

package com.wintv.common;

import javax.crypto.Cipher;

import javax.crypto.spec.IvParameterSpec;

import javax.crypto.spec.SecretKeySpec;

import sun.misc.BASE64Decod

- 文件编码格式转换

ctrain

编码格式

package com.test;

import java.io.File;

import java.io.FileInputStream;

import java.io.FileOutputStream;

import java.io.IOException;

import java.io.InputStream;

import java.io.OutputStream;

- mysql 在linux客户端插入数据中文乱码

daizj

mysql中文乱码

1、查看系统客户端,数据库,连接层的编码

查看方法: http://daizj.iteye.com/blog/2174993

进入mysql,通过如下命令查看数据库编码方式: mysql> show variables like 'character_set_%'; +--------------------------+------

- 好代码是廉价的代码

dcj3sjt126com

程序员读书

长久以来我一直主张:好代码是廉价的代码。

当我跟做开发的同事说出这话时,他们的第一反应是一种惊愕,然后是将近一个星期的嘲笑,把它当作一个笑话来讲。 当他们走近看我的表情、知道我是认真的时,才收敛一点。

当最初的惊愕消退后,他们会用一些这样的话来反驳: “好代码不廉价,好代码是采用经过数十年计算机科学研究和积累得出的最佳实践设计模式和方法论建立起来的精心制作的程序代码。”

我只

- Android网络请求库——android-async-http

dcj3sjt126com

android

在iOS开发中有大名鼎鼎的ASIHttpRequest库,用来处理网络请求操作,今天要介绍的是一个在Android上同样强大的网络请求库android-async-http,目前非常火的应用Instagram和Pinterest的Android版就是用的这个网络请求库。这个网络请求库是基于Apache HttpClient库之上的一个异步网络请求处理库,网络处理均基于Android的非UI线程,通

- ORACLE 复习笔记之SQL语句的优化

eksliang

SQL优化Oracle sql语句优化SQL语句的优化

转载请出自出处:http://eksliang.iteye.com/blog/2097999

SQL语句的优化总结如下

sql语句的优化可以按照如下六个步骤进行:

合理使用索引

避免或者简化排序

消除对大表的扫描

避免复杂的通配符匹配

调整子查询的性能

EXISTS和IN运算符

下面我就按照上面这六个步骤分别进行总结:

- 浅析:Android 嵌套滑动机制(NestedScrolling)

gg163

android移动开发滑动机制嵌套

谷歌在发布安卓 Lollipop版本之后,为了更好的用户体验,Google为Android的滑动机制提供了NestedScrolling特性

NestedScrolling的特性可以体现在哪里呢?<!--[if !supportLineBreakNewLine]--><!--[endif]-->

比如你使用了Toolbar,下面一个ScrollView,向上滚

- 使用hovertree菜单作为后台导航

hvt

JavaScriptjquery.nethovertreeasp.net

hovertree是一个jquery菜单插件,官方网址:http://keleyi.com/jq/hovertree/ ,可以登录该网址体验效果。

0.1.3版本:http://keleyi.com/jq/hovertree/demo/demo.0.1.3.htm

hovertree插件包含文件:

http://keleyi.com/jq/hovertree/css

- SVG 教程 (二)矩形

天梯梦

svg

SVG <rect> SVG Shapes

SVG有一些预定义的形状元素,可被开发者使用和操作:

矩形 <rect>

圆形 <circle>

椭圆 <ellipse>

线 <line>

折线 <polyline>

多边形 <polygon>

路径 <path>

- 一个简单的队列

luyulong

java数据结构队列

public class MyQueue {

private long[] arr;

private int front;

private int end;

// 有效数据的大小

private int elements;

public MyQueue() {

arr = new long[10];

elements = 0;

front

- 基础数据结构和算法九:Binary Search Tree

sunwinner

Algorithm

A binary search tree (BST) is a binary tree where each node has a Comparable key (and an associated value) and satisfies the restriction that the key in any node is larger than the keys in all

- 项目出现的一些问题和体会

Steven-Walker

DAOWebservlet

第一篇博客不知道要写点什么,就先来点近阶段的感悟吧。

这几天学了servlet和数据库等知识,就参照老方的视频写了一个简单的增删改查的,完成了最简单的一些功能,使用了三层架构。

dao层完成的是对数据库具体的功能实现,service层调用了dao层的实现方法,具体对servlet提供支持。

&

- 高手问答:Java老A带你全面提升Java单兵作战能力!

ITeye管理员

java

本期特邀《Java特种兵》作者:谢宇,CSDN论坛ID: xieyuooo 针对JAVA问题给予大家解答,欢迎网友积极提问,与专家一起讨论!

作者简介:

淘宝网资深Java工程师,CSDN超人气博主,人称“胖哥”。

CSDN博客地址:

http://blog.csdn.net/xieyuooo

作者在进入大学前是一个不折不扣的计算机白痴,曾经被人笑话过不懂鼠标是什么,