Kubernetes Scheduling

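The scheduler uses the Kubernetes watch mechanism to discover newly created Pods that have not yet been assigned to a Node. It then assigns each unscheduled Pod it finds to a suitable Node to run on.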

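kube-scheduler is the default scheduler for a Kubernetes cluster and is part of the cluster control plane. If you want or need to, you can write your own scheduling component and use it in place of kube-scheduler; the design explicitly allows this.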
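A custom scheduler can also run alongside kube-scheduler instead of replacing it, with each Pod naming the scheduler it wants in the `schedulerName` field of its spec. The following is a minimal sketch, assuming a second scheduler has already been deployed under the hypothetical name `my-scheduler`; the Pod name is likewise made up for illustration.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: nginx-custom-scheduler   # hypothetical name
spec:
  # Hypothetical scheduler name; it must match a scheduler that is
  # actually running in the cluster, otherwise the Pod stays Pending.
  schedulerName: my-scheduler
  containers:
  - name: nginx
    image: reg.harbor.com/library/nginx
```

Pods that omit `schedulerName` are handled by the default scheduler.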

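Factors considered in a scheduling decision include individual and collective resource requests, hardware/software/policy constraints, affinity and anti-affinity requirements, data locality, interference between workloads, and so on.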

For the default policies, see: https://kubernetes.io/zh/docs/concepts/scheduling/kube-scheduler/

Scheduling framework: https://kubernetes.io/zh/docs/concepts/configuration/scheduling-framework/

nodeName

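nodeName is the simplest form of node selection constraint, but it is generally not recommended. If nodeName is specified in the PodSpec, it takes precedence over the other node selection methods.

Example: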

apiVersion: v1
kind: Pod
metadata:
  name: nginx
spec:
  containers:
  - name: nginx
    image: reg.harbor.com/library/nginx
  nodeName: server3

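Limitations of selecting a node with nodeName:

- If the named node does not exist, the Pod will not run.

- If the named node does not have the resources to accommodate the Pod, the Pod fails to schedule.

- Node names in cloud environments are not always predictable or stable.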

nodeSelector

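nodeSelector is the simplest recommended form of node selection constraint.

Add a label to the chosen node:

kubectl label nodes server2 disktype=hdd ## attach the label to the node

Add a nodeSelector field to the Pod configuration: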

apiVersion: v1
kind: Pod
metadata:
  name: nginx
  labels:
    env: test
spec:
  containers:
  - name: nginx
    image: reg.harbor.com/library/nginx
    imagePullPolicy: IfNotPresent
  nodeSelector:
    disktype: hdd
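When nodeSelector lists more than one label, a node must carry all of them to be eligible. The sketch below assumes a second, hypothetical label `region=east` has also been applied to the node; the Pod name is made up for illustration.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: nginx-two-labels   # hypothetical name
spec:
  containers:
  - name: nginx
    image: reg.harbor.com/library/nginx
  nodeSelector:
    disktype: hdd          # label from the example above
    region: east           # hypothetical label; both must match
```

If no node carries both labels, the Pod stays Pending until one does.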

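Affinity and anti-affinity

- nodeSelector provides a very simple way to constrain Pods to nodes with particular labels. The affinity/anti-affinity feature greatly expands the kinds of constraints you can express.

- You can mark a rule as "soft" (a preference) rather than a hard requirement, so that the Pod is still scheduled even if the scheduler cannot satisfy the rule.

- You can constrain a Pod against the labels of Pods already running on a node, rather than the labels of the node itself, which controls which Pods may or may not be placed together.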

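Node affinity

- requiredDuringSchedulingIgnoredDuringExecution: must be satisfied

- preferredDuringSchedulingIgnoredDuringExecution: preferred, but not required

- IgnoredDuringExecution means that if a Node's labels change while the Pod is running, so that the affinity rule is no longer satisfied, the Pod keeps running.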
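The soft form can be sketched as follows. Each preferred rule carries a `weight` between 1 and 100 that is added to a node's score when the rule matches. The Pod name and the weight of 50 are illustrative assumptions; the `disktype=hdd` label is the one used elsewhere in these notes.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: node-affinity-preferred   # hypothetical name
spec:
  containers:
  - name: nginx
    image: reg.harbor.com/library/nginx
  affinity:
    nodeAffinity:
      preferredDuringSchedulingIgnoredDuringExecution:
      - weight: 50                # illustrative value, range 1-100
        preference:
          matchExpressions:
          - key: disktype
            operator: In
            values:
            - hdd
```

With this rule, the Pod is still scheduled when no node has `disktype=hdd`; matching nodes are only ranked higher.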

Reference: https://kubernetes.io/zh/docs/concepts/configuration/assign-pod-node/

Node affinity Pod example:

apiVersion: v1
kind: Pod
metadata:
  name: node-affinity
spec:
  containers:
  - name: nginx
    image: reg.harbor.com/library/nginx
  affinity:
    nodeAffinity: ## node affinity
      requiredDuringSchedulingIgnoredDuringExecution:
        nodeSelectorTerms:
        - matchExpressions:
          - key: disktype
            operator: In ## the label value is in the list
            values:
            - hdd

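nodeAffinity supports several match operators:

- In: the label value is in the list

- NotIn: the label value is not in the list

- Gt: the label value is greater than the given value (not supported for Pod affinity)

- Lt: the label value is less than the given value (not supported for Pod affinity)

- Exists: the given label exists

- DoesNotExist: the given label does not exist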
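The operators other than In can be combined in one term, as in the sketch below. It assumes a hypothetical node label `cpu-count` whose value is an integer; the Pod name is also made up. Expressions inside a single `matchExpressions` list must all match, while separate entries under `nodeSelectorTerms` are alternatives.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: node-affinity-operators   # hypothetical name
spec:
  containers:
  - name: nginx
    image: reg.harbor.com/library/nginx
  affinity:
    nodeAffinity:
      requiredDuringSchedulingIgnoredDuringExecution:
        nodeSelectorTerms:
        - matchExpressions:       # all expressions in this term must match
          - key: disktype
            operator: Exists      # Exists / DoesNotExist take no values
          - key: cpu-count        # hypothetical label holding an integer
            operator: Gt
            values:
            - "8"                 # Gt / Lt take exactly one integer, written as a string
```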

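Pod affinity and anti-affinity

- podAffinity mainly addresses which Pods a Pod may share a topology domain with. (A topology domain is defined by a node label; it can be a single host, or a group of hosts forming a cluster, zone, and so on.)

- podAntiAffinity mainly addresses which Pods a Pod must not share a topology domain with. Both deal with relationships between Pods inside the Kubernetes cluster.

- Inter-Pod affinity and anti-affinity can be even more useful when combined with higher-level collections such as ReplicaSets, StatefulSets, and Deployments. They make it easy to configure a set of workloads that should be placed in the same defined topology (for example, the same node).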
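As one illustration of using these rules with a higher-level collection, the sketch below applies podAntiAffinity inside a Deployment so that its own replicas avoid sharing a node. The Deployment name and the `app: web` label are hypothetical.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web-spread              # hypothetical name
spec:
  replicas: 3
  selector:
    matchLabels:
      app: web                  # hypothetical label
  template:
    metadata:
      labels:
        app: web
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: app
                operator: In
                values:
                - web           # repel other replicas of this Deployment
            topologyKey: kubernetes.io/hostname   # one replica per node
      containers:
      - name: nginx
        image: reg.harbor.com/library/nginx
```

Because the rule is a hard requirement, a cluster with fewer than three schedulable nodes leaves the extra replicas Pending; the preferred form relaxes this.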

Pod affinity example:

apiVersion: v1
kind: Pod
metadata:
  name: nginx
  labels:
    app: nginx
spec:
  containers:
  - name: nginx
    image: reg.harbor.com/library/nginx
---
apiVersion: v1
kind: Pod
metadata:
  name: mysql
  labels:
    app: mysql
spec:
  containers:
  - name: mysql
    image: mysql
    env:
    - name: "MYSQL_ROOT_PASSWORD"
      value: "redhat" ## MySQL root password
  affinity:
    podAffinity: ## Pod affinity
      requiredDuringSchedulingIgnoredDuringExecution:
      - labelSelector:
          matchExpressions:
          - key: app
            operator: In ## the label value is in the list
            values:
            - nginx
        topologyKey: kubernetes.io/hostname

Pod anti-affinity example:

apiVersion: v1
kind: Pod
metadata:
  name: mysql
  labels:
    app: mysql
spec:
  containers:
  - name: mysql
    image: mysql
    env:
    - name: "MYSQL_ROOT_PASSWORD"
      value: "westos"
  affinity:
    podAntiAffinity: ## Pod anti-affinity
      requiredDuringSchedulingIgnoredDuringExecution:
      - labelSelector:
          matchExpressions:
          - key: app
            operator: In
            values:
            - nginx
        topologyKey: "kubernetes.io/hostname"

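NodeAffinity is a property defined on a Pod that lets the Pod be scheduled onto particular Nodes as required. Taints work the other way around: they let a Node refuse to run Pods, or even evict them.

A taint is a property of a Node. Once a taint is set, Kubernetes does not schedule Pods onto that Node. For this reason Pods have a matching property, tolerations: as long as a Pod tolerates the taints on a Node, Kubernetes ignores those taints and can (but is not required to) schedule the Pod there.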

Taint operations:

Create:

kubectl taint nodes node1 key=value:NoSchedule

Query:

kubectl describe nodes server1 | grep Taints

Delete:

kubectl taint nodes node1 key:NoSchedule-

The effect is one of [ NoSchedule | PreferNoSchedule | NoExecute ]:

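- NoSchedule: Pods are not scheduled onto the tainted node.

- PreferNoSchedule: the soft version of NoSchedule.

- NoExecute: once the taint takes effect, Pods already running on the node that have no matching toleration are evicted immediately.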
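For NoExecute, a toleration may also limit how long a Pod stays on the node after the taint is added, through the optional `tolerationSeconds` field. The fragment below is a sketch of that part of a Pod spec, assuming a taint `key1=v1:NoExecute`; the 3600-second value is only illustrative.

```yaml
tolerations:
- key: "key1"
  operator: "Equal"
  value: "v1"
  effect: "NoExecute"
  tolerationSeconds: 3600   # illustrative; the Pod is evicted after this many seconds
```

Without `tolerationSeconds`, a Pod that tolerates the taint stays on the node indefinitely.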

Example:

Deploy an nginx Deployment

vim nginx-dep.yaml 1 apiVersion: apps/v1

2 kind: Deployment

3 metadata:

4 name: web-server

5 spec:

6 selector:

7 matchLabels:

8 app: nginx

9 replicas: 3

10 template:

11 metadata:

12 labels:

13 app: nginx

14 spec:

15 containers:

16 - name: nginx

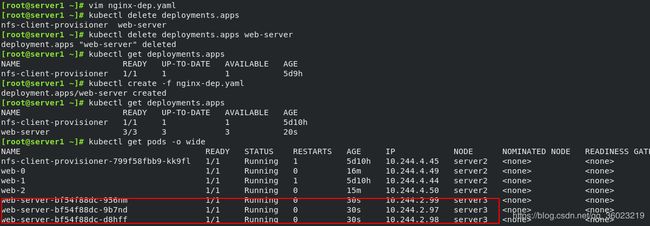

17 image: reg.harbor.com/library/nginxkubectl create -f nginx-dep.yaml

kubectl get deployments.apps

kubectl get pods -o wide

Taint the server3 node:

kubectl taint node server3 key1=v1:NoExecute

kubectl get pods -o wide

The Pods on server3 are evicted, and the Deployment controller automatically recreates them on server2.

Set a toleration for the Pods in the PodSpec:

tolerations:
- key: "key1"
  operator: "Equal"
  value: "v1"
  effect: "NoExecute"

Delete the Pods and recreate them with the updated spec; server3 can now run Pods again.
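In the web-server Deployment, the tolerations block sits under `spec.template.spec`, at the same level as `containers`. A sketch of the complete manifest, assuming the same `key1=v1:NoExecute` taint on server3:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web-server
spec:
  selector:
    matchLabels:
      app: nginx
  replicas: 3
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: reg.harbor.com/library/nginx
      tolerations:              # Pod-level field, not per container
      - key: "key1"
        operator: "Equal"
        value: "v1"
        effect: "NoExecute"
```

The toleration only permits scheduling onto server3; the scheduler may still place replicas on other nodes.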

kubectl describe nodes server3 | grep Taints ## check the taint; it is still present

kubectl taint nodes server3 key1:NoExecute- ## remove the taint

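The key, value, and effect defined in tolerations must match the taint set on the node:

- If operator is Exists, value can be omitted.

- If operator is Equal, the toleration's value must equal the taint's value for the same key.

- If operator is not specified, it defaults to Equal.

Two special cases:

- An empty key combined with operator Exists matches every key and value, so the Pod tolerates all taints.

- An empty effect matches all effects.

Example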

tolerations:
- key: "key"
  operator: "Equal"
  value: "value"
  effect: "NoSchedule"
---
tolerations:
- key: "key"
  operator: "Exists"
  effect: "NoSchedule"
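The two special cases listed earlier can be sketched the same way. These are fragments of a Pod spec rather than complete manifests, and `key1` is only a placeholder key.

```yaml
# No key, operator Exists: tolerates every taint on any node
tolerations:
- operator: "Exists"
---
# No effect: tolerates taints with key "key1" whatever their effect
tolerations:
- key: "key1"
  operator: "Exists"
```

The first form is broad enough to place a Pod on nodes that were tainted deliberately, so it is usually reserved for system-level workloads.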

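Other commands that affect Pod scheduling:

After cordon, drain, or delete, newly created Pods are no longer scheduled onto the node; the three differ in how drastic they are.

cordon: stop scheduling

This has the least impact. It only marks the node as SchedulingDisabled: newly created Pods are not scheduled onto the node, while Pods already on it are unaffected and keep serving traffic.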

kubectl cordon server3

kubectl get node

kubectl uncordon server3 ## restore scheduling
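What cordon changes can be seen on the Node object itself. The fragment below is a sketch of the relevant fields as they would typically appear in the output of `kubectl get node server3 -o yaml` after cordoning. It illustrates state and is not a manifest to apply; all other fields are omitted.

```yaml
apiVersion: v1
kind: Node
metadata:
  name: server3
spec:
  unschedulable: true           # set by kubectl cordon, cleared by uncordon
  taints:
  - key: node.kubernetes.io/unschedulable   # added by the control plane for cordoned nodes
    effect: NoSchedule
```

Since the effect is NoSchedule rather than NoExecute, Pods already running on the node are left alone.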

drain: evict the node

The node is first marked SchedulingDisabled; then the Pods on it are evicted and recreated on other nodes.

kubectl drain server3

Some special Pods cannot be deleted this way.

Add the --ignore-daemonsets flag to skip Pods managed by a DaemonSet:

kubectl drain server3 --ignore-daemonsets

kubectl uncordon server3 ## restore scheduling

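delete: remove the node

This is the most drastic method. The Pods on the node are evicted and recreated on other nodes, then the node is deleted from the master, which loses control over it. To resume scheduling, log in to the node and restart the kubelet service.

kubectl delete node server3

systemctl restart kubelet ## relies on node self-registration to bring the node back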