Hadoop HA: High Availability (In-Depth Walkthrough)

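Contents

- 4. Hadoop HA (High Availability)
  - 4.1 HA Overview
  - 4.2 How HDFS-HA Works
    - 4.2.1 Key Points of HDFS-HA
    - 4.2.2 How HDFS-HA Automatic Failover Works
  - 4.3 HDFS-HA Cluster Configuration
    - 4.3.1 Environment Preparation
    - 4.3.2 Cluster Plan
    - 4.3.3 Configuring the ZooKeeper Cluster
    - 4.3.4 Configuring the HDFS-HA Cluster
    - 4.3.5 Starting the HDFS-HA Cluster
    - 4.3.6 Configuring HDFS-HA Automatic Failover
  - 4.4 YARN-HA Configuration
    - 4.4.1 How YARN-HA Works
    - 4.4.2 Configuring the YARN-HA Cluster
  - 4.5 HDFS Federation Architecture
    - 4.5.1 Limitations of the NameNode Architecture
    - 4.5.2 HDFS Federation Architecture Design
    - 4.5.3 Applying HDFS Federation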

4. Hadoop HA (High Availability)

4.1 HA Overview

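(1) HA (High Availability) means providing service 24/7 without interruption.

(2) The key to high availability is eliminating single points of failure. Strictly speaking, HA is implemented per component: there is HDFS HA and there is YARN HA.

(3) Before Hadoop 2.0, the NameNode was a single point of failure (SPOF) in an HDFS cluster.

(4) The NameNode affects the availability of an HDFS cluster in two main ways:

- If the NameNode machine fails unexpectedly (for example, it crashes), the cluster is unusable until an administrator restarts it.

- If the NameNode machine needs a software or hardware upgrade, the cluster is likewise unusable during the upgrade.

(5) HDFS HA solves these problems by configuring Active/Standby NameNodes, so that the cluster keeps a hot standby of the NameNode. When a failure occurs (for example, a machine crashes) or a machine needs maintenance or an upgrade, the NameNode role can be switched quickly to another machine.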

4.2 How HDFS-HA Works

- Multiple NameNodes are used to eliminate the single point of failure.

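4.2.1 Key Points of HDFS-HA

1) The way metadata is managed has to change

- Each NameNode keeps its own copy of the metadata in memory;

- Only the NameNode in the Active state may write to the Edits log;

- All NameNodes can read the Edits;

- The shared Edits are kept in shared storage (the two mainstream implementations are qjournal and NFS).

2) A state-management module is needed

- A failover controller (zkfailover, i.e. ZKFC) runs on every NameNode host. Each one monitors the NameNode on its own host and uses ZooKeeper to record state. When a state transition is needed, the ZKFC performs it, and it must prevent split-brain while doing so.

3) The NameNode hosts must be able to log in to each other over SSH without a password

4) Fencing: at any moment, only one NameNode serves clients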
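Point 3 matters mainly because the sshfence fencing method configured later logs in from one NameNode host to another. A quick non-interactive check is sketched below; it assumes the OpenSSH client and the hostnames from the cluster plan in 4.3.2, and is meant to be run on each NameNode host.

```shell
#!/usr/bin/env bash
# Check that every NameNode host is reachable over SSH without a password.
# Hostnames are taken from the cluster plan in 4.3.2; adjust to your cluster.
NN_HOSTS=(hadoop105 hadoop106 hadoop107)

check_passwordless_ssh() {
  local failed=0 host
  for host in "${NN_HOSTS[@]}"; do
    # BatchMode=yes: never prompt; fail at once if key-based login does not work
    if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true; then
      echo "OK   $host"
    else
      echo "FAIL $host"
      failed=1
    fi
  done
  return "$failed"
}

# Usage (on each NameNode host): check_passwordless_ssh
```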

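4.2.2 How HDFS-HA Automatic Failover Works

- Automatic failover adds two new components to an HDFS deployment: ZooKeeper and the ZKFailoverController (ZKFC) process. ZooKeeper is a highly available service that maintains small amounts of coordination data, notifies clients of changes to that data, and monitors clients for failures. Automatic HA failover relies on the following ZooKeeper features:

1. Failure detection

- Each NameNode in the cluster maintains a session in ZooKeeper. If the machine crashes, its ZooKeeper session expires, and ZooKeeper notifies the other NameNode(s) that a failover should be triggered.

2. Active NameNode election

- ZooKeeper provides a simple mechanism for electing exactly one node as active. If the current active NameNode crashes, another node can acquire a special exclusive lock in ZooKeeper, indicating that it should become the active NameNode.

- The ZKFC is the other new component in automatic failover. It is a ZooKeeper client that also monitors and manages the state of the NameNode. Every host that runs a NameNode also runs a ZKFC process, which is responsible for:

1) Health monitoring

- The ZKFC periodically pings the NameNode on the same host with a health-check command. As long as the NameNode replies with a healthy status in time, the ZKFC considers the node healthy. If the node crashes, freezes, or otherwise enters an unhealthy state, the health monitor marks it as unhealthy.

2) ZooKeeper session management

- While the local NameNode is healthy, the ZKFC keeps a session open in ZooKeeper. If the local NameNode is active, the ZKFC also holds a special lock znode. The lock relies on ZooKeeper's ephemeral nodes: if the session expires, the lock znode is deleted automatically.

3) ZooKeeper-based election

- If the local NameNode is healthy and the ZKFC sees that no other node currently holds the lock znode, it tries to acquire the lock itself. If it succeeds, it has won the election and is responsible for running a failover that makes its local NameNode Active.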
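The health monitor runs inside the ZKFC process, but its idea can be approximated from the shell with `hdfs haadmin -checkHealth <serviceId>`, which exits with a non-zero status when the health check fails. This is only an illustration, assuming the service IDs nn1, nn2 and nn3 configured in 4.3.4; unlike the real ZKFC it never touches ZooKeeper and never triggers a failover.

```shell
#!/usr/bin/env bash
# Rough command-line approximation of the ZKFC health monitor:
# periodically ask each NameNode whether it is healthy.
# Service IDs match dfs.ha.namenodes.mycluster in 4.3.4.
NN_IDS=(nn1 nn2 nn3)

check_once() {
  local id
  for id in "${NN_IDS[@]}"; do
    # -checkHealth exits non-zero when the health check fails
    if hdfs haadmin -checkHealth "$id" >/dev/null 2>&1; then
      echo "$id healthy"
    else
      echo "$id UNHEALTHY"
    fi
  done
}

monitor() {
  local interval="${1:-5}"   # seconds between rounds (illustrative default)
  while true; do
    check_once
    sleep "$interval"
  done
}

# Usage: monitor 5    (Ctrl-C to stop)
```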

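4.3 HDFS-HA Cluster Configuration

4.3.1 Environment Preparation

(1) Change the IP address

(2) Change the hostname and map hostnames to IP addresses

(3) Disable the firewall

(4) Set up passwordless SSH login

(5) Install the JDK, configure environment variables, etc.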
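Items (2) and (3) are easy to get subtly wrong on one node. A minimal pre-flight sketch, assuming the hostnames from the cluster plan in 4.3.2 and a systemd-based distribution that uses firewalld (for example CentOS 7); other setups need different checks.

```shell
#!/usr/bin/env bash
# Pre-flight check for items (2) and (3) on the current machine (sketch).
# Assumptions: hostnames from 4.3.2, systemd + firewalld.
CLUSTER_HOSTS=(hadoop105 hadoop106 hadoop107)

preflight() {
  local host rc=0
  for host in "${CLUSTER_HOSTS[@]}"; do
    # getent resolves through /etc/hosts (and DNS), i.e. the hostname-to-IP mapping
    if getent hosts "$host" >/dev/null; then
      echo "resolve OK   $host"
    else
      echo "resolve FAIL $host"
      rc=1
    fi
  done
  # --quiet: print nothing, exit 0 only if the unit is active
  if systemctl is-active --quiet firewalld 2>/dev/null; then
    echo "firewalld is still running"
    rc=1
  fi
  return "$rc"
}

# Usage (on every node): preflight && echo "ready"
```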

4.3.2 Cluster Plan

| hadoop105 | hadoop106 | hadoop107 |
|---|---|---|
| NameNode | NameNode | NameNode |
| ZKFC | ZKFC | ZKFC |
| JournalNode | JournalNode | JournalNode |
| DataNode | DataNode | DataNode |
| ZK | ZK | ZK |
| ResourceManager | | |
| NodeManager | NodeManager | NodeManager |

4.3.3 Configuring the ZooKeeper Cluster

1) Cluster plan

Deploy ZooKeeper on the three nodes hadoop105, hadoop106 and hadoop107.

2) Unpack and install

(1) Extract the ZooKeeper package into /opt/module/

[xiaoxq@hadoop105 software]$ tar -zxvf zookeeper-3.5.7.tar.gz -C /opt/module/

(2) Create a zkData directory under /opt/module/zookeeper-3.5.7/

[xiaoxq@hadoop105 zookeeper-3.5.7]$ mkdir -p zkData

(3) In /opt/module/zookeeper-3.5.7/conf, rename zoo_sample.cfg to zoo.cfg

[xiaoxq@hadoop105 conf]$ mv zoo_sample.cfg zoo.cfg

3) Configure zoo.cfg

(1) Configuration: set dataDir to

dataDir=/opt/module/zookeeper-3.5.7/zkData

and add the following cluster section:

#######################cluster##########################

server.5=hadoop105:2888:3888

server.6=hadoop106:2888:3888

server.7=hadoop107:2888:3888

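(2) Parameter explanation

server.A=B:C:D

A is a number that identifies which server this is (the server ID).

In cluster mode, a file named myid is placed in the dataDir directory. Its only content is the value of A. When ZooKeeper starts, it reads this file and compares the value with the entries in zoo.cfg to determine which server it is.

B is the address of this server.

C is the port this server uses, as a Follower, to exchange information with the cluster's Leader.

D is the election port: if the Leader goes down, the servers communicate with each other over this port to elect a new Leader.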
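Both rules above (the server.A lines in zoo.cfg and the matching value in myid) are mechanical, so they can be scripted. The sketch below is hypothetical tooling, not part of ZooKeeper: one helper prints the cluster section, assuming that host hadoop10N uses server ID N as in this article; the other mimics how a server finds its own entry at startup (ZooKeeper does this itself, internally).

```shell
#!/usr/bin/env bash
# Helpers for the zoo.cfg cluster section (sketch).
# Assumption: host hadoop10N uses server ID N, as in this article.

# Print server.N=hadoop10N:2888:3888 for each ID given.
zk_server_lines() {
  local id
  for id in "$@"; do
    echo "server.${id}=hadoop10${id}:2888:3888"
  done
}

# Mimic how a server identifies itself: read the ID from <dataDir>/myid,
# then print the B:C:D part of the matching server.A line (empty if none).
which_server() {
  local cfg="$1" data_dir id
  data_dir="$(sed -n 's/^dataDir=//p' "$cfg")"
  id="$(tr -d '[:space:]' < "$data_dir/myid")"
  sed -n "s/^server\.${id}=//p" "$cfg"
}

# Usage:
#   zk_server_lines 5 6 7 >> /opt/module/zookeeper-3.5.7/conf/zoo.cfg
#   which_server /opt/module/zookeeper-3.5.7/conf/zoo.cfg
```

Run on hadoop105 (myid 5), `which_server` should print `hadoop105:2888:3888`.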

4) Cluster operations

(1) Create a file named myid in /opt/module/zookeeper-3.5.7/zkData

[xiaoxq@hadoop105 zkData]$ touch myid

Create the myid file on Linux itself; a file created in Notepad++ is likely to come out garbled.

(2) Edit the myid file

[xiaoxq@hadoop105 zkData]$ vim myid

Add the number that matches this server's server.A entry, e.g. 5

(3) Copy the configured ZooKeeper to the other machines

[xiaoxq@hadoop105 module]$ scp -r zookeeper-3.5.7/ xiaoxq@hadoop106:/opt/module/

[xiaoxq@hadoop105 module]$ scp -r zookeeper-3.5.7/ xiaoxq@hadoop107:/opt/module/

Then change the content of myid on those machines to 6 and 7 respectively.

(4) Start ZooKeeper on each node

[xiaoxq@hadoop105 zookeeper-3.5.7]$ bin/zkServer.sh start

[xiaoxq@hadoop106 zookeeper-3.5.7]$ bin/zkServer.sh start

[xiaoxq@hadoop107 zookeeper-3.5.7]$ bin/zkServer.sh start

(5) Check the status

[xiaoxq@hadoop105 zookeeper-3.5.7]$ bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost.

Mode: follower

[xiaoxq@hadoop106 zookeeper-3.5.7]$ bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost.

Mode: follower

[xiaoxq@hadoop107 zookeeper-3.5.7]$ bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost.

Mode: leader
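Steps (3) to (5) can be driven from a single machine. A minimal sketch, assuming passwordless SSH, the same install path on every node, hadoop10N having myid N, and JAVA_HOME exported from /etc/profile (which a non-interactive SSH session does not load on its own). The helper names are hypothetical.

```shell
#!/usr/bin/env bash
# Manage the ZooKeeper ensemble from one machine (sketch).
ZK_HOME=/opt/module/zookeeper-3.5.7
ZK_IDS=(5 6 7)

# Write the right myid on each node (replaces the manual edits in step (3)).
write_myids() {
  local id
  for id in "${ZK_IDS[@]}"; do
    # ${id} and ${ZK_HOME} are expanded locally before the command is sent
    ssh "hadoop10${id}" "echo ${id} > ${ZK_HOME}/zkData/myid"
  done
}

# Run zkServer.sh start|stop|status on every node (steps (4) and (5)).
zk_all() {
  local action="$1" id
  case "$action" in
    start|stop|status) ;;
    *) echo "usage: zk_all start|stop|status" >&2; return 2 ;;
  esac
  for id in "${ZK_IDS[@]}"; do
    echo "---------- hadoop10${id} ----------"
    # source /etc/profile so the non-interactive session has JAVA_HOME
    ssh "hadoop10${id}" "source /etc/profile; ${ZK_HOME}/bin/zkServer.sh ${action}" 2>&1
  done
}

# Succeed only if exactly one node reports "Mode: leader".
check_single_leader() {
  local leaders
  leaders="$(zk_all status | grep -c '^Mode: leader$' || true)"
  [[ "$leaders" -eq 1 ]]
}

# Usage: write_myids; zk_all start; sleep 5; check_single_leader && echo "ensemble OK"
```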

4.3.4 Configuring the HDFS-HA Cluster

1) Official site: http://hadoop.apache.org/

2) Create an ha directory under /opt

[xiaoxq@hadoop105 zkData]$ cd /opt/

[xiaoxq@hadoop105 opt]$ sudo mkdir ha

drwxr-xr-x. 2 root root 4096 Jul 28 18:31 ha

[xiaoxq@hadoop105 opt]$ sudo chown xiaoxq:xiaoxq ha

drwxr-xr-x. 2 xiaoxq xiaoxq 4096 Jul 28 18:31 ha

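3) Copy hadoop-3.1.3 from /opt/module/ to /opt/ha (and remember to delete the data and logs directories in the copy)

[xiaoxq@hadoop105 ~]$ cp -r /opt/module/hadoop-3.1.3 /opt/ha/

[xiaoxq@hadoop105 ~]$ cd /opt/ha/hadoop-3.1.3

[xiaoxq@hadoop105 hadoop-3.1.3]$ rm -rf data logs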

4) Configure hadoop-env.sh

export JAVA_HOME=/opt/module/jdk1.8.0_212

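5) Configure core-site.xml

<configuration>
  <!-- Default file system: the HA nameservice mycluster, not a single NameNode host -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://mycluster</value>
  </property>
  <!-- Base directory for files Hadoop generates at runtime -->
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/opt/ha/hadoop-3.1.3/data</value>
  </property>
</configuration>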

6) Configure hdfs-site.xml

<configuration>
  <!-- Where the NameNode stores its metadata -->
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file://${hadoop.tmp.dir}/name</value>
  </property>
  <!-- Where the DataNode stores blocks -->
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file://${hadoop.tmp.dir}/data</value>
  </property>
  <!-- Local directory where each JournalNode keeps edits -->
  <property>
    <name>dfs.journalnode.edits.dir</name>
    <value>${hadoop.tmp.dir}/jn</value>
  </property>
  <!-- Logical name of the HA nameservice -->
  <property>
    <name>dfs.nameservices</name>
    <value>mycluster</value>
  </property>
  <!-- NameNode IDs within the nameservice -->
  <property>
    <name>dfs.ha.namenodes.mycluster</name>
    <value>nn1,nn2,nn3</value>
  </property>
  <!-- RPC address of each NameNode -->
  <property>
    <name>dfs.namenode.rpc-address.mycluster.nn1</name>
    <value>hadoop105:8020</value>
  </property>
  <property>
    <name>dfs.namenode.rpc-address.mycluster.nn2</name>
    <value>hadoop106:8020</value>
  </property>
  <property>
    <name>dfs.namenode.rpc-address.mycluster.nn3</name>
    <value>hadoop107:8020</value>
  </property>
  <!-- HTTP (web UI) address of each NameNode -->
  <property>
    <name>dfs.namenode.http-address.mycluster.nn1</name>
    <value>hadoop105:9870</value>
  </property>
  <property>
    <name>dfs.namenode.http-address.mycluster.nn2</name>
    <value>hadoop106:9870</value>
  </property>
  <property>
    <name>dfs.namenode.http-address.mycluster.nn3</name>
    <value>hadoop107:9870</value>
  </property>
  <!-- JournalNodes that hold the shared edits (QJM) -->
  <property>
    <name>dfs.namenode.shared.edits.dir</name>
    <value>qjournal://hadoop105:8485;hadoop106:8485;hadoop107:8485/mycluster</value>
  </property>
  <!-- Proxy provider clients use to find the active NameNode -->
  <property>
    <name>dfs.client.failover.proxy.provider.mycluster</name>
    <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
  </property>
  <!-- Fencing: sshfence logs in to the previously active NameNode over SSH and kills it -->
  <property>
    <name>dfs.ha.fencing.methods</name>
    <value>sshfence</value>
  </property>
  <!-- Private key used by sshfence (requires passwordless SSH) -->
  <property>
    <name>dfs.ha.fencing.ssh.private-key-files</name>
    <value>/home/xiaoxq/.ssh/id_rsa</value>
  </property>
</configuration>
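Before distributing the configuration, it can help to read the values back the way Hadoop actually resolves them. `hdfs getconf -confKey <key>` is a standard subcommand; the wrapper around it is a hypothetical sketch that assumes the `hdfs` on PATH belongs to /opt/ha/hadoop-3.1.3 (otherwise it reads the old /opt/module configuration).

```shell
#!/usr/bin/env bash
# Print the HA-related keys as Hadoop resolves them (sketch).
show_ha_conf() {
  local key nn
  for key in fs.defaultFS dfs.nameservices dfs.ha.namenodes.mycluster \
             dfs.namenode.shared.edits.dir dfs.ha.fencing.methods; do
    printf '%-40s %s\n' "$key" "$(hdfs getconf -confKey "$key")"
  done
  # One rpc-address line per NameNode ID listed in dfs.ha.namenodes.mycluster
  for nn in $(hdfs getconf -confKey dfs.ha.namenodes.mycluster | tr ',' ' '); do
    printf '%-40s %s\n' "rpc-address.$nn" \
      "$(hdfs getconf -confKey "dfs.namenode.rpc-address.mycluster.$nn")"
  done
}

# Usage: show_ha_conf
```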

7) Distribute the configured Hadoop environment to the other nodes

[xiaoxq@hadoop105 etc]$ sudo xsync /opt/ha/
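`xsync` is not part of Hadoop; it is a helper script from the author's environment that the article does not show. A minimal stand-in might look like the sketch below, assuming rsync on all nodes, passwordless SSH, and that hadoop105 pushes to hadoop106 and hadoop107. The function name only mirrors the article's command; to call it as `xsync`, save it in a script on your PATH that ends with `xsync "$@"`.

```shell
#!/usr/bin/env bash
# Hypothetical minimal stand-in for the xsync helper used in this article:
# copy files or directories to the same absolute path on the other nodes.
SYNC_HOSTS=(hadoop106 hadoop107)

xsync() {
  local target abs_dir name host
  for target in "$@"; do
    if [[ ! -e "$target" ]]; then
      echo "xsync: $target does not exist" >&2
      return 1
    fi
    abs_dir="$(cd -P "$(dirname "$target")" && pwd)"   # absolute parent dir
    name="$(basename "$target")"
    for host in "${SYNC_HOSTS[@]}"; do
      ssh "$host" "mkdir -p '$abs_dir'"
      # -a keeps permissions and timestamps; -v lists transferred files
      rsync -av "$abs_dir/$name" "$host:$abs_dir"
    done
  done
}

# Usage: xsync /opt/ha/
```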

4.3.5 Starting the HDFS-HA Cluster

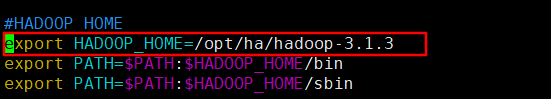

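1) Point the HADOOP_HOME environment variable at the HA directory

[xiaoxq@hadoop105 ~]$ sudo vim /etc/profile.d/my_evn.sh

Change the HADOOP_HOME path from /opt/module to /opt/ha, so that it points to /opt/ha/hadoop-3.1.3.

2) On each JournalNode host, start the journalnode service with the following command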

[xiaoxq@hadoop105 ~]$ hdfs --daemon start journalnode

[xiaoxq@hadoop106 ~]$ hdfs --daemon start journalnode

[xiaoxq@hadoop107 ~]$ hdfs --daemon start journalnode

3) On [nn1], format the NameNode and start it

- Before formatting, run source /etc/profile (on all three VMs); otherwise you will get errors.

[xiaoxq@hadoop105 ~]$ hdfs namenode -format

[xiaoxq@hadoop105 ~]$ hdfs --daemon start namenode

4) On [nn2] and [nn3], synchronize nn1's metadata

[xiaoxq@hadoop106 ~]$ hdfs namenode -bootstrapStandby

[xiaoxq@hadoop107 ~]$ hdfs namenode -bootstrapStandby

5) Start [nn2] and [nn3]

[xiaoxq@hadoop106 ~]$ hdfs --daemon start namenode

[xiaoxq@hadoop107 ~]$ hdfs --daemon start namenode

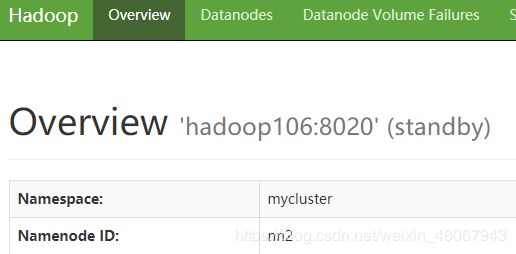

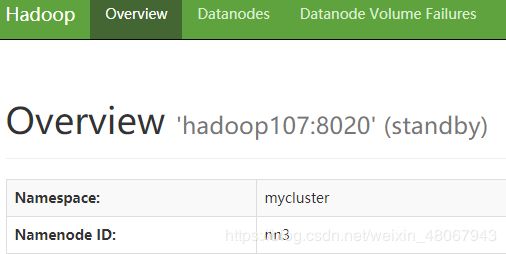
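Steps 2) to 5) must run in this order on specific hosts. The sketch below drives them from hadoop105 over SSH; it assumes passwordless SSH and that /etc/profile on every node already points HADOOP_HOME at /opt/ha/hadoop-3.1.3 with its bin/ on PATH (step 1). It is illustrative only: the format step wipes existing NameNode metadata and belongs on a fresh cluster, run once.

```shell
#!/usr/bin/env bash
# Steps 2) to 5) of 4.3.5 in the required order, driven from hadoop105 (sketch).
# WARNING: "hdfs namenode -format" wipes existing NameNode metadata; run it once.
NN1=hadoop105
OTHER_NNS=(hadoop106 hadoop107)

# Run a command on a host with the login environment loaded.
remote() { ssh "$1" "source /etc/profile; $2"; }

init_hdfs_ha() {
  local host
  # 2) JournalNodes first: formatting nn1 also formats the shared edits on them
  for host in "$NN1" "${OTHER_NNS[@]}"; do
    remote "$host" "hdfs --daemon start journalnode" || return 1
  done
  # 3) Format and start nn1
  remote "$NN1" "hdfs namenode -format" || return 1
  remote "$NN1" "hdfs --daemon start namenode" || return 1
  # 4) + 5) Copy nn1's metadata to each other NameNode, then start it
  for host in "${OTHER_NNS[@]}"; do
    remote "$host" "hdfs namenode -bootstrapStandby" || return 1
    remote "$host" "hdfs --daemon start namenode" || return 1
  done
}

# Usage (fresh cluster only): init_hdfs_ha
```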

6) Check the web UI

Figure: hadoop105 (standby)

Figure: hadoop106 (standby)

Figure: hadoop107 (standby)

7) Start a DataNode on every node

[xiaoxq@hadoop105 hadoop]$ hdfs --daemon start datanode

[xiaoxq@hadoop106 hadoop]$ hdfs --daemon start datanode

[xiaoxq@hadoop107 hadoop]$ hdfs --daemon start datanode

8) Switch [nn1] to Active

[xiaoxq@hadoop105 ~]$ hdfs haadmin -transitionToActive nn1

9) Check whether it is Active

[xiaoxq@hadoop105 ~]$ hdfs haadmin -getServiceState nn1

active
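With three NameNodes it is worth checking all of them, not just nn1. A small sketch that loops over the IDs from hdfs-site.xml with `hdfs haadmin -getServiceState` and succeeds only when exactly one NameNode is active; the function names are hypothetical.

```shell
#!/usr/bin/env bash
# Report the HA state of every NameNode and check that exactly one is active
# (sketch; IDs from dfs.ha.namenodes.mycluster).
NN_IDS=(nn1 nn2 nn3)

nn_states() {
  local id state
  for id in "${NN_IDS[@]}"; do
    # haadmin exits non-zero when it cannot reach the NameNode
    state="$(hdfs haadmin -getServiceState "$id" 2>/dev/null)" || state="unreachable"
    echo "$id $state"
  done
}

check_one_active() {
  local actives
  actives="$(nn_states | grep -c ' active$' || true)"
  [[ "$actives" -eq 1 ]]
}

# Usage: nn_states; check_one_active && echo "exactly one active NameNode"
```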

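4.3.6 Configuring HDFS-HA Automatic Failover

1) Configuration

(1) Add to hdfs-site.xml:

<!-- Let the ZKFCs fail over automatically instead of waiting for a manual haadmin command -->
<property>
  <name>dfs.ha.automatic-failover.enabled</name>
  <value>true</value>
</property>

(2) Add to core-site.xml:

<!-- ZooKeeper quorum the ZKFCs connect to -->
<property>
  <name>ha.zookeeper.quorum</name>
  <value>hadoop105:2181,hadoop106:2181,hadoop107:2181</value>
</property>

(3) Distribute the modified configuration files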

[xiaoxq@hadoop105 etc]$ pwd

/opt/ha/hadoop-3.1.3/etc

[xiaoxq@hadoop105 etc]$ sudo xsync hadoop/

2) Startup

(1) Stop all HDFS services:

[xiaoxq@hadoop105 etc]$ stop-dfs.sh

(2) Start the ZooKeeper cluster:

[xiaoxq@hadoop105 ~]$ zkServer.sh start

[xiaoxq@hadoop106 ~]$ zkServer.sh start

[xiaoxq@hadoop107 ~]$ zkServer.sh start

[xiaoxq@hadoop105 ~]$ jpsall

========== hadoop105 ==========

3866 QuorumPeerMain

========== hadoop106 ==========

3794 QuorumPeerMain

========== hadoop107 ==========

3711 QuorumPeerMain

(3) Once ZooKeeper is running, initialize the HA state in ZooKeeper:

[xiaoxq@hadoop105 ~]$ hdfs zkfc -formatZK

(4) Start the HDFS services:

[xiaoxq@hadoop105 ~]$ start-dfs.sh

(5) Use the zkCli.sh client to view the contents of the NameNode election lock znode:

[xiaoxq@hadoop105 ~]$ zkCli.sh

[zk: localhost:2181(CONNECTED) 0] get -s /hadoop-ha/mycluster/ActiveStandbyElectorLock

myclusternn2 hadoop106 (followed by non-printable binary bytes)

cZxid = 0x80000000f

ctime = Tue Jul 28 20:49:49 CST 2020

mZxid = 0x80000000f

mtime = Tue Jul 28 20:49:49 CST 2020

pZxid = 0x80000000f

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x60000861b830001

dataLength = 33

numChildren = 0

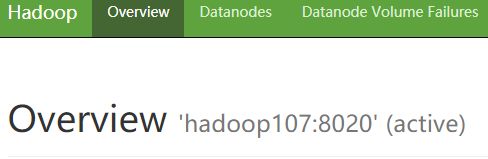
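Steps (1) to (4) above are a one-time switch from manual to automatic failover, and their order matters: `hdfs zkfc -formatZK` needs the ZooKeeper quorum to be up. A sketch chaining them from hadoop105, assuming passwordless SSH and `zkServer.sh` on each node's PATH after sourcing /etc/profile (as the commands above imply); the function name is hypothetical.

```shell
#!/usr/bin/env bash
# One-time switch to automatic failover, steps (1) to (4) above (sketch).
ZK_HOSTS=(hadoop105 hadoop106 hadoop107)

enable_auto_failover() {
  local host
  stop-dfs.sh || return 1
  for host in "${ZK_HOSTS[@]}"; do
    ssh "$host" "source /etc/profile; zkServer.sh start" || return 1
  done
  # Creates the /hadoop-ha/mycluster znode; requires the ZooKeeper quorum
  hdfs zkfc -formatZK || return 1
  # With automatic failover enabled, start-dfs.sh also starts a ZKFC per NameNode host
  start-dfs.sh
}

# Usage (once, on hadoop105): enable_auto_failover
```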

3) Verification

(1) Kill the Active NameNode process and watch the state of the three NameNodes change in the web UI

========== hadoop106 ==========

9602 DataNode

9826 DFSZKFailoverController

9510 NameNode

9708 JournalNode

9356 QuorumPeerMain

[xiaoxq@hadoop105 ~]$ kill -9 <NameNode process ID>

[xiaoxq@hadoop106 hadoop-3.1.3]$ kill -9 9510

[zk: localhost:2181(CONNECTED) 0] get -s /hadoop-ha/mycluster/ActiveStandbyElectorLock

myclusternn3 hadoop107 (followed by non-printable binary bytes)

cZxid = 0x800000017

ctime = Tue Jul 28 20:58:56 CST 2020

mZxid = 0x800000017

mtime = Tue Jul 28 20:58:56 CST 2020

pZxid = 0x800000017

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x500008620e30000

dataLength = 33

numChildren = 0
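The manual check above can be turned into a repeatable drill for a test cluster. This is a sketch, not an official tool: it assumes passwordless SSH, `jps` on the remote PATH after sourcing /etc/profile, and the nnX to host mapping from hdfs-site.xml in 4.3.4. It kills the active NameNode's JVM with kill -9 (like the step above) and polls once per second until a different NameNode reports active.

```shell
#!/usr/bin/env bash
# Failover drill for a TEST cluster (sketch).
NN_IDS=(nn1 nn2 nn3)

nn_host() {
  case "$1" in
    nn1) echo hadoop105 ;;
    nn2) echo hadoop106 ;;
    nn3) echo hadoop107 ;;
    *) return 1 ;;
  esac
}

# Print the ID of the NameNode that currently reports "active".
active_nn() {
  local id
  for id in "${NN_IDS[@]}"; do
    if [[ "$(hdfs haadmin -getServiceState "$id" 2>/dev/null)" == active ]]; then
      echo "$id"
      return 0
    fi
  done
  return 1
}

failover_drill() {
  local old new host i
  old="$(active_nn)" || { echo "no active NameNode" >&2; return 1; }
  host="$(nn_host "$old")"
  echo "killing NameNode $old on $host"
  # jps prints "<pid> NameNode"; kill -9 mimics a crash
  ssh "$host" "source /etc/profile; jps | awk '\$2==\"NameNode\" {print \$1}' | xargs -r kill -9"
  for i in $(seq 1 30); do   # poll once per second for up to ~30 s
    sleep 1
    if new="$(active_nn)" && [[ "$new" != "$old" ]]; then
      echo "new active: $new"
      return 0
    fi
  done
  echo "no failover within 30 s" >&2
  return 1
}

# Usage (test clusters only): failover_drill
```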

4.4 YARN-HA Configuration

4.4.1 How YARN-HA Works

1) Official documentation:

http://hadoop.apache.org/docs/r3.1.3/hadoop-yarn/hadoop-yarn-site/ResourceManagerHA.html

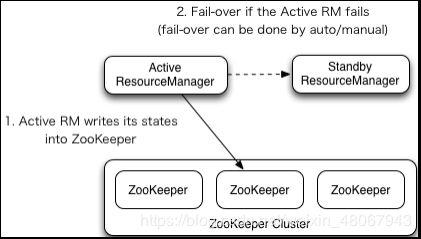

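2) How YARN-HA works

4.4.2 Configuring the YARN-HA Cluster

1) Environment preparation

(1) Change the IP address

(2) Change the hostname and map hostnames to IP addresses

(3) Disable the firewall

(4) Set up passwordless SSH login

(5) Install the JDK, configure environment variables, etc.

(6) Configure the ZooKeeper cluster

2) Cluster plan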

| hadoop105 | hadoop106 | hadoop107 |
|---|---|---|
| NameNode | NameNode | NameNode |
| JournalNode | JournalNode | JournalNode |
| DataNode | DataNode | DataNode |
| ZK | ZK | ZK |
| ResourceManager | ResourceManager | |
| NodeManager | NodeManager | NodeManager |

3) Configuration

(1) yarn-site.xml

<configuration>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <!-- Enable ResourceManager HA -->
  <property>
    <name>yarn.resourcemanager.ha.enabled</name>
    <value>true</value>
  </property>
  <!-- Cluster ID shared by both ResourceManagers -->
  <property>
    <name>yarn.resourcemanager.cluster-id</name>
    <value>cluster-yarn1</value>
  </property>
  <!-- Logical IDs of the ResourceManagers -->
  <property>
    <name>yarn.resourcemanager.ha.rm-ids</name>
    <value>rm1,rm2</value>
  </property>
  <!-- ========== rm1 (hadoop105) ========== -->
  <property>
    <name>yarn.resourcemanager.hostname.rm1</name>
    <value>hadoop105</value>
  </property>
  <!-- Web UI -->
  <property>
    <name>yarn.resourcemanager.webapp.address.rm1</name>
    <value>hadoop105:8088</value>
  </property>
  <!-- Address clients use to submit applications -->
  <property>
    <name>yarn.resourcemanager.address.rm1</name>
    <value>hadoop105:8032</value>
  </property>
  <!-- Address ApplicationMasters use to request resources -->
  <property>
    <name>yarn.resourcemanager.scheduler.address.rm1</name>
    <value>hadoop105:8030</value>
  </property>
  <!-- Address NodeManagers connect to -->
  <property>
    <name>yarn.resourcemanager.resource-tracker.address.rm1</name>
    <value>hadoop105:8031</value>
  </property>
  <!-- ========== rm2 (hadoop106): same properties ========== -->
  <property>
    <name>yarn.resourcemanager.hostname.rm2</name>
    <value>hadoop106</value>
  </property>
  <property>
    <name>yarn.resourcemanager.webapp.address.rm2</name>
    <value>hadoop106:8088</value>
  </property>
  <property>
    <name>yarn.resourcemanager.address.rm2</name>
    <value>hadoop106:8032</value>
  </property>
  <property>
    <name>yarn.resourcemanager.scheduler.address.rm2</name>
    <value>hadoop106:8030</value>
  </property>
  <property>
    <name>yarn.resourcemanager.resource-tracker.address.rm2</name>
    <value>hadoop106:8031</value>
  </property>
  <!-- ZooKeeper quorum address -->
  <property>
    <name>yarn.resourcemanager.zk-address</name>
    <value>hadoop105:2181,hadoop106:2181,hadoop107:2181</value>
  </property>
  <!-- Let the RM recover its state after a restart or failover -->
  <property>
    <name>yarn.resourcemanager.recovery.enabled</name>
    <value>true</value>
  </property>
  <!-- Store ResourceManager state in ZooKeeper -->
  <property>
    <name>yarn.resourcemanager.store.class</name>
    <value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>
  </property>
  <property>
    <name>yarn.nodemanager.env-whitelist</name>
    <value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME</value>
  </property>
</configuration>

(2) Distribute the configuration files to update the other nodes

[xiaoxq@hadoop105 etc]$ sudo xsync hadoop/

[xiaoxq@hadoop105 etc]$ source /etc/profile
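The rm1 and rm2 blocks in yarn-site.xml differ only in ID and hostname, so they are easy to let drift apart when edited by hand. A hypothetical helper that prints the per-RM properties, using the ports from the configuration above (8088, 8032, 8030, 8031); paste its output into yarn-site.xml in place of the hand-written blocks.

```shell
#!/usr/bin/env bash
# Print the per-ResourceManager <property> blocks of yarn-site.xml (sketch).
# Arguments are id/host pairs, e.g. rm1 hadoop105 rm2 hadoop106.
rm_properties() {
  local id host key port pair
  while [[ $# -ge 2 ]]; do
    id="$1"; host="$2"; shift 2
    printf '<property>\n  <name>yarn.resourcemanager.hostname.%s</name>\n  <value>%s</value>\n</property>\n' "$id" "$host"
    # property-suffix:port pairs used in this article
    for pair in webapp.address:8088 address:8032 scheduler.address:8030 resource-tracker.address:8031; do
      key="${pair%%:*}"; port="${pair##*:}"
      printf '<property>\n  <name>yarn.resourcemanager.%s.%s</name>\n  <value>%s:%s</value>\n</property>\n' "$key" "$id" "$host" "$port"
    done
  done
}

# Usage: rm_properties rm1 hadoop105 rm2 hadoop106
```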

4) Start HDFS

[xiaoxq@hadoop105 etc]$ start-dfs.sh

5) Start YARN

(1) Run on hadoop105 or hadoop106:

[xiaoxq@hadoop106 hadoop-3.1.3]$ start-yarn.sh

(2) Check the service state

[xiaoxq@hadoop106 hadoop-3.1.3]$ yarn rmadmin -getServiceState rm1

standby

[xiaoxq@hadoop106 hadoop-3.1.3]$ yarn rmadmin -getServiceState rm2

active

(3) Use the zkCli.sh client to view the contents of the ResourceManager election lock znode:

[xiaoxq@hadoop106 ~]$ zkCli.sh


[zk: localhost:2181(CONNECTED) 0] get -s /yarn-leader-election/cluster-yarn1/ActiveStandbyElectorLock

cluster-yarn1rm2

cZxid = 0x900000009

ctime = Tue Jul 28 21:24:42 CST 2020

mZxid = 0x900000009

mtime = Tue Jul 28 21:24:42 CST 2020

pZxid = 0x900000009

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x70000a7944d0000

dataLength = 20

numChildren = 0

(4) In the web UI, check the YARN state at hadoop105:8088 and hadoop106:8088, and compare it with the NameNode web UI to see how they differ
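For the comparison in (4), it helps to know which ResourceManager is active. According to the ResourceManager HA documentation linked in 4.4.1, a standby RM's web UI redirects to the active one (apart from the "About" page), which is one difference from the NameNode UI. A sketch that reports both states and the active RM's web address, assuming the rm1/rm2 to host mapping from yarn-site.xml above:

```shell
#!/usr/bin/env bash
# Show both ResourceManagers' HA state and the web UI of the active one (sketch).
rm_host() {
  case "$1" in
    rm1) echo hadoop105 ;;
    rm2) echo hadoop106 ;;
    *) return 1 ;;
  esac
}

rm_status() {
  local id state active=""
  for id in rm1 rm2; do
    # rmadmin exits non-zero when it cannot reach the ResourceManager
    state="$(yarn rmadmin -getServiceState "$id" 2>/dev/null)" || state="unreachable"
    echo "$id $state"
    if [[ "$state" == active ]]; then active="$id"; fi
  done
  if [[ -z "$active" ]]; then
    echo "no active ResourceManager" >&2
    return 1
  fi
  echo "active web UI: http://$(rm_host "$active"):8088"
}

# Usage: rm_status
```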

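4.5 HDFS Federation Architecture

4.5.1 Limitations of the NameNode Architecture

1) Namespace limits

- Because the NameNode keeps all metadata in memory, the number of objects (files + blocks) a single NameNode can hold is limited by the heap size of the JVM it runs in. A 50 GB heap can hold about 200 million objects, which supports 4,000 DataNodes and 12 PB of storage (assuming an average file size of 40 MB). As data grows rapidly, storage demand grows with it: a single DataNode has grown from 4 TB to 36 TB, clusters have grown to 8,000 DataNodes, and storage requirements have grown from 12 PB to more than 100 PB.

2) Isolation

- Because HDFS has only one NameNode, applications cannot be isolated from one another, so an experimental program on HDFS can easily affect every program running on the whole of HDFS.

3) Performance bottleneck

- With a single-NameNode architecture, the throughput of the whole HDFS file system is limited by the throughput of that one NameNode.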

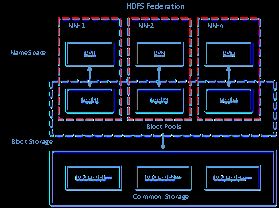
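The figures in 1) are consistent under two assumptions the text does not state: each 40 MB file fits in a single block (so 200 million objects means about 100 million files plus 100 million blocks), and the default replication factor of 3. Then 100 million × 40 MB × 3 = 12 PB. The sketch below reproduces that arithmetic and also shows roughly how many heap bytes each object gets, reading "50 GB" as 50 GiB (with decimal GB the result would be 250 bytes).

```shell
#!/usr/bin/env bash
# Reproduce the capacity figures in 4.5.1 under stated assumptions (sketch):
#   - every file fits in one block, so files = objects / 2
#   - 1 PB = 10^9 MB (decimal units) for the storage total
capacity_estimate() {
  local objects="$1" heap_gib="$2" avg_file_mb="$3" replication="$4"
  local heap_bytes=$((heap_gib * 1024 * 1024 * 1024))
  local files=$((objects / 2))   # assumption: one block per file
  echo "heap per object: ~$((heap_bytes / objects)) bytes"
  echo "raw storage: $((files * avg_file_mb * replication / 1000000000)) PB"
}

# Usage: capacity_estimate 200000000 50 40 3
```

With the article's numbers this prints `heap per object: ~268 bytes` and `raw storage: 12 PB`.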

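4.5.2 HDFS Federation Architecture Design

- Could there be multiple NameNodes?

| NameNode | NameNode | NameNode |
|---|---|---|
| Metadata | Metadata | Metadata |
| Log | machine | E-commerce data / call detail records |

Figure: HDFS Federation architecture design

4.5.3 Applying HDFS Federation

- Different applications can use different NameNodes to manage their data, for example image services, web crawling and log auditing. In the Hadoop ecosystem, different frameworks can use different NameNodes to manage their namespaces (isolation).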
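In Federation, each NameNode serves its own nameservice, listed together in `dfs.nameservices`, with per-nameservice keys such as `dfs.namenode.rpc-address.<nameserviceId>`. The article does not deploy Federation, so the sketch below only prints a minimal hdfs-site.xml fragment; the nameservice IDs ns1/ns2, the hosts and the Hadoop 3 default ports 8020/9870 are illustrative assumptions, and each nameservice here has a single, non-HA NameNode.

```shell
#!/usr/bin/env bash
# Print a minimal HDFS Federation fragment for hdfs-site.xml (sketch).
# Arguments are nameserviceId=host pairs, e.g. ns1=hadoop105 ns2=hadoop106
# (hypothetical IDs; one non-HA NameNode per nameservice).
federation_props() {
  local ids="" pair id host
  for pair in "$@"; do
    ids="${ids:+$ids,}${pair%%=*}"   # build the comma-separated ID list
  done
  printf '<property>\n  <name>dfs.nameservices</name>\n  <value>%s</value>\n</property>\n' "$ids"
  for pair in "$@"; do
    id="${pair%%=*}"; host="${pair#*=}"
    printf '<property>\n  <name>dfs.namenode.rpc-address.%s</name>\n  <value>%s:8020</value>\n</property>\n' "$id" "$host"
    printf '<property>\n  <name>dfs.namenode.http-address.%s</name>\n  <value>%s:9870</value>\n</property>\n' "$id" "$host"
  done
}

# Usage: federation_props ns1=hadoop105 ns2=hadoop106
```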