Scraping Boss直聘 with Python: A Nationwide Python Salary Ranking

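My apologies

This post was supposed to go out yesterday, but my computer blue-screened. I reinstalled the system when I got home that evening, and it still took me until now to get everything working.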

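Today I want to talk about Python and crawlers

Python caught on across the country so quickly in no small part thanks to the training schools on every street corner.

One moment it's "Python AI: the future is here", the next it's "become a top AI and machine learning talent in 4 months", and some even promise to turn you into a crawler analyst in one week...

I'm getting old and timid, so I only dare say this on my own public account. Out there, go ahead and hype each other up and make all the noise you like; I'll just watch and keep quiet.

You see crawler articles online all the time: someone scraped hundreds of thousands of records, someone pulled down tens of millions of comments in one go. It all sounds impressive and badass.

But how many job postings for crawler engineers do you actually see? Why so few? Because data has a price!

Take the big companies that build so-called network solutions. How do they solve anything? Don't they first have to buy data from the major carriers and analyze it? There's no such thing as a free lunch.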

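The problems crawlers face

It's no longer a simple grab-everything job

Most sites still stuff all the data into the response as soon as a request arrives, but more and more sites now load their data asynchronously, and crawling is no longer as convenient as it used to be.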
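
When a page loads its data asynchronously, the HTML you fetch is often an empty shell and the records arrive in a separate JSON response. If you can find that request in the browser's developer tools, parsing it is easy. Below is a rough sketch with a made-up response body: the `zpData` / `hotCityList` keys follow the structure the crawler in this post reads, but the function name `hot_city_codes` and the sample names and codes are assumptions for illustration only.

```python
import json

# Hypothetical response body; the names and codes are sample values.
payload = json.dumps({
    "zpData": {
        "hotCityList": [
            {"name": "nationwide", "code": 100010000},
            {"name": "Beijing", "code": 101010100},
        ]
    }
})


def hot_city_codes(body):
    """Parse a JSON body and return the city codes, skipping the first entry."""
    data = json.loads(body)
    return [city["code"] for city in data["zpData"]["hotCityList"][1:]]


print(hot_city_codes(payload))
```

The catch is finding the endpoint and keeping up with it when the site changes its front end, and that is where the extra work comes from.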

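Many people say crawlers can handle JS asynchronous loading and data parsing. Well, yes, it just adds some work. But that's because you haven't run into a really good front-end engineer. In most cases the only way out is to render the page in a browser and scrape that, which is very inefficient. Moving on.

Login verification in every shape and form

From 12306 asking you which of the candies below is the milk candy, to the slider puzzles and click-the-Chinese-characters challenges on today's major sites, all of these exist to stop crawlers from running unattended.

You say you can log in first and copy the cookie. But cookies expire too, don't they?

Anti-crawler mechanisms

What is anti-crawling? You can find sharp explanations all over the web; I'll give you the simple logic. You hit my site a thousand times in a few seconds? Sorry, I'm banning your IP. Stay away for a while.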
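
That simple logic can be sketched as a sliding-window counter on the server side. This is only an illustration of the idea, not how Boss直聘 or any real site implements it: the class name `IpRateLimiter`, the default thresholds, the 10-minute ban and the in-memory storage are all assumptions.

```python
import time
from collections import defaultdict, deque


class IpRateLimiter:
    """Ban an IP that makes more than max_hits requests within window seconds."""

    def __init__(self, max_hits=1000, window=3.0, ban_seconds=600.0):
        self.max_hits = max_hits
        self.window = window
        self.ban_seconds = ban_seconds
        self.hits = defaultdict(deque)  # ip -> timestamps of recent requests
        self.banned_until = {}          # ip -> time at which the ban ends

    def allow(self, ip, now=None):
        """Return True if the request may proceed, False if the IP is banned."""
        now = time.monotonic() if now is None else now
        if self.banned_until.get(ip, 0.0) > now:
            return False
        recent = self.hits[ip]
        recent.append(now)
        # Drop timestamps that have fallen out of the sliding window.
        while recent and now - recent[0] > self.window:
            recent.popleft()
        if len(recent) > self.max_hits:
            self.banned_until[ip] = now + self.ban_seconds
            recent.clear()
            return False
        return True
```

From the crawler's side, the takeaway is that request frequency per IP is the first thing a site looks at, which is why slowing down matters more than any clever trick.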

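Many will say: you're such a noob, haven't you heard of IPProxys, the open-source IP proxy pool for crawlers? To that I can only say heh. How many people have actually used a free proxy pool lately? Go look at the free pools today and see how many of the proxies still work!

Besides, if you can use the IPProxys pool to find working proxies for visiting someone's site, can't that site use the same method to find those proxies and block them first? Then all you can do is pay for commercial proxies.

Data locked at the source

The usual things people scrape, Douban movie pages, product reviews from a certain "-bao" shopping site and so on, are all public data. But more and more data is gradually going closed. As data becomes more valuable, what is a crawler to do when there is no source to get it from?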

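My personal take on crawlers

Learning to write crawlers gives you one more skill, but my personal advice is not to go too deep down this road. Scrape something small now and then, learn some networking, pick up some page-parsing techniques, and that's enough. Even the most badass crawler framework can't fix the pain of having no data.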

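About today's content

Enough rambling. Back to the topic.

I just spent all that time saying what's wrong with crawlers, and yet today's post is about a crawler. Am I slapping my own face?

Actually, I just want to talk about techniques for presenting and analyzing site data... and Boss直聘 happens to do this very well. How well? Let's go through it bit by bit...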

- 数据共享

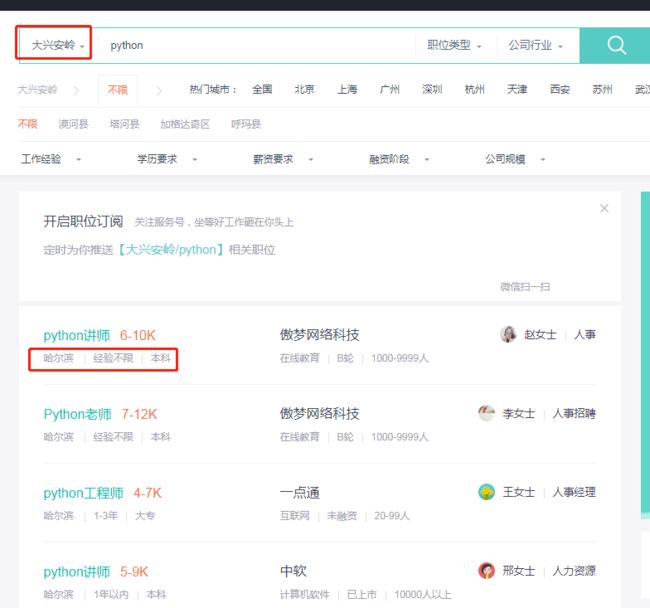

先来看一张图:

我选择黑龙江省的大兴安岭,去看看那里有招聘python的没,多数系统查询不到数据就会给你提示未获取到相关数据,但Boss直聘会悄悄地吧黑龙江省的python招聘信息给你显示处理,够鸡~贼。 - 数据限制

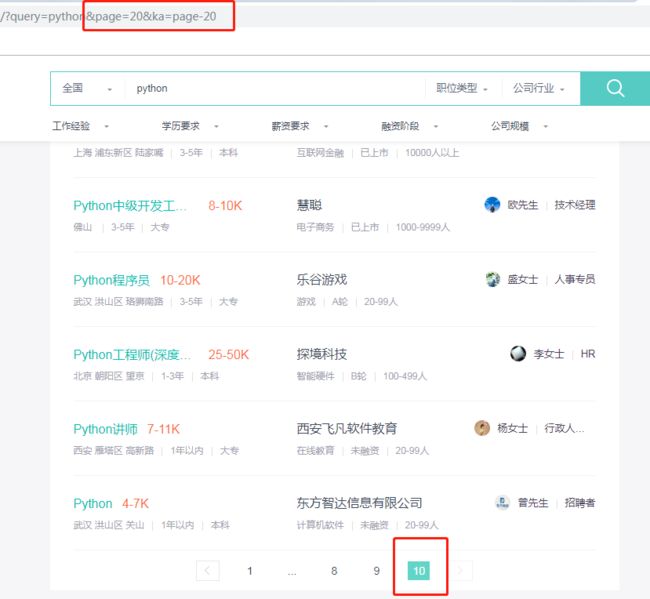

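Nobody does Python in Daxing'anling, so let's look at the whole country:

This nearly fooled me. At first I naively assumed there were only 300 Python postings nationwide (30 per page, 10 pages in total).

Then I went back to look at Xi'an, and it also had only 10 pages. I tried tweaking the parameters of its GET request. No damn use.

What's the point of this? Think about it... On one hand it stops crawlers like ours from grabbing all the data in one go, but that's just us flattering ourselves. This is a business opportunity!

So many companies post jobs every day. If you don't make the top 100, nobody will see your posting even if they want to, unless they search for you by name. So how do you rank near the top (靠前)? The answer is in those last two characters: 靠钱, which sounds the same and means "rely on money". If you're a paying Boss直聘 member, your postings rank higher....

- Bait and switch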

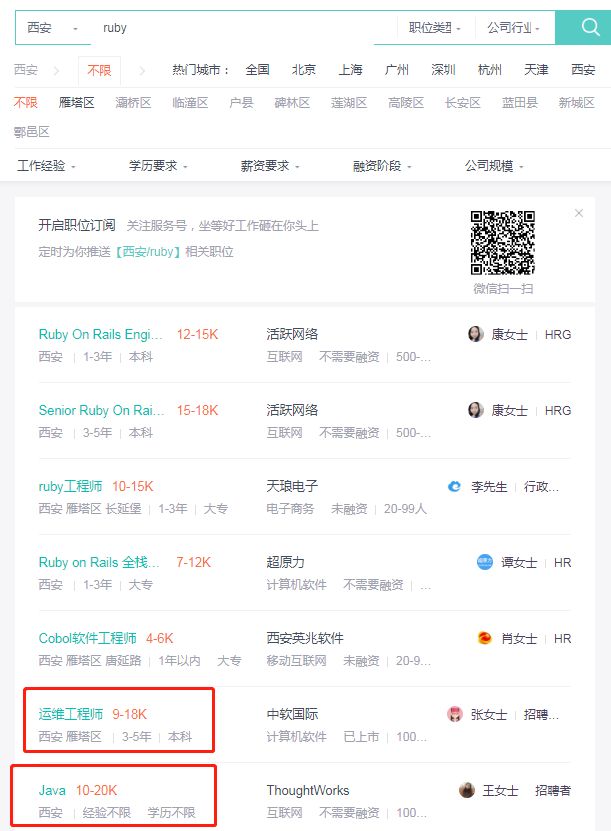

Again, picture first:

I searched for ruby. When there isn't enough matching data, other postings fill the gap....

- IP analysis

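Same old routine, one more picture:

I feel like my life has reached its climax, I feel like my life has reached its peak

My traces are kept on Boss直聘's servers. What an honor. Want to be like me? It only takes 3 seconds....

Make more than 1000 requests within three seconds and you are guaranteed to get banned!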

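- Anti-anti-anti-crawling

What we normally do is called crawling. The site not letting us crawl is anti-crawling. Us using IPs from a proxy pool is anti-anti-crawling. And then you find they've already blocked the working proxies ahead of you, which is anti-anti-anti-crawling....

In free proxy pools, many proxies are dead or require a password. When you finally find a list that works and plug it in, you discover it was blocked long ago. Maybe the site banned it in advance, maybe someone else used it and got it banned, but either way the proxies you worked so hard to find usually fail in the end.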
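
To make the "most of them are dead" point concrete, here is a rough sketch of weeding out unusable proxies before using them. The function names and the `check` callable are hypothetical; in practice `check` would try a real request through the proxy with a short timeout, and the `host:port` string format is an assumption about how your proxy list is stored.

```python
def filter_working_proxies(candidates, check, limit=None):
    """Return the proxies for which check(proxy) is truthy, in original order.

    check is any callable taking a 'host:port' string; an exception raised by
    it (timeout, refused connection, ...) counts as a dead proxy.
    """
    working = []
    for proxy in candidates:
        try:
            ok = check(proxy)
        except Exception:
            ok = False
        if ok:
            working.append(proxy)
            if limit is not None and len(working) >= limit:
                break
    return working


def as_requests_proxies(proxy):
    """Build the mapping that requests.get(..., proxies=...) expects."""
    return {"http": "http://" + proxy, "https": "http://" + proxy}
```

Even a proxy that passes such a check may already be blacklisted by the target site, so the check has to run against the site you actually want to crawl, and it still tends to leave you with a very short list.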

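So what should we do

- Use different User-Agents

After installing it with pip install fake-useragent you can get all kinds of User-Agents, but honestly, keeping a few dozen locally is plenty....

- Don't hammer too hard

Slow down appropriately; nobody will think you're a noob for it.... Don't assume someone crawling thousands of pages a second is more badass than someone crawling hundreds (the quick-draw gunslinger runs out of bullets first.... that doesn't count as a dirty joke, does it?).

- Buy paid proxies

Why did I skip free proxies? Because so many people write crawlers now that the free ones have mostly long been on every major site's blacklist.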
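
The first two suggestions can be sketched in a few lines. This is a minimal illustration rather than the code used for this post: the helper names `random_headers` and `polite_pause` and the 2 to 5 second range are assumptions, and the three User-Agent strings are simply taken from the list used in the crawler.

```python
import random
import time

# A few locally stored User-Agent strings.
USER_AGENTS = [
    "Opera/9.80 (Windows NT 6.0) Presto/2.12.388 Version/12.14",
    "Opera/9.80 (X11; Linux x86_64; U; fr) Presto/2.9.168 Version/11.50",
    "Opera/9.80 (Windows NT 6.1; U; zh-cn) Presto/2.7.62 Version/11.01",
]


def random_headers(agents=USER_AGENTS, rng=random):
    """Pick a User-Agent at random for each request."""
    return {"User-Agent": rng.choice(agents)}


def polite_pause(min_seconds=2.0, max_seconds=5.0, sleep=time.sleep, rng=random):
    """Sleep for a random interval so requests do not arrive in a tight burst."""
    delay = rng.uniform(min_seconds, max_seconds)
    sleep(delay)
    return delay
```

A typical loop would call something like requests.get(url, headers=random_headers()) and then polite_pause() before the next page. The `sleep` parameter exists only so the pause can be replaced in tests.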

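Back to today's content

Scrape the job postings for the popular cities nationwide, then compare salaries across those cities.

The raw data looks like this: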

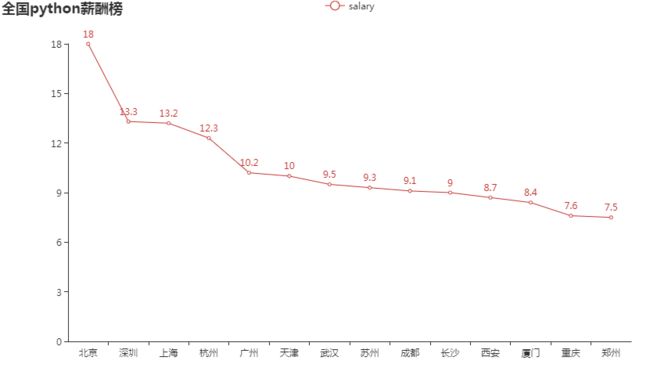

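First, the Python salary ranking:

Look where Xi'an ranks. The average salary is really low.....

As for the salary range, say 15-20K? Rest assured, 90% of new hires will only be offered 15K. The other 10% is not you. Not you.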
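
That is also why the crawler in this post keeps only the lower bound of each range. As a small sketch of handling such strings, here is one way to parse both ends; the function name `parse_salary_range` and the regular expression are my own assumptions about the text format, not something taken from the site.

```python
import re


def parse_salary_range(text):
    """Parse a string such as '15-20K' into (low, high), both in K.

    Returns None when the text does not contain a range in that form.
    """
    match = re.search(r"(\d+)\s*-\s*(\d+)\s*[Kk]", text)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


low, high = parse_salary_range("15-20K")
print(low, high)
```

Keeping both numbers lets you decide later whether to rank by the lower bound, the upper bound or the midpoint.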

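Now ruby:

It looks much higher than Python.... But is it really? It's like saying the average pay at Baidu is 30K+: are you going by the mean? A few executives with annual pay in the hundreds of millions are enough to pull it up....

One thing is still clear, though: salaries in Xi'an are really low......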
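
The point about averages is easy to see with made-up numbers. The salaries below are hypothetical (nine ordinary staff and one executive, in K per month) and only illustrate how a single outlier moves the mean while the median barely notices.

```python
from statistics import mean, median

# Hypothetical monthly salaries in K: nine ordinary staff and one executive.
salaries = [12, 13, 14, 15, 15, 16, 17, 18, 20, 300]

print("mean:  ", mean(salaries))    # pulled far upward by the single outlier
print("median:", median(salaries))  # stays close to what a typical employee earns
```

With only a few postings per city, as with ruby here, a handful of high offers can distort a per-city mean in the same way.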

Code

There isn't much to explain about the code. The part that takes up the most space is probably my User-Agent list....

    # -*- coding: utf-8 -*-
    # @Author   : 王翔
    # @JianShu  : 清风Python
    # @Date     : 2019/6/14 22:23
    # @Software : PyCharm
    # @version  : Python 3.6.8
    # @File     : BossCrawler.py

    import requests
    from bs4 import BeautifulSoup
    import csv
    import random
    import time
    import argparse
    from pyecharts.charts import Line
    import pandas as pd


    class BossCrawler:
        def __init__(self, query):
            self.query = query
            self.filename = 'boss_info_%s.csv' % self.query
            self.city_code_list = self.get_city()
            self.boss_info_list = []
            self.csv_header = ["city", "profession", "salary", "company"]

        @staticmethod
        def getheaders():
            # Return request headers with a randomly chosen User-Agent.
            user_list = [
                "Opera/9.80 (X11; Linux i686; Ubuntu/14.10) Presto/2.12.388 Version/12.16",
                "Opera/9.80 (Windows NT 6.0) Presto/2.12.388 Version/12.14",
                "Mozilla/5.0 (Windows NT 6.0; rv:2.0) Gecko/20100101 Firefox/4.0 Opera 12.14",
                "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.0) Opera 12.14",
                "Opera/12.80 (Windows NT 5.1; U; en) Presto/2.10.289 Version/12.02",
                "Opera/9.80 (Windows NT 6.1; U; es-ES) Presto/2.9.181 Version/12.00",
                "Opera/9.80 (Windows NT 5.1; U; zh-sg) Presto/2.9.181 Version/12.00",
                "Opera/12.0(Windows NT 5.2;U;en)Presto/22.9.168 Version/12.00",
                "Opera/12.0(Windows NT 5.1;U;en)Presto/22.9.168 Version/12.00",
                "Mozilla/5.0 (Windows NT 5.1) Gecko/20100101 Firefox/14.0 Opera/12.0",
                "Opera/9.80 (Windows NT 6.1; WOW64; U; pt) Presto/2.10.229 Version/11.62",
                "Opera/9.80 (Windows NT 6.0; U; pl) Presto/2.10.229 Version/11.62",
                "Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; fr) Presto/2.9.168 Version/11.52",
                "Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; de) Presto/2.9.168 Version/11.52",
                "Opera/9.80 (Windows NT 5.1; U; en) Presto/2.9.168 Version/11.51",
                "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; de) Opera 11.51",
                "Opera/9.80 (X11; Linux x86_64; U; fr) Presto/2.9.168 Version/11.50",
                "Opera/9.80 (X11; Linux i686; U; hu) Presto/2.9.168 Version/11.50",
                "Opera/9.80 (X11; Linux i686; U; ru) Presto/2.8.131 Version/11.11",
                "Opera/9.80 (X11; Linux i686; U; es-ES) Presto/2.8.131 Version/11.11",
                "Mozilla/5.0 (Windows NT 5.1; U; en; rv:1.8.1) Gecko/20061208 Firefox/5.0 Opera 11.11",
                "Opera/9.80 (X11; Linux x86_64; U; bg) Presto/2.8.131 Version/11.10",
                "Opera/9.80 (Windows NT 6.0; U; en) Presto/2.8.99 Version/11.10",
                "Opera/9.80 (Windows NT 5.1; U; zh-tw) Presto/2.8.131 Version/11.10",
                "Opera/9.80 (Windows NT 6.1; Opera Tablet/15165; U; en) Presto/2.8.149 Version/11.1",
                "Opera/9.80 (X11; Linux x86_64; U; Ubuntu/10.10 (maverick); pl) Presto/2.7.62 Version/11.01",
                "Opera/9.80 (X11; Linux i686; U; ja) Presto/2.7.62 Version/11.01",
                "Opera/9.80 (X11; Linux i686; U; fr) Presto/2.7.62 Version/11.01",
                "Opera/9.80 (Windows NT 6.1; U; zh-tw) Presto/2.7.62 Version/11.01",
                "Opera/9.80 (Windows NT 6.1; U; zh-cn) Presto/2.7.62 Version/11.01",
                "Opera/9.80 (Windows NT 6.1; U; sv) Presto/2.7.62 Version/11.01",
                "Opera/9.80 (Windows NT 6.1; U; en-US) Presto/2.7.62 Version/11.01",
                "Opera/9.80 (Windows NT 6.1; U; cs) Presto/2.7.62 Version/11.01",
                "Opera/9.80 (Windows NT 6.0; U; pl) Presto/2.7.62 Version/11.01",
                "Opera/9.80 (Windows NT 5.2; U; ru) Presto/2.7.62 Version/11.01",
                "Opera/9.80 (Windows NT 5.1; U;) Presto/2.7.62 Version/11.01",
                "Opera/9.80 (Windows NT 5.1; U; cs) Presto/2.7.62 Version/11.01",
                "Mozilla/5.0 (Windows NT 6.1; U; nl; rv:1.9.1.6) Gecko/20091201 Firefox/3.5.6 Opera 11.01",
                "Mozilla/5.0 (Windows NT 6.1; U; de; rv:1.9.1.6) Gecko/20091201 Firefox/3.5.6 Opera 11.01",
                "Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 6.1; de) Opera 11.01",
                "Opera/9.80 (X11; Linux x86_64; U; pl) Presto/2.7.62 Version/11.00",
                "Opera/9.80 (X11; Linux i686; U; it) Presto/2.7.62 Version/11.00",
                "Opera/9.80 (Windows NT 6.1; U; zh-cn) Presto/2.6.37 Version/11.00",
                "Opera/9.80 (Windows NT 6.1; U; pl) Presto/2.7.62 Version/11.00",
                "Opera/9.80 (Windows NT 6.1; U; ko) Presto/2.7.62 Version/11.00",
                "Opera/9.80 (Windows NT 6.1; U; fi) Presto/2.7.62 Version/11.00",
                "Opera/9.80 (Windows NT 6.1; U; en-GB) Presto/2.7.62 Version/11.00",
                "Opera/9.80 (Windows NT 6.1 x64; U; en) Presto/2.7.62 Version/11.00",
                "Opera/9.80 (Windows NT 6.0; U; en) Presto/2.7.39 Version/11.00"
            ]
            user_agent = random.choice(user_list)
            headers = {'User-Agent': user_agent}
            return headers

        def get_city(self):
            # Fetch the city list and keep the codes of the hot cities,
            # skipping the first entry of hotCityList.
            headers = self.getheaders()
            r = requests.get("http://www.zhipin.com/wapi/zpCommon/data/city.json", headers=headers)
            data = r.json()
            return [city['code'] for city in data['zpData']['hotCityList'][1:]]

        def get_response(self, url, params=None):
            # Request a page and return it parsed as a BeautifulSoup tree.
            headers = self.getheaders()
            r = requests.get(url, headers=headers, params=params)
            r.encoding = 'utf-8'
            soup = BeautifulSoup(r.text, "lxml")
            return soup

        def get_url(self):
            # Crawl every hot city, pausing 10 seconds between cities.
            for city_code in self.city_code_list:
                url = "https://www.zhipin.com/c%s/" % city_code
                self.per_page_info(url)
                time.sleep(10)

        def per_page_info(self, url):
            # The site shows at most 10 pages per query.
            for page_num in range(1, 11):
                params = {"query": self.query, "page": page_num}
                soup = self.get_response(url, params)
                # find() returns None when the job list is missing (for example
                # on an empty or blocked page), so check before calling select().
                job_list = soup.find('div', class_='job-list')
                lines = job_list.select('ul > li') if job_list else []
                if not lines:
                    # No more data; move on to the next city
                    return
                for line in lines:
                    info_primary = line.find('div', class_="info-primary")
                    city = info_primary.find('p').text.split(' ')[0]
                    job = info_primary.find('div', class_="job-title").text
                    # Skip postings whose title does not match the query
                    if self.query.lower() not in job.lower():
                        continue
                    # Keep only the lower bound of the range, e.g. '15-20K' -> '15'
                    salary = info_primary.find('span', class_="red").text.split('-')[0].replace('K', '')
                    company = line.find('div', class_="info-company").find('a').text.lower()
                    result = dict(zip(self.csv_header, [city, job, salary, company]))
                    print(result)
                    self.boss_info_list.append(result)

        def write_result(self):
            with open(self.filename, "w+", encoding='utf-8', newline='') as f:
                f_csv = csv.DictWriter(f, self.csv_header)
                f_csv.writeheader()
                f_csv.writerows(self.boss_info_list)

        def read_csv(self):
            data = pd.read_csv(self.filename, sep=",", header=0)
            # Average salary per city, rounded to one decimal, highest first.
            # Only the numeric salary column is averaged.
            result = data.groupby('city')['salary'].mean().round(1).to_frame(
                'salary').reset_index().sort_values('salary', ascending=False)
            print(result)
            charts_bar = (
                Line()
                .set_global_opts(
                    title_opts={"text": "Nationwide %s salary ranking" % self.query})
                .add_xaxis(result.city.values.tolist())
                .add_yaxis("salary", result.salary.values.tolist())
            )
            charts_bar.render('%s.html' % self.query)


    if __name__ == '__main__':
        parser = argparse.ArgumentParser()
        parser.add_argument("-k", "--keyword", help="keyword to search for")
        args = parser.parse_args()
        if not args.keyword:
            # print_help() writes the help text itself and returns None
            parser.print_help()
        else:
            main = BossCrawler(args.keyword)
            main.get_url()
            main.write_result()
            main.read_csv()
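
If you want to check the ranking step without installing pandas, the same per-city average can be sketched with the standard library alone. This is an alternative illustration, not part of the crawler: the function name `average_salary_by_city` and the sample rows are made up, and only the column layout (city, profession, salary, company) follows the CSV written by the crawler.

```python
import csv
import io
from collections import defaultdict
from statistics import mean

# Hypothetical rows in the same column layout the crawler writes.
SAMPLE_CSV = (
    "city,profession,salary,company\n"
    "Beijing,Python Developer,20,company-a\n"
    "Beijing,Python Engineer,30,company-b\n"
    "Xi'an,Python Developer,10,company-c\n"
)


def average_salary_by_city(csv_file):
    """Return [(city, average_salary), ...] sorted from highest to lowest."""
    by_city = defaultdict(list)
    for row in csv.DictReader(csv_file):
        by_city[row["city"]].append(float(row["salary"]))
    averages = [(city, round(mean(values), 1)) for city, values in by_city.items()]
    return sorted(averages, key=lambda item: item[1], reverse=True)


for city, salary in average_salary_by_city(io.StringIO(SAMPLE_CSV)):
    print(city, salary)
```

To run it on real output, pass an open file instead of the StringIO object, for example open('boss_info_python.csv', encoding='utf-8', newline='').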

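A few closing words

- On the crawler issues, my wording may have been a bit extreme. If you think I got something wrong, feel free to discuss it in the comments.

- If you feel posts with this much personal opinion aren't good for your learning, you can also comment or message me privately, and I'll say less in the future.

- **This one matters most: if you think my posts are decent and that updating daily is hard work, please recommend the public account to friends around you who also like Python.**

- This one actually matters to me too: if you got something out of reading this, tap "在看" at the bottom right of the article to let me know.

- Code, data and HTML: as usual, reply boss to the public account to get this article's data, code and the HTML file generated by pyecharts...

That's all for today. If you found it helpful, remember to give it a like. You're welcome to follow my public account 【清风Python】, where assorted datasets are organized and available for download.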