From feature descriptors to deep learning: 20 years of computer vision

The original-text portion of this post is reproduced from the following source (hosted outside the Great Firewall):

Tombone’s Computer Vision Blog:

From feature descriptors to deep learning: 20 years of computer vision

Original link: http://www.computervisionblog.com/2015/01/from-feature-descriptors-to-deep.html

The translation portion of this post is a translation of the original.

Original text:

From feature descriptors to deep learning: 20 years of computer vision

We all know that deep convolutional neural networks have produced some stellar results on object detection and recognition benchmarks in the past two years (2012-2014), so you might wonder: what did the earlier object recognition techniques look like? How do the designs of earlier recognition systems relate to the modern multi-layer convolution-based framework?

Let’s take a look at some of the big ideas in Computer Vision from the last 20 years.

The rise of the local feature descriptors: ~1995 to ~2000

When SIFT (an acronym for Scale Invariant Feature Transform) was introduced by David Lowe in 1999, the world of computer vision research changed almost overnight. It was a robust solution to the problem of comparing image patches. Before SIFT entered the game, people were just using SSD (sum of squared differences) to compare patches and not giving it much thought.

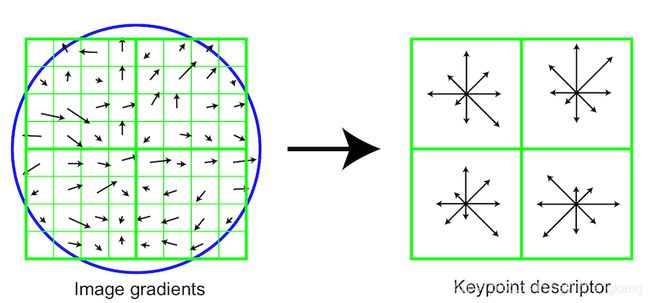

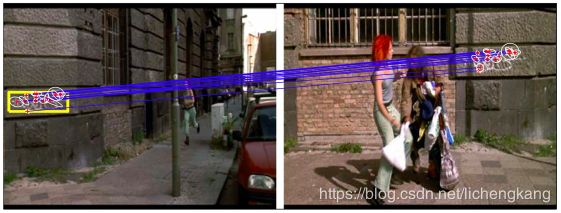

SIFT is something called a local feature descriptor – it is one of those research findings which is the result of one ambitious man hackplaying with pixels for more than a decade. Lowe and the University of British Columbia got a patent on SIFT and Lowe released a nice compiled binary of his very own SIFT implementation for researchers to use in their work. SIFT allows a point inside an RGB image to be represented robustly by a low dimensional vector. When you take multiple images of the same physical object while rotating the camera, the SIFT descriptors of corresponding points are very similar in their 128-D space. At first glance it seems silly that you need to do something as complex as SIFT, but believe me: just because you, a human, can look at two image patches and quickly “understand” that they belong to the same physical point, this is not the same for machines. SIFT had massive implications for the geometric side of computer vision (stereo, Structure from Motion, etc) and later became the basis for the popular Bag of Words model for object recognition.

Seeing a technique like SIFT dramatically outperform an alternative method like Sum-of-Squared-Distances (SSD) Image Patch Matching firsthand is an important step in every aspiring vision scientist’s career. And SIFT isn’t just a vector of filter bank responses, the binning and normalization steps are very important. It is also worthwhile noting that while SIFT was initially (in its published form) applied to the output of an interest point detector, later it was found that the interest point detection step was not important in categorization problems. For categorization, researchers eventually moved towards vector quantized SIFT applied densely across an image.
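To make the SSD comparison concrete, here is a minimal sketch in plain Python. It is not Lowe's SIFT: `orientation_histogram` is a hypothetical toy descriptor that keeps only two of the ingredients named above, binning of gradient orientations and normalization. Real SIFT builds its 128-D vector from a 4x4 grid of 8-bin orientation histograms and adds scale and rotation handling, all of which this sketch omits. The patch values are made up for illustration.

```python
import math

def ssd(a, b):
    """Sum of squared differences between two equally sized grayscale patches."""
    return sum((pa - pb) ** 2 for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))

def orientation_histogram(patch, bins=8):
    """Toy descriptor (an assumption, not SIFT): magnitude-weighted
    histogram of gradient orientations, followed by L2 normalization."""
    height, width = len(patch), len(patch[0])
    hist = [0.0] * bins
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = patch[y][x + 1] - patch[y][x - 1]
            gy = patch[y + 1][x] - patch[y - 1][x]
            magnitude = math.hypot(gx, gy)
            if magnitude == 0.0:
                continue
            angle = math.atan2(gy, gx) % (2 * math.pi)
            hist[int(angle / (2 * math.pi) * bins) % bins] += magnitude
    norm = math.sqrt(sum(v * v for v in hist)) or 1.0
    return [v / norm for v in hist]

def descriptor_distance(a, b):
    """Euclidean distance between the toy descriptors of two patches."""
    da, db = orientation_histogram(a), orientation_histogram(b)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(da, db)))

# A synthetic 8x8 patch and the same patch under a brightness/contrast change.
patch = [[(x * 3 + y * 7) % 50 for x in range(8)] for y in range(8)]
brighter = [[2 * v + 40 for v in row] for row in patch]

print("SSD:", ssd(patch, brighter))
print("descriptor distance:", descriptor_distance(patch, brighter))
```

The two patches show the same structure, yet the SSD is large because raw intensities differ. The binned, normalized descriptor barely changes, because the offset vanishes in the gradient and the gain is removed by normalization.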

I should also mention that other descriptors such as Spin Images (see my 2009 blog post on spin images) came out a little bit earlier than SIFT, but because Spin Images were solely applicable to 2.5D data, this feature’s impact wasn’t as great as that of SIFT.

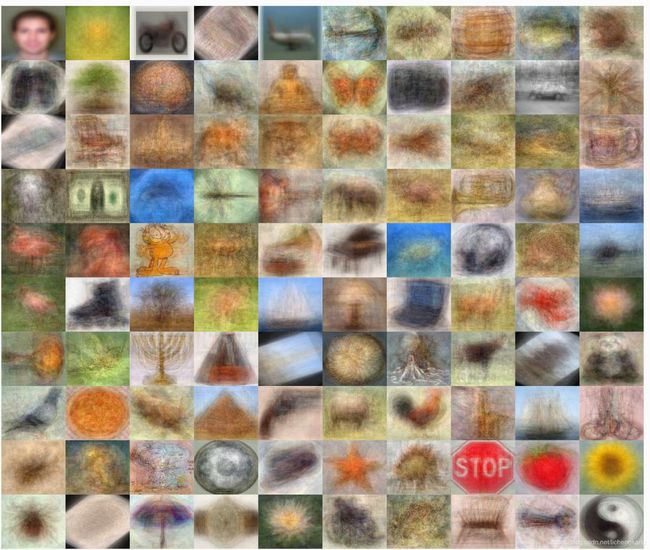

The modern dataset (aka the hardening of vision as science): ~2000 to ~2005

Homography estimation, ground-plane estimation, robotic vision, SfM, and all other geometric problems in vision greatly benefited from robust image features such as SIFT. But towards the end of the 1990s, it was clear that the internet was the next big thing. Images were going online. Datasets were being created. And no longer was the current generation solely interested in structure recovery (aka geometric) problems. This was the beginning of the large-scale dataset era with Caltech-101 slowly gaining popularity and categorization research on the rise. No longer were researchers evaluating their own algorithms on their own in-house datasets – we now had a more objective and standard way to determine if yours is bigger than mine. Even though Caltech-101 is considered outdated by 2015 standards, it is fair to think of this dataset as the Grandfather of the more modern ImageNet dataset. Thanks Fei-Fei Li.

Bins, Grids, and Visual Words (aka Machine Learning meets descriptors): ~2000 to ~2005

After the community shifted towards more ambitious object recognition problems and away from geometry recovery problems, we had a flurry of research in Bag of Words, Spatial Pyramids, Vector Quantization, as well as machine learning tools used in any and all stages of the computer vision pipeline. Raw SIFT was great for wide-baseline stereo, but it wasn’t powerful enough to provide matches between two distinct object instances from the same visual object category. What was needed was a way to encode the following ideas: object parts can deform relative to each other and some image patches can be missing. Overall, a much more statistical way to characterize objects was needed.

Visual Words were introduced by Josef Sivic and Andrew Zisserman in approximately 2003 and this was a clever way of taking algorithms from large-scale text matching and applying them to visual content. A visual dictionary can be obtained by performing unsupervised learning (basically just K-means) on SIFT descriptors which maps these 128-D real-valued vectors into integers (which are cluster center assignments). A histogram of these visual words is a fairly robust way to represent images. Variants of the Bag of Words model are still heavily utilized in vision research.
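The dictionary-plus-histogram idea can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the descriptors are made-up 2-D points standing in for 128-D SIFT vectors, and `kmeans` uses a naive initialization (the first k vectors) that a real system would not rely on.

```python
def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def nearest(centers, vector):
    """Index of the closest cluster center, i.e. the visual word id."""
    return min(range(len(centers)), key=lambda i: squared_distance(centers[i], vector))

def kmeans(vectors, k, iterations=10):
    """Plain K-means; naive init from the first k vectors (an assumption)."""
    centers = [list(v) for v in vectors[:k]]
    for _ in range(iterations):
        groups = [[] for _ in range(k)]
        for v in vectors:
            groups[nearest(centers, v)].append(v)
        for i, group in enumerate(groups):
            if group:  # keep the old center if a cluster went empty
                centers[i] = [sum(col) / len(group) for col in zip(*group)]
    return centers

def bag_of_words(descriptors, centers):
    """Histogram of visual word counts for one image."""
    hist = [0] * len(centers)
    for d in descriptors:
        hist[nearest(centers, d)] += 1
    return hist

# Toy "training" descriptors forming two obvious clusters.
training = [[0, 0], [10, 10], [0, 1], [1, 0], [10, 9], [9, 10]]
dictionary = kmeans(training, k=2)

# Descriptors extracted from one hypothetical image.
image_descriptors = [[0.5, 0.2], [9.5, 9.9], [0.1, 0.9]]
print(bag_of_words(image_descriptors, dictionary))  # [2, 1]
```

Two images can then be compared through their histograms, regardless of how many descriptors each one produced or where in the image they were found.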

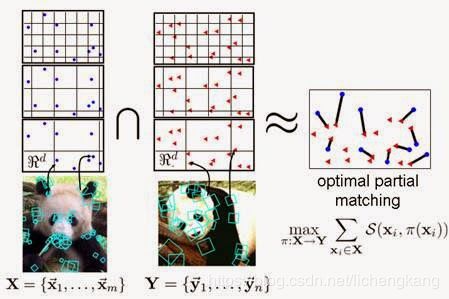

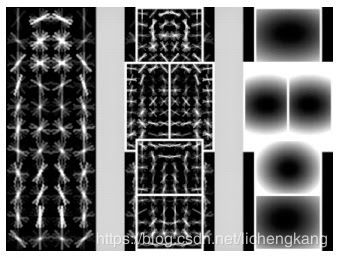

Another idea which was gaining traction at the time was the idea of using some sort of binning structure for matching objects. Caltech-101 images mostly contained objects, so these grids were initially placed around entire images, and later on they would be placed around object bounding boxes. Here is a picture from Kristen Grauman’s famous Pyramid Match Kernel paper which introduced a powerful and hierarchical way of integrating spatial information into the image matching process.

At some point it was not clear whether researchers should focus on better features, better comparison metrics, or better learning. In the mid 2000s it wasn’t clear if young PhD students should spend more time concocting new descriptors or kernelizing their support vector machines to death.

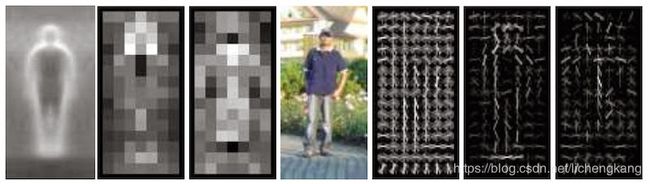

Object Templates (aka the reign of HOG and DPM): ~2005 to ~2010

Around 2005, a young researcher named Navneet Dalal showed the world just what can be done with his own new badass feature descriptor, HOG. (It is sometimes written as HoG, but because it is an acronym for “Histogram of Oriented Gradients” it should really be HOG. The confusion must have come from an earlier approach called DoG which stood for Difference of Gaussian, in which case the “o” should definitely be lower case.)

HOG came at a time when everybody was applying spatial binning to bags of words, using multiple layers of learning, and making their systems overly complicated. Dalal’s ingenious descriptor was actually quite simple. The seminal HOG paper was published in 2005 by Navneet and his PhD advisor, Bill Triggs. Triggs got his fame from earlier work on geometric vision, and Dr. Dalal got his fame from his newly found descriptor. HOG was initially applied to the problem of pedestrian detection, and one of the reasons it became so popular was that the machine learning tool used on top of HOG was quite simple and well understood: it was the linear Support Vector Machine.
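A rough sketch of the "HOG plus linear SVM" recipe, in plain Python, shows how little machinery is involved. It is a simplification and not the Dalal-Triggs implementation: it uses 9 unsigned orientation bins per cell but normalizes the whole descriptor at once instead of over overlapping blocks, and the weights and bias are hypothetical values, not a trained model.

```python
import math

def cell_histogram(image, y0, x0, size, bins=9):
    """Magnitude-weighted histogram of unsigned gradient orientations in one cell."""
    height, width = len(image), len(image[0])
    hist = [0.0] * bins
    for y in range(y0, y0 + size):
        for x in range(x0, x0 + size):
            # Central differences, clamped at the image border.
            gx = image[y][min(x + 1, width - 1)] - image[y][max(x - 1, 0)]
            gy = image[min(y + 1, height - 1)][x] - image[max(y - 1, 0)][x]
            magnitude = math.hypot(gx, gy)
            if magnitude == 0.0:
                continue
            angle = math.atan2(gy, gx) % math.pi  # unsigned: 0 to 180 degrees
            hist[int(angle / math.pi * bins) % bins] += magnitude
    return hist

def hog_descriptor(image, cell=4, bins=9):
    """Concatenate cell histograms and L2-normalize (simplified normalization)."""
    height, width = len(image), len(image[0])
    features = []
    for y0 in range(0, height - cell + 1, cell):
        for x0 in range(0, width - cell + 1, cell):
            features.extend(cell_histogram(image, y0, x0, cell, bins))
    norm = math.sqrt(sum(v * v for v in features)) or 1.0
    return [v / norm for v in features]

def linear_svm_score(weights, bias, features):
    """A linear SVM at test time is just a dot product plus a bias."""
    return sum(w * f for w, f in zip(weights, features)) + bias

# An 8x8 window containing a vertical edge.
window = [[0 if x < 4 else 255 for x in range(8)] for y in range(8)]
features = hog_descriptor(window)

# Hypothetical weights that reward horizontal gradients (bin 0 of every cell).
weights = [1.0 if i % 9 == 0 else 0.0 for i in range(len(features))]
print(linear_svm_score(weights, -1.0, features))
```

A positive score would be read as a detection for this window; training the weights is where the SVM solver comes in, which this sketch leaves out.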

I should point out that in 2008, a follow-up paper on object detection, which introduced a technique called the Deformable Parts-based Model (or DPM as we vision guys call it), helped reinforce the popularity and strength of the HOG technique. I personally jumped on the HOG bandwagon in about 2008. My first few years as a grad student (2005-2008) I was hackplaying with my own vector quantized filter bank responses, and definitely developed some strong intuition regarding features. In the end I realized that my own features were only “okay,” and because I was applying them to the outputs of image segmentation algorithms they were extremely slow. Once I started using HOG, it didn’t take me long to realize there was no going back to custom, slow, features. Once I started using a multiscale feature pyramid with a slightly improved version of HOG introduced by master hackers such as Ramanan and Felzenszwalb, I was processing images at 100x the speed of multiple segmentations + custom features (my earlier work).

DPM was the reigning champ on the PASCAL VOC challenge, and one of the reasons why it became so popular was the excellent MATLAB/C++ implementation by Ramanan and Felzenszwalb. I still know many researchers who never fully acknowledged what releasing such great code really meant for the fresh generation of incoming PhD students, but at some point it seemed like everybody was modifying the DPM codebase for their own CVPR attempts. Too many incoming students were lacking solid software engineering skills, and giving them the DPM code was a surefire way to get some experiments up and running. Personally, I never jumped on the parts-based methodology, but I did take apart the DPM codebase several times. However, when I put it back together, the Exemplar-SVM was the result.

Big data, Convolutional Neural Networks and the promise of Deep Learning: ~2010 to ~2015

Sometime around 2008, it was pretty clear that scientists were getting more and more comfortable with large datasets. It wasn’t just the rise of “Cloud Computing” and “Big Data,” it was the rise of the data scientists: hacking on equations by morning, developing a prototype during lunch, deploying large scale computations in the evening, and integrating the findings into a production system by sunset. I spent two summers at Google Research, where I saw lots of guys who had made their fame as vision hackers. But they weren’t just writing “academic” papers at Google – they were sharding datasets with one hand, compiling results for their managers, writing Borg scripts in their sleep, and piping results into gnuplot (because Jedis don’t need GUIs?). It was pretty clear that big data and a DevOps mentality were here to stay, and the vision researcher of tomorrow would be quite comfortable with large datasets. No longer did you need one guy with a mathy PhD, one software engineer, one manager, and one tester. There were plenty of guys who could do all of those jobs.

Deep Learning: 1980s - 2015

2014 was definitely a big year for Deep Learning. What’s interesting about Deep Learning is that it is a very old technique. What we’re seeing now is essentially the Neural Network 2.0 revolution – but this time around, we’re 20 years ahead R&D-wise and our computers are orders of magnitude faster. And what’s funny is that the same guys that were championing such techniques in the early 90s were the same guys we were laughing at in the late 90s (because clearly convex methods were superior to the magical NN learning-rate knobs). I guess they really had the last laugh because eventually these relentless neural network gurus became the same guys we now all look up to. Geoffrey Hinton, Yann LeCun, Andrew Ng, and Yoshua Bengio are the 4 Titans of Deep Learning. By now, just about everybody has jumped ship to become a champion of Deep Learning.

But with Google, Facebook, Baidu, and a multitude of little startups riding the Deep Learning wave, who will rise to the top as the master of artificial intelligence?

How do today’s deep learning systems resemble the recognition systems of yesteryear?

Multiscale convolutional neural networks aren’t that much different than the feature-based systems of the past. The first level neurons in deep learning systems learn to utilize gradients in a way that is similar to hand-crafted features such as SIFT and HOG. Objects used to be found in a sliding-window fashion, but now it is easier and sexier to think of this operation as convolving an image with a filter. Some of the best detection systems used to use multiple linear SVMs, combined in some ad-hoc way, and now we are essentially using even more of such linear decision boundaries. Deep learning systems can be thought of as multiple stages of applying linear operators and piping them through a non-linear activation function, but deep learning is more similar to a clever combination of linear SVMs than a memory-ish Kernel-based learning system.
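The equivalence between sliding-window scoring and convolution can be sketched directly. The toy below, in plain Python, scores a linear template at every window position and then applies a ReLU, which is one "linear operator plus non-linearity" stage. The image, template, and bias are made-up values, and the operation is the unflipped cross-correlation that deep learning libraries usually call convolution.

```python
def sliding_window_scores(image, template, bias=0.0):
    """Score a linear template at every window position.

    Each score is a dot product between the template and the window under it,
    so the full score map is a 'valid' cross-correlation of image and template.
    """
    th, tw = len(template), len(template[0])
    rows = len(image) - th + 1
    cols = len(image[0]) - tw + 1
    return [
        [
            bias + sum(
                template[i][j] * image[y + i][x + j]
                for i in range(th)
                for j in range(tw)
            )
            for x in range(cols)
        ]
        for y in range(rows)
    ]

def relu(score_map):
    """Non-linear activation applied element-wise to the score map."""
    return [[max(0.0, s) for s in row] for row in score_map]

image = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
template = [[1, 1], [1, 1]]  # hypothetical 2x2 "object" template

response = relu(sliding_window_scores(image, template, bias=-3.0))
print(response)  # [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 0.0]]
```

Only the window that fully covers the bright square clears the bias, which is the same thresholded linear decision a sliding-window detector makes. Stacking several such stages gives the layered structure the paragraph describes.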

Features these days aren’t engineered by hand. However, architectures of Deep systems are still being designed manually – and it looks like the experts are the best at this task. The operations on the inside of both classic and modern recognition systems are still very much the same. You still need to be clever to play in the game, but now you need a big computer. There’s still a lot of room for improvement, so I encourage all of you to be creative in your research.

Research-wise, it never hurts to know where we have been before so that we can better plan for our journey ahead. I hope you enjoyed this brief history lesson and the next time you look for insights in your research, don’t be afraid to look back.

To learn more about computer vision techniques:

SIFT article on Wikipedia

Bag of Words article on Wikipedia

HOG article on Wikipedia

Deformable Part-based Model Homepage

Pyramid Match Kernel Homepage

“Video Google” Image Retrieval System

Some Computer Vision datasets:

Caltech-101 Dataset

ImageNet Dataset

To learn about the people mentioned in this article:

Kristen Grauman (creator of Pyramid Match Kernel, Prof at Univ of Texas)

Bill Triggs (co-creator of HOG, Researcher at INRIA)

Navneet Dalal (co-creator of HOG, now at Google)

Yann LeCun (one of the Titans of Deep Learning, at NYU and Facebook)

Geoffrey Hinton (one of the Titans of Deep Learning, at Univ of Toronto and Google)

Andrew Ng (leading the Deep Learning effort at Baidu, Prof at Stanford)

Yoshua Bengio (one of the Titans of Deep Learning, Prof at U Montreal)

Deva Ramanan (one of the creators of DPM, Prof at UC Irvine)

Pedro Felzenszwalb (one of the creators of DPM, Prof at Brown)

Fei-fei Li (Caltech101 and ImageNet, Prof at Stanford)

Josef Sivic (Video Google and Visual Words, Researcher at INRIA/ENS)

Andrew Zisserman (Geometry-based methods in vision, Prof at Oxford)

Andrew E. Johnson (SPIN Images creator, Researcher at JPL)

Martial Hebert (Geometry-based methods in vision, Prof at CMU)

Translation:

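We all know that deep convolutional neural networks have produced some remarkable results on object detection and recognition benchmarks over the past two years (2012-2014), so you may ask: what did earlier object recognition techniques look like? How do the designs of earlier recognition systems relate to the modern framework built on multi-layer convolution?

Let’s look at some of the big ideas in computer vision from the past 20 years.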

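The rise of local feature descriptors: ~1995 to ~2000

When David Lowe introduced SIFT (short for Scale Invariant Feature Transform) in 1999, the world of computer vision research changed almost overnight. It was a robust solution to the problem of comparing image patches. Before SIFT came along, people simply used SSD (sum of squared differences) to compare patches and did not give it much thought.

SIFT is what is known as a local feature descriptor – it is one of those research results that came from one ambitious man hacking on pixels for more than a decade. Lowe and the University of British Columbia obtained a patent on SIFT, and Lowe released a nicely compiled binary of his own SIFT implementation for researchers to use in their work. SIFT allows a point in an RGB image to be represented robustly by a low-dimensional vector. When you take multiple images of the same physical object while rotating the camera, the SIFT descriptors of corresponding points are very similar in their 128-dimensional space. At first glance it may seem silly to need something as complex as SIFT, but trust me: just because you, a human, can look at two image patches and quickly “understand” that they belong to the same physical point does not mean a machine can do the same. SIFT had a huge impact on the geometric side of computer vision (stereo, Structure from Motion, etc.) and later became the basis of the popular Bag of Words model for object recognition.

Seeing firsthand a technique like SIFT dramatically outperform an alternative such as sum-of-squared-differences (SSD) image patch matching is an important step in the career of every aspiring vision scientist. SIFT is not just a vector of filter bank responses; the binning and normalization steps are very important. It is also worth noting that while SIFT was initially (in its published form) applied to the output of an interest point detector, it was later found that the interest point detection step is not important in categorization problems. For categorization, researchers eventually moved to vector-quantized SIFT applied densely across the image.

I should also mention that other descriptors, such as Spin Images (see my 2009 blog post on Spin Images), appeared slightly earlier than SIFT, but because Spin Images apply only to 2.5D data, that feature’s impact was not as great as SIFT’s.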

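The modern dataset (aka the hardening of vision as a science): ~2000 to ~2005

Homography estimation, ground-plane estimation, robotic vision, SfM, and all other geometric problems in vision benefited greatly from robust image features such as SIFT. But by the end of the 1990s it was clear that the internet was the next big thing. Images were going online. Datasets were being created. The current generation was no longer interested only in structure recovery (aka geometric) problems. This was the start of the large-scale dataset era, with Caltech-101 slowly gaining popularity and categorization research on the rise. Researchers were no longer evaluating their own algorithms on their own in-house datasets – we now had a more objective, standard way to determine whether your algorithm beats mine. Although Caltech-101 is outdated by 2015 standards, it is fair to regard this dataset as the grandfather of the more modern ImageNet dataset. Thanks, Fei-Fei Li.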

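Bins, Grids, and Visual Words (aka machine learning meets descriptors): ~2000 to ~2005

After the community shifted toward more ambitious object recognition problems and away from geometry recovery problems, there was a flurry of research on Bag of Words, Spatial Pyramids, Vector Quantization, and machine learning tools used at any and all stages of the computer vision pipeline. Raw SIFT worked very well for wide-baseline stereo, but it was not powerful enough to provide matches between two different object instances from the same visual object category. What was needed was a way to encode the following ideas: object parts can deform relative to each other, and some image patches can be missing. Overall, a much more statistical way of characterizing objects was needed.

Josef Sivic and Andrew Zisserman introduced Visual Words around 2003, a clever way of taking algorithms from large-scale text matching and applying them to visual content. A visual dictionary can be obtained by running unsupervised learning (basically just K-means) on SIFT descriptors, which maps these 128-dimensional real-valued vectors to integers (the cluster center assignments). A histogram of these visual words is a fairly robust way to represent an image. Variants of the Bag of Words model are still heavily used in vision research.

Another idea gaining traction at the time was to use some sort of binning structure for matching objects. Caltech-101 images mostly contain objects, so these grids were initially placed around entire images and later around object bounding boxes. Here is a picture from Kristen Grauman’s famous Pyramid Match Kernel paper, which introduced a powerful, hierarchical way of integrating spatial information into the image matching process.

At some point it was not clear whether researchers should focus on better features, better comparison metrics, or better learning. In the mid-2000s it was not clear whether young PhD students should spend more time concocting new descriptors or kernelizing their support vector machines to death.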

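Object Templates (aka the reign of HOG and DPM): ~2005 to ~2010

Around 2005, a young researcher named Navneet Dalal showed the world what could be done with his own new feature descriptor, HOG. (It is sometimes written HoG, but because it is an acronym for “Histogram of Oriented Gradients” it should really be HOG. The confusion must have come from an earlier approach called DoG, which stood for Difference of Gaussian, in which case the “o” should definitely be lowercase.)

HOG arrived at a time when everybody was applying spatial binning to bags of words, using multiple layers of learning, and making their systems overly complicated. Dalal’s ingenious descriptor was actually quite simple. The seminal HOG paper was published in 2005 by Navneet and his PhD advisor, Bill Triggs. Triggs made his name with earlier work on geometric vision, and Dr. Dalal made his with his newly found descriptor. HOG was initially applied to pedestrian detection, and one reason it became so popular was that the machine learning tool used on top of HOG was simple and well understood: the linear Support Vector Machine.

I should point out that in 2008 a follow-up paper on object detection, which introduced a technique called the Deformable Parts-based Model (or DPM, as we vision people call it), helped reinforce the popularity and strength of HOG. I personally joined the HOG camp around 2008. During my first few years as a grad student (2005-2008) I had been working with my own vector-quantized filter bank responses, and I definitely developed some strong intuition about features. In the end I realized that my own features were only “okay,” and because I was applying them to the outputs of image segmentation algorithms they were extremely slow. Once I started using HOG, I quickly realized there was no going back to custom, slow features. Once I started using a multiscale feature pyramid with a slightly improved version of HOG, introduced by master hackers such as Ramanan and Felzenszwalb, I was processing images 100 times faster than with multiple segmentations + custom features (my earlier work).

DPM was the reigning champion of the PASCAL VOC challenge, and one reason it became so popular was the excellent MATLAB/C++ implementation by Ramanan and Felzenszwalb. I still know many researchers who never fully appreciated what releasing such great code meant for the new generation of PhD students, but at some point it seemed as if everybody was modifying the DPM codebase for their own CVPR attempts. Too many incoming students lacked solid software engineering skills, and handing them the DPM code was a reliable way to get some experiments up and running. Personally, I never adopted the parts-based methodology, but I did take the DPM codebase apart several times. When I put it back together, however, the result was the Exemplar-SVM.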

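Big data, Convolutional Neural Networks and the promise of Deep Learning: ~2010 to ~2015

Sometime around 2008, it was clear that scientists were becoming more and more comfortable with large datasets. It was not just the rise of “Cloud Computing” and “Big Data,” it was the rise of the data scientist: hacking on equations in the morning, developing a prototype over lunch, deploying large-scale computations in the evening, and integrating the findings into a production system by sunset. I spent two summers at Google Research, where I saw many people who had made their names as vision hackers. But they were not just writing “academic” papers at Google – they were sharding datasets with one hand, compiling results for their managers, writing Borg scripts in their sleep, and piping results into gnuplot (because Jedis don’t need GUIs?). It was clear that big data and a DevOps mentality were here to stay, and that the vision researcher of tomorrow would be quite comfortable with large datasets. You no longer needed one person with a mathy PhD, one software engineer, one manager, and one tester. Plenty of people could do all of those jobs.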

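Deep Learning: 1980s to 2015

2014 was definitely a big year for Deep Learning. What is interesting about Deep Learning is that it is a very old technique. What we are seeing now is essentially the Neural Network 2.0 revolution – but this time we are 20 years ahead in R&D and our computers are orders of magnitude faster. The funny thing is that the people championing these techniques in the early 90s were the same people we were laughing at in the late 90s (because convex methods were clearly superior to the magical neural network learning-rate knobs). I guess they really had the last laugh, because these relentless neural network gurus eventually became the people we all now look up to. Geoffrey Hinton, Yann LeCun, Andrew Ng, and Yoshua Bengio are the four Titans of Deep Learning. By now, just about everybody has jumped ship to become a champion of Deep Learning.

But with Google, Facebook, Baidu, and a multitude of small startups riding the Deep Learning wave, who will rise to the top as the master of artificial intelligence?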

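How do today’s deep learning systems resemble the recognition systems of the past?

Multiscale convolutional neural networks are not that different from the feature-based systems of the past. The first-level neurons in deep learning systems learn to use gradients in a way that is similar to hand-crafted features such as SIFT and HOG. Objects used to be found in a sliding-window fashion, but now it is easier and sexier to think of this operation as convolving an image with a filter. Some of the best detection systems used to use multiple linear SVMs combined in some ad-hoc way, and now we are essentially using even more of such linear decision boundaries. Deep learning systems can be thought of as multiple stages of applying linear operators and piping the results through a non-linear activation function, but deep learning is more like a clever combination of linear SVMs than a memory-based, kernel-based learning system.

Features these days are not engineered by hand. However, the architectures of deep systems are still designed manually – and it looks like the experts are the best at this task. The operations inside classic and modern recognition systems are still very much the same. You still need to be clever to play the game, but now you need a big computer. There is still a lot of room for improvement, so I encourage all of you to be creative in your research.

Research-wise, it never hurts to know where we have been, so that we can better plan the journey ahead. I hope you enjoyed this brief history lesson, and the next time you look for insights in your research, don’t be afraid to look back.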

To learn more about computer vision techniques:

SIFT article on Wikipedia

Bag of Words article on Wikipedia

HOG article on Wikipedia

Deformable Part-based Model Homepage

Pyramid Match Kernel Homepage

“Video Google” Image Retrieval System

Some Computer Vision datasets:

Caltech-101 Dataset

ImageNet Dataset

To learn about the people mentioned in this article:

Kristen Grauman (creator of Pyramid Match Kernel, Prof at Univ of Texas)

Bill Triggs (co-creator of HOG, Researcher at INRIA)

Navneet Dalal (co-creator of HOG, now at Google)

Yann LeCun (one of the Titans of Deep Learning, at NYU and Facebook)

Geoffrey Hinton (one of the Titans of Deep Learning, at Univ of Toronto and Google)

Andrew Ng (leading the Deep Learning effort at Baidu, Prof at Stanford)

Yoshua Bengio (one of the Titans of Deep Learning, Prof at U Montreal)

Deva Ramanan (one of the creators of DPM, Prof at UC Irvine)

Pedro Felzenszwalb (one of the creators of DPM, Prof at Brown)

Fei-fei Li (Caltech101 and ImageNet, Prof at Stanford)

Josef Sivic (Video Google and Visual Words, Researcher at INRIA/ENS)

Andrew Zisserman (Geometry-based methods in vision, Prof at Oxford)

Andrew E. Johnson (SPIN Images creator, Researcher at JPL)

Martial Hebert (Geometry-based methods in vision, Prof at CMU)