Kubernetes (k8s v1.15.0) + Docker (v18.06) + Spring Boot 2.2 jar project: a complete beginner's walkthrough of installation and deployment

文章目录

- 一 写在前面

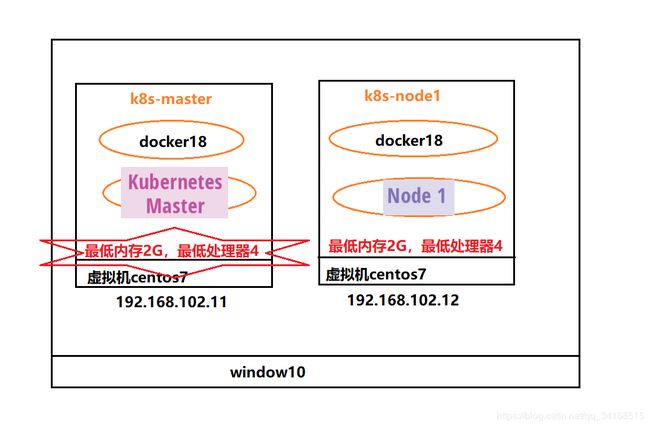

- 1、我的项目结构图

- 2、k8s的基础知识(如果没兴趣可以跳到实际操作)

- 二、配置两台虚拟机

- master和slave节点的虚拟机

- 安装docker, master和slave节点虚拟机一起安装

- 安装kubeadm、kubelet、kubectl,master和slave节点虚拟机一起安装

- 部署master节点

- 部署网络插件(发现镜像下载不了,可以评论回复,我可以帮组你)

- 部署slave节点

- 三、配置jar包和dockerfile

- 四、安装镜像

- 五、编写k8s容器编排文件

- 六、开始容器编排

- 七、结语

I. Introduction

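Some people find the name kubernetes too long: it is k + ubernete + s, with exactly 8 letters between the k and the s, so for convenience it is simply called k8s. Throughout this article, kubernetes is referred to as k8s.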

1. My project architecture

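Note: this project uses two VMs, and each VM needs at least 2 GB of memory (kubeadm's documented minimum is 2 GB of RAM per machine and 2 CPUs for the control-plane node). Otherwise you will run into serious problems, because some k8s pods need a lot of memory.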

Versions:

- k8s: v1.15, adequate for typical applications at the time of writing (early 2020)

- Docker: 18 (18.06.0-ce is installed below)

2. k8s basics (skip to the hands-on part if you're not interested)

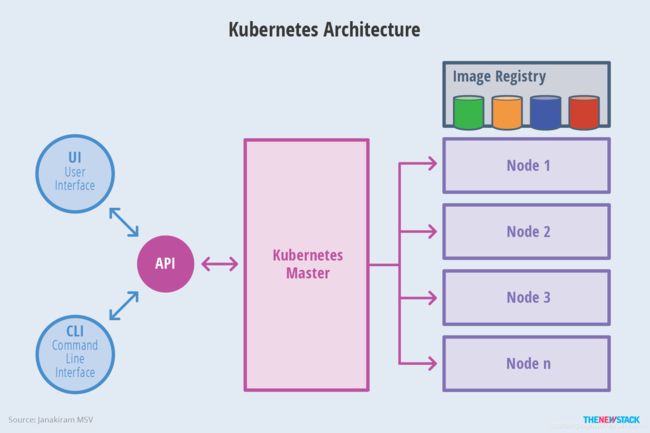

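- One master and several nodes; this example has just one node

- Docker supplies the container images and runs the containers

- The API (kube-apiserver) is the interface used by both users and command-line tools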

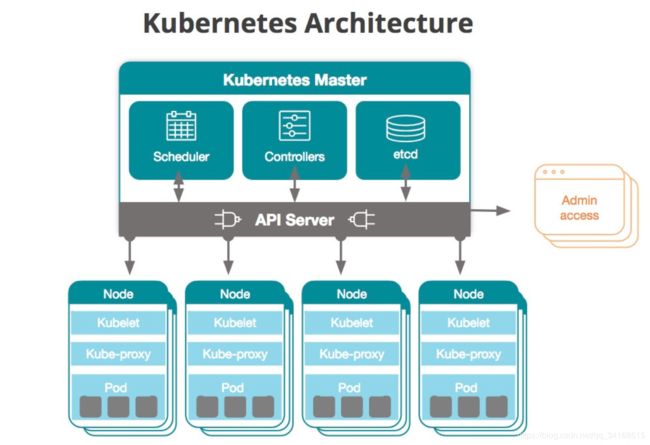

| Host | IP address | Role | Components |
|---|---|---|---|
| k8s-master | 192.168.102.11 | master | kube-scheduler, kube-controller-manager, etcd, kube-apiserver |
| k8s-node1 | 192.168.102.12 | node1 | kube-proxy |

II. Configure the two VMs

The master and slave VMs

- Rename the master and slave VMs

1.1 Rename the master (CentOS 7)

hostnamectl set-hostname k8s-master

Reboot the machine after the change.

1.2 Rename the slave (CentOS 7)

hostnamectl set-hostname k8s-node1

- master和slave节点虚拟机修改固定ip地址(不懂可以百度)

-

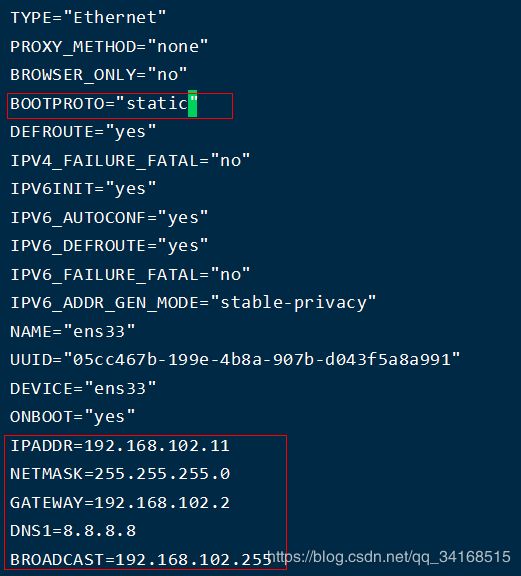

2.1 master修改固定ip

vi /etc/sysconfig/network-scripts/ifcfg-ens33

重启网络 service network restart -

2.2 Static IP on the slave

vi /etc/sysconfig/network-scripts/ifcfg-ens33

...

BOOTPROTO="static"

...

IPADDR=192.168.102.12

NETMASK=255.255.255.0

GATEWAY=192.168.102.2

DNS1=8.8.8.8

BROADCAST=192.168.102.255

Restart the network: service network restart
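As a quick sanity check on the static-IP settings above, the sketch below (a hypothetical helper class `SubnetCheck`, not part of the article's project) computes the network and broadcast addresses from IPADDR and NETMASK and confirms that GATEWAY sits in the same subnet. With 192.168.102.12 and 255.255.255.0 the network is 192.168.102.0 and the broadcast is 192.168.102.255, which matches BROADCAST above.

```java
// Hypothetical helper: checks ifcfg-ens33 values (IPADDR, NETMASK, GATEWAY, BROADCAST).
final class SubnetCheck {
    // Parse a dotted IPv4 address such as "192.168.102.12" into a 32-bit int.
    static int toInt(String ipv4) {
        String[] parts = ipv4.split("\\.");
        if (parts.length != 4) {
            throw new IllegalArgumentException("not an IPv4 address: " + ipv4);
        }
        int value = 0;
        for (String part : parts) {
            int octet = Integer.parseInt(part);
            if (octet < 0 || octet > 255) {
                throw new IllegalArgumentException("bad octet in " + ipv4);
            }
            value = (value << 8) | octet;
        }
        return value;
    }

    // Format a 32-bit int back into dotted notation (>>> avoids sign extension).
    static String toDotted(int value) {
        return ((value >>> 24) & 0xff) + "." + ((value >>> 16) & 0xff) + "."
                + ((value >>> 8) & 0xff) + "." + (value & 0xff);
    }

    // Network address = IP AND netmask
    static String network(String ip, String netmask) {
        return toDotted(toInt(ip) & toInt(netmask));
    }

    // Broadcast address = network address OR (NOT netmask)
    static String broadcast(String ip, String netmask) {
        return toDotted((toInt(ip) & toInt(netmask)) | ~toInt(netmask));
    }

    // The gateway must be on the same subnet as the host.
    static boolean sameSubnet(String a, String b, String netmask) {
        return network(a, netmask).equals(network(b, netmask));
    }
}
```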

- Edit /etc/hosts (on both the master and slave VMs)

cat >> /etc/hosts << EOF

192.168.102.11 k8s-master

192.168.102.12 k8s-node1

EOF
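The hosts entries only help if every node maps each name to the intended IP. A minimal sketch of reading such a file into a name-to-IP map, assuming the file has already been read into a list of lines; `HostsFile` is a hypothetical name, and keeping the first entry for a duplicated name reflects the usual behaviour of lookups stopping at the first match.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper: parse /etc/hosts-style lines into hostname -> IP.
final class HostsFile {
    static Map<String, String> parse(List<String> lines) {
        Map<String, String> byName = new LinkedHashMap<>();
        for (String raw : lines) {
            // Drop anything after '#', then split the rest on whitespace
            int hash = raw.indexOf('#');
            String line = (hash >= 0 ? raw.substring(0, hash) : raw).trim();
            if (line.isEmpty()) {
                continue;
            }
            String[] fields = line.split("\\s+");
            // First field is the IP; every following field is a name or alias for it
            for (int i = 1; i < fields.length; i++) {
                byName.putIfAbsent(fields[i], fields[0]);
            }
        }
        return byName;
    }
}
```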

- Disable the firewall (on both the master and slave VMs)

systemctl stop firewalld && systemctl disable firewalld

- Disable swap (on both the master and slave VMs; by default the kubelet refuses to start while swap is enabled)

swapoff -a

yes | cp /etc/fstab /etc/fstab_bak

cat /etc/fstab_bak |grep -v swap > /etc/fstab
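The `grep -v swap` line keeps every fstab line that does not contain the substring "swap". The Java sketch below (hypothetical class `FstabFilter`) models exactly that filter, which also makes clear that it is a plain substring match: any other line mentioning "swap", comments included, is dropped as well.

```java
import java.util.List;
import java.util.stream.Collectors;

// Hypothetical model of: cat /etc/fstab_bak | grep -v swap > /etc/fstab
final class FstabFilter {
    // Keep only the lines that do NOT contain "swap" anywhere.
    static List<String> withoutSwap(List<String> fstabLines) {
        return fstabLines.stream()
                .filter(line -> !line.contains("swap"))
                .collect(Collectors.toList());
    }
}
```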

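- Adjust the iptables-related kernel parameters (on both the master and slave VMs)

Some CentOS 7 users have reported traffic being routed incorrectly because iptables was bypassed. Create /etc/sysctl.d/k8s.conf with the following content:

cat <<EOF > /etc/sysctl.d/k8s.conf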

vm.swappiness = 0

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

EOF

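- Apply the settings (load br_netfilter first, because the net.bridge.* keys only exist once that module is loaded)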

modprobe br_netfilter

sysctl -p /etc/sysctl.d/k8s.conf
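`sysctl -p` writes each key from k8s.conf into the matching file under /proc/sys, with the dots in the key turned into slashes. A hedged sketch of that mapping (hypothetical class `SysctlConf`), assuming the sysctl.d convention that blank lines and lines starting with # or ; are ignored; you could use `procPath` to find the file to `cat` when checking that a value was applied.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper: parse "key = value" lines from a sysctl.d file.
final class SysctlConf {
    static Map<String, String> parse(List<String> lines) {
        Map<String, String> settings = new LinkedHashMap<>();
        for (String raw : lines) {
            String line = raw.trim();
            // Blank lines and '#' / ';' comments are ignored
            if (line.isEmpty() || line.startsWith("#") || line.startsWith(";")) {
                continue;
            }
            int eq = line.indexOf('=');
            if (eq < 0) {
                continue;
            }
            settings.put(line.substring(0, eq).trim(), line.substring(eq + 1).trim());
        }
        return settings;
    }

    // net.bridge.bridge-nf-call-iptables -> /proc/sys/net/bridge/bridge-nf-call-iptables
    static String procPath(String key) {
        return "/proc/sys/" + key.replace('.', '/');
    }
}
```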

Install Docker (on both the master and slave VMs)

- Install the required packages

yum install -y yum-utils device-mapper-persistent-data lvm2

- Add the Docker repository, using the Aliyun mirror (reachable from inside China):

yum-config-manager \

--add-repo \

http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

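- Install Docker

yum install -y docker-ce-18.06.0.ce-3.el7

- Create the daemon.json config file

Note that this sets cgroupdriver=systemd; also, because pulling images from inside China is slow, an Aliyun registry mirror is added at the end (replace the placeholder with your own accelerator address).

The following commands can be pasted and run in one go:

mkdir /etc/docker

cat > /etc/docker/daemon.json <<EOF
{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "registry-mirrors": ["https://<your-accelerator-id>.mirror.aliyuncs.com"]
}
EOF

- Restart the Docker service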

systemctl daemon-reload && systemctl restart docker && systemctl enable docker

- Check that Docker is installed correctly

docker version
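To confirm the daemon.json setting took effect, look at the "Cgroup Driver" line of `docker info`; kubeadm's preflight checks warn when Docker uses cgroupfs instead of the recommended systemd driver. A minimal sketch that extracts that line from the text output, assuming the output has already been captured into a string (`DockerInfo` is a hypothetical name):

```java
import java.util.Optional;

// Hypothetical helper: find "Cgroup Driver: <value>" in `docker info` output.
final class DockerInfo {
    static Optional<String> cgroupDriver(String dockerInfoOutput) {
        // \R matches any line break (\n, \r\n, ...)
        for (String line : dockerInfoOutput.split("\\R")) {
            String t = line.trim();
            if (t.startsWith("Cgroup Driver:")) {
                return Optional.of(t.substring("Cgroup Driver:".length()).trim());
            }
        }
        return Optional.empty();
    }
}
```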

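Install kubeadm, kubelet and kubectl (on both the master and slave VMs)

Official installation documentation

- kubelet: the core component that runs on every node in the cluster; it performs operations such as starting pods and containers.

- kubeadm: the command-line tool that bootstraps a k8s cluster; it is used to initialize the cluster.

- kubectl: the Kubernetes command-line tool. With kubectl you can deploy and manage applications, inspect resources, and create, delete and update components.

- Configure the kubernetes.repo source. The official repository is not reachable from inside China, so the Aliyun yum mirror is used here:

cat <<EOF > /etc/yum.repos.d/kubernetes.repo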

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

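- Install kubelet, kubeadm and kubectl on all nodes

yum install -y kubelet-1.15.0-0 kubeadm-1.15.0-0 kubectl-1.15.0-0

- Start the kubelet service (it keeps restarting until kubeadm init or kubeadm join gives it a configuration; that is expected)

systemctl enable kubelet && systemctl start kubelet

Deploy the master node

The full official documentation is here: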

https://kubernetes.io/docs/setup/independent/create-cluster-kubeadm/

https://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm-init/

- Initialize the master node (run on the master):

kubeadm init \

--apiserver-advertise-address=192.168.102.11 \

--image-repository registry.aliyuncs.com/google_containers \

--kubernetes-version v1.15.0 \

--pod-network-cidr=10.244.0.0/16

- 1.1 Run the command above; the output looks like this:

[root@k8s-master ~]# kubeadm init \

> --apiserver-advertise-address=192.168.102.11 \

> --image-repository registry.aliyuncs.com/google_containers \

> --kubernetes-version v1.15.0 \

> --pod-network-cidr=10.244.0.0/16

[init] Using Kubernetes version: v1.15.0

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Activating the kubelet service

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.102.11 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.102.11 127.0.0.1 ::1]

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s-master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.102.11]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 23.503985 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.15" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node k8s-master as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node k8s-master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: ykt5ca.46u2ps8pvruc4xtf

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.102.11:6443 --token ykt5ca.46u2ps8pvruc4xtf \

--discovery-token-ca-cert-hash sha256:987a9babb3c0ab5ec79d15358918ef656918dd486772b822fe317fcc45fe7c5f

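- 1.2 What the initialization does (be sure to record the kubeadm join command at the end of the output; you need it when deploying the worker node):

- [preflight] kubeadm runs checks before initializing.

- [kubelet-start] writes the kubelet configuration file "/var/lib/kubelet/config.yaml".

- [certs] generates the various certificates and keys.

- [kubeconfig] generates the kubeconfig files that the kubelet and other components use to communicate with the master.

- [control-plane] installs the master components, pulling their Docker images from the specified registry.

- [bootstrap-token] generates a token; note it down, because kubeadm join needs it later to add nodes to the cluster.

- [addons] installs the CoreDNS and kube-proxy add-ons.

- The Kubernetes master initialized successfully, and the output shows how to configure a regular user to access the cluster with kubectl.

- It shows how to install a pod network.

- It shows how to register other nodes with the cluster.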
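The token from the [bootstrap-token] phase has a fixed shape: a 6-character token ID and a 16-character secret, both made of lowercase letters and digits, joined by a dot. The sketch below (hypothetical class `BootstrapToken`) validates and splits it. Tokens created by kubeadm init expire after 24 hours by default; running `kubeadm token create --print-join-command` on the master prints a fresh join command if the original one has expired.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: validate a kubeadm bootstrap token "<token-id>.<token-secret>".
final class BootstrapToken {
    // 6 chars of [a-z0-9], a dot, then 16 chars of [a-z0-9]
    private static final Pattern FORMAT = Pattern.compile("^([a-z0-9]{6})\\.([a-z0-9]{16})$");

    // Returns {tokenId, tokenSecret}, or throws if the token is malformed.
    static String[] split(String token) {
        Matcher m = FORMAT.matcher(token);
        if (!m.matches()) {
            throw new IllegalArgumentException("not a bootstrap token: " + token);
        }
        return new String[] {m.group(1), m.group(2)};
    }
}
```

For the token in this article, `split("ykt5ca.46u2ps8pvruc4xtf")` yields the ID `ykt5ca` and the secret `46u2ps8pvruc4xtf`.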

- 1.3 Of the output above, only the last part matters

Be sure to copy the following command; the slave node needs it:

kubeadm join 192.168.102.11:6443 --token ykt5ca.46u2ps8pvruc4xtf \
    --discovery-token-ca-cert-hash sha256:987a9babb3c0ab5ec79d15358918ef656918dd486772b822fe317fcc45fe7c5f
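The --discovery-token-ca-cert-hash value lets the joining node verify that it is talking to the right master: it is the SHA-256 hash of the cluster CA certificate's public key (the DER-encoded SubjectPublicKeyInfo), prefixed with `sha256:`. A sketch of that computation in Java, assuming the CA certificate sits at /etc/kubernetes/pki/ca.crt on the master (the certificateDir shown in the init output); the class name `CaCertHash` is hypothetical.

```java
import java.io.InputStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.security.PublicKey;
import java.security.cert.CertificateFactory;
import java.security.cert.X509Certificate;

// Hypothetical helper: recompute the --discovery-token-ca-cert-hash value.
final class CaCertHash {
    static String ofPublicKey(PublicKey key) {
        try {
            // getEncoded() returns the X.509 SubjectPublicKeyInfo DER bytes
            byte[] digest = MessageDigest.getInstance("SHA-256").digest(key.getEncoded());
            StringBuilder hex = new StringBuilder("sha256:");
            for (byte b : digest) {
                hex.append(String.format("%02x", b & 0xff));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("SHA-256 should always be available", e);
        }
    }

    // Reads a PEM certificate such as /etc/kubernetes/pki/ca.crt
    static String ofCertificateFile(Path caCrt) throws Exception {
        try (InputStream in = Files.newInputStream(caCrt)) {
            X509Certificate cert = (X509Certificate)
                    CertificateFactory.getInstance("X.509").generateCertificate(in);
            return ofPublicKey(cert.getPublicKey());
        }
    }
}
```

On the master, `CaCertHash.ofCertificateFile(Paths.get("/etc/kubernetes/pki/ca.crt"))` should reproduce the hash printed by kubeadm init.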

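- Configure kubectl

kubectl is the command-line tool for managing a Kubernetes cluster. After the master is initialized, some configuration is needed before kubectl can be used. For the root user, simply run:

export KUBECONFIG=/etc/kubernetes/admin.conf

For a regular user:

mkdir -p $HOME/.kube

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

chown $(id -u):$(id -g) $HOME/.kube/config

- Let the node use kubectl as well: copy admin.conf from the master to the node, then set KUBECONFIG on the node

scp /etc/kubernetes/admin.conf k8s-node1:/etc/kubernetes/admin.conf

export KUBECONFIG=/etc/kubernetes/admin.conf

- Check the cluster status: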

[root@k8s-master ~]# kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health":"true"}

- kubectl get nodes (the master shows NotReady; this is fixed below)

NAME STATUS ROLES AGE VERSION

k8s-master NotReady master 7m15s v1.15.0

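Deploy the network plugin (if the images won't download, leave a comment and I can help)

For the Kubernetes cluster to work, a pod network must be installed; otherwise pods cannot communicate with each other.

Kubernetes supports many networking solutions; here we use flannel: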

[root@k8s-master ~]# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

podsecuritypolicy.policy/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds-amd64 created

daemonset.apps/kube-flannel-ds-arm64 created

daemonset.apps/kube-flannel-ds-arm created

daemonset.apps/kube-flannel-ds-ppc64le created

daemonset.apps/kube-flannel-ds-s390x created

Once it is deployed, check the pod status again with kubectl get:

[root@k8s-master ~]# kubectl get pod -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

coredns-bccdc95cf-dzmmt 1/1 Running 0 14m 10.244.0.2 k8s-master

coredns-bccdc95cf-rcbkg 1/1 Running 0 14m 10.244.0.3 k8s-master

etcd-k8s-master 1/1 Running 0 13m 192.168.102.11 k8s-master

kube-apiserver-k8s-master 1/1 Running 0 13m 192.168.102.11 k8s-master

kube-controller-manager-k8s-master 1/1 Running 0 13m 192.168.102.11 k8s-master

kube-flannel-ds-amd64-p64h2 1/1 Running 0 4m10s 192.168.102.11 k8s-master

kube-proxy-zm5jw 1/1 Running 0 14m 192.168.102.11 k8s-master

kube-scheduler-k8s-master 1/1 Running 0 13m 192.168.102.11 k8s-master
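The pod IPs above follow from --pod-network-cidr=10.244.0.0/16. By default flannel gives each node its own /24 out of that range, so the CoreDNS pods on the master get 10.244.0.x. The control-plane, kube-proxy and flannel pods show 192.168.102.11 because they use the host's network. A minimal IPv4 CIDR membership check (hypothetical class `Cidr`):

```java
// Hypothetical helper: does an IPv4 address fall inside a CIDR block?
final class Cidr {
    private final int network;
    private final int mask;

    Cidr(String cidr) {
        String[] parts = cidr.split("/");
        int prefix = Integer.parseInt(parts[1]);
        // A shift by 32 is a no-op in Java, so /0 needs its own case
        this.mask = prefix == 0 ? 0 : -1 << (32 - prefix);
        this.network = toInt(parts[0]) & mask;
    }

    boolean contains(String ip) {
        return (toInt(ip) & mask) == network;
    }

    // "10.244.1.5" -> 32-bit int
    static int toInt(String ipv4) {
        int value = 0;
        for (String octet : ipv4.split("\\.")) {
            value = (value << 8) | Integer.parseInt(octet);
        }
        return value;
    }
}
```

For example, `new Cidr("10.244.0.0/16").contains("10.244.0.2")` is true, while the master's /24 (10.244.0.0/24) does not contain the node1 pod IPs 10.244.1.x seen later.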

Check the master's status again (now fixed):

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready master 15m v1.15.0

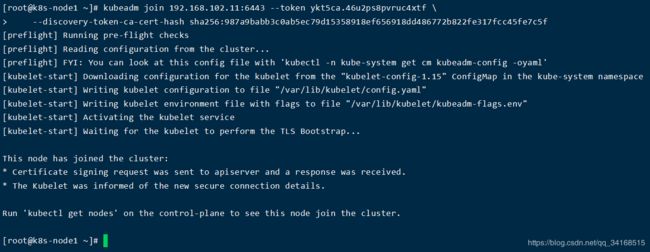

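Deploy the slave node

Run the following on the slave VM.

A Kubernetes slave (worker) node is almost identical to the master: both run a kubelet. The only difference is that during kubeadm init, once the kubelet has started, the master also automatically runs the kube-apiserver, kube-scheduler and kube-controller-manager system pods (plus etcd in this setup).

Run the following command (taken from the output of kubeadm init on the master) to register the node with the cluster: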

kubeadm join 192.168.102.11:6443 --token ykt5ca.46u2ps8pvruc4xtf \

--discovery-token-ca-cert-hash sha256:987a9babb3c0ab5ec79d15358918ef656918dd486772b822fe317fcc45fe7c5f

Check whether the slave node has joined the master

Back on the master VM, run (it may take a while):

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready master 23m v1.15.0

k8s-node1 NotReady 2m17s v1.15.0

Check the system pods:

[root@k8s-master ~]# kubectl get pod -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

coredns-bccdc95cf-dzmmt 1/1 Running 0 25m 10.244.0.2 k8s-master

coredns-bccdc95cf-rcbkg 1/1 Running 0 25m 10.244.0.3 k8s-master

etcd-k8s-master 1/1 Running 0 24m 192.168.102.11 k8s-master

kube-apiserver-k8s-master 1/1 Running 0 24m 192.168.102.11 k8s-master

kube-controller-manager-k8s-master 1/1 Running 0 24m 192.168.102.11 k8s-master

kube-flannel-ds-amd64-dh9bt 0/1 Init:ImagePullBackOff 0 3m55s 192.168.102.12 k8s-node1

kube-flannel-ds-amd64-p64h2 1/1 Running 0 15m 192.168.102.11 k8s-master

kube-proxy-ts447 1/1 Running 0 3m55s 192.168.102.12 k8s-node1

kube-proxy-zm5jw 1/1 Running 0 25m 192.168.102.11 k8s-master

kube-scheduler-k8s-master 1/1 Running 0 24m 192.168.102.11 k8s-master

For example, one pod above is not Running, which means something is wrong; all of them must be Running.

# Find out why kube-flannel-ds-amd64-dh9bt is in Init:ImagePullBackOff

[root@k8s-master ~]# kubectl describe pods kube-flannel-ds-amd64-dh9bt -n kube-system

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 58m default-scheduler Successfully assigned kube-system/kube-flannel-ds-amd64-dh9bt to k8s-node1

Normal Pulling (x4 over ) kubelet, k8s-node1 Pulling image "quay.io/coreos/flannel:v0.12.0-amd64"

Warning Failed (x5 over ) kubelet, k8s-node1 Failed to pull image "quay.io/coreos/flannel:v0.12.0-amd64": rpc error: code = Unknown desc = context canceled

Warning Failed (x24 over ) kubelet, k8s-node1 Error: ImagePullBackOff

Warning Failed (x7 over ) kubelet, k8s-node1 Error: ErrImagePull

Normal BackOff (x61 over ) kubelet, k8s-node1 Back-off pulling image "quay.io/coreos/flannel:v0.12.0-amd64"

The event "kubelet, k8s-node1 Back-off pulling image "quay.io/coreos/flannel:v0.12.0-amd64"" shows that the image was never pulled on the k8s-node1 node.
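ImagePullBackOff means the kubelet keeps retrying the pull with a growing delay, so once the image is present locally the pod should recover at a later retry. A sketch of such a capped exponential back-off schedule; the 10 s starting delay and 300 s cap are assumptions modelled on the kubelet's defaults, and `PullBackoff` is a hypothetical name.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical model of a capped exponential back-off between pull attempts.
final class PullBackoff {
    static List<Integer> delays(int initialSeconds, int capSeconds, int attempts) {
        List<Integer> out = new ArrayList<>();
        long delay = initialSeconds;
        for (int i = 0; i < attempts; i++) {
            out.add((int) Math.min(delay, capSeconds));
            // Double the wait after every failure, but never beyond the cap
            delay = Math.min(delay * 2, capSeconds);
        }
        return out;
    }
}
```

Under these assumptions, `delays(10, 300, 7)` gives 10, 20, 40, 80, 160, 300, 300 seconds, so after the manual pull the next retry is at most about five minutes away.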

Go to the k8s-node1 node and pull the image manually:

docker pull quay.io/coreos/flannel:v0.12.0-amd64

After the pull finishes, you can restart both VMs and check the node status again:

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready master 15h v1.15.0

k8s-node1 Ready 14h v1.15.0

Note: if you see

[root@k8s-master ~]# kubectl get nodes

The connection to the server localhost:8080 was refused - did you specify the right host or port?

point kubectl at the admin kubeconfig again:

export KUBECONFIG=/etc/kubernetes/admin.conf
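The localhost:8080 error means kubectl found no kubeconfig and fell back to its default address. `export` only lasts for the current shell session, which is why the error can come back after logging in again; copying admin.conf to $HOME/.kube/config, as shown earlier, makes the setting persistent. A simplified sketch of the lookup order (hypothetical class `KubeconfigLookup`; the real client also merges multiple files):

```java
import java.io.File;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical, simplified model of where kubectl looks for its kubeconfig.
final class KubeconfigLookup {
    static List<String> candidates(String kubeconfigFlag, Map<String, String> env) {
        List<String> paths = new ArrayList<>();
        // 1. An explicit --kubeconfig flag wins
        if (kubeconfigFlag != null && !kubeconfigFlag.isEmpty()) {
            paths.add(kubeconfigFlag);
            return paths;
        }
        // 2. KUBECONFIG may hold several paths separated by ':' on Linux
        String fromEnv = env.get("KUBECONFIG");
        if (fromEnv != null && !fromEnv.isEmpty()) {
            for (String p : fromEnv.split(File.pathSeparator)) {
                if (!p.isEmpty()) {
                    paths.add(p);
                }
            }
            return paths;
        }
        // 3. Otherwise $HOME/.kube/config; if that is missing too, kubectl uses localhost:8080
        String home = env.get("HOME");
        if (home != null) {
            paths.add(home + "/.kube/config");
        }
        return paths;
    }
}
```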

III. Prepare the jar and Dockerfile

The environment is now basically ready; next, get a Java program running on it.

Spring Boot version: 2.2.2.RELEASE

- The project's pom:

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>
    <parent>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-parent</artifactId>
        <version>2.2.2.RELEASE</version>
        <relativePath/>
    </parent>
    <groupId>com.example</groupId>
    <artifactId>demo2</artifactId>
    <version>0.0.1</version>
    <name>demo2</name>
    <description>Demo project for Spring Boot</description>
    <properties>
        <java.version>1.8</java.version>
    </properties>
    <dependencies>
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-web</artifactId>
        </dependency>
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-test</artifactId>
            <scope>test</scope>
            <exclusions>
                <exclusion>
                    <groupId>org.junit.vintage</groupId>
                    <artifactId>junit-vintage-engine</artifactId>
                </exclusion>
            </exclusions>
        </dependency>
    </dependencies>
    <build>
        <plugins>
            <plugin>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-maven-plugin</artifactId>
            </plugin>
        </plugins>
    </build>
</project>

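- The Java code:

import java.net.InetAddress;
import java.net.UnknownHostException;
import java.util.Collections;
import java.util.Set;

import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.beans.factory.annotation.Value;
import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.core.env.ConfigurableEnvironment;
import org.springframework.core.env.MutablePropertySources;
import org.springframework.core.env.PropertySource;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RestController;

@SpringBootApplication
@RestController
public class Demo2Application {

    public static void main(String[] args) {
        SpringApplication.run(Demo2Application.class, args);
    }

    // From application.yml, unless overridden by a --spring.application.name argument
    @Value("${spring.application.name}")
    private String name;

    @Autowired
    private ConfigurableEnvironment environment;

    @RequestMapping("/get")
    public String get() {
        MutablePropertySources propertySources = environment.getPropertySources();
        // Property source that Spring Boot 2.2 registers for classpath:/application.yml
        PropertySource<?> ymlSource = propertySources.get("applicationConfig: [classpath:/application.yml]");
        Set<Object> set = Collections.emptySet();
        if (ymlSource != null) {
            set = Collections.singleton(ymlSource.getSource());
        }
        InetAddress addr = null;
        try {
            // Inside a pod this is the pod name and the pod IP
            addr = InetAddress.getLocalHost();
        } catch (UnknownHostException e) {
            e.printStackTrace();
        }
        if (addr != null) {
            System.out.println(addr.getHostAddress());
        }
        return "测试成功: " + name + ", set: " + set + "ip: " + addr;
    }
}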

- application.yml

server:
  port: 8081
spring:
  application:
    name: demo2

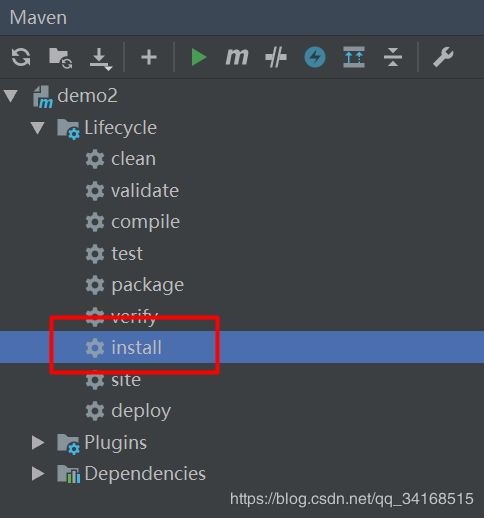

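- Package the project as a jar (mvn clean package produces target/demo2-0.0.1.jar), then write the Dockerfile: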

# docker build -t demo2:1.0.0 .

FROM openjdk:8-jre-alpine3.8

RUN \

ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime && \

echo "Asia/Shanghai" > /etc/timezone && \

mkdir -p /demo2

ADD . /demo2/

ENV JAVA_OPTS="-Duser.timezone=Asia/Shanghai"

EXPOSE 8081

ENV APP_OPTS=""

ENTRYPOINT [ "sh", "-c", "java $JAVA_OPTS -jar /demo2/demo2-0.0.1.jar $APP_OPTS" ]
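The ENTRYPOINT runs through `sh -c`, so the unquoted $APP_OPTS is expanded and split on whitespace into separate program arguments; Spring Boot then treats each `--key=value` argument as a property that overrides application.yml. That is how the Deployment later renames the app to can-demo2 without rebuilding the image. A simplified model of the two steps (hypothetical class `AppOpts`; it ignores shell quoting and globbing, and it is not Spring's own parser):

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical, simplified model of how APP_OPTS reaches Spring Boot.
final class AppOpts {
    // Unquoted $APP_OPTS: leading/trailing whitespace vanishes, inner whitespace separates words
    static String[] shellSplit(String appOpts) {
        String trimmed = appOpts.trim();
        return trimmed.isEmpty() ? new String[0] : trimmed.split("\\s+");
    }

    // "--key=value" arguments become properties with higher priority than application.yml
    static Map<String, String> optionArgs(String[] args) {
        Map<String, String> props = new LinkedHashMap<>();
        for (String arg : args) {
            int eq = arg.indexOf('=');
            if (arg.startsWith("--") && eq > 2) {
                props.put(arg.substring(2, eq), arg.substring(eq + 1));
            }
        }
        return props;
    }
}
```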

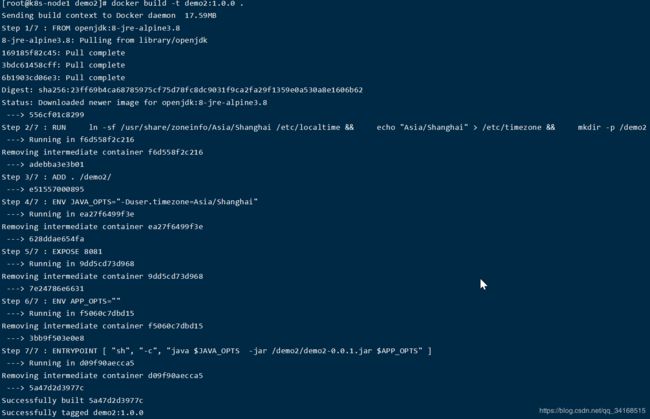

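IV. Build the image

Run the same steps on both master and node1: copy the jar and the Dockerfile into /usr/local/demo2, then build the image there with docker build -t demo2:1.0.0 . (the command noted at the top of the Dockerfile). The image is only built locally and not pushed to a registry, so it must exist on every node that may run the pod.

cd /usr/local

mkdir demo2

cd demo2

# Put the Dockerfile and the jar together in this directory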

[root@k8s-master demo2]# ll

-rw-r--r--. 1 root root 17592130 4月 6 00:00 demo2-0.0.1.jar

-rw-r--r--. 1 root root 413 4月 6 00:00 Dockerfile

[root@k8s-master demo2]#

V. Write the k8s orchestration file

The following steps only need to be done on the master node.

Create the demo2.yaml file:

---
apiVersion: v1
kind: Service
metadata:
  name: can-demo2
  namespace: can
  labels:
    app: can-demo2
spec:
  type: NodePort
  ports:
    - name: demo2
      port: 8081
      targetPort: 8081
      nodePort: 30090            # exposed on every node at port 30090
  selector:
    project: ms
    app: demo2
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: can-demo2
  namespace: can
spec:
  replicas: 2                    # two pods; the master's NoSchedule taint puts both on k8s-node1
  selector:
    matchLabels:
      project: ms
      app: demo2
  template:
    metadata:
      labels:
        project: ms
        app: demo2
    spec:
      terminationGracePeriodSeconds: 10   # how long to wait when a pod is deleted
      containers:
        - name: demo2
          image: demo2:1.0.0
          ports:
            - protocol: TCP
              containerPort: 8081
          env:
            - name: APP_NAME
              value: "demo2"
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: APP_OPTS     # extra program arguments, appended by the Dockerfile ENTRYPOINT
              value: "--spring.application.name=can-demo2"
          resources:
            limits:
              cpu: 1
              memory: 1024Mi
            requests:
              cpu: 0.5
              memory: 125Mi
          readinessProbe:        # readiness probe
            tcpSocket:
              port: 8081
            initialDelaySeconds: 20   # delay before the first probe
            periodSeconds: 5          # interval between probes
            timeoutSeconds: 10        # probe timeout
            failureThreshold: 5       # consecutive failures before the pod is marked not ready
          livenessProbe:         # liveness probe
            tcpSocket:
              port: 8081
            initialDelaySeconds: 60   # delay before the first probe
            periodSeconds: 5          # interval between probes
            timeoutSeconds: 5         # probe timeout
            failureThreshold: 3       # consecutive failures before the container is restarted
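The resource and probe settings above are easier to read as plain numbers: cpu 0.5 is 500 millicores, Mi is a binary mebibyte (1024 x 1024 bytes), and a probe's Nth consecutive failure happens roughly initialDelaySeconds + (N - 1) x periodSeconds after the container starts, ignoring timeouts. A sketch (hypothetical class `Quantities`, handling only the suffixes used in this file):

```java
import java.math.BigDecimal;

// Hypothetical helper for the quantities and probe timings in demo2.yaml.
final class Quantities {
    // CPU: "1" = 1000m, "0.5" = 500m, "500m" = 500m
    static long cpuMillis(String q) {
        if (q.endsWith("m")) {
            return Long.parseLong(q.substring(0, q.length() - 1));
        }
        return new BigDecimal(q).movePointRight(3).longValueExact();
    }

    // Memory: binary suffixes only (Mi = 2^20 bytes, Gi = 2^30 bytes), else plain bytes
    static long memoryBytes(String q) {
        if (q.endsWith("Gi")) {
            return Long.parseLong(q.substring(0, q.length() - 2)) << 30;
        }
        if (q.endsWith("Mi")) {
            return Long.parseLong(q.substring(0, q.length() - 2)) << 20;
        }
        return Long.parseLong(q);
    }

    // Approximate seconds after start at which the Nth consecutive probe failure occurs
    static int secondsToNthFailure(int initialDelaySeconds, int periodSeconds, int n) {
        return initialDelaySeconds + (n - 1) * periodSeconds;
    }
}
```

With the liveness settings (60, 5, 3), a container that never opens port 8081 would be restarted roughly 70 s after it starts; with the readiness settings (20, 5, 5), the fifth failure lands at about 40 s.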

Put the YAML file above in the demo2 directory:

[root@k8s-master demo2]# cd /usr/local/demo2/

[root@k8s-master demo2]# ll

-rw-r--r--. 1 root root 17592130 4月 6 00:00 demo2-0.0.1.jar

-rw-r--r--. 1 root root 2074 4月 6 00:20 demo2.yaml

-rw-r--r--. 1 root root 413 4月 6 00:00 Dockerfile

VI. Deploy the containers

- Create the namespace can

kubectl create namespace can

- Create the Service and the Deployment

[root@k8s-master demo2]# kubectl apply -f demo2.yaml

service/can-demo2 created

deployment.apps/can-demo2 created

- Check the status of the pods in the can namespace

[root@k8s-master ~]# kubectl get pods -n can -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

can-demo2-5f8dd65f54-m9gnv 1/1 Running 0 3m19s 10.244.1.4 k8s-node1

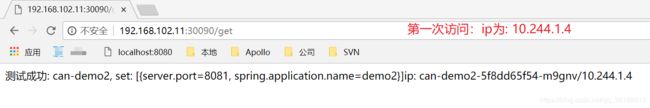

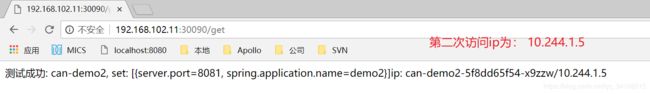

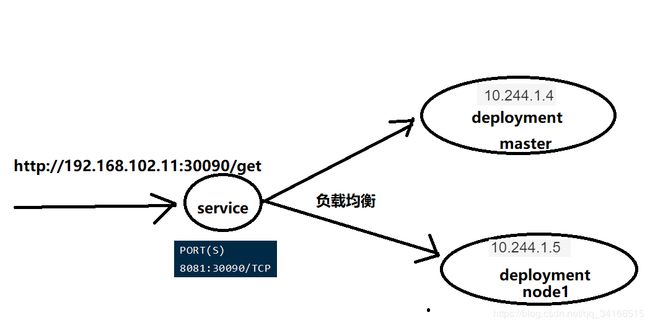

can-demo2-5f8dd65f54-x9zzw 1/1 Running 0 3m4s 10.244.1.5 k8s-node1

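Note the IPs: 10.244.1.4 and 10.244.1.5. Both pods run on k8s-node1, because kubeadm init gave the master the NoSchedule taint.

- Check the status of the Service in the can namespace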

[root@k8s-master demo2]# kubectl get service -n can

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

can-demo2 NodePort 10.100.239.166 8081:30090/TCP 3m22s

- Open 192.168.102.11:30090/get to access the app (测试成功 means "test succeeded"):

测试成功: can-demo2, set: [{server.port=8081, spring.application.name=demo2}]ip: can-demo2-5f8dd65f54-x9zzw/10.244.1.5
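In the PORT(S) column, 8081:30090/TCP means service port 8081 mapped to node port 30090. kube-proxy opens a NodePort on every node, so 192.168.102.12:30090/get should answer as well, and repeated requests may be served by either replica (the returned pod name and IP change). A sketch that derives the URL from that column and performs a plain GET; the class name `NodePortClient` is hypothetical, and 30000-32767 is the default --service-node-port-range.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.HttpURLConnection;
import java.net.URL;
import java.nio.charset.StandardCharsets;

// Hypothetical helper: turn "8081:30090/TCP" into a URL and call it.
final class NodePortClient {
    // "8081:30090/TCP" -> 30090 (service port : node port / protocol)
    static int nodePort(String portsColumn) {
        String afterColon = portsColumn.substring(portsColumn.indexOf(':') + 1);
        int port = Integer.parseInt(afterColon.substring(0, afterColon.indexOf('/')));
        if (port < 30000 || port > 32767) {
            throw new IllegalArgumentException("outside default NodePort range: " + port);
        }
        return port;
    }

    static String endpoint(String nodeIp, String portsColumn, String path) {
        return "http://" + nodeIp + ":" + nodePort(portsColumn) + path;
    }

    // Plain GET returning the response body as one string
    static String get(String url) throws IOException {
        HttpURLConnection conn = (HttpURLConnection) new URL(url).openConnection();
        try (BufferedReader reader = new BufferedReader(
                new InputStreamReader(conn.getInputStream(), StandardCharsets.UTF_8))) {
            StringBuilder body = new StringBuilder();
            String line;
            while ((line = reader.readLine()) != null) {
                body.append(line);
            }
            return body.toString();
        } finally {
            conn.disconnect();
        }
    }
}
```

For example, `NodePortClient.get(NodePortClient.endpoint("192.168.102.11", "8081:30090/TCP", "/get"))` requests the same URL as above.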

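VII. Conclusion

There is no need to rush when learning k8s. If you can first get the example above running and see it work, then digest the principles bit by bit, one day you will become a k8s expert.

I have many years of Java development experience; if you have questions, leave a comment and I will answer every one.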
