TensorFlow in Practice: Building a Convolutional Neural Network with Estimator

This is LeeTioN's blog.

Preface

This article walks through and analyzes the source code of a TensorFlow example from GitHub.

Introduction to Estimator

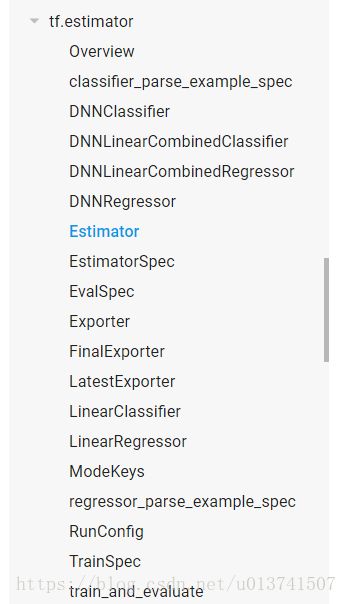

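Estimator is the class that wraps a network model and its parameters. The tf.estimator module (lowercase e) also provides ready-made subclasses for classic models such as linear regression and classification, whereas Estimator itself is used to build a custom network model.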

Defining an Estimator and How Arguments Are Passed

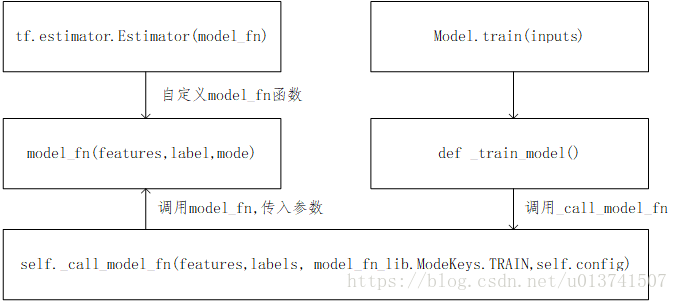

With Estimator we need a model_fn function that defines our custom network. It must accept three parameters: features, labels, and mode (one of train, predict, or evaluate).

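    def model_fn(features, labels, mode):
        ...

    model = tf.estimator.Estimator(model_fn)
    model.train(input_fn, steps=num_steps)

However, the call to model.train() never passes those three arguments, so I went to estimator.py in the TensorFlow source to take a look. Inside train(), the _train_model function is called, which in turn invokes model_fn as a callback and passes it features, labels, ModeKeys, and other arguments.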

    def _train_model(self, input_fn, hooks, saving_listeners):
        ...
        estimator_spec = self._call_model_fn(
            features, labels, model_fn_lib.ModeKeys.TRAIN, self.config)
        ...
        return loss

In summary, taking the train flow as an example, the whole process is shown in the figure below. The prediction and evaluation flows are similar.
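The callback pattern described above can be illustrated without TensorFlow. The following is a minimal sketch, not TensorFlow's actual implementation: ToyEstimator, its ModeKeys enum, and the toy model_fn and input_fn are all hypothetical names made up for illustration. It only shows that the caller hands over model_fn and input_fn, while the framework itself supplies features, labels, and mode. One simplification to keep in mind: TensorFlow calls model_fn once to build a graph and then runs the resulting train_op repeatedly, whereas this toy simply calls model_fn on every step.

```python
from enum import Enum


class ModeKeys(Enum):
    """Hypothetical stand-in for tf.estimator.ModeKeys."""
    TRAIN = "train"
    EVAL = "eval"
    PREDICT = "infer"


class ToyEstimator:
    """Hypothetical miniature of the callback pattern; not TensorFlow code."""

    def __init__(self, model_fn):
        self._model_fn = model_fn

    def train(self, input_fn, steps):
        # The caller never passes features, labels, or mode directly.
        # They are produced here and handed to model_fn by the framework.
        loss = None
        for _ in range(steps):
            features, labels = input_fn()
            loss = self._model_fn(features, labels, ModeKeys.TRAIN)
        return loss

    def predict(self, input_fn):
        # Labels are not available at prediction time, so pass None.
        features, _ = input_fn()
        return self._model_fn(features, None, ModeKeys.PREDICT)


def model_fn(features, labels, mode):
    # Toy "model": predict the mean of each feature row.
    predictions = [sum(row) / len(row) for row in features]
    if mode == ModeKeys.PREDICT:
        return predictions
    # Mean squared error as a stand-in for a real loss.
    return sum((p - y) ** 2 for p, y in zip(predictions, labels)) / len(labels)


def input_fn():
    # Two samples with two features each, plus one label per sample.
    return [[1.0, 3.0], [2.0, 4.0]], [2.0, 2.0]


model = ToyEstimator(model_fn)
final_loss = model.train(input_fn, steps=3)
preds = model.predict(input_fn)
print(final_loss, preds)
```

The call site mirrors the one in the article: only model_fn, input_fn, and steps appear, and the mode is chosen by whichever method (train or predict) is invoked.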

Building the CNN with model_fn

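Building the network itself is routine. The main point to note is that model_fn has to switch between three modes (training, prediction, and evaluation), so the operations specific to each mode must all be written inside model_fn.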

    import tensorflow as tf  # TensorFlow 1.x API (tf.layers, tf.contrib)

    def conv_net(x_dict, n_classes, dropout, reuse, is_training):
        # Variable scope: manages variable names within a graph to avoid
        # naming conflicts; reuse=True shares the existing variables.
        with tf.variable_scope('ConvNet', reuse=reuse):
            x = x_dict['images']
            # 4-D tensor: [batch_size, height, width, channel]
            x = tf.reshape(x, shape=[-1, 28, 28, 1])
            # First conv layer: filters=32, kernel_size=5, i.e. 32 filters of size [5, 5].
            # Output: [batch_size, 24, 24, 32]. With padding="same" the output would be
            # [batch_size, 28, 28, 32], obtained by zero-padding around the tensor.
            conv1 = tf.layers.conv2d(x, 32, 5, activation=tf.nn.relu)
            # Pooling after the convolution: [batch_size, 12, 12, 32]
            conv1 = tf.layers.max_pooling2d(conv1, 2, 2)
            # Second conv layer: [batch_size, 10, 10, 64]
            conv2 = tf.layers.conv2d(conv1, 64, 3, activation=tf.nn.relu)
            # Pooling after the convolution: [batch_size, 5, 5, 64]
            conv2 = tf.layers.max_pooling2d(conv2, 2, 2)
            # Flatten for the fully connected layer
            fc1 = tf.contrib.layers.flatten(conv2)
            fc1 = tf.layers.dense(fc1, 1024)
            # Dropout layer (only active when is_training is True)
            fc1 = tf.layers.dropout(fc1, rate=dropout, training=is_training)
            out = tf.layers.dense(fc1, n_classes)
        return out

    def model_fn(features, labels, mode):  # ModeKeys: TRAIN, EVAL, PREDICT

        # num_classes, dropout, and learning_rate are assumed to be defined
        # elsewhere in the script as hyperparameters.
        # Dropout behaves differently in training and prediction, so build two
        # graphs that share the same weights (reuse=True).
        logits_train = conv_net(features, num_classes, dropout, reuse=False,
                                is_training=True)
        logits_test = conv_net(features, num_classes, dropout, reuse=True,
                               is_training=False)

        pred_classes = tf.argmax(logits_test, axis=1)
        pred_probas = tf.nn.softmax(logits_test)

        # In PREDICT mode, return early: no labels are available.
        if mode == tf.estimator.ModeKeys.PREDICT:
            return tf.estimator.EstimatorSpec(mode, predictions=pred_classes)

        loss_op = tf.reduce_mean(tf.nn.sparse_softmax_cross_entropy_with_logits(
            logits=logits_train, labels=tf.cast(labels, dtype=tf.int32)))
        optimizer = tf.train.AdadeltaOptimizer(learning_rate=learning_rate)
        train_op = optimizer.minimize(loss_op,
                                      global_step=tf.train.get_global_step())

        # Accuracy metric, used in EVAL mode
        acc_op = tf.metrics.accuracy(labels=labels, predictions=pred_classes)

        # The Estimator picks the ops it needs from the spec according to mode.
        estim_specs = tf.estimator.EstimatorSpec(
            mode=mode,
            predictions=pred_classes,
            loss=loss_op,
            train_op=train_op,
            eval_metric_ops={'accuracy': acc_op})
        return estim_specs
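The tensor shapes noted in the conv_net comments can be checked with a little arithmetic. The sketch below assumes the default padding="valid" for both the convolution and pooling layers and a 28 x 28 single-channel input; conv_out is a hypothetical helper written for this illustration, not a TensorFlow function.

```python
def conv_out(size, kernel, stride=1, padding="valid"):
    """Output length of a conv or pooling layer along one spatial dimension."""
    if padding == "same":
        # Zero-padding keeps the size at ceil(size / stride).
        return -(-size // stride)
    # "valid": no padding, the window must fit entirely inside the input.
    return (size - kernel) // stride + 1


size = 28
shapes = []

size = conv_out(size, kernel=5)             # conv1: 5x5 kernel, stride 1
shapes.append((size, size, 32))
size = conv_out(size, kernel=2, stride=2)   # pool1: 2x2 window, stride 2
shapes.append((size, size, 32))
size = conv_out(size, kernel=3)             # conv2: 3x3 kernel, stride 1
shapes.append((size, size, 64))
size = conv_out(size, kernel=2, stride=2)   # pool2: 2x2 window, stride 2
shapes.append((size, size, 64))

# Number of features per sample after flattening the last feature map.
flat = size * size * 64

print(shapes)
print(flat)
```

Under these assumptions the flattened vector fed into the 1024-unit dense layer has 5 * 5 * 64 = 1600 features per sample.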