NDK Live Streaming: Pushing and Pulling Streams

This article was first published on the WeChat official account “字节流动”.

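Other articles in this blog's NDK development series:

- Three ways to build with the NDK

- Importing third-party static and shared libraries in NDK development

- Native and Java interaction in NDK development

- NDK POSIX multithreaded programming

- NDK Android OpenSL ES audio capture and playback

- NDK FFmpeg compilation

- NDK FFmpeg audio and video decoding

- NDK: setting up a live streaming media server

- NDK live streaming: pushing and pulling streams

- Quickly locating crashes in NDK development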

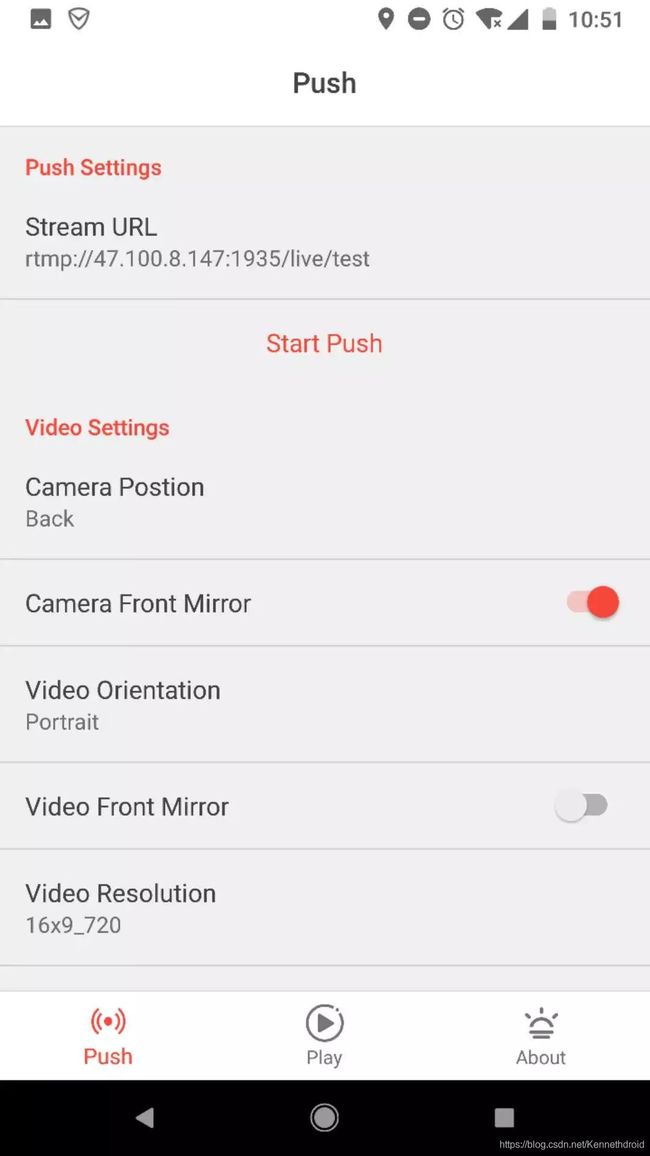

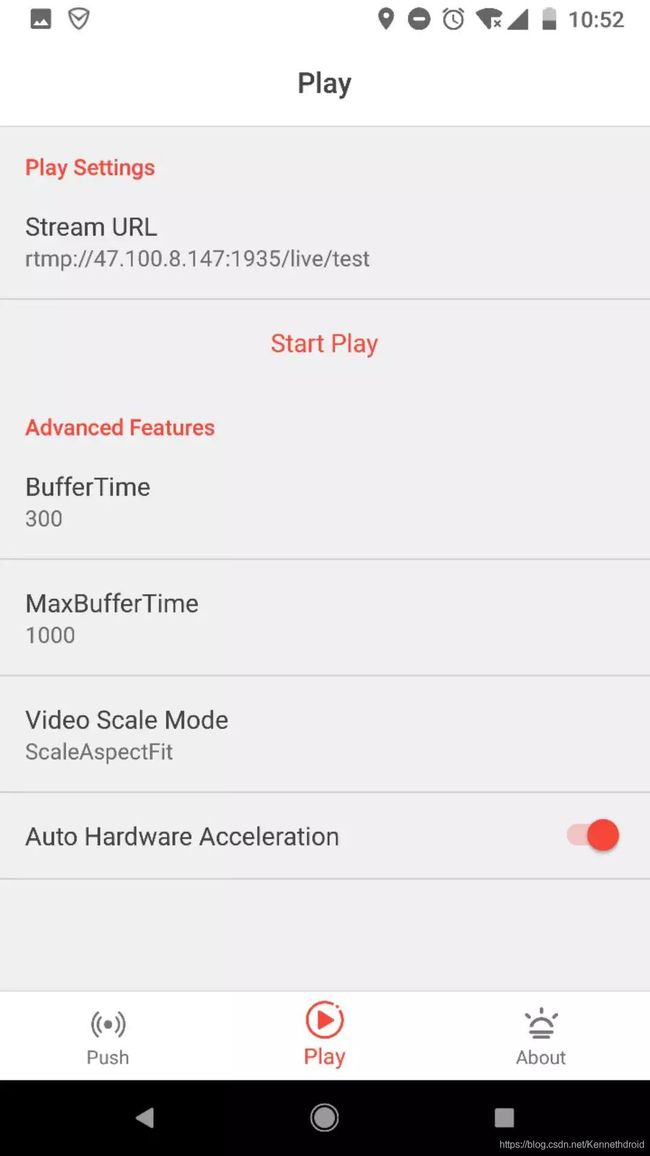

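Testing the streaming media server

First, test the streaming media server with the 快直播 app (any other app that supports RTMP push and pull also works) and ffplay.exe.

On Windows, pull the stream with ffplay by running this on the command line:

    ffplay rtmp://192.168.0.0/live/test
    # Replace the IP address with that of your streaming media server; test is the live room name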

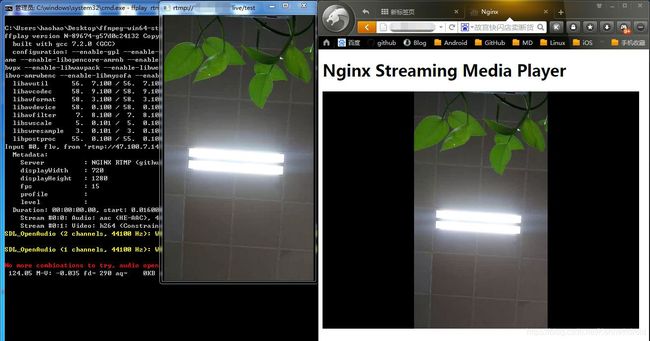

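Pushing the stream

The push pipeline in this article has three steps:

- Capture audio with AudioRecord and video with the Camera API

- Encode the audio with faac and the video with x264 in the Native layer

- Packetize and push the stream with librtmp (part of rtmpdump)

Main JNI methods: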

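    public class NativePush {

        public native void startPush(String url);

        public native void stopPush();

        public native void release();

        /**
         * Set the video parameters.
         *
         * @param width   frame width in pixels
         * @param height  frame height in pixels
         * @param bitrate target bitrate in bit/s (the Native layer divides by 1000 to get Kbps)
         * @param fps     frames per second
         */
        public native void setVideoOptions(int width, int height, int bitrate, int fps);

        /**
         * Set the audio parameters.
         *
         * @param sampleRateInHz sample rate in Hz
         * @param channel        number of channels
         */
        public native void setAudioOptions(int sampleRateInHz, int channel);

        /**
         * Send video data.
         *
         * @param data one camera preview frame
         */
        public native void fireVideo(byte[] data);

        /**
         * Send audio data.
         *
         * @param data PCM data
         * @param len  number of valid bytes in data
         */
        public native void fireAudio(byte[] data, int len);
    }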

Video capture

Video capture is built on the Camera API: a SurfaceView shows the preview, and a PreviewCallback delivers the camera preview data.

Main preview code:

    public void startPreview() {
        try {
            mCamera = Camera.open(mVideoParams.getCameraId());
            Camera.Parameters param = mCamera.getParameters();
            // Log every preview size the camera supports
            List<Camera.Size> previewSizes = param.getSupportedPreviewSizes();
            int length = previewSizes.size();
            for (int i = 0; i < length; i++) {
                Log.i(TAG, "SupportedPreviewSizes : " + previewSizes.get(i).width + "x" + previewSizes.get(i).height);
            }
            // Use the first supported size
            mVideoParams.setWidth(previewSizes.get(0).width);
            mVideoParams.setHeight(previewSizes.get(0).height);
            param.setPreviewFormat(ImageFormat.NV21);
            param.setPreviewSize(mVideoParams.getWidth(), mVideoParams.getHeight());
            mCamera.setParameters(param);
            //mCamera.setDisplayOrientation(90); // portrait
            mCamera.setPreviewDisplay(mSurfaceHolder);
            // Reusable callback buffer; an NV21 frame needs width * height * 3 / 2 bytes,
            // so width * height * 4 is more than enough
            buffer = new byte[mVideoParams.getWidth() * mVideoParams.getHeight() * 4];
            mCamera.addCallbackBuffer(buffer);
            mCamera.setPreviewCallbackWithBuffer(this);
            mCamera.startPreview();
        } catch (IOException e) {
            e.printStackTrace();
        }
    }

Preview frames arrive through the PreviewCallback and are passed to the Native layer for encoding:

    @Override
    public void onPreviewFrame(byte[] bytes, Camera camera) {
        // Hand the buffer back to the camera so the next frame can be delivered
        if (mCamera != null) {
            mCamera.addCallbackBuffer(buffer);
        }
        if (mIsPushing) {
            mNativePush.fireVideo(bytes);
        }
    }
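
The bytes delivered to onPreviewFrame are in NV21 layout, while the encoder configured later in this article expects separate Y, U, and V planes. As a minimal, standalone sketch of that conversion (the function names nv21_to_i420 and yuv420_frame_size are my own for illustration, not part of the project), assuming even width and height:

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Split one NV21 frame (Y plane followed by interleaved V,U pairs)
 * into three separate I420 planes. Width and height must be even. */
static void nv21_to_i420(const uint8_t *nv21, int width, int height,
                         uint8_t *y, uint8_t *u, uint8_t *v) {
    assert(width > 0 && height > 0 && width % 2 == 0 && height % 2 == 0);
    size_t y_len = (size_t) width * (size_t) height;
    size_t chroma_len = y_len / 4;          /* one U and one V per 2x2 pixels */
    const uint8_t *vu = nv21 + y_len;       /* start of the interleaved VU data */

    memcpy(y, nv21, y_len);                 /* the Y plane is identical */
    for (size_t i = 0; i < chroma_len; i++) {
        v[i] = vu[2 * i];                   /* V comes first in NV21 */
        u[i] = vu[2 * i + 1];
    }
}

/* Bytes occupied by one NV21 or I420 frame: 12 bits per pixel. */
static size_t yuv420_frame_size(int width, int height) {
    return (size_t) width * (size_t) height * 3 / 2;
}
```

For a 640x480 preview, yuv420_frame_size gives 460800 bytes, which is why a buffer of width * height * 4 bytes is larger than strictly necessary.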

Audio capture

Audio capture is built on AudioRecord: a worker thread reads PCM data and keeps passing it to the Native layer for encoding.

    private class AudioRecordRunnable implements Runnable {

        @Override
        public void run() {
            mAudioRecord.startRecording();
            while (mIsPushing) {
                // Keep reading audio data from AudioRecord
                byte[] buffer = new byte[mMinBufferSize];
                int length = mAudioRecord.read(buffer, 0, buffer.length);
                if (length > 0) {
                    // Pass the data to native code for audio encoding
                    mNativePush.fireAudio(buffer, length);
                }
            }
        }
    }

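Encoding and pushing

Encoding and pushing are implemented in the Native layer. First add the faac, x264, and librtmp third-party libraries to the Android Studio project and initialize their settings. The design follows the producer-consumer pattern: the producer thread wraps the encoded audio and video data in RTMPPacket structures and appends them to a doubly linked list, and the consumer thread takes RTMPPacket entries off the list and sends them to the server with RTMP_SendPacket.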
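
To make the producer-consumer hand-off concrete, here is a minimal sketch of a thread-safe packet queue. It is not the project's queue: the names packet_queue_t, queue_push, queue_pop, and queue_close are hypothetical, a singly linked list stands in for the doubly linked list, and a void pointer stands in for RTMPPacket. The consumer waits in a loop on a predicate, so it does not block when packets are already queued.

```c
#include <assert.h>
#include <pthread.h>
#include <stdlib.h>

/* Hypothetical queue node; payload stands in for an RTMPPacket pointer. */
typedef struct node {
    void *payload;
    struct node *next;
} node_t;

typedef struct {
    node_t *head, *tail;
    int closed;                 /* set once no more packets will arrive */
    pthread_mutex_t mutex;
    pthread_cond_t cond;
} packet_queue_t;

static void queue_init(packet_queue_t *q) {
    assert(q != NULL);
    q->head = q->tail = NULL;
    q->closed = 0;
    pthread_mutex_init(&q->mutex, NULL);
    pthread_cond_init(&q->cond, NULL);
}

/* Producer side: append a packet and wake the consumer. Returns 0 on success. */
static int queue_push(packet_queue_t *q, void *payload) {
    node_t *n = malloc(sizeof *n);
    if (!n) return -1;
    n->payload = payload;
    n->next = NULL;
    pthread_mutex_lock(&q->mutex);
    if (q->tail) q->tail->next = n; else q->head = n;
    q->tail = n;
    pthread_cond_signal(&q->cond);
    pthread_mutex_unlock(&q->mutex);
    return 0;
}

/* Consumer side: block until a packet is available or the queue is closed.
 * Returns NULL only after queue_close() once nothing is left. */
static void *queue_pop(packet_queue_t *q) {
    void *payload = NULL;
    pthread_mutex_lock(&q->mutex);
    while (!q->head && !q->closed)          /* re-check after every wakeup */
        pthread_cond_wait(&q->cond, &q->mutex);
    if (q->head) {
        node_t *n = q->head;
        q->head = n->next;
        if (!q->head) q->tail = NULL;
        payload = n->payload;
        free(n);
    }
    pthread_mutex_unlock(&q->mutex);
    return payload;
}

/* Tell a waiting consumer to stop once the queue drains. */
static void queue_close(packet_queue_t *q) {
    pthread_mutex_lock(&q->mutex);
    q->closed = 1;
    pthread_cond_broadcast(&q->cond);
    pthread_mutex_unlock(&q->mutex);
}
```

In this sketch the encoder callbacks would call queue_push, the push thread would loop on queue_pop, and stopping the push would call queue_close so the consumer can exit cleanly.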

x264 initialization:

    JNIEXPORT void JNICALL
    Java_com_haohao_live_jni_NativePush_setVideoOptions(JNIEnv *env, jobject instance, jint width,
                                                        jint height, jint bitRate, jint fps) {
        x264_param_t param;
        // Start from the "ultrafast" preset with the "zerolatency" tune
        x264_param_default_preset(&param, "ultrafast", "zerolatency");
        // Input pixel format for the encoder: YUV420P (I420)
        param.i_csp = X264_CSP_I420;
        param.i_width = width;
        param.i_height = height;
        y_len = width * height;
        u_len = y_len / 4;
        v_len = u_len;
        // i_rc_method selects the rate control mode: CQP (constant QP),
        // CRF (constant rate factor, i.e. constant quality), or ABR (average bitrate).
        // Note: CRF targets constant quality rather than a fixed bitrate; to my understanding
        // x264 only honors i_bitrate under X264_RC_ABR.
        param.rc.i_rc_method = X264_RC_CRF;
        param.rc.i_bitrate = bitRate / 1000; // bitrate in Kbps
        param.rc.i_vbv_max_bitrate = bitRate / 1000 * 1.2; // peak instantaneous bitrate
        // Drive rate control from fps rather than timebase and timestamps
        param.b_vfr_input = 0;
        param.i_fps_num = fps; // frame rate numerator
        param.i_fps_den = 1; // frame rate denominator
        param.i_timebase_den = param.i_fps_num;
        param.i_timebase_num = param.i_fps_den;
        param.i_threads = 1; // number of encoding threads; 0 lets x264 choose automatically
        // Repeat the SPS and PPS before every key frame to improve error resilience.
        // SPS: Sequence Parameter Set; PPS: Picture Parameter Set
        param.b_repeat_headers = 1;
        // Set the level
        param.i_level_idc = 51;
        // Set the profile: baseline has no B-frames, only I-frames and P-frames
        x264_param_apply_profile(&param, "baseline");
        // Initialize x264_picture_t (the input picture)
        x264_picture_alloc(&pic_in, param.i_csp, param.i_width, param.i_height);
        pic_in.i_pts = 0;
        // Open the encoder
        video_encode_handle = x264_encoder_open(&param);
        if (video_encode_handle) {
            LOGI("video encoder opened");
        } else {
            throwNativeError(env, INIT_FAILED);
        }
    }
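
The unit handling in setVideoOptions is easy to get wrong, so here is a small worked sketch of the arithmetic. The helper names rate_from_bps and frame_time_ms are hypothetical, and the 20% headroom simply mirrors the factor of 1.2 used above. The second helper shows how a frame index maps to milliseconds at a constant frame rate, which is the unit RTMP timestamps use; it is only an illustration, not how the project derives its timestamps.

```c
#include <assert.h>

/* Hypothetical container for the two rate values passed to x264, both in kbit/s. */
typedef struct {
    int bitrate_kbps;   /* target, for param.rc.i_bitrate */
    int vbv_max_kbps;   /* peak, for param.rc.i_vbv_max_bitrate */
} rate_settings_t;

/* Convert a bitrate given in bit/s (as passed from Java) to kbit/s values. */
static rate_settings_t rate_from_bps(int bitrate_bps) {
    assert(bitrate_bps >= 1000);
    rate_settings_t r;
    r.bitrate_kbps = bitrate_bps / 1000;
    r.vbv_max_kbps = r.bitrate_kbps * 12 / 10;   /* 20% headroom, integer math */
    return r;
}

/* Presentation time in milliseconds of frame `index` at a constant frame rate. */
static long frame_time_ms(long index, int fps) {
    assert(fps > 0);
    return index * 1000L / fps;
}
```

For example, a 480000 bit/s setting becomes a 480 Kbps target with a 576 Kbps peak, and frame 25 at 25 fps lands at 1000 ms.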

faac initialization:

    JNIEXPORT void JNICALL
    Java_com_haohao_live_jni_NativePush_setAudioOptions(JNIEnv *env, jobject instance,
                                                        jint sampleRateInHz, jint channel) {
        // Open the encoder; faac reports how many input samples it wants per call
        // and the maximum number of output bytes per call
        audio_encode_handle = faacEncOpen(sampleRateInHz, channel, &nInputSamples,
                                          &nMaxOutputBytes);
        if (!audio_encode_handle) {
            LOGE("failed to open audio encoder");
            return;
        }
        // Set the audio encoding parameters
        faacEncConfigurationPtr p_config = faacEncGetCurrentConfiguration(audio_encode_handle);
        p_config->mpegVersion = MPEG4;
        p_config->allowMidside = 1;
        p_config->aacObjectType = LOW;
        p_config->outputFormat = 0; // 0 = raw AAC stream, 1 = with ADTS headers
        p_config->useTns = 1; // temporal noise shaping, roughly: suppresses pops
        p_config->useLfe = 0;
        // p_config->inputFormat = FAAC_INPUT_16BIT;
        p_config->quantqual = 100;
        p_config->bandWidth = 0; // bandwidth
        p_config->shortctl = SHORTCTL_NORMAL;
        if (!faacEncSetConfiguration(audio_encode_handle, p_config)) {
            LOGE("%s", "failed to configure audio encoder");
            throwNativeError(env, INIT_FAILED);
            return;
        }
        LOGI("%s", "audio encoder configured");
    }
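
Because outputFormat is 0 (raw AAC, no ADTS headers), the player needs the decoder configuration sent once up front; that is the job of the add_aac_sequence_header call used later, whose body this article does not show. The real project may well obtain these bytes from faac itself (for instance through faacEncGetDecoderSpecificInfo). The sketch below only illustrates what the 2-byte AudioSpecificConfig for AAC-LC contains; the function names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Index into the MPEG-4 sampling-frequency table, or -1 if the rate is not listed. */
static int aac_freq_index(int sample_rate) {
    static const int table[] = {96000, 88200, 64000, 48000, 44100, 32000,
                                24000, 22050, 16000, 12000, 11025, 8000, 7350};
    for (int i = 0; i < (int) (sizeof table / sizeof table[0]); i++)
        if (table[i] == sample_rate) return i;
    return -1;
}

/* Fill out[0..1] with an AudioSpecificConfig for AAC-LC.
 * Bit layout: 5 bits object type, 4 bits frequency index,
 * 4 bits channel configuration, 3 bits zero.
 * Returns 0 on success, -1 for unsupported input. */
static int aac_lc_config(int sample_rate, int channels, uint8_t out[2]) {
    assert(out != NULL);
    int idx = aac_freq_index(sample_rate);
    if (idx < 0 || channels < 1 || channels > 6) return -1;
    const int object_type = 2;                      /* AAC LC */
    out[0] = (uint8_t) ((object_type << 3) | (idx >> 1));
    out[1] = (uint8_t) (((idx & 1) << 7) | (channels << 3));
    return 0;
}
```

For 44100 Hz stereo this yields 0x12 0x10. In an RTMP/FLV audio message those two bytes would follow the tag bytes 0xAF 0x00 (AAC, sequence header), while each encoded frame follows 0xAF 0x01.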

Encode and packetize the video data, then add it to the linked list through add_rtmp_packet:

    JNIEXPORT void JNICALL
    Java_com_haohao_live_jni_NativePush_fireVideo(JNIEnv *env, jobject instance, jbyteArray buffer_) {
        // Convert the frame from NV21 to YUV420P
        jbyte *nv21_buffer = (*env)->GetByteArrayElements(env, buffer_, NULL);
        jbyte *u = pic_in.img.plane[1];
        jbyte *v = pic_in.img.plane[2];
        // NV21 is a 4:2:0 format with 12 bits per pixel.
        // NV21 and YUV420P share the same Y plane (w * h bytes); U and V take w * h / 4 bytes each.
        // NV21 stores chroma as interleaved V,U pairs after Y; YUV420P stores a U plane, then a V plane.
        // For image processing (e.g. beautification) the frame could also be converted to RGB,
        // or combined with OpenCV for face detection and so on.
        memcpy(pic_in.img.plane[0], nv21_buffer, y_len);
        int i;
        for (i = 0; i < u_len; i++) {
            *(u + i) = *(nv21_buffer + y_len + i * 2 + 1);
            *(v + i) = *(nv21_buffer + y_len + i * 2);
        }
        // H.264 encoding produces an array of NALUs
        x264_nal_t *nal = NULL;
        int n_nal = -1; // number of NALUs
        // Run the H.264 encoder
        if (x264_encoder_encode(video_encode_handle, &nal, &n_nal, &pic_in, &pic_out) < 0) {
            LOGE("%s", "encode failed");
            // Release the Java array on the error path too, otherwise it leaks
            (*env)->ReleaseByteArrayElements(env, buffer_, nv21_buffer, 0);
            return;
        }
        // Send the H.264 data to the streaming media server over RTMP.
        // Frames are either key frames or ordinary frames; for better error resilience,
        // key frames should carry the SPS and PPS.
        int sps_len = 0, pps_len = 0;
        unsigned char sps[100];
        unsigned char pps[100];
        memset(sps, 0, 100);
        memset(pps, 0, 100);
        pic_in.i_pts += 1; // increment sequentially
        // Walk the NALU array and dispatch on the NALU type
        for (i = 0; i < n_nal; i++) {
            if (nal[i].i_type == NAL_SPS) {
                // Copy the SPS (Sequence Parameter Set), skipping the 4-byte start code
                sps_len = nal[i].i_payload - 4;
                memcpy(sps, nal[i].p_payload + 4, sps_len);
            } else if (nal[i].i_type == NAL_PPS) {
                // Copy the PPS (Picture Parameter Set), skipping the 4-byte start code
                pps_len = nal[i].i_payload - 4;
                memcpy(pps, nal[i].p_payload + 4, pps_len);
                // Send the sequence header; H.264 key frames carry the SPS and PPS
                add_264_sequence_header(pps, sps, pps_len, sps_len);
            } else {
                // Send the frame data
                add_264_body(nal[i].p_payload, nal[i].i_payload);
            }
        }
        (*env)->ReleaseByteArrayElements(env, buffer_, nv21_buffer, 0);
    }
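
The article calls add_264_sequence_header without showing its body. As a hedged illustration of what such a function typically has to produce, the sketch below builds the RTMP/FLV video payload that carries the SPS and PPS (an AVCDecoderConfigurationRecord). The name build_avc_sequence_header and its signature are my own assumptions; the project's version presumably also wraps the bytes in an RTMPPacket and queues it.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Write the FLV video payload for an AVC sequence header into out.
 * sps and pps are NAL units without start codes.
 * Returns the number of bytes written, or 0 if cap is too small
 * or the inputs are implausible. */
static size_t build_avc_sequence_header(const uint8_t *sps, size_t sps_len,
                                        const uint8_t *pps, size_t pps_len,
                                        uint8_t *out, size_t cap) {
    assert(sps != NULL && pps != NULL && out != NULL);
    size_t need = 16 + sps_len + pps_len;
    if (sps_len < 4 || sps_len > 0xFFFF || pps_len < 1 || pps_len > 0xFFFF || cap < need)
        return 0;

    size_t i = 0;
    out[i++] = 0x17;                        /* key frame (1), codec id 7 (AVC) */
    out[i++] = 0x00;                        /* AVC packet type 0: sequence header */
    out[i++] = 0x00;                        /* composition time, 3 bytes, zero here */
    out[i++] = 0x00;
    out[i++] = 0x00;

    out[i++] = 0x01;                        /* configurationVersion */
    out[i++] = sps[1];                      /* AVCProfileIndication */
    out[i++] = sps[2];                      /* profile_compatibility */
    out[i++] = sps[3];                      /* AVCLevelIndication */
    out[i++] = 0xFF;                        /* reserved bits + NALU length size of 4 bytes */
    out[i++] = 0xE1;                        /* reserved bits + one SPS */
    out[i++] = (uint8_t) (sps_len >> 8);    /* SPS length, big endian */
    out[i++] = (uint8_t) (sps_len & 0xFF);
    memcpy(out + i, sps, sps_len);
    i += sps_len;

    out[i++] = 0x01;                        /* one PPS */
    out[i++] = (uint8_t) (pps_len >> 8);    /* PPS length, big endian */
    out[i++] = (uint8_t) (pps_len & 0xFF);
    memcpy(out + i, pps, pps_len);
    i += pps_len;
    return i;
}
```

The payload is always 16 bytes longer than the SPS and PPS combined, which is a handy sanity check when debugging a stream that players refuse to decode.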

Audio data is encoded, packetized, and added to the linked list in the same way:

    JNIEXPORT void JNICALL
    Java_com_haohao_live_jni_NativePush_fireAudio(JNIEnv *env, jobject instance, jbyteArray buffer_,
                                                  jint length) {
        int *pcmbuf;
        unsigned char *bitbuf;
        jbyte *b_buffer = (*env)->GetByteArrayElements(env, buffer_, 0);
        pcmbuf = (int *) malloc(nInputSamples * sizeof(int));
        bitbuf = (unsigned char *) malloc(nMaxOutputBytes * sizeof(unsigned char));
        int nByteCount = 0; // despite the name, counts 16-bit samples consumed so far
        unsigned int nBufferSize = (unsigned int) length / 2; // number of 16-bit samples
        unsigned short *buf = (unsigned short *) b_buffer;
        while (nByteCount < nBufferSize) {
            int audioLength = nInputSamples;
            if ((nByteCount + nInputSamples) >= nBufferSize) {
                audioLength = nBufferSize - nByteCount;
            }
            int i;
            // Read 16-bit PCM samples and widen them for faac, whose default
            // 32-bit input format expects samples scaled to a 24-bit range
            for (i = 0; i < audioLength; i++) {
                int s = ((int16_t *) buf + nByteCount)[i];
                pcmbuf[i] = s * 256; // same as s << 8, but well defined for negative samples
            }
            nByteCount += nInputSamples;
            // Encode with faac: pcmbuf holds the converted PCM samples, audioLength is the
            // number of input samples (at most the count returned by faacEncOpen), bitbuf
            // receives the encoded data, and nMaxOutputBytes is the maximum output size
            // returned by faacEncOpen
            int byteslen = faacEncEncode(audio_encode_handle, pcmbuf, audioLength,
                                         bitbuf, nMaxOutputBytes);
            if (byteslen < 1) {
                continue;
            }
            add_aac_body(bitbuf, byteslen); // queue the encoded AAC data from bitbuf
        }
        if (bitbuf)
            free(bitbuf);
        if (pcmbuf)
            free(pcmbuf);
        (*env)->ReleaseByteArrayElements(env, buffer_, b_buffer, 0);
    }
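
The chunking loop in fireAudio is the part that tends to hide off-by-one mistakes, so here it is isolated as a standalone sketch. The function widen_chunk is hypothetical: it copies at most one encoder frame of 16-bit samples into a 32-bit buffer, scaling by 256, and reports how many samples it consumed.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Copy up to `frame` samples starting at `offset` from 16-bit PCM into a
 * 32-bit buffer, scaling each sample by 256 (the equivalent of << 8).
 * Returns the number of samples copied, or 0 once the input is exhausted. */
static size_t widen_chunk(const int16_t *pcm, size_t total, size_t offset,
                          size_t frame, int32_t *out) {
    assert(pcm != NULL && out != NULL && frame > 0);
    if (offset >= total) return 0;
    size_t n = total - offset;
    if (n > frame) n = frame;               /* the last chunk may be shorter */
    for (size_t i = 0; i < n; i++)
        out[i] = (int32_t) pcm[offset + i] * 256;
    return n;
}
```

A caller would advance offset by the returned count and hand each chunk to the encoder until widen_chunk returns 0. Multiplying instead of shifting avoids undefined behavior in C when a sample is negative.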

The consumer thread keeps taking RTMPPacket entries from the linked list and sending them to the server:

    void *push_thread(void *arg) {
        JNIEnv *env; // JNIEnv for the current thread
        (*javaVM)->AttachCurrentThread(javaVM, &env, NULL);
        // Set up the RTMP connection
        RTMP *rtmp = RTMP_Alloc();
        if (!rtmp) {
            LOGE("rtmp initialization failed");
            goto end;
        }
        RTMP_Init(rtmp);
        rtmp->Link.timeout = 5; // connection timeout in seconds
        // Set the streaming media URL
        RTMP_SetupURL(rtmp, rtmp_path);
        // Publish (write) an RTMP stream
        RTMP_EnableWrite(rtmp);
        // Connect to the server
        if (!RTMP_Connect(rtmp, NULL)) {
            LOGE("%s", "RTMP connect failed");
            throwNativeError(env, CONNECT_FAILED);
            goto end;
        }
        // Start timing
        start_time = RTMP_GetTime();
        if (!RTMP_ConnectStream(rtmp, 0)) { // connect the stream
            LOGE("%s", "RTMP ConnectStream failed");
            throwNativeError(env, CONNECT_FAILED);
            goto end;
        }
        is_pushing = TRUE;
        // Send the AAC header
        add_aac_sequence_header();
        while (is_pushing) {
            // Send
            pthread_mutex_lock(&mutex);
            // Note: this waits for a signal even when packets are already queued;
            // waiting in a loop on a "queue is empty" predicate would be more robust
            pthread_cond_wait(&cond, &mutex);
            // Take the first RTMPPacket from the queue
            RTMPPacket *packet = queue_get_first();
            if (packet) {
                queue_delete_first(); // remove it from the queue
                packet->m_nInfoField2 = rtmp->m_stream_id; // stream_id, per the RTMP protocol
                int i = RTMP_SendPacket(rtmp, packet, TRUE); // TRUE queues the packet in librtmp rather than sending immediately
                if (!i) {
                    LOGE("RTMP disconnected");
                    RTMPPacket_Free(packet);
                    pthread_mutex_unlock(&mutex);
                    goto end;
                } else {
                    LOGI("%s", "rtmp send packet");
                }
                RTMPPacket_Free(packet);
            }
            pthread_mutex_unlock(&mutex);
        }
    end:
        LOGI("%s", "releasing resources");
        free(rtmp_path);
        if (rtmp) { // RTMP_Alloc may have failed above
            RTMP_Close(rtmp);
            RTMP_Free(rtmp);
        }
        (*javaVM)->DetachCurrentThread(javaVM);
        return 0;
    }

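Pulling the stream

Pulling the stream is not covered in depth here; it can be done with third-party libraries such as the QLive SDK or vitamio (小楠总).

Pulling the stream with vitamio: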

    private void init() {
        mVideoView = (VideoView) findViewById(R.id.live_player_view);
        mVideoView.setVideoPath(SPUtils.getInstance(this).getString(SPUtils.KEY_NGINX_SER_URI));
        mVideoView.setMediaController(new MediaController(this));
        mVideoView.requestFocus();
        mVideoView.setOnPreparedListener(new MediaPlayer.OnPreparedListener() {
            @Override
            public void onPrepared(MediaPlayer mp) {
                mp.setPlaybackSpeed(1.0f);
            }
        });
    }

PS: Source code: https://github.com/githubhaohao/NDKLive
