Wide and Deep Learning (2): TensorFlow Code Implementation

https://zhuanlan.zhihu.com/p/43328492

I. Feature processing:

The continuous features are:

```python
CONTINUOUS_COLUMNS = ["age", "education_num", "capital_gain", "capital_loss",
                      "hours_per_week"]
```

The categorical features are:

```python
CATEGORICAL_COLUMNS = ["workclass", "education", "marital_status", "occupation",
                       "relationship", "race", "gender", "native_country"]
```

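In the linear (wide) model:

1. Categorical features:

(1) Map categories to integer ids with a user-defined vocabulary. This suits features that have only a few distinct values:

```python
# The Adult data spells these values "Female"/"Male", and the lookup is case-sensitive.
gender = tf.contrib.layers.sparse_column_with_keys(column_name="gender", keys=["Female", "Male"])
```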
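To make the vocabulary idea concrete, here is a plain-Python sketch of what a keys-based lookup does conceptually. `build_vocab_lookup` is a hypothetical helper, not a TensorFlow API. The fallback id of -1 for unknown values is meant to mirror what I believe is the default `default_value` of `sparse_column_with_keys`, so treat it as an assumption.

```python
def build_vocab_lookup(keys, default_id=-1):
    """Hypothetical helper: map each known key to its position in `keys`."""
    table = {key: idx for idx, key in enumerate(keys)}

    def lookup(value):
        # Values outside the vocabulary fall back to default_id (assumed -1).
        return table.get(value, default_id)

    return lookup


gender_lookup = build_vocab_lookup(["Female", "Male"])
print([gender_lookup(v) for v in ["Male", "Female", "male"]])  # [1, 0, -1]
```

The last value shows that the lookup is case-sensitive. The keys therefore have to match the spelling used in the data exactly.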

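(2) Map categories to ids automatically by hashing them into a fixed number of buckets. This suits features with many distinct values. The trade-off is that different values can collide in the same bucket:

```python
education = tf.contrib.layers.sparse_column_with_hash_bucket("education", hash_bucket_size=1000)
```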
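The sketch below shows the hashing idea in plain Python. It is only an illustration: `hash_bucket` is a hypothetical helper, and md5 is swapped in to get a deterministic result. TensorFlow uses its own fingerprint hash, so the real bucket ids will differ.

```python
import hashlib


def hash_bucket(value, hash_bucket_size):
    """Map a category string to a bucket id in [0, hash_bucket_size).

    md5 is an illustrative stand-in for TensorFlow's own string hash.
    """
    digest = hashlib.md5(value.encode("utf-8")).hexdigest()
    return int(digest, 16) % hash_bucket_size


for value in ["Bachelors", "Masters", "Doctorate"]:
    print(value, hash_bucket(value, 1000))
```

No vocabulary has to be known in advance. The cost is that two categories may land in the same bucket and then share a weight.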

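2. Continuous features:

(3) A continuous numeric feature:

```python
age = tf.contrib.layers.real_valued_column("age")
```

(4) Map a continuous feature into categories by value range (bucketization):

```python
age_buckets = tf.contrib.layers.bucketized_column(age, boundaries=[18, 25, 30, 35, 40, 45, 50, 55, 60, 65])
```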
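Here is a plain-Python sketch of how these boundaries split age into buckets. It assumes the usual TensorFlow convention that each bucket includes its left boundary and excludes its right one. The 10 boundaries then give 11 buckets: (-inf, 18), [18, 25), ..., [65, +inf). `bucketize` is a hypothetical helper.

```python
import bisect

AGE_BOUNDARIES = [18, 25, 30, 35, 40, 45, 50, 55, 60, 65]


def bucketize(value, boundaries):
    """Return the bucket index; bucket i covers [boundaries[i-1], boundaries[i])."""
    # bisect_right counts the boundaries <= value, which makes left edges inclusive.
    return bisect.bisect_right(boundaries, value)


print([bucketize(a, AGE_BOUNDARIES) for a in [17, 18, 24, 25, 64, 65, 90]])
# [0, 1, 1, 2, 9, 10, 10]
```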

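3. Cross features:

(5) A cross feature built from every combination of the input features' values (a "product" of the categories):

```python
tf.contrib.layers.crossed_column([education, occupation], hash_bucket_size=int(1e4))
```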
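A plain-Python sketch of the crossing idea follows. Every combination of the input values (their Cartesian product) is turned into one key, and that key is hashed into `hash_bucket_size` buckets. The `cross_ids` helper, the `"_X_"` separator and the md5 hash are illustrative assumptions. TensorFlow combines the per-feature hashes differently, so real ids will not match.

```python
import hashlib
import itertools


def cross_ids(feature_values, hash_bucket_size, separator="_X_"):
    """Hash every combination of the input features' values to a bucket id.

    `feature_values` holds one list of values per input feature, so
    multi-valued inputs yield the Cartesian product of their values.
    """
    ids = []
    for combo in itertools.product(*feature_values):
        key = separator.join(combo)
        digest = hashlib.md5(key.encode("utf-8")).hexdigest()
        ids.append(int(digest, 16) % hash_bucket_size)
    return ids


# education x occupation for a single example row.
print(cross_ids([["Bachelors"], ["Exec-managerial"]], int(1e4)))
```

A single-valued education and occupation give exactly one crossed id per row. The wide model then learns one weight per (hashed) combination, which is what lets it memorize specific co-occurrences.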

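In the neural network (deep) model:

1. Continuous features can be fed into the network directly.

2. Categorical features must be transformed before they are fed in, with either one-hot encoding or an embedding. One-hot encoding is recommended when a feature has few distinct values, and an embedding when it has many. My understanding of the reason is this: when a feature has many values, one-hot encoding produces a very high-dimensional input, so what the network learns is spread too thin. In that case the ultra-high-dimensional categorical feature is first compressed into a low-dimensional embedding and only then fed to the network.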
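A quick back-of-the-envelope comparison makes the dimensionality argument concrete. It uses the bucket and vocabulary sizes that `build_estimator()` in section III assigns to the six embedded categorical columns, with gender having a 2-key vocabulary. The `one_hot` helper is hypothetical, and the count ignores the continuous inputs.

```python
# Bucket / vocabulary sizes of the six categorical columns embedded in build_estimator().
BUCKET_SIZES = {
    "workclass": 100,
    "education": 1000,
    "gender": 2,
    "relationship": 100,
    "native_country": 1000,
    "occupation": 1000,
}
EMBEDDING_DIM = 8


def one_hot(index, depth):
    """Return a list of length `depth` with a single 1 at `index`."""
    vec = [0] * depth
    vec[index] = 1
    return vec


one_hot_width = sum(BUCKET_SIZES.values())            # network input width with one-hot
embedding_width = EMBEDDING_DIM * len(BUCKET_SIZES)   # network input width with 8-d embeddings
print(one_hot_width, embedding_width)  # 3202 48
```

With one-hot encoding, the first hidden layer would see a 3202-wide input with only six non-zero entries per example. With 8-dimensional embeddings, the same information arrives as 48 dense values.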

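Embedding is the core operation for categorical features. It maps each raw category id to a row of a weight matrix, and that matrix is effectively a set of network weights. If it is trainable, it is trained together with the rest of the network. At lookup time it is used as an embedding table from which each feature's embedded value is fetched by id. (This is analogous to word vectors, where each word is mapped to a vector.)

Embedding encoding:

```python
tf.contrib.layers.embedding_column(workclass, dimension=8)
```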
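The sketch below illustrates in plain Python why an embedding table "is" a weight matrix. Looking up row `i` gives the same result as multiplying a one-hot vector for `i` by the table. All helper names are hypothetical. The Gaussian initialization is an illustrative choice, and the "mean" combiner is what I believe `embedding_column` uses by default (an assumption).

```python
import random


def make_embedding_table(num_ids, dimension, seed=0):
    """Create a num_ids x dimension table of small random weights."""
    rng = random.Random(seed)
    scale = 1.0 / dimension ** 0.5
    return [[rng.gauss(0.0, scale) for _ in range(dimension)]
            for _ in range(num_ids)]


def embedding_lookup(table, ids):
    """Fetch the rows for `ids` and average them (a "mean" combiner)."""
    rows = [table[i] for i in ids]
    return [sum(column) / len(rows) for column in zip(*rows)]


def one_hot_matmul(index, table):
    """Multiply a one-hot row vector by the table; this selects row `index`."""
    one_hot = [1.0 if i == index else 0.0 for i in range(len(table))]
    return [sum(one_hot[i] * table[i][j] for i in range(len(table)))
            for j in range(len(table[0]))]


table = make_embedding_table(num_ids=100, dimension=8)  # e.g. 100 workclass buckets
vec = embedding_lookup(table, [42])
print(len(vec), vec == one_hot_matmul(42, table))  # 8 True
```

The lookup skips the multiplication by zeros entirely, which is why embedding tables are used instead of a literal one-hot matmul.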

Storing categorical values:

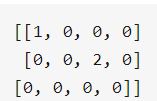

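What is a SparseTensor, and when should you use this data type?

It represents a matrix compactly with three components: the positions (indices), the values, and the shape. For example:

```python
tf.SparseTensor(indices=[[0, 0], [1, 2]], values=[1, 2], dense_shape=[3, 4])
```

represents the 3×4 matrix `[[1, 0, 0, 0], [0, 0, 2, 0], [0, 0, 0, 0]]`.

In other words, there is a 1 at position (0, 0), a 2 at position (1, 2), and every other entry is 0.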
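To check this reading, here is a plain-Python sketch that expands (indices, values, dense_shape) back into a dense nested list. `sparse_to_dense` is a hypothetical helper written for this illustration.

```python
def sparse_to_dense(indices, values, dense_shape, default=0):
    """Expand SparseTensor-style components into a dense nested list."""
    rows, cols = dense_shape
    dense = [[default] * cols for _ in range(rows)]
    for (r, c), v in zip(indices, values):
        dense[r][c] = v
    return dense


print(sparse_to_dense([[0, 0], [1, 2]], [1, 2], [3, 4]))
# [[1, 0, 0, 0], [0, 0, 2, 0], [0, 0, 0, 0]]
```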

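In this example it is used only for categorical features. It suits matrices in which most elements are 0, and in that case it greatly reduces the storage the matrix needs. In the example code, each categorical feature is held in an intermediate sparse matrix to reduce memory usage. In input_fn():

```python
categorical_cols = {
    k: tf.SparseTensor(indices=[[i, 0] for i in range(df[k].size)],
                       values=df[k].values,
                       dense_shape=[df[k].size, 1])
    for k in CATEGORICAL_COLUMNS}
```

This is how the categorical features are stored.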
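The same construction can be written in plain Python to show what the three components of one column look like. `column_to_sparse` is a hypothetical helper, and the three workclass strings are sample values. Each row `i` gets exactly one entry at position (i, 0), so a column of n strings becomes an n×1 sparse matrix.

```python
def column_to_sparse(values):
    """Build SparseTensor-style components for one categorical column."""
    n = len(values)
    return {
        "indices": [[i, 0] for i in range(n)],  # one entry per row, in column 0
        "values": list(values),
        "dense_shape": [n, 1],
    }


sparse = column_to_sparse(["State-gov", "Self-emp-not-inc", "Private"])
print(sparse["indices"], sparse["dense_shape"])  # [[0, 0], [1, 0], [2, 0]] [3, 1]
```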

II. Model:

A different estimator is used depending on the chosen model_type:

```python
if FLAGS.model_type == "wide":
    m = tf.contrib.learn.LinearClassifier(model_dir=model_dir,
                                          feature_columns=wide_columns)
elif FLAGS.model_type == "deep":
    m = tf.contrib.learn.DNNClassifier(model_dir=model_dir,
                                       feature_columns=deep_columns,
                                       hidden_units=[100, 50])
else:
    m = tf.contrib.learn.DNNLinearCombinedClassifier(
        model_dir=model_dir,
        linear_feature_columns=wide_columns,
        dnn_feature_columns=deep_columns,
        dnn_hidden_units=[100, 50])
```

III. Full code:

1. Define the FLAGS options and the categorical columns, continuous columns, and label

```python
import tempfile

import tensorflow as tf  # TensorFlow 1.x: tf.contrib and tf.app.flags were removed in 2.x
from six.moves import urllib
import pandas as pd

# tf.app.flags lets the script accept command-line arguments (similar to reading argv).
flags = tf.app.flags
FLAGS = flags.FLAGS

# Available options:
flags.DEFINE_string("model_dir", "", "Base directory for output models.")
flags.DEFINE_string("model_type", "wide_n_deep",
                    "Valid model types: {'wide', 'deep', 'wide_n_deep'}.")
flags.DEFINE_integer("train_steps", 200, "Number of training steps.")
flags.DEFINE_string("train_data", "", "Path to the training data.")
flags.DEFINE_string("test_data", "", "Path to the test data.")
```

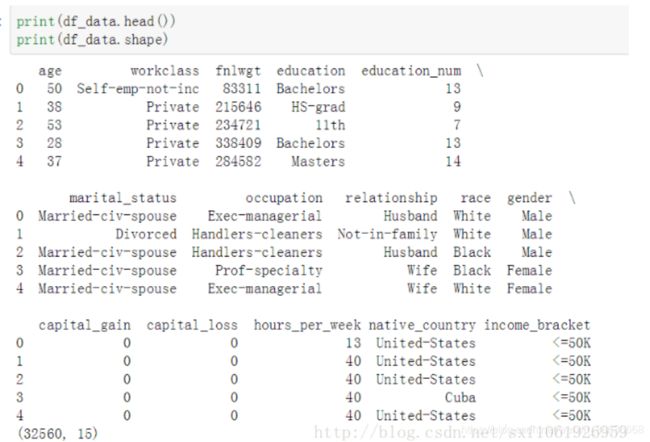

```python
COLUMNS = ["age", "workclass", "fnlwgt", "education", "education_num",
           "marital_status", "occupation", "relationship", "race", "gender",
           "capital_gain", "capital_loss", "hours_per_week", "native_country",
           "income_bracket"]
LABEL_COLUMN = "label"
CATEGORICAL_COLUMNS = ["workclass", "education", "marital_status", "occupation",
                       "relationship", "race", "gender", "native_country"]
CONTINUOUS_COLUMNS = ["age", "education_num", "capital_gain", "capital_loss",
                      "hours_per_week"]
```

2. Download the data: maybe_download()

```python
def maybe_download():
    """Return local paths of the training and test files, downloading them if needed."""
    if FLAGS.train_data:
        train_file_name = FLAGS.train_data  # use the path given on the command line
    else:
        train_file = tempfile.NamedTemporaryFile(delete=False)
        urllib.request.urlretrieve(
            "http://mlr.cs.umass.edu/ml/machine-learning-databases/adult/adult.data",
            train_file.name)
        train_file_name = train_file.name
        train_file.close()
        print("Training data is downloaded to %s" % train_file_name)

    if FLAGS.test_data:
        test_file_name = FLAGS.test_data
    else:
        test_file = tempfile.NamedTemporaryFile(delete=False)
        urllib.request.urlretrieve(
            "http://mlr.cs.umass.edu/ml/machine-learning-databases/adult/adult.test",
            test_file.name)
        test_file_name = test_file.name
        test_file.close()
        print("Test data is downloaded to %s" % test_file_name)

    return train_file_name, test_file_name
```

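3. Define the model: build_estimator()

Note how the features are split between the linear model and the neural network here:

The wide model's features are all categorical: the categorical features themselves plus crosses (interactions) between them.

The deep model's features are the embeddings of the categorical features plus the continuous features.

Each side only needs to focus on what it does well. The wide side memorizes through crossed combinations of categorical features, and the deep side generalizes through feature embeddings. This keeps the size and complexity of each individual model under control while still improving the performance of the combined model.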

```python
# Build the estimator.
def build_estimator(model_dir):
    """Build a wide, deep, or wide & deep estimator depending on FLAGS.model_type."""
    # Categorical base columns.
    # sparse_column_with_keys maps categories to ids with a user-defined vocabulary
    # (for features with few distinct values). The Adult data spells the values
    # "Female"/"Male", and the lookup is case-sensitive.
    gender = tf.contrib.layers.sparse_column_with_keys(
        column_name="gender", keys=["Female", "Male"])
    # sparse_column_with_hash_bucket hashes categories into a fixed number of
    # buckets (for features with many distinct values).
    education = tf.contrib.layers.sparse_column_with_hash_bucket(
        "education", hash_bucket_size=1000)
    relationship = tf.contrib.layers.sparse_column_with_hash_bucket(
        "relationship", hash_bucket_size=100)
    workclass = tf.contrib.layers.sparse_column_with_hash_bucket(
        "workclass", hash_bucket_size=100)
    occupation = tf.contrib.layers.sparse_column_with_hash_bucket(
        "occupation", hash_bucket_size=1000)
    native_country = tf.contrib.layers.sparse_column_with_hash_bucket(
        "native_country", hash_bucket_size=1000)

    # Continuous base columns.
    age = tf.contrib.layers.real_valued_column("age")
    education_num = tf.contrib.layers.real_valued_column("education_num")
    capital_gain = tf.contrib.layers.real_valued_column("capital_gain")
    capital_loss = tf.contrib.layers.real_valued_column("capital_loss")
    hours_per_week = tf.contrib.layers.real_valued_column("hours_per_week")

    # Transformation: bucketize the continuous age feature into categories.
    age_buckets = tf.contrib.layers.bucketized_column(
        age, boundaries=[18, 25, 30, 35, 40, 45, 50, 55, 60, 65])

    # Wide part: categorical columns plus crosses between them.
    wide_columns = [
        gender, native_country, education, occupation, workclass, relationship,
        age_buckets,
        tf.contrib.layers.crossed_column([education, occupation],
                                         hash_bucket_size=int(1e4)),
        tf.contrib.layers.crossed_column([age_buckets, education, occupation],
                                         hash_bucket_size=int(1e6)),
        tf.contrib.layers.crossed_column([native_country, occupation],
                                         hash_bucket_size=int(1e4)),
    ]

    # Deep part: embeddings of the categorical columns plus the continuous columns.
    # embedding_column maps each category id to a row of a weight matrix; if that
    # matrix is trainable it is trained with the network, and at lookup time it
    # serves as an embedding table indexed by id (much like word vectors).
    deep_columns = [
        tf.contrib.layers.embedding_column(workclass, dimension=8),
        tf.contrib.layers.embedding_column(education, dimension=8),
        tf.contrib.layers.embedding_column(gender, dimension=8),
        tf.contrib.layers.embedding_column(relationship, dimension=8),
        tf.contrib.layers.embedding_column(native_country, dimension=8),
        tf.contrib.layers.embedding_column(occupation, dimension=8),
        age, education_num, capital_gain, capital_loss, hours_per_week,
    ]

    if FLAGS.model_type == "wide":
        m = tf.contrib.learn.LinearClassifier(
            model_dir=model_dir, feature_columns=wide_columns)
    elif FLAGS.model_type == "deep":
        m = tf.contrib.learn.DNNClassifier(
            model_dir=model_dir, feature_columns=deep_columns,
            hidden_units=[100, 50])
    else:
        m = tf.contrib.learn.DNNLinearCombinedClassifier(
            model_dir=model_dir,
            linear_feature_columns=wide_columns,
            dnn_feature_columns=deep_columns,
            dnn_hidden_units=[100, 50])
    return m
```

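Which optimizers do the two models use, and how do they work together at the loss level?

Take tf.estimator.DNNLinearCombinedClassifier() as an example. Its defaults are linear_optimizer='Ftrl', so the linear model is trained with the FTRL optimizer, and dnn_optimizer='Adagrad', so the neural network is trained with Adagrad.

If the number of classes is 2, the loss uses

_binary_logistic_head_with_sigmoid_cross_entropy_loss

If the number of classes is greater than 2, the loss uses

_multi_class_head_with_softmax_cross_entropy_loss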
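Here is a minimal plain-Python sketch of the per-example loss math behind those two heads: sigmoid cross-entropy for 2 classes and softmax cross-entropy otherwise. The helper names are hypothetical. The real heads also handle batching, example weights and loss reduction, which are omitted here.

```python
import math


def sigmoid_cross_entropy(logit, label):
    """Binary loss for one logit and a 0/1 label (numerically stable form)."""
    return max(logit, 0.0) - logit * label + math.log1p(math.exp(-abs(logit)))


def softmax_cross_entropy(logits, label_index):
    """Multi-class loss: log-sum-exp of the logits minus the true class logit."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label_index]


def pick_loss(n_classes):
    """Mirror the head choice: sigmoid for 2 classes, softmax for more."""
    return sigmoid_cross_entropy if n_classes == 2 else softmax_cross_entropy


print(pick_loss(2)(0.0, 1), pick_loss(3)([0.0, 0.0, 0.0], 1))
```

At a logit of 0 the binary loss is log 2. With three equal logits the softmax loss is log 3, matching a uniform guess in each case.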

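How is the final combined prediction obtained?

The outputs of the two models are simply added:

```python
logits = dnn_logits + linear_logits
```

Somewhat surprisingly, the two sets of logits are just summed. As described above, these logits and the label are then used to compute the loss. The loss is back-propagated through two independent optimizers, each of which updates the weights of its own model.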
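The toy sketch below shows this combination for a single example. It assumes a tiny hand-written wide part (a bias plus the weights of the active sparse ids) and a one-hidden-layer deep part. All weights, ids and helper names are made up for illustration. Because logit = z_wide + z_deep, both parts receive the same upstream gradient dLoss/dlogit. Each model's own optimizer (FTRL and Adagrad by default in TensorFlow) would then apply that gradient to its own variables. The optimizers themselves are not implemented here.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def wide_logit(active_ids, weights, bias):
    """Linear (wide) part: bias plus the weights of the active sparse ids."""
    return bias + sum(weights[i] for i in active_ids)


def deep_logit(x, hidden_weights, output_weights):
    """Deep part: one ReLU hidden layer followed by a linear output unit."""
    hidden = [max(0.0, sum(w * v for w, v in zip(row, x))) for row in hidden_weights]
    return sum(h * w for h, w in zip(hidden, output_weights))


active_ids = [3, 17]  # hypothetical active (crossed) feature ids
z_wide = wide_logit(active_ids, {3: 0.4, 17: -0.2}, bias=0.1)
z_deep = deep_logit([1.0, 2.0], [[0.5, 0.25], [-1.0, 0.0]], [1.0, 2.0])
logit = z_wide + z_deep  # same form as logits = dnn_logits + linear_logits
label = 1

# For sigmoid cross-entropy, dLoss/dlogit = sigmoid(logit) - label.
grad_logit = sigmoid(logit) - label
# dlogit/dz_wide = dlogit/dz_deep = 1, so each active wide weight sees grad_logit.
grad_wide_weights = {i: grad_logit for i in active_ids}
print(logit, grad_logit)
```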

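4. Split the data into label and features

```python
def input_fn(df):
    """Split a DataFrame into a dict of feature tensors and a label tensor."""
    # Continuous features: one dense constant tensor per column.
    continuous_cols = {k: tf.constant(df[k].values) for k in CONTINUOUS_COLUMNS}
    # Categorical features: one [num_rows, 1] SparseTensor per column.
    # The original example names this argument dense_shape.
    categorical_cols = {
        k: tf.SparseTensor(indices=[[i, 0] for i in range(df[k].size)],
                           values=df[k].values,
                           dense_shape=[df[k].size, 1])
        for k in CATEGORICAL_COLUMNS}
    feature_cols = dict(continuous_cols)
    feature_cols.update(categorical_cols)
    label = tf.constant(df[LABEL_COLUMN].values)
    return feature_cols, label
```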

5. Download and read the files, drop rows containing NaN, define the label and split it out, create a temporary model_dir, train the model, evaluate it on the test set, and print the final metrics.

```python
def train_and_eval():
    """Download and read the data, train the model, and print evaluation metrics."""
    train_file_name, test_file_name = maybe_download()
    df_train = pd.read_csv(
        tf.gfile.Open(train_file_name),
        names=COLUMNS,
        skipinitialspace=True,
        engine="python")
    df_test = pd.read_csv(
        tf.gfile.Open(test_file_name),
        names=COLUMNS,
        skipinitialspace=True,
        skiprows=1,  # the first line of adult.test is not a data row
        engine="python")

    # Drop rows that contain NaN values.
    df_train = df_train.dropna(how="any", axis=0)
    df_test = df_test.dropna(how="any", axis=0)

    # Convert the income bracket to a binary label: ">50K" -> 1, otherwise 0.
    df_train[LABEL_COLUMN] = (
        df_train["income_bracket"].apply(lambda x: ">50K" in x)).astype(int)
    df_test[LABEL_COLUMN] = (
        df_test["income_bracket"].apply(lambda x: ">50K" in x)).astype(int)

    # Use a new temporary directory unless --model_dir is given
    # (the temporary directory has to be deleted manually).
    model_dir = tempfile.mkdtemp() if not FLAGS.model_dir else FLAGS.model_dir
    print("model dir = %s" % model_dir)

    m = build_estimator(model_dir)
    print(FLAGS.train_steps)
    m.fit(input_fn=lambda: input_fn(df_train), steps=FLAGS.train_steps)
    results = m.evaluate(input_fn=lambda: input_fn(df_test), steps=1)
    for key in sorted(results):
        print("%s: %s" % (key, results[key]))


def main(_):
    train_and_eval()


if __name__ == "__main__":
    tf.app.run()
```
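Here is a small plain-Python check of the label rule used in train_and_eval(). It assumes, as I understand the Adult files, that adult.test writes its labels with a trailing period (">50K." and "<=50K.") while adult.data does not. Testing for the substring ">50K" therefore labels both files correctly. `income_to_label` is a hypothetical helper equivalent to the lambda passed to apply().

```python
def income_to_label(income_bracket):
    """Return 1 for the ">50K" bracket and 0 otherwise."""
    return int(">50K" in income_bracket)


train_labels = [income_to_label(x) for x in ["<=50K", ">50K"]]
test_labels = [income_to_label(x) for x in ["<=50K.", ">50K."]]
print(train_labels, test_labels)  # [0, 1] [0, 1]
```

Note that "<=50K" does not contain ">50K", because the character before "50K" is "=", so the lower bracket is never mislabeled.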

The final code:

```python
import tempfile

import tensorflow as tf  # TensorFlow 1.x: tf.contrib and tf.app.flags were removed in 2.x
from six.moves import urllib
import pandas as pd

# tf.app.flags lets the script accept command-line arguments (similar to reading argv).
flags = tf.app.flags
FLAGS = flags.FLAGS

# Available options:
flags.DEFINE_string("model_dir", "", "Base directory for output models.")
flags.DEFINE_string("model_type", "wide_n_deep",
                    "Valid model types: {'wide', 'deep', 'wide_n_deep'}.")
flags.DEFINE_integer("train_steps", 200, "Number of training steps.")
flags.DEFINE_string("train_data", "", "Path to the training data.")
flags.DEFINE_string("test_data", "", "Path to the test data.")

COLUMNS = ["age", "workclass", "fnlwgt", "education", "education_num",
           "marital_status", "occupation", "relationship", "race", "gender",
           "capital_gain", "capital_loss", "hours_per_week", "native_country",
           "income_bracket"]
LABEL_COLUMN = "label"
CATEGORICAL_COLUMNS = ["workclass", "education", "marital_status", "occupation",
                       "relationship", "race", "gender", "native_country"]
CONTINUOUS_COLUMNS = ["age", "education_num", "capital_gain", "capital_loss",
                      "hours_per_week"]


# Download the training and test data.
def maybe_download():
    if FLAGS.train_data:
        train_file_name = FLAGS.train_data
    else:
        train_file = tempfile.NamedTemporaryFile(delete=False)
        urllib.request.urlretrieve(
            "http://mlr.cs.umass.edu/ml/machine-learning-databases/adult/adult.data",
            train_file.name)
        train_file_name = train_file.name
        train_file.close()
        print("Training data is downloaded to %s" % train_file_name)

    if FLAGS.test_data:
        test_file_name = FLAGS.test_data
    else:
        test_file = tempfile.NamedTemporaryFile(delete=False)
        urllib.request.urlretrieve(
            "http://mlr.cs.umass.edu/ml/machine-learning-databases/adult/adult.test",
            test_file.name)
        test_file_name = test_file.name
        test_file.close()
        print("Test data is downloaded to %s" % test_file_name)

    return train_file_name, test_file_name


# Build the estimator.
def build_estimator(model_dir):
    # Categorical base columns: a fixed vocabulary for few values (case-sensitive;
    # the data uses "Female"/"Male"), hash buckets for many values.
    gender = tf.contrib.layers.sparse_column_with_keys(
        column_name="gender", keys=["Female", "Male"])
    education = tf.contrib.layers.sparse_column_with_hash_bucket(
        "education", hash_bucket_size=1000)
    relationship = tf.contrib.layers.sparse_column_with_hash_bucket(
        "relationship", hash_bucket_size=100)
    workclass = tf.contrib.layers.sparse_column_with_hash_bucket(
        "workclass", hash_bucket_size=100)
    occupation = tf.contrib.layers.sparse_column_with_hash_bucket(
        "occupation", hash_bucket_size=1000)
    native_country = tf.contrib.layers.sparse_column_with_hash_bucket(
        "native_country", hash_bucket_size=1000)

    # Continuous base columns.
    age = tf.contrib.layers.real_valued_column("age")
    education_num = tf.contrib.layers.real_valued_column("education_num")
    capital_gain = tf.contrib.layers.real_valued_column("capital_gain")
    capital_loss = tf.contrib.layers.real_valued_column("capital_loss")
    hours_per_week = tf.contrib.layers.real_valued_column("hours_per_week")

    # Transformation: bucketize the continuous age feature into categories.
    age_buckets = tf.contrib.layers.bucketized_column(
        age, boundaries=[18, 25, 30, 35, 40, 45, 50, 55, 60, 65])

    # Wide part: categorical columns plus crosses between them.
    wide_columns = [
        gender, native_country, education, occupation, workclass, relationship,
        age_buckets,
        tf.contrib.layers.crossed_column([education, occupation],
                                         hash_bucket_size=int(1e4)),
        tf.contrib.layers.crossed_column([age_buckets, education, occupation],
                                         hash_bucket_size=int(1e6)),
        tf.contrib.layers.crossed_column([native_country, occupation],
                                         hash_bucket_size=int(1e4)),
    ]

    # Deep part: embeddings (trainable lookup tables, like word vectors)
    # of the categorical columns plus the continuous columns.
    deep_columns = [
        tf.contrib.layers.embedding_column(workclass, dimension=8),
        tf.contrib.layers.embedding_column(education, dimension=8),
        tf.contrib.layers.embedding_column(gender, dimension=8),
        tf.contrib.layers.embedding_column(relationship, dimension=8),
        tf.contrib.layers.embedding_column(native_country, dimension=8),
        tf.contrib.layers.embedding_column(occupation, dimension=8),
        age, education_num, capital_gain, capital_loss, hours_per_week,
    ]

    if FLAGS.model_type == "wide":
        m = tf.contrib.learn.LinearClassifier(
            model_dir=model_dir, feature_columns=wide_columns)
    elif FLAGS.model_type == "deep":
        m = tf.contrib.learn.DNNClassifier(
            model_dir=model_dir, feature_columns=deep_columns,
            hidden_units=[100, 50])
    else:
        m = tf.contrib.learn.DNNLinearCombinedClassifier(
            model_dir=model_dir,
            linear_feature_columns=wide_columns,
            dnn_feature_columns=deep_columns,
            dnn_hidden_units=[100, 50])
    return m


# Split the data into features and label.
def input_fn(df):
    continuous_cols = {k: tf.constant(df[k].values) for k in CONTINUOUS_COLUMNS}
    # One [num_rows, 1] SparseTensor per categorical column (argument named dense_shape).
    categorical_cols = {
        k: tf.SparseTensor(indices=[[i, 0] for i in range(df[k].size)],
                           values=df[k].values,
                           dense_shape=[df[k].size, 1])
        for k in CATEGORICAL_COLUMNS}
    feature_cols = dict(continuous_cols)
    feature_cols.update(categorical_cols)
    label = tf.constant(df[LABEL_COLUMN].values)
    return feature_cols, label


# Main training function.
def train_and_eval():
    train_file_name, test_file_name = maybe_download()
    df_train = pd.read_csv(tf.gfile.Open(train_file_name), names=COLUMNS,
                           skipinitialspace=True, engine="python")
    df_test = pd.read_csv(tf.gfile.Open(test_file_name), names=COLUMNS,
                          skipinitialspace=True, skiprows=1, engine="python")

    # Drop rows that contain NaN values.
    df_train = df_train.dropna(how="any", axis=0)
    df_test = df_test.dropna(how="any", axis=0)

    # Convert the income bracket to a binary label: ">50K" -> 1, otherwise 0.
    df_train[LABEL_COLUMN] = (
        df_train["income_bracket"].apply(lambda x: ">50K" in x)).astype(int)
    df_test[LABEL_COLUMN] = (
        df_test["income_bracket"].apply(lambda x: ">50K" in x)).astype(int)

    # Create a temporary directory unless --model_dir is given; it must be deleted manually.
    model_dir = tempfile.mkdtemp() if not FLAGS.model_dir else FLAGS.model_dir
    print("model dir = %s" % model_dir)

    m = build_estimator(model_dir)
    print(FLAGS.train_steps)
    m.fit(input_fn=lambda: input_fn(df_train), steps=FLAGS.train_steps)
    results = m.evaluate(input_fn=lambda: input_fn(df_test), steps=1)
    for key in sorted(results):
        print("%s: %s" % (key, results[key]))


def main(_):
    train_and_eval()


if __name__ == "__main__":
    tf.app.run()
```