Implementing DPN in PyTorch: A Detailed, Complete Walkthrough

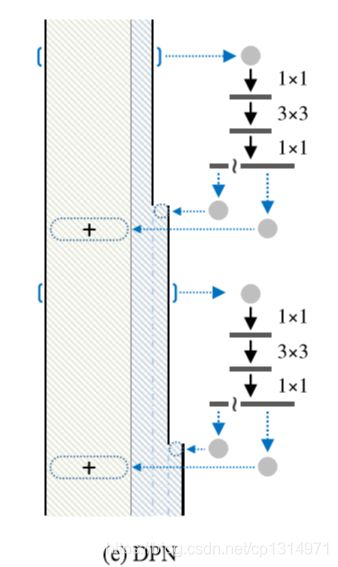

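DPN (Dual Path Network) combines ideas from three base models: Inception, ResNet, and DenseNet. It has the width of Inception, the shortcut connections of ResNet, and the repeated reuse of shallow features from DenseNet, which is where its strength comes from. The model is fairly hard to master, and you need hands-on experience with those three models to follow the code in this post. I have written a detailed walkthrough of each of them (Inception, ResNet, DenseNet); once you fully understand those three posts, DPN is easy to pick up.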

Using torch.cat

The first step is to understand the following line of code.

x = torch.cat([a[:,:d,:,:]+x[:,:d,:,:], a[:,d:,:,:], x[:,d:,:,:]], dim=1)

Before explaining what this line means, I need to cover how slicing works. Look at the examples below.

import torch

a = torch.rand((2, 3, 5, 5))
print(a[:, :1, :, :])   # keep only the first channel of every sample

# Output (truncated after the first sample; values are random)
tensor([[[[0.8158, 0.1786, 0.3600, 0.6222, 0.6837],
          [0.9744, 0.9082, 0.5492, 0.6188, 0.2063],
          [0.1767, 0.1156, 0.7501, 0.6518, 0.0286],
          [0.9356, 0.6780, 0.8628, 0.2419, 0.3672],
          [0.3171, 0.0869, 0.4242, 0.0131, 0.3955]]],

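Basic slicing is easy to follow: in convolution terms, this slice takes a single channel of the feature map. Now look at the next two examples.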

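import torch

a = torch.rand((2, 3, 5, 5))
b = torch.rand((2, 3, 5, 5))

# Element-wise sum of the first channels: still one channel
d = torch.cat([a[:, :1, :, :] + b[:, :1, :, :]], dim=1)
print(d.size())
# Output
torch.Size([2, 1, 5, 5])

# Concatenation of the first channels: two channels
d = torch.cat([a[:, :1, :, :], b[:, :1, :, :]], dim=1)
print(d.size())
# Output
torch.Size([2, 2, 5, 5])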

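The output sizes differ. The first case adds the data element-wise, so only the values change. The second case concatenates another feature map, so the number of channels changes.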

import torch

a = torch.rand((2, 3, 5, 5))
b = torch.rand((2, 3, 5, 5))

e = torch.cat([a[:, :1, :, :] + b[:, :1, :, :], a[:, 1:, :, :]], dim=1)
c = torch.cat([a[:, :1, :, :] + b[:, :1, :, :], a[:, 1:, :, :], b[:, 1:, :, :]], dim=1)
print(e.size())
print(c.size())

# Output
torch.Size([2, 3, 5, 5])
torch.Size([2, 5, 5, 5])

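e has 3 channels. a[:,:1,:,:]+b[:,:1,:,:] is an element-wise sum, so the channel count stays at 1 and only the values change. The slice a[:,1:,:,:] is the same as a[:,1:3,:,:], which makes it easier to see: one channel in front plus two channels behind gives three channels. c additionally appends b[:,1:,:,:], which contributes two more channels for a total of 5. By now the use of torch.cat should be clear.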
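To make the rule concrete, here is a minimal sketch that wraps the merge line in a helper and checks both shapes and values. The helper name dual_path_merge and the constant-valued tensors are assumptions made for illustration; they do not appear in the original code.

```python
import torch


def dual_path_merge(a, x, d):
    """Add the first d channels of a and x, then concatenate the remaining channels."""
    residual = a[:, :d] + x[:, :d]  # element-wise sum: still d channels
    return torch.cat([residual, a[:, d:], x[:, d:]], dim=1)


# Constant tensors make the result easy to check by eye (illustrative values).
a = torch.ones(2, 3, 5, 5)
b = torch.full((2, 3, 5, 5), 2.0)

merged = dual_path_merge(a, b, d=1)
print(merged.shape)  # torch.Size([2, 5, 5, 5])
```

Channel 0 holds the sum (1 + 2 = 3), channels 1 and 2 are copied from a, and channels 3 and 4 are copied from b.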

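The fused model

DPN makes very thorough use of its feature maps: it has a shortcut on one side and dense reuse of shallow features on the other, which also makes its construction fairly hard to follow. Look at how a single block is built.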

class Block(nn.Module):
    def __init__(self, in_channels, mid_channels, out_channels, dense_channels, stride, is_shortcut):
        super(Block, self).__init__()
        self.is_shortcut = is_shortcut
        self.out_channels = out_channels
        self.relu = nn.ReLU(inplace=True)
        # 1x1 convolution: reduce to mid_channels
        self.conv1 = nn.Sequential(
            nn.Conv2d(in_channels, mid_channels, kernel_size=1, bias=False),
            nn.BatchNorm2d(mid_channels),
            nn.ReLU()
        )
        # 3x3 grouped convolution (mid_channels must be divisible by 32)
        self.conv2 = nn.Sequential(
            nn.Conv2d(mid_channels, mid_channels, kernel_size=3, stride=stride, padding=1, groups=32, bias=False),
            nn.BatchNorm2d(mid_channels),
            nn.ReLU()
        )
        # 1x1 convolution: produce the residual and dense channels together
        self.conv3 = nn.Sequential(
            nn.Conv2d(mid_channels, out_channels + dense_channels, kernel_size=1, bias=False),
            nn.BatchNorm2d(out_channels + dense_channels)
        )
        if self.is_shortcut:
            # Projection shortcut, used by the first block of each stage
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_channels, out_channels + dense_channels, kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(out_channels + dense_channels)
            )

    def forward(self, x):
        a = x
        x = self.conv1(x)
        x = self.conv2(x)
        x = self.conv3(x)
        if self.is_shortcut:
            a = self.shortcut(a)
        d = self.out_channels
        # First d channels: residual sum. Remaining channels: dense concatenation.
        x = torch.cat([a[:, :d, :, :] + x[:, :d, :, :], a[:, d:, :, :], x[:, d:, :, :]], dim=1)
        x = self.relu(x)
        return x

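in_channels is the number of input channels, mid_channels is the number of channels inside the block, and out_channels is the number of channels on the residual (ResNet) path after the block. The dense_channels parameter exists because the block runs a ResNet-style convolution and a DenseNet-style convolution side by side, then fuses the resulting feature maps into a new feature map.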

self.conv3 = nn.Sequential(
    nn.Conv2d(mid_channels, out_channels + dense_channels, kernel_size=1, bias=False),
    nn.BatchNorm2d(out_channels + dense_channels)
)

self.shortcut = nn.Sequential(
    nn.Conv2d(in_channels, out_channels + dense_channels, kernel_size=1, stride=stride, bias=False),
    nn.BatchNorm2d(out_channels + dense_channels)
)

The key point is out_channels+dense_channels: a single convolution produces the channels for the ResNet path and the dense path at the same time.
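As a small sketch of that idea, the snippet below runs one 1x1 convolution with out_channels + dense_channels outputs and then separates the result into the two paths. The sizes (16 inputs, 8 residual channels, 4 dense channels) are made up for illustration, and torch.split is used here only to show the split explicitly; the article's Block does the same thing with slices.

```python
import torch
import torch.nn as nn

# Illustrative sizes, not taken from the article's configuration.
in_channels, out_channels, dense_channels = 16, 8, 4

# One 1x1 convolution produces the channels for both paths at once.
conv3 = nn.Conv2d(in_channels, out_channels + dense_channels, kernel_size=1, bias=False)

y = conv3(torch.rand(2, in_channels, 5, 5))

# The first out_channels channels feed the residual sum,
# the remaining dense_channels channels get concatenated.
res_part, dense_part = torch.split(y, [out_channels, dense_channels], dim=1)

print(y.shape)           # torch.Size([2, 12, 5, 5])
print(res_part.shape)    # torch.Size([2, 8, 5, 5])
print(dense_part.shape)  # torch.Size([2, 4, 5, 5])
```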

Explaining the model structure

def _make_layer(self, mid_channels, out_channels, dense_channels, num, stride):
    layers = []
    # First block of the stage: projection shortcut, may downsample via stride
    block_1 = Block(self.in_channels, mid_channels, out_channels, dense_channels, stride, is_shortcut=True)
    self.in_channels = out_channels + 2 * dense_channels
    layers.append(block_1)
    # Remaining blocks: identity shortcut, each adds dense_channels channels
    for i in range(1, num):
        layers.append(Block(self.in_channels, mid_channels, out_channels, dense_channels, stride=1, is_shortcut=False))
        self.in_channels = out_channels + (i + 2) * dense_channels
    return nn.Sequential(*layers)

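In block_1, is_shortcut=True is the shortcut connection from ResNet: the shallow feature map goes through one convolution and is then combined with the feature map that has gone through three convolutions (added on the first out_channels channels, concatenated on the rest). self.in_channels = out_channels + 2*dense_channels because the dense path keeps stacking feature maps: after the first block there are 2 × dense_channels dense channels, and every later block adds one more multiple, which gives (i+2)*dense_channels. The later blocks use is_shortcut=False. Think of them as one block repeated several times: the projection in the first block is enough to bring the shallow features into shape, so the repeated blocks no longer need it and pass their input through unchanged.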
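The channel bookkeeping can be checked without building any layers. The following pure-Python sketch mirrors the arithmetic in _make_layer; the function name stage_channels is an assumption for illustration, and the numbers in the example are the first-stage values of the DPN92 configuration used later in this post.

```python
def stage_channels(in_channels, out_channels, dense_channels, num):
    """Return (input, output) channel counts for every block in one stage."""
    schedule = []
    for i in range(num):
        # Block i outputs out_channels residual channels plus (i + 2) dense groups.
        block_out = out_channels + (i + 2) * dense_channels
        schedule.append((in_channels, block_out))
        in_channels = block_out  # the next block consumes this output
    return schedule


# First stage of DPN92: 64 input channels, out=256, dense=16, 3 blocks.
for cin, cout in stage_channels(64, 256, 16, 3):
    print(cin, "->", cout)
# 64 -> 288
# 288 -> 304
# 304 -> 320
```

The last output, 320 = 256 + (3 + 1) * 16, matches the out_channels + (num + 1) * dense_channels expression used for the fully connected layer.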

Full code

import torch
import torch.nn as nn


class Block(nn.Module):
    def __init__(self, in_channels, mid_channels, out_channels, dense_channels, stride, is_shortcut):
        super(Block, self).__init__()
        self.is_shortcut = is_shortcut
        self.out_channels = out_channels
        self.relu = nn.ReLU(inplace=True)
        self.conv1 = nn.Sequential(
            nn.Conv2d(in_channels, mid_channels, kernel_size=1, bias=False),
            nn.BatchNorm2d(mid_channels),
            nn.ReLU()
        )
        self.conv2 = nn.Sequential(
            nn.Conv2d(mid_channels, mid_channels, kernel_size=3, stride=stride, padding=1, groups=32, bias=False),
            nn.BatchNorm2d(mid_channels),
            nn.ReLU()
        )
        self.conv3 = nn.Sequential(
            nn.Conv2d(mid_channels, out_channels + dense_channels, kernel_size=1, bias=False),
            nn.BatchNorm2d(out_channels + dense_channels)
        )
        if self.is_shortcut:
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_channels, out_channels + dense_channels, kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(out_channels + dense_channels)
            )

    def forward(self, x):
        a = x
        x = self.conv1(x)
        x = self.conv2(x)
        x = self.conv3(x)
        if self.is_shortcut:
            a = self.shortcut(a)
        d = self.out_channels
        # First d channels: residual sum. Remaining channels: dense concatenation.
        x = torch.cat([a[:, :d, :, :] + x[:, :d, :, :], a[:, d:, :, :], x[:, d:, :, :]], dim=1)
        x = self.relu(x)
        return x


class DPN(nn.Module):
    def __init__(self, cfg):
        super(DPN, self).__init__()
        mid_channels = cfg['mid_channels']
        out_channels = cfg['out_channels']
        num = cfg['num']
        dense_channels = cfg['dense_channels']
        self.in_channels = 64
        # Stem: 7x7 convolution followed by max pooling
        self.conv1 = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False),
            nn.BatchNorm2d(64),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        )
        # Four stages; every stage except the first halves the spatial size
        self.conv2 = self._make_layer(mid_channels[0], out_channels[0], dense_channels[0], num[0], stride=1)
        self.conv3 = self._make_layer(mid_channels[1], out_channels[1], dense_channels[1], num[1], stride=2)
        self.conv4 = self._make_layer(mid_channels[2], out_channels[2], dense_channels[2], num[2], stride=2)
        self.conv5 = self._make_layer(mid_channels[3], out_channels[3], dense_channels[3], num[3], stride=2)
        self.global_average_pool = nn.AdaptiveAvgPool2d((1, 1))
        # The last stage outputs out_channels + (num + 1) * dense_channels channels
        self.fc = nn.Linear(out_channels[3] + (num[3] + 1) * dense_channels[3], cfg['classes'])

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv2(x)
        x = self.conv3(x)
        x = self.conv4(x)
        x = self.conv5(x)
        x = self.global_average_pool(x)
        x = torch.flatten(x, 1)
        x = self.fc(x)
        return x

    def _make_layer(self, mid_channels, out_channels, dense_channels, num, stride):
        layers = []
        block_1 = Block(self.in_channels, mid_channels, out_channels, dense_channels, stride, is_shortcut=True)
        self.in_channels = out_channels + 2 * dense_channels
        layers.append(block_1)
        for i in range(1, num):
            layers.append(Block(self.in_channels, mid_channels, out_channels, dense_channels, stride=1, is_shortcut=False))
            self.in_channels = out_channels + (i + 2) * dense_channels
        return nn.Sequential(*layers)

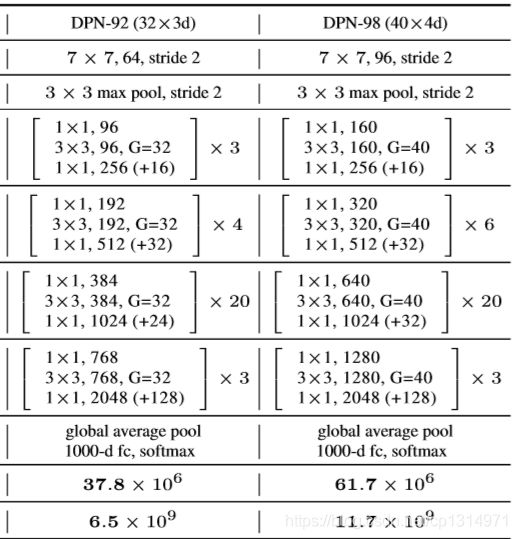

def DPN92():
    cfg = {
        'mid_channels': (96, 192, 384, 768),
        'out_channels': (256, 512, 1024, 2048),
        'num': (3, 4, 20, 3),
        'dense_channels': (16, 32, 24, 128),
        'classes': 10
    }
    return DPN(cfg)


net = DPN92()
x = torch.rand((10, 3, 224, 224))
# Pass a random batch through each top-level module and print the shapes
for name, layer in net.named_children():
    if name != "fc":
        x = layer(x)
        print(name, 'output shape:', x.shape)
    else:
        # Flatten [N, C, 1, 1] to [N, C] before the fully connected layer
        x = x.view(x.size(0), -1)
        x = layer(x)
        print(name, 'output shape:', x.shape)
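The shapes printed by this loop can be predicted by hand. The sketch below is an illustration only: the helper names conv_out and expected_shapes are hypothetical, and the strides (1, 2, 2, 2) and stem settings are copied from the DPN constructor above. It applies the standard convolution output-size formula together with the channel rule out_channels + (num + 1) * dense_channels.

```python
def conv_out(size, kernel, stride, padding):
    """Spatial output size of a convolution or pooling layer (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1


def expected_shapes(cfg, image_size=224):
    """Return (name, channels, spatial size) after the stem and each stage."""
    size = conv_out(image_size, kernel=7, stride=2, padding=3)  # stem convolution
    size = conv_out(size, kernel=3, stride=2, padding=1)        # stem max pooling
    shapes = [("conv1", 64, size)]
    for idx, stride in enumerate((1, 2, 2, 2)):
        # Only the first block of a stage uses the stride (3x3 conv, padding 1).
        size = conv_out(size, kernel=3, stride=stride, padding=1)
        channels = cfg["out_channels"][idx] + (cfg["num"][idx] + 1) * cfg["dense_channels"][idx]
        shapes.append((f"conv{idx + 2}", channels, size))
    return shapes


# DPN92 configuration values as given in the article.
cfg = {
    "out_channels": (256, 512, 1024, 2048),
    "num": (3, 4, 20, 3),
    "dense_channels": (16, 32, 24, 128),
}

for name, channels, size in expected_shapes(cfg):
    print(name, channels, size)
# conv1 64 56
# conv2 320 56
# conv3 672 28
# conv4 1528 14
# conv5 2560 7
```

With a batch of 10 images, conv5 should therefore print [10, 2560, 7, 7], global average pooling reduces that to [10, 2560, 1, 1], and fc maps the 2560 features to the 10 classes.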