Compiling the code for Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks

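The official Faster R-CNN instructions make the setup look very simple, but... I still ran into a whole pile of problems. Here are the important points I still remember.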

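1. The very first thing the official Faster R-CNN instructions tell you is to edit Makefile.config to enable cuDNN and the Python layer. So cuDNN is required, and (this is the key point) only cuDNN v4 will do. If you have read my earlier posts, you know that when I first set things up I followed someone else's tutorial and installed cuDNN v5. Building this project, however, needs v4. Otherwise you get errors like the following, where the linker cannot find the *_v3 convolution functions, or the compiler complains about the declaration of cudnnSetPooling2dDescriptor in the installed cudnn.h: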

.build_release/lib/libcaffe.so: undefined reference to `cudnnConvolutionBackwardData_v3'
.build_release/lib/libcaffe.so: undefined reference to `cudnnConvolutionBackwardFilter_v3'
collect2: error: ld returned 1 exit status
Makefile:626: recipe for target '.build_release/tools/convert_imageset.bin' failed
make: *** [.build_release/tools/convert_imageset.bin] Error 1

or:

In file included from ./include/caffe/util/cudnn.hpp:5:0,
                 from ./include/caffe/util/device_alternate.hpp:40,
                 from ./include/caffe/common.hpp:19,
                 from ./include/caffe/blob.hpp:8,
                 from ./include/caffe/layer.hpp:8,
                 from src/caffe/layer.cpp:2:
/usr/include/cudnn.h:803:27: note: declared here
cudnnStatus_t CUDNNWINAPI cudnnSetPooling2dDescriptor(
                          ^
Makefile:563: recipe for target '.build_release/src/caffe/layer.o' failed
make: *** [.build_release/src/caffe/layer.o] Error 1
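Before rebuilding, it helps to confirm which cuDNN version is actually installed. The second log shows the compiler reading /usr/include/cudnn.h, which is not under CUDA_DIR (/usr/local/cuda in my Makefile.config), so it is worth checking both locations. Below is a minimal shell sketch, assuming a v4/v5-era cudnn.h that defines CUDNN_MAJOR, CUDNN_MINOR and CUDNN_PATCHLEVEL; the function names are my own and not part of cuDNN or Caffe.

```shell
#!/usr/bin/env bash
# Print the cuDNN version (MAJOR.MINOR.PATCH) declared in one cudnn.h header.
cudnn_version() {
  local header="$1"
  [ -r "$header" ] || { echo "cannot read $header" >&2; return 1; }
  # v4/v5 headers contain lines such as "#define CUDNN_MAJOR      4".
  awk '/^#define[ \t]+CUDNN_MAJOR[ \t]/      { ma = $3 }
       /^#define[ \t]+CUDNN_MINOR[ \t]/      { mi = $3 }
       /^#define[ \t]+CUDNN_PATCHLEVEL[ \t]/ { pa = $3 }
       END { if (ma == "") exit 1; print ma "." mi "." pa }' "$header"
}

# Succeed only if the header declares major version 4.
is_cudnn_v4() {
  local v
  v="$(cudnn_version "$1")" || return 1
  [ "${v%%.*}" = "4" ]
}

# Report every cudnn.h in the two locations relevant to this post.
report_cudnn_headers() {
  local h
  for h in /usr/local/cuda/include/cudnn.h /usr/include/cudnn.h; do
    [ -r "$h" ] || continue
    if is_cudnn_v4 "$h"; then
      echo "$h: cuDNN $(cudnn_version "$h") (v4, OK)"
    else
      echo "$h: cuDNN $(cudnn_version "$h") (not v4)"
    fi
  done
}
```

Source the file and run report_cudnn_headers; any header reported as "not v4" is a likely cause of the errors above.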

2. The official instructions also say to build with make -j8 && make pycaffe. However, if you get the following error:

In file included from ./include/caffe/util/device_alternate.hpp:40:0,
                 from ./include/caffe/common.hpp:19,
                 from src/caffe/net.cpp:10:
./include/caffe/util/cudnn.hpp:8:34: fatal error: caffe/proto/caffe.pb.h: No such file or directory
compilation terminated.
Makefile:582: recipe for target '.build_release/src/caffe/net.o' failed
make: *** [.build_release/src/caffe/net.o] Error 1
make: *** Waiting for unfinished jobs....

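it is because the build runs too fast: with -j8, make most likely starts compiling src/caffe/net.cpp before the protobuf header caffe/proto/caffe.pb.h has been generated. Just split the steps: run make on its own first, and only after it has finished run make pycaffe. Then there is no problem.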
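The split build can be written as a small script. This is a sketch assuming it is run from the Caffe directory (caffe-fast-rcnn inside the py-faster-rcnn checkout) with Makefile.config already in place; build_caffe is a hypothetical helper name. The extra first step calls the proto target defined in the Makefile below so that caffe.pb.h exists before any parallel compile job starts; that step is my own precaution, not part of the official instructions.

```shell
#!/usr/bin/env bash
# Build Caffe in separate stages instead of one "make -j8 && make pycaffe" line.
build_caffe() {
  local jobs="${1:-8}"
  # Stage 1 (extra precaution): generate caffe.pb.h / caffe.pb.cc first via the
  # Makefile's "proto" target, so no compile job can run ahead of the header.
  make proto || return 1
  # Stage 2: build the library, tools and examples; stop here on failure.
  make -j"$jobs" || return 1
  # Stage 3: only after that succeeds, build the Python bindings.
  make pycaffe
}
```

For example: cd caffe-fast-rcnn, source the file, then run build_caffe 8. If stage 2 still fails, rerunning it with a lower job count is a cheap next step.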

3. At the very start I also ran into a silly problem:

Makefile:6: *** Makefile.config not found. See Makefile.config.example.. Stop.

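This is because a freshly downloaded copy of the code has no Makefile.config; you need to add one. Some people run cp Makefile.config.example Makefile.config and configure it from scratch. If you have already configured Caffe successfully before, simply copying that Makefile.config over works as well. If, like me, you also modified the Makefile back then, it is best to copy the Makefile over too.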
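The two options above (start from the template, or reuse a config that already built Caffe) can be wrapped in a small helper. A sketch, assuming a hypothetical function ensure_makefile_config that takes the Caffe directory and, optionally, the directory of an earlier working Caffe build; it never overwrites an existing Makefile.config. Copying a modified Makefile is left as a manual step.

```shell
#!/usr/bin/env bash
# Make sure a Caffe tree has a Makefile.config before running make.
# $1: Caffe directory (default: current directory)
# $2: optional directory of an earlier, successfully built Caffe
ensure_makefile_config() {
  local caffe_dir="${1:-.}" previous="${2:-}"
  # Never overwrite a config that is already there.
  if [ -f "$caffe_dir/Makefile.config" ]; then
    echo "$caffe_dir/Makefile.config already exists, leaving it alone"
    return 0
  fi
  if [ -n "$previous" ] && [ -f "$previous/Makefile.config" ]; then
    # Reuse a config that has already built Caffe successfully.
    cp "$previous/Makefile.config" "$caffe_dir/Makefile.config"
  else
    # Start from the template shipped with Caffe; edit it before running make.
    cp "$caffe_dir/Makefile.config.example" "$caffe_dir/Makefile.config"
  fi
}
```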

My Makefile is as follows:

PROJECT := caffe

CONFIG_FILE := Makefile.config
# Explicitly check for the config file, otherwise make -k will proceed anyway.
ifeq ($(wildcard $(CONFIG_FILE)),)
$(error $(CONFIG_FILE) not found. See $(CONFIG_FILE).example.)
endif
include $(CONFIG_FILE)

BUILD_DIR_LINK := $(BUILD_DIR)
ifeq ($(RELEASE_BUILD_DIR),)
RELEASE_BUILD_DIR := .$(BUILD_DIR)_release
endif
ifeq ($(DEBUG_BUILD_DIR),)
DEBUG_BUILD_DIR := .$(BUILD_DIR)_debug
endif

DEBUG ?= 0
ifeq ($(DEBUG), 1)
BUILD_DIR := $(DEBUG_BUILD_DIR)
OTHER_BUILD_DIR := $(RELEASE_BUILD_DIR)
else
BUILD_DIR := $(RELEASE_BUILD_DIR)
OTHER_BUILD_DIR := $(DEBUG_BUILD_DIR)
endif

# All of the directories containing code.
SRC_DIRS := $(shell find * -type d -exec bash -c "find {} -maxdepth 1 \
	\( -name '*.cpp' -o -name '*.proto' \) | grep -q ." \; -print)

# The target shared library name
LIBRARY_NAME := $(PROJECT)
LIB_BUILD_DIR := $(BUILD_DIR)/lib
STATIC_NAME := $(LIB_BUILD_DIR)/lib$(LIBRARY_NAME).a
DYNAMIC_VERSION_MAJOR := 1
DYNAMIC_VERSION_MINOR := 0
DYNAMIC_VERSION_REVISION := 0-rc5
DYNAMIC_NAME_SHORT := lib$(LIBRARY_NAME).so
#DYNAMIC_SONAME_SHORT := $(DYNAMIC_NAME_SHORT).$(DYNAMIC_VERSION_MAJOR)
DYNAMIC_VERSIONED_NAME_SHORT := $(DYNAMIC_NAME_SHORT).$(DYNAMIC_VERSION_MAJOR).$(DYNAMIC_VERSION_MINOR).$(DYNAMIC_VERSION_REVISION)
DYNAMIC_NAME := $(LIB_BUILD_DIR)/$(DYNAMIC_VERSIONED_NAME_SHORT)
COMMON_FLAGS += -DCAFFE_VERSION=$(DYNAMIC_VERSION_MAJOR).$(DYNAMIC_VERSION_MINOR).$(DYNAMIC_VERSION_REVISION)

##############################
# Get all source files
##############################
# CXX_SRCS are the source files excluding the test ones.
CXX_SRCS := $(shell find src/$(PROJECT) ! -name "test_*.cpp" -name "*.cpp")
# CU_SRCS are the cuda source files
CU_SRCS := $(shell find src/$(PROJECT) ! -name "test_*.cu" -name "*.cu")
# TEST_SRCS are the test source files
TEST_MAIN_SRC := src/$(PROJECT)/test/test_caffe_main.cpp
TEST_SRCS := $(shell find src/$(PROJECT) -name "test_*.cpp")
TEST_SRCS := $(filter-out $(TEST_MAIN_SRC), $(TEST_SRCS))
TEST_CU_SRCS := $(shell find src/$(PROJECT) -name "test_*.cu")
GTEST_SRC := src/gtest/gtest-all.cpp
# TOOL_SRCS are the source files for the tool binaries
TOOL_SRCS := $(shell find tools -name "*.cpp")
# EXAMPLE_SRCS are the source files for the example binaries
EXAMPLE_SRCS := $(shell find examples -name "*.cpp")
# BUILD_INCLUDE_DIR contains any generated header files we want to include.
BUILD_INCLUDE_DIR := $(BUILD_DIR)/src
# PROTO_SRCS are the protocol buffer definitions
PROTO_SRC_DIR := src/$(PROJECT)/proto
PROTO_SRCS := $(wildcard $(PROTO_SRC_DIR)/*.proto)
# PROTO_BUILD_DIR will contain the .cc and obj files generated from
# PROTO_SRCS; PROTO_BUILD_INCLUDE_DIR will contain the .h header files
PROTO_BUILD_DIR := $(BUILD_DIR)/$(PROTO_SRC_DIR)
PROTO_BUILD_INCLUDE_DIR := $(BUILD_INCLUDE_DIR)/$(PROJECT)/proto
# NONGEN_CXX_SRCS includes all source/header files except those generated
# automatically (e.g., by proto).
NONGEN_CXX_SRCS := $(shell find \
	src/$(PROJECT) \
	include/$(PROJECT) \
	python/$(PROJECT) \
	matlab/+$(PROJECT)/private \
	examples \
	tools \
	-name "*.cpp" -or -name "*.hpp" -or -name "*.cu" -or -name "*.cuh")
LINT_SCRIPT := scripts/cpp_lint.py
LINT_OUTPUT_DIR := $(BUILD_DIR)/.lint
LINT_EXT := lint.txt
LINT_OUTPUTS := $(addsuffix .$(LINT_EXT), $(addprefix $(LINT_OUTPUT_DIR)/, $(NONGEN_CXX_SRCS)))
EMPTY_LINT_REPORT := $(BUILD_DIR)/.$(LINT_EXT)
NONEMPTY_LINT_REPORT := $(BUILD_DIR)/$(LINT_EXT)
# PY$(PROJECT)_SRC is the python wrapper for $(PROJECT)
PY$(PROJECT)_SRC := python/$(PROJECT)/_$(PROJECT).cpp
PY$(PROJECT)_SO := python/$(PROJECT)/_$(PROJECT).so
PY$(PROJECT)_HXX := include/$(PROJECT)/layers/python_layer.hpp
# MAT$(PROJECT)_SRC is the mex entrance point of matlab package for $(PROJECT)
MAT$(PROJECT)_SRC := matlab/+$(PROJECT)/private/$(PROJECT)_.cpp
ifneq ($(MATLAB_DIR),)
MAT_SO_EXT := $(shell $(MATLAB_DIR)/bin/mexext)
endif
MAT$(PROJECT)_SO := matlab/+$(PROJECT)/private/$(PROJECT)_.$(MAT_SO_EXT)

##############################
# Derive generated files
##############################
# The generated files for protocol buffers
PROTO_GEN_HEADER_SRCS := $(addprefix $(PROTO_BUILD_DIR)/, \
	$(notdir ${PROTO_SRCS:.proto=.pb.h}))
PROTO_GEN_HEADER := $(addprefix $(PROTO_BUILD_INCLUDE_DIR)/, \
	$(notdir ${PROTO_SRCS:.proto=.pb.h}))
PROTO_GEN_CC := $(addprefix $(BUILD_DIR)/, ${PROTO_SRCS:.proto=.pb.cc})
PY_PROTO_BUILD_DIR := python/$(PROJECT)/proto
PY_PROTO_INIT := python/$(PROJECT)/proto/__init__.py
PROTO_GEN_PY := $(foreach file,${PROTO_SRCS:.proto=_pb2.py}, \
	$(PY_PROTO_BUILD_DIR)/$(notdir $(file)))
# The objects corresponding to the source files
# These objects will be linked into the final shared library, so we
# exclude the tool, example, and test objects.
CXX_OBJS := $(addprefix $(BUILD_DIR)/, ${CXX_SRCS:.cpp=.o})
CU_OBJS := $(addprefix $(BUILD_DIR)/cuda/, ${CU_SRCS:.cu=.o})
PROTO_OBJS := ${PROTO_GEN_CC:.cc=.o}
OBJS := $(PROTO_OBJS) $(CXX_OBJS) $(CU_OBJS)
# tool, example, and test objects
TOOL_OBJS := $(addprefix $(BUILD_DIR)/, ${TOOL_SRCS:.cpp=.o})
TOOL_BUILD_DIR := $(BUILD_DIR)/tools
TEST_CXX_BUILD_DIR := $(BUILD_DIR)/src/$(PROJECT)/test
TEST_CU_BUILD_DIR := $(BUILD_DIR)/cuda/src/$(PROJECT)/test
TEST_CXX_OBJS := $(addprefix $(BUILD_DIR)/, ${TEST_SRCS:.cpp=.o})
TEST_CU_OBJS := $(addprefix $(BUILD_DIR)/cuda/, ${TEST_CU_SRCS:.cu=.o})
TEST_OBJS := $(TEST_CXX_OBJS) $(TEST_CU_OBJS)
GTEST_OBJ := $(addprefix $(BUILD_DIR)/, ${GTEST_SRC:.cpp=.o})
EXAMPLE_OBJS := $(addprefix $(BUILD_DIR)/, ${EXAMPLE_SRCS:.cpp=.o})
# Output files for automatic dependency generation
DEPS := ${CXX_OBJS:.o=.d} ${CU_OBJS:.o=.d} ${TEST_CXX_OBJS:.o=.d} \
	${TEST_CU_OBJS:.o=.d} $(BUILD_DIR)/${MAT$(PROJECT)_SO:.$(MAT_SO_EXT)=.d}
# tool, example, and test bins
TOOL_BINS := ${TOOL_OBJS:.o=.bin}
EXAMPLE_BINS := ${EXAMPLE_OBJS:.o=.bin}
# symlinks to tool bins without the ".bin" extension
TOOL_BIN_LINKS := ${TOOL_BINS:.bin=}
# Put the test binaries in build/test for convenience.
TEST_BIN_DIR := $(BUILD_DIR)/test
TEST_CU_BINS := $(addsuffix .testbin,$(addprefix $(TEST_BIN_DIR)/, \
		$(foreach obj,$(TEST_CU_OBJS),$(basename $(notdir $(obj))))))
TEST_CXX_BINS := $(addsuffix .testbin,$(addprefix $(TEST_BIN_DIR)/, \
		$(foreach obj,$(TEST_CXX_OBJS),$(basename $(notdir $(obj))))))
TEST_BINS := $(TEST_CXX_BINS) $(TEST_CU_BINS)
# TEST_ALL_BIN is the test binary that links caffe dynamically.
TEST_ALL_BIN := $(TEST_BIN_DIR)/test_all.testbin

##############################
# Derive compiler warning dump locations
##############################
WARNS_EXT := warnings.txt
CXX_WARNS := $(addprefix $(BUILD_DIR)/, ${CXX_SRCS:.cpp=.o.$(WARNS_EXT)})
CU_WARNS := $(addprefix $(BUILD_DIR)/cuda/, ${CU_SRCS:.cu=.o.$(WARNS_EXT)})
TOOL_WARNS := $(addprefix $(BUILD_DIR)/, ${TOOL_SRCS:.cpp=.o.$(WARNS_EXT)})
EXAMPLE_WARNS := $(addprefix $(BUILD_DIR)/, ${EXAMPLE_SRCS:.cpp=.o.$(WARNS_EXT)})
TEST_WARNS := $(addprefix $(BUILD_DIR)/, ${TEST_SRCS:.cpp=.o.$(WARNS_EXT)})
TEST_CU_WARNS := $(addprefix $(BUILD_DIR)/cuda/, ${TEST_CU_SRCS:.cu=.o.$(WARNS_EXT)})
ALL_CXX_WARNS := $(CXX_WARNS) $(TOOL_WARNS) $(EXAMPLE_WARNS) $(TEST_WARNS)
ALL_CU_WARNS := $(CU_WARNS) $(TEST_CU_WARNS)
ALL_WARNS := $(ALL_CXX_WARNS) $(ALL_CU_WARNS)

EMPTY_WARN_REPORT := $(BUILD_DIR)/.$(WARNS_EXT)
NONEMPTY_WARN_REPORT := $(BUILD_DIR)/$(WARNS_EXT)

##############################
# Derive include and lib directories
##############################
CUDA_INCLUDE_DIR := $(CUDA_DIR)/include

CUDA_LIB_DIR :=
# add
ifneq ("$(wildcard $(CUDA_DIR)/lib64)","")
CUDA_LIB_DIR += $(CUDA_DIR)/lib64
endif
CUDA_LIB_DIR += $(CUDA_DIR)/lib

INCLUDE_DIRS += $(BUILD_INCLUDE_DIR) ./src ./include
ifneq ($(CPU_ONLY), 1)
INCLUDE_DIRS += $(CUDA_INCLUDE_DIR)
LIBRARY_DIRS += $(CUDA_LIB_DIR)
LIBRARIES := cudart cublas curand
endif

LIBRARIES += glog gflags protobuf boost_system boost_filesystem m hdf5_hl hdf5

# handle IO dependencies
USE_LEVELDB ?= 1
USE_LMDB ?= 1
USE_OPENCV ?= 1

ifeq ($(USE_LEVELDB), 1)
LIBRARIES += leveldb snappy
endif
ifeq ($(USE_LMDB), 1)
LIBRARIES += lmdb
endif
ifeq ($(USE_OPENCV), 1)
LIBRARIES += opencv_core opencv_highgui opencv_imgproc
ifeq ($(OPENCV_VERSION), 3)
LIBRARIES += opencv_imgcodecs
endif
endif
PYTHON_LIBRARIES ?= boost_python python2.7
WARNINGS := -Wall -Wno-sign-compare

##############################
# Set build directories
##############################

DISTRIBUTE_DIR ?= distribute
DISTRIBUTE_SUBDIRS := $(DISTRIBUTE_DIR)/bin $(DISTRIBUTE_DIR)/lib
DIST_ALIASES := dist
ifneq ($(strip $(DISTRIBUTE_DIR)),distribute)
DIST_ALIASES += distribute
endif

ALL_BUILD_DIRS := $(sort $(BUILD_DIR) $(addprefix $(BUILD_DIR)/, $(SRC_DIRS)) \
	$(addprefix $(BUILD_DIR)/cuda/, $(SRC_DIRS)) \
	$(LIB_BUILD_DIR) $(TEST_BIN_DIR) $(PY_PROTO_BUILD_DIR) $(LINT_OUTPUT_DIR) \
	$(DISTRIBUTE_SUBDIRS) $(PROTO_BUILD_INCLUDE_DIR))

##############################
# Set directory for Doxygen-generated documentation
##############################
DOXYGEN_CONFIG_FILE ?= ./.Doxyfile
# should be the same as OUTPUT_DIRECTORY in the .Doxyfile
DOXYGEN_OUTPUT_DIR ?= ./doxygen
DOXYGEN_COMMAND ?= doxygen
# All the files that might have Doxygen documentation.
DOXYGEN_SOURCES := $(shell find \
	src/$(PROJECT) \
	include/$(PROJECT) \
	python/ \
	matlab/ \
	examples \
	tools \
	-name "*.cpp" -or -name "*.hpp" -or -name "*.cu" -or -name "*.cuh" -or \
	-name "*.py" -or -name "*.m")
DOXYGEN_SOURCES += $(DOXYGEN_CONFIG_FILE)

##############################
# Configure build
##############################

# Determine platform
UNAME := $(shell uname -s)
ifeq ($(UNAME), Linux)
LINUX := 1
else ifeq ($(UNAME), Darwin)
OSX := 1
OSX_MAJOR_VERSION := $(shell sw_vers -productVersion | cut -f 1 -d .)
OSX_MINOR_VERSION := $(shell sw_vers -productVersion | cut -f 2 -d .)
endif

# Linux
ifeq ($(LINUX), 1)
CXX ?= /usr/bin/g++
GCCVERSION := $(shell $(CXX) -dumpversion | cut -f1,2 -d.)
# older versions of gcc are too dumb to build boost with -Wuninitialized
ifeq ($(shell echo | awk '{exit $(GCCVERSION) < 4.6;}'), 1)
WARNINGS += -Wno-uninitialized
endif
# boost::thread is reasonably called boost_thread (compare OS X)
# We will also explicitly add stdc++ to the link target.
LIBRARIES += boost_thread stdc++
VERSIONFLAGS += -Wl,-soname,$(DYNAMIC_VERSIONED_NAME_SHORT) -Wl,-rpath,$(ORIGIN)/../lib
endif

# OS X:
# clang++ instead of g++
# libstdc++ for NVCC compatibility on OS X >= 10.9 with CUDA < 7.0
ifeq ($(OSX), 1)
CXX := /usr/bin/clang++
ifneq ($(CPU_ONLY), 1)
CUDA_VERSION := $(shell $(CUDA_DIR)/bin/nvcc -V | grep -o 'release [0-9.]*' | tr -d '[a-z ]')
ifeq ($(shell echo | awk '{exit $(CUDA_VERSION) < 7.0;}'), 1)
CXXFLAGS += -stdlib=libstdc++
LINKFLAGS += -stdlib=libstdc++
endif
# clang throws this warning for cuda headers
WARNINGS += -Wno-unneeded-internal-declaration
# 10.11 strips DYLD_* env vars so link CUDA (rpath is available on 10.5+)
OSX_10_OR_LATER := $(shell [ $(OSX_MAJOR_VERSION) -ge 10 ] && echo true)
OSX_10_5_OR_LATER := $(shell [ $(OSX_MINOR_VERSION) -ge 5 ] && echo true)
ifeq ($(OSX_10_OR_LATER),true)
ifeq ($(OSX_10_5_OR_LATER),true)
LDFLAGS += -Wl,-rpath,$(CUDA_LIB_DIR)
endif
endif
endif
# gtest needs to use its own tuple to not conflict with clang
COMMON_FLAGS += -DGTEST_USE_OWN_TR1_TUPLE=1
# boost::thread is called boost_thread-mt to mark multithreading on OS X
LIBRARIES += boost_thread-mt
# we need to explicitly ask for the rpath to be obeyed
ORIGIN := @loader_path
VERSIONFLAGS += -Wl,-install_name,@rpath/$(DYNAMIC_VERSIONED_NAME_SHORT) -Wl,-rpath,$(ORIGIN)/../../build/lib
else
ORIGIN := \$$ORIGIN
endif

# Custom compiler
ifdef CUSTOM_CXX
CXX := $(CUSTOM_CXX)
endif

# Static linking
ifneq (,$(findstring clang++,$(CXX)))
STATIC_LINK_COMMAND := -Wl,-force_load $(STATIC_NAME)
else ifneq (,$(findstring g++,$(CXX)))
STATIC_LINK_COMMAND := -Wl,--whole-archive $(STATIC_NAME) -Wl,--no-whole-archive
else
# The following line must not be indented with a tab, since we are not inside a target
$(error Cannot static link with the $(CXX) compiler)
endif

# Debugging
ifeq ($(DEBUG), 1)
COMMON_FLAGS += -DDEBUG -g -O0
NVCCFLAGS += -G
else
COMMON_FLAGS += -DNDEBUG -O2
endif

# cuDNN acceleration configuration.
ifeq ($(USE_CUDNN), 1)
LIBRARIES += cudnn
COMMON_FLAGS += -DUSE_CUDNN
endif

# NCCL acceleration configuration
ifeq ($(USE_NCCL), 1)
LIBRARIES += nccl
COMMON_FLAGS += -DUSE_NCCL
endif

# configure IO libraries
ifeq ($(USE_OPENCV), 1)
COMMON_FLAGS += -DUSE_OPENCV
endif
ifeq ($(USE_LEVELDB), 1)
COMMON_FLAGS += -DUSE_LEVELDB
endif
ifeq ($(USE_LMDB), 1)
COMMON_FLAGS += -DUSE_LMDB
ifeq ($(ALLOW_LMDB_NOLOCK), 1)
COMMON_FLAGS += -DALLOW_LMDB_NOLOCK
endif
endif

# CPU-only configuration
ifeq ($(CPU_ONLY), 1)
OBJS := $(PROTO_OBJS) $(CXX_OBJS)
TEST_OBJS := $(TEST_CXX_OBJS)
TEST_BINS := $(TEST_CXX_BINS)
ALL_WARNS := $(ALL_CXX_WARNS)
TEST_FILTER := --gtest_filter="-*GPU*"
COMMON_FLAGS += -DCPU_ONLY
endif

# Python layer support
ifeq ($(WITH_PYTHON_LAYER), 1)
COMMON_FLAGS += -DWITH_PYTHON_LAYER
LIBRARIES += $(PYTHON_LIBRARIES)
endif

# BLAS configuration (default = ATLAS)
BLAS ?= atlas
ifeq ($(BLAS), mkl)
# MKL
LIBRARIES += mkl_rt
COMMON_FLAGS += -DUSE_MKL
MKLROOT ?= /opt/intel/mkl
BLAS_INCLUDE ?= $(MKLROOT)/include
BLAS_LIB ?= $(MKLROOT)/lib $(MKLROOT)/lib/intel64
else ifeq ($(BLAS), open)
# OpenBLAS
LIBRARIES += openblas
else
# ATLAS
ifeq ($(LINUX), 1)
ifeq ($(BLAS), atlas)
# Linux simply has cblas and atlas
LIBRARIES += cblas atlas
endif
else ifeq ($(OSX), 1)
# OS X packages atlas as the vecLib framework
LIBRARIES += cblas
# 10.10 has accelerate while 10.9 has veclib
XCODE_CLT_VER := $(shell pkgutil --pkg-info=com.apple.pkg.CLTools_Executables | grep 'version' | sed 's/[^0-9]*\([0-9]\).*/\1/')
XCODE_CLT_GEQ_7 := $(shell [ $(XCODE_CLT_VER) -gt 6 ] && echo 1)
XCODE_CLT_GEQ_6 := $(shell [ $(XCODE_CLT_VER) -gt 5 ] && echo 1)
ifeq ($(XCODE_CLT_GEQ_7), 1)
BLAS_INCLUDE ?= /Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs/$(shell ls /Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs/ | sort | tail -1)/System/Library/Frameworks/Accelerate.framework/Versions/A/Frameworks/vecLib.framework/Versions/A/Headers
else ifeq ($(XCODE_CLT_GEQ_6), 1)
BLAS_INCLUDE ?= /System/Library/Frameworks/Accelerate.framework/Versions/Current/Frameworks/vecLib.framework/Headers/
LDFLAGS += -framework Accelerate
else
BLAS_INCLUDE ?= /System/Library/Frameworks/vecLib.framework/Versions/Current/Headers/
LDFLAGS += -framework vecLib
endif
endif
endif
INCLUDE_DIRS += $(BLAS_INCLUDE)
LIBRARY_DIRS += $(BLAS_LIB)

LIBRARY_DIRS += $(LIB_BUILD_DIR)

# Automatic dependency generation (nvcc is handled separately)
CXXFLAGS += -MMD -MP
CXXFLAGS += -std=c++11

# Complete build flags.
COMMON_FLAGS += $(foreach includedir,$(INCLUDE_DIRS),-I$(includedir))
CXXFLAGS += -pthread -fPIC $(COMMON_FLAGS) $(WARNINGS)
NVCCFLAGS += -D_FORCE_INLINES -ccbin=$(CXX) -Xcompiler -fPIC $(COMMON_FLAGS)
# mex may invoke an older gcc that is too liberal with -Wuninitialized
MATLAB_CXXFLAGS := $(CXXFLAGS) -Wno-uninitialized
LINKFLAGS += -pthread -fPIC $(COMMON_FLAGS) $(WARNINGS)

USE_PKG_CONFIG ?= 0
ifeq ($(USE_PKG_CONFIG), 1)
PKG_CONFIG := $(shell pkg-config opencv --libs)
else
PKG_CONFIG :=
endif
LDFLAGS += $(foreach librarydir,$(LIBRARY_DIRS),-L$(librarydir)) $(PKG_CONFIG) \
	$(foreach library,$(LIBRARIES),-l$(library))
PYTHON_LDFLAGS := $(LDFLAGS) $(foreach library,$(PYTHON_LIBRARIES),-l$(library))

# 'superclean' target recursively* deletes all files ending with an extension
# in $(SUPERCLEAN_EXTS) below. This may be useful if you've built older
# versions of Caffe that do not place all generated files in a location known
# to the 'clean' target.
#
# 'supercleanlist' will list the files to be deleted by make superclean.
#
# * Recursive with the exception that symbolic links are never followed, per the
# default behavior of 'find'.
SUPERCLEAN_EXTS := .so .a .o .bin .testbin .pb.cc .pb.h _pb2.py .cuo

# Set the sub-targets of the 'everything' target.
EVERYTHING_TARGETS := all py$(PROJECT) test warn lint
# Only build matcaffe as part of "everything" if MATLAB_DIR is specified.
ifneq ($(MATLAB_DIR),)
EVERYTHING_TARGETS += mat$(PROJECT)
endif

##############################
# Define build targets
##############################
.PHONY: all lib test clean docs linecount lint lintclean tools examples $(DIST_ALIASES) \
	py mat py$(PROJECT) mat$(PROJECT) proto runtest \
	superclean supercleanlist supercleanfiles warn everything

all: lib tools examples

lib: $(STATIC_NAME) $(DYNAMIC_NAME)

everything: $(EVERYTHING_TARGETS)

linecount:
	cloc --read-lang-def=$(PROJECT).cloc \
		src/$(PROJECT) include/$(PROJECT) tools examples \
		python matlab

lint: $(EMPTY_LINT_REPORT)

lintclean:
	@ $(RM) -r $(LINT_OUTPUT_DIR) $(EMPTY_LINT_REPORT) $(NONEMPTY_LINT_REPORT)

docs: $(DOXYGEN_OUTPUT_DIR)
	@ cd ./docs ; ln -sfn ../$(DOXYGEN_OUTPUT_DIR)/html doxygen

$(DOXYGEN_OUTPUT_DIR): $(DOXYGEN_CONFIG_FILE) $(DOXYGEN_SOURCES)
	$(DOXYGEN_COMMAND) $(DOXYGEN_CONFIG_FILE)

$(EMPTY_LINT_REPORT): $(LINT_OUTPUTS) | $(BUILD_DIR)
	@ cat $(LINT_OUTPUTS) > $@
	@ if [ -s "$@" ]; then \
		cat $@; \
		mv $@ $(NONEMPTY_LINT_REPORT); \
		echo "Found one or more lint errors."; \
		exit 1; \
	  fi; \
	  $(RM) $(NONEMPTY_LINT_REPORT); \
	  echo "No lint errors!";

$(LINT_OUTPUTS): $(LINT_OUTPUT_DIR)/%.lint.txt : % $(LINT_SCRIPT) | $(LINT_OUTPUT_DIR)
	@ mkdir -p $(dir $@)
	@ python $(LINT_SCRIPT) $< 2>&1 \
		| grep -v "^Done processing " \
		| grep -v "^Total errors found: 0" \
		> $@ \
		|| true

test: $(TEST_ALL_BIN) $(TEST_ALL_DYNLINK_BIN) $(TEST_BINS)

tools: $(TOOL_BINS) $(TOOL_BIN_LINKS)

examples: $(EXAMPLE_BINS)

py$(PROJECT): py

py: $(PY$(PROJECT)_SO) $(PROTO_GEN_PY)

$(PY$(PROJECT)_SO): $(PY$(PROJECT)_SRC) $(PY$(PROJECT)_HXX) | $(DYNAMIC_NAME)
	@ echo CXX/LD -o $@ $<
	$(Q)$(CXX) -shared -o $@ $(PY$(PROJECT)_SRC) \
		-o $@ $(LINKFLAGS) -l$(LIBRARY_NAME) $(PYTHON_LDFLAGS) \
		-Wl,-rpath,$(ORIGIN)/../../build/lib

mat$(PROJECT): mat

mat: $(MAT$(PROJECT)_SO)

$(MAT$(PROJECT)_SO): $(MAT$(PROJECT)_SRC) $(STATIC_NAME)
	@ if [ -z "$(MATLAB_DIR)" ]; then \
		echo "MATLAB_DIR must be specified in $(CONFIG_FILE)" \
			"to build mat$(PROJECT)."; \
		exit 1; \
	fi
	@ echo MEX $<
	$(Q)$(MATLAB_DIR)/bin/mex $(MAT$(PROJECT)_SRC) \
			CXX="$(CXX)" \
			CXXFLAGS="\$$CXXFLAGS $(MATLAB_CXXFLAGS)" \
			CXXLIBS="\$$CXXLIBS $(STATIC_LINK_COMMAND) $(LDFLAGS)" -output $@
	@ if [ -f "$(PROJECT)_.d" ]; then \
		mv -f $(PROJECT)_.d $(BUILD_DIR)/${MAT$(PROJECT)_SO:.$(MAT_SO_EXT)=.d}; \
	fi

runtest: $(TEST_ALL_BIN)
	$(TOOL_BUILD_DIR)/caffe
	$(TEST_ALL_BIN) $(TEST_GPUID) --gtest_shuffle $(TEST_FILTER)

pytest: py
	cd python; python -m unittest discover -s caffe/test

mattest: mat
	cd matlab; $(MATLAB_DIR)/bin/matlab -nodisplay -r 'caffe.run_tests(), exit()'

warn: $(EMPTY_WARN_REPORT)

$(EMPTY_WARN_REPORT): $(ALL_WARNS) | $(BUILD_DIR)
	@ cat $(ALL_WARNS) > $@
	@ if [ -s "$@" ]; then \
		cat $@; \
		mv $@ $(NONEMPTY_WARN_REPORT); \
		echo "Compiler produced one or more warnings."; \
		exit 1; \
	  fi; \
	  $(RM) $(NONEMPTY_WARN_REPORT); \
	  echo "No compiler warnings!";

$(ALL_WARNS): %.o.$(WARNS_EXT) : %.o

$(BUILD_DIR_LINK): $(BUILD_DIR)/.linked

# Create a target ".linked" in this BUILD_DIR to tell Make that the "build" link
# is currently correct, then delete the one in the OTHER_BUILD_DIR in case it
# exists and $(DEBUG) is toggled later.
$(BUILD_DIR)/.linked:
	@ mkdir -p $(BUILD_DIR)
	@ $(RM) $(OTHER_BUILD_DIR)/.linked
	@ $(RM) -r $(BUILD_DIR_LINK)
	@ ln -s $(BUILD_DIR) $(BUILD_DIR_LINK)
	@ touch $@

$(ALL_BUILD_DIRS): | $(BUILD_DIR_LINK)
	@ mkdir -p $@

$(DYNAMIC_NAME): $(OBJS) | $(LIB_BUILD_DIR)
	@ echo LD -o $@
	$(Q)$(CXX) -shared -o $@ $(OBJS) $(VERSIONFLAGS) $(LINKFLAGS) $(LDFLAGS)
	@ cd $(BUILD_DIR)/lib; rm -f $(DYNAMIC_NAME_SHORT); ln -s $(DYNAMIC_VERSIONED_NAME_SHORT) $(DYNAMIC_NAME_SHORT)

$(STATIC_NAME): $(OBJS) | $(LIB_BUILD_DIR)
	@ echo AR -o $@
	$(Q)ar rcs $@ $(OBJS)

$(BUILD_DIR)/%.o: %.cpp | $(ALL_BUILD_DIRS)
	@ echo CXX $<
	$(Q)$(CXX) $< $(CXXFLAGS) -c -o $@ 2> $@.$(WARNS_EXT) \
		|| (cat $@.$(WARNS_EXT); exit 1)
	@ cat $@.$(WARNS_EXT)

$(PROTO_BUILD_DIR)/%.pb.o: $(PROTO_BUILD_DIR)/%.pb.cc $(PROTO_GEN_HEADER) \
		| $(PROTO_BUILD_DIR)
	@ echo CXX $<
	$(Q)$(CXX) $< $(CXXFLAGS) -c -o $@ 2> $@.$(WARNS_EXT) \
		|| (cat $@.$(WARNS_EXT); exit 1)
	@ cat $@.$(WARNS_EXT)

$(BUILD_DIR)/cuda/%.o: %.cu | $(ALL_BUILD_DIRS)
	@ echo NVCC $<
	$(Q)$(CUDA_DIR)/bin/nvcc $(NVCCFLAGS) $(CUDA_ARCH) -M $< -o ${@:.o=.d} \
		-odir $(@D)
	$(Q)$(CUDA_DIR)/bin/nvcc $(NVCCFLAGS) $(CUDA_ARCH) -c $< -o $@ 2> $@.$(WARNS_EXT) \
		|| (cat $@.$(WARNS_EXT); exit 1)
	@ cat $@.$(WARNS_EXT)

$(TEST_ALL_BIN): $(TEST_MAIN_SRC) $(TEST_OBJS) $(GTEST_OBJ) \
		| $(DYNAMIC_NAME) $(TEST_BIN_DIR)
	@ echo CXX/LD -o $@ $<
	$(Q)$(CXX) $(TEST_MAIN_SRC) $(TEST_OBJS) $(GTEST_OBJ) \
		-o $@ $(LINKFLAGS) $(LDFLAGS) -l$(LIBRARY_NAME) -Wl,-rpath,$(ORIGIN)/../lib

$(TEST_CU_BINS): $(TEST_BIN_DIR)/%.testbin: $(TEST_CU_BUILD_DIR)/%.o \
	$(GTEST_OBJ) | $(DYNAMIC_NAME) $(TEST_BIN_DIR)
	@ echo LD $<
	$(Q)$(CXX) $(TEST_MAIN_SRC) $< $(GTEST_OBJ) \
		-o $@ $(LINKFLAGS) $(LDFLAGS) -l$(LIBRARY_NAME) -Wl,-rpath,$(ORIGIN)/../lib

$(TEST_CXX_BINS): $(TEST_BIN_DIR)/%.testbin: $(TEST_CXX_BUILD_DIR)/%.o \
	$(GTEST_OBJ) | $(DYNAMIC_NAME) $(TEST_BIN_DIR)
	@ echo LD $<
	$(Q)$(CXX) $(TEST_MAIN_SRC) $< $(GTEST_OBJ) \
		-o $@ $(LINKFLAGS) $(LDFLAGS) -l$(LIBRARY_NAME) -Wl,-rpath,$(ORIGIN)/../lib

# Target for extension-less symlinks to tool binaries with extension '*.bin'.
$(TOOL_BUILD_DIR)/%: $(TOOL_BUILD_DIR)/%.bin | $(TOOL_BUILD_DIR)
	@ $(RM) $@
	@ ln -s $(notdir $<) $@

$(TOOL_BINS): %.bin : %.o | $(DYNAMIC_NAME)
	@ echo CXX/LD -o $@
	$(Q)$(CXX) $< -o $@ $(LINKFLAGS) -l$(LIBRARY_NAME) $(LDFLAGS) \
		-Wl,-rpath,$(ORIGIN)/../lib

$(EXAMPLE_BINS): %.bin : %.o | $(DYNAMIC_NAME)
	@ echo CXX/LD -o $@
	$(Q)$(CXX) $< -o $@ $(LINKFLAGS) -l$(LIBRARY_NAME) $(LDFLAGS) \
		-Wl,-rpath,$(ORIGIN)/../../lib

proto: $(PROTO_GEN_CC) $(PROTO_GEN_HEADER)

$(PROTO_BUILD_DIR)/%.pb.cc $(PROTO_BUILD_DIR)/%.pb.h : \
		$(PROTO_SRC_DIR)/%.proto | $(PROTO_BUILD_DIR)
	@ echo PROTOC $<
	$(Q)protoc --proto_path=$(PROTO_SRC_DIR) --cpp_out=$(PROTO_BUILD_DIR) $<

$(PY_PROTO_BUILD_DIR)/%_pb2.py : $(PROTO_SRC_DIR)/%.proto \
		$(PY_PROTO_INIT) | $(PY_PROTO_BUILD_DIR)
	@ echo PROTOC \(python\) $<
	$(Q)protoc --proto_path=$(PROTO_SRC_DIR) --python_out=$(PY_PROTO_BUILD_DIR) $<

$(PY_PROTO_INIT): | $(PY_PROTO_BUILD_DIR)
	touch $(PY_PROTO_INIT)

clean:
	@- $(RM) -rf $(ALL_BUILD_DIRS)
	@- $(RM) -rf $(OTHER_BUILD_DIR)
	@- $(RM) -rf $(BUILD_DIR_LINK)
	@- $(RM) -rf $(DISTRIBUTE_DIR)
	@- $(RM) $(PY$(PROJECT)_SO)
	@- $(RM) $(MAT$(PROJECT)_SO)

supercleanfiles:
	$(eval SUPERCLEAN_FILES := $(strip \
			$(foreach ext,$(SUPERCLEAN_EXTS), $(shell find . -name '*$(ext)' \
			-not -path './data/*'))))

supercleanlist: supercleanfiles
	@ \
	if [ -z "$(SUPERCLEAN_FILES)" ]; then \
		echo "No generated files found."; \
	else \
		echo $(SUPERCLEAN_FILES) | tr ' ' '\n'; \
	fi

superclean: clean supercleanfiles
	@ \
	if [ -z "$(SUPERCLEAN_FILES)" ]; then \
		echo "No generated files found."; \
	else \
		echo "Deleting the following generated files:"; \
		echo $(SUPERCLEAN_FILES) | tr ' ' '\n'; \
		$(RM) $(SUPERCLEAN_FILES); \
	fi

$(DIST_ALIASES): $(DISTRIBUTE_DIR)

$(DISTRIBUTE_DIR): all py | $(DISTRIBUTE_SUBDIRS)
	# add proto
	cp -r src/caffe/proto $(DISTRIBUTE_DIR)/
	# add include
	cp -r include $(DISTRIBUTE_DIR)/
	mkdir -p $(DISTRIBUTE_DIR)/include/caffe/proto
	cp $(PROTO_GEN_HEADER_SRCS) $(DISTRIBUTE_DIR)/include/caffe/proto
	# add tool and example binaries
	cp $(TOOL_BINS) $(DISTRIBUTE_DIR)/bin
	cp $(EXAMPLE_BINS) $(DISTRIBUTE_DIR)/bin
	# add libraries
	cp $(STATIC_NAME) $(DISTRIBUTE_DIR)/lib
	install -m 644 $(DYNAMIC_NAME) $(DISTRIBUTE_DIR)/lib
	cd $(DISTRIBUTE_DIR)/lib; rm -f $(DYNAMIC_NAME_SHORT); ln -s $(DYNAMIC_VERSIONED_NAME_SHORT) $(DYNAMIC_NAME_SHORT)
	# add python - it's not the standard way, indeed...
	cp -r python $(DISTRIBUTE_DIR)/python

-include $(DEPS)

My Makefile.config is as follows:

## Refer to http://caffe.berkeleyvision.org/installation.html
# Contributions simplifying and improving our build system are welcome!

# cuDNN acceleration switch (uncomment to build with cuDNN).
USE_CUDNN := 1

# CPU-only switch (uncomment to build without GPU support).
# CPU_ONLY := 1

# uncomment to disable IO dependencies and corresponding data layers
# USE_OPENCV := 0
# USE_LEVELDB := 0
# USE_LMDB := 0

# uncomment to allow MDB_NOLOCK when reading LMDB files (only if necessary)
# You should not set this flag if you will be reading LMDBs with any
# possibility of simultaneous read and write
# ALLOW_LMDB_NOLOCK := 1

# Uncomment if you're using OpenCV 3
OPENCV_VERSION := 3

# To customize your choice of compiler, uncomment and set the following.
# N.B. the default for Linux is g++ and the default for OSX is clang++
# CUSTOM_CXX := g++

# CUDA directory contains bin/ and lib/ directories that we need.
CUDA_DIR := /usr/local/cuda
# On Ubuntu 14.04, if cuda tools are installed via
# "sudo apt-get install nvidia-cuda-toolkit" then use this instead:
# CUDA_DIR := /usr

# CUDA architecture setting: going with all of them.
# For CUDA < 6.0, comment the *_50 through *_61 lines for compatibility.
# For CUDA < 8.0, comment the *_60 and *_61 lines for compatibility.
CUDA_ARCH := -gencode arch=compute_30,code=sm_30 \
		-gencode arch=compute_35,code=sm_35 \
		-gencode arch=compute_50,code=sm_50 \
		-gencode arch=compute_52,code=sm_52 \
		-gencode arch=compute_60,code=sm_60 \
		-gencode arch=compute_61,code=sm_61 \
		-gencode arch=compute_61,code=compute_61

# BLAS choice:
# atlas for ATLAS (default)
# mkl for MKL
# open for OpenBlas
BLAS := atlas
# Custom (MKL/ATLAS/OpenBLAS) include and lib directories.
# Leave commented to accept the defaults for your choice of BLAS
# (which should work)!
# BLAS_INCLUDE := /path/to/your/blas
# BLAS_LIB := /path/to/your/blas

# Homebrew puts openblas in a directory that is not on the standard search path
# BLAS_INCLUDE := $(shell brew --prefix openblas)/include
# BLAS_LIB := $(shell brew --prefix openblas)/lib

# This is required only if you will compile the matlab interface.
# MATLAB directory should contain the mex binary in /bin.
# MATLAB_DIR := /usr/local
MATLAB_DIR := /usr/local/MATLAB/R2014a
MATLAB_INCLUDE := ./MATLAB/R2014a/toolbox/distcomp/gpu/extern/include

# NOTE: this is required only if you will compile the python interface.
# We need to be able to find Python.h and numpy/arrayobject.h.
# PYTHON_INCLUDE := /usr/include/python2.7 \
#		/usr/lib/python2.7/dist-packages/numpy/core/include
# Anaconda Python distribution is quite popular. Include path:
# Verify anaconda location, sometimes it's in root.
ANACONDA_HOME := $(HOME)/anaconda
PYTHON_INCLUDE := $(ANACONDA_HOME)/include \
		$(ANACONDA_HOME)/include/python2.7 \
		$(ANACONDA_HOME)/lib/python2.7/site-packages/numpy/core/include

# Uncomment to use Python 3 (default is Python 2)
# PYTHON_LIBRARIES := boost_python3 python3.5m
# PYTHON_INCLUDE := /usr/include/python3.5m \
#		/usr/lib/python3.5/dist-packages/numpy/core/include

# We need to be able to find libpythonX.X.so or .dylib.
# PYTHON_LIB := /usr/lib
# PYTHON_LIB := $(ANACONDA_HOME)/lib

# Homebrew installs numpy in a non standard path (keg only)
# PYTHON_INCLUDE += $(dir $(shell python -c 'import numpy.core; print(numpy.core.__file__)'))/include
# PYTHON_LIB += $(shell brew --prefix numpy)/lib

# Uncomment to support layers written in Python (will link against Python libs)
WITH_PYTHON_LAYER := 1

# Whatever else you find you need goes here.
INCLUDE_DIRS := $(PYTHON_INCLUDE) $(MATLAB_INCLUDE) /usr/local/include /usr/include/hdf5/serial
LIBRARY_DIRS := $(PYTHON_LIB) /usr/local/lib /usr/lib /usr/lib/x86_64-linux-gnu /usr/lib/x86_64-linux-gnu/hdf5/serial

# If Homebrew is installed at a non standard location (for example your home directory) and you use it for general dependencies
# INCLUDE_DIRS += $(shell brew --prefix)/include
# LIBRARY_DIRS += $(shell brew --prefix)/lib

# NCCL acceleration switch (uncomment to build with NCCL)
# https://github.com/NVIDIA/nccl (last tested version: v1.2.3-1+cuda8.0)
# USE_NCCL := 1

# Uncomment to use `pkg-config` to specify OpenCV library paths.
# (Usually not necessary -- OpenCV libraries are normally installed in one of the above $LIBRARY_DIRS.)
USE_PKG_CONFIG := 1

# N.B. both build and distribute dirs are cleared on `make clean`
BUILD_DIR := build
DISTRIBUTE_DIR := distribute

# Uncomment for debugging. Does not work on OSX due to https://github.com/BVLC/caffe/issues/171
# DEBUG := 1

# The ID of the GPU that 'make runtest' will use to run unit tests.
TEST_GPUID := 0

# enable pretty build (comment to see full commands)
Q ?= @
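Since point 1 depends on USE_CUDNN and WITH_PYTHON_LAYER being switched on, a quick check of the config file can save a failed build. A minimal sketch, assuming the "KEY := 1" form used in Makefile.config.example (other spellings such as "KEY = 1" are not recognized); check_frcnn_config is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Check that a Makefile.config enables the two switches the Faster R-CNN
# instructions ask for: USE_CUDNN and WITH_PYTHON_LAYER.
check_frcnn_config() {
  local cfg="${1:-Makefile.config}" key status=0
  [ -r "$cfg" ] || { echo "cannot read $cfg" >&2; return 1; }
  for key in USE_CUDNN WITH_PYTHON_LAYER; do
    # Only an uncommented "KEY := 1" line counts; spaces around ":=" are optional.
    if ! grep -Eq "^[[:space:]]*${key}[[:space:]]*:=[[:space:]]*1[[:space:]]*$" "$cfg"; then
      echo "$cfg: '$key := 1' is missing or commented out" >&2
      status=1
    fi
  done
  return "$status"
}
```

Run check_frcnn_config in the Caffe directory before make; it reports every missing switch rather than stopping at the first.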

My .bashrc is as follows:

# ~/.bashrc: executed by bash(1) for non-login shells.

# see /usr/share/doc/bash/examples/startup-files (in the package bash-doc)

# for examples

# If not running interactively, don't do anything

case $- in

*i*) ;;

*) return;;

esac

# don't put duplicate lines or lines starting with space in the history.

# See bash(1) for more options

HISTCONTROL=ignoreboth

# append to the history file, don't overwrite it

shopt -s histappend

# for setting history length see HISTSIZE and HISTFILESIZE in bash(1)

HISTSIZE=1000

HISTFILESIZE=2000

# check the window size after each command and, if necessary,

# update the values of LINES and COLUMNS.

shopt -s checkwinsize

# If set, the pattern "**" used in a pathname expansion context will

# match all files and zero or more directories and subdirectories.

#shopt -s globstar

# make less more friendly for non-text input files, see lesspipe(1)

[ -x /usr/bin/lesspipe ] && eval "$(SHELL=/bin/sh lesspipe)"

# set variable identifying the chroot you work in (used in the prompt below)

if [ -z "${debian_chroot:-}" ] && [ -r /etc/debian_chroot ]; then

debian_chroot=$(cat /etc/debian_chroot)

fi

# set a fancy prompt (non-color, unless we know we "want" color)

case "$TERM" in

xterm-color|*-256color) color_prompt=yes;;

esac

# uncomment for a colored prompt, if the terminal has the capability; turned

# off by default to not distract the user: the focus in a terminal window

# should be on the output of commands, not on the prompt

#force_color_prompt=yes

if [ -n "$force_color_prompt" ]; then

if [ -x /usr/bin/tput ] && tput setaf 1 >&/dev/null; then

# We have color support; assume it's compliant with Ecma-48

# (ISO/IEC-6429). (Lack of such support is extremely rare, and such

# a case would tend to support setf rather than setaf.)

color_prompt=yes

else

color_prompt=

fi

fi

if [ "$color_prompt" = yes ]; then

PS1='${debian_chroot:+($debian_chroot)}\[\033[01;32m\]\u@\h\[\033[00m\]:\[\033[01;34m\]\w\[\033[00m\]\$ '

else

PS1='${debian_chroot:+($debian_chroot)}\u@\h:\w\$ '

fi

unset color_prompt force_color_prompt

# If this is an xterm set the title to user@host:dir

case "$TERM" in

xterm*|rxvt*)

PS1="\[\e]0;${debian_chroot:+($debian_chroot)}\u@\h: \w\a\]$PS1"

;;

*)

;;

esac

# enable color support of ls and also add handy aliases

if [ -x /usr/bin/dircolors ]; then

test -r ~/.dircolors && eval "$(dircolors -b ~/.dircolors)" || eval "$(dircolors -b)"

alias ls='ls --color=auto'

#alias dir='dir --color=auto'

#alias vdir='vdir --color=auto'

alias grep='grep --color=auto'

alias fgrep='fgrep --color=auto'

alias egrep='egrep --color=auto'

fi

# colored GCC warnings and errors

#export GCC_COLORS='error=01;31:warning=01;35:note=01;36:caret=01;32:locus=01:quote=01'

# some more ls aliases

alias ll='ls -alF'

alias la='ls -A'

alias l='ls -CF'

# Add an "alert" alias for long running commands. Use like so:

# sleep 10; alert

alias alert='notify-send --urgency=low -i "$([ $? = 0 ] && echo terminal || echo error)" "$(history|tail -n1|sed -e '\''s/^\s*[0-9]\+\s*//;s/[;&|]\s*alert$//'\'')"'

# Alias definitions.

# You may want to put all your additions into a separate file like

# ~/.bash_aliases, instead of adding them here directly.

# See /usr/share/doc/bash-doc/examples in the bash-doc package.

if [ -f ~/.bash_aliases ]; then

. ~/.bash_aliases

fi

# enable programmable completion features (you don't need to enable

# this, if it's already enabled in /etc/bash.bashrc and /etc/profile

# sources /etc/bash.bashrc).

if ! shopt -oq posix; then

if [ -f /usr/share/bash-completion/bash_completion ]; then

. /usr/share/bash-completion/bash_completion

elif [ -f /etc/bash_completion ]; then

. /etc/bash_completion

fi

fi

export PATH=/usr/local/cuda/bin${PATH:+:${PATH}}

export LD_LIBRARY_PATH=/usr/local/cuda/lib64${LD_LIBRARY_PATH:+:${LD_LIBRARY_PATH}}

# added by Anaconda2 4.3.1 installer

export PATH="/home/aem/anaconda2/bin:$PATH"

export LD_LIBRARY_PATH="/home/aem/anaconda2/lib:$LD_LIBRARY_PATH"

export PYTHONPATH="/home/aem/Downloads/caffe/python:$PYTHONPATH"

export LD_LIBRARY_PATH="/lib/x86_64-linux-gnu:$LD_LIBRARY_PATH"

# export PYTHONPATH=/home/aem/Downloads/caffe-0.999/python:$PYTHONPATH

export CPLUS_INCLUDE_PATH=/usr/include/python2.7

#export LD_LIBRARY_PATH=/usr/local/cuda/lib64:/usr/local/lib:/usr/include:$LD_LIBRARY_PATH

export LD_LIBRARY_PATH=/usr/local/cuda/lib64:/usr/local/lib:$LD_LIBRARY_PATH
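You can quickly confirm that the exports at the end of the .bashrc above actually took effect. Below is a minimal bash sketch. `path_has` is a hypothetical helper, and the /home/aem/anaconda2 paths are the author's, so substitute your own.

```shell
#!/usr/bin/env bash
# Check that the directories exported at the end of ~/.bashrc are on the
# search paths. path_has is a hypothetical helper, not a standard command.

# Succeed if DIR is one entry of a colon-separated list such as $PATH.
path_has() {
  local dir="$1" list="$2"
  case ":$list:" in
    *":$dir:"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Author's paths from the .bashrc above; replace them with your own.
for d in /usr/local/cuda/bin /home/aem/anaconda2/bin; do
  if path_has "$d" "$PATH"; then
    echo "PATH has:                $d"
  else
    echo "PATH is missing:         $d"
  fi
done
for d in /usr/local/cuda/lib64 /home/aem/anaconda2/lib; do
  if path_has "$d" "${LD_LIBRARY_PATH:-}"; then
    echo "LD_LIBRARY_PATH has:     $d"
  else
    echo "LD_LIBRARY_PATH missing: $d"
  fi
done

# PATH is searched in order, so the first directory containing python wins.
command -v python || echo "python not found on PATH"
```

The .bashrc returns early for non-interactive shells. For that reason, run this script from a terminal where .bashrc has already been loaded. The script only reads the exported variables it inherits from that terminal.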

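Pay attention to the export lines at the bottom. Also, you may get an error that mentions protobuf, Google, or something along those lines. If you do, do NOT reinstall protobuf. I did, and then all kinds of errors started appearing. I eventually uninstalled it again... and wasted a lot of time. Some other problems are covered at http://www.weibo.com/p/230418721a75e50102wfig?from=page_100505_profile&wvr=6&mod=wenzhangmod. I came across it and found it quite good, so I'm keeping it here for reference. The remaining steps are: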

pip install cython

pip install easydict

sudo apt-get install python-opencv

git clone --recursive https://github.com/rbgirshick/py-faster-rcnn.git

cd <your download path>/py-faster-rcnn/lib

make

cd <your download path>/py-faster-rcnn/caffe-fast-rcnn

make

make pycaffe
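The steps above can be collected into a single script. This is only a sketch. `FRCN_ROOT`, `DRY_RUN`, `run` and `build_frcn` are hypothetical names, and `make -C DIR` stands in for `cd DIR` followed by `make`. By default the script only prints the commands; set `DRY_RUN=0` to actually run them. Your Makefile.config must already be copied into caffe-fast-rcnn before the Caffe build.

```shell
#!/usr/bin/env bash
# Sketch of the py-faster-rcnn build steps. FRCN_ROOT (clone location) and
# DRY_RUN are hypothetical variables; DRY_RUN=1 (default) only prints commands.
set -euo pipefail

FRCN_ROOT="${FRCN_ROOT:-$HOME/py-faster-rcnn}"
DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

build_frcn() {
  run pip install cython easydict
  run sudo apt-get install -y python-opencv
  # --recursive also fetches the caffe-fast-rcnn submodule.
  if [ ! -d "$FRCN_ROOT" ]; then
    run git clone --recursive https://github.com/rbgirshick/py-faster-rcnn.git "$FRCN_ROOT"
  fi
  # Cython extensions under lib/
  run make -C "$FRCN_ROOT/lib"
  # caffe-fast-rcnn: plain serial make first, then pycaffe as a separate step,
  # because the parallel build hit the missing caffe.pb.h error described earlier.
  run make -C "$FRCN_ROOT/caffe-fast-rcnn"
  run make -C "$FRCN_ROOT/caffe-fast-rcnn" pycaffe
}

build_frcn
```

Usage: `DRY_RUN=0 FRCN_ROOT=$HOME/py-faster-rcnn bash build_frcn.sh`. The dry-run default lets you review the exact commands before anything is installed or compiled.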

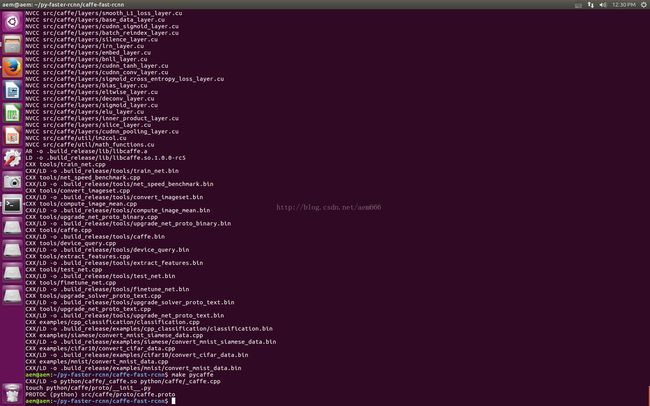

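That's all it takes. It was hard-won, so I posted a screenshot to mark the occasion. I'm almost reluctant to shut down Ubuntu, in case it stops working the next time I boot it. Next, I'm heading back to Windows to build my dataset.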

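Also, I've recently been back in mainland China and planned to set up a deep learning environment on a workstation. However... whether it was the network itself or the Great Firewall, I got to experience the terror of being at the mercy of an unbelievably slow connection. Worst of all, after waiting several hours, downloads still either failed or came down incomplete, which drove me up the wall. If you need any of these files, feel free to message me privately. When it's convenient, I can download them and send them to you. For academic research only, of course.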