Computer Vision Ch. 8: Handwritten Digit Recognition with LeNet

Table of contents

- Principles

- A brief introduction to LeNet

- Characteristics of the MNIST dataset

- Python implementation

Principles

For background on convolutional neural networks, see: https://www.cnblogs.com/chensheng-zhou/p/6380738.html

For background on the LeNet network, see: https://my.oschina.net/u/876354/blog/1632862

LeNet的简单介绍

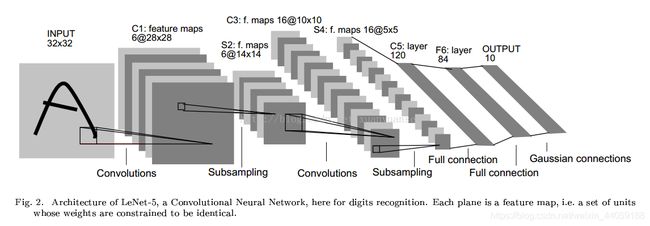

LeNet是一个最典型的卷积神经网络,由卷积层、池化层、全连接层组成。其中卷积层与池化层配合,组成多个卷积组,逐层提取特征,最终通过若干个全连接层完成分类,其结构如下图。
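To make the layer-by-layer structure concrete, here is a minimal sketch that traces how the spatial size of the feature maps changes through the two convolution groups used by the script in this article (5x5 convolutions with 'SAME' padding and stride 1, each followed by 2x2 max pooling with stride 2). The helper names `conv_same`, `pool_same`, and `trace_shapes` are illustrative assumptions and are not part of the original code.

```python
import math


def conv_same(size, stride=1):
    """Spatial output size of a convolution with 'SAME' padding."""
    return math.ceil(size / stride)


def pool_same(size, stride=2):
    """Spatial output size of a pooling layer with 'SAME' padding."""
    return math.ceil(size / stride)


def trace_shapes(input_size=28, channels=(32, 64)):
    """Return the (height, width, channels) shape after each convolution group."""
    shapes = []
    size = input_size
    for out_channels in channels:
        size = conv_same(size)  # stride-1 'SAME' convolution: size unchanged
        size = pool_same(size)  # 2x2 max pooling with stride 2: size halved
        shapes.append((size, size, out_channels))
    return shapes


shapes = trace_shapes()
flat_features = shapes[-1][0] * shapes[-1][1] * shapes[-1][2]
print(shapes)         # [(14, 14, 32), (7, 7, 64)]
print(flat_features)  # 3136, i.e. 7 * 7 * 64
```

The final value matches the `7 * 7 * 64` input width of the first fully connected layer in the script.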

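Characteristics of the MNIST dataset

The MNIST dataset is derived from data collected by the National Institute of Standards and Technology (NIST) in the United States. The training set consists of digits handwritten by 250 different people, 50% of them high school students and 50% employees of the Census Bureau. The test set contains handwritten digits in the same proportion.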
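Each MNIST sample is a 28x28 grayscale image of a single digit from 0 to 9, so a flattened image has 784 values and a label can be stored as a 10-element one-hot vector. The sketch below illustrates that label encoding; `one_hot` and `decode` are hypothetical helper names chosen for this example.

```python
def one_hot(label, num_classes=10):
    """Encode a digit label as a one-hot list, e.g. 3 -> [0, 0, 0, 1, 0, ...]."""
    vec = [0] * num_classes
    vec[label] = 1
    return vec


def decode(vec):
    """Recover the digit from a one-hot (or probability) vector via argmax."""
    return max(range(len(vec)), key=lambda i: vec[i])


print(28 * 28)             # 784 input values per flattened image
print(one_hot(3))          # [0, 0, 0, 1, 0, 0, 0, 0, 0, 0]
print(decode(one_hot(3)))  # 3
```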

Python implementation

Handwritten digit recognition

# coding:utf8
# Note: this script uses the TensorFlow 1.x API (placeholders and sessions).
import os

import cv2
import numpy as np
import tensorflow as tf

sess = tf.InteractiveSession()

def getTrain():
    train = [[], []]  # training set layout: train[0] holds the inputs, train[1] the labels
    # Read every training image; each sub-folder of train_root is named after its digit
    train_root = "mnist_train"
    labels = os.listdir(train_root)
    for label in labels:
        imgpaths = os.listdir(os.path.join(train_root, label))
        for imgname in imgpaths:
            img = cv2.imread(os.path.join(train_root, label, imgname), 0)  # 0 = read as grayscale
            array = np.array(img).flatten()  # flatten the 2-D image into a 1-D vector
            array = MaxMinNormalization(array)
            train[0].append(array)
            label_ = [0, 0, 0, 0, 0, 0, 0, 0, 0, 0]  # one-hot label
            label_[int(label)] = 1
            train[1].append(label_)
    train = shuff(train)
    return train

def getTest():
    test = [[], []]  # test set layout: test[0] holds the inputs, test[1] the labels
    # Read every test image; each sub-folder of test_root is named after its digit
    test_root = "mnist_test"
    labels = os.listdir(test_root)
    for label in labels:
        imgpaths = os.listdir(os.path.join(test_root, label))
        for imgname in imgpaths:
            img = cv2.imread(os.path.join(test_root, label, imgname), 0)  # 0 = read as grayscale
            array = np.array(img).flatten()  # flatten the 2-D image into a 1-D vector
            array = MaxMinNormalization(array)
            test[0].append(array)
            label_ = [0, 0, 0, 0, 0, 0, 0, 0, 0, 0]  # one-hot label
            label_[int(label)] = 1
            test[1].append(label_)
    test = shuff(test)
    return test[0], test[1]

def shuff(data):
    # Shuffle inputs and labels together so that each image keeps its own label
    import random
    temp = []
    for i in range(len(data[0])):
        temp.append([data[0][i], data[1][i]])
    random.shuffle(temp)
    data = [[], []]
    for tt in temp:
        data[0].append(tt[0])
        data[1].append(tt[1])
    return data

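count = 0  # start index of the next batch, shared across calls

def getBatchNum(batch_size, maxNum):
    # Return the (start, end) indices of the next batch and advance the global counter
    global count
    if count == 0:
        count = count + batch_size
        return 0, min(batch_size, maxNum)
    else:
        temp = count
        count = count + batch_size
        if min(count, maxNum) == maxNum:
            # The end of the data has been reached: wrap around and return the first batch
            count = 0
            return getBatchNum(batch_size, maxNum)
        return temp, min(count, maxNum)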

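def MaxMinNormalization(x):
    # Scale pixel values linearly to [0, 1]; a constant image maps to all zeros
    x = x.astype(np.float32)
    span = max(np.max(x) - np.min(x), 1e-12)  # avoid division by zero
    return (x - np.min(x)) / span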

# 1. Weight and bias initialization
def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.1)  # truncated normal distribution with standard deviation 0.1
    return tf.Variable(initial)

def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)

# 2. The convolution and pooling layers are reused below, so define helper functions for them as well
def conv2d(x, w):
    return tf.nn.conv2d(x, w, strides=[1, 1, 1, 1], padding='SAME')  # 'SAME' padding with stride 1 keeps the output the same size as the input

def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

iterNum = 1000  # number of passes over the training set
batch_size = 1024
print("load train dataset.")
train = getTrain()
print("load test dataset.")
test0, test1 = getTest()

# 3. Input placeholders
x = tf.placeholder(tf.float32, [None, 784], name="x-input")
y_ = tf.placeholder(tf.float32, [None, 10])  # 10 columns, one per digit class

# 4. First convolution group: one convolution followed by one max pooling
w_conv1 = weight_variable([5, 5, 1, 32])
b_conv1 = bias_variable([32])  # one bias per output channel
x_image = tf.reshape(x, [-1, 28, 28, 1])
h_conv1 = tf.nn.relu(conv2d(x_image, w_conv1) + b_conv1)  # convolve with conv2d, then apply the ReLU non-linearity
h_pool1 = max_pool_2x2(h_conv1)  # pool the convolution output

# 5. The second group has the same form as the first; stacking similar groups builds a deeper network
w_conv2 = weight_variable([5, 5, 32, 64])
b_conv2 = bias_variable([64])
h_conv2 = tf.nn.relu(conv2d(h_pool1, w_conv2) + b_conv2)  # the input is the output of the first pooling layer
h_pool2 = max_pool_2x2(h_conv2)

# 6. Densely connected layer
# Reshape the pooled tensor into a batch of flat vectors, multiply by the weights, add the bias, and apply ReLU
w_fc1 = weight_variable([7 * 7 * 64, 1024])
b_fc1 = bias_variable([1024])
h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])
h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, w_fc1) + b_fc1)

# 7. Dropout, to reduce overfitting
keep_prob = tf.placeholder(tf.float32, name="keep_prob")  # placeholder for the keep probability
h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

# 8. Output layer, ending in a softmax
w_fc2 = weight_variable([1024, 10])
b_fc2 = bias_variable([10])
y_conv = tf.nn.softmax(tf.matmul(h_fc1_drop, w_fc2) + b_fc2, name="y-pred")

# 9. Train and evaluate the model
# keep_prob controls the dropout ratio; the training accuracy is logged every 2 passes
# Clip the probabilities so that tf.log never receives 0
cross_entropy = tf.reduce_sum(-tf.reduce_sum(y_ * tf.log(tf.clip_by_value(y_conv, 1e-10, 1.0)), axis=1))
train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)
# Compare the predictions with the ground truth; this yields a vector of booleans
correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
# Cast the booleans to floats and take the mean, i.e. the fraction of correct predictions
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
# Initialize all variables; since 2017-03-02 tf.global_variables_initializer() replaces tf.initialize_all_variables()
sess.run(tf.global_variables_initializer())
# Keep only the most recent checkpoint
saver = tf.train.Saver(max_to_keep=1)
for i in range(iterNum):
    for j in range(int(len(train[1]) / batch_size)):
        imagesNum = getBatchNum(batch_size, len(train[1]))
        batch = [train[0][imagesNum[0]:imagesNum[1]], train[1][imagesNum[0]:imagesNum[1]]]
        train_step.run(feed_dict={x: batch[0], y_: batch[1], keep_prob: 0.5})
    if i % 2 == 0:
        train_accuracy = accuracy.eval(feed_dict={x: batch[0], y_: batch[1], keep_prob: 1.0})
        print("Step %d ,training accuracy %g" % (i, train_accuracy))
print("test accuracy %f " % accuracy.eval(feed_dict={x: test0, y_: test1, keep_prob: 1.0}))
# Save the model to the "save" folder, creating the folder first if needed
os.makedirs("save", exist_ok=True)
saver.save(sess, "save/model")
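The loss and accuracy in step 9 can be checked by hand on a tiny batch. The following is a minimal pure-Python sketch of the same two quantities (summed cross-entropy over one-hot labels, and the fraction of matching argmax positions). The function names and the three-class toy data are assumptions made for illustration; they are not taken from the script.

```python
import math


def cross_entropy(y_true, y_pred, eps=1e-10):
    """Summed cross-entropy between one-hot labels and predicted probabilities."""
    total = 0.0
    for true_row, pred_row in zip(y_true, y_pred):
        # Only the entry of the true class contributes, because the label is one-hot
        total += -sum(t * math.log(max(p, eps)) for t, p in zip(true_row, pred_row))
    return total


def argmax(row):
    """Index of the largest value in a list."""
    return max(range(len(row)), key=lambda i: row[i])


def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted class equals the labelled class."""
    hits = [argmax(t) == argmax(p) for t, p in zip(y_true, y_pred)]
    return sum(hits) / len(hits)


# Toy batch with 3 classes: the first sample is classified correctly, the second is not
y_true = [[1, 0, 0], [0, 1, 0]]
y_pred = [[0.7, 0.2, 0.1], [0.6, 0.3, 0.1]]
loss = cross_entropy(y_true, y_pred)  # equals -ln(0.7) - ln(0.3)
acc = accuracy(y_true, y_pred)
print(loss)
print(acc)  # 0.5
```

The `eps` floor plays the same role as clipping the softmax output before taking the logarithm: it keeps a predicted probability of exactly 0 from producing an infinite loss.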

Data visualization

import tensorflow as tf
import numpy as np
import tkinter as tk
from tkinter import filedialog
from PIL import Image, ImageTk

def creat_windows():
    win = tk.Tk()  # create the window
    sw = win.winfo_screenwidth()
    sh = win.winfo_screenheight()
    ww, wh = 400, 450
    x, y = (sw - ww) / 2, (sh - wh) / 2
    win.geometry("%dx%d+%d+%d" % (ww, wh, x, y - 40))  # center the window on the screen
    win.title('Handwritten digit recognition')  # window title
    bg1_open = Image.open("timg.jpg").resize((300, 300))
    bg1 = ImageTk.PhotoImage(bg1_open)
    canvas = tk.Label(win, image=bg1)
    canvas.pack()
    var = tk.StringVar()  # text variable that displays the prediction
    var.set('')
    tk.Label(win, textvariable=var, bg='#C1FFC1', font=('宋体', 21), width=20, height=2).pack()
    tk.Button(win, text='Choose image', width=20, height=2, bg='#FF8C00', command=lambda: main(var, canvas),
              font=('圆体', 10)).pack()
    win.mainloop()

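def main(var, canvas):
    file_path = filedialog.askopenfilename()
    if not file_path:  # the dialog was cancelled
        return
    bg1_open = Image.open(file_path).convert('L').resize((28, 28))  # grayscale, 28x28 like the training images
    pic = np.array(bg1_open, dtype=np.float32).reshape(784, )
    pic = (pic - pic.min()) / max(pic.max() - pic.min(), 1e-12)  # same min-max scaling as in training
    bg1_resize = bg1_open.resize((300, 300))
    bg1 = ImageTk.PhotoImage(bg1_resize)
    canvas.configure(image=bg1)
    canvas.image = bg1  # keep a reference so the image is not garbage-collected
    # Load the model into a fresh graph so that repeated calls do not import it twice
    with tf.Graph().as_default(), tf.Session() as sess:
        saver = tf.train.import_meta_graph('save/model.meta')  # load the model structure
        saver.restore(sess, 'save/model')  # load the model parameters
        graph = tf.get_default_graph()  # the graph that now holds the restored model
        x = graph.get_tensor_by_name("x-input:0")  # look up the input placeholder by name
        keep_prob = graph.get_tensor_by_name("keep_prob:0")
        y_conv = graph.get_tensor_by_name("y-pred:0")  # look up the softmax output tensor by name
        prediction = tf.argmax(y_conv, 1)
        predint = prediction.eval(feed_dict={x: [pic], keep_prob: 1.0}, session=sess)  # feed_dict supplies values for the placeholders
        answer = str(predint[0])
    var.set("Prediction: " + answer)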

if __name__ == "__main__":
    creat_windows()
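A model trained on normalized pixel vectors only works well when the image chosen in the GUI is prepared the same way. The sketch below shows one possible preparation step in pure Python: flatten a 2-D grayscale image, optionally invert it, and min-max scale it to [0, 1]. The function name `prepare_pixels` and the `invert` flag are hypothetical. The inversion assumes the training images follow the usual MNIST convention of light strokes on a dark background, which a scanned or photographed digit (dark ink on white paper) would not match.

```python
def prepare_pixels(image, invert=False):
    """Flatten a 2-D grayscale image (rows of 0..255 values) and scale it to [0, 1]."""
    flat = [float(v) for row in image for v in row]
    if invert:
        # Turn dark-on-light input into light-on-dark
        flat = [255.0 - v for v in flat]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        # A constant image carries no contrast; map it to all zeros
        return [0.0] * len(flat)
    return [(v - lo) / (hi - lo) for v in flat]


# A tiny 2x2 "image": white background with one black pixel
tiny = [[255, 255],
        [0, 255]]
print(prepare_pixels(tiny, invert=True))  # [0.0, 0.0, 1.0, 0.0]
```

For a real 28x28 input the returned list would have 784 entries, matching the shape of the `x-input` placeholder.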

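Summary

- The learning rate affects the quality of the model. For MNIST handwritten digit recognition, a learning rate between 0.01 and 0.05 worked best, and accuracy on the dataset can reach 99%. (The training script above passes 1e-4 to AdamOptimizer.)

- The stroke thickness of the digit in a test image also affects the result. If the strokes are too thin, some key features are lost during resizing and repeated pooling, which leads to a wrong prediction.

- When part of the digit is missing, predictions remain accurate if the missing area is small and the digit is upright; when the missing area is large, prediction errors become likely.

- Mirrored digits are usually still predicted correctly, although this depends on the specific shape of the digit.

- With extra interference in the image, digits such as "7" and "4", among others, were still recognized correctly.

- For irregularly shaped digits, the result depends on whether the training set contains enough examples of similar shapes; the features produced by the convolutions directly determine the prediction.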
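The point about thin strokes can be illustrated with a toy example. The sketch below reduces a 4x4 grid with a one-pixel-wide vertical stroke by a factor of two in two ways: averaging each 2x2 block, used here as a rough stand-in for shrinking an image, and taking the maximum of each block, as max pooling does. The helper `pool_2x2` and the toy grid are assumptions for illustration only, and real image resizing filters differ in detail.

```python
def pool_2x2(grid, reduce_fn):
    """Reduce each non-overlapping 2x2 block of a 2-D list (even height and width) to one value."""
    pooled = []
    for r in range(0, len(grid), 2):
        row = []
        for c in range(0, len(grid[0]), 2):
            block = [grid[r][c], grid[r][c + 1], grid[r + 1][c], grid[r + 1][c + 1]]
            row.append(reduce_fn(block))
        pooled.append(row)
    return pooled


def mean(values):
    """Arithmetic mean of a list of numbers."""
    return sum(values) / len(values)


# A one-pixel-wide vertical stroke
stroke = [
    [0, 1, 0, 0],
    [0, 1, 0, 0],
    [0, 1, 0, 0],
    [0, 1, 0, 0],
]
print(pool_2x2(stroke, mean))  # [[0.5, 0.0], [0.5, 0.0]]
print(pool_2x2(stroke, max))   # [[1, 0], [1, 0]]
```

Averaging halves the intensity of the thin stroke, while max pooling keeps its peak value but no longer records which column inside each block it occupied. Either way, fine detail is reduced at every stage.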