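This article describes how to do software H.264 encoding on iOS with FFmpeg+x264.

x264 is an open-source H.264/MPEG-4 AVC video encoding library. You can encode by calling the x264 API directly, or build x264 into FFmpeg and encode through the API that FFmpeg provides.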

I. Building x264

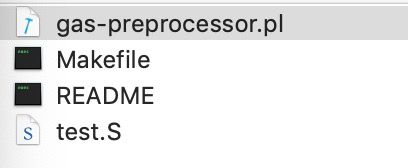

1. Download the gas-preprocessor file:

- https://github.com/libav/gas-preprocessor

- Copy gas-preprocessor.pl from the repository into the /usr/local/bin directory

- Change the file permissions: chmod 777 /usr/local/bin/gas-preprocessor.pl

2. Download the x264 source code:

- https://www.videolan.org/developers/x264.html

3. Download the x264 build script:

- https://github.com/kewlbear/x264-ios

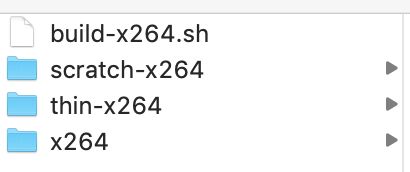

4. Put the source code and the script together:

- Create a new folder, put the build script build-x264.sh and the x264 source folder into it, and rename the x264 folder (x264-snapshot-xxxx) to "x264"

5. Change the permissions and run the script:

- sudo chmod u+x build-x264.sh

- sudo ./build-x264.sh

- During the build, the scratch-x264 and thin-x264 folders are generated

- When the build finishes, the "x264-iOS" folder is generated

6. Problems encountered during the build:

No working C compiler found.

This may be an Xcode path problem. Run the following command in the terminal:

sudo xcode-select --switch /Applications/Xcode.app/Contents/Developer/

Found no assembler, Minimum version is yasm-x.x.x

or Found no assembler, Minimum version is nasm-x.x.x

or Found yasm x.x.x.xxxx, Minimum version is yasm-x.x.x

or Found nasm x.x.x.xxxx, Minimum version is nasm-x.x.x

These errors mean that yasm/nasm is not installed or that the installed version is too old, so yasm/nasm has to be installed again.

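yasm/nasm can be installed through Homebrew. Homebrew download page: https://brew.sh/

Install yasm with Homebrew: brew install yasm

Install nasm with Homebrew: brew install nasm

If yasm/nasm is already installed at the latest version and the error above still appears, the build is probably not picking up the installed copy.

Run which yasm or which nasm to check the path in use, and copy the latest yasm/nasm into that directory. If you have no permission even with the "sudo" command, disable rootless (System Integrity Protection) with the following steps: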

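(1) Restart into recovery mode

Restart the system, hold Command + R to enter recovery mode, and open Terminal from the menu.

(2) Disable rootless

Enter csrutil disable, then restart the device.

(3) After the copy is finished, re-enable rootless

Boot into recovery mode again, enter csrutil enable, and restart.

If No working C compiler found appears while building i386

You can skip i386 altogether: remove i386 from ARCHS="arm64 x86_64 i386 armv7 armv7s" in the build script and build again, or run ./build-x264.sh arm64 x86_64 armv7 armv7s in the terminal.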

II. Building FFmpeg+x264

1. Download the FFmpeg build script:

- https://github.com/kewlbear/FFmpeg-iOS-build-script

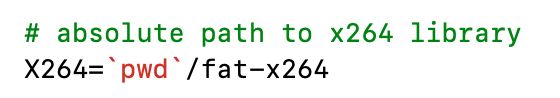

2. x264 changes

- In build-ffmpeg.sh, uncomment the line

#X264=`pwd`/fat-x264

so that it reads X264=`pwd`/fat-x264

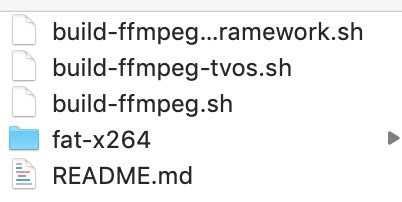

- Put the library folder produced by the x264 build into the folder that contains the FFmpeg build script, and rename it to "fat-x264"

3. Build FFmpeg

- ./build-ffmpeg.sh

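Note: if the i386 problem appears here as well, remove i386 from ARCHS in this script too:

ARCHS="arm64 armv7 x86_64"

- When the build finishes, the "FFmpeg-iOS" folder is generated in the directory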

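III. Encoding implementation

1. Import the built FFmpeg-iOS and x264-iOS into the project

2. Import system libraries

- Import system libraries such as libz.1.2.5.tbd, libbz2.1.0.tbd, and libiconv.2.4.0.tbd

For reference: https://www.jianshu.com/p/5d20e2a50faa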

3、Code

- X264Encoder.h

#import <Foundation/Foundation.h>

#import <AVFoundation/AVFoundation.h>

NS_ASSUME_NONNULL_BEGIN

@interface X264Encoder : NSObject

@property (assign, nonatomic) CGSize videoSize;

@property (assign, nonatomic) CGFloat frameRate;

@property (assign, nonatomic) CGFloat maxKeyframeInterval;

@property (assign, nonatomic) CGFloat bitrate;

@property (strong, nonatomic) NSString *profileLevel;

+ (instancetype)defaultX264Encoder;

- (instancetype)initX264Encoder:(CGSize)videoSize

frameRate:(NSUInteger)frameRate

maxKeyframeInterval:(CGFloat)maxKeyframeInterval

bitrate:(NSUInteger)bitrate

profileLevel:(NSString *)profileLevel;

- (void)encoding:(CVPixelBufferRef)pixelBuffer timestamp:(CGFloat)timestamp;

- (void)teardown;

@end

NS_ASSUME_NONNULL_END

- X264Encoder.m

#import "X264Encoder.h"

#ifdef __cplusplus

extern "C" {

#endif

#include <libavutil/opt.h>

#include <libavcodec/avcodec.h>

#include <libavformat/avformat.h>

#include <libswscale/swscale.h>

#ifdef __cplusplus

};

#endif

@implementation X264Encoder

{

AVCodecContext *pCodecCtx;

AVCodec *pCodec;

AVPacket packet;

AVFrame *pFrame;

int pictureSize;

int frameCounter;

int frameWidth;

int frameHeight;

}

+ (instancetype)defaultX264Encoder

{

X264Encoder *x264encoder = [[X264Encoder alloc] initX264Encoder:CGSizeMake(720, 1280) frameRate:30 maxKeyframeInterval:25 bitrate:1024*1000 profileLevel:@""];

return x264encoder;

}

- (instancetype)initX264Encoder:(CGSize)videoSize

frameRate:(NSUInteger)frameRate

maxKeyframeInterval:(CGFloat)maxKeyframeInterval

bitrate:(NSUInteger)bitrate

profileLevel:(NSString *)profileLevel

{

self = [super init];

if (self) {

_videoSize = videoSize;

_frameRate = frameRate;

_maxKeyframeInterval = maxKeyframeInterval;

_bitrate = bitrate;

_profileLevel = profileLevel;

[self setupEncoder];

}

return self;

}

- (void)setupEncoder

{

avcodec_register_all();

frameCounter = 0;

frameWidth = self.videoSize.width;

frameHeight = self.videoSize.height;

// Look up the encoder first so the context is allocated with its defaults

pCodec = avcodec_find_encoder(AV_CODEC_ID_H264);

if (!pCodec) {

NSLog(@"Can not find encoder!");

return;

}

// Parameters that must be set

pCodecCtx = avcodec_alloc_context3(pCodec);

if (!pCodecCtx) {

NSLog(@"Failed to allocate codec context!");

return;

}

pCodecCtx->codec_id = AV_CODEC_ID_H264;

pCodecCtx->codec_type = AVMEDIA_TYPE_VIDEO;

pCodecCtx->pix_fmt = AV_PIX_FMT_YUV420P;

pCodecCtx->width = frameWidth;

pCodecCtx->height = frameHeight;

pCodecCtx->time_base.num = 1;

pCodecCtx->time_base.den = self.frameRate;

pCodecCtx->bit_rate = self.bitrate;

pCodecCtx->gop_size = self.maxKeyframeInterval;

pCodecCtx->qmin = 10;

pCodecCtx->qmax = 51;

AVDictionary *param = NULL;

if(pCodecCtx->codec_id == AV_CODEC_ID_H264) {

av_dict_set(&param, "preset", "slow", 0);

av_dict_set(&param, "tune", "zerolatency", 0);

}

int openResult = avcodec_open2(pCodecCtx, pCodec, &param);

// Entries left in the dictionary were not consumed; free it either way

av_dict_free(&param);

if (openResult < 0) {

NSLog(@"Failed to open encoder!");

avcodec_free_context(&pCodecCtx);

return;

}

pFrame = av_frame_alloc();

pFrame->width = frameWidth;

pFrame->height = frameHeight;

pFrame->format = AV_PIX_FMT_YUV420P;

avpicture_fill((AVPicture *)pFrame, NULL, pCodecCtx->pix_fmt, pCodecCtx->width, pCodecCtx->height);

pictureSize = avpicture_get_size(pCodecCtx->pix_fmt, pCodecCtx->width, pCodecCtx->height);

av_new_packet(&packet, pictureSize);

}

- (void)encoding:(CVPixelBufferRef)pixelBuffer timestamp:(CGFloat)timestamp

{

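// Nothing to do if the encoder was never set up or was already torn down

if (!pCodecCtx || !pFrame) {

return;

}

CVPixelBufferLockBaseAddress(pixelBuffer, 0);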

UInt8 *pY = (UInt8 *)CVPixelBufferGetBaseAddressOfPlane(pixelBuffer, 0);

UInt8 *pUV = (UInt8 *)CVPixelBufferGetBaseAddressOfPlane(pixelBuffer, 1);

size_t width = CVPixelBufferGetWidth(pixelBuffer);

size_t height = CVPixelBufferGetHeight(pixelBuffer);

size_t pYBytes = CVPixelBufferGetBytesPerRowOfPlane(pixelBuffer, 0);

size_t pUVBytes = CVPixelBufferGetBytesPerRowOfPlane(pixelBuffer, 1);

UInt8 *pYUV420P = (UInt8 *)malloc(width * height * 3 / 2);

UInt8 *pU = pYUV420P + (width * height);

UInt8 *pV = pU + (width * height / 4);

for(int i = 0; i < height; i++) {

memcpy(pYUV420P + i * width, pY + i * pYBytes, width);

}

for(int j = 0; j < height / 2; j++) {

for(int i = 0; i < width / 2; i++) {

*(pU++) = pUV[i<<1];

*(pV++) = pUV[(i<<1) + 1];

}

pUV += pUVBytes;

}

pFrame->data[0] = pYUV420P;

pFrame->data[1] = pFrame->data[0] + width * height;

pFrame->data[2] = pFrame->data[1] + (width * height) / 4;

pFrame->pts = frameCounter;

int got_picture = 0;

if (!pCodecCtx) {

// Release the conversion buffer before bailing out to avoid a leak

free(pYUV420P);

CVPixelBufferUnlockBaseAddress(pixelBuffer, 0);

return;

}

int ret = avcodec_encode_video2(pCodecCtx, &packet, pFrame, &got_picture);

if(ret < 0) {

NSLog(@"Failed to encode!");

}

if (got_picture == 1) {

NSLog(@"Succeed to encode frame: %5d\tsize:%5d", frameCounter, packet.size);

frameCounter++;

av_free_packet(&packet);

}

free(pYUV420P);

CVPixelBufferUnlockBaseAddress(pixelBuffer, 0);

}

- (void)teardown

{

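// Closes the codec, frees the context, and resets the pointer to NULL

avcodec_free_context(&pCodecCtx);

// Frees the frame and resets the pointer to NULL

av_frame_free(&pFrame);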

}

@end
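The plane-splitting loops in encoding:timestamp: appear to assume that the pixel buffer is bi-planar 4:2:0 (NV12: one Y plane followed by one interleaved UV plane) and that width and height are even. Under those assumptions, the same conversion to planar YUV420P can be sketched as a standalone C function. The name nv12_to_i420 and its parameter list are hypothetical and not part of the original code or of FFmpeg.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical helper: convert one NV12 frame into tightly packed I420.
 * y_stride / uv_stride are the bytes per row of the source planes, which
 * may be larger than width because of row padding.
 * dst must hold width * height * 3 / 2 bytes; width and height are
 * assumed to be even. */
static void nv12_to_i420(const uint8_t *src_y, size_t y_stride,
                         const uint8_t *src_uv, size_t uv_stride,
                         size_t width, size_t height,
                         uint8_t *dst)
{
    uint8_t *dst_u = dst + width * height;               /* U plane follows Y */
    uint8_t *dst_v = dst_u + (width / 2) * (height / 2); /* V plane follows U */

    /* Copy the Y plane row by row, dropping any row padding. */
    for (size_t row = 0; row < height; row++) {
        memcpy(dst + row * width, src_y + row * y_stride, width);
    }

    /* De-interleave UVUV... into separate U and V planes. */
    for (size_t row = 0; row < height / 2; row++) {
        const uint8_t *uv = src_uv + row * uv_stride;
        for (size_t col = 0; col < width / 2; col++) {
            *dst_u++ = uv[2 * col];
            *dst_v++ = uv[2 * col + 1];
        }
    }
}
```

In the encoder above, such a helper would be called with pY, pYBytes, pUV, and pUVBytes, and the three data pointers of pFrame would then point at offsets 0, width * height, and width * height * 5 / 4 of the destination buffer.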

- Usage

- (void)initX264Encoder

{

dispatch_sync(encodeQueue, ^{

self->x264encoder = [X264Encoder defaultX264Encoder];

});

}

- (void)teardown

{

dispatch_sync(encodeQueue, ^{

[self->x264encoder teardown];

});

}

- (void)videoWithSampleBuffer:(CMSampleBufferRef)sampleBuffer

{

dispatch_sync(encodeQueue, ^{

if (self->isRecording) {

CVPixelBufferRef pixelBuffer = CMSampleBufferGetImageBuffer(sampleBuffer);

CMTime ptsTime = CMSampleBufferGetOutputPresentationTimeStamp(sampleBuffer);

CGFloat pts = CMTimeGetSeconds(ptsTime);

[self->x264encoder encoding:pixelBuffer timestamp:pts];

}

});

}
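The usage code passes a presentation time in seconds, while the encoder above sets pFrame->pts from a frame counter. If the pts were to be derived from the capture timestamp instead, the seconds would need to be expressed in units of the codec time base (num/den). A minimal sketch of that arithmetic, assuming non-negative timestamps; the function name seconds_to_pts is hypothetical and does not exist in FFmpeg:

```c
#include <stdint.h>

/* Hypothetical helper: express a non-negative time in seconds as a tick
 * count in a time base of tb_num / tb_den seconds per tick, rounded to
 * the nearest tick. */
static int64_t seconds_to_pts(double seconds, int tb_num, int tb_den)
{
    double ticks = seconds * (double)tb_den / (double)tb_num;
    return (int64_t)(ticks + 0.5);
}
```

With the time base of 1/30 configured by the default encoder, a timestamp of 1.0 s maps to tick 30. Capture timestamps usually do not start at zero, so in practice the first frame's timestamp would likely be subtracted before converting.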

The steps above cover the complete flow of software encoding on iOS with FFmpeg+x264.

demo:https://github.com/XuningZhai/VideoEncode_x264