Centos 7 搭建slurm

目录

背景说明

搭建步骤

slurm 常用命令

进阶(GPU)

参考文献

背景说明

Slurm 任务调度工具(前身为极简Linux资源管理工具,英文:Simple Linux Utility for Resource Management,取首字母,简写为SLURM),它是一个用于 Linux 和 Unix 内核系统的免费,开源的任务调度工具,被世界范围内的超级计算机和计算机群广泛采用。它提供了三个关键功能

- 第一,为用户分配一定时间的专享或非专享的资源(计算机节点),以供用户执行工作

- 第二,它提供了一个框架,用于启动、执行、监测在节点上运行着的任务(通常是并行的任务,例如 MPI)

- 第三,为任务队列合理地分配资源

搭建机器

10.145.67.213 master,控制机

10.134.100.133 slave1,计算机

10.141.160.105 slave2,计算机

搭建步骤

1. 如果不使用root用户装软件,需创建 slurm 账户(id必须是412),否则跳过此步

export SLURMUSER=412

groupadd -g $SLURMUSER slurm

useradd -m -c "SLURM workload manager" -d /var/lib/slurm -u $SLURMUSER -g slurm -s /bin/bash slurm

id slurm

2. slurm使用munge认证, 需安装 munge 和 munge-devel,若有源,直接安装,若没有,自行编译。在所有机器装上munge,在master机上创建证书,scp到其他节点。munge安装后,涉及的目录是munge:munge,需改组。若非root用户, 需将涉及的几个目录 chown slurm:slurm

yum install -y munge munge-devel

/usr/sbin/create-munge-key

# 将master 机生成的munge.key发送到slave机上

scp /etc/munge/munge.key xxx.xxx.xxx.xxx:/etc/munge

chown root:root /etc/munge

chown root:root /var/run/munge

chown root:root /var/lib/munge

chown root:root /var/log/munge

chown root:root /etc/munge/munge.key

3.在每台机器上,启动munge,校验是否启动成功

su slurm # 没有root权限用户执行,否则跳过此命令

munged

ps -aux | grep munged4.slurm编译配置(易错点)

本人采用源码编译, 下载地址,自行选择版本,本人选择 slurm-17.02.11.tar.bz2, 解压编译安装。

tar -bxvf slurm-17.02.11.tar.bz2

cd slurm-17.02.11

./configure

make

make install

slurmctld -V # 报没有/usr/local/etc/slurm.conf文件错误,表明安装成功

配置集群的slurm.conf文件前,首先得配置 hostname,有时候hostname其他地方也需要使用,不能随便修改,可以取别名让配置能识别。修改 /etc/hosts, 以master机为例,否则可能出现"slurmctld: error: this host (xx) not valid controller (master

or (null))", 你的 "ControlMachine" 不等于 hostname -s时就会出现此错误

[@gd.67.213 etc]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

10.145.67.213 gd.67.213 masterconf文件在slurm解压的源码目录etc下有,slurm.conf.example, cp一份为slurm.conf,修改,本人的配置如下

#

# Example slurm.conf file. Please run configurator.html

# (in doc/html) to build a configuration file customized

# for your environment.

#

#

# slurm.conf file generated by configurator.html.

#

# See the slurm.conf man page for more information.

#

ClusterName=slurm-xzy

ControlMachine=rysnc

ControlAddr=10.145.67.213

#BackupController=

#BackupAddr=

#

#SlurmUser=slurm

SlurmdUser=root

SlurmctldPort=6817

SlurmdPort=6818

AuthType=auth/munge

#JobCredentialPrivateKey=

#JobCredentialPublicCertificate=

StateSaveLocation=/var/spool/slurm/ctld

SlurmdSpoolDir=/var/spool/slurm/d

SwitchType=switch/none

MpiDefault=none

SlurmctldPidFile=/var/run/slurmctld.pid

SlurmdPidFile=/var/run/slurmd.pid

ProctrackType=proctrack/pgid

#PluginDir=

#FirstJobId=

ReturnToService=0

#MaxJobCount=

#PlugStackConfig=

#PropagatePrioProcess=

#PropagateResourceLimits=

#PropagateResourceLimitsExcept=

#Prolog=

#Epilog=

#SrunProlog=

#SrunEpilog=

#TaskProlog=

#TaskEpilog=

#TaskPlugin=

#TrackWCKey=no

#TreeWidth=50

#TmpFS=

#UsePAM=

#

# TIMERS

SlurmctldTimeout=300

SlurmdTimeout=300

InactiveLimit=0

MinJobAge=300

KillWait=30

Waittime=0

#

# SCHEDULING

SchedulerType=sched/backfill

#SchedulerAuth=

SelectType=select/linear

FastSchedule=1

#PriorityType=priority/multifactor

#PriorityDecayHalfLife=14-0

#PriorityUsageResetPeriod=14-0

#PriorityWeightFairshare=100000

#PriorityWeightAge=1000

#PriorityWeightPartition=10000

#PriorityWeightJobSize=1000

#PriorityMaxAge=1-0

#

# LOGGING

SlurmctldDebug=3

SlurmctldLogFile=/var/log/slurmctld.log

SlurmdDebug=3

SlurmdLogFile=/var/log/slurmd.log

JobCompType=jobcomp/none

#JobCompLoc=

#

# ACCOUNTING

#JobAcctGatherType=jobacct_gather/linux

#JobAcctGatherFrequency=30

#

#AccountingStorageType=accounting_storage/slurmdbd

#AccountingStorageHost=

#AccountingStorageLoc=

#AccountingStoragePass=

#AccountingStorageUser=

#

# COMPUTE NODES

NodeName=slave2 Sockets=1 Procs=1 CoresPerSocket=8 ThreadsPerCore=2 RealMemory=300 State=UNKNOWN NodeAddr=10.141.160.105

NodeName=slave1 Sockets=1 Procs=1 CoresPerSocket=1 ThreadsPerCore=1 RealMemory=300 State=UNKNOWN NodeAddr=10.134.100.133

NodeName=master Sockets=1 Procs=1 CoresPerSocket=3 ThreadsPerCore=2 RealMemory=300 State=UNKNOWN NodeAddr=10.145.67.213

PartitionName=compute Nodes=slave1,slave2 Default=YES MaxTime=INFINITE State=UP

PartitionName=control Nodes=master Default=NO MaxTime=INFINITE State=UP

说明,上面的slurm.conf末尾NodeName配置中, Procs是该节点能使用的CPU数,Sockets,CoresPerSocket和ThreadsPerCore可使用 lscpu查看配置(procs=sockets * corespersocket * threadpercore),内存自行根据机器设置。分区 PartitionName, Defult代表该机是否做运算,建议控制机设置NO不做运算,分区名自定义,不必叫 control、compute

[@gd.67.213 etc]# lscpu

Architecture: x86_64

CPU op-mode(s): 32-bit, 64-bit

Byte Order: Little Endian

CPU(s): 6

On-line CPU(s) list: 0-5

Thread(s) per core: 2

Core(s) per socket: 3

Socket(s): 1

NUMA node(s): 1

将slurm.conf,分别拷贝到/usr/local/etc ,这也是解决错误"slurmd: error: s_p_parse_file: unable to status file /usr/local/etc/slurm.conf: No such file or directory, retrying in 1sec up to 60sec"

创建slurm中部分配置的目录,/var/spool/slurm/ctld 和 /var/spool/slurm/d ,不然会报类似错 "slurmd: fatal: mkdir(/var/spool/slurm/d): No such file or directory"

将slurm.conf文件拷贝到其他slave机

5. 启动。可以先前端启动查看是否有错, 没有错在以后台运行守护进程,注意: -D是前端展示

# master 机

slurmctld -D # 前端打印显示

slurmctld -c # 后台运行

# slave 机

slurmd -D

slurmd -c在master机上可执行 sinfo查看节点信息

[@gd.67.213 etc]# sinfo

PARTITION AVAIL TIMELIMIT NODES STATE NODELIST

compute* up infinite 2 idle slave[1-2]

control up infinite 1 down* master

slurm 常用命令

- sacct:查看历史作业信息

- salloc:分配资源

- sbatch:提交批处理作业

- scancel:取消作业

- scontrol:系统控制

scontrol show job jobid :显示jobid的信息

scontrol show nodes :显示节点信息

- sinfo:查看节点与分区状态

- squeue:查看队列状态

- srun:执行作业

进阶(GPU)

是否能使用虚机(上面没GPU卡)提一个GPU的任务(比如 Tensorflow 的任务)到有GPU卡的分区上去执行。答案是可以的

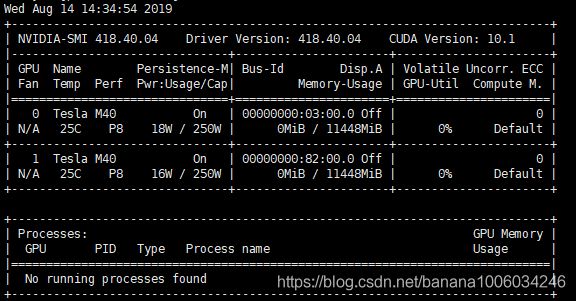

首先,slurm.conf文件添加类似如下内容。我的slave3上是 tesla的GPU,2块GPU卡,GPU信息可使用 nvidia-smi 来查看。本人如下图

GresTypes=gpu

NodeName=slave3 Sockets=2 Procs=32 CoresPerSocket=8 ThreadsPerCore=2 RealMemory=3000 Gres=gpu:tesla:2 State=UNKNOWN NodeAddr=10.135.12.29此外,slave3这个机器需配置GPU信息,编辑 /usr/local/etc/gres.conf 文件,内容如下。Type 和 File 自行修改

# Configure support for four GPUs (with MPS), plus bandwidth

Name=gpu Type=tesla File=/dev/nvidia0

Name=gpu Type=tesla File=/dev/nvidia1在虚机(是slurm里的一个节点), 编写提交脚本test.slurm,内容如下:

#!/bin/bash

#SBATCH -J TF-test

#SBATCH -p AiTf

#SBATCH -N 1

#SBATCH --cpus-per-task=1

#SBATCH -t 5:00

#SBATCH --ntasks-per-node=1

#SBATCH -o job.%j.out

#SBATCH --qos=low

#SBATCH --mail-type=end

#SBATCH [email protected]

#SBATCH --gres=gpu:tesla:2

module load python-2.7

srun python test.py

其中J 为任务名,p指定任务运行的分区,t 运行时间限制,gres指定gpu资源,module 加载py2.7,若不懂module可见本人另一篇博客 Linux environment modules

test.py 必须在slave3这台机器存在,本人的是 gpu的tensorflow例子。先在slave3机器,安装tensorflow-gpu版本,执行python test.py。可能会出现类似错误,具体修改tf.device里面的内容

tensorflow.python.framework.errors_impl.InvalidArgumentError: Cannot assign a device for operation MatMul: node MatMul (defined at test.py:12) was explicitly assigned to /device:GPU:0 but available devices are [ /job:localhost/replica:0/task:0/device:CPU:0, /job:localhost/replica:0/task:0/device:XLA_CPU:0, /job:localhost/replica:0/task:0/device:XLA_GPU:0, /job:localhost/replica:0/task:0/device:XLA_GPU:1 ]. Make sure the device specification refers to a valid device.import datetime

import tensorflow as tf

print('gpuversion')

# Creates a graph.(gpu version)

starttime2 = datetime.datetime.now()

#running

with tf.device('/job:localhost/replica:0/task:0/device:XLA_GPU:0'):

a = tf.constant([1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0,1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0], shape=[6, 9], name='a')

b = tf.constant([1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0,1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0], shape=[9, 6], name='b')

c = tf.matmul(a, b)

c = tf.matmul(c,a)

c = tf.matmul(c,b)

# Creates a session with log_device_placement set to True.

sess2 = tf.Session(config=tf.ConfigProto(log_device_placement=True))

# Runs the op.

for i in range(59999):

sess2.run(c)

print(sess2.run(c))

sess2.close()

endtime2 = datetime.datetime.now()

time2 = (endtime2 - starttime2).microseconds

print('time2:',time2)

这个执行的结果类似:

[[ 18225. 36450. 54675. 72900. 91125. 109350.]

[ 24300. 48600. 72900. 97200. 121500. 145800.]

[ 18225. 36450. 54675. 72900. 91125. 109350.]

[ 24300. 48600. 72900. 97200. 121500. 145800.]

[ 18225. 36450. 54675. 72900. 91125. 109350.]

[ 24300. 48600. 72900. 97200. 121500. 145800.]]

('time2:', 618094)

参考文献

slurm命令 https://www.jianshu.com/p/e560b19dbd3e

his host (xx) not valid controller https://slurm-dev.schedmd.narkive.com/iPJzbg5x/newbie-issue-with-new-slurm-install

搭建slurm https://blog.csdn.net/datuqiqi/article/details/50827040

http://bicmr.pku.edu.cn/~wenzw/pages/quickstart.html

slurm官方文档 https://slurm.schedmd.com/overview.html

ubuntu slurm-gpu https://gummary.github.io/2018/11/09/install-slurm/

https://www.nrel.gov/hpc/assets/pdfs/slurm-advanced-topics.pdf

http://blog.zxh.site/2018/10/14/HPC-series-13-schedule-GPU/

https://www.cuhk.edu.hk/itsc/hpc/slurm.html