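Compiling hadoop-3.2.0 on 64-bit Windows 7

1 Preparing the files

Open BUILDING.txt in the Hadoop source directory (it is worth rereading this file) and find the "Building on Windows" section. You will need: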

- Jdk1.8

- hadoop-3.2.0-src.tar: https://hadoop.apache.org/release/3.2.0.html

- apache-maven-3.6.1: http://maven.apache.org/download.cgi

- protobuf-2.5/protoc: https://github.com/protocolbuffers/protobuf/releases/tag/v2.5.0

- CMake3.15.1: https://cmake.org/download/

- Visual studio 2010: http://pan.baidu.com/s/1b06vlc (password: dui3)

- GIT2.22: http://git-scm.com/downloads

- zlib

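2 Installing the programs

- Install JDK 1.8 and configure its environment variables

- Unpack Maven and configure its environment variables

- Unpack protobuf

- Unpack CMake

- Install Visual Studio by following an installation tutorial (takes roughly 30 minutes)

I keep all the files in D:\resource (screenshots below)

Visual Studio is installed in D:\Program Files (x86)\Microsoft Visual Studio 10.0

Git is installed in D:\Program Files\Git

Environment variable configuration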

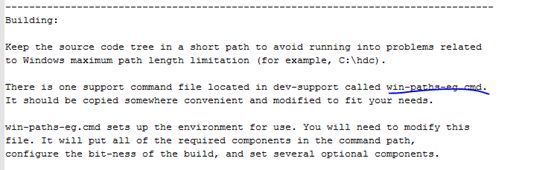

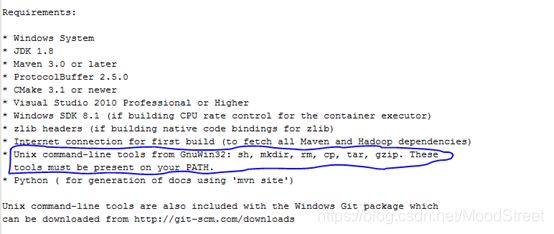

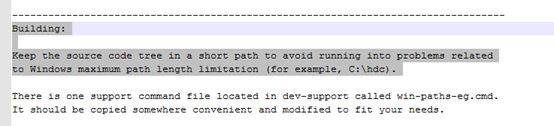

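After reading a lot of articles online, I found that the build steps differ from version to version, and so does the environment variable setup. Then I noticed a passage in BUILDING.txt about exactly this.

It says that the src/dev-support directory contains a file named win-paths-eg.cmd that can be used to set the environment variables. It also says this file will be needed often, so save a copy of it somewhere else.

- Edit win-paths-eg.cmd and change the environment variable settings in it (see the screenshot below)

- Save it, then run win-paths-eg.cmd to set the environment variables

- Open a cmd window and change to the Hadoop source directory (D:\resource\hadoop-3.2.0-src)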

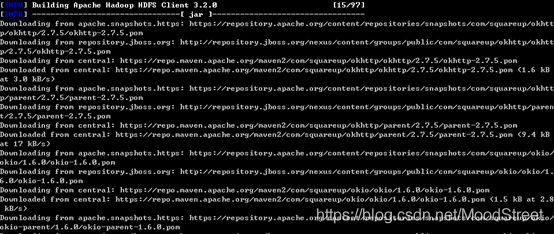

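Run mvn package -Pdist,native-win -DskipTests -Dtar

The downloads started. It was already 1 a.m., and installing Visual Studio had taken forever, so I didn't wait for them to finish and just went to bed, hahaha.

3 Error analysis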

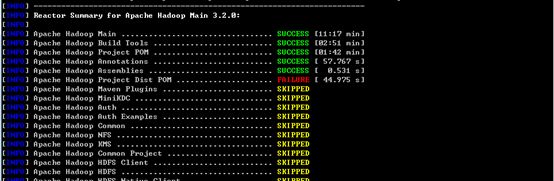

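I got up early to check the result: BUILD FAILURE. My heart sank.

- Command execution failed.: Cannot run program "bash" (Unix tools are missing; the Chinese text at the end of the log line reads "The system cannot find the file specified")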

[ERROR] Failed to execute goal org.codehaus.mojo:exec-maven-plugin:1.3.1:exec (pre-dist) on project hadoop-project-dist: Command execution failed.: Cannot run program “bash” (in directory “D:\resource\hadoop-3.2.0-src\hadoop-project-dist\target”): CreateProcess error=2, 系统找不到指定的文件。

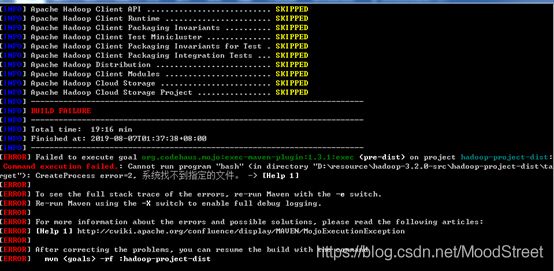

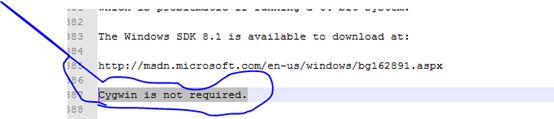

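Online I found someone who had hit a similar error. They said Unix tools were missing and that Cygwin had to be installed, but BUILDING.txt already states that Cygwin is not required.

Looking at the error again: bash cannot be run, so perhaps a component is missing. Then I remembered that BUILDING.txt, mentioned at the start of this post, describes installing Unix command-line tools.

My breakfast happened to be ready, so breakfast first. Here's a picture, hahaha.

Breakfast done, back to it.

Open BUILDING.txt: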

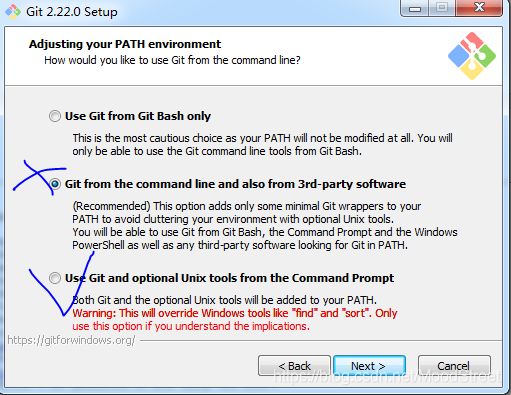

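I wondered whether I had skipped these command-line tools when installing Git, so I opened the Git installer again and went through it step by step until I reached the relevant screen.

Originally I had picked the second option, which does not install the Unix tools. I reinstalled and this time picked the option below it, "Use Git and optional Unix tools".

I ran the package command again and got the same error. Maybe reinstalling on top of the existing Git without uninstalling it first was the problem, so I uninstalled Git and installed it again. Same error.

Then I wondered whether it was an environment variable problem, so I opened the environment variable settings and looked at the Git-related entries.

bash lives in D:\Program Files\Git\bin, which was not on the PATH, so no wonder the build failed. The existing entries had been added by win-paths-eg.cmd. I manually added GIT_HOME=D:\Program Files\Git and put %GIT_HOME%\bin on the PATH.

Still the same error, and my neck was hurting... Then it occurred to me that the cmd window I already had open might not have picked up the new environment variables. I opened a new cmd window and ran the build again: no error, and the downloads started again... I watched for a few minutes, saw no errors, and left it alone.

Solution: reinstall Git with the Unix tools option, make sure %GIT_HOME%\bin is on the PATH, and run the command in a new cmd window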
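Since several failures in this post come down to a tool not being resolvable on the PATH, it can help to check that before starting a build that runs for hours. The following is a minimal sketch, not something from BUILDING.txt: the tool list is my own assumption based on the errors described here, and `find_missing_tools` is a hypothetical helper name.

```python
import shutil

# Assumed list of command-line tools, taken from the errors in this post
# (bash, protoc, cmake) plus the basic Java/Maven toolchain.
REQUIRED_TOOLS = ["java", "mvn", "protoc", "cmake", "bash"]


def find_missing_tools(tools, path=None):
    """Return the tools that cannot be resolved on the given PATH string.

    With path=None, shutil.which searches the PATH of the current process.
    """
    return [tool for tool in tools if shutil.which(tool, path=path) is None]


if __name__ == "__main__":
    missing = find_missing_tools(REQUIRED_TOOLS)
    if missing:
        print("Not found on PATH:", ", ".join(missing))
    else:
        print("All tools resolved on PATH.")
```

Run it from a newly opened cmd window: a process only sees the environment it was started with, which is why the old window kept failing after the PATH was changed.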

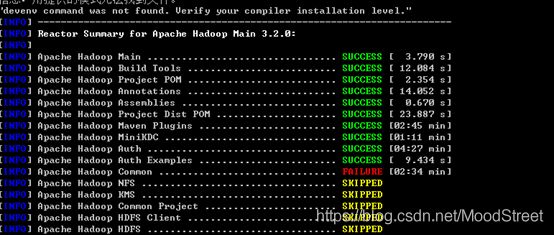

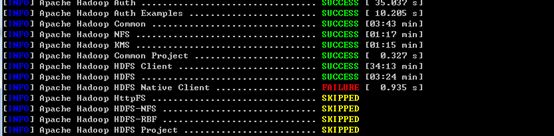

I was busy for a while, and when I came back there was an error again... a different one this time.

- exec (convert-ms-winutils) on project hadoop-common: Command execution failed

[ERROR] Failed to execute goal org.codehaus.mojo:exec-maven-plugin:1.3.1:exec (convert-ms-winutils) on project hadoop-common: Command execution failed.: Process exited with an error: 1 (Exit value: 1) -> [Help 1]

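By evening I was sleepy and didn't feel like writing code. Remembering that Hadoop still hadn't compiled, I decided on the spot to go back and deal with it.

I tried many fixes from the web and none of them worked. One after another I added these entries to the environment variables:

%ZLIB_HOME%;C:\Windows\Microsoft.NET\Framework\v4.0.30319;

Neither helped. Then I read an article that said to run the command from the Visual Studio command prompt, Visual Studio x64 Cross Tools Command Prompt (2010). Sure enough, that error went away (I am not sure which step actually fixed it), and a new one appeared: protoc.exe could not be found. I copied protoc.exe into C:\Windows\System32 (thinking that if this failed I would give up and just run my programs on the server every time) and ran the build again, which produced the following error:

- Error running javah command: Error executing command line (command line too long)

Solution: shorten the paths of the Hadoop source and of the Maven repository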

[ERROR] Failed to execute goal org.codehaus.mojo:native-maven-plugin:1.0-alpha-8:javah (default) on project hadoop-common: Error running javah command: Error executing command line. Exit code:1 ->

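I suspected that long paths were making the final command too long, since BUILDING.txt says right at the start to put the source in a fairly short path.

Fine, I'll change the path. What else could I do...

Moving the Hadoop source to the root of drive D still didn't work... Looking closely at the command being executed, I saw that it included many jars from the Maven repository, so I tried shortening the Maven repository path as well. Sure enough, the command-line-too-long error was gone.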
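To see why the repository path matters so much, note that its prefix is repeated once for every jar on the classpath. The sketch below is only a rough illustration under stated assumptions: it assumes the javah command is launched through cmd.exe, whose documented command-line limit is 8191 characters, and the jar count and path lengths are made-up numbers, not measurements from this build. `classpath_length` and `fits_cmd_limit` are hypothetical helper names.

```python
# Documented maximum command-line length for cmd.exe, in characters.
CMD_MAX_CHARS = 8191


def classpath_length(repo_root, relative_jars, sep=";"):
    """Length of a classpath string where every jar is prefixed with repo_root."""
    root = repo_root.rstrip("\\")
    entries = [root + "\\" + jar for jar in relative_jars]
    return len(sep.join(entries))


def fits_cmd_limit(length, limit=CMD_MAX_CHARS):
    """True if a command line of this length stays within the limit."""
    return length <= limit


if __name__ == "__main__":
    # Hypothetical numbers: 100 jars whose repository-relative paths
    # are 60 characters each.
    jars = ["g" * 56 + ".jar"] * 100
    for root in (r"C:\Users\someuser\.m2\repository", r"D:\m2"):
        n = classpath_length(root, jars)
        print(root, n, "fits" if fits_cmd_limit(n) else "too long")
```

The classpath is only part of the full command, so this is a lower bound. The post does not say how the repository was moved; Maven's `localRepository` setting in settings.xml and the `maven.repo.local` property are the usual ways to do it.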

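Then a different error showed up:

- exec-maven-plugin:1.3.1:exec (compile-ms-winutils) on project hadoop-common: Command execution failed.

A winutils step had already failed earlier; this time the cause was a cvtres.exe version problem.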

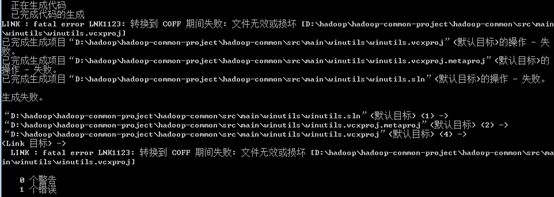

Another winutils error, but with a different cause this time (LNK1123, whose Chinese message means "failure during conversion to COFF: file invalid or corrupt"):

LINK : fatal error LNK1123: 转换到 COFF 期间失败: 文件无效或损坏 [D:\hadoop\hadoop-common-project\hadoop-common\src\main\winutils\winutils.vcxproj]

[ERROR] Failed to execute goal org.codehaus.mojo:exec-maven-plugin:1.3.1:exec (compile-ms-winutils) on project hadoop-common: Command execution failed.: Process exited with an error: 1 (Exit value: 1) -> [Help 1]

There really are plenty of experts online.

After comparing version numbers, I simply renamed D:\Program Files (x86)\Microsoft Visual Studio 10.0\VC\bin\cvtres.exe on my machine and ran the build again.
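Before renaming anything, it may help to see how many copies of cvtres.exe the build can actually reach. This is a small sketch, assuming the problem is that more than one copy is visible through the PATH; it only lists the directories and does not compare version numbers. `find_all_on_path` is a hypothetical helper name.

```python
import os


def find_all_on_path(filename, path):
    """Return every directory in the PATH string, in search order, that contains filename."""
    hits = []
    for directory in path.split(os.pathsep):
        directory = directory.strip('"')  # PATH entries are sometimes quoted
        if directory and os.path.isfile(os.path.join(directory, filename)):
            hits.append(directory)
    return hits


if __name__ == "__main__":
    for directory in find_all_on_path("cvtres.exe", os.environ.get("PATH", "")):
        print(directory)
```

If more than one directory is printed, the first one listed is the copy a plain `cvtres.exe` call would resolve to.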

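My computer is a bit sluggish right now, because it seems to have actually started compiling...

1 a.m. again. Not waiting up; off to bed...

- An Ant BuildException has occured: Execute failed: java.io.IOException: Cannot run program "cmake" (in directory "D:\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native"): CreateProcess error=2 (the system cannot find the file specified)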

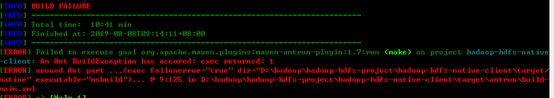

Morning again, and the build had failed...

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-antrun-plugin:1.7:run (make) on project hadoop-hdfs-native-client: An Ant BuildException has occured: Execute failed: java.io.IOException: Cannot run program “cmake” (in directory “D:\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native”): CreateProcess error=2, 系统找不到指定的文件。

[ERROR] around Ant part …… @ 5:123 in D:\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\antrun\build-main.xml

[ERROR] -> [Help 1]

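Solution: install CMake, add it to the environment variables, and check that the installation actually works (for example, cmake --version should run in a new cmd window)

Breakfast...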

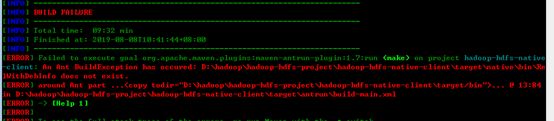

- An Ant BuildException has occured: exec returned: 1

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-antrun-plugin:1.7:run (make) on project hadoop-hdfs-native-client: An Ant BuildException has occured: exec returned: 1

[ERROR] around Ant part …… @ 9:125 in D:\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\antrun\build-main.xml

A fix suggested by someone online:

After applying it, the build reported a different error.

- hadoop-hdfs-native-client\target\native\bin\RelWithDebInfo does not exist.

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-antrun-plugin:1.7:run (make) on project hadoop-hdfs-native-client: An Ant BuildException has occured: D:\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\bin\RelWithDebInfo does not exist.

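[ERROR] around Ant part …… @ 13:84 in D:\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\antrun\build-main.xml

I tried many things without success. On a whim, I copied the whole \bin\RelWithDebInfo directory from D:\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\target into the \native directory and ran the build again. To my surprise, the error was gone...

It ran for more than an hour and I thought it would succeed this time, but it failed again.

The failure came while compiling YARN, with a whole pile of errors: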

[INFO] BUILD FAILURE

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 01:15 h

[INFO] Finished at: 2019-08-08T14:18:26+08:00

[INFO] ------------------------------------------------------------------------

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-compiler-plugin:3.1:compile (default-compile) on project hadoop-yarn-server-timelineservice-hbase-common: Compilation failure: Compilation failure:

[ERROR] /D:/hadoop/hadoop-yarn-project/hadoop-yarn/hadoop-yarn-server/hadoop-yarn-server-timelineservice-hbase/hadoop-yarn-server-timelineservice-hbase-common/src/main/java/org/apache/hadoop/yarn/server/timelineservice/storage/flow/FlowRunRowKey.java:[25,68] 找不到符号

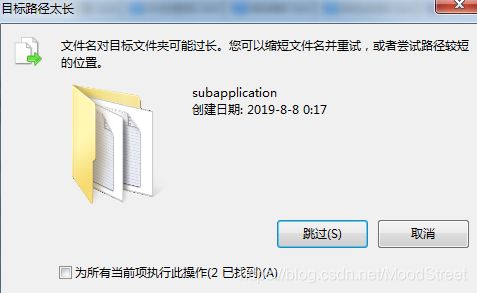

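The message 找不到符号 means "cannot find symbol". I opened that class and found that a class file it depends on was missing. At first I assumed the source itself was broken, but comparing against a freshly extracted copy showed that the file does exist there. This one was my own doing: when I changed the Hadoop path earlier, I copied the source tree to the new directory and some files were lost along the way (see the screenshots below), which I simply ignored at the time... I recommend extracting the archive directly into the directory you are going to build in. (Sigh, I don't know whether some of the earlier problems were caused by this too. Blood, sweat and tears.)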

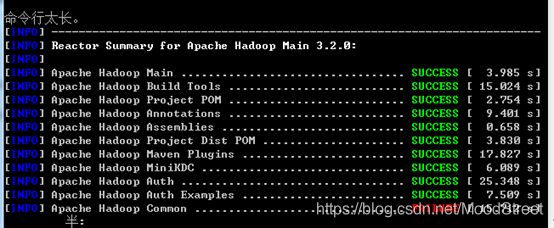

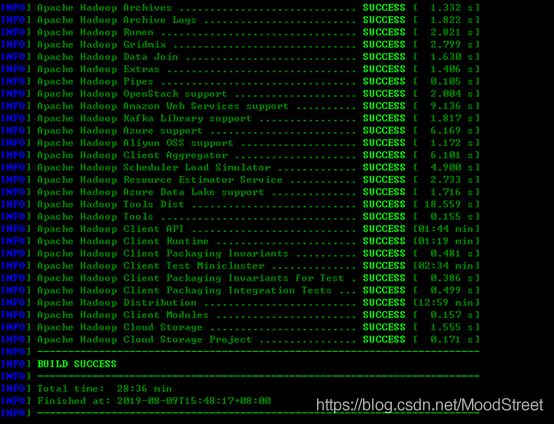
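A copy that silently drops files is easy to detect by comparing the two trees. The sketch below is one way to do that, not what was done at the time; `relative_files` and `missing_in_copy` are hypothetical helper names.

```python
import os


def relative_files(root):
    """Collect every file under root as a path relative to root."""
    found = set()
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            found.add(os.path.relpath(os.path.join(dirpath, name), root))
    return found


def missing_in_copy(original_root, copy_root):
    """Files present under original_root but absent under copy_root, sorted."""
    return sorted(relative_files(original_root) - relative_files(copy_root))
```

For the directories in this post the call would look like `missing_in_copy(r"D:\resource\hadoop-3.2.0-src", r"D:\hadoop")`. Comparing against a freshly extracted archive gives the cleanest result, because a tree that has already been built also contains generated target directories.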

Finally, persistence paid off.

Environment variable configuration on my machine

%JAVA_HOME%\bin;%HADOOP_HOME%\bin;%HADOOP_HOME%\sbin;%M2_HOME%\bin;%GIT_HOME%\bin;%ANT_HOME%\bin;%CMAKE_HOME%\bin;C:\Windows\Microsoft.NET\Framework\v4.0.30319;

The win-paths-eg.cmd file (it holds the same settings as the PATH; I recommend putting all of them in the PATH)

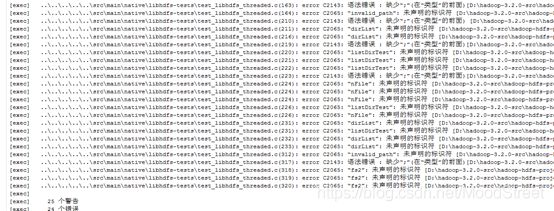

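You thought that was the end? No. Since the build had already succeeded, I went ahead and extracted a fresh copy of the source and packaged it again. Error 3.6 above (the exec returned: 1 failure in hadoop-hdfs-native-client) came back. This time I did not modify the pom file, so here is the error output: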

hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\ALL_BUILD.vcxproj”(默认目标)的操作 - 失败

[exec] 生成失败。

[exec] …\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(163): error C2143: 语法错误 : 缺少“;”(在“类型”的前面)[D:\hadoop-3.2.0-src\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] …\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(164): error C2065: “invalid_path”: 未声明的标识符 [D:\hadoop-3.2.0-src\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] …\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(210): error C2143: 语法错误 : 缺少“;”(在“类型”的前面) [D:\hadoop-3.2.0-src\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] …\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(211): error C2065: “dirList”: 未声明的标识符 [D:\hadoop-3.2.0-src\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

。。。。。。

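A C language problem. This was really testing me, but I gritted my teeth and pressed on.

Following the path in the log, I found test_libhdfs_threaded.c and searched Baidu for the error. The answer was that variables must be declared before the code that uses them: the Visual Studio 2010 C compiler follows C89 rules, so a declaration cannot come after a statement in the same block. That is what C2143 (syntax error: missing ";" before "type") and C2065 (undeclared identifier) are complaining about here. So I moved the declarations of all the variables named in the errors to the top of their functions.

I ran mvn package -Pdist,native-win -DskipTests -Dmaven.javadoc.skip=true -Dtar again, and it finished perfectly.

Summary

Looking back, the whole build is not that hard: read BUILDING.txt carefully and follow its steps. All sorts of problems will come up, but the ability to solve problems is exactly what we need, haha.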