9. transforms Image Augmentation (Part 2)

Contents

- 1. Image transforms
  - 1.1 Pad
  - 1.2 ColorJitter
  - 1.3 RandomGrayscale
  - 1.4 RandomAffine
  - 1.5 RandomErasing
  - 1.6 transforms.Lambda
- 2. Operations on transforms
  - 2.1 transforms.RandomChoice
  - 2.2 transforms.RandomApply
  - 2.3 transforms.RandomOrder
- 3. Custom transforms
  - 3.1 Requirements for a custom transform
  - 3.2 Salt-and-pepper noise
  - 3.3 Data augmentation in practice
- 4. Code for this section
  - 4.1 Image transforms
  - 4.2 Salt-and-pepper noise code
  - 4.3 Data augmentation experiment with the RMB classification model

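1. Image transforms

1.1 Pad

Purpose: pad the borders of an image.

- padding: the padding size
  - a single value a: pad a pixels on all four sides
  - (a, b): pad a pixels on the left and right, and b pixels on the top and bottom
  - (a, b, c, d): pad a, b, c, d pixels on the left, top, right, and bottom respectively
- padding_mode: the padding mode, one of constant, edge, reflect, or symmetric
- fill: the pixel value used when padding_mode is constant, given as (R, G, B) or a single gray value

transforms.Pad(padding, fill=0, padding_mode='constant')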
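To make the three forms of padding concrete, here is a small pure-Python sketch that computes the output size after padding. The helper name padded_size is hypothetical (it is not part of torchvision); it only mirrors the left/top/right/bottom rules listed above.

```python
def padded_size(width, height, padding):
    """Return (new_width, new_height) after padding, following the rules above.

    `padded_size` is an illustrative helper, not a torchvision function.
    """
    if isinstance(padding, int):
        left = top = right = bottom = padding
    elif len(padding) == 2:
        left = right = padding[0]   # a: left and right
        top = bottom = padding[1]   # b: top and bottom
    elif len(padding) == 4:
        left, top, right, bottom = padding
    else:
        raise ValueError("padding must be an int, a 2-tuple, or a 4-tuple")
    return width + left + right, height + top + bottom


print(padded_size(224, 224, 32))               # (288, 288)
print(padded_size(224, 224, (8, 64)))          # (240, 352)
print(padded_size(224, 224, (8, 16, 32, 64)))  # (264, 304)
```

The three calls use the same padding values as the commented-out Pad examples in section 4.1, applied to a 224x224 image.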

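1.2 ColorJitter

Purpose: randomly adjust brightness, contrast, saturation, and hue.

- brightness: brightness factor
  - a single value a: the factor is sampled from [max(0, 1-a), 1+a]
  - (a, b): the factor is sampled from [a, b]
- contrast: contrast factor, specified the same way as brightness
- saturation: saturation factor, specified the same way as brightness
- hue: hue shift
  - a single value a: sampled from [-a, a], where 0 <= a <= 0.5
  - (a, b): sampled from [a, b], where -0.5 <= a <= b <= 0.5

transforms.ColorJitter(brightness=0, contrast=0, saturation=0, hue=0)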
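The sampling intervals above can be written as a short function. This is a sketch of the rules as stated in this section, not torchvision's code; jitter_range and its center and lower_bound parameters are names made up for illustration.

```python
import random


def jitter_range(value, center=1.0, lower_bound=0.0):
    """Return the (low, high) interval a jitter factor is sampled from.

    Hypothetical helper: a number a becomes
    [max(lower_bound, center - a), center + a]; a pair (a, b) is used as given.
    """
    if isinstance(value, (int, float)):
        return max(lower_bound, center - value), center + value
    low, high = value
    return low, high


# brightness, contrast and saturation are centered on 1 and clipped at 0
brightness = jitter_range(0.5)
# hue is centered on 0 and limited to [-0.5, 0.5]
hue = jitter_range(0.3, center=0.0, lower_bound=-0.5)

print(brightness)  # (0.5, 1.5)
print(hue)         # (-0.3, 0.3)

# one random brightness factor drawn from the interval
factor = random.uniform(*brightness)
```

A factor of 1 leaves brightness, contrast, or saturation unchanged, which is why those intervals are centered on 1 while the hue interval is centered on 0.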

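1.3 RandomGrayscale

Purpose: convert an image to grayscale with a given probability.

- p: probability that the image is converted to grayscale (RandomGrayscale)
- num_output_channels: number of output channels, which must be 1 or 3 (Grayscale)

RandomGrayscale takes only p and keeps the channel count of its input, as in the call RandomGrayscale(p=0.9) in section 4.3. The channel count is set explicitly only for Grayscale.

transforms.RandomGrayscale(p=0.1)

transforms.Grayscale(num_output_channels=1)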
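As a rough illustration of "convert with probability p", the sketch below works on a list of (R, G, B) tuples instead of a real image. The function names are hypothetical, and the integer luma formula (ITU-R 601-2 weights) is an assumption about the conversion; rounding in Pillow or torchvision may differ by one gray level.

```python
import random


def to_gray(pixel, num_output_channels=1):
    """Convert one (R, G, B) pixel to gray using ITU-R 601-2 luma weights."""
    r, g, b = pixel
    luma = (r * 299 + g * 587 + b * 114) // 1000
    return (luma,) * num_output_channels


def random_grayscale(pixels, p=0.1, rng=random):
    """With probability p, convert every pixel to 3-channel gray (r == g == b).

    Otherwise the input is returned unchanged.
    """
    if rng.random() < p:
        return [to_gray(px, num_output_channels=3) for px in pixels]
    return pixels


image = [(255, 0, 0), (0, 255, 0), (0, 0, 255)]
print(random_grayscale(image, p=1.0))  # [(76, 76, 76), (149, 149, 149), (29, 29, 29)]
print(random_grayscale(image, p=0.0))  # [(255, 0, 0), (0, 255, 0), (0, 0, 255)]
```

Keeping three identical channels is what lets a grayscale image pass through a network and a Normalize step that both expect three channels.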

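1.4 RandomAffine

Purpose: apply an affine transformation to the image. An affine transformation is a two-dimensional linear transformation built from five elementary transforms: rotation, translation, scaling, shear, and flipping.

- degrees: rotation angle range
- translate: translation range, e.g. (a, b), where a applies to the width and b to the height. The horizontal shift dx satisfies -img_width * a < dx < img_width * a, and the vertical shift dy satisfies -img_height * b < dy < img_height * b
- scale: scaling factor range, e.g. (a, b); the factor is sampled from [a, b]
- shear: shear angle range, in degrees
- fillcolor: fill color for the area outside the transformed image

RandomAffine(degrees, translate=None, scale=None, shear=None, resample=False, fillcolor=0)

Note: recent torchvision releases rename resample to interpolation and fillcolor to fill. The code in this section uses the older names.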
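The following pure-Python sketch shows how the translation range is sampled and how shear, scaling, rotation, and translation combine on a single point. The function names, the center-relative coordinates, and the order in which the elementary transforms are applied are all assumptions made for illustration; torchvision's internal matrix conventions may differ.

```python
import math
import random


def sample_translation(img_width, img_height, translate, rng=random):
    """Sample (dx, dy) with |dx| <= img_width * a and |dy| <= img_height * b."""
    a, b = translate
    max_dx, max_dy = img_width * a, img_height * b
    return rng.uniform(-max_dx, max_dx), rng.uniform(-max_dy, max_dy)


def affine_point(x, y, angle_deg=0.0, translate=(0.0, 0.0), scale=1.0, shear_deg=0.0):
    """Apply shear (along x), scaling, rotation, then translation to one point.

    Coordinates are relative to the image center. The ordering is an
    assumption chosen for this sketch.
    """
    # shear along the x axis
    x, y = x + math.tan(math.radians(shear_deg)) * y, y
    # uniform scaling
    x, y = scale * x, scale * y
    # rotation by angle_deg (counter-clockwise in a y-up coordinate system)
    t = math.radians(angle_deg)
    x, y = math.cos(t) * x - math.sin(t) * y, math.sin(t) * x + math.cos(t) * y
    # translation
    return x + translate[0], y + translate[1]


dx, dy = sample_translation(224, 224, (0.2, 0.2))
print(abs(dx) <= 224 * 0.2, abs(dy) <= 224 * 0.2)  # True True
print(affine_point(10.0, 10.0, scale=0.7))          # approximately (7.0, 7.0)
```

With scale=0.7 the point moves to 0.7 times its distance from the center, which matches the visibly smaller image produced by RandomAffine(degrees=0, scale=(0.7, 0.7)) in section 4.1.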

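1.5 RandomErasing

Purpose: randomly erase (occlude) a rectangular region of the image. RandomErasing operates on tensors, so it is placed after ToTensor, as in the code in section 4.1.

- p: probability of applying the operation
- scale: range of the erased area, as a proportion of the image area
- ratio: aspect-ratio range of the erased region
- value: pixel value used to fill the erased region, e.g. (R, G, B)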
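To show how scale and ratio interact, the sketch below samples an erase rectangle and fills it in a nested-list "image". It is written from the parameter descriptions above; the function names, the log-uniform aspect-ratio sampling, and the retry count of 10 are assumptions for illustration and are not copied from torchvision.

```python
import math
import random


def sample_erase_box(img_h, img_w, scale=(0.02, 0.33), ratio=(0.3, 3.3),
                     rng=random, attempts=10):
    """Return (top, left, h, w) of a region to erase, or None if no box fits."""
    area = img_h * img_w
    for _ in range(attempts):
        erase_area = area * rng.uniform(scale[0], scale[1])
        # sample the aspect ratio log-uniformly so that ratios below and
        # above 1 are equally likely
        aspect = math.exp(rng.uniform(math.log(ratio[0]), math.log(ratio[1])))
        h = int(round(math.sqrt(erase_area * aspect)))
        w = int(round(math.sqrt(erase_area / aspect)))
        if 0 < h < img_h and 0 < w < img_w:
            top = rng.randint(0, img_h - h)
            left = rng.randint(0, img_w - w)
            return top, left, h, w
    return None


def erase(img, box, value=0):
    """Overwrite the pixels inside box with value (modifies img in place)."""
    top, left, h, w = box
    for i in range(top, top + h):
        for j in range(left, left + w):
            img[i][j] = value
    return img


img = [[1] * 32 for _ in range(32)]
box = sample_erase_box(32, 32)
if box is not None:
    erase(img, box, value=0)
```

Because h * w is approximately the sampled area and h / w is approximately the sampled aspect ratio, scale controls how much of the image is hidden and ratio controls how elongated the hidden patch is.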

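1.6 transforms.Lambda

Purpose: wrap a user-defined lambda function as a transform.

- lambd: an anonymous (lambda) function, written as lambda [arg1 [, arg2, ..., argn]] : expression

transforms.Lambda(lambd)

Example: TenCrop returns a group of crops rather than a single image, so a Lambda is used to convert each crop to a tensor and stack them:

transforms.TenCrop(200, vertical_flip=True),
transforms.Lambda(lambda crops: torch.stack([transforms.ToTensor()(crop) for crop in crops])),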
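The idea behind Lambda fits in a few lines. The class below is a minimal stand-in written for illustration, not torchvision's implementation, and the string "crops" merely play the role of the images that TenCrop would return.

```python
class Lambda:
    """Minimal stand-in for transforms.Lambda: wraps any callable as a transform."""

    def __init__(self, lambd):
        if not callable(lambd):
            raise TypeError("lambd must be callable")
        self.lambd = lambd

    def __call__(self, img):
        return self.lambd(img)


# Stand-in for TenCrop's output: a tuple of crops (strings here, for illustration)
crops = ("crop_a", "crop_b", "crop_c")

# Apply a per-crop operation to every element, as the ToTensor example does
to_upper = Lambda(lambda cs: [c.upper() for c in cs])
print(to_upper(crops))  # ['CROP_A', 'CROP_B', 'CROP_C']
```

The wrapped function receives whatever the previous transform returned, so it is the natural place to handle outputs that are not a single image.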

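2. Operations on transforms

2.1 transforms.RandomChoice

Purpose: pick one transform at random from a list of transforms and apply it.

transforms.RandomChoice([transforms1, transforms2, transforms3])

2.2 transforms.RandomApply

Purpose: apply a group of transforms with a given probability. Either the whole group runs or none of it does.

transforms.RandomApply([transforms1, transforms2, transforms3], p=0.5)

2.3 transforms.RandomOrder

Purpose: apply a group of transforms in a randomly shuffled order.

transforms.RandomOrder([transforms1, transforms2, transforms3])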
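The difference between the three wrappers is easiest to see side by side. The classes below are simplified pure-Python sketches written from the descriptions above (the rng parameter is an addition for illustration), and the "transforms" are toy functions that append a letter to a string.

```python
import random


class RandomChoice:
    """Apply exactly one transform, picked uniformly at random."""

    def __init__(self, transforms, rng=random):
        self.transforms, self.rng = transforms, rng

    def __call__(self, img):
        return self.rng.choice(self.transforms)(img)


class RandomApply:
    """Apply the whole list with probability p, otherwise return the input."""

    def __init__(self, transforms, p=0.5, rng=random):
        self.transforms, self.p, self.rng = transforms, p, rng

    def __call__(self, img):
        if self.rng.random() >= self.p:
            return img
        for t in self.transforms:
            img = t(img)
        return img


class RandomOrder:
    """Apply every transform once, in a freshly shuffled order."""

    def __init__(self, transforms, rng=random):
        self.transforms, self.rng = transforms, rng

    def __call__(self, img):
        order = list(range(len(self.transforms)))
        self.rng.shuffle(order)
        for i in order:
            img = self.transforms[i](img)
        return img


# Toy transforms: each appends one letter
ops = [lambda s: s + "a", lambda s: s + "b", lambda s: s + "c"]

print(RandomChoice(ops)(""))        # one of "a", "b", "c"
print(RandomApply(ops, p=0.5)(""))  # "abc" or ""
print(RandomOrder(ops)(""))         # a permutation of "abc"
```

RandomChoice and RandomOrder always change the input, whereas RandomApply may leave it untouched.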

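3. Custom transforms

3.1 Requirements for a custom transform

- It accepts exactly one argument and returns exactly one value
- Its input must match the output of the upstream transform, and its output must match the input of the downstream transform

Both requirements follow from how Compose calls each transform in turn:

class Compose(object):
    def __call__(self, img):
        for t in self.transforms:
            img = t(img)
        return img

To pass additional parameters, implement the transform as a class and store them in __init__:

class YourTransforms(object):
    def __init__(self, ...):
        ...

    def __call__(self, img):
        ...
        return img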

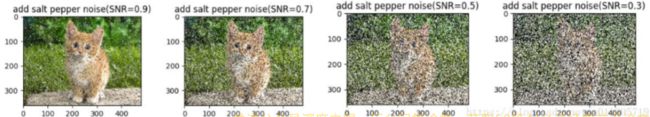
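Here is a runnable version of that pattern. Scale and Clip are hypothetical transforms invented for this example, and the "image" is a plain list of numbers so that the snippet needs no external libraries.

```python
class Compose:
    """Chain transforms: each one receives the previous one's return value."""

    def __init__(self, transforms):
        self.transforms = transforms

    def __call__(self, img):
        for t in self.transforms:
            img = t(img)
        return img


class Scale:
    """Hypothetical transform: multiply every value by factor."""

    def __init__(self, factor):
        self.factor = factor          # extra parameter stored at construction

    def __call__(self, img):          # one argument in, one value out
        return [v * self.factor for v in img]


class Clip:
    """Hypothetical transform: clamp every value to [low, high]."""

    def __init__(self, low, high):
        self.low, self.high = low, high

    def __call__(self, img):
        return [min(max(v, self.low), self.high) for v in img]


pipeline = Compose([Scale(2), Clip(0, 255)])
print(pipeline([10, 100, 200]))  # [20, 200, 255]
```

Parameters go through __init__ because Compose only ever calls t(img), so __call__ has no room for extra arguments.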

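3.2 Salt-and-pepper noise

Salt-and-pepper noise sets randomly chosen pixels to white (salt) or black (pepper). As a custom transform it takes two parameters, snr and p, through __init__:

class AddPepperNoise(object):
    def __init__(self, snr, p):
        self.snr = snr
        self.p = p

    def __call__(self, img):
        # the salt-and-pepper noise is added here (full implementation in section 4.2)
        return img

Compose then calls it like any other transform:

class Compose(object):
    def __call__(self, img):
        for t in self.transforms:
            img = t(img)
        return img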
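Before the full PIL/NumPy version in section 4.2, the core idea can be sketched on a grayscale image stored as nested lists. The function add_pepper_noise and its rng parameter are illustrative names; snr is interpreted, as in section 4.2, as the fraction of pixels left unchanged.

```python
import random


def add_pepper_noise(img, snr, rng=random):
    """Return a copy of img (rows of gray values) with salt-and-pepper noise.

    Each pixel is kept with probability snr, set to 255 (salt) with
    probability (1 - snr) / 2, and set to 0 (pepper) with probability
    (1 - snr) / 2.
    """
    noise = (1.0 - snr) / 2.0
    out = []
    for row in img:
        new_row = []
        for value in row:
            kind = rng.choices(("keep", "salt", "pepper"),
                               weights=(snr, noise, noise))[0]
            if kind == "salt":
                value = 255
            elif kind == "pepper":
                value = 0
            new_row.append(value)
        out.append(new_row)
    return out


gray = [[128] * 8 for _ in range(8)]
noisy = add_pepper_noise(gray, snr=0.9)
changed = sum(v != 128 for row in noisy for v in row)
print(changed, "of 64 pixels were replaced")
```

With snr=0.9, roughly one pixel in ten is replaced on average, half of them white and half black.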

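3.3 Data augmentation in practice

Principle: make the training set resemble the test set more closely. Choose transforms according to how the two differ:

- Spatial position: translation
- Color: grayscale conversion, color jitter
- Shape: affine transforms
- Context: occlusion (erasing), padding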

4. Code for this section

4.1 Image transforms

import os
import numpy as np
import torch
import random
from matplotlib import pyplot as plt
from torch.utils.data import DataLoader
import torchvision.transforms as transforms
from tools.my_dataset import RMBDataset
from tools.common_tools import transform_invert


def set_seed(seed=1):
    random.seed(seed)
    np.random.seed(seed)
    torch.manual_seed(seed)
    torch.cuda.manual_seed(seed)


set_seed(1)  # set the random seed

# hyperparameters
MAX_EPOCH = 10
BATCH_SIZE = 1
LR = 0.01
log_interval = 10
val_interval = 1
rmb_label = {"1": 0, "100": 1}

# ============================ step 1/5 data ============================
split_dir = os.path.join("..", "..", "data", "rmb_split")
train_dir = os.path.join(split_dir, "train")
valid_dir = os.path.join(split_dir, "valid")

norm_mean = [0.485, 0.456, 0.406]
norm_std = [0.229, 0.224, 0.225]

# Uncomment one transform at a time to see its effect
train_transform = transforms.Compose([
    transforms.Resize((224, 224)),

    # 1 Pad
    # transforms.Pad(padding=32, fill=(255, 0, 0), padding_mode='constant'),
    # transforms.Pad(padding=(8, 64), fill=(255, 0, 0), padding_mode='constant'),
    # transforms.Pad(padding=(8, 16, 32, 64), fill=(255, 0, 0), padding_mode='constant'),
    # transforms.Pad(padding=(8, 16, 32, 64), fill=(255, 0, 0), padding_mode='symmetric'),

    # 2 ColorJitter
    # transforms.ColorJitter(brightness=0.5),
    # transforms.ColorJitter(contrast=0.5),
    # transforms.ColorJitter(saturation=0.5),
    # transforms.ColorJitter(hue=0.3),

    # 3 Grayscale
    # transforms.Grayscale(num_output_channels=3),

    # 4 Affine
    # transforms.RandomAffine(degrees=30),
    # transforms.RandomAffine(degrees=0, translate=(0.2, 0.2), fillcolor=(255, 0, 0)),
    # transforms.RandomAffine(degrees=0, scale=(0.7, 0.7)),
    # transforms.RandomAffine(degrees=0, shear=(0, 0, 0, 45)),
    # transforms.RandomAffine(degrees=0, shear=90, fillcolor=(255, 0, 0)),

    # 5 Erasing
    # transforms.ToTensor(),
    # transforms.RandomErasing(p=1, scale=(0.02, 0.33), ratio=(0.3, 3.3), value=(254/255, 0, 0)),
    # transforms.RandomErasing(p=1, scale=(0.02, 0.33), ratio=(0.3, 3.3), value='1234'),

    # 1 RandomChoice
    # transforms.RandomChoice([transforms.RandomVerticalFlip(p=1), transforms.RandomHorizontalFlip(p=1)]),

    # 2 RandomApply
    # transforms.RandomApply([transforms.RandomAffine(degrees=0, shear=45, fillcolor=(255, 0, 0)),
    #                         transforms.Grayscale(num_output_channels=3)], p=0.5),

    # 3 RandomOrder
    # transforms.RandomOrder([transforms.RandomRotation(15),
    #                         transforms.Pad(padding=32),
    #                         transforms.RandomAffine(degrees=0, translate=(0.01, 0.1), scale=(0.9, 1.1))]),

    transforms.ToTensor(),
    transforms.Normalize(norm_mean, norm_std),
])

valid_transform = transforms.Compose([
    transforms.Resize((224, 224)),
    transforms.ToTensor(),
    transforms.Normalize(norm_mean, norm_std)
])

# build the dataset instances
train_data = RMBDataset(data_dir=train_dir, transform=train_transform)
valid_data = RMBDataset(data_dir=valid_dir, transform=valid_transform)

# build the DataLoaders
train_loader = DataLoader(dataset=train_data, batch_size=BATCH_SIZE, shuffle=True)
valid_loader = DataLoader(dataset=valid_data, batch_size=BATCH_SIZE)

# ============================ step 5/5 training ============================
# No model is trained here; the loop only displays each transformed image
for epoch in range(MAX_EPOCH):
    for i, data in enumerate(train_loader):

        inputs, labels = data  # B C H W

        img_tensor = inputs[0, ...]  # C H W
        img = transform_invert(img_tensor, train_transform)
        plt.imshow(img)
        plt.show()
        plt.pause(0.5)
        plt.close()
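The helper transform_invert is imported from tools.common_tools, which is not shown in this section. Assuming that its main job is to undo Normalize so that the tensor can be displayed as an image, the per-channel arithmetic would look like the sketch below. The functions normalize and denormalize are illustrative names, not the contents of that module.

```python
def normalize(pixel, mean, std):
    """Per-channel (x - mean) / std, the operation Normalize applies."""
    return [(x - m) / s for x, m, s in zip(pixel, mean, std)]


def denormalize(pixel, mean, std):
    """Inverse operation: x * std + mean, then rescale from [0, 1] to 0..255."""
    return [int(round((x * s + m) * 255)) for x, m, s in zip(pixel, mean, std)]


norm_mean = [0.485, 0.456, 0.406]
norm_std = [0.229, 0.224, 0.225]

original = [255, 128, 0]                 # one RGB pixel
as_float = [v / 255 for v in original]   # the [0, 1] range produced by ToTensor
restored = denormalize(normalize(as_float, norm_mean, norm_std), norm_mean, norm_std)
print(restored)  # [255, 128, 0]
```

Without this step, plt.imshow would receive normalized values, many of them negative, and the displayed colors would not match the transformed image.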

4.2 Salt-and-pepper noise code

import os
import numpy as np
import torch
import random
import torchvision.transforms as transforms
from PIL import Image
from matplotlib import pyplot as plt
from torch.utils.data import DataLoader
from tools.my_dataset import RMBDataset
from tools.common_tools import transform_invert


def set_seed(seed=1):
    random.seed(seed)
    np.random.seed(seed)
    torch.manual_seed(seed)
    torch.cuda.manual_seed(seed)


set_seed(1)  # set the random seed

# hyperparameters
MAX_EPOCH = 10
BATCH_SIZE = 1
LR = 0.01
log_interval = 10
val_interval = 1
rmb_label = {"1": 0, "100": 1}

class AddPepperNoise(object):
    """Add salt-and-pepper noise.

    Args:
        snr (float): signal-to-noise ratio, used here as the fraction of pixels left unchanged
        p (float): probability of applying the transform
    """

    def __init__(self, snr, p=0.9):
        # both parameters must be floats (the original check used `or`,
        # which let a non-float snr through)
        assert isinstance(snr, float) and isinstance(p, float)
        self.snr = snr
        self.p = p

    def __call__(self, img):
        """
        Args:
            img (PIL Image): PIL Image
        Returns:
            PIL Image: PIL image.
        """
        if random.uniform(0, 1) < self.p:
            img_ = np.array(img).copy()
            h, w, c = img_.shape
            signal_pct = self.snr
            noise_pct = (1 - self.snr)
            # per pixel: 0 = keep, 1 = salt, 2 = pepper
            mask = np.random.choice((0, 1, 2), size=(h, w, 1), p=[signal_pct, noise_pct/2., noise_pct/2.])
            # same decision for every channel of a pixel
            mask = np.repeat(mask, c, axis=2)
            img_[mask == 1] = 255  # salt noise
            img_[mask == 2] = 0    # pepper noise
            return Image.fromarray(img_.astype('uint8')).convert('RGB')
        else:
            return img

# ============================ step 1/5 data ============================
split_dir = os.path.join("..", "..", "data", "rmb_split")
train_dir = os.path.join(split_dir, "train")
valid_dir = os.path.join(split_dir, "valid")

norm_mean = [0.485, 0.456, 0.406]
norm_std = [0.229, 0.224, 0.225]

train_transform = transforms.Compose([
    transforms.Resize((224, 224)),
    AddPepperNoise(0.9, p=0.5),
    transforms.ToTensor(),
    transforms.Normalize(norm_mean, norm_std),
])

valid_transform = transforms.Compose([
    transforms.Resize((224, 224)),
    transforms.ToTensor(),
    transforms.Normalize(norm_mean, norm_std)
])

# build the dataset instances
train_data = RMBDataset(data_dir=train_dir, transform=train_transform)
valid_data = RMBDataset(data_dir=valid_dir, transform=valid_transform)

# build the DataLoaders
train_loader = DataLoader(dataset=train_data, batch_size=BATCH_SIZE, shuffle=True)
valid_loader = DataLoader(dataset=valid_data, batch_size=BATCH_SIZE)

# ============================ step 5/5 training ============================
# No model is trained here; the loop only displays each transformed image
for epoch in range(MAX_EPOCH):
    for i, data in enumerate(train_loader):

        inputs, labels = data  # B C H W

        img_tensor = inputs[0, ...]  # C H W
        img = transform_invert(img_tensor, train_transform)
        plt.imshow(img)
        plt.show()
        plt.pause(0.5)
        plt.close()

4.3 Data augmentation experiment with the RMB classification model

import os
import random
import numpy as np
import torch
import torch.nn as nn
from torch.utils.data import DataLoader
import torchvision.transforms as transforms
import torch.optim as optim
from matplotlib import pyplot as plt
from model.lenet import LeNet
from tools.my_dataset import RMBDataset
from tools.common_tools import transform_invert


def set_seed(seed=1):
    random.seed(seed)
    np.random.seed(seed)
    torch.manual_seed(seed)
    torch.cuda.manual_seed(seed)


set_seed()  # set the random seed
rmb_label = {"1": 0, "100": 1}

# hyperparameters
MAX_EPOCH = 10
BATCH_SIZE = 16
LR = 0.01
log_interval = 10
val_interval = 1

# ============================ step 1/5 data ============================

split_dir = os.path.join("..", "..", "data", "rmb_split")
train_dir = os.path.join(split_dir, "train")
valid_dir = os.path.join(split_dir, "valid")

norm_mean = [0.485, 0.456, 0.406]
norm_std = [0.229, 0.224, 0.225]

train_transform = transforms.Compose([
    transforms.Resize((32, 32)),
    transforms.RandomCrop(32, padding=4),
    transforms.RandomGrayscale(p=0.9),
    transforms.ToTensor(),
    transforms.Normalize(norm_mean, norm_std),
])

valid_transform = transforms.Compose([
    transforms.Resize((32, 32)),
    transforms.ToTensor(),
    transforms.Normalize(norm_mean, norm_std),
])

# build the dataset instances
train_data = RMBDataset(data_dir=train_dir, transform=train_transform)
valid_data = RMBDataset(data_dir=valid_dir, transform=valid_transform)

# build the DataLoaders
train_loader = DataLoader(dataset=train_data, batch_size=BATCH_SIZE, shuffle=True)
valid_loader = DataLoader(dataset=valid_data, batch_size=BATCH_SIZE)

# ============================ step 2/5 model ============================

net = LeNet(classes=2)
net.initialize_weights()

# ============================ step 3/5 loss function ============================
criterion = nn.CrossEntropyLoss()  # choose the loss function

# ============================ step 4/5 optimizer ============================
optimizer = optim.SGD(net.parameters(), lr=LR, momentum=0.9)  # choose the optimizer
scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=10, gamma=0.1)  # learning-rate decay schedule

# ============================ step 5/5 training ============================
train_curve = list()
valid_curve = list()

for epoch in range(MAX_EPOCH):

    loss_mean = 0.
    correct = 0.
    total = 0.

    net.train()
    for i, data in enumerate(train_loader):

        # forward
        inputs, labels = data
        outputs = net(inputs)

        # backward
        optimizer.zero_grad()
        loss = criterion(outputs, labels)
        loss.backward()

        # update weights
        optimizer.step()

        # track classification accuracy
        _, predicted = torch.max(outputs.data, 1)
        total += labels.size(0)
        correct += (predicted == labels).squeeze().sum().numpy()

        # log training progress
        loss_mean += loss.item()
        train_curve.append(loss.item())
        if (i+1) % log_interval == 0:
            loss_mean = loss_mean / log_interval
            print("Training:Epoch[{:0>3}/{:0>3}] Iteration[{:0>3}/{:0>3}] Loss: {:.4f} Acc:{:.2%}".format(
                epoch, MAX_EPOCH, i+1, len(train_loader), loss_mean, correct / total))
            loss_mean = 0.

    scheduler.step()  # update the learning rate

    # validate the model
    if (epoch+1) % val_interval == 0:

        correct_val = 0.
        total_val = 0.
        loss_val = 0.
        net.eval()
        with torch.no_grad():
            for j, data in enumerate(valid_loader):
                inputs, labels = data
                outputs = net(inputs)
                loss = criterion(outputs, labels)

                _, predicted = torch.max(outputs.data, 1)
                total_val += labels.size(0)
                correct_val += (predicted == labels).squeeze().sum().numpy()

                loss_val += loss.item()

            # record the mean loss per batch so it is comparable with the training curve
            loss_val_epoch = loss_val / len(valid_loader)
            valid_curve.append(loss_val_epoch)
            # report validation accuracy (the original printed the training accuracy here)
            print("Valid:\t Epoch[{:0>3}/{:0>3}] Iteration[{:0>3}/{:0>3}] Loss: {:.4f} Acc:{:.2%}".format(
                epoch, MAX_EPOCH, j+1, len(valid_loader), loss_val_epoch, correct_val / total_val))

train_x = range(len(train_curve))
train_y = train_curve

train_iters = len(train_loader)
# valid_curve holds one loss per validation epoch, so convert its x positions to iterations
valid_x = np.arange(1, len(valid_curve)+1) * train_iters*val_interval
valid_y = valid_curve

plt.plot(train_x, train_y, label='Train')
plt.plot(valid_x, valid_y, label='Valid')

plt.legend(loc='upper right')
plt.ylabel('loss value')
plt.xlabel('Iteration')
plt.show()

# ============================ inference ============================

BASE_DIR = os.path.dirname(os.path.abspath(__file__))
test_dir = os.path.join(BASE_DIR, "test_data")

test_data = RMBDataset(data_dir=test_dir, transform=valid_transform)
valid_loader = DataLoader(dataset=test_data, batch_size=1)

for i, data in enumerate(valid_loader):
    # forward
    inputs, labels = data
    outputs = net(inputs)
    _, predicted = torch.max(outputs.data, 1)

    rmb = 1 if predicted.numpy()[0] == 0 else 100

    img_tensor = inputs[0, ...]  # C H W
    img = transform_invert(img_tensor, train_transform)
    plt.imshow(img)
    plt.title("LeNet got {} Yuan".format(rmb))
    plt.show()
    plt.pause(0.5)
    plt.close()
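Two small calculations in this listing are worth checking by hand: the learning rate that StepLR produces, and the x positions used to plot the validation curve. The sketch below reproduces both in plain Python. The function names are made up for illustration, and step_lr is the closed-form rule for a step schedule rather than a call into PyTorch.

```python
def step_lr(base_lr, epoch, step_size, gamma):
    """Learning rate used during epoch (0-based) under a step-decay schedule."""
    return base_lr * gamma ** (epoch // step_size)


def valid_x_positions(num_valid_points, train_iters, val_interval):
    """Iteration index at which each validation loss was recorded."""
    return [k * train_iters * val_interval for k in range(1, num_valid_points + 1)]


# Settings from the listing: LR = 0.01, step_size = 10, gamma = 0.1, MAX_EPOCH = 10
print([step_lr(0.01, epoch, 10, 0.1) for epoch in (0, 9)])  # [0.01, 0.01]

# Example: 3 validation points, 10 training iterations per epoch, val_interval = 1
print(valid_x_positions(3, 10, 1))  # [10, 20, 30]
```

Because step_size equals MAX_EPOCH, every training epoch runs at the initial learning rate; the first decay would only take effect from epoch 10 onward. The second function is the same arithmetic as the valid_x line, which places each validation loss at the end of the epoch in which it was measured.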