FFmpeg Audio/Video Development: Capturing Camera Frames and Sound-Card Audio with Qt on Android, Linux, and Windows and Encoding Them to MP4 with FFmpeg (v1.0)

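I. Environment

Linux: Ubuntu 18.04, 64-bit

Android: Android 8.1 / 9.0

Windows: Windows 10

Qt version: 5.12

FFmpeg version: 4.2.2

NDK: r19c

Sound cards: audio can be captured from the built-in sound card of a Windows 10 PC, from the sound card of a Logitech USB webcam, and from the built-in sound card of an Android phone.

Cameras: phone camera, Logitech USB webcam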

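II. Requirements and Implementation

Qt code captures frames from the camera and audio from the sound card, and FFmpeg encodes them into a video file that is saved locally.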

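The audio source for the video can be selected in the code (the #if switch in get_audio_frame() in video_audio_encode.cpp): either a generated tone or audio captured from the sound card.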
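
The generated-tone option can be pictured with a small standalone sketch. It mirrors the arithmetic used in open_audio() and get_audio_frame() in the listing (a sine wave that starts at 110 Hz and rises by 110 Hz per second, scaled to an amplitude of 1000), but it is written in plain C++ without FFmpeg. The names ToneState, makeTone and fillTone are hypothetical and exist only for this illustration.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper state: phase and per-sample phase increments.
struct ToneState {
    double t;      // current phase in radians
    double tincr;  // phase step per sample
    double tincr2; // growth of the phase step per sample (frequency sweep)
};

// Start at 110 Hz and raise the frequency by 110 Hz every second.
inline ToneState makeTone(int sampleRate) {
    assert(sampleRate > 0);
    const double kPi = 3.14159265358979323846;
    ToneState s;
    s.t = 0.0;
    s.tincr = 2.0 * kPi * 110.0 / sampleRate;
    s.tincr2 = 2.0 * kPi * 110.0 / sampleRate / sampleRate;
    return s;
}

// Produce nbSamples interleaved signed 16-bit samples.
// Every channel receives the same value, as in the listing.
inline std::vector<int16_t> fillTone(ToneState &s, int nbSamples, int channels) {
    std::vector<int16_t> pcm;
    pcm.reserve(static_cast<std::size_t>(nbSamples) * channels);
    for (int j = 0; j < nbSamples; ++j) {
        const int16_t v = static_cast<int16_t>(std::sin(s.t) * 1000);
        for (int c = 0; c < channels; ++c) pcm.push_back(v);
        s.t += s.tincr;
        s.tincr += s.tincr2;
    }
    return pcm;
}
```

Calling fillTone(state, 1024, 1) once per encoder frame would yield one mono frame of 1024 samples; the state carries the phase over to the next call so the tone stays continuous.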

Audio capture, video capture, and encoding each run in their own thread.
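
As a rough illustration of how a capture thread can hand frames to the encoding thread, here is a minimal blocking queue in standard C++. It is a sketch under assumptions, not the mechanism used in the listing, which uses a QQueue guarded by a QMutex and polls with msleep(). The class name FrameQueue and its drop-oldest policy are choices made for this example only.

```cpp
#include <cassert>
#include <condition_variable>
#include <cstddef>
#include <cstdint>
#include <deque>
#include <mutex>
#include <utility>
#include <vector>

// Hypothetical bounded queue shared by one producer and one consumer thread.
class FrameQueue {
public:
    explicit FrameQueue(std::size_t maxFrames) : maxFrames_(maxFrames) {
        assert(maxFrames > 0);
    }

    // Producer side: when the queue is full, drop the oldest frame so that
    // the capture thread never blocks.
    void push(std::vector<uint8_t> frame) {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            if (frames_.size() == maxFrames_) frames_.pop_front();
            frames_.push_back(std::move(frame));
        }
        ready_.notify_one();
    }

    // Consumer side: wait until a frame arrives or stop() is called.
    // Returns false once the queue has been stopped and is empty.
    bool pop(std::vector<uint8_t> &out) {
        std::unique_lock<std::mutex> lock(mutex_);
        ready_.wait(lock, [this] { return stopped_ || !frames_.empty(); });
        if (frames_.empty()) return false;
        out = std::move(frames_.front());
        frames_.pop_front();
        return true;
    }

    // Wake the consumer so that it can leave its loop.
    void stop() {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            stopped_ = true;
        }
        ready_.notify_all();
    }

private:
    std::mutex mutex_;
    std::condition_variable ready_;
    std::deque<std::vector<uint8_t>> frames_;
    std::size_t maxFrames_;
    bool stopped_ = false;
};
```

Waiting on a condition variable avoids the 10 ms polling delay, and bounding the queue keeps memory use flat if the encoder falls behind the camera.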

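When running on your own device, make sure the captured audio format (sample rate, channel count, sample format) matches the audio parameters given to the FFmpeg encoder; otherwise the recorded audio will not play back correctly.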
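
One concrete case of this requirement: in the listing the resampler input is declared as AV_SAMPLE_FMT_S16, while Audio_Init() requests 32-bit float samples from Qt. If the device really delivers float samples, they would need to be converted before being copied into the encoder's input frame. The sketch below shows such a conversion in plain C++; floatToS16 and s16FrameBytes are hypothetical helper names, and the assumption is that the float samples are normalized to the range [-1.0, 1.0].

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Convert normalized float samples to signed 16-bit PCM.
// Values outside [-1.0, 1.0] are clamped instead of wrapping around.
inline std::vector<int16_t> floatToS16(const std::vector<float> &in) {
    std::vector<int16_t> out;
    out.reserve(in.size());
    for (float s : in) {
        if (s > 1.0f) s = 1.0f;
        if (s < -1.0f) s = -1.0f;
        out.push_back(static_cast<int16_t>(std::lround(s * 32767.0f)));
    }
    return out;
}

// Number of bytes one encoder frame of interleaved S16 audio occupies.
inline std::size_t s16FrameBytes(int nbSamples, int channels) {
    assert(nbSamples > 0 && channels > 0);
    return static_cast<std::size_t>(nbSamples) * channels * sizeof(int16_t);
}
```

With the mono, 1024-sample frames logged in the listing, s16FrameBytes(1024, 1) gives 2048 bytes, which is how much captured audio has to be available before one frame can be encoded.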

III. Code

mainwindow.cpp: main window

#include "mainwindow.h"

#include "ui_mainwindow.h"

/*

* Apply a Qt style sheet (QSS) to the application

*/

void MainWindow::SetStyle(const QString &qssFile) {

QFile file(qssFile);

if (file.open(QFile::ReadOnly)) {

QString qss = QLatin1String(file.readAll());

qApp->setStyleSheet(qss);

QString PaletteColor = qss.mid(20,7);

qApp->setPalette(QPalette(QColor(PaletteColor)));

file.close();

}

else

{

qApp->setStyleSheet("");

}

}

MainWindow::MainWindow(QWidget *parent)

: QMainWindow(parent)

, ui(new Ui::MainWindow)

{

ui->setupUi(this);

this->SetStyle(":/images/blue.css"); // apply the style sheet

this->setWindowIcon(QIcon(":/log.ico")); // set the window icon

this->setWindowTitle("Camera");

// enumerate the cameras available on this machine

videoaudioencode.cameras = QCameraInfo::availableCameras();

if(videoaudioencode.cameras.count())

{

for(int i=0;i<videoaudioencode.cameras.count();i++)

{

ui->comboBox->addItem(tr("%1").arg(i));

}

}

else

{

QMessageBox::warning(this,tr("Notice"),"No camera is available on this machine!\n"

"Author: DS小龙哥\n"

"Bug reports: [email protected]");

}

ui->pushButton_stop->setEnabled(false); // disable the stop button

ui->pushButton_Camear_up->setEnabled(false);

// create the working directory

#ifdef ANDROID_DEVICE

QDir dir;

if(!dir.exists("/sdcard/DCIM/Camera/"))

{

if(dir.mkpath("/sdcard/DCIM/Camera/"))

{

Log_Display("Created directory /sdcard/DCIM/Camera/.\n");

}

else

{

Log_Display("Failed to create directory /sdcard/DCIM/Camera/.\n");

}

}

#endif

// initialization

videoaudioencode.VideoWidth=640;

videoaudioencode.VideoHeight=480;

// show camera frames in the main thread as they arrive

connect(&videoReadThread,SIGNAL(VideoDataOutput(QImage &)),this,SLOT(VideoDataDisplay(QImage &)));

// log messages from the audio capture thread

connect(&audioReadThread,SIGNAL(LogSend(QString)),this,SLOT(Log_Display(QString)));

// log messages from the audio/video encoding thread

connect(&thread_VideoenCode,SIGNAL(LogSend(QString)),this,SLOT(Log_Display(QString)));

}

MainWindow::~MainWindow()

{

delete ui;

}

void MainWindow::Log_Display(QString text)

{

ui->plainTextEdit->insertPlainText(text);

}

// refresh the video preview

void MainWindow::VideoDataDisplay(QImage &image)

{

QPixmap my_pixmap;

my_pixmap.convertFromImage(image.copy());

ui->label_ImageDisplay->setPixmap(my_pixmap);

}

void MainWindow::on_pushButton_open_camera_clicked()

{

audio_buffer_w_count=0; // write index into the audio buffer

audio_buffer_r_count=0; // read index into the audio buffer

// 1. start the audio/video encoding thread

videoaudioencode.run_flag=1;

thread_VideoenCode.start();

// 2. start the camera capture thread

videoaudioencode.camera_node=ui->comboBox->currentText().toInt(); // index of the selected camera

videoReadThread.start();

// 3. start the audio capture thread

audioReadThread.start();

// update the button states

ui->pushButton_open_camera->setEnabled(false);

ui->pushButton_stop->setEnabled(true); // enable the stop button

ui->pushButton_Camear_up->setEnabled(true);

}

/*

* Viewfinder settings reported by the camera

D libandroid_camera_save.so: 32 max rate = 15 min rate = 15 resolution QSize(640, 480) Format= Format_NV21 QSize(1, 1)

D libandroid_camera_save.so: 33 max rate = 30 min rate = 30 resolution QSize(640, 480) Format= Format_NV21 QSize(1, 1)

D libandroid_camera_save.so: 34 max rate = 15 min rate = 15 resolution QSize(640, 480) Format= Format_YV12 QSize(1, 1)

D libandroid_camera_save.so: 35 max rate = 30 min rate = 30 resolution QSize(640, 480) Format= Format_YV12 QSize(1, 1)

*/

void MainWindow::on_pushButton_Camear_up_clicked()

{

const QPixmap *shown=ui->label_ImageDisplay->pixmap();

if(shown==nullptr)return; // no frame has been displayed yet

const QPixmap pix=shown->copy();

QDateTime dateTime(QDateTime::currentDateTime());

// file name format, e.g. 2020-03-05-16-25-04

QString qStr="";

qStr+=SAVE_FILE_PATH; // save directory (the camera folder on Android)

qStr+=dateTime.toString("yyyy-MM-dd-hh-mm-ss");

qStr+=".jpg";

pix.save(qStr);

QString text=tr("Photo saved to: %1\n").arg(qStr);

Log_Display(text);

}

void MainWindow::on_pushButton_stop_clicked()

{

Stop_VideoAudioEncode();

ui->pushButton_open_camera->setEnabled(true);

ui->pushButton_stop->setEnabled(false); // disable the stop button

ui->pushButton_Camear_up->setEnabled(false);

}

void MainWindow::Stop_VideoAudioEncode()

{

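// stop video capture

videoReadThread.quit(); // ask the thread's event loop to exit with return code 0 (success)

videoReadThread.wait(); // wait for the thread to finish

// stop audio capture

audioReadThread.quit(); // ask the thread's event loop to exit with return code 0 (success)

audioReadThread.wait(); // wait for the thread to finish

// stop the encoding thread

videoaudioencode.run_flag=0; // stop encoding

thread_VideoenCode.wait(); // wait for the encoding thread to finish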

}

// window close event

void MainWindow::closeEvent(QCloseEvent *event)

{

int ret = QMessageBox::question(this, tr("In-vehicle video recorder"),

tr("Do you want to exit the program?"),

QMessageBox::Yes | QMessageBox::No);

if(ret==QMessageBox::Yes)

{

Stop_VideoAudioEncode();

event->accept(); // accept the event and close the window

}

else

{

event->ignore(); // ignore the event and keep the window open

}

}

mainwindow.h: main window header

#ifndef MAINWINDOW_H

#define MAINWINDOW_H

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include "video_audio_encode.h"

#include "video_data_input.h"

#include "audio_data_input.h"

QT_BEGIN_NAMESPACE

namespace Ui { class MainWindow; }

QT_END_NAMESPACE

class MainWindow : public QMainWindow

{

Q_OBJECT

public:

void Stop_VideoAudioEncode();

void SetStyle(const QString &qssFile);

MainWindow(QWidget *parent = nullptr);

~MainWindow();

private slots:

void Log_Display(QString text);

void VideoDataDisplay(QImage &);

void on_pushButton_open_camera_clicked();

void on_pushButton_Camear_up_clicked();

void on_pushButton_stop_clicked();

private:

Ui::MainWindow *ui;

protected:

void closeEvent(QCloseEvent *event); // window close event

};

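// Defined unconditionally here, so files are saved under /sdcard/DCIM/Camera/; comment this line out when building for Linux or Windows.

#define ANDROID_DEVICE

#ifdef ANDROID_DEVICE

// directory where recordings and photos are saved

#define SAVE_FILE_PATH "/sdcard/DCIM/Camera/"

#else

// directory where recordings and photos are saved

#define SAVE_FILE_PATH "./"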

#endif

#endif // MAINWINDOW_H
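
The save path and the timestamped file name can also be derived without a hand-edited macro. The following is a sketch only: it assumes the compiler predefines __ANDROID__ on Android builds (the NDK toolchains do), and the function names saveDirectory and makeOutputName are hypothetical. It uses the standard library rather than QDateTime so that it stays self-contained.

```cpp
#include <cassert>
#include <cstddef>
#include <ctime>
#include <string>

// Pick the save directory from the target platform instead of a manual #define.
inline const char *saveDirectory() {
#if defined(__ANDROID__)
    return "/sdcard/DCIM/Camera/";
#else
    return "./";
#endif
}

// Build "<dir>yyyy-MM-dd-hh-mm-ss<ext>" from a broken-down local time,
// the same layout the listing produces with QDateTime::toString().
inline std::string makeOutputName(const std::tm &when, const std::string &ext) {
    char stamp[32];
    const std::size_t n = std::strftime(stamp, sizeof(stamp), "%Y-%m-%d-%H-%M-%S", &when);
    assert(n > 0);
    return std::string(saveDirectory()) + std::string(stamp, n) + ext;
}
```

Returning a std::string also sidesteps the fixed char filename[50] buffer used later in the encoder, which only fits because the current path and timestamp happen to be short.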

video_audio_encode.cpp: encoder source

#include "video_audio_encode.h"

class VideoAudioEncode videoaudioencode;

Thread_VideoAudioEncode thread_VideoenCode; // audio/video encoding thread

char audio_buffer[AUDIO_BUFFER_MAX_SIZE]; // audio buffer

int audio_buffer_r_count=0;

int audio_buffer_w_count=0;

// audio parameters

#define AUDIO_RATE_SET 44100 // audio sample rate in Hz

#define AUDIO_BIT_RATE_SET 64000 // audio bit rate in bit/s

#define AUDIO_CHANNEL_SET AV_CH_LAYOUT_MONO // AV_CH_LAYOUT_MONO: mono, AV_CH_LAYOUT_STEREO: stereo

#define STREAM_DURATION 10.0 // length of each recording in seconds

#define STREAM_FRAME_RATE 30 /* frames per second */

#define STREAM_PIX_FMT AV_PIX_FMT_YUV420P /* default pixel format of the video frames */

#define SCALE_FLAGS SWS_BICUBIC

// wrapper around a single output AVStream

typedef struct OutputStream {

AVStream *st;

AVCodecContext *enc;

/* pts of the next frame that will be generated */

int64_t next_pts;

int samples_count;

AVFrame *frame;

AVFrame *tmp_frame;

float t, tincr, tincr2;

struct SwsContext *sws_ctx;

struct SwrContext *swr_ctx;

} OutputStream;

static int write_frame(AVFormatContext *fmt_ctx, const AVRational *time_base, AVStream *st, AVPacket *pkt)

{

/* rescale the packet timestamps from the codec time base to the stream time base */

av_packet_rescale_ts(pkt, *time_base, st->time_base);

pkt->stream_index = st->index;

/* write the compressed frame to the media file */

return av_interleaved_write_frame(fmt_ctx, pkt);

}

/* Add an output stream. */

static void add_stream(OutputStream *ost, AVFormatContext *oc,

AVCodec **codec,

enum AVCodecID codec_id)

{

AVCodecContext *c;

int i;

/* find the encoder */

*codec = avcodec_find_encoder(codec_id);

if (!(*codec)) {

qDebug("Could not find encoder for '%s'\n",avcodec_get_name(codec_id));

exit(1);

}

ost->st = avformat_new_stream(oc, nullptr);

if (!ost->st) {

qDebug("Could not allocate stream\n");

exit(1);

}

ost->st->id = oc->nb_streams-1;

c = avcodec_alloc_context3(*codec);

if (!c) {

qDebug("Could not alloc an encoding context\n");

exit(1);

}

ost->enc = c;

switch ((*codec)->type) {

case AVMEDIA_TYPE_AUDIO:

// sample format

//c->sample_fmt = (*codec)->sample_fmts ? (*codec)->sample_fmts[0] : AV_SAMPLE_FMT_FLTP;

c->sample_fmt = AV_SAMPLE_FMT_FLTP;

c->bit_rate = AUDIO_BIT_RATE_SET; // bit rate

c->sample_rate = AUDIO_RATE_SET; // sample rate

// sample rates supported by the encoder

if ((*codec)->supported_samplerates)

{

c->sample_rate = (*codec)->supported_samplerates[0];

for (i = 0; (*codec)->supported_samplerates[i]; i++)

{

// use the requested rate if the encoder supports it

if ((*codec)->supported_samplerates[i] == AUDIO_RATE_SET)

{

c->sample_rate = AUDIO_RATE_SET;

}

}

}

// channel layout (the channel count is derived from it after the check below)

c->channel_layout = AUDIO_CHANNEL_SET; // AV_CH_LAYOUT_MONO: mono, AV_CH_LAYOUT_STEREO: stereo

if ((*codec)->channel_layouts)

{

c->channel_layout = (*codec)->channel_layouts[0];

for (i = 0; (*codec)->channel_layouts[i]; i++)

{

if ((*codec)->channel_layouts[i] == AUDIO_CHANNEL_SET)

{

c->channel_layout = AUDIO_CHANNEL_SET;

}

}

}

c->channels = av_get_channel_layout_nb_channels(c->channel_layout);

ost->st->time_base = (AVRational){ 1, c->sample_rate };

break;

case AVMEDIA_TYPE_VIDEO:

c->codec_id = codec_id;

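// bit rate: the file size grows in proportion to it

c->bit_rate = 400000; // 400 kbit/s

/* the resolution must be a multiple of two */

c->width = 640;

c->height = 480;

/* time base: the fundamental unit of time (in seconds) in terms

* of which frame timestamps are represented. For fixed-fps content,

* the time base should be 1/framerate and the timestamp increment

* should be 1. */

ost->st->time_base = (AVRational){1,STREAM_FRAME_RATE};

c->time_base = ost->st->time_base;

c->gop_size = 12; /* emit one intra frame every twelve frames at most */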

c->pix_fmt = STREAM_PIX_FMT;

if(c->codec_id == AV_CODEC_ID_MPEG2VIDEO)

{

/* just for testing, B-frames are added as well */

c->max_b_frames = 2;

}

if (c->codec_id == AV_CODEC_ID_MPEG1VIDEO) {

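/* Needed to avoid using macroblocks in which some coefficients overflow.

* This does not happen with normal video; it happens here only because

* the motion of the chroma plane does not match the luma plane. */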

c->mb_decision = 2;

}

break;

default:

break;

}

/* some formats want stream headers to be separate */

if (oc->oformat->flags & AVFMT_GLOBALHEADER)

c->flags |= AV_CODEC_FLAG_GLOBAL_HEADER;

}

/**************************************************************/

/* audio output */

static AVFrame *alloc_audio_frame(enum AVSampleFormat sample_fmt,

uint64_t channel_layout,

int sample_rate, int nb_samples)

{

AVFrame *frame = av_frame_alloc();

int ret;

if (!frame) {

qDebug("Error allocating an audio frame\n");

exit(1);

}

frame->format = sample_fmt;

frame->channel_layout = channel_layout;

frame->sample_rate = sample_rate;

frame->nb_samples = nb_samples;

if (nb_samples) {

ret = av_frame_get_buffer(frame, 0);

if (ret < 0) {

qDebug("Error allocating an audio buffer\n");

exit(1);

}

}

return frame;

}

static void open_audio(AVFormatContext *oc, AVCodec *codec, OutputStream *ost, AVDictionary *opt_arg)

{

AVCodecContext *c;

int nb_samples;

int ret;

AVDictionary *opt = nullptr;

c = ost->enc;

/* open it */

av_dict_copy(&opt, opt_arg, 0);

ret = avcodec_open2(c, codec, &opt);

av_dict_free(&opt);

if (ret < 0) {

qDebug("Could not open audio codec\n");

exit(1);

}

/* initialize the signal generator */

ost->t = 0;

ost->tincr = 2 * M_PI * 110.0 / c->sample_rate;

/* increase the frequency by 110 Hz per second */

ost->tincr2 = 2 * M_PI * 110.0 / c->sample_rate / c->sample_rate;

if (c->codec->capabilities & AV_CODEC_CAP_VARIABLE_FRAME_SIZE)

nb_samples = 10000;

else

nb_samples = c->frame_size;

ost->frame = alloc_audio_frame(c->sample_fmt, c->channel_layout,

c->sample_rate, nb_samples);

ost->tmp_frame = alloc_audio_frame(AV_SAMPLE_FMT_S16, c->channel_layout,

c->sample_rate, nb_samples);

/* copy the stream parameters to the muxer */

ret = avcodec_parameters_from_context(ost->st->codecpar, c);

if (ret < 0) {

qDebug("Could not copy the stream parameters\n");

exit(1);

}

/* create the resampler context */

ost->swr_ctx = swr_alloc();

if(!ost->swr_ctx)

{

qDebug("Could not allocate resampler context\n");

exit(1);

}

/* set the options */

av_opt_set_int (ost->swr_ctx, "in_channel_count", c->channels, 0);

av_opt_set_int (ost->swr_ctx, "in_sample_rate", c->sample_rate, 0);

av_opt_set_sample_fmt(ost->swr_ctx, "in_sample_fmt", AV_SAMPLE_FMT_S16, 0); // signed 16-bit input

av_opt_set_int (ost->swr_ctx, "out_channel_count", c->channels, 0);

av_opt_set_int (ost->swr_ctx, "out_sample_rate", c->sample_rate, 0);

av_opt_set_sample_fmt(ost->swr_ctx, "out_sample_fmt", c->sample_fmt, 0);

qDebug("audio channels=%d\n",c->channels);

qDebug("audio sample rate=%d\n",c->sample_rate);

/* initialize the resampling context */

if ((ret = swr_init(ost->swr_ctx)) < 0) {

qDebug("Failed to initialize the resampling context\n");

exit(1);

}

}

/* Prepare a 16-bit dummy audio frame of 'frame_size' samples and 'nb_channels' channels. */

static AVFrame *get_audio_frame(OutputStream *ost)

{

AVFrame *frame = ost->tmp_frame;

int j, i, v;

int16_t *q = (int16_t*)frame->data[0];

/* check whether more frames should be generated (end-of-recording test) */

if(av_compare_ts(ost->next_pts, ost->enc->time_base,

STREAM_DURATION, (AVRational){ 1, 1 }) >= 0)

return nullptr;

// qDebug("frame->nb_samples=%d\n",frame->nb_samples); //1024

// qDebug("ost->enc->channels=%d\n",ost->enc->channels);

// consumer

// videoaudioencode.audio_encode_mutex.lock();

// videoaudioencode.audio_encode_Condition.wait(&videoaudioencode.audio_encode_mutex);

// memcpy(audio_buffer_temp,audio_buffer,sizeof(audio_buffer));

// videoaudioencode.audio_encode_mutex.unlock();

#if 0 // use audio captured from the sound card

if(audio_buffer_r_count>=AUDIO_BUFFER_MAX_SIZE)audio_buffer_r_count=0;

// copy the captured audio data

for(j = 0; j<frame->nb_samples; j++) // nb_samples: number of audio samples (per channel) described by this frame

{

for(i=0;i<ost->enc->channels;i++) // channels: number of audio channels

{

*q++ = audio_buffer[j+audio_buffer_r_count]; // audio data

}

ost->t += ost->tincr;

ost->tincr += ost->tincr2;

}

frame->pts = ost->next_pts;

ost->next_pts += frame->nb_samples;

//qDebug()<<"audio_buffer_r_count="<<audio_buffer_r_count;

audio_buffer_r_count+=frame->nb_samples; // assumed: advance the read index (this statement is not in the surviving listing)

#else // use the generated tone

for(j = 0; j<frame->nb_samples; j++) // nb_samples: number of audio samples (per channel) described by this frame

{

v=(int)(sin(ost->t) * 1000);

for(i=0;i<ost->enc->channels;i++) // channels: number of audio channels

{

*q++ = v; // audio data

}

ost->t += ost->tincr;

ost->tincr += ost->tincr2;

}

frame->pts = ost->next_pts;

ost->next_pts += frame->nb_samples;

#endif

return frame;

}

/*

* Encode one audio frame and send it to the muxer.

* Returns 1 when encoding is finished, 0 otherwise.

*/

static int write_audio_frame(AVFormatContext *oc, OutputStream *ost)

{

AVCodecContext *c;

AVPacket pkt = { 0 }; // data and size must be 0;

AVFrame *frame;

int ret;

int got_packet;

int dst_nb_samples;

av_init_packet(&pkt);

c = ost->enc;

frame = get_audio_frame(ost);

if(frame)

{

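/* convert samples from the native format to the destination codec format using the resampler */

/* compute the destination number of samples */

dst_nb_samples = av_rescale_rnd(swr_get_delay(ost->swr_ctx, c->sample_rate) + frame->nb_samples,

c->sample_rate, c->sample_rate, AV_ROUND_UP);

av_assert0(dst_nb_samples == frame->nb_samples);

/* when we pass a frame to the encoder, it may keep a reference to it

* internally;

* make sure we do not overwrite it here

*/

ret = av_frame_make_writable(ost->frame);

if (ret < 0)

exit(1);

/* convert to the destination format */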

ret = swr_convert(ost->swr_ctx,

ost->frame->data, dst_nb_samples,

(const uint8_t **)frame->data, frame->nb_samples);

if (ret < 0) {

qDebug("Error while converting\n");

exit(1);

}

frame = ost->frame;

frame->pts = av_rescale_q(ost->samples_count, (AVRational){1, c->sample_rate}, c->time_base);

ost->samples_count += dst_nb_samples;

}

ret = avcodec_encode_audio2(c, &pkt, frame, &got_packet);

if (ret < 0) {

qDebug("Error encoding audio frame\n");

exit(1);

}

if (got_packet) {

ret = write_frame(oc, &c->time_base, ost->st, &pkt);

if (ret < 0) {

qDebug("Error while writing audio frame\n");

exit(1);

}

}

return (frame || got_packet) ? 0 : 1;

}

/**************************************************************/

/* video output */

static AVFrame *alloc_picture(enum AVPixelFormat pix_fmt, int width, int height)

{

AVFrame *picture;

int ret;

picture = av_frame_alloc();

if (!picture)

return nullptr;

picture->format = pix_fmt;

picture->width = width;

picture->height = height;

/* allocate the buffers for the frame data */

ret = av_frame_get_buffer(picture, 32);

if(ret < 0)

{

qDebug("Could not allocate frame data.\n");

exit(1);

}

return picture;

}

static void open_video(AVFormatContext *oc, AVCodec *codec, OutputStream *ost, AVDictionary *opt_arg)

{

int ret;

AVCodecContext *c = ost->enc;

AVDictionary *opt = nullptr;

av_dict_copy(&opt, opt_arg, 0);

/* open the codec */

ret = avcodec_open2(c, codec, &opt);

av_dict_free(&opt);

if (ret < 0) {

qDebug("Could not open video codec\n");

exit(1);

}

/* allocate and init a re-usable frame */

ost->frame = alloc_picture(c->pix_fmt, c->width, c->height);

if (!ost->frame) {

qDebug("Could not allocate video frame\n");

exit(1);

}

ost->tmp_frame = nullptr;

/* copy the stream parameters to the muxer */

ret = avcodec_parameters_from_context(ost->st->codecpar, c);

if (ret < 0) {

qDebug("Could not copy the stream parameters\n");

exit(1);

}

}

/*

Fetch one frame of image data.

YUV422 frame size in bytes = width*height*2

YUV420 frame size in bytes = width*height*3/2

*/

static int fill_yuv_image(AVFrame *pict, int frame_index,int width, int height)

{

unsigned int y_size=width*height;

// consumer: wait until the capture thread has queued a frame

while(videoaudioencode.void_data_queue.isEmpty())

{

QThread::msleep(10);

if(videoaudioencode.run_flag==0) // encoding was stopped

{

return -1;

}

}

videoaudioencode.video_encode_mutex.lock();

QByteArray byte=videoaudioencode.void_data_queue.dequeue();

videoaudioencode.video_encode_mutex.unlock();

// copy the Y, U and V planes (assumes each plane's linesize equals its width)

memcpy(pict->data[0],byte.data(),y_size);

memcpy(pict->data[1],byte.data()+y_size,y_size/4);

memcpy(pict->data[2],byte.data()+y_size+y_size/4,y_size/4);

return 0;

}

static AVFrame *get_video_frame(OutputStream *ost)

{

AVCodecContext *c = ost->enc;

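/* check whether more frames should be generated (end-of-recording test) */

if(av_compare_ts(ost->next_pts, c->time_base,STREAM_DURATION, (AVRational){ 1, 1 }) >= 0)

return nullptr;

/* when we pass a frame to the encoder, it may keep a reference to it

* internally; make sure we do not overwrite it here */

if (av_frame_make_writable(ost->frame) < 0)

exit(1);

// fill the frame with the next captured image

// DTS (decoding timestamp) and PTS (presentation timestamp)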

int err=fill_yuv_image(ost->frame, ost->next_pts, c->width, c->height);

if(err)return nullptr;

ost->frame->pts = ost->next_pts++;

return ost->frame;

}

/*

* Encode one video frame and send it to the muxer.

* Returns 1 when encoding is finished, 0 otherwise.

*/

static int write_video_frame(AVFormatContext *oc, OutputStream *ost)

{

int ret;

AVCodecContext *c;

AVFrame *frame;

int got_packet = 0;

AVPacket pkt = {0};

c=ost->enc;

// fetch one frame

frame = get_video_frame(ost);

if(frame==nullptr)return 1;

av_init_packet(&pkt);

/* encode the image */

ret=avcodec_encode_video2(c, &pkt, frame, &got_packet);

if(ret < 0)

{

qDebug("Error encoding video frame\n");

exit(1);

}

if(got_packet)

{

ret=write_frame(oc, &c->time_base, ost->st, &pkt);

}

else

{

ret = 0;

}

if(ret < 0)

{

qDebug("Error while writing video frame\n");

exit(1);

}

return (frame || got_packet) ? 0 : 1;

}

static void close_stream(AVFormatContext *oc, OutputStream *ost)

{

avcodec_free_context(&ost->enc);

av_frame_free(&ost->frame);

av_frame_free(&ost->tmp_frame);

sws_freeContext(ost->sws_ctx);

swr_free(&ost->swr_ctx);

}

int Thread_VideoAudioEncode::StartUp_VideoAudioEncode()

{

OutputStream video_st = {0}, audio_st = { 0 };

AVOutputFormat *fmt;

AVFormatContext *oc;

AVCodec *audio_codec, *video_codec;

int ret;

int have_video = 0, have_audio = 0;

int encode_video = 0, encode_audio = 0;

AVDictionary *opt = nullptr;

QDateTime dateTime(QDateTime::currentDateTime());

// file name format, e.g. 2020-03-05-16-25-04

QString qStr="";

qStr+=SAVE_FILE_PATH; // save directory (the camera folder on Android)

qStr+=dateTime.toString("yyyy-MM-dd-hh-mm-ss");

qStr+=".mp4";

char filename[50];

strcpy(filename,qStr.toLatin1().data());

emit LogSend(tr("Output file name: %1\n").arg(filename));

/* allocate the output media context */

avformat_alloc_output_context2(&oc,nullptr,nullptr,filename);

if(!oc)

{

emit LogSend("Could not deduce the output format from the file extension: using MPEG\n");

avformat_alloc_output_context2(&oc,nullptr,"mpeg",filename);

}

if(!oc)

{

emit LogSend("Could not allocate the output context.\n");

return -1;

}

fmt=oc->oformat;

/* Add the audio and video streams using the default format codecs and initialize the codecs. */

if(fmt->video_codec != AV_CODEC_ID_NONE)

{

add_stream(&video_st,oc,&video_codec,fmt->video_codec);

have_video = 1;

encode_video = 1;

}

if(fmt->audio_codec != AV_CODEC_ID_NONE)

{

add_stream(&audio_st, oc, &audio_codec, fmt->audio_codec);

have_audio = 1;

encode_audio = 1;

}

/* Now that all the parameters are set, open the audio and video codecs and allocate the necessary encode buffers. */

if (have_video)

open_video(oc, video_codec, &video_st, opt);

if (have_audio)

open_audio(oc, audio_codec, &audio_st, opt);

av_dump_format(oc, 0, filename, 1);

/* open the output file, if needed */

if(!(fmt->flags & AVFMT_NOFILE))

{

ret = avio_open(&oc->pb, filename, AVIO_FLAG_WRITE);

if (ret < 0)

{

qDebug("Could not open '%s'\n",filename);

return -1;

}

}

/* write the stream header, if any */

ret=avformat_write_header(oc,&opt);

if(ret<0)

{

qDebug("Error occurred when opening output file\n");

return -1;

}

while(encode_video || encode_audio)

{

/* select the stream to encode */

if(encode_video &&(!encode_audio || av_compare_ts(video_st.next_pts, video_st.enc->time_base,audio_st.next_pts, audio_st.enc->time_base) <= 0))

{

encode_video = !write_video_frame(oc,&video_st);

}

else

{

encode_audio = !write_audio_frame(oc,&audio_st);

}

}

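/* Write the trailer, if any. The trailer must be written before

* closing the CodecContexts that were open when the header was written;

* otherwise av_write_trailer() may try to use memory that was freed

* by avcodec_close(). */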

av_write_trailer(oc);

/* Close each codec. */

if (have_video)

close_stream(oc, &video_st);

if (have_audio)

close_stream(oc, &audio_st);

if (!(fmt->flags & AVFMT_NOFILE))

/* Close the output file. */

avio_closep(&oc->pb);

/* free the stream */

avformat_free_context(oc);

qDebug("Encoding finished. Thread exiting.\n");

return 0;

}

// encoding thread

void Thread_VideoAudioEncode ::run()

{

while(1)

{

qDebug()<<"Encoding thread started.";

audio_buffer_r_count=0;

audio_buffer_w_count=0;

StartUp_VideoAudioEncode(); // record one file of up to STREAM_DURATION seconds

if(videoaudioencode.run_flag==0) // stop requested

{

break;

}

}

}
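
The main loop in StartUp_VideoAudioEncode() decides which stream to encode next by comparing the two next_pts values with av_compare_ts(), because the video counts in frames (time base 1/30) and the audio counts in samples (time base 1/44100). The sketch below illustrates that comparison in plain C++. It is a simplified stand-in, not FFmpeg's implementation: it assumes the cross-multiplied products fit into 64 bits, and the names Rational, compareTs and videoGoesNext are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical minimal rational number, playing the role of a time base.
struct Rational {
    int num;
    int den;
};

// Compare tsA * tbA with tsB * tbB without floating point.
// Returns -1, 0 or 1 when the first timestamp is earlier, equal or later.
// Simplification: assumes the products below do not overflow int64_t.
inline int compareTs(int64_t tsA, Rational tbA, int64_t tsB, Rational tbB) {
    assert(tbA.den > 0 && tbB.den > 0);
    const int64_t a = tsA * tbA.num * tbB.den;
    const int64_t b = tsB * tbB.num * tbA.den;
    return (a > b) - (a < b);
}

// The video stream is encoded next when it is not ahead of the audio stream.
inline bool videoGoesNext(int64_t videoPts, int fps, int64_t audioPts, int sampleRate) {
    return compareTs(videoPts, Rational{1, fps}, audioPts, Rational{1, sampleRate}) <= 0;
}
```

For example, video frame 30 at 30 fps and audio sample 44100 at 44100 Hz both sit at exactly one second, so the comparison yields 0 and the video frame is written first.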

video_audio_encode.h: encoder header

#ifndef VIDEO_AUDIO_ENCODE_H

#define VIDEO_AUDIO_ENCODE_H

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include "audio_data_input.h"

#include "mainwindow.h"

extern "C"

{

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

}

// QThread subclass that runs the encoder

class Thread_VideoAudioEncode : public QThread

{

Q_OBJECT

public:

int StartUp_VideoAudioEncode();

protected:

void run();

signals:

void LogSend(QString text);

};

// shared state for audio/video encoding

class VideoAudioEncode

{

public:

/* keep-running flag */

bool run_flag;

/* video */

int VideoWidth; // video width

int VideoHeight; // video height

QMutex video_encode_mutex;

QQueue<QByteArray> void_data_queue;

int camera_node; // index of the selected camera

QList<QCameraInfo> cameras; // cameras available on the system

/* audio */

QWaitCondition audio_encode_Condition; // condition variable

QMutex audio_encode_mutex;

};

extern Thread_VideoAudioEncode thread_VideoenCode; // audio/video encoding thread

extern class VideoAudioEncode videoaudioencode;

extern char audio_buffer[]; // audio buffer

extern int audio_buffer_w_count;

extern int audio_buffer_r_count;

#define AUDIO_BUFFER_MAX_SIZE (1024*100)

#endif // VIDEO_AUDIO_ENCODE_H

video_data_input.cpp: video capture source

#include "video_data_input.h"

VideoReadThread videoReadThread; // thread that reads frames from the camera

// destructor

VideoReadThread::~VideoReadThread()

{

}

// thread entry point

void VideoReadThread::run()

{

delete camera;

delete m_pProbe;

Camear_Init();

qDebug()<<"Camera capture started";

this->exec(); // start the event loop

}

void VideoReadThread::Camear_Init()

{

int node=videoaudioencode.camera_node;

/* create the camera object for the selected camera */

camera = new QCamera(videoaudioencode.cameras.at(node));

m_pProbe = new QVideoProbe;

if(m_pProbe != nullptr)

{

m_pProbe->setSource(camera); // Returns true, hopefully.

connect(m_pProbe, SIGNAL(videoFrameProbed(QVideoFrame)),this, SLOT(slotOnProbeFrame(QVideoFrame)), Qt::QueuedConnection);

}

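/* set the camera capture mode */

//camera->setCaptureMode(QCamera::CaptureStillImage); // use this setting on Linux

camera->setCaptureMode(QCamera::CaptureVideo); // use this setting on Android

/* start the camera */

camera->start();

/* set the capture pixel format and resolution */

QCameraViewfinderSettings settings;

settings.setPixelFormat(QVideoFrame::Format_YUYV); // pixel format; Android only supports NV21

settings.setResolution(QSize(videoaudioencode.VideoWidth, videoaudioencode.VideoHeight)); // camera resolution

camera->setViewfinderSettings(settings);

// list the resolutions, frame rates and formats supported by the camera

#if 0

int i=0;

QList<QCameraViewfinderSettings> ViewSets = camera->supportedViewfinderSettings();

foreach (QCameraViewfinderSettings ViewSet, ViewSets) {

qDebug() << i++ <<" max rate = " << ViewSet.maximumFrameRate() << "min rate = "<< ViewSet.minimumFrameRate() << "resolution "<<ViewSet.resolution() << "Format=" << ViewSet.pixelFormat() << ViewSet.pixelAspectRatio();

}

#endif

}

// The definition of slotOnProbeFrame(const QVideoFrame &) did not survive in this listing.

// The opening of yuyv_to_rgb below is reconstructed from its declaration in video_data_input.h and the surviving loop body (assumption).

void VideoReadThread::yuyv_to_rgb(unsigned char *yuv_buffer,unsigned char *rgb_buffer,int iWidth,int iHeight)

{

int x;

int z=0;

unsigned char *ptr = rgb_buffer;

unsigned char *yuyv= yuv_buffer;

for (x = 0; x < iWidth*iHeight; x++)

{

int r, g, b;

int y, u, v;

if (!z)

y = yuyv[0] << 8;

else

y = yuyv[2] << 8;

u = yuyv[1] - 128;

v = yuyv[3] - 128;

r = (y + (359 * v)) >> 8;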

g = (y - (88 * u) - (183 * v)) >> 8;

b = (y + (454 * u)) >> 8;

*(ptr++) = (r > 255) ? 255 : ((r < 0) ? 0 : r);

*(ptr++) = (g > 255) ? 255 : ((g < 0) ? 0 : g);

*(ptr++) = (b > 255) ? 255 : ((b < 0) ? 0 : b);

if(z++)

{

z = 0;

yuyv += 4;

}

}

}

// image_src is the source NV21 image; image_dst receives the converted YUV420P image

void VideoReadThread::NV21_YUV420P(const unsigned char* image_src, unsigned char* image_dst,int image_width, int image_height)

{

unsigned char* p = image_dst;

memcpy(p, image_src, image_width * image_height * 3 / 2);

const unsigned char *pNV = image_src + image_width * image_height;

unsigned char *pU = p + image_width * image_height;

unsigned char *pV = p + image_width * image_height + ((image_width * image_height)>>2);

for (int i=0; i<(image_width * image_height)/2; i++)

{

if((i%2)==0)

*pV++=*(pNV+i);

else

*pU++=*(pNV+i);

}

}

// YUYV is a packed YUV 4:2:2 format

int VideoReadThread::yuyv_to_yuv420p(const unsigned char *in, unsigned char *out, unsigned int width, unsigned int height)

{

unsigned char *y = out;

unsigned char *u = out + width*height;

unsigned char *v = out + width*height + width*height/4;

unsigned int i,j;

unsigned int base_h;

unsigned int is_u = 1;

unsigned int y_index = 0, u_index = 0, v_index = 0;

unsigned long yuv422_length = 2 * width * height;

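// the byte order is YU YV YU YV; one YUV422 frame is width * height * 2 bytes long

// copy every Y sample

for(i=0; i<yuv422_length; i+=2)

{

*(y + y_index) = *(in + i);

y_index++;

}

// keep U and V from every other row only (the chroma of the remaining rows is dropped)

for(i=0; i<height; i+=2)

{

base_h = i*width*2;

for(j=base_h+1; j<base_h+width*2; j+=2)

{

if(is_u)

{

*(u + u_index) = *(in + j);

u_index++;

is_u = 0;

}

else

{

*(v + v_index) = *(in + j);

v_index++;

is_u = 1;

}

}

}

return 1;

}

// The opening of the next function did not survive in this listing. The head below is reconstructed from the NV21_TO_RGB24 declaration in video_data_input.h and the surviving loop tail; treat it as an assumption.

void VideoReadThread::NV21_TO_RGB24(unsigned char *data, unsigned char *rgb, int width, int height)

{

const int nv_start = width * height;

int index = 0, rgb_index = 0;

unsigned char y, u, v;

int r, g, b, nv_index = 0, i, j;

for(i = 0; i < height; i++)

{

for(j = 0; j < width; j++)

{

nv_index = i / 2 * width + j - j % 2;

y = data[rgb_index];

v = data[nv_start + nv_index];

u = data[nv_start + nv_index + 1];

r = y + (140 * (v - 128)) / 100;

g = y - (34 * (u - 128)) / 100 - (71 * (v - 128)) / 100;

b = y + (177 * (u - 128)) / 100;

if(r > 255) r = 255;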

if(g > 255) g = 255;

if(b > 255) b = 255;

if(r < 0) r = 0;

if(g < 0) g = 0;

if(b < 0) b = 0;

index = rgb_index % width + (height - i - 1) * width;

//rgb[index * 3+0] = b;

//rgb[index * 3+1] = g;

//rgb[index * 3+2] = r;

// flipped image

//rgb[height * width * 3 - i * width * 3 - 3 * j - 1] = b;

//rgb[height * width * 3 - i * width * 3 - 3 * j - 2] = g;

//rgb[height * width * 3 - i * width * 3 - 3 * j - 3] = r;

// upright image

rgb[i * width * 3 + 3 * j + 0] = b;

rgb[i * width * 3 + 3 * j + 1] = g;

rgb[i * width * 3 + 3 * j + 2] = r;

rgb_index++;

}

}

}

void VideoReadThread::YUV420P_TO_RGB24(unsigned char *yuv420p, unsigned char *rgb24, int width, int height) {

int index = 0;

for (int y = 0; y < height; y++) {

for (int x = 0; x < width; x++) {

int indexY = y * width + x;

int indexU = width * height + y / 2 * width / 2 + x / 2;

int indexV = width * height + width * height / 4 + y / 2 * width / 2 + x / 2;

u_char Y = yuv420p[indexY];

u_char U = yuv420p[indexU];

u_char V = yuv420p[indexV];

rgb24[index++] = Y + 1.402 * (V - 128); //R

rgb24[index++] = Y - 0.34413 * (U - 128) - 0.71414 * (V - 128); //G

rgb24[index++] = Y + 1.772 * (U - 128); //B

}

}

}
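
Since the Android camera delivers NV21 while the encoder is configured for YUV420P, the de-interleaving step performed by NV21_YUV420P earlier in this file is worth checking on a tiny frame. The standalone sketch below does the same rearrangement with std::vector; the function name nv21ToI420 is hypothetical, and it assumes even width and height and tightly packed planes (no row padding).

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// NV21 layout:    Y plane, then interleaved V,U pairs (V first).
// YUV420P layout: Y plane, then the whole U plane, then the whole V plane.
inline std::vector<uint8_t> nv21ToI420(const std::vector<uint8_t> &src, int width, int height) {
    assert(width > 0 && height > 0 && width % 2 == 0 && height % 2 == 0);
    const std::size_t ySize = static_cast<std::size_t>(width) * height;
    const std::size_t cSize = ySize / 4; // size of one chroma plane
    assert(src.size() >= ySize + 2 * cSize);

    std::vector<uint8_t> dst(ySize + 2 * cSize);
    std::memcpy(dst.data(), src.data(), ySize); // the Y plane is identical

    const uint8_t *vu = src.data() + ySize;
    uint8_t *u = dst.data() + ySize;
    uint8_t *v = u + cSize;
    for (std::size_t i = 0; i < cSize; ++i) {
        v[i] = vu[2 * i];     // even bytes carry V
        u[i] = vu[2 * i + 1]; // odd bytes carry U
    }
    return dst;
}
```

A 2x2 frame is the smallest useful check: four Y bytes followed by one V,U pair must come out as four Y bytes, then U, then V.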

video_data_input.h: video capture header

#ifndef VIDEO_DATA_INPUT_H

#define VIDEO_DATA_INPUT_H

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include "video_audio_encode.h"

#include

class VideoReadThread:public QThread

{

Q_OBJECT

public:

QCamera *camera;

QVideoProbe *m_pProbe;

VideoReadThread(QObject* parent=nullptr):QThread(parent){}

~VideoReadThread();

void Camear_Init(void);

void YUV420P_to_RGB24(unsigned char *data, unsigned char *rgb, int width, int height);

void NV21_TO_RGB24(unsigned char *data, unsigned char *rgb, int width, int height);

void YUV420P_TO_RGB24(unsigned char *yuv420p, unsigned char *rgb24, int width, int height);

void yuyv_to_rgb(unsigned char *yuv_buffer,unsigned char *rgb_buffer,int iWidth,int iHeight);

int yuyv_to_yuv420p(const unsigned char *in, unsigned char *out, unsigned int width, unsigned int height);

void NV21_YUV420P(const unsigned char* image_src, unsigned char* image_dst,int image_width, int image_height);

public slots:

void slotOnProbeFrame(const QVideoFrame &frame);

signals:

void VideoDataOutput(QImage &); // emitted for every captured frame

protected:

void run();

};

extern VideoReadThread videoReadThread; // thread that reads frames from the camera

#endif // VIDEO_DATA_INPUT_H

audio_data_input.cpp: audio capture source

#include "audio_data_input.h"

AudioReadThread audioReadThread; // audio capture thread

// destructor

AudioReadThread::~AudioReadThread()

{

}

// thread entry point

void AudioReadThread::run()

{

qDebug()<<"Audio capture thread started.";

delete audio_in;

Audio_Init();

this->exec(); // start the event loop

}

void AudioReadThread::Audio_Init()

{

QString text;

QAudioFormat auido_input_format;

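// recording format

auido_input_format.setSampleRate(44100); // sample rate in Hz

auido_input_format.setChannelCount(1); // number of channels

auido_input_format.setSampleSize(32); /* sample size in bits; usually 8 or 16, but some systems support larger sizes */

auido_input_format.setCodec("audio/pcm"); // codec

auido_input_format.setByteOrder(QAudioFormat::LittleEndian); // little-endian samples

auido_input_format.setSampleType(QAudioFormat::Float); // sample type

// use the default device as the input source

QAudioDeviceInfo info = QAudioDeviceInfo::defaultInputDevice();

emit LogSend(tr("Current recording device: %1\n").arg(info.deviceName()));

// if the requested format is not supported, fall back to the nearest format the system supports

if(!info.isFormatSupported(auido_input_format))

{

emit LogSend("Requested format not supported; using the nearest supported QAudioFormat\n");

auido_input_format=info.nearestFormat(auido_input_format);

/*

* nearestFormat() returns the QAudioFormat closest to the requested settings that the system supports.

* The available settings come from the platform/audio plugin in use

* and also depend on the QAudio::Mode being used.

*/

}

// codecs supported by the current device

emit LogSend("Codecs supported by the current device:\n");

QStringList list=info.supportedCodecs();

for(int i=0;i<list.size();i++)

{

text=list.at(i)+"\n";

emit LogSend(text);

}

// The lines between the loop header and audio_in->start() did not survive in this listing; the loop body above and the two statements below are a reconstruction (assumption).

audio_in = new QAudioInput(info, auido_input_format);

connect(audio_in,SIGNAL(stateChanged(QAudio::State)),this,SLOT(handleStateChanged_input(QAudio::State)));

audio_streamIn=audio_in->start(); // start audio capture

// forward the device's readyRead signal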

connect(audio_streamIn,SIGNAL(readyRead()),this,SLOT(audio_ReadyRead()),Qt::QueuedConnection);

}

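// audio data is ready to be read

void AudioReadThread::audio_ReadyRead()

{

char audio_buffer_tmp[1024];

// producer

// videoaudioencode.audio_encode_mutex.lock();

int r_cnt=audio_streamIn->read(audio_buffer_tmp,1024);

if(r_cnt<=0)return; // nothing was read, or the device reported an error

if(audio_buffer_w_count+r_cnt>AUDIO_BUFFER_MAX_SIZE) // not enough room left in the buffer

{

memcpy(audio_buffer+audio_buffer_w_count,audio_buffer_tmp,AUDIO_BUFFER_MAX_SIZE-audio_buffer_w_count);

audio_buffer_w_count=0; // wrap the write index (the bytes that did not fit are dropped)

//memcpy(audio_buffer+audio_buffer_w_count,audio_buffer_tmp+AUDIO_BUFFER_MAX_SIZE-audio_buffer_w_count,r_cnt-(AUDIO_BUFFER_MAX_SIZE-audio_buffer_w_count));

}

else

{

// copy the data into the buffer

memcpy(audio_buffer+audio_buffer_w_count,audio_buffer_tmp,r_cnt);

audio_buffer_w_count+=r_cnt;

}

}

// The end of audio_ReadyRead and the opening of handleStateChanged_input did not survive in this listing; the head below is reconstructed from the slot declaration in audio_data_input.h (assumption).

void AudioReadThread::handleStateChanged_input(QAudio::State newState)

{

switch (newState) {

case QAudio::StoppedState:

if (audio_in->error() != QAudio::NoError) {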

// Error handling

qDebug()<<"Recording error.\n";

} else {

// Finished recording

qDebug()<<"Recording finished.\n";

}

break;

case QAudio::ActiveState:

// Started recording - read from IO device

qDebug()<<"Started reading PCM audio from the IO device.\n";

break;

default:

// ... other cases as appropriate

break;

}

}
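
The shared audio buffer above wraps its write index by hand and drops whatever does not fit at the end of the array. A small ring buffer that splits a write across the wrap point keeps those bytes. The sketch below is one way to write it in plain C++; the class name ByteRing is hypothetical, and the class is not thread-safe by itself, so a mutex would still be needed when the capture and encoding threads share it.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstring>
#include <vector>

// Hypothetical fixed-capacity byte ring buffer (single-threaded sketch).
class ByteRing {
public:
    explicit ByteRing(std::size_t capacity) : buf_(capacity), head_(0), size_(0) {
        assert(capacity > 0);
    }

    // Append up to n bytes; returns how many were stored (fewer than n when full).
    std::size_t write(const char *data, std::size_t n) {
        const std::size_t room = buf_.size() - size_;
        if (n > room) n = room;
        const std::size_t tail = (head_ + size_) % buf_.size();
        const std::size_t first = std::min(n, buf_.size() - tail);
        std::memcpy(buf_.data() + tail, data, first);      // up to the end of the array
        std::memcpy(buf_.data(), data + first, n - first); // remainder wraps to the start
        size_ += n;
        return n;
    }

    // Remove up to n bytes in FIFO order; returns how many were copied to out.
    std::size_t read(char *out, std::size_t n) {
        if (n > size_) n = size_;
        const std::size_t first = std::min(n, buf_.size() - head_);
        std::memcpy(out, buf_.data() + head_, first);
        std::memcpy(out + first, buf_.data(), n - first);
        head_ = (head_ + n) % buf_.size();
        size_ -= n;
        return n;
    }

    std::size_t size() const { return size_; }

private:
    std::vector<char> buf_;
    std::size_t head_; // index of the oldest byte
    std::size_t size_; // number of bytes currently stored
};
```

Tracking the stored byte count separately from the read position also lets the consumer tell an empty buffer from a full one, which two bare indices cannot do.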

audio_data_input.h: audio capture header

#ifndef AUDIO_DATA_INPUT_H

#define AUDIO_DATA_INPUT_H

#include // these five are Qt's audio headers

#include

#include

#include

#include

#include

#include

#include

#include "video_audio_encode.h"

class AudioReadThread:public QThread

{

Q_OBJECT

public:

AudioReadThread(QObject* parent=nullptr):QThread(parent){}

~AudioReadThread();

void Audio_Init();

QAudioInput *audio_in;

QIODevice* audio_streamIn;

public slots:

void handleStateChanged_input(QAudio::State newState);

void audio_ReadyRead();

signals:

void LogSend(QString text);

protected:

void run();

};

extern AudioReadThread audioReadThread; // audio capture thread

#endif // AUDIO_DATA_INPUT_H

xxx.pro: project file

QT += core gui

QT += multimediawidgets

QT += xml

QT += multimedia

QT += network

greaterThan(QT_MAJOR_VERSION, 4): QT += widgets

CONFIG += c++11

# The following define makes your compiler emit warnings if you use

# any Qt feature that has been marked deprecated (the exact warnings

# depend on your compiler). Please consult the documentation of the

# deprecated API in order to know how to port your code away from it.

DEFINES += QT_DEPRECATED_WARNINGS

# You can also make your code fail to compile if it uses deprecated APIs.

# In order to do so, uncomment the following line.

# You can also select to disable deprecated APIs only up to a certain version of Qt.

#DEFINES += QT_DISABLE_DEPRECATED_BEFORE=0x060000 # disables all the APIs deprecated before Qt 6.0.0

SOURCES += \

audio_data_input.cpp \

main.cpp \

mainwindow.cpp \

video_audio_encode.cpp \

video_data_input.cpp

HEADERS += \

audio_data_input.h \

mainwindow.h \

video_audio_encode.h \

video_data_input.h

FORMS += \

mainwindow.ui

# Default rules for deployment.

qnx: target.path = /tmp/$${TARGET}/bin

else: unix:!android: target.path = /opt/$${TARGET}/bin

!isEmpty(target.path): INSTALLS += target

RESOURCES += \

image.qrc

DISTFILES +=

# library search path and libraries

LIBS += -L$$PWD/so_file -lavcodec

LIBS += -L$$PWD/so_file -lavfilter

LIBS += -L$$PWD/so_file -lavutil

LIBS += -L$$PWD/so_file -lavdevice

LIBS += -L$$PWD/so_file -lavformat

LIBS += -L$$PWD/so_file -lpostproc

LIBS += -L$$PWD/so_file -lswscale

LIBS += -L$$PWD/so_file -lswresample

# header search path

INCLUDEPATH+=$$PWD/so_file/include

contains(ANDROID_TARGET_ARCH,arm64-v8a) {

ANDROID_EXTRA_LIBS = \

$$PWD/so_file/libavcodec.so \

$$PWD/so_file/libavfilter.so \

$$PWD/so_file/libavformat.so \

$$PWD/so_file/libavutil.so \

$$PWD/so_file/libpostproc.so \

$$PWD/so_file/libswresample.so \

$$PWD/so_file/libswscale.so \

$$PWD/so_file/libavdevice.so \

$$PWD/so_file/libclang_rt.ubsan_standalone-aarch64-android.so

}

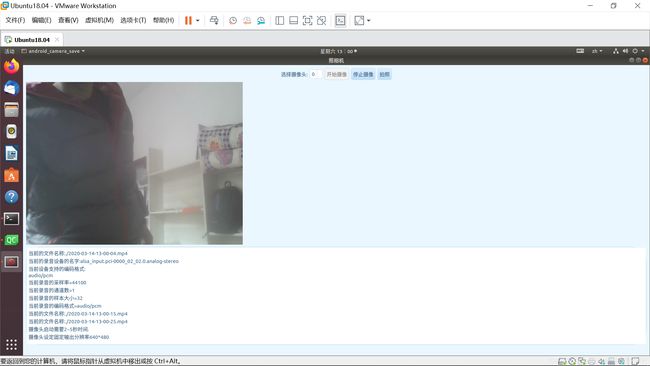

Screenshots:

On Ubuntu:

On an Android tablet:

On an Android phone:
