Detailed Steps for Offline Installation of Spark 2.2.0 on CDH 5.14.4

Contents

I. Introduction

II. Preparation

III. Installation

IV. spark-shell startup problem

V. Spark installation problem

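I. Introduction

In my CDH 5.14.4 cluster, the Spark installed by default is version 1.6, and I needed to move to Spark 2.x. According to the official documentation, Spark 1.6 and 2.x can be installed side by side, so the default 1.6 installation does not have to be removed before installing 2.x. The two versions also use different ports (for Spark 2, the "History Server port is 18089 instead of the usual 18088"). This post records the steps I followed to install Spark 2.2.0.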

II. Preparation

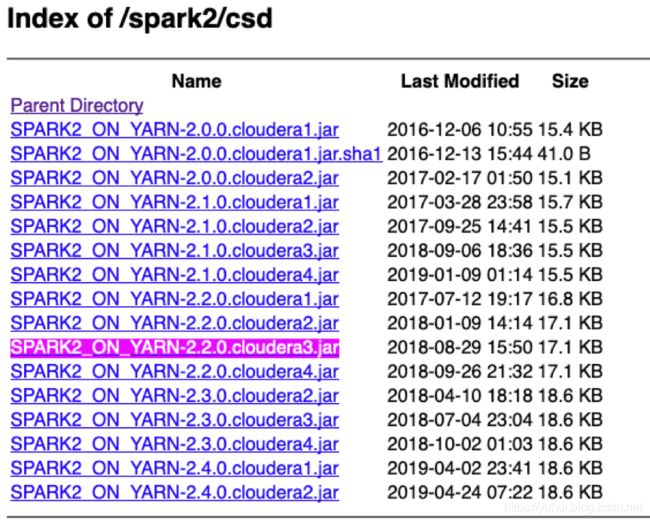

CSD package: http://archive.cloudera.com/spark2/csd/

SPARK2_ON_YARN-2.2.0.cloudera3.jar

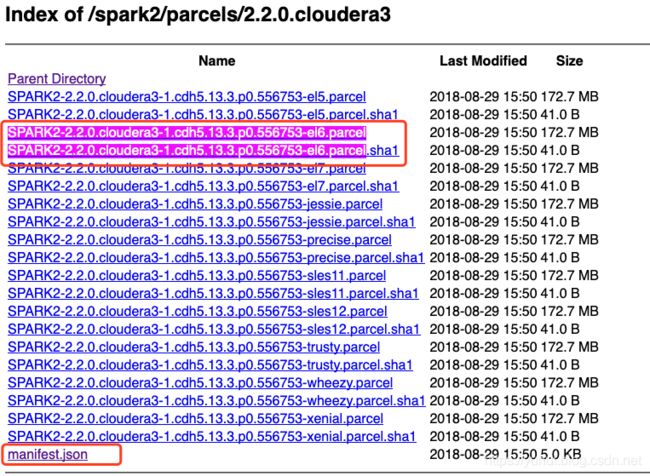

Parcel package: http://archive.cloudera.com/spark2/parcels/2.2.0.cloudera3/

SPARK2-2.2.0.cloudera3-1.cdh5.13.3.p0.556753-el6.parcel

SPARK2-2.2.0.cloudera3-1.cdh5.13.3.p0.556753-el6.parcel.sha1

manifest.json

Note: download the package that matches your operating system. For example, on CentOS 7 download the el7 package; on CentOS 6 download the el6 package.
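As a rough aid for picking the right suffix, the sketch below derives the `elN` tag from a release string such as the contents of `/etc/redhat-release`. The function name `el_suffix` is hypothetical, and the parsing assumes the string contains the word "release" followed by the version number, as CentOS release files normally do.

```shell
# Hypothetical helper: map a release string to the parcel suffix (el6, el7, ...).
# Assumes the string looks like "CentOS Linux release 7.5.1804 (Core)".
el_suffix() {
  local major
  # Capture the major version number that follows the word "release".
  major=$(printf '%s\n' "$1" | sed -nE 's/.*release ([0-9]+).*/\1/p')
  [ -n "$major" ] && printf 'el%s\n' "$major"
}

# Example call on a real host (not executed here):
#   el_suffix "$(cat /etc/redhat-release)"
```

If the function prints nothing, the release string did not match the assumed pattern and the suffix should be chosen by hand.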

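Important: if you are installing Spark 2.2, just download the files listed above, taking care to match your operating system version. If you plan to install a different version instead, such as 2.0, pay close attention to the following.

If you browse those directories carefully, you will see that the CSD and parcel packages come in .cloudera1 and .cloudera2 builds, as well as in 2.0 and 2.1 versions. The parcel you download must match the CSD. If the CSD package you downloaded is a .cloudera1 build, the parcel must also have .cloudera1 in its file name, not .cloudera2. Likewise, a 2.0 CSD package cannot be used with a 2.1 parcel. Otherwise the installation will most likely fail.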
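The matching rule can be checked mechanically before uploading anything. The following is a minimal sketch, assuming both file names embed a tag of the form `<x.y.z>.cloudera<N>` as the files listed earlier do; `spark2_build_tag` and `csd_matches_parcel` are hypothetical helper names.

```shell
# Hypothetical helper: extract the "<x.y.z>.cloudera<N>" tag from a file name,
# e.g. SPARK2_ON_YARN-2.2.0.cloudera3.jar -> 2.2.0.cloudera3
spark2_build_tag() {
  printf '%s\n' "$1" | grep -oE '[0-9]+\.[0-9]+\.[0-9]+\.cloudera[0-9]+' | head -n 1
}

# Returns 0 only when the CSD jar and the parcel carry the same non-empty tag.
csd_matches_parcel() {
  local csd_tag parcel_tag
  csd_tag=$(spark2_build_tag "$1")
  parcel_tag=$(spark2_build_tag "$2")
  [ -n "$csd_tag" ] && [ "$csd_tag" = "$parcel_tag" ]
}

# Example call (not executed here):
#   csd_matches_parcel SPARK2_ON_YARN-2.2.0.cloudera3.jar \
#     SPARK2-2.2.0.cloudera3-1.cdh5.13.3.p0.556753-el6.parcel && echo "tags match"
```

The check only compares file names; it says nothing about whether the files themselves are intact.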

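III. Installation

1. Before installing, you may stop the cluster and the Cloudera Management Service.

2. The following operations only need to be performed on the machine where Spark 2 will be installed; I chose only the CM server machine.

3. Upload the CSD package to the /opt/cloudera/csd directory on that machine, and change the file's owner and group. Note: if this directory contains other JAR files, delete them or move them to another directory.

Note: change the owner and group with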

chown cloudera-scm:cloudera-scm SPARK2_ON_YARN-2.2.0.cloudera3.jar

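4. Upload the parcel files to the /opt/cloudera/parcel-repo directory on that machine.

Note: if other parcels are already there, you do not need to delete them. However, if a file with the same name already exists in this directory, such as manifest.json, rename it to keep a backup. Then place the three parcel files here: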

SPARK2-2.2.0.cloudera3-1.cdh5.13.3.p0.556753-el6.parcel

SPARK2-2.2.0.cloudera3-1.cdh5.13.3.p0.556753-el6.parcel.sha1

manifest.json

Note: rename

SPARK2-2.2.0.cloudera3-1.cdh5.13.3.p0.556753-el6.parcel.sha1

to:

SPARK2-2.2.0.cloudera3-1.cdh5.13.3.p0.556753-el6.parcel.sha

The file SPARK2-2.2.0.cloudera3-1.cdh5.13.3.p0.556753-el6.parcel.torrent is generated automatically when the parcel is distributed.

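5. If you did not stop CM and the cluster earlier, stop them now. Then run the command below.

Note: I started only the server, not the agents. Some sources online say that both the server and the agents should be started.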

/opt/cloudera-manager/cm-5.14.4/etc/init.d/cloudera-scm-server restart

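6. Start CM and the cluster. Then open Hosts -> Parcels and check whether a SPARK2 entry has appeared. As shown below, at this point you should see a Distribute button. Click it, wait for the operation to finish, and then click the Activate button.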

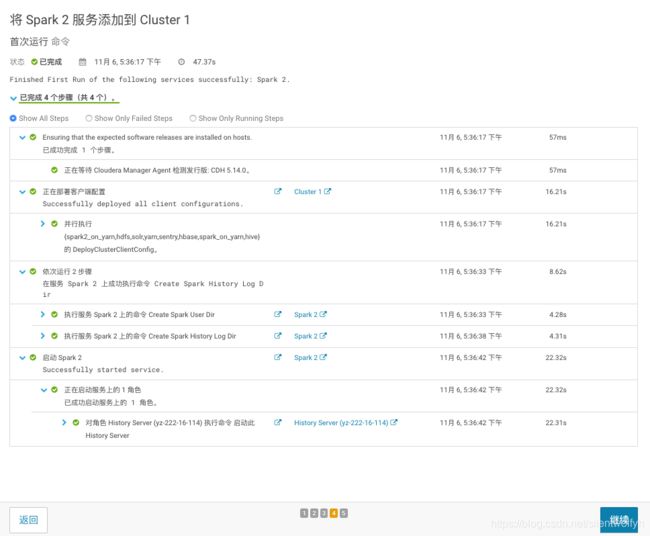

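7. After activation, go to your cluster -> Add Service and add the Spark 2 service. Note: if Spark 2 does not appear in the list, check that your CSD package and parcel match and that none of the steps above were skipped. Normally it should be usable at this point.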

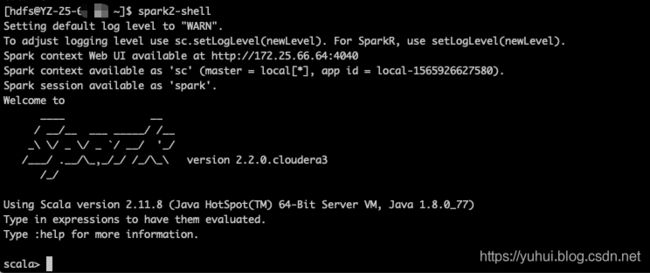
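For step 4 above, it can be worth confirming that the parcel's SHA-1 digest matches the renamed .sha file before restarting the server. The sketch below assumes the .sha file holds the hex digest as the first field of its first line and that `sha1sum` is available; `verify_parcel_sha` is a hypothetical helper name.

```shell
# Hypothetical helper: compare a parcel's SHA-1 digest with the one stored in its .sha file.
# Assumes the digest is the first whitespace-separated field on line 1 of the .sha file.
verify_parcel_sha() {
  local parcel=$1 sha_file=$2 expected actual
  # Normalize the stored digest to lowercase, the form sha1sum prints.
  expected=$(awk 'NR==1 {print $1}' "$sha_file" | tr 'A-F' 'a-f')
  actual=$(sha1sum "$parcel" | awk '{print $1}')
  [ -n "$expected" ] && [ "$expected" = "$actual" ]
}

# Example call (not executed here):
#   cd /opt/cloudera/parcel-repo
#   p=SPARK2-2.2.0.cloudera3-1.cdh5.13.3.p0.556753-el6.parcel
#   verify_parcel_sha "$p" "$p.sha" && echo "digest matches"
```

A mismatch usually points to an incomplete download or to a .sha file that belongs to a different parcel.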

IV. spark-shell startup problem

[hdfs@hadoop11 ~]$ spark2-shell

Exception in thread "main" java.lang.NoClassDefFoundError: org/apache/hadoop/fs/FSDataInputStream

at org.apache.spark.deploy.SparkSubmitArguments$$anonfun$mergeDefaultSparkProperties$1.apply(SparkSubmitArguments.scala:124)

at org.apache.spark.deploy.SparkSubmitArguments$$anonfun$mergeDefaultSparkProperties$1.apply(SparkSubmitArguments.scala:124)

at scala.Option.getOrElse(Option.scala:121)

at org.apache.spark.deploy.SparkSubmitArguments.mergeDefaultSparkProperties(SparkSubmitArguments.scala:124)

at org.apache.spark.deploy.SparkSubmitArguments.<init>(SparkSubmitArguments.scala:110)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:112)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

Caused by: java.lang.ClassNotFoundException: org.apache.hadoop.fs.FSDataInputStream

at java.net.URLClassLoader.findClass(URLClassLoader.java:381)

at java.lang.ClassLoader.loadClass(ClassLoader.java:424)

at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:331)

at java.lang.ClassLoader.loadClass(ClassLoader.java:357)

... 7 more

Solution:

Copy the configuration files:

cp /opt/cloudera/parcels/CDH/etc/spark/conf.dist/* /opt/cloudera/parcels/SPARK2/etc/spark2/conf.dist/

Edit the spark-env.sh file:

vim /opt/cloudera/parcels/SPARK2/etc/spark2/conf.dist/spark-env.sh

Add the following lines:

export SPARK_DIST_CLASSPATH=$(hadoop classpath)  # location of the Hadoop class files

export HADOOP_CONF_DIR=/etc/hadoop/conf  # location of the Hadoop configuration files

https://spark.apache.org/docs/latest/hadoop-provided.html
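If the same edit has to be repeated on several hosts, it can be scripted so that running it twice does not duplicate the lines. This is a minimal sketch rather than part of the original procedure; `append_once` and `configure_spark_env` are hypothetical helper names, and the target path is passed in by the caller.

```shell
# Hypothetical helper: append a line to a file only if that exact line is not already there.
append_once() {
  local line=$1 file=$2
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Add the two exports to the given spark-env.sh.
configure_spark_env() {
  local env_file=$1
  # Single quotes keep $(hadoop classpath) literal, so it is evaluated
  # later, when spark-env.sh itself is sourced.
  append_once 'export SPARK_DIST_CLASSPATH=$(hadoop classpath)' "$env_file"
  append_once 'export HADOOP_CONF_DIR=/etc/hadoop/conf' "$env_file"
}

# Example call (not executed here):
#   configure_spark_env /opt/cloudera/parcels/SPARK2/etc/spark2/conf.dist/spark-env.sh
```

The exact-line match means a manually edited variant of either export (for example, with a trailing comment) will not be detected, and the line will be appended again.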

V. Spark installation problem

+ replace '{{JAVA_LIBRARY_PATH}}' '' /opt/cloudera-manager/cm-5.14.4/run/cloudera-scm-agent/process/ccdeploy_spark-conf_etcsparkconf.cloudera.spark_on_yarn_-519253865165339747/spark-conf/yarn-conf/yarn-site.xml

+ perl -pi -e 's#{{JAVA_LIBRARY_PATH}}##g' /opt/cloudera-manager/cm-5.14.4/run/cloudera-scm-agent/process/ccdeploy_spark-conf_etcsparkconf.cloudera.spark_on_yarn_-519253865165339747/spark-conf/yarn-conf/yarn-site.xml

+ replace '{{CMF_CONF_DIR}}' /etc/spark/conf.cloudera.spark_on_yarn/yarn-conf /opt/cloudera-manager/cm-5.14.4/run/cloudera-scm-agent/process/ccdeploy_spark-conf_etcsparkconf.cloudera.spark_on_yarn_-519253865165339747/spark-conf/yarn-conf/yarn-site.xml

+ perl -pi -e 's#{{CMF_CONF_DIR}}#/etc/spark/conf.cloudera.spark_on_yarn/yarn-conf#g' /opt/cloudera-manager/cm-5.14.4/run/cloudera-scm-agent/process/ccdeploy_spark-conf_etcsparkconf.cloudera.spark_on_yarn_-519253865165339747/spark-conf/yarn-conf/yarn-site.xml

+ '[' -d /opt/cloudera-manager/cm-5.14.4/run/cloudera-scm-agent/process/ccdeploy_spark-conf_etcsparkconf.cloudera.spark_on_yarn_-519253865165339747/hbase-conf ']'

++ get_default_fs /opt/cloudera-manager/cm-5.14.4/run/cloudera-scm-agent/process/ccdeploy_spark-conf_etcsparkconf.cloudera.spark_on_yarn_-519253865165339747/spark-conf/yarn-conf

++ get_hadoop_conf /opt/cloudera-manager/cm-5.14.4/run/cloudera-scm-agent/process/ccdeploy_spark-conf_etcsparkconf.cloudera.spark_on_yarn_-519253865165339747/spark-conf/yarn-conf fs.defaultFS

++ local conf=/opt/cloudera-manager/cm-5.14.4/run/cloudera-scm-agent/process/ccdeploy_spark-conf_etcsparkconf.cloudera.spark_on_yarn_-519253865165339747/spark-conf/yarn-conf

++ local key=fs.defaultFS

++ '[' 1 == 1 ']'

++ /opt/cloudera/parcels/CDH-5.14.4-1.cdh5.14.4.p0.3/lib/hadoop/../../bin/hdfs --config /opt/cloudera-manager/cm-5.14.4/run/cloudera-scm-agent/process/ccdeploy_spark-conf_etcsparkconf.cloudera.spark_on_yarn_-519253865165339747/spark-conf/yarn-conf getconf -confKey fs.defaultFS

Error: JAVA_HOME is not set and could not be found.

+ DEFAULT_FS=
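The trace ends with "Error: JAVA_HOME is not set and could not be found.", which suggests that the hdfs launcher script could not locate a Java installation in the environment in which the agent ran it. A minimal sketch of a sanity check to run on the affected host follows. `java_home_ok` and `check_java_home` are hypothetical helper names, and the check only inspects the current shell's environment, which may differ from the one the agent uses.

```shell
# Hypothetical helper: returns 0 when the given directory contains an executable bin/java.
java_home_ok() {
  [ -n "$1" ] && [ -x "$1/bin/java" ]
}

# Report on the JAVA_HOME visible to the current shell.
check_java_home() {
  if java_home_ok "${JAVA_HOME:-}"; then
    echo "JAVA_HOME=$JAVA_HOME looks usable"
  else
    echo "JAVA_HOME is unset or has no executable bin/java" >&2
    return 1
  fi
}

# Example call (not executed here):
#   check_java_home
```

If the check fails, setting JAVA_HOME to the JDK directory for the host and redeploying the client configuration is a reasonable next thing to try.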