源文件内容示例:

http://bigdata.beiwang.cn/laoli http://bigdata.beiwang.cn/laoli http://bigdata.beiwang.cn/haiyuan http://bigdata.beiwang.cn/haiyuan

实现代码:

object SparkSqlDemo11 {

/**

* 使用开窗函数,计算TopN

* @param args

*/

def main(args: Array[String]): Unit = {

val session = SparkSession.builder()

.appName(this.getClass.getSimpleName)

.master("local")

.getOrCreate()

import session.implicits._

//原数据:http://bigdata.beiwang.cn/laoli

val sourceData = session.read.textFile("E:\\北网学习\\K_第十一个月_Spark 2(2019.8)\\8.5\\teacher.log")

val df = sourceData.map(line => {

val index = line.lastIndexOf("/")

val t_name = line.substring(index + 1)

val url = new URL(line.substring(0, index))

val subject = url.getHost.split("\\.")(0)

(subject, t_name)

}).toDF("subject", "t_name")

操作01:得到所有专业下所有老师的访问数:

df.createTempView("temp")

//获得所有学科下老师的访问量:

val middleData: DataFrame = session.sql("select subject,t_name,count(*) cnts from temp group by subject,t_name")

//middleData.show()

+-------+--------+----+ |subject| t_name|cnts| +-------+--------+----+ |bigdata| laoli| 2| |bigdata| haiyuan| 15| | javaee|chenchan| 6| | php| laoliu| 1| | php| laoli| 3| | javaee| laoshi| 9| |bigdata| lichen| 6| +-------+--------+----+

操作02:row_number() over()【按照老师的访问数,降序开窗】

//再将中间值middleData注册成一张表

middleData.createTempView("middleTemp")

//执行第二部查询,使用row_number()开窗函数,对所有的老师的访问数进行排序并添加编号

//开窗后生成的编号列 rn 是一个伪列,只能用于展示,不能用于查询

//row_number() over() 函数是按照某种规则对数据进行编号,需要我们在over()中指定一个排序规则,无规则将会报错

//此处是按照cnts列降序开窗

session.sql(

"""

|select subject,t_name,cnts,row_number() over(order by cnts desc) rn from middleTemp

""".stripMargin).show()

+-------+--------+----+---+ |subject| t_name|cnts| rn| +-------+--------+----+---+ |bigdata| haiyuan| 15| 1| | javaee| laoshi| 9| 2| | javaee|chenchan| 6| 3| |bigdata| lichen| 6| 4| | php| laoli| 3| 5| |bigdata| laoli| 2| 6| | php| laoliu| 1| 7| +-------+--------+----+---+

♈ 注意:over()内必须指定开窗规则,否则会抛出解析异常:

session.sql(

"""

|select subject,t_name,cnts,row_number() over() rn from middleTemp

""".stripMargin).show()

Exception in thread "main" org.apache.spark.sql.AnalysisException: Window function row_number() requires window to be ordered, please add ORDER BY clause. For example SELECT row_number()(value_expr) OVER (PARTITION BY window_partition ORDER BY window_ordering) from table; at org.apache.spark.sql.catalyst.analysis.CheckAnalysis$class.failAnalysis(CheckAnalysis.scala:39) at org.apache.spark.sql.catalyst.analysis.Analyzer.failAnalysis(Analyzer.scala:91) at org.apache.spark.sql.catalyst.analysis.Analyzer$ResolveWindowOrder$$anonfun$apply$31$$anonfun$applyOrElse$12.applyOrElse(Analyzer.scala:2173) at org.apache.spark.sql.catalyst.analysis.Analyzer$ResolveWindowOrder$$anonfun$apply$31$$anonfun$applyOrElse$12.applyOrElse(Analyzer.scala:2171)

操作03:row_number() over(partition by.. 【根据学科进行分区后为每个分区开窗】

//根据学科进行分区后为每个分区开窗

session.sql(

"""

|select subject,t_name,cnts,row_number() over(partition by subject order by cnts desc) rn from middleTemp

""".stripMargin).show()

+-------+--------+----+---+ |subject| t_name|cnts| rn| +-------+--------+----+---+ | javaee| laoshi| 9| 1| | javaee|chenchan| 6| 2| |bigdata| haiyuan| 15| 1| |bigdata| lichen| 6| 2| |bigdata| laoli| 2| 3| | php| laoli| 3| 1| | php| laoliu| 1| 2| +-------+--------+----+---+

♎ 注意:开窗生成的列是伪列,不能用于实际操作:

//开窗形成的列是伪列,不能用于实际操作

session.sql(

"""

|select subject,t_name,cnts,row_number() over(partition by subject order by cnts desc) rn from middleTemp

|where rn <=2

""".stripMargin).show()

操作04:伪列的使用:

由于开窗形成的伪列不能被直接用于查询,那么我们可以将整个开窗语句的操作作为一个子查询使用,那么开窗语句的结果集对于父查询来说就是一张完整的表,这时候伪列就是一个有效的列,可以用于查询:

//开窗生成的伪列不能用于直接查询,但是我们可以将开窗语句的结果集作为一张表或者说一个子查询,这时候伪列就是一个有效的列,可以进行再次嵌套查询,

session.sql(

"""

|select * from (

|select subject,t_name,cnts,row_number() over(partition by subject order by cnts desc) rn from middleTemp

|) where rn <= 2

""".stripMargin).show()

+-------+--------+----+---+ |subject| t_name|cnts| rn| +-------+--------+----+---+ | javaee| laoshi| 9| 1| | javaee|chenchan| 6| 2| |bigdata| haiyuan| 15| 1| |bigdata| lichen| 6| 2| | php| laoli| 3| 1| | php| laoliu| 1| 2| +-------+--------+----+---+

操作05:【开窗嵌套开窗】rank() over() 函数

在row_number() over() 分区+开窗的基础上,再次进行rank() over() 按照cnts进行全部数据的开窗

//开窗嵌套开窗:

//rank() over() 函数

session.sql(

"""

|select t.*,rank() over(order by cnts desc) rn1 from (

|select subject,t_name,cnts,row_number() over(partition by subject order by cnts desc) rn from middleTemp

|) t

|where rn <= 2

""".stripMargin).show()

+-------+--------+----+---+---+ |subject| t_name|cnts| rn|rn1| +-------+--------+----+---+---+ |bigdata| haiyuan| 15| 1| 1| | javaee| laoshi| 9| 1| 2| | javaee|chenchan| 6| 2| 3| |bigdata| lichen| 6| 2| 3| | php| laoli| 3| 1| 5| | php| laoliu| 1| 2| 6| +-------+--------+----+---+---+

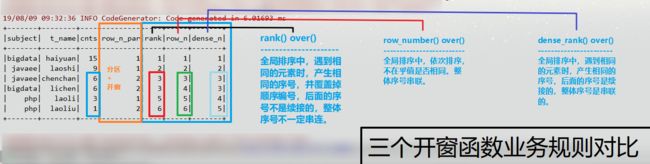

操作06:dense_rank() over() 函数 【三个开窗函数的业务对比】:

//dense_rank() over() 函数

//三个开窗函数的业务对比:

session.sql(

"""

|select t.*,rank() over(order by cnts desc) rank,

|row_number() over(order by cnts desc) row_n,

|dense_rank() over(order by cnts desc) dense_n

|from (

|select subject,t_name,cnts,row_number() over(partition by subject order by cnts desc) row_n_par from middleTemp

|) t

|where row_n_par <= 2

""".stripMargin).show()

+-------+--------+----+---------+----+-----+-------+ |subject| t_name|cnts|row_n_par|rank|row_n|dense_n| +-------+--------+----+---------+----+-----+-------+ |bigdata| haiyuan| 15| 1| 1| 1| 1| | javaee| laoshi| 9| 1| 2| 2| 2| | javaee|chenchan| 6| 2| 3| 3| 3| |bigdata| lichen| 6| 2| 3| 4| 3| | php| laoli| 3| 1| 5| 5| 4| | php| laoliu| 1| 2| 6| 6| 5| +-------+--------+----+---------+----+-----+-------+

操作07:整合为一句SQL完成:

//合并两个SQL语句:

session.sql(

"""

|select t.*,rank() over(order by cnts desc) rank,

|row_number() over(order by cnts desc) row_n,

|dense_rank() over(order by cnts desc) dense_n

|from

|(select subject,t_name,cnts,row_number() over(partition by subject order by cnts desc) row_n_par from

|(select subject,t_name,count(*) cnts from temp group by subject,t_name)) t

|where row_n_par <= 2

""".stripMargin).show()

+-------+--------+----+---------+----+-----+-------+ |subject| t_name|cnts|row_n_par|rank|row_n|dense_n| +-------+--------+----+---------+----+-----+-------+ |bigdata| haiyuan| 15| 1| 1| 1| 1| | javaee| laoshi| 9| 1| 2| 2| 2| | javaee|chenchan| 6| 2| 3| 3| 3| |bigdata| lichen| 6| 2| 3| 4| 3| | php| laoli| 3| 1| 5| 5| 4| | php| laoliu| 1| 2| 6| 6| 5| +-------+--------+----+---------+----+-----+-------+