Scrapy returns an empty list when scraping

DEBUG: Redirecting (301) to

import scrapy


class S1Spider(scrapy.Spider):
    name = 's1'  # Name of the spider
    allowed_domains = ['blog.csdn.net']  # Requests whose URL host is not in allowed_domains are filtered out
    start_urls = ['http://blog.csdn.net/']  # URLs requested when the spider starts

    # Called with the response to each URL in start_urls; response is the response object
    def parse(self, response):
        print('-' * 90)
        # print(response.body)
        # print('-'*40)
        # Run an XPath query against the response
        print(response.xpath('//div[@class="nav_com"]/ul/li/a/text()').extract())
        print('-' * 90)
        # print('-'*40)

    # Method run when the spider starts; equivalent to start_urls
    # def start_requests(self):  # Send a Request object to the scheduler
    #     yield scrapy.Request(
    #         url=('http://edu.csdn.net'),  # Request URL; GET by default
    #         callback=self.parse2,  # Function called once the response arrives
    #     )

    # def parse2(self, response):  # Function called once the response arrives
    #     print(response.xpath('//div[@id="nav_com"]//li/a/text()'))  # Returns a SelectorList; call .extract() to get the strings
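The spider above queries `//div[@class="nav_com"]` in `parse`, while the commented-out `parse2` queries `//div[@id="nav_com"]`. These are different selectors, and only the one that matches the attribute actually present in the page returns anything. The sketch below illustrates the difference on a made-up, well-formed fragment using the standard library's `xml.etree.ElementTree` as a stand-in for `response.xpath`. The `SAMPLE` markup and the `link_texts` helper are hypothetical; which attribute the real blog.csdn.net page uses has to be checked against its actual HTML.

```python
import xml.etree.ElementTree as ET

# Hypothetical fragment: here the nav container carries id="nav_com", not class="nav_com".
SAMPLE = """
<html><body>
  <div id="nav_com">
    <ul>
      <li><a href="/a">Python</a></li>
      <li><a href="/b">Java</a></li>
    </ul>
  </div>
</body></html>
"""


def link_texts(markup, attr):
    """Return the link texts under the div whose `attr` attribute equals 'nav_com'."""
    root = ET.fromstring(markup)
    path = ".//div[@{}='nav_com']/ul/li/a".format(attr)
    return [a.text for a in root.findall(path)]


by_class = link_texts(SAMPLE, "class")
by_id = link_texts(SAMPLE, "id")
print(by_class)  # [] because no div has class="nav_com" in this fragment
print(by_id)     # ['Python', 'Java']
```

An empty list from `.extract()` is what a non-matching XPath looks like: no error is raised, the result is simply `[]`.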

D:\爬虫\scrapy_spider1\myscrapy1>scrapy crawl s1

2020-10-03 08:25:48 [scrapy.utils.log] INFO: Scrapy 2.3.0 started (bot: myscrapy1)

2020-10-03 08:25:48 [scrapy.utils.log] INFO: Versions: lxml 4.5.2.0, libxml2 2.9.5, cssselect 1.1.0, parsel 1.6.0, w3lib 1.22.0, Twisted 20.3.0, Python 3.8.5 (tags/v3.8.5:580fbb0, Jul 20 2020, 15:43:08) [MSC v.1926 32 bit (Intel)], pyOpenSSL 19.1.0 (OpenSSL 1.1.1h 22 Sep 2020), cryptography 3.1.1, Platform Windows-10-10.0.18362-SP0

2020-10-03 08:25:48 [scrapy.utils.log] DEBUG: Using reactor: twisted.internet.selectreactor.SelectReactor

2020-10-03 08:25:48 [scrapy.crawler] INFO: Overridden settings:

{

'BOT_NAME': 'myscrapy1',

'NEWSPIDER_MODULE': 'myscrapy1.spiders',

'SPIDER_MODULES': ['myscrapy1.spiders'],

'USER_AGENT': 'Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like '

'Gecko) Chrome/41.0.2228.0 Safari/537.36'}

2020-10-03 08:25:49 [scrapy.extensions.telnet] INFO: Telnet Password: b9d51701061fdac5

2020-10-03 08:25:49 [scrapy.middleware] INFO: Enabled extensions:

['scrapy.extensions.corestats.CoreStats',

'scrapy.extensions.telnet.TelnetConsole',

'scrapy.extensions.logstats.LogStats']

2020-10-03 08:25:49 [scrapy.middleware] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'scrapy.downloadermiddlewares.retry.RetryMiddleware',

'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware',

'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware',

'scrapy.downloadermiddlewares.redirect.RedirectMiddleware',

'scrapy.downloadermiddlewares.cookies.CookiesMiddleware',

'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware',

'scrapy.downloadermiddlewares.stats.DownloaderStats']

2020-10-03 08:25:49 [scrapy.middleware] INFO: Enabled spider middlewares:

['scrapy.spidermiddlewares.httperror.HttpErrorMiddleware',

'scrapy.spidermiddlewares.offsite.OffsiteMiddleware',

'scrapy.spidermiddlewares.referer.RefererMiddleware',

'scrapy.spidermiddlewares.urllength.UrlLengthMiddleware',

'scrapy.spidermiddlewares.depth.DepthMiddleware']

2020-10-03 08:25:49 [scrapy.middleware] INFO: Enabled item pipelines:

[]

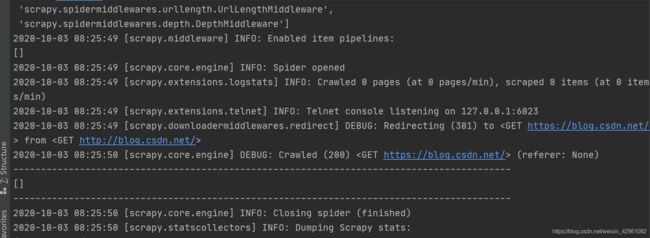

2020-10-03 08:25:49 [scrapy.core.engine] INFO: Spider opened

2020-10-03 08:25:49 [scrapy.extensions.logstats] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)

2020-10-03 08:25:49 [scrapy.extensions.telnet] INFO: Telnet console listening on 127.0.0.1:6023

2020-10-03 08:25:49 [scrapy.downloadermiddlewares.redirect] DEBUG: Redirecting (301) to <GET https://blog.csdn.net/> from <GET http://blog.csdn.net/>

2020-10-03 08:25:50 [scrapy.core.engine] DEBUG: Crawled (200) <GET https://blog.csdn.net/> (referer: None)

------------------------------------------------------------------------------------------

[]

------------------------------------------------------------------------------------------

2020-10-03 08:25:50 [scrapy.core.engine] INFO: Closing spider (finished)

2020-10-03 08:25:50 [scrapy.statscollectors] INFO: Dumping Scrapy stats:

{

'downloader/request_bytes': 558,

'downloader/request_count': 2,

'downloader/request_method_count/GET': 2,

'downloader/response_bytes': 14583,

'downloader/response_count': 2,

'downloader/response_status_count/200': 1,

'downloader/response_status_count/301': 1,

'elapsed_time_seconds': 0.527588,

'finish_reason': 'finished',

'finish_time': datetime.datetime(2020, 10, 3, 0, 25, 50, 372656),

'log_count/DEBUG': 2,

'log_count/INFO': 10,

'response_received_count': 1,

'scheduler/dequeued': 2,

'scheduler/dequeued/memory': 2,

'scheduler/enqueued': 2,

'scheduler/enqueued/memory': 2,

'start_time': datetime.datetime(2020, 10, 3, 0, 25, 49, 845068)}

2020-10-03 08:25:50 [scrapy.core.engine] INFO: Spider closed (finished)
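
The log shows the 301 redirect being followed and https://blog.csdn.net/ crawled with status 200, so the request itself succeeded. The `[]` comes from the XPath matching nothing in the HTML that was downloaded. One way to narrow this down, sketched below, is to check whether the string the selector relies on appears in the raw body at all. `marker_in_body` is a hypothetical helper, and the two byte strings are made-up stand-ins for `response.body`.

```python
def marker_in_body(body: bytes, marker: str, encoding: str = "utf-8") -> bool:
    """Return True if `marker` occurs anywhere in the decoded response body."""
    text = body.decode(encoding, errors="replace")
    return marker in text


# Hypothetical bodies standing in for response.body
static_page = b'<div id="nav_com"><ul><li><a>Python</a></li></ul></div>'
script_only_page = b'<div id="app"></div><script src="/app.js"></script>'

found_static = marker_in_body(static_page, "nav_com")
found_script_only = marker_in_body(script_only_page, "nav_com")
print(found_static)       # True
print(found_script_only)  # False
```

Inside `parse`, the call would be `marker_in_body(response.body, 'nav_com')`. If it returns False, the element is not in the downloaded HTML (for example, the page layout differs from what was expected, or the content is built by JavaScript after load), and no XPath will find it. If it returns True, the XPath expression itself is the thing to adjust.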