Collecting Log Data with a Custom Shell Script

I. Simulating Dynamic Log Generation

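In a real environment, logs are produced by servers such as nginx or Tomcat, so all that is needed is a collection script or a framework such as Flume or Logstash.

In this test, log4j is used to generate logs dynamically in order to simulate a real environment.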

GenerateLog.java

package log;

import org.apache.log4j.LogManager;
import org.apache.log4j.Logger;

import java.util.Date;

/**
 * Simulates log generation.
 * Created by tianjun on 2017/3/16.
 */
public class GenerateLog {

    public static void main(String[] args) throws InterruptedException {
        Logger logger = LogManager.getLogger("testlog");
        int i = 0;
        while (true) {
            // Write one log line every 500 ms
            logger.info(new Date().toString() + "-------------------------------");
            i++;
            Thread.sleep(500);
            if (i > 1000000) {
                break;
            }
        }
    }
}

Package the code above and upload it to the Linux machine.

Pay attention to the following two files:

log4j.properties

log4j.rootLogger=INFO,testlog

# Rolling file appender
log4j.appender.testlog = org.apache.log4j.RollingFileAppender
log4j.appender.testlog.layout = org.apache.log4j.PatternLayout
log4j.appender.testlog.layout.ConversionPattern = [%-5p][%-22d{yyyy/MM/dd HH:mm:ssS}][%l]%n%m%n
log4j.appender.testlog.Threshold = INFO
log4j.appender.testlog.ImmediateFlush = TRUE
log4j.appender.testlog.Append = TRUE

# Path the log is written to
log4j.appender.testlog.File = /home/hadoop/logs/log/access.log
log4j.appender.testlog.MaxFileSize = 10KB
log4j.appender.testlog.MaxBackupIndex = 20
#log4j.appender.testlog.Encoding = UTF-8

pom.xml

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0modelVersion>

<groupId>tianjun.cmcc.orggroupId>

<artifactId>mr.demoartifactId>

<version>1.0-SNAPSHOTversion>

<dependencies>

<dependency>

<groupId>org.apache.hadoopgroupId>

<artifactId>hadoop-clientartifactId>

<version>2.6.4version>

dependency>

<dependency>

<groupId>junitgroupId>

<artifactId>junitartifactId>

<version>4.11version>

dependency>

<dependency>

<groupId>log4jgroupId>

<artifactId>log4jartifactId>

<version>1.2.17version>

dependency>

<dependency>

<groupId>org.slf4jgroupId>

<artifactId>slf4j-apiartifactId>

<version>1.7.13version>

dependency>

dependencies>

<build>

<plugins>

<plugin>

<groupId>org.apache.maven.pluginsgroupId>

<artifactId>maven-compiler-pluginartifactId>

<version>3.1version>

<configuration>

<source>1.7source>

<target>1.7target>

configuration>

plugin>

<plugin>

<groupId>org.apache.maven.pluginsgroupId>

<artifactId>maven-shade-pluginartifactId>

<version>2.4.1version>

<executions>

<execution>

<phase>packagephase>

<goals>

<goal>shadegoal>

goals>

<configuration>

<transformers>

<transformer implementation="org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">

<mainClass>log.GenerateLogmainClass>

transformer>

transformers>

configuration>

execution>

executions>

plugin>

plugins>

build>

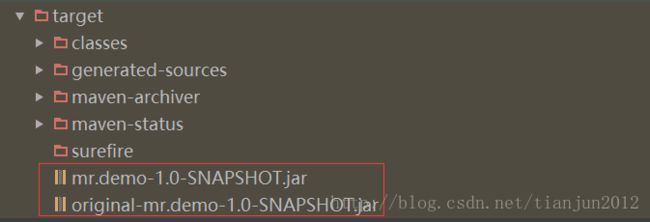

project>比较推荐这个种maven插件打包,可以看到打包后又两个jar包

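The one whose name starts with original is the ordinary package and contains no dependencies.

The other one bundles all of the dependency jars.

In general, simply choose the jar that includes the dependencies.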
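As a rough sketch of the build-and-run step (not necessarily the exact commands used here), the following assumes Maven's default `<artifactId>-<version>.jar` naming for the pom above, that `log4j.properties` is on the classpath (for example in `src/main/resources`), and that it is run from the project root. The variable names are illustrative.

```shell
#!/bin/bash
# Jar names derived from the artifactId and version in the pom above.
artifact="mr.demo"
version="1.0-SNAPSHOT"
fat_jar="target/${artifact}-${version}.jar"            # shaded jar, includes dependencies
thin_jar="target/original-${artifact}-${version}.jar"  # plain jar, no dependencies

# Build only when Maven and a pom.xml are actually available.
if command -v mvn >/dev/null 2>&1 && [ -f pom.xml ]; then
    mvn -q clean package
fi

# Start the log generator in the background if the shaded jar exists.
if [ -f "$fat_jar" ]; then
    nohup java -jar "$fat_jar" >/dev/null 2>&1 &
fi

echo "jar with dependencies: $fat_jar"
echo "jar without dependencies: $thin_jar"
```

Because the shade plugin writes `log.GenerateLog` into the manifest as the main class, `java -jar` needs no class name on the command line.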

Simulation result:

.
└── logs
    ├── log
    │   ├── access.log
    │   ├── access.log.1
    │   ├── access.log.10
    │   ├── access.log.11
    │   ├── access.log.12
    │   ├── access.log.13
    │   ├── access.log.14
    │   ├── access.log.15
    │   ├── access.log.2
    │   ├── access.log.3
    │   ├── access.log.4
    │   ├── access.log.5
    │   ├── access.log.6
    │   ├── access.log.7
    │   ├── access.log.8
    │   └── access.log.9
    └── toupload

II. Writing the Shell Script
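The script in this section only picks up files named `access.log.N`. A likely reason is that `access.log` itself is the file log4j is still appending to, while the numbered backups are already closed. The following is a simplified shell simulation of how a size-based rolling appender shifts its backups. It only illustrates the naming scheme and is not log4j's actual code; the `rollover` function and the temporary directory are made up for the demo.

```shell
#!/bin/bash
# rollover DIR MAX
# Shifts access.log.i to access.log.(i+1), then turns access.log into access.log.1.
rollover() {
    local dir=$1 max=$2 i
    rm -f "$dir/access.log.$max"              # the oldest backup is dropped
    for ((i = max - 1; i >= 1; i--)); do
        if [ -f "$dir/access.log.$i" ]; then
            mv "$dir/access.log.$i" "$dir/access.log.$((i + 1))"
        fi
    done
    mv "$dir/access.log" "$dir/access.log.1"  # the active file becomes backup 1
    : > "$dir/access.log"                     # a fresh, empty active file is started
}

# Two rollovers with MaxBackupIndex = 20, as in the configuration above.
demo_dir=$(mktemp -d)
echo "first"  > "$demo_dir/access.log"; rollover "$demo_dir" 20
echo "second" > "$demo_dir/access.log"; rollover "$demo_dir" 20
ls "$demo_dir"
```

After the two rollovers, the newest closed content sits in `access.log.1` and the older content has moved to `access.log.2`, so a higher number means an older file.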

collectdata.sh:

#!/bin/bash

# set java env
export JAVA_HOME=/export/server/jdk/jdk
export JRE_HOME=${JAVA_HOME}/jre
export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib
export PATH=${JAVA_HOME}/bin:$PATH

# set hadoop env
export HADOOP_HOME=/export/server/hadoop
export PATH=${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin:$PATH

# What this script does:
#   1. Moves the files to be uploaded into the to-upload directory.
#   2. While moving them, renames them to a fixed format, e.g.
#      /export/software/hadoop.log1 /export/data/click_log/xxxxx_click_log_{date}

# Directory where the log files are stored
log_src_dir=/home/hadoop/logs/log/

# Directory that holds the files waiting to be uploaded
log_toupload_dir=/home/hadoop/logs/toupload/

# Root path on HDFS that the log files are uploaded to
hdfs_root_dir=/data/clickLog/20170216/

# Print environment information
echo "envs: hadoop_home: $HADOOP_HOME"

# Scan the log directory and check whether there are files to upload
echo "log_src_dir: $log_src_dir"
ls "$log_src_dir" | while read -r fileName
do
    if [[ "$fileName" == access.log.* ]]; then
    # if [ "access.log" = "$fileName" ]; then
        date=`date +%Y_%m_%d_%H_%M_%S`
        target="${log_toupload_dir}xxxxx_click_log_${fileName}_${date}"
        # Move the file to the to-upload directory and rename it
        echo "moving $log_src_dir$fileName to $target"
        mv "$log_src_dir$fileName" "$target"
        # Append the path of the moved file to a list file named willDoing.<date>
        echo "$target" >> "${log_toupload_dir}willDoing.${date}"
    fi
done

# Find the willDoing list files that have not been processed yet
ls "$log_toupload_dir" | grep will | grep -v "_COPY_" | grep -v "_DONE_" | while read -r line
do
    echo "toupload is in file: $line"
    # Rename the list file willDoing to willDoing_COPY_ to mark it as in progress
    mv "$log_toupload_dir$line" "$log_toupload_dir${line}_COPY_"
    # Read willDoing_COPY_ line by line; each line is the path of one file to upload
    cat "$log_toupload_dir${line}_COPY_" | while read -r path
    do
        echo "putting...$path to hdfs path.....$hdfs_root_dir"
        hadoop fs -put "$path" "$hdfs_root_dir"
    done
    # Mark the list file as done
    mv "$log_toupload_dir${line}_COPY_" "$log_toupload_dir${line}_DONE_"
done

Create the following in advance:

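1. The directory where the log files are stored

log_src_dir=/home/hadoop/logs/log/

2. The directory that holds the files waiting to be uploaded

log_toupload_dir=/home/hadoop/logs/toupload/

3. The root path on HDFS that the log files are uploaded to

hdfs_root_dir=/data/clickLog/20170216/

That is all that is required.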
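The three locations above could be created with a sketch like the following. The `prepare_dirs` function is a hypothetical helper, not part of collectdata.sh. It takes the local base directory and the HDFS path as arguments so that it can also be tried against a scratch directory, and it only touches HDFS when the `hadoop` command is on the PATH.

```shell
#!/bin/bash
# prepare_dirs BASE HDFS_DIR
# Creates BASE/log and BASE/toupload locally, and HDFS_DIR on HDFS if hadoop is available.
prepare_dirs() {
    local base=$1 hdfs_dir=$2
    # -p creates missing parent directories and does nothing if they already exist
    mkdir -p "$base/log" "$base/toupload" || return 1
    if command -v hadoop >/dev/null 2>&1; then
        hadoop fs -mkdir -p "$hdfs_dir"
    fi
}

# With the paths used in this article, the call would be:
#   prepare_dirs /home/hadoop/logs /data/clickLog/20170216/
```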

Result of one collection run:

.
└── logs
    ├── log
    │   ├── access.log
    └── toupload
        ├── willDoing.2017_03_16_14_39_01_DONE_
        ├── willDoing.2017_03_16_14_40_01_DONE_
        ├── willDoing.2017_03_16_14_41_01_DONE_
        ├── willDoing.2017_03_16_14_42_01_DONE_
        ├── willDoing.2017_03_16_14_47_02_DONE_
        ├── willDoing.2017_03_16_14_47_03_DONE_
        ├── willDoing.2017_03_16_14_48_50_DONE_
        ├── willDoing.2017_03_16_14_59_14_DONE_
        ├── xxxxx_click_log_2017_03_16_14_39_01
        ├── xxxxx_click_log_2017_03_16_14_40_01
        ├── xxxxx_click_log_2017_03_16_14_41_01
        ├── xxxxx_click_log_2017_03_16_14_42_01
        ├── xxxxx_click_log_access.log.10_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.11_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.1_2017_03_16_14_47_02
        ├── xxxxx_click_log_access.log.1_2017_03_16_14_48_50
        ├── xxxxx_click_log_access.log.1_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.12_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.13_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.14_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.15_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.16_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.17_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.2_2017_03_16_14_47_02
        ├── xxxxx_click_log_access.log.2_2017_03_16_14_48_50
        ├── xxxxx_click_log_access.log.2_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.3_2017_03_16_14_47_02
        ├── xxxxx_click_log_access.log.3_2017_03_16_14_48_50
        ├── xxxxx_click_log_access.log.3_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.4_2017_03_16_14_47_02
        ├── xxxxx_click_log_access.log.4_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.5_2017_03_16_14_47_03
        ├── xxxxx_click_log_access.log.5_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.6_2017_03_16_14_47_03
        ├── xxxxx_click_log_access.log.6_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.7_2017_03_16_14_47_03
        ├── xxxxx_click_log_access.log.7_2017_03_16_14_59_14
        ├── xxxxx_click_log_access.log.8_2017_03_16_14_59_14
        └── xxxxx_click_log_access.log.9_2017_03_16_14_59_14

III. Running the Collection Script on a Schedule

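Logs cannot be collected just once, so the script needs to be scheduled, for example to run once every minute.

crontab is used for the periodic collection: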

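crontab syntax:

crontab [-u username] [-l|-e|-r]

Options and arguments:

-u : only root can use this option, i.e. to create or remove crontab schedules on behalf of other users;
-e : edit the crontab entries
-l : list the crontab entries
-r : remove all crontab entries; to remove only one entry, use -e and edit it out

List the current user's crontab: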

crontab -l

# Collect once every five minutes

*/5 * * * * /root/collectdata.sh

Clear the current user's crontab:

crontab -r

crontab -l

no crontab for blue

To delete just one of the current user's crontab jobs, run crontab -e to open the editor and delete the corresponding entry.
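A crontab line consists of five time fields (minute, hour, day of month, month, day of week) followed by the command, so `*/5 * * * *` means "every fifth minute". As an alternative to editing interactively with crontab -e, the entry can be added from a script. The sketch below is one possible way to do that: `add_cron_entry` is a hypothetical helper, and the log file path is an assumption.

```shell
#!/bin/bash
# add_cron_entry ENTRY
# Reads an existing crontab on stdin and prints it with ENTRY appended,
# unless an identical line is already present.
add_cron_entry() {
    local entry=$1 current
    current=$(cat)
    if [ -n "$current" ]; then
        printf '%s\n' "$current"
    fi
    if ! printf '%s\n' "$current" | grep -Fxq -- "$entry"; then
        printf '%s\n' "$entry"
    fi
}

# Every 5 minutes; stdout and stderr are appended to a log file (assumed path).
entry='*/5 * * * * /root/collectdata.sh >> /tmp/collectdata.log 2>&1'

# Preview the result when starting from an empty crontab.
printf '' | add_cron_entry "$entry"
```

To actually install it, the preview line could be replaced by `crontab -l 2>/dev/null | add_cron_entry "$entry" | crontab -`, which rewrites the current user's crontab from standard input. Running it twice does not duplicate the entry.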